Triggering hallucinations in model-based MRI reconstruction via adversarial perturbations

Generative models are increasingly used to improve the quality of medical imaging, such as reconstruction of magnetic resonance images and computed tomography. However, it is well-known that such models are susceptible to hallucinations: they may ins…

Authors: Suna Buğday, Yvan Saeys, Jonathan Peck

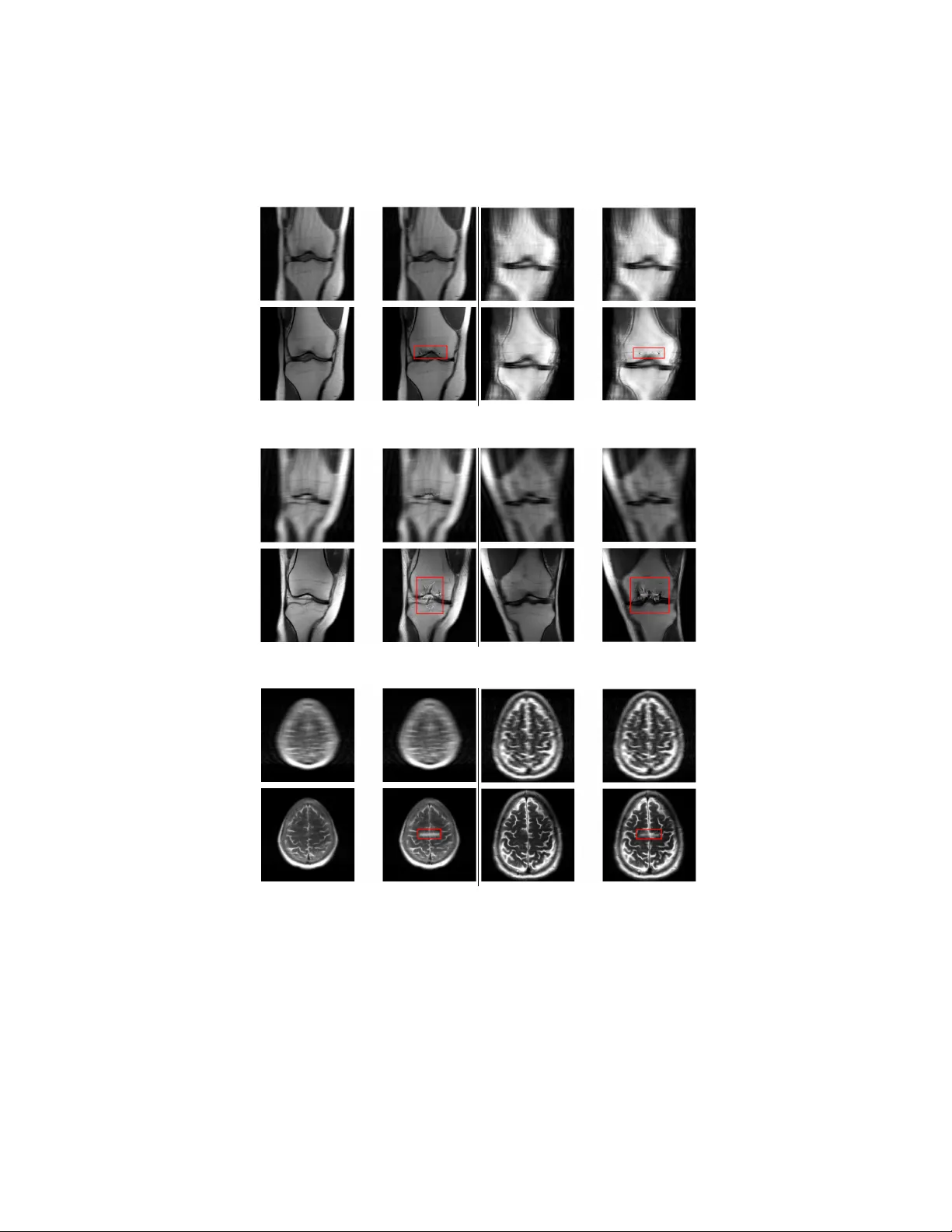

Suna Buğday¹, Yvan Saeys²,³, and Jonathan Peck²,³ ⋆

¹ Faculty of Engineering and Architecture/Computer Engineering, İzmir Bakırçay University, İzmir, Turkey
² Department of Computer Science, Mathematics and Statistics, Ghent University, Ghent, Belgium
³ Data Mining and Modeling for Biomedicine Group, VIB Inflammation Research Center, Ghent, Belgium

Abstract. Generative models are increasingly used to improve the quality of medical imaging, such as reconstruction of magnetic resonance images and computed tomography. However, it is well-known that such models are susceptible to hallucinations: they may insert features into the reconstructed image which are not actually present in the original image. In a medical setting, such hallucinations may endanger patient health as they can lead to incorrect diagnoses. In this work, we aim to quantify the extent to which state-of-the-art generative models suffer from hallucinations in the context of magnetic resonance image reconstruction. Specifically, we craft adversarial perturbations resembling random noise for the unprocessed input images which induce hallucinations when reconstructed using a generative model. We perform this evaluation on the brain and knee images from the fastMRI data set using UNet and end-to-end VarNet architectures to reconstruct the images. Our results show that these models are highly susceptible to small perturbations and can be easily coaxed into producing hallucinations. This fragility may partially explain why hallucinations occur in the first place and suggests that a carefully constructed adversarial training routine may reduce their prevalence. Moreover, these hallucinations cannot be reliably detected using traditional image quality metrics.
Novel approaches will therefore need to be developed to detect when hallucinations have occurred.

Keywords: deep learning · computer vision · magnetic resonance imaging · robustness

⋆ Corresponding author: Jonathan.Peck@UGent.be

1 Introduction

When patients go in for a medical scan, such as magnetic resonance imaging (MRI) or computed tomography (CT), the resulting images must first be cleaned up or reconstructed before they can be used for diagnostic purposes. There are multiple reasons for this, including patient motion during scans, which causes blurring, and thermal noise and electromagnetic interference in the physical measurement process [7]. Scanners also frequently undersample or accelerate their measurements, which can lead to aliasing and other artifacts due to incomplete data [30]. For MRI, acceleration is usually performed to save costs and to increase patient comfort: a fully-sampled MRI would take several hours compared to the few minutes patients typically spend inside such a scanner in practice [5]. For CT, minimizing the acquisition time is actually a necessity due to the exposure to ionizing X-ray radiation, which can damage tissues over an extended period of time.

Fig. 1. Comparison of classical FISTA and model-based FISTA-Net reconstruction of brain MR images: (a) raw, (b) FISTA, (c) FISTA-Net. These images were taken from [3].

Naturally, there is a long line of research into proper ways of reconstructing medical imaging data [6, 12, 32, 42]. These “classical” algorithms are based on mathematical optimization problems and have attractive theoretical guarantees, such as convergence rates and bounds on the reconstruction error. More recently, the use of neural networks to reconstruct medical imaging data has been explored [1, 3, 29, 46].
Several studies have confirmed that the resulting reconstructions are more visually appealing than those generated by classical algorithms [37, 38]. As an example, figure 1 compares the classical FISTA algorithm [6] to the more recent model-based FISTA-Net method [3]. The improvement in visual quality is obvious here.

Nevertheless, generative neural networks typically lack theoretical guarantees, calling their reliability in this context into question [28, 45]. Empirically, it is known that generative models of any kind are susceptible to so-called hallucinations. Hallucinations occur when a generative model adds an important feature to its output that was not supposed to be there, or removes a feature that should have been present. This problem is particularly well-known in the field of language modeling: modern large language models (LLMs) are notoriously prone to generating convincing misinformation or outright nonsense [24, 55]. However, imaging models are not exempt from this problem: a few examples are shown in figure 2. These alterations can have a significant impact on patient care. As such, it is an important open problem to detect when such hallucinations may have occurred and to prevent them from happening if possible. Currently, there are no effective methods to address these issues [30].

Fig. 2. Examples of hallucinations introduced by model-based reconstructions of MR images. (a) In this image from [37], the model-based reconstruction introduces an additional sulcus in the brain which was not present in the original. (b) In this image taken from [18], the model-based reconstruction removes evidence of a meniscal tear that is present in the original data.
In this work, we aim to contribute towards effective mitigation of hallucinations in medical imaging by crafting an algorithm which actively provokes them in state-of-the-art model-based reconstructions. Our motivation is that such an algorithm would facilitate follow-up research into the detection and mitigation of hallucinations. For instance, the algorithm could be used to create a data set of reconstructions containing hallucinations. Combined with clean reconstructions, a training data set can be created to train a detector that flags hallucinations. Alternatively, the algorithm could also serve as a component in an adversarial training regime that improves the robustness of generative models such that hallucinations occur less frequently.

We evaluate our attack on the fastMRI data set [56] against UNet [43] and E2E-VarNet [46] models, which have been found to obtain good performance on these reconstruction tasks. Our attack seems to be highly successful at eliciting hallucinations via imperceptibly small perturbations of the input, leading to the conclusion that these models are highly unstable and hallucinations can easily arise in practice purely as a result of noise. We perform further experiments to attempt to detect the hallucinations using standard image quality metrics, such as peak signal-to-noise ratio (PSNR), normalized root mean squared error (NRMSE) and structural similarity index (SSIM; [53]). We find that the distributions of these metrics overlap almost completely and hence cannot be used to distinguish reliable reconstructions from those containing hallucinations. The code to reproduce our experiments is available at https://github.com/saeyslab/adversarial-mri.

2 Background

Many imaging reconstruction problems are essentially instances of a particular linear inverse problem [41, 51]. Formally, we observe some noisy vector $y \in \mathbb{C}^m$
which is assumed to be produced according to a so-called forward model:

$$y = \Phi \cdot x^\natural + e. \tag{1}$$

Here, $x^\natural \in \mathbb{C}^n$ is the true signal, $\Phi \in \mathbb{C}^{m \times n}$ is a measurement matrix (also referred to as the forward transform) and $e \in \mathbb{C}^m$ is noise. We always have $m < n$, so the original signal $x^\natural$ is subject to both compression (via $\Phi$) and additive noise (given by $e$). The goal is to reconstruct $x^\natural$ as faithfully as possible given the noisy and compressed observations $y$.

MRI and CT scans present typical examples of linear inverse problems. In CT, the observations $y$ are sinograms obtained via X-ray projections of the original image $x^\natural$ from various directions [57]. In the case of MRI, the observations are spatial frequencies in Fourier space, referred to as $k$-space in the literature [30]. Mathematically, the measurement matrices of CT and MRI are given by the discrete Radon transform [8] and discrete Fourier transform [54], respectively.

The solutions to linear inverse problems are typically obtained via iterative optimization procedures based on a probabilistic model of the noise and the forward transform. The conditional density $p_{Y \mid X}(y \mid x)$ is usually known from the physics of the measurement process. The most common choice is to assume Gaussian noise, so that $y$ is normally distributed around $\Phi \cdot x^\natural$. This leads to an optimization problem known as basis pursuit [16]:

$$\hat{x} = \arg\min_{x \in \mathbb{C}^n} \frac{1}{2} \| y - \Phi \cdot x \|_2^2 + \lambda R(x). \tag{2}$$

The $L_2$ penalty is referred to as the data consistency objective and is a direct result of the Gaussian assumption on $e$. The second term, $R(x)$, is a regularizer which depends on the choice of prior $p_X(x)$. Common choices for $R$ include the $L_1$ norm in image space, $\| x \|_1$; the $L_1$ norm in wavelet space, $\| \Psi \cdot x \|_1$, where $\Psi$ is a discrete wavelet transform [49]; or the total variation of $x$ [14].
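
As a rough illustration of the forward model (1) and the objective (2), the following NumPy sketch builds a toy undersampled Fourier measurement of a sparse 1-D signal. The sizes, the random sampling pattern, the noise level, the value of `lam` and the helper name `objective` are assumptions made purely for illustration; they are not taken from the experiments in this paper.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy setup: a 1-D "image" with n samples, of which only
# m < n Fourier coefficients are measured.
n, m = 64, 24
x_true = np.zeros(n)
x_true[[5, 20, 41]] = [1.0, -0.5, 2.0]        # sparse true signal

# Measurement matrix Phi: m randomly chosen rows of the unitary DFT matrix.
dft = np.fft.fft(np.eye(n), norm="ortho")
rows = np.sort(rng.choice(n, size=m, replace=False))
Phi = dft[rows, :]                             # shape (m, n)

# Forward model (1): y = Phi x + e with complex Gaussian noise e.
e = 0.01 * (rng.standard_normal(m) + 1j * rng.standard_normal(m))
y = Phi @ x_true + e


def objective(x, y, Phi, lam):
    """Objective (2) with the L1 norm in image space as the regularizer R."""
    data_consistency = 0.5 * np.linalg.norm(y - Phi @ x) ** 2
    return data_consistency + lam * np.linalg.norm(x, 1)


# Simple baseline estimate: apply the adjoint of Phi to the measurements,
# which treats every unmeasured frequency as zero.
x_adjoint = Phi.conj().T @ y

print(objective(x_true, y, Phi, lam=0.1))
print(objective(x_adjoint, y, Phi, lam=0.1))
```

An iterative solver such as FISTA would minimize `objective` over `x`; the sketch only evaluates it at two candidate signals.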
The resulting reconstruction algorithms are well-documented in various surveys and textbooks [25].

2.1 Model-based reconstruction

The success of deep learning approaches in the image domain suggests that deep neural networks may have potential in determining good solutions to linear inverse problems for medical imaging. While it is possible to train a neural network to directly learn the mapping from the observation space to the original image space [29], this strategy poses the risk of hallucinations [9, 28, 30] and lacks formal guarantees on the reconstruction quality. In particular, when a neural network is used to reconstruct the image directly, it is not clear what the underlying inverse problem actually is that is being solved, which casts doubt on the reliability of the results [45].

In light of these issues, some authors have attempted to use deep networks as a regularizer rather than a full reconstruction algorithm. Specifically, they retain the original optimization problem (2) but incorporate the neural network into the regularizer $R(x)$. This is the approach taken by the MoDL framework [1], which reconstructs the image by solving (2) with $R(x) = \| x - F_\theta(x) \|_2^2$, where $F_\theta$ is a trained denoising neural network. In this case, the regularization consists of minimizing the estimated noise in the image as determined by a neural network reconstruction.

2.2 Adversarial perturbations

Adversarial perturbations are worst-case alterations of the inputs to a machine learning model, in the sense that they are intended to be imperceptible or harmless to a human observer while causing models to make mistakes [48]. In the image domain, they typically take the form of (nearly) imperceptible distortions. For discriminative models, the goal of adversarial perturbations is usually to cause misclassification.
In the case of generative models, performance is generally harder to quantify but is usually measured by comparing the output of the model to a reference, for example via the mean squared error (MSE) or the structural similarity index measure (SSIM) [53]. Adversarial perturbations have long been the subject of much research [10, 11], and while certain promising defenses have been proposed, the problem is still far from being considered “solved” in any meaningful way [21, 40]. In this work, we will make use of adversarial perturbations to craft imperceptible distortions that, when added to an MR image, result in hallucinations when reconstructed by a neural network.

2.3 Related work

Adversarial perturbations for medical images are not new, and many attacks and defenses have been proposed to date [23, 31]. However, the vast majority of existing work focuses on classification tasks, which is the most straightforward setting for an adversarial attack because success is easy to measure through misclassification rate. By contrast, we focus here on attacking reconstruction tasks, which is a generative setting where the objective is to remove undesirable artifacts from the data and improve visual quality.

In this vein, [22] also consider the UNet and E2E-VarNet models we study here and compare their robustness to classical methods such as total variation (TV) minimization. They find that both neural networks as well as classical reconstruction algorithms are vulnerable to adversarial perturbations specifically tailored to the methods in question. This finding seems to contradict other studies such as [26] who claim that TV minimization is provably robust. In fact, [26] further find that neural networks are resilient against adversarial perturbations, which also contradicts our own results.
Although the existing body of work on robustness of image reconstruction seems to favor the view that classical reconstruction algorithms are stable whereas neural networks are not, consensus on this question does not seem to have been reached yet.

Theoretical results on the stability of classical reconstruction algorithms have been obtained by [2]. From their work, we can conclude that classical methods should not suffer from the same instability with respect to small perturbations, given the favorable continuity properties these methods enjoy. [20] prove several results related to the (non-)existence of accurate and stable neural networks for inverse problems. They also introduce FIRENETs, a family of neural networks that can be efficiently trained and have provable stability properties. More recently, [28] related the instabilities of model-based reconstruction methods to the kernel of the measurement matrix. Their main results are broadly applicable to many reconstruction algorithms (not merely neural networks), and consist of a few “no free lunch” theorems which show that (paradoxically) higher performance increases the probability of hallucination, and that hallucinations are not rare events (in the sense of having non-zero probability of occurring).

The work that comes closest to ours is [36], who also study adversarial perturbations for MRI reconstruction. They evaluate adversarial attacks against UNet [43] and E2E-VarNet [46] on the fastMRI data set [56]. These attacks are untargeted in the sense that they aim to maximize the reconstruction error within a specified region with respect to fully sampled reference images. By contrast, in this work, we study a targeted adversarial attack which aims to insert a specific detail into the reconstruction that is not actually present.
This attack requires no reference data and supports any type of detail which can be efficiently rendered onto an image. We also perform additional experiments in an attempt to detect the hallucinations we inserted using standard image quality metrics, such as PSNR, NRMSE and SSIM, and find that these are ineffective.

3 Method

The goal of our attack is to craft invisible perturbations such that model-based reconstructions of the perturbed data points exhibit distortions that could mislead diagnostic interpretation. To achieve this, given a $k$-space data vector $z \in \mathbb{C}^n$ and a reconstruction map $F : \mathbb{C}^n \to \mathbb{R}^n$, we solve the following optimization problem:

$$\delta^\star = \arg\min_{\delta \in \mathbb{C}^n} \frac{\| m \odot (F(z + \delta) - y_t) \|_2^2}{\| m \|_1} + \frac{\| (1 - m) \odot (F(z + \delta) - F(z)) \|_2^2}{\| 1 - m \|_1} \tag{3}$$

subject to the constraint $\| \delta \|_\infty \leq \varepsilon$, where $\varepsilon > 0$ is the perturbation budget, $m \in \mathbb{R}^n$ is a binary mask, and $y_t$ is a target reconstruction. The budget $\varepsilon$ must be sufficiently small so that the generated perturbations are invisible to the naked eye, but large enough to allow insertion of the desired hallucinations in the reconstruction. The specific value therefore depends on the application; see section 4 for further details.

The target reconstruction $y_t$ is designed by drawing a short white line in the center of the original reconstruction $F(z)$. The mask $m$ delimits the region where this line was added. In this way, the objective function in (3) strikes a balance between the insertion of the specified detail on the one hand and faithful reconstruction of the image on the other: the first term in the loss penalizes the difference between the reconstruction and the target image inside the masked region, whereas the second term penalizes the difference between the perturbed reconstruction and the unperturbed reconstruction $F(z)$ outside of it. No ground truth image is needed for either term.
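
The objective in (3), together with a projected signed-gradient iteration in the spirit of the basic iterative method, can be sketched as follows. This is a minimal sketch and not the algorithm from appendix A: the reconstruction map `F` is replaced by a hypothetical linear toy map (the real part of an inverse DFT) so that the gradient can be written by hand, whereas a neural network would require automatic differentiation. The signal is 1-D, and the values of `eps`, `alpha` and `steps` are illustrative assumptions.

```python
import numpy as np


def masked_loss(recon, recon_clean, target, mask):
    """Objective (3): match `target` inside the mask and stay close to the
    unperturbed reconstruction outside of it."""
    inside = np.sum((mask * (recon - target)) ** 2) / np.sum(mask)
    outside = np.sum(((1 - mask) * (recon - recon_clean)) ** 2) / np.sum(1 - mask)
    return inside + outside


# Toy stand-in for the reconstruction map F (an assumption, not a real model).
n = 32
C = np.real(np.fft.ifft(np.eye(n), norm="ortho"))  # real part of the inverse DFT


def F(z):
    """Hypothetical linear 'reconstruction' of a k-space vector z."""
    return np.real(np.fft.ifft(z, norm="ortho"))


rng = np.random.default_rng(0)
z = np.fft.fft(rng.random(n), norm="ortho")         # clean k-space vector
recon_clean = F(z)

mask = np.zeros(n)
mask[15:18] = 1.0                                   # small central target region
target = recon_clean.copy()
target[15:18] += 1.0                                # brightened region, a 1-D "white line"


def grad(delta):
    """Gradient of (3) w.r.t. a real-valued delta; valid only for the linear toy F."""
    recon = recon_clean + C @ delta
    g_in = 2 * C.T @ (mask * (recon - target)) / mask.sum()
    g_out = 2 * C.T @ ((1 - mask) * (recon - recon_clean)) / (1 - mask).sum()
    return g_in + g_out


def bim_attack(eps, alpha, steps):
    delta = np.zeros(n)                             # only real components are perturbed
    for _ in range(steps):
        delta = delta - alpha * np.sign(grad(delta))  # signed gradient step
        delta = np.clip(delta, -eps, eps)             # project onto the L-infinity ball
    return delta


delta = bim_attack(eps=0.1, alpha=0.01, steps=50)
loss_before = masked_loss(recon_clean, recon_clean, target, mask)
loss_after = masked_loss(F(z + delta), recon_clean, target, mask)
print(loss_before, loss_after)
```

The clipping step enforces the constraint $\| \delta \|_\infty \leq \varepsilon$ after every update, so the returned perturbation always respects the budget regardless of how many iterations are run.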
The optimal solution is achieved when the perturbation $\delta$ is such that $F(z + \delta)$ is identical to $y_t$ within the target region defined by the mask $m$ and identical to $F(z)$ outside the target region. Pseudocode describing the attack is provided in appendix A. The method is inspired by the basic iterative method (BIM), which is a popular algorithm for generating adversarial examples [33].

Note that $k$-space data is complex-valued, but we consider only real-valued perturbations $\delta$ in our algorithm. This is done to simplify the computations on the one hand, but also because we noticed that, for our purposes, it suffices to only perturb the real components of the $k$-space vector. Hence we leave the imaginary components of all samples unchanged.

Although in this work we only consider lines drawn onto the center of the image, the attack we developed here is agnostic to the specific target. Indeed, the attack can use any target $y_t$ with associated mask $m$ and hence can be used to provoke all kinds of hallucinations, as long as they can be efficiently rendered onto an image. We chose lines here because they are simple yet already highly effective at introducing unwanted artifacts, but other shapes such as squares or circles may also be used. An interesting further experiment could be to use parts of other images, which include real pathologies, and render them onto images which do not contain them. In this way, a convincing meniscal tear might be hallucinated onto an image of a healthy knee, for instance. This can be facilitated using annotations of pathologies in existing MRI data sets, such as fastMRI+.⁴

4 Experiments

We evaluated our attack algorithm on the fastMRI data set [56] using a pretrained UNet [43] and E2E-VarNet [46]. We consider single-coil and multi-coil knee images, as well as multi-coil brain images.
We do not evaluate E2E-VarNet on the single-coil knee images, since there were no pretrained checkpoints available for that model on this part of the fastMRI data set. The adversarial perturbations are generated by solving (3) using algorithm A.1 with $T = 150$ iterations. The magnitude of the perturbation was bounded in the $L_\infty$ norm to a maximum of $\varepsilon = 1 \times 10^{-6}$ with a step size of $\alpha = 1 \times 10^{-7}$.

⁴ https://github.com/microsoft/fastmri-plus

4.1 Results

We performed a quantitative evaluation of the results using peak signal-to-noise ratio (PSNR), normalized root mean squared error (NRMSE), and structural similarity index measure (SSIM). These metrics are computed separately for the pairs of original and perturbed input samples, as well as the pair of original and perturbed reconstructions. We also investigated the value of the objective function in (3) and found that it was almost always negligibly small across all models and data sets (on the order of $10^{-8}$ or less). We therefore do not include these values in the report, but we conclude that they are indicative of a successful attack.

Table 1. Summary statistics of the experiments. The reported numbers are means over the entire data set along with their standard deviations in parentheses. For each model and data set, the first row compares the zero-filled inputs and the second row compares the reconstructions.

| Model | Data | Pair | PSNR ↑ | NRMSE ↓ | SSIM ↑ |
|---|---|---|---|---|---|
| UNet | sc-knee | zero-filled inputs | 55.60 (6.18) | 0.01 (0.01) | 1.00 (0.00) |
| | | reconstructions | 34.24 (10.10) | 0.17 (0.33) | 0.95 (0.10) |
| | mc-knee | zero-filled inputs | 50.35 (5.74) | 0.02 (0.01) | 1.00 (0.00) |
| | | reconstructions | 28.48 (4.62) | 0.23 (0.13) | 0.82 (0.13) |
| | mc-brain | zero-filled inputs | 61.72 (4.58) | 0.01 (0.00) | 1.00 (0.00) |
| | | reconstructions | 26.00 (11.06) | 0.76 (1.07) | 0.68 (0.34) |
| VarNet | mc-knee | zero-filled inputs | 50.69 (6.24) | 0.02 (0.01) | 1.00 (0.01) |
| | | reconstructions | 31.70 (1.70) | 0.42 (0.18) | 0.93 (0.03) |
| | mc-brain | zero-filled inputs | 60.10 (4.44) | 0.01 (0.00) | 1.00 (0.00) |
| | | reconstructions | 41.72 (7.48) | 0.10 (0.10) | 0.98 (0.03) |

Summary statistics of these metrics are given in table 1, with values rounded to two decimal places.
For every metric $M \in \{\text{PSNR}, \text{NRMSE}, \text{SSIM}\}$, we report the mean and standard deviation of two values across the data set:

1. The metric computed on the zero-filled⁵ original and adversarial input samples, $M(\mathrm{ZF}(z), \mathrm{ZF}(z + \delta))$, where $\mathrm{ZF}(\cdot)$ denotes the zero-filling operation.
2. The metric computed on the reconstructions, $M(F(z), F(z + \delta))$.

We report these two values for each metric to gauge the success of the attack: in each case, we expect the metric computed on the zero-filled pair to be “good” (high in the case of PSNR and SSIM, low for NRMSE), but the reconstructions should be noticeably worse.

We do indeed observe this pattern in table 1: the metric values are consistently better for the zero-filled originals compared to the reconstructions. For the originals, mean PSNR values are always at least 50 dB, the mean NRMSE is 0.02 or less and the mean SSIM is almost 100%. This suggests that the clean input samples and the adversarial samples are visually indistinguishable, as desired. On the other hand, the metric values for the reconstructed samples are noticeably worse: mean PSNR values drop to around 40 dB or less, NRMSE increases by at least an order of magnitude and SSIM values can drop by roughly thirty percentage points. Although these values still indicate that the reconstructions are similar, there is a significant difference. Moreover, it is expected for the reconstructions to retain much similarity, because the artificial detail we added using our adversarial attack only covers a small portion of the image.

⁵ Zero-filling is the most basic reconstruction method where we simply apply an inverse Fourier transform on the raw $k$-space data (possibly padded with additional zeros), without making any attempt at reducing noise.
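
The first of the two comparisons above can be sketched in NumPy as follows. This is a sketch under stated assumptions: the normalization of NRMSE by the norm of the reference and the use of the reference maximum as the PSNR peak are common conventions, but the paper does not state which ones were used. The helper names, the random test image and the perturbation size are hypothetical, and SSIM, FFT shifting and multi-coil combination are omitted.

```python
import numpy as np


def zero_fill(kspace):
    """Zero-filled reconstruction: magnitude of the inverse 2-D Fourier transform."""
    return np.abs(np.fft.ifft2(kspace, norm="ortho"))


def nrmse(reference, estimate):
    """Root mean squared error, normalized by the Euclidean norm of the reference."""
    return np.linalg.norm(reference - estimate) / np.linalg.norm(reference)


def psnr(reference, estimate):
    """Peak signal-to-noise ratio in dB, with the reference maximum as the peak."""
    mse = np.mean((reference - estimate) ** 2)
    return 10 * np.log10(reference.max() ** 2 / mse)


rng = np.random.default_rng(0)
image = rng.random((32, 32))                       # placeholder image, not MRI data
z = np.fft.fft2(image, norm="ortho")               # its fully sampled k-space
delta = 1e-3 * rng.uniform(-1, 1, size=z.shape)    # small real-valued perturbation

zf_clean, zf_adv = zero_fill(z), zero_fill(z + delta)
print(psnr(zf_clean, zf_adv), nrmse(zf_clean, zf_adv))
```

The same two functions would be applied to the pair $F(z)$ and $F(z + \delta)$ to obtain the second comparison.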
Fig. 3. Distributions of the metric values (PSNR, MSE and SSIM; original versus adversarial) for the UNet model: (a) sc-knee, (b) mc-knee, (c) mc-brain.

To further investigate the extent to which our attack can be called successful, we plot the full distributions of the metric values in figure 3 for the UNet model and figure 4 for the VarNet model. These values are visualized as histogram-based ridge plots, showing the overlap between the distributions. Note that the MSE values are shown on a semi-logarithmic scale.

Consistent with table 1, we observe that the distributions of all metric values tend to be well-separated between the original samples and the reconstructions. Some exceptions to this can be observed: the distributions of NRMSE for the sc-knee data reconstructed by the UNet model largely overlap, as do the distributions of SSIM values for the sc-knee data reconstructed by the UNet model and mc-brain data reconstructed by the E2E-VarNet model. In all of these cases, however, we observe that the metrics involved have a significantly larger spread after reconstruction. This indicates that the hallucinated details may not always be very visible, but given the large variance in the values, the attack is still very likely to produce noticeable distortions for the majority of inputs.

A qualitative assessment of the generated images was also performed but has been deferred to appendix B due to space constraints. From those results,
we conclude that the adversarially perturbed samples can lead to realistic reconstructions which exhibit biologically plausible distortions that could mislead expert interpretation, and that the insertion of the artificial detail will often cause further distortion far beyond the target region delineated by our mask.

Fig. 4. Distributions of the metric values (PSNR, MSE and SSIM; original versus adversarial) for the E2E-VarNet model: (a) mc-knee, (b) mc-brain.

4.2 Detecting hallucinations

In this section, we turn to the question of detectability of our generated hallucinations. We consider a simple threat model where an adversary may have contaminated part of a data set using perturbations generated by algorithm A.1. The defender is given access only to $k$-space vectors $z_1, \dots, z_N$ and must decide which (if any) of the samples have been corrupted.

As argued above, it is expected that classical reconstruction algorithms such as total variation, conjugate gradients and FISTA do not suffer from hallucinations. It therefore stands to reason that such algorithms may be used to compute an expected distribution of residual reconstruction errors against which we may test the model-based reconstructions. Specifically, given a potentially perturbed $k$-space vector $z$, we ask whether it is possible to detect hallucinations using standard image quality metrics applied to the pair $R(z)$ and $F(z)$, where $R(\cdot)$ denotes some classical reconstruction algorithm.

We test this hypothesis by first using algorithm A.1 to generate a data set of perturbed $k$-space vectors $\tilde{z}_1, \dots, \tilde{z}_N$.
Then, for each unperturbed $k$-space vector $z$ and its adversarially perturbed counterpart $\tilde{z}$, we compute PSNR, NRMSE and SSIM metrics on the pairs of reconstructions $(\mathrm{TV}(z), F(z))$ and $(\mathrm{TV}(\tilde{z}), F(\tilde{z}))$, where $\mathrm{TV}(\cdot)$ is the total variation reconstruction algorithm as implemented in the SigPy library⁶ using sensitivity maps estimated by ESPIRiT [52]. If these distributions are well-separated, then the defender can simply compute these metrics for each sample in the data set and compare them to a pre-determined threshold.

⁶ https://github.com/mikgroup/sigpy

The distributions of the resulting values are given in figure 5 for the UNet model and figure 6 for the E2E-VarNet model. From these results we observe that, for every metric, the two distributions almost entirely overlap. The only exception seems to be the SSIM metric on the mc-brain data for the UNet model, which exhibits an unusual peak near 10%. However, this pattern never repeats for any of the other data sets and does not appear at all for the E2E-VarNet model. It therefore seems unreliable, and the distributions still overlap sufficiently to make any attempt at separation using the SSIM prone to error.

Fig. 5. Distributions of the metric values (PSNR, MSE and SSIM; original versus adversarial) for the UNet model using total variation reconstructions: (a) sc-knee, (b) mc-knee, (c) mc-brain.

We stress that algorithm A.1 was not explicitly constructed to maximize overlap between these metrics.
Indeed, our attack merely minimizes (3) and does not make any use of other reconstruction algorithms, nor does it directly optimize PSNR or SSIM. Yet it appears this approach already suffices to make the generated hallucinations essentially undetectable using standard image quality metrics. As is common practice in adversarial attacks [50], algorithm A.1 may be extended by including such additional objectives into the loss function in order to make the perturbations even less detectable, but it seems this is not necessary here.

Note that we do not explore the possibility of training model-based detectors for these perturbations here. This omission is due to our expectation that such approaches are bound to be ineffective, since the field of adversarial machine learning has learned through experience [4, 13, 40, 50] that adversarial attacks can be easily adapted to incorporate the outputs of such detectors. Hence, an evaluation where we train a neural network to differentiate between clean and perturbed $k$-space vectors would merely serve to create a false sense of security, and would likely result in a swift break of the detector in a follow-up work.

Fig. 6. Distributions of the metric values (PSNR, MSE and SSIM; original versus adversarial) for the E2E-VarNet model using total variation reconstructions: (a) mc-knee, (b) mc-brain.

These findings suggest that, in order to solve the problem of hallucinations in model-based MRI reconstructions, mathematically principled approaches will be needed that give rise to certified defenses or detectors. In this vein, carefully constructed adversarial training regimes [34, 35] or the application of denoised smoothing [19, 44] may be explored.
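
The threshold test considered in this section can be made concrete with a small sketch that asks how well the best possible threshold on a single metric separates clean from perturbed samples. The function name `best_threshold_accuracy` and the use of balanced accuracy are assumptions for illustration, and the scores below are synthetic placeholders, not results from the experiments. A value near 0.5 means the two distributions overlap so much that thresholding is no better than guessing.

```python
import numpy as np


def best_threshold_accuracy(clean_scores, adv_scores):
    """Best balanced accuracy of any rule that flags a sample when its metric
    value lies on one side of a threshold t."""
    clean = np.asarray(clean_scores, dtype=float)
    adv = np.asarray(adv_scores, dtype=float)
    best = 0.5
    for t in np.unique(np.concatenate([clean, adv])):
        flagged_adv = np.mean(adv <= t)       # perturbed samples correctly flagged
        passed_clean = np.mean(clean > t)     # clean samples correctly passed
        acc = 0.5 * (flagged_adv + passed_clean)
        best = max(best, acc, 1.0 - acc)      # 1 - acc is the reversed rule
    return best


rng = np.random.default_rng(0)
# Synthetic placeholder scores (NOT taken from the paper).
overlapping = best_threshold_accuracy(rng.normal(15, 3, 500), rng.normal(15, 3, 500))
separated = best_threshold_accuracy(rng.normal(30, 1, 500), rng.normal(10, 1, 500))
print(overlapping, separated)
```

In the setting of this section, `clean_scores` would hold a metric such as PSNR computed on the pairs $(\mathrm{TV}(z), F(z))$ and `adv_scores` the same metric on the pairs $(\mathrm{TV}(\tilde{z}), F(\tilde{z}))$.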
5 Conclusions

We have proposed an adversarial attack which can insert hallucinations into model-based MRI reconstructions via invisible perturbations of the input samples. We evaluated the algorithm using several image quality metrics and found that it often succeeds in causing significant distortion of the reconstructed samples while remaining invisible to the naked eye in input space. Our results imply that, in the absence of reference data, such distortions are not easily detected using “traditional” metrics such as peak signal-to-noise ratio, mean squared error or structural similarity index. Moreover, a qualitative assessment of the results indicates that whenever our attack is successful, it tends to cause further biologically plausible distortions beyond the neighborhood of the artificially inserted detail.

We conclude that state-of-the-art model-based MRI reconstruction algorithms are highly unstable with respect to small noise and can easily be induced to insert hallucinatory structures. This poses a risk when these models are used in medical contexts, where such perturbations may arise spontaneously through noise and may affect diagnosis and eventual treatment of patients. Future work in this area should focus on reducing the instability of reconstruction maps to small perturbations as well as developing detection mechanisms which can signal when reconstructions may be unreliable. The attack introduced in this work may form the basis for an adversarial training regime that could improve the robustness of model-based MRI reconstruction or serve as a baseline against which to benchmark potential detection approaches.
Given the historical failure of many approaches to detecting adversarial perturbations, we argue that future research in this direction should focus on mathematically principled techniques that lead to detectors with provable correctness even in the presence of worst-case perturbations. It is known that detecting adversarial perturbations is a very hard problem in general, and no satisfying general-purpose solutions exist at this time [11, 40]. However, when the problem is constrained specifically to the detection of hallucinations in MRI reconstruction, existing mathematical results in compressed sensing may be employed which are not available in more general settings [17, 39, 47]. It is our hope that this may lead to provable solutions to the problem of hallucinations in model-based MRI reconstruction.

Acknowledgments. Suna Buğday was supported by an Erasmus+ mobility grant during her stay at Ghent University.

References

1. Aggarwal, H.K., Mani, M.P., Jacob, M.: MoDL: Model-based deep learning architecture for inverse problems. IEEE Transactions on Medical Imaging 38(2), 394–405 (2018)
2. del Aguila Pla, P., Neumayer, S., Unser, M.: Stability of image-reconstruction algorithms. IEEE Transactions on Computational Imaging 9, 1–12 (2023)
3. Aromal, C., Datta, S.: FISTA-NET: Compressed sensing MRI reconstruction using unrolled iterative networks. In: 2024 IEEE 21st India Council International Conference (INDICON). pp. 1–6. IEEE (2024)
4. Athalye, A., Carlini, N., Wagner, D.: Obfuscated gradients give a false sense of security: Circumventing defenses to adversarial examples. In: International Conference on Machine Learning. pp. 274–283. PMLR (2018)
5. Ballinger, J., Wilczek, M., Knipe, H.: Acquisition time (Apr 2013). https://doi.org/10.53347/rid-22553
6. Beck, A., Teboulle, M.: A fast iterative shrinkage-thresholding algorithm for linear inverse problems.
   SIAM Journal on Imaging Sciences 2(1), 183–202 (2009)
7. Bellon, E.M., Haacke, E.M., Coleman, P.E., Sacco, D.C., Steiger, D.A., Gangarosa, R.E.: MR artifacts: a review. American Journal of Roentgenology 147(6), 1271–1281 (1986)
8. Beylkin, G.: Discrete Radon transform. IEEE Transactions on Acoustics, Speech, and Signal Processing 35(2), 162–172 (1987)
9. Bhadra, S., Kelkar, V.A., Brooks, F.J., Anastasio, M.A.: On hallucinations in tomographic image reconstruction. IEEE Transactions on Medical Imaging 40(11), 3249–3260 (2021)
10. Biggio, B., Corona, I., Maiorca, D., Nelson, B., Šrndić, N., Laskov, P., Giacinto, G., Roli, F.: Evasion attacks against machine learning at test time. In: Joint European Conference on Machine Learning and Knowledge Discovery in Databases. pp. 387–402. Springer (2013)
11. Biggio, B., Roli, F.: Wild patterns: Ten years after the rise of adversarial machine learning. In: Proceedings of the 2018 ACM SIGSAC Conference on Computer and Communications Security. pp. 2154–2156 (2018)
12. Block, K.T., Uecker, M., Frahm, J.: Undersampled radial MRI with multiple coils. Iterative image reconstruction using a total variation constraint. Magnetic Resonance in Medicine: An Official Journal of the International Society for Magnetic Resonance in Medicine 57(6), 1086–1098 (2007)
13. Carlini, N., Wagner, D.: Adversarial examples are not easily detected: Bypassing ten detection methods. In: Proceedings of the 10th ACM Workshop on Artificial Intelligence and Security. pp. 3–14 (2017)
14. Chambolle, A., Caselles, V., Cremers, D., Novaga, M., Pock, T., et al.: An introduction to total variation for image analysis. Theoretical Foundations and Numerical Methods for Sparse Recovery 9(263-340), 227 (2010)
15. Chattopadhyay, N., Chattopadhyay, A., Gupta, S.S., Kasper, M.: Curse of dimensionality in adversarial examples. In: 2019 International Joint Conference on Neural Networks (IJCNN).
   pp. 1–8. IEEE (2019)
16. Chen, S., Donoho, D.: Basis pursuit. In: Proceedings of 1994 28th Asilomar Conference on Signals, Systems and Computers. vol. 1, pp. 41–44. IEEE (1994)
17. Chen, Y., Caramanis, C., Mannor, S.: Robust sparse regression under adversarial corruption. In: International Conference on Machine Learning. pp. 774–782. PMLR (2013)
18. Cheng, K., Calivá, F., Shah, R., Han, M., Majumdar, S., Pedoia, V.: Addressing The False Negative Problem of Deep Learning MRI Reconstruction Models by Adversarial Attacks and Robust Training. In: Arbel, T., Ben Ayed, I., de Bruijne, M., Descoteaux, M., Lombaert, H., Pal, C. (eds.) Proceedings of the Third Conference on Medical Imaging with Deep Learning. Proceedings of Machine Learning Research, vol. 121, pp. 121–135. PMLR (06–08 Jul 2020), https://proceedings.mlr.press/v121/cheng20a.html
19. Cohen, J., Rosenfeld, E., Kolter, Z.: Certified adversarial robustness via randomized smoothing. In: International Conference on Machine Learning. pp. 1310–1320. PMLR (2019)
20. Colbrook, M.J., Antun, V., Hansen, A.C.: The difficulty of computing stable and accurate neural networks: On the barriers of deep learning and Smale’s 18th problem. Proceedings of the National Academy of Sciences 119(12), e2107151119 (2022)
21. Costa, J.C., Roxo, T., Proença, H., Inacio, P.R.M.: How deep learning sees the world: A survey on adversarial attacks & defenses. IEEE Access 12, 61113–61136 (2024)
22. Darestani, M.Z., Chaudhari, A.S., Heckel, R.: Measuring robustness in deep learning based compressive sensing. In: International Conference on Machine Learning. pp. 2433–2444. PMLR (2021)
23. Dong, J., Chen, J., Xie, X., Lai, J., Chen, H.: Survey on adversarial attack and defense for medical image analysis: Methods and challenges. ACM Computing Surveys 57(3), 1–38 (2024)
24. Farquhar, S., Kossen, J., Kuhn, L., Gal, Y.: Detecting hallucinations in large language models using semantic entropy.
   Nature 630(8017), 625–630 (2024)
25. Foucart, S., Rauhut, H.: A Mathematical Introduction to Compressive Sensing. Springer New York (2013). https://doi.org/10.1007/978-0-8176-4948-7
26. Genzel, M., Macdonald, J., März, M.: Solving inverse problems with deep neural networks – robustness included? IEEE Transactions on Pattern Analysis and Machine Intelligence 45(1), 1119–1134 (2022)
27. Gilmer, J., Metz, L., Faghri, F., Schoenholz, S., Raghu, M., Wattenberg, M., Goodfellow, I.: Adversarial spheres (2018), https://openreview.net/forum?id=SyUkxxZ0b
28. Gottschling, N.M., Antun, V., Hansen, A.C., Adcock, B.: The troublesome kernel: On hallucinations, no free lunches, and the accuracy-stability tradeoff in inverse problems. SIAM Review 67(1), 73–104 (2025)
29. Hammernik, K., Klatzer, T., Kobler, E., Recht, M.P., Sodickson, D.K., Pock, T., Knoll, F.: Learning a variational network for reconstruction of accelerated MRI data. Magnetic Resonance in Medicine 79(6), 3055–3071 (2018)
30. Heckel, R., Jacob, M., Chaudhari, A., Perlman, O., Shimron, E.: Deep learning for accelerated and robust MRI reconstruction: a review. arXiv preprint arXiv:2404.15692 (2024)
31. Kaviani, S., Han, K.J., Sohn, I.: Adversarial attacks and defenses on AI in medical imaging informatics: A survey. Expert Systems with Applications 198, 116815 (2022)
32. Kawata, S., Nalcioglu, O.: Constrained iterative reconstruction by the conjugate gradient method. IEEE Transactions on Medical Imaging 4(2), 65–71 (2007)
33. Kurakin, A., Goodfellow, I.J., Bengio, S.: Adversarial examples in the physical world. In: Artificial Intelligence Safety and Security, pp. 99–112. Chapman and Hall/CRC (2018)
34. Lee, S., Lee, W., Park, J., Lee, J.: Towards better understanding of training certifiably robust models against adversarial examples.
   In: Ranzato, M., Beygelzimer, A., Dauphin, Y., Liang, P., Vaughan, J.W. (eds.) Advances in Neural Information Processing Systems. vol. 34, pp. 953–964. Curran Associates, Inc. (2021), https://proceedings.neurips.cc/paper_files/paper/2021/file/07c5807d0d927dcd0980f86024e5208b-Paper.pdf
35. Mao, Y., Müller, M., Fischer, M., Vechev, M.: Connecting certified and adversarial training. Advances in Neural Information Processing Systems 36, 73422–73440 (2023)
36. Morshuis, J.N., Gatidis, S., Hein, M., Baumgartner, C.F.: Adversarial robustness of MR image reconstruction under realistic perturbations. In: International Workshop on Machine Learning for Medical Image Reconstruction. pp. 24–33. Springer (2022)
37. Muckley, M.J., Riemenschneider, B., Radmanesh, A., Kim, S., Jeong, G., Ko, J., Jun, Y., Shin, H., Hwang, D., Mostapha, M., et al.: State-of-the-art machine learning MRI reconstruction in 2020: Results of the second fastMRI challenge. arXiv preprint arXiv:2012.06318 2(6) (2020)
38. Muckley, M.J., Riemenschneider, B., Radmanesh, A., Kim, S., Jeong, G., Ko, J., Jun, Y., Shin, H., Hwang, D., Mostapha, M., et al.: Results of the 2020 fastMRI challenge for machine learning MR image reconstruction. IEEE Transactions on Medical Imaging 40(9), 2306–2317 (2021)
39. Peck, J., Goossens, B.: Robust width: A lightweight and certifiable adversarial defense. arXiv preprint arXiv:2405.15971 (2024)
40. Peck, J., Goossens, B., Saeys, Y.: An introduction to adversarially robust deep learning. IEEE Transactions on Pattern Analysis and Machine Intelligence (2023)
41. Ribes, A., Schmitt, F.: Linear inverse problems in imaging. IEEE Signal Processing Magazine 25(4), 84–99 (2008)
42. Roemer, P.B., Edelstein, W.A., Hayes, C.E., Souza, S.P., Mueller, O.M.: The NMR phased array. Magnetic Resonance in Medicine 16(2), 192–225 (1990)
43. Ronneberger, O., Fischer, P., Brox, T.: U-net: Convolutional networks for biomedical image segmentation.
   In: International Conference on Medical Image Computing and Computer-Assisted Intervention. pp. 234–241. Springer (2015)
44. Salman, H., Sun, M., Yang, G., Kapoor, A., Kolter, J.Z.: Denoised smoothing: A provable defense for pretrained classifiers. Advances in Neural Information Processing Systems 33, 21945–21957 (2020)
45. Sidky, E.Y., Lorente, I., Brankov, J.G., Pan, X.: Do CNNs solve the CT inverse problem? IEEE Transactions on Biomedical Engineering 68(6), 1799–1810 (2020)
46. Sriram, A., Zbontar, J., Murrell, T., Defazio, A., Zitnick, C.L., Yakubova, N., Knoll, F., Johnson, P.: End-to-end variational networks for accelerated MRI reconstruction. In: Martel, A.L., Abolmaesumi, P., Stoyanov, D., Mateus, D., Zuluaga, M.A., Zhou, S.K., Racoceanu, D., Joskowicz, L. (eds.) Medical Image Computing and Computer Assisted Intervention – MICCAI 2020. pp. 64–73. Springer International Publishing, Cham (2020)
47. Sulam, J., Muthukumar, R., Arora, R.: Adversarial robustness of supervised sparse coding. Advances in Neural Information Processing Systems 33, 2110–2121 (2020)
48. Szegedy, C., Zaremba, W., Sutskever, I., Bruna, J., Erhan, D., Goodfellow, I., Fergus, R.: Intriguing properties of neural networks. arXiv preprint (2013)
49. Torrence, C., Compo, G.P.: A practical guide to wavelet analysis. Bulletin of the American Meteorological Society 79(1), 61–78 (1998)
50. Tramer, F., Carlini, N., Brendel, W., Madry, A.: On adaptive attacks to adversarial example defenses. Advances in Neural Information Processing Systems 33, 1633–1645 (2020)
51. Tropp, J.A., Wright, S.J.: Computational methods for sparse solution of linear inverse problems. Proceedings of the IEEE 98(6), 948–958 (2010)
52. Uecker, M., Lai, P., Murphy, M.J., Virtue, P., Elad, M., Pauly, J.M., Vasanawala, S.S., Lustig, M.: ESPIRiT—an eigenvalue approach to autocalibrating parallel MRI: where SENSE meets GRAPPA.
   Magnetic Resonance in Medicine 71(3), 990–1001 (2014)
53. Wang, Z., Bovik, A.C., Sheikh, H.R., Simoncelli, E.P.: Image quality assessment: from error visibility to structural similarity. IEEE Transactions on Image Processing 13(4), 600–612 (2004)
54. Winograd, S.: On computing the discrete Fourier transform. Mathematics of Computation 32(141), 175–199 (1978)
55. Xu, Z., Jain, S., Kankanhalli, M.S.: Hallucination is inevitable: An innate limitation of large language models. CoRR abs/2401.11817 (2024), https://doi.org/10.48550/arXiv.2401.11817
56. Zbontar, J., Knoll, F., Sriram, A., Murrell, T., Huang, Z., Muckley, M.J., Defazio, A., Stern, R., Johnson, P., Bruno, M., et al.: fastMRI: An open dataset and benchmarks for accelerated MRI. arXiv preprint arXiv:1811.08839 (2018)
57. Zhang, M., Gu, S., Shi, Y.: The use of deep learning methods in low-dose computed tomography image reconstruction: a systematic review. Complex & Intelligent Systems 8(6), 5545–5561 (2022)

A Pseudocode of the attack

Algorithm A.1 Masked Iterative FGSM Attack
    F      Reconstruction map.
    z      Raw k-space input sample.
    y_t    Target reconstruction with artificial detail.
    m      Binary mask delineating the target region.
    ε      Maximum perturbation magnitude.
    α      Step size per iteration.
    T      Number of iterations.
function MaskedIterativeFGSM(F, z, y_t, m, ε, α, T)
    y_0 ← F(z)                                  ▷ Obtain clean reconstruction
    δ ∼ Unif(−ε, ε)                             ▷ Random initial perturbation
    δ⋆ ← δ                                      ▷ Initial best perturbation
    L_min ← +∞                                  ▷ Initial best loss value
    // Optimization loop
    for t ← 1 to T do
        // Compute perturbed sample
        z_adv ← clip(ℜ(z) + δ, 0, 1) + ℑ(z)
        // Compute loss
        L_1 ← ‖m ⊙ (F(z_adv) − y_t)‖_2^2
        L_2 ← ‖(1 − m) ⊙ (F(z_adv) − F(z))‖_2^2
        L ← L_1 / ‖m‖_1 + L_2 / ‖1 − m‖_1
        // Keep track of the best perturbation
        if L < L_min then
            δ⋆ ← δ
            L_min ← L
        end if
        // Compute update
        δ ← δ − α · sign(∇_δ L)
        δ ← clip(δ, −ε, ε)
    end for
    return δ⋆
end function

B Qualitative assessment of the attack

For a qualitative assessment of the results, we show some examples in figure B.1 for the UNet model and figure B.2 for the E2E-VarNet model of reconstructions where the attack was successful. These figures are structured as 2x2 panels of images where the first row displays the original and perturbed input samples and the second row displays the corresponding model-based reconstructions. Areas which we believe contain hallucinatory structures are highlighted in red.

We notice from figures B.1a and B.1b that the multi-coil images seem to be more vulnerable than the single-coil ones, in the sense that the resulting distortions are more severe for multi-coil data. The generated perturbations also seem easier to spot for single-coil data. This may be explained by the fact that perturbations in the multi-coil images can be more “spread out” across the coils, whereas with single-coil data this is not possible. This is consistent with the observation that vulnerability to adversarial examples increases with data dimensionality [15, 27].
Although we cannot compare to single-coil data for the E2E-VarNet, we do notice large distortions in figure B.2a as well that go far beyond the boundaries of our inserted detail. On the multi-coil brain data, the hallucinations seem less severe for both models compared to the knee data, but the distortions can still be significant as they tend to resemble non-existent sulci. For knee images, the distortions appear to substantially change the structure of the knee joint, especially on multi-coil data.

We conclude that the adversarially perturbed samples can lead to realistic reconstructions which exhibit biologically plausible distortions that could mislead expert interpretation, and that the insertion of the artificial detail will often cause further distortion beyond the original target region. For both models, this is especially apparent in the multi-coil knee data, where the shape of the knee joint tends to change significantly when the detail is inserted.

[Figure B.1: panels (a) sc-knee, (b) mc-knee, (c) mc-brain.]
Fig. B.1. Examples of successful attacks on the UNet model. For each data set, the first row displays the input samples (original on the left, adversarially perturbed on the right) and the second row displays the corresponding outputs. The generated hallucinations are annotated in red.

[Figure B.2: panels (a) mc-knee, (b) mc-brain.]
Fig. B.2. Examples of successful attacks on the E2E-VarNet model. For each data set, the first row displays the input samples (original on the left, adversarially perturbed on the right) and the second row displays the corresponding outputs. The generated hallucinations are annotated in red.
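As a complement to algorithm A.1 in appendix A, the following is a minimal NumPy sketch of the masked iterative FGSM loop. It is not the implementation used in the experiments: the reconstruction map is replaced by a toy linear map F(z) = Az so that the gradient can be written analytically, the input is assumed to be real-valued (so the imaginary part vanishes), and the names `masked_loss` and `masked_iterative_fgsm` are purely illustrative. For a neural reconstruction network, the gradient would instead be obtained by automatic differentiation.

```python
import numpy as np


def masked_loss(A, z_adv, y_t, y_clean, m):
    """Normalized masked loss of algorithm A.1 for the toy map F(z) = A @ z."""
    out = A @ z_adv
    r_in = m * (out - y_t)                 # residual w.r.t. the target inside the mask
    r_out = (1.0 - m) * (out - y_clean)    # deviation from the clean output outside it
    loss = r_in @ r_in / m.sum() + r_out @ r_out / (1.0 - m).sum()
    return loss, r_in, r_out


def masked_iterative_fgsm(A, z, y_t, m, eps, alpha, T, seed=0):
    """Toy masked iterative FGSM.

    Assumes a real-valued z and a binary float mask m that is neither
    empty nor full (both normalizers must be non-zero).
    """
    rng = np.random.default_rng(seed)
    y_clean = A @ z                                  # clean reconstruction
    delta = rng.uniform(-eps, eps, size=z.shape)     # random initial perturbation
    best_delta, best_loss = delta.copy(), np.inf

    for _ in range(T):
        pre = z + delta
        z_adv = np.clip(pre, 0.0, 1.0)               # perturbed sample
        loss, r_in, r_out = masked_loss(A, z_adv, y_t, y_clean, m)

        if loss < best_loss:                         # keep track of the best perturbation
            best_delta, best_loss = delta.copy(), loss

        # Analytic gradient of the loss w.r.t. delta (valid because m is binary);
        # entries where the clip saturates receive zero gradient.
        grad = 2.0 * A.T @ (r_in / m.sum() + r_out / (1.0 - m).sum())
        grad = grad * ((pre > 0.0) & (pre < 1.0))
        delta = np.clip(delta - alpha * np.sign(grad), -eps, eps)

    return best_delta
```

With A set to the identity and a target that slightly raises the masked entries, the returned perturbation should give a lower loss than the unperturbed input. Any values chosen for `eps`, `alpha` and `T` in such a toy run are arbitrary and unrelated to the settings of the experiments.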
