PRISM-FCP: Byzantine-Resilient Federated Conformal Prediction via Partial Sharing

We propose PRISM-FCP (Partial shaRing and robust calIbration with Statistical Margins for Federated Conformal Prediction), a Byzantine-resilient federated conformal prediction framework that utilizes partial model sharing to improve robustness agains…

Authors: Ehsan Lari, Reza Arablouei, Stefan Werner

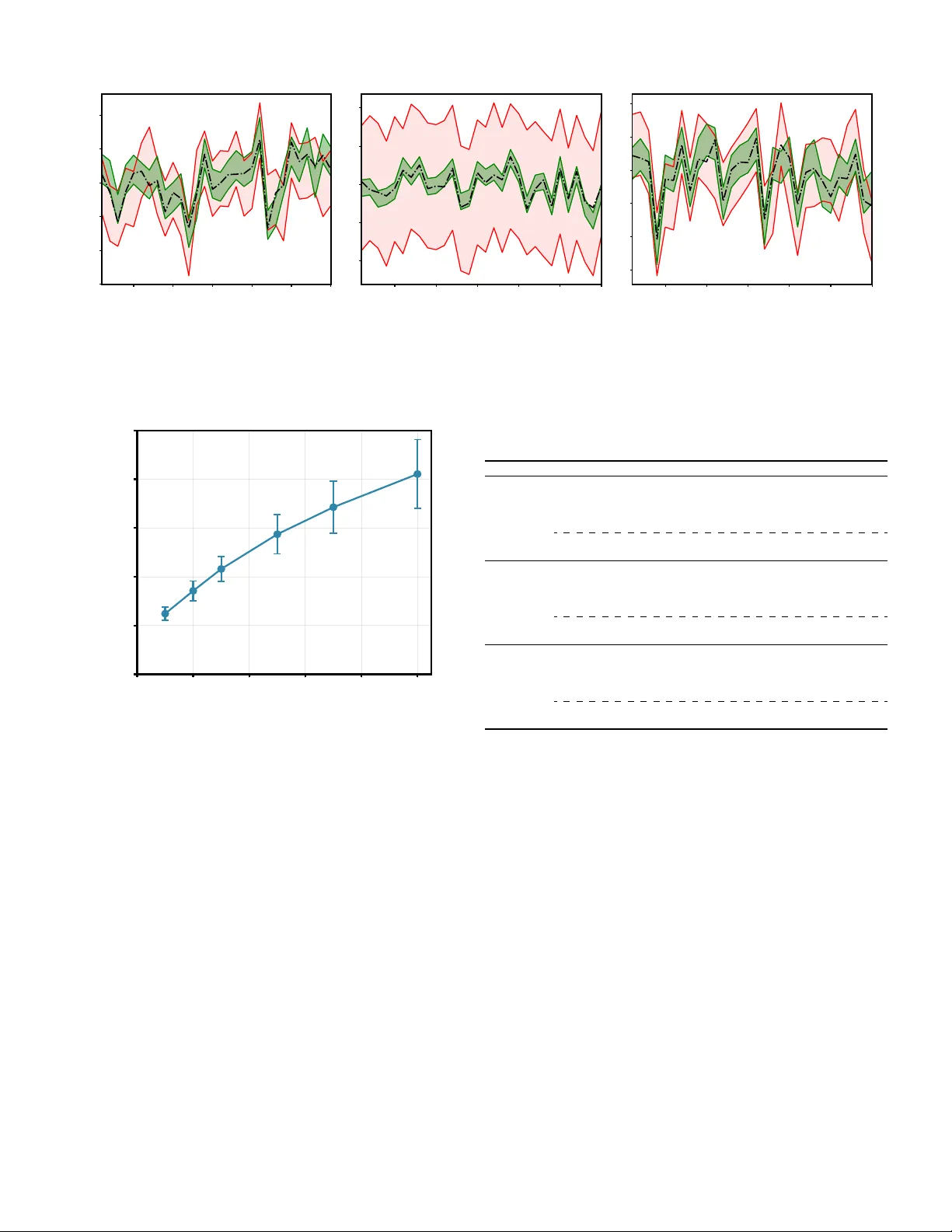

IEEE TRANSACTIONS ON SIGNAL PROCESSING, VOL. XX, 2026

Ehsan Lari, Reza Arablouei, and Stefan Werner, Fellow, IEEE

Abstract: We propose PRISM-FCP (Partial shaRing and robust calIbration with Statistical Margins for Federated Conformal Prediction), a Byzantine-resilient federated conformal prediction framework that utilizes partial model sharing to improve robustness against Byzantine attacks during both model training and conformal calibration. Existing approaches address adversarial behavior only in the calibration stage, leaving the learned model susceptible to poisoned updates. In contrast, PRISM-FCP mitigates attacks end-to-end. During training, clients partially share updates by transmitting only $M$ of $D$ parameters per round. This attenuates the expected energy of an adversary's perturbation in the aggregated update by a factor of $M/D$, yielding lower mean-square error (MSE) and tighter prediction intervals. During calibration, clients convert nonconformity scores into characterization vectors, compute distance-based maliciousness scores, and downweight or filter suspected Byzantine contributions before estimating the conformal quantile. Extensive experiments on both synthetic data and the UCI Superconductivity dataset demonstrate that PRISM-FCP maintains nominal coverage guarantees under Byzantine attacks while avoiding the interval inflation observed in standard FCP with reduced communication, providing a robust and communication-efficient approach to federated uncertainty quantification.

Index Terms: federated learning, conformal prediction, uncertainty quantification, Byzantine attacks, partial sharing

This work was partially supported by the Research Council of Norway and the Research Council of Finland (Grant 354523). A conference version of this work is submitted to the 2026 European Signal Processing Conference (EUSIPCO), Bruges, Belgium, Aug. 2026. (Corresponding author: Ehsan Lari.)
Ehsan Lari is with the Department of Electronic Systems, Norwegian University of Science and Technology, 7491 Trondheim, Norway (e-mail: ehsanl@alumni.ntnu.no).
Reza Arablouei is with the Commonwealth Scientific and Industrial Research Organisation, Pullenvale, QLD 4069, Australia (e-mail: reza.arablouei@csiro.au).
Stefan Werner is with the Department of Electronic Systems, Norwegian University of Science and Technology, 7491 Trondheim, Norway. He is also with the Department of Information and Communications Engineering, Aalto University, 00076, Finland (e-mail: stefan.werner@ntnu.no).
Digital Object Identifier XXXXXX

I. INTRODUCTION

Federated learning (FL) [1]–[5] is a distributed learning paradigm in which a population of clients, such as smartphones, hospitals, or financial institutions, collaboratively train a shared model without exchanging raw data. Over the past few years, FL has progressed from early formulations rooted in distributed optimization [6]–[8] to increasingly large-scale and production-oriented implementations [9]–[11]. This paradigm is particularly attractive when data is inherently decentralized and centralized training is unfeasible or impractical due to privacy, regulatory, or logistical constraints [12]–[14]. However, FL also introduces new attack surfaces: the server must aggregate client-provided updates that are often unverifiable, making the learning process vulnerable to corrupted or malicious participants [15], [16]. As FL moves into high-stakes domains, reliable deployment requires not only accurate point predictions, but also calibrated uncertainty with rigorous guarantees.
For instance, in a network of hospitals collaboratively training a diagnostic model, a single compromised institution could poison its updates to induce systematically overconfident predictions, leading to unsafe clinical decisions that should have been flagged as uncertain.

Conformal prediction (CP) [17]–[21] is a principled framework for uncertainty quantification that constructs prediction sets (or intervals) with user-specified coverage, assuming only that samples are exchangeable. In regression, the distribution-free, finite-sample guarantees established in [22] provide a canonical foundation for prediction interval construction. In addition, extensions to robust conformal inference can maintain validity under distribution shift, including covariate shift [23], a property that is especially relevant in federated environments where client distributions may differ. To bring these benefits to decentralized learning, federated conformal prediction (FCP) has emerged [24]–[28], enabling clients to compute prediction intervals without sharing calibration data and addressing practical constraints such as communication efficiency and heterogeneity across clients (e.g., label shift).

In network environments, Byzantine clients pose a critical challenge due to arbitrary and potentially malicious behavior that can derail collaborative training. The work in [29] formalizes this threat model and demonstrates that even a single Byzantine participant can drive gradient-based learning toward arbitrarily poor solutions. In practice, adversaries may inject misleading gradients or manipulate model updates [30], [31], degrading performance and compromising the integrity of the global model. More recently, self-driven entropy aggregation has been proposed for Byzantine-robust FL under client heterogeneity [32], reflecting the realistic regime in which benign clients themselves hold non-IID data.
For FCP, the threat is amplified as Byzantine behavior can target not only training but also calibration. Training-phase attacks reduce predictive accuracy, whereas calibration-phase attacks can silently violate coverage guarantees by distorting the estimated conformal quantile, producing overly narrow intervals and systematic undercoverage, a particularly dangerous failure mode in safety-critical applications such as clinical decision support or financial risk assessment.

To mitigate Byzantine behavior during training, a large body of work has proposed robust aggregation rules [29], [33], [34]. Coordinate-wise median and trimmed-mean estimators, for example, can suppress outlying (potentially adversarial) updates [33]. Recent advances further include Byzantine client identification with false discovery rate control [35] and privacy-preserving Byzantine-robust mechanisms [36]. However, these approaches are typically most effective when many clients participate in each round (often under assumptions akin to full participation) and can suffer noticeable degradation under strong client heterogeneity and partial participation, conditions that are common in practical FL deployments.

In the context of FCP, the Rob-FCP algorithm [37] addresses calibration-stage attacks by encoding local nonconformity-score distributions into characterization vectors and excluding clients whose vectors deviate significantly from the population, thereby providing certifiable coverage guarantees. Nevertheless, Rob-FCP assumes that training is secure and focuses exclusively on calibration. Conversely, robust training aggregators protect the training phase but remain oblivious to calibration-phase manipulation. As a result, existing defenses are stage-specific and do not provide an end-to-end solution for Byzantine-resilient FCP.
This gap motivates methods that can jointly defend both training and calibration while remaining communication-efficient.

In this paper, we propose PRISM-FCP, which integrates partial-sharing online federated learning (PSO-Fed) [16], [38] with FCP to achieve Byzantine resilience across both training and calibration phases while reducing communication. In each round, every selected client exchanges only a randomly chosen subset of $M$ out of $D$ model parameters with the server. Our key motivation is that partial sharing (originally introduced for communication efficiency) provides a principled Byzantine-robustness effect at no additional computational cost to clients. Specifically, the random parameter mask acts as a stochastic filter: when a Byzantine client injects an adversarial perturbation, the perturbation energy entering the aggregated update is attenuated by a factor of $M/D$ in expectation. This reduced Byzantine influence during training improves model accuracy, which in turn tightens the residual distributions at benign clients. Tighter residuals yield more concentrated nonconformity-score distributions. This increases separability in the characterization-vector space, improving distance-based maliciousness scoring and mitigating coverage loss and interval distortions under attack.

In summary, our main contributions are:
• Training robustness via partial sharing: We demonstrate that partial model sharing attenuates Byzantine perturbations by a factor proportional to $M/D$, reducing the steady-state mean-square error (MSE) and yielding tighter prediction intervals (Section IV).
• Calibration robustness via improved separability: We show that this training-phase attenuation concentrates benign clients' nonconformity-score distributions, improving the separability of characterization vectors and enhancing distance-based detection of Byzantine outliers during calibration (Section IV-D).
• End-to-end empirical validation: Through experiments on synthetic benchmarks and the UCI Superconductivity dataset, we demonstrate that PRISM-FCP preserves nominal coverage under Byzantine attacks that cause standard FCP to fail, while reducing communication relative to full-model-sharing baselines (Section V).

We organize the remainder of the paper as follows. In Section II, we introduce the system model, review the PSO-Fed algorithm, and summarize CP in federated settings. In Section III, we present PRISM-FCP. In Section IV, we theoretically analyze Byzantine perturbation attenuation under partial sharing and derive the resulting interval-width scaling. In Section IV-D, we examine how training-phase attenuation improves calibration-phase Byzantine detection. In Section V, we verify our theoretical findings through numerical experiments. Finally, in Section VI, we present some concluding remarks.

Mathematical Notations: We denote scalars by italic letters, column vectors by bold lowercase letters, and matrices by bold uppercase letters. The superscripts $(\cdot)^\intercal$ and $(\cdot)^{-1}$ denote the transpose and inverse operations, respectively, and $\|\cdot\|$ denotes the Euclidean norm. We use $\mathbb{1}\{\cdot\}$ as the indicator function of its event argument and $\mathbf{I}_D$ for the $D \times D$ identity matrix. Lastly, $\mathrm{tr}(\cdot)$ denotes the trace of a matrix, and calligraphic letters such as $\mathcal{S}$ and $\mathcal{B}$ denote sets.

II. PRELIMINARIES

In this section, we introduce the considered system model, review the partial sharing mechanism used in PSO-Fed [15], [16], [38], [39], and outline CP in a federated setting.

A. System Model

We consider a federated network consisting of $K$ clients communicating with a central server. At iteration $n$, client $k$ observes a data (feature-label) pair $\mathbf{x}_{k,n} \in \mathbb{R}^D$ and $y_{k,n} \in \mathbb{R}$, generated according to

$$y_{k,n} = \mathbf{w}^{\star\intercal} \mathbf{x}_{k,n} + \nu_{k,n}, \tag{1}$$

where $\mathbf{w}^\star \in \mathbb{R}^D$ is the unknown parameter vector to be estimated collaboratively and $\nu_{k,n}$ denotes observation noise.
The global learning objective is to minimize the MSE aggregated across clients:

$$J(\mathbf{w}) = \frac{1}{K} \sum_{k=1}^{K} J_k(\mathbf{w}), \tag{2}$$

where the local risk at client $k$ is

$$J_k(\mathbf{w}) = \mathbb{E}\big[ |y_{k,n} - \mathbf{w}^\intercal \mathbf{x}_{k,n}|^2 \big] \tag{3}$$

and the expectation is taken with respect to the (possibly client-dependent) data distribution at client $k$. In general, these client distributions may differ substantially (i.e., the data can be non-IID across clients). The FL goal is to obtain the minimizer $\arg\min_{\mathbf{w}} J(\mathbf{w})$ via decentralized collaboration, without exchanging raw data.

Fig. 1. Illustration of how partial sharing attenuates Byzantine perturbations. Each panel shows a server (aggregate) connected to two benign clients and one Byzantine client. (a) Full sharing ($M = D$): Byzantine perturbation is passed in full. (b) Partial sharing ($M < D$): Byzantine perturbation is attenuated by $M/D$.

Remark 1 (Scope of the linear model): The linear regression model in (1) is adopted for analytical tractability, enabling a closed-form characterization of Byzantine perturbation attenuation under partial sharing. While a complete theory for more general models is beyond the scope of this work, the underlying mechanism (attenuating adversarial energy by a factor of $M/D$) extends conceptually beyond linear regression, and developing such analyses is an interesting direction for future work.

B. Partial Model Sharing

In our prior work [15], [16], we have studied partial model sharing through PSO-Fed in detail (see Fig. 1). Here, we summarize the mechanism most relevant to this paper. Unlike conventional FL, where the full parameter vector is exchanged between the server and clients at every iteration, PSO-Fed communicates only a subset of parameters. Specifically, at iteration $n$, client $k$ receives the masked global model estimate and uploads only a masked version of its local model estimate.
This masking is represented by a diagonal selection matrix $\mathbf{S}_{k,n} \in \mathbb{R}^{D \times D}$ with exactly $M$ ones on the diagonal (and $D - M$ zeros), which specifies the model parameters communicated between client $k$ and the server at iteration $n$. Therefore, the PSO-Fed recursions for minimizing (2) are given by

$$\begin{aligned}
\epsilon_{k,n} &= y_{k,n} - \big[ \mathbf{S}_{k,n-1} \mathbf{w}_{n-1} + (\mathbf{I}_D - \mathbf{S}_{k,n-1}) \mathbf{w}_{k,n-1} \big]^\intercal \mathbf{x}_{k,n} \\
\mathbf{w}_{k,n} &= \mathbf{S}_{k,n-1} \mathbf{w}_{n-1} + (\mathbf{I}_D - \mathbf{S}_{k,n-1}) \mathbf{w}_{k,n-1} + \mu\, \mathbf{x}_{k,n} \epsilon_{k,n} \\
\mathbf{w}_{n} &= \frac{1}{|\mathcal{S}_n|} \sum_{k \in \mathcal{S}_n} \big[ \mathbf{S}_{k,n} \mathbf{w}_{k,n} + (\mathbf{I}_D - \mathbf{S}_{k,n}) \mathbf{w}_{n-1} \big],
\end{aligned}$$

where $\mathbf{w}_{k,n}$ is the local model estimate at client $k$ and iteration $n$, $\mathbf{w}_n$ is the global model estimate at iteration $n$, $\mu$ is the stepsize parameter, $\mathcal{S}_n$ is the set of participating clients at iteration $n$, and $|\mathcal{S}_n|$ is the cardinality of $\mathcal{S}_n$.

C. Byzantine Attack during Training Phase

Let $\mathcal{S}_B$ denote the set of Byzantine clients, and let $\beta_k \in \{0, 1\}$ indicate whether client $k$ is Byzantine ($\beta_k = 1$ if client $k \in \mathcal{S}_B$ and $\beta_k = 0$ otherwise). The number of Byzantine clients is $|\mathcal{S}_B|$, which is assumed to be known by the server.¹ We consider an uplink attack model in which, at each iteration, every Byzantine client perturbs its transmitted (partial) model update with probability $p_a$. Specifically, we model the corruption as $\mathbf{w}_{k,n} + \tau_{k,n} \boldsymbol{\delta}_{k,n}$, where $\tau_{k,n}$ is a Bernoulli random variable with $\Pr(\tau_{k,n} = 1) = p_a$, and $\boldsymbol{\delta}_{k,n} \sim \mathcal{N}(\mathbf{0}, \sigma_B^2 \mathbf{I}_D)$ is zero-mean white Gaussian noise [40]. Accordingly, the server receives $\mathbf{S}_{k,n} \mathbf{w}_{k,n} + \beta_k \tau_{k,n} \mathbf{S}_{k,n} \boldsymbol{\delta}_{k,n}$ instead of the benign partial update $\mathbf{S}_{k,n} \mathbf{w}_{k,n}$. This model captures stochastic Byzantine behavior that injects additive noise into the aggregation process.

Remark 2 (Stochastic vs. adversarial attack model): The Gaussian perturbation model is standard in Byzantine-resilient distributed learning [29], [40] and enables closed-form analysis. More powerful adversaries may be adaptive and concentrate perturbations on the shared coordinates if they observe the selection masks $\mathbf{S}_{k,n}$.
In our setting, the masks are selected randomly and independently (Assumption A3 in Section IV-A), implying that partial sharing attenuates the injected perturbation energy by a factor proportional to $M/D$ in expectation. Extending the guarantees to fully adaptive adversaries is an interesting direction for future work.

D. Federated Conformal Prediction

CP for regression constructs prediction intervals with a user-specified confidence level (e.g., 90%) under the assumption of data exchangeability. Given a trained predictor, CP calculates nonconformity scores (typically absolute residuals) on a calibration set and uses an empirical quantile of these scores to form prediction intervals with distribution-free marginal coverage. Importantly, these guarantees do not rely on the correctness of the underlying model and hold for arbitrary model classes.

In the standard CP pipeline, we train a model on a training set and compute conformal calibration scores on a held-out calibration dataset $\{(\mathbf{x}_j, y_j)\}_{j=1}^{N}$ using the learned model parameter vector $\hat{\mathbf{w}}$. The nonconformity score for each calibration sample is

$$r_j = |y_j - \hat{\mathbf{w}}^\intercal \mathbf{x}_j|, \quad j = 1, \dots, N. \tag{4}$$

Let $q_{1-\alpha}$ be the $\lceil (N+1)(1-\alpha) \rceil$-th order statistic of $\{r_j\}_{j=1}^{N}$. Then, the two-sided $(1-\alpha)$ prediction interval for a new input $\mathbf{x}$ is

$$C(\mathbf{x}) = \big[ \hat{\mathbf{w}}^\intercal \mathbf{x} - q_{1-\alpha},\; \hat{\mathbf{w}}^\intercal \mathbf{x} + q_{1-\alpha} \big]. \tag{5}$$

The confidence parameter $\alpha \in (0, 1)$ controls interval width: smaller $\alpha$ yields wider intervals. Under exchangeability, CP guarantees marginal coverage

$$\mathbb{P}\big( y \in C(\mathbf{x}) \big) \ge 1 - \alpha. \tag{6}$$

¹ This assumption can be relaxed to an upper bound or an estimate.

Fig. 2. Histograms illustrating the effect of different Byzantine attacks during the calibration phase (horizontal axis: normalized nonconformity score; vertical axis: probability; benign clients vs. Byzantine clients): (a) efficiency attack (adversaries report all-zero normalized scores), (b) coverage attack (adversaries report all-one normalized scores), and (c) random attack (adversaries add Gaussian noise to their scores).

In FL, after training of a global model $\hat{\mathbf{w}}$, each client $k$ holds a local calibration set $\{(\mathbf{x}_{k,j}, y_{k,j})\}_{j=1}^{N_k}$ (under the partial exchangeability assumption [24], [41]) and computes local nonconformity scores

$$r_{k,j} = |y_{k,j} - \hat{\mathbf{w}}^\intercal \mathbf{x}_{k,j}|, \quad j = 1, \dots, N_k. \tag{7}$$

Privacy constraints prevent clients from sharing raw calibration data (or even individual scores) with the server. The FCP algorithm [24] addresses this by employing secure aggregation or sketching mechanisms (e.g., T-Digest [42]) to enable the server to approximate the global conformal quantile from the pooled score population as

$$\hat{q}_{1-\alpha} \approx \mathrm{Quantile}_{1-\alpha}\Big( \bigcup_{k=1}^{K} \{r_{k,j}\}_{j=1}^{N_k} \Big). \tag{8}$$

The resulting interval is constructed by replacing $q_{1-\alpha}$ with $\hat{q}_{1-\alpha}$. In practice, when the sketching/aggregation error is small, this approximation can preserve coverage closely while protecting client-level calibration privacy.

E. Byzantine Attack during Calibration Phase

During the calibration phase, Byzantine clients in $\mathcal{S}_B$ may submit arbitrary or adversarially crafted nonconformity scores (or score summaries) instead of their true values, with the aim of biasing the global quantile estimate used to construct prediction intervals.
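As a minimal sketch of the quantile rule in (4)-(5) and the pooled estimate in (8), the following Python snippet computes the order-statistic quantile and shows how injected scores move it. The function names and the toy score lists are hypothetical, and the scores are pooled in the clear purely for illustration; the FCP algorithm itself relies on secure aggregation or sketching rather than raw pooling.

```python
import math

def conformal_quantile(scores, alpha):
    """ceil((N + 1)(1 - alpha))-th smallest score; infinite if N is too small."""
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        return math.inf
    return sorted(scores)[rank - 1]

def pooled_quantile(client_scores, alpha):
    """Quantile of the union of all client score lists (illustrative raw pooling)."""
    pooled = [s for scores in client_scores for s in scores]
    return conformal_quantile(pooled, alpha)

def interval(prediction, q):
    """Two-sided interval centred on the point prediction."""
    return (prediction - q, prediction + q)

# Two hypothetical benign clients with nine normalized scores each.
client_a = [0.10, 0.20, 0.30, 0.40, 0.50, 0.60, 0.70, 0.80, 0.90]
client_b = [0.15, 0.25, 0.35, 0.45, 0.55, 0.65, 0.75, 0.85, 0.95]

q_clean = pooled_quantile([client_a, client_b], alpha=0.1)
# A third client reporting all zeros pulls the quantile down ...
q_zeros = pooled_quantile([client_a, client_b, [0.0] * 9], alpha=0.1)
# ... and one reporting all ones pushes it up.
q_ones = pooled_quantile([client_a, client_b, [1.0] * 9], alpha=0.1)

print(q_clean, q_zeros, q_ones)  # 0.95 0.9 1.0
```

Even in this small example, a single client that contributes one third of the pooled scores shifts the estimated quantile in the direction it chooses.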
By manipulating the estimated quantile $q_{1-\alpha}$, adversaries can induce either overly narrow intervals (causing systematic undercoverage) or overly wide intervals (yielding vacuous uncertainty), thereby compromising the practical reliability of CP.

In this work, we consider three representative calibration-phase Byzantine attacks, whose effects are illustrated in Fig. 2:
• Efficiency attack: Byzantine clients report near-zero scores (e.g., all zeros after normalization), deflating $q_{1-\alpha}$ and leading to undercoverage.
• Coverage attack: Byzantine clients report maximal scores (e.g., all ones after normalization), inflating $q_{1-\alpha}$ and producing excessively wide prediction intervals.
• Random attack: Byzantine clients perturb their scores by additive noise, injecting randomness into the quantile estimation (e.g., $\tilde{r}_{k,j} = r_{k,j} + \eta_{k,j}$ with $\eta_{k,j} \sim \mathcal{N}(0, \sigma_C^2)$, optionally clipped to the score range).

III. BYZANTINE-RESILIENT FCP VIA PARTIAL SHARING

In this section, we present PRISM-FCP, an efficient FCP framework that is resilient to Byzantine attacks at both the training and calibration phases. The key insight is that the partial model sharing mechanism [16], which is originally designed for communication efficiency, also attenuates Byzantine perturbations during training. This, in turn, improves downstream calibration robustness by making benign score distributions more concentrated and hence easier to separate from outliers.

A. Mitigating Byzantine Attack during Training

Partial model sharing reduces communication overhead by allowing each participating client to exchange only a subset of model parameters with the server [15], [16]. Beyond communication savings, partial sharing also improves robustness to stochastic model-poisoning attacks. In particular, prior results establish mean and mean-square convergence of PSO-Fed under noisy Byzantine updates [16].
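For concreteness, one PSO-Fed iteration under the uplink attack model of Section II-C can be sketched as below. This is an illustrative reading of the recursions in Section II-B rather than the authors' implementation: the function names, the dictionary-based client bookkeeping, and the choice to leave a Byzantine client's local estimate unperturbed (only the transmitted copy is corrupted) are assumptions.

```python
import random

def random_mask(dim, num_shared, rng):
    """Diagonal of a selection matrix: exactly `num_shared` ones, chosen uniformly."""
    chosen = set(rng.sample(range(dim), num_shared))
    return [1 if d in chosen else 0 for d in range(dim)]

def pso_fed_round(w_global, w_local, masks_prev, masks_now, data, mu,
                  byzantine, p_attack, sigma_b, rng):
    """One iteration over participating clients; returns (new global, new locals)."""
    dim = len(w_global)
    new_local, uploads = {}, []
    for k, (x, y) in data.items():
        # Shared coordinates come from the server, the others stay local.
        merged = [w_global[d] if masks_prev[k][d] else w_local[k][d]
                  for d in range(dim)]
        err = y - sum(m * xi for m, xi in zip(merged, x))
        w_k = [merged[d] + mu * x[d] * err for d in range(dim)]
        new_local[k] = w_k
        sent = list(w_k)
        if k in byzantine and rng.random() < p_attack:
            # Additive white Gaussian perturbation on the transmitted update.
            sent = [s + rng.gauss(0.0, sigma_b) for s in sent]
        # The server only sees masked coordinates; it keeps its own value elsewhere.
        uploads.append([sent[d] if masks_now[k][d] else w_global[d]
                        for d in range(dim)])
    new_global = [sum(u[d] for u in uploads) / len(uploads) for d in range(dim)]
    return new_global, new_local

rng = random.Random(0)
zeros = [0.0, 0.0, 0.0]
data = {0: ([1.0, 0.0, 0.0], 1.0)}

# Benign client sharing only coordinate 0.
g_benign, l_benign = pso_fed_round(zeros, {0: zeros}, {0: [1, 1, 1]}, {0: [1, 0, 0]},
                                   data, 0.5, set(), 0.0, 1.0, rng)

# Byzantine client sharing only coordinate 2: coordinates 0 and 1 stay clean.
g_attack, l_attack = pso_fed_round(zeros, {0: zeros}, {0: [1, 1, 1]}, {0: [0, 0, 1]},
                                   data, 0.5, {0}, 1.0, 10.0, rng)
```

In the second call, the perturbation can reach the server only through the single shared coordinate, which is the masking effect that the analysis in Section IV quantifies.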
In this work, we exploit this built-in property to mitigate Byzantine influence during training, thereby reducing the prediction error that propagates into conformal calibration.

B. Mitigating Byzantine Attacks during Calibration

To mitigate Byzantine attacks in the calibration phase, each benign client computes nonconformity scores $\{r_{k,j}\}_{j=1}^{N_k}$ on its local calibration set and summarizes them via a histogram-based characterization vector $\mathbf{v}_k \in \Delta^H$ defined as

$$v_{k,h} = \frac{1}{N_k} \sum_{j=1}^{N_k} \mathbb{1}\{ a_{h-1} \le \tilde{r}_{k,j} < a_h \}, \quad h = 1, \dots, H, \tag{9}$$

where $\tilde{r}_{k,j}$ denotes a normalized (or clipped) score mapped to $[0, 1]$, and $0 = a_0 < a_1 < \cdots < a_H = 1$ are the $H + 1$ boundaries of the $H$ bins. Each entry $v_{k,h}$ is the empirical probability that the local scores fall into bin $h$. The set

$$\Delta^H \triangleq \Big\{ \mathbf{v}_k \in \mathbb{R}^H : v_{k,h} \ge 0,\; \sum_{h=1}^{H} v_{k,h} = 1 \Big\} \tag{10}$$

denotes the $H$-dimensional probability simplex. Clients transmit $\mathbf{v}_k$ to the server, while Byzantine clients may submit arbitrary or adversarial vectors (cf. Fig. 2 with $H = 30$). In practice, the bin boundaries $\{a_h\}$ can be fixed a priori based on expected residual ranges or estimated from a small benign pilot round.

This histogram summary preserves coarse distributional structure without transmitting raw scores, reducing communication and offering an additional privacy layer. Moreover, benign clients tend to produce similar characterization vectors, whereas Byzantine submissions typically deviate, enabling robust detection. We compute pairwise $\ell_2$ distances between characterization vectors as

$$d_{k,k'} = \| \mathbf{v}_k - \mathbf{v}_{k'} \|_2, \quad \forall k, k' \in \{1, \dots, K\}. \tag{11}$$

Let $K_b := K - |\mathcal{S}_B|$ denote the number of benign clients.
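The histogram summary in (9) and the distances in (11) can be sketched as follows. The helper names are hypothetical, equal-width bins are assumed, and a score equal to 1 is folded into the last bin so that every vector sums to one (the half-open bins in (9) would otherwise leave that boundary value uncounted).

```python
import math

def characterization_vector(scores, num_bins):
    """Normalized histogram of scores clipped to [0, 1], using equal-width bins."""
    counts = [0] * num_bins
    for s in scores:
        clipped = min(max(s, 0.0), 1.0)
        # Bin index for [a_{h-1}, a_h); the value 1.0 goes into the last bin.
        h = min(int(clipped * num_bins), num_bins - 1)
        counts[h] += 1
    return [c / len(scores) for c in counts]

def pairwise_l2(vectors):
    """Matrix of Euclidean distances between all pairs of vectors."""
    def l2(u, v):
        return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))
    return [[l2(u, v) for v in vectors] for u in vectors]

benign_vec = characterization_vector([0.05, 0.15, 0.15, 0.95], num_bins=4)
attack_vec = characterization_vector([0.0, 0.0, 0.0, 0.0], num_bins=4)
distances = pairwise_l2([benign_vec, attack_vec])

print(benign_vec)  # [0.75, 0.0, 0.0, 0.25]
print(attack_vec)  # [1.0, 0.0, 0.0, 0.0]
```

An all-zero submission, as in the efficiency attack, collapses onto the first bin, so its distance to a benign histogram grows with the mass the benign client places in the remaining bins.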
Following Rob-FCP [37], we assign each client $k$ a maliciousness score, $m_k$, as the sum of distances to its $K_b - 1$ farthest neighbors:

$$m_k = \sum_{k' \in \mathrm{FN}(k, K_b - 1)} d_{k,k'}, \tag{12}$$

where $\mathrm{FN}(k, K_b - 1)$ is the set of the $K_b - 1$ clients with the largest distances from client $k$. Intuitively, Byzantine clients lie farther from the benign population and hence attain larger $m_k$. We declare the $|\mathcal{S}_B|$ clients with the largest maliciousness scores as Byzantine and form the benign set $\mathcal{B}$ from the remaining clients.

Remark 3 (Handling unknown $|\mathcal{S}_B|$): When the number of Byzantine clients is unknown, we replace the top-$|\mathcal{S}_B|$ filtering by a robust outlier rule such as the median absolute deviation (MAD) criterion [43]. Specifically, we compute each client's distance to a robust population center (e.g., the coordinate-wise median characterization vector) and flag clients whose distance exceeds a MAD-based threshold. Our experiments in Section V demonstrate that both known-$|\mathcal{S}_B|$ filtering and MAD-based filtering can preserve nominal coverage under attack.

Finally, the server estimates the conformal quantile $\hat{q}_{1-\alpha}$ using only the scores (or sketches) contributed by clients in $\mathcal{B}$, and constructs prediction intervals accordingly. This calibration-stage filtering follows Rob-FCP's robust detection procedure [37], while PRISM-FCP additionally reduces training-stage Byzantine influence and communication via partial sharing, yielding reliable prediction intervals under Byzantine attacks.

IV. THEORETICAL ANALYSIS

In this section, we provide theoretical justification for the Byzantine resilience of PRISM-FCP.
Our main results are: (i) partial sharing attenuates the energy of injected Byzantine perturbations by a factor proportional to $M/D$ per iteration, (ii) the corresponding steady-state MSE under Byzantine attacks is reduced, and (iii) this reduction translates into tighter residual distributions and hence narrower FCP prediction intervals at the same nominal coverage. Collectively, these results formalize the intuition that partial sharing acts as a stochastic filter that dilutes adversarial influence.

A. Assumptions and Definitions

To make the analysis tractable, we adopt the following standard assumptions.
A1: The input vectors $\mathbf{x}_{k,n}$ at each client $k$ and iteration $n$ are drawn from a wide-sense stationary multivariate random process with covariance $\mathbf{R}_k = \mathbb{E}[\mathbf{x}_{k,n} \mathbf{x}_{k,n}^\intercal]$.
A2: The observation noise $\nu_{k,n}$ and the Byzantine perturbations $\boldsymbol{\delta}_{k,n}$ are IID across clients and iterations. Moreover, they are mutually independent and independent of all other stochastic variables, including $\mathbf{x}_{k,n}$ and $\mathbf{S}_{k,n}$.
A3: The selection matrices $\mathbf{S}_{k,n}$ are independent across clients and iterations.

B. Mean and Mean-Square Convergence and Steady-State MSE

We summarize key results from [16] for PSO-Fed, which we will use to analyze PRISM-FCP under Byzantine attacks.

Lemma 1 (Mean convergence): PSO-Fed converges in the mean sense under Byzantine attacks with a suitable stepsize [16, Sec. III-A].

Lemma 2 (Mean-square convergence): PSO-Fed converges in the mean-square sense under Byzantine attacks with a suitable stepsize [16, Sec. III-B].

Lemma 3 (Steady-state MSE decomposition): Under mean-square convergence, the steady-state MSE of PSO-Fed admits the decomposition [16, Sec. III-C]

$$\mathcal{E} := \mathcal{E}_\phi + \mathcal{E}_\omega + \mathcal{E}_\Theta. \tag{13}$$

Remark 4 (Interpretation of the MSE decomposition): The steady-state MSE (13) decomposes into three additive terms:
1) $\mathcal{E}_\phi$: error due to observation noise as it propagates through the learning dynamics (affected by partial sharing, client scheduling, and stepsize),
2) $\mathcal{E}_\omega$: error induced exclusively by Byzantine perturbations (the key quantity for attack resilience),
3) $\mathcal{E}_\Theta$: irreducible error due to observation noise that is independent of the learning dynamics.

Lemma 4 (Partial sharing attenuates Byzantine contribution): Partial sharing and client scheduling reduce the Byzantine-induced term $\mathcal{E}_\omega$, thereby enhancing resilience to model-poisoning attacks [16, Sec. IV]. In particular, when $\mathbf{S}_{k,n}$ selects $M$ parameters uniformly at random, the expected energy (squared $\ell_2$-norm) of the injected perturbation in the aggregated update is attenuated by a factor $M/D$.

C. Consequences of Partial Sharing for FCP Quantiles

We connect the training-phase partial sharing to the prediction-interval width. In particular, we show that attenuation of the Byzantine-induced MSE term (Lemma 4) leads to smaller parameter error, which tightens residual (nonconformity) distributions and thus reduces the conformal quantile used to form prediction intervals.

A central objective of CP is to produce intervals that are both valid (achieving target marginal coverage $1 - \alpha$) and efficient (as narrow as possible). Validity is ensured by conformal quantile calibration under exchangeability, whereas efficiency depends on the distribution of nonconformity scores, which is directly influenced by the trained model's prediction error.

Lemma 5 (Lipschitz continuity of residuals): Let $r_{k,n}(\mathbf{w}) = |y_{k,n} - \mathbf{w}^\intercal \mathbf{x}_{k,n}|$ denote the residual at client $k$ and iteration $n$. For any $\mathbf{w}$ and $\mathbf{w}^\star$, we have

$$|r_{k,n}(\mathbf{w}) - r_{k,n}(\mathbf{w}^\star)| \le \|\mathbf{x}_{k,n}\|_2 \, \|\mathbf{w} - \mathbf{w}^\star\|_2. \tag{14}$$

Proof: Let $a = y_{k,n} - \mathbf{w}^\intercal \mathbf{x}_{k,n}$ and $b = y_{k,n} - \mathbf{w}^{\star\intercal} \mathbf{x}_{k,n}$.
By the reverse triangle inequality, $\big| |a| - |b| \big| \le |a - b|$. Moreover, $a - b = (\mathbf{w}^\star - \mathbf{w})^\intercal \mathbf{x}_{k,n}$, hence $|a - b| = |(\mathbf{w} - \mathbf{w}^\star)^\intercal \mathbf{x}_{k,n}| \le \|\mathbf{w} - \mathbf{w}^\star\|_2 \|\mathbf{x}_{k,n}\|_2$, where the last inequality follows from Cauchy-Schwarz.

Theorem 1 (Quantile stability under local density bounds): Let $q^\star = (F^\star)^{-1}(1 - \alpha)$ and $q_{k,n} = F_{k,n}^{-1}(1 - \alpha)$ denote the $(1 - \alpha)$ quantiles of the benign residual distribution $F^\star$ (under $\mathbf{w}^\star$) and a perturbed residual distribution $F_{k,n}$ (under $\mathbf{w}_{k,n}$), respectively. Assume there exists a neighborhood $\mathcal{N}$ of $q^\star$ such that: (i) $F^\star$ admits a density $f^\star$ with $\inf_{t \in \mathcal{N}} f^\star(t) \ge f_{\min} > 0$, and (ii) $F^\star$ is Lipschitz on $\mathcal{N}$ with constant $L$, i.e., $|F^\star(t) - F^\star(s)| \le L |t - s|$ for all $s, t \in \mathcal{N}$. Then, with $X := r_{k,n}(\mathbf{w}_{k,n})$ and $Y := r_{k,n}(\mathbf{w}^\star)$, we have

$$|q_{k,n} - q^\star| \le \frac{2\sqrt{2L}}{f_{\min}} \sqrt{\mathbb{E}|X - Y|}. \tag{15}$$

Proof: Since $\inf_{t \in \mathcal{N}} f^\star(t) \ge f_{\min}$, the inverse CDF $(F^\star)^{-1}$ is locally Lipschitz on $\mathcal{N}$ with constant $1/f_{\min}$, hence

$$|q_{k,n} - q^\star| \le \frac{1}{f_{\min}} \sup_{t \in \mathcal{N}} |F_{k,n}(t) - F^\star(t)|.$$

Fix $\varepsilon > 0$. For any $t$, we have

$$\big| \mathbb{1}\{X \le t\} - \mathbb{1}\{Y \le t\} \big| \le \mathbb{1}\{|X - Y| \ge \varepsilon\} + \mathbb{1}\{t - \varepsilon < Y \le t + \varepsilon\}. \tag{16}$$

Taking expectations gives

$$|F_{k,n}(t) - F^\star(t)| \le \mathbb{P}(|X - Y| \ge \varepsilon) + F^\star(t + \varepsilon) - F^\star(t - \varepsilon) \le \frac{\mathbb{E}|X - Y|}{\varepsilon} + 2L\varepsilon, \tag{17}$$

where we use Markov's inequality and the Lipschitz property of $F^\star$ on $\mathcal{N}$. Optimizing over $\varepsilon$ (the minimizer is $\varepsilon = \sqrt{\mathbb{E}|X - Y| / (2L)}$) yields $\sup_{t \in \mathcal{N}} |F_{k,n}(t) - F^\star(t)| \le 2\sqrt{2L\, \mathbb{E}|X - Y|}$ and the claim follows.

Remark 5 (Interpreting the constant): The prefactor admits a natural decomposition. First, $1/f_{\min}$ arises from the local Lipschitz continuity of the inverse CDF around $q^\star$, which requires the benign density to be bounded away from zero in a neighborhood of $q^\star$.
Second, the $\sqrt{L}$ dependence comes from a smoothing argument that converts an expected residual perturbation, $\mathbb{E}|X - Y|$, into a uniform bound on the local CDF gap $\sup_{t \in \mathcal{N}} |F_{k,n}(t) - F^\star(t)|$, with $L$ capturing the local steepness of $F^\star$ around $q^\star$. In particular, when scores are normalized so that $L \le 1$, the bound simplifies to

$$|q_{k,n} - q^\star| \le \frac{2\sqrt{2}}{f_{\min}} \sqrt{\mathbb{E}|X - Y|}.$$

Corollary 1 (Impact of partial sharing on FCP quantiles): Let $L_x := \sqrt{\mathbb{E}\|\mathbf{x}_{k,n}\|_2^2}$ and $\tilde{\mathbf{w}}_{k,n} := \mathbf{w}_{k,n} - \mathbf{w}^\star$. Under the assumptions of Theorem 1, we have

$$|q_{k,n} - q^\star| \le \frac{2\sqrt{2L}}{f_{\min}} L_x^{1/2} \big( \mathbb{E}\|\tilde{\mathbf{w}}_{k,n}\|_2^2 \big)^{1/4}. \tag{18}$$

Proof: By Lemma 5, we have

$$|r_{k,n}(\mathbf{w}_{k,n}) - r_{k,n}(\mathbf{w}^\star)| \le \|\mathbf{x}_{k,n}\|_2 \, \|\tilde{\mathbf{w}}_{k,n}\|_2. \tag{19}$$

Taking expectations and applying Cauchy-Schwarz yields

$$\mathbb{E}|r_{k,n}(\mathbf{w}_{k,n}) - r_{k,n}(\mathbf{w}^\star)| \le \sqrt{\mathbb{E}\|\mathbf{x}_{k,n}\|_2^2} \sqrt{\mathbb{E}\|\tilde{\mathbf{w}}_{k,n}\|_2^2} = L_x \sqrt{\mathbb{E}\|\tilde{\mathbf{w}}_{k,n}\|_2^2}. \tag{20}$$

Substituting into Theorem 1, which bounds $|q_{k,n} - q^\star|$ by a constant times $\sqrt{\mathbb{E}|X - Y|}$, gives the stated fourth-root dependence on $\mathbb{E}\|\tilde{\mathbf{w}}_{k,n}\|_2^2$. Finally, Lemma 4 implies that partial sharing attenuates the injected Byzantine perturbation energy by a factor proportional to $M/D$, thereby reducing the steady-state $\mathbb{E}\|\tilde{\mathbf{w}}_{k,n}\|_2^2$ and consequently tightening the quantile deviation bound.

Corollary 2 (Quantile deviation via steady-state MSE decomposition): Assume the conditions of Theorem 1 and let $L_x := \sqrt{\mathbb{E}\|\mathbf{x}_{k,n}\|_2^2}$. If the steady-state parameter error satisfies $\lim_{n \to \infty} \mathbb{E}\|\mathbf{w}_{k,n} - \mathbf{w}^\star\|_2^2 = \mathcal{E}$ with the decomposition $\mathcal{E} = \mathcal{E}_\phi + \mathcal{E}_\omega + \mathcal{E}_\Theta$ in (13), then

$$\limsup_{n \to \infty} |q_{k,n} - q^\star| \le \frac{2\sqrt{2L}}{f_{\min}} L_x^{1/2} \big( \mathcal{E}_\phi + \mathcal{E}_\omega + \mathcal{E}_\Theta \big)^{1/4}. \tag{21}$$

Moreover, by Lemma 4, partial sharing reduces the Byzantine-induced term $\mathcal{E}_\omega$ (and hence tightens the above bound).

Remark 6 (Coverage vs. efficiency): Conformal validity is enforced by the calibration quantile construction (under exchangeability), whereas efficiency is governed by the interval width.
Byzantine perturbations degrade the trained model and increase the steady-state MSE through the Byzantine-induced term $\mathcal{E}_\omega$ in (13). This increases the typical magnitude of residuals and hence the $(1-\alpha)$ quantile used for calibration, leading to wider prediction intervals. Partial sharing mitigates this effect: by reducing $\mathcal{E}_\omega$ (Lemma 4), it decreases the resulting quantile shift and the associated interval inflation. This prediction is consistent with the empirical trends in Section V.

Proposition 1 (Width perturbation bound): Let $\mathcal{C}(\mathbf{x}) = [\mathbf{w}^\intercal \mathbf{x} - q,\ \mathbf{w}^\intercal \mathbf{x} + q]$ with width $\omega = 2q$. Let $\omega^\star := 2q^\star$ (under $\mathbf{w}^\star$) and $\omega_{k,n} := 2q_{k,n}$ (under $\mathbf{w}_{k,n}$). Assume the conditions of Theorem 1 hold on a neighborhood $\mathcal{N}$ of $q^\star$ with $\inf_{t \in \mathcal{N}} f^\star(t) \ge f_{\min} > 0$ and local Lipschitz constant $L$ for $F^\star$. Let $\mathbf{R}_k := \mathbb{E}[\mathbf{x}_{k,n} \mathbf{x}_{k,n}^\intercal]$ and $\tilde{\mathbf{w}}_{k,n} := \mathbf{w}_{k,n} - \mathbf{w}^\star$. Then,

$$|\omega_{k,n} - \omega^\star| \le \underbrace{\frac{4\sqrt{2L}}{f_{\min}} \operatorname{tr}(\mathbf{R}_k)^{1/4}}_{=: K_\omega} \big(\mathbb{E}\|\tilde{\mathbf{w}}_{k,n}\|_2^2\big)^{1/4}. \tag{22}$$

Theorem 2 (Per-iteration Byzantine attenuation and implications for width inflation): Consider the training-phase Byzantine model in Section II-C, where client $k$ uploads $\mathbf{w}_{k,n+1} + \beta_k \tau_{k,n} \boldsymbol{\delta}_{k,n}$, with $\tau_{k,n} \sim \mathrm{Bernoulli}(p_a)$ and $\boldsymbol{\delta}_{k,n}$ having IID entries of variance $\sigma_B^2$. Under partial sharing, the transmitted perturbation is masked by $\mathbf{S}_{k,n}$ (a diagonal matrix with exactly $M$ ones). Then the injected perturbation energy satisfies

$$\mathbb{E}\big[\|\beta_k \tau_{k,n} \mathbf{S}_{k,n} \boldsymbol{\delta}_{k,n}\|_2^2\big] = \beta_k \, p_a \, M \sigma_B^2. \tag{23}$$

In particular, conditional on an attack occurring (i.e., $\tau_{k,n} = 1$) at a Byzantine client ($\beta_k = 1$), we have

$$\mathbb{E}\big[\|\mathbf{S}_{k,n} \boldsymbol{\delta}_{k,n}\|_2^2 \,\big|\, \tau_{k,n} = 1\big] = M \sigma_B^2, \tag{24}$$

whereas under full sharing ($M = D$) the corresponding energy is $D\sigma_B^2$. Hence, partial sharing reduces the instantaneous injected energy by the exact factor $M/D$ relative to full sharing.
Let $\omega_{k,n} = 2q_{k,n}$ and $\omega^\star = 2q^\star$ denote the conformal interval widths under $\mathbf{w}_{k,n}$ and $\mathbf{w}^\star$, respectively, and define the steady-state width inflation $\Phi(M) := \limsup_{n \to \infty} |\omega_{k,n} - \omega^\star|$. If Proposition 1 bounds $|\omega_{k,n} - \omega^\star|$ by a constant times $\big(\mathbb{E}\|\mathbf{w}_{k,n} - \mathbf{w}^\star\|_2^2\big)^{1/4}$ and Lemma 3 provides the steady-state decomposition $\mathcal{E} = \mathcal{E}_\phi + \mathcal{E}_\omega + \mathcal{E}_\Theta$, then

$$\Phi(M) \le \widetilde{K} \big(\mathcal{E}_\phi(M) + \mathcal{E}_\omega(M) + \mathcal{E}_\Theta\big)^{1/4}, \tag{25}$$

for an explicit constant $\widetilde{K}$ inherited from Proposition 1. While (23) gives an exact $M/D$ reduction in the instantaneous injected energy, the resulting dependence of $\mathcal{E}_\omega(M)$ (and also $\mathcal{E}_\phi(M)$) on $M$ is determined by how the attenuated perturbations propagate through the system dynamics via the selection matrices $\mathbf{S}_{k,n}$.

Proof: Since $\mathbf{S}_{k,n}$ is diagonal with exactly $M$ ones and $\boldsymbol{\delta}_{k,n}$ has IID entries with variance $\mathbb{E}[\delta_{k,n,d}^2] = \sigma_B^2$, we have

$$\mathbb{E}\|\mathbf{S}_{k,n} \boldsymbol{\delta}_{k,n}\|_2^2 = \sum_{d=1}^{D} [\mathbf{S}_{k,n}]_{dd}^2 \, \mathbb{E}[\delta_{k,n,d}^2] = M \sigma_B^2.$$

Multiplying by $\beta_k^2 = \beta_k$ (as $\beta_k \in \{0, 1\}$) and using $\mathbb{E}[\tau_{k,n}^2] = \mathbb{E}[\tau_{k,n}] = p_a$ yields (23). The $M/D$ reduction in (24) follows by comparing $M\sigma_B^2$ to the full-sharing case $D\sigma_B^2$.

For the steady-state width bound in (25), Proposition 1 links the width deviation to the fourth root of the steady-state parameter error (MSE). By Lemma 3, this error decomposes as $\mathcal{E} = \mathcal{E}_\phi(M) + \mathcal{E}_\omega(M) + \mathcal{E}_\Theta$. The Byzantine component $\mathcal{E}_\omega(M)$ reflects the attenuated injected energy in (23), while its steady-state impact is determined by how this perturbation propagates through the system dynamics via the involved matrices, whose spectral properties also depend on $M$.

D. How Training-Phase Partial Sharing Improves Calibration

In Section IV-C, we showed that partial sharing yields tighter conformal intervals by reducing the steady-state MSE and hence the residual quantiles. Here, we show an additional benefit: it also improves Byzantine detection during calibration.
The key insight is that smaller training error concentrates benign clients' nonconformity-score histograms, increasing their separation from Byzantine outliers and enabling more reliable filtering. We adopt the histogram-based characterization approach of Rob-FCP [37], which summarizes each client's local score distribution by a finite-dimensional vector and applies distance-based outlier detection. We show that partial sharing strengthens this procedure: by reducing training error, it makes benign histograms more tightly clustered, thereby increasing the detection margin and further tightening the post-filtering quantiles.

Remark 7 (Homogeneity assumption and non-IID extension): For analytical tractability, we assume benign clients' calibration residuals are drawn IID from distributions $F_k$ that are small perturbations of a common reference $F^\star$. In practice, federated clients often hold heterogeneous (non-IID) data, hence population histograms may differ across benign clients even under the optimal model. Our experiments in Section V include non-IID data and still yield effective filtering, suggesting robustness to moderate heterogeneity in practice.

Let $F^\star$ denote the benign residual CDF under the optimal parameter vector $\mathbf{w}^\star$. After training, all clients calibrate using a trained model $\widehat{\mathbf{w}}$ (e.g., the steady-state global model), and define the parameter error $\mathbf{e} := \widehat{\mathbf{w}} - \mathbf{w}^\star$. Client $k$ holds $N_k$ calibration residuals $r_{k,1}, \dots, r_{k,N_k}$ drawn IID from a residual distribution $F_k$ that is a perturbation of $F^\star$ induced by $\mathbf{e}$. (For linear models with square loss, Lemma 5 implies that residual perturbations scale as $O(\|\mathbf{e}\|)$, which in turn induces a small local perturbation of the residual CDF.) We partition the (normalized/clipped) score range $[0, 1]$ into $H$ bins $[a_{h-1}, a_h)$ and form the empirical histogram vector $\mathbf{v}_k \in \Delta^H$. Define the corresponding benign population histogram $\mathbf{p}^\star := \big[F^\star(a_h) - F^\star(a_{h-1})\big]_{h=1}^{H}$, and let $\mathbf{q} \in \Delta^H$ denote an attack-dependent adversarial mean histogram.

Intuitively, partial sharing reduces $\|\mathbf{e}\|$ and hence makes benign $\mathbf{v}_k$ concentrate more tightly around $\mathbf{p}^\star$, whereas Byzantine submissions tend to deviate toward $\mathbf{q}$ (cf. Fig. 2). Following Rob-FCP [37], the server computes pairwise distances and assigns each client a maliciousness score based on its farthest neighbors:

$$d_{k,k'} := \|\mathbf{v}_k - \mathbf{v}_{k'}\|_2, \qquad m_k := \sum_{k' \in \mathrm{FN}(k, K_b - 1)} d_{k,k'},$$

where $\mathrm{FN}(k, K_b - 1)$ is the set of the $K_b - 1$ farthest clients from $k$ and $K_b := K - |\mathcal{S}_B|$ is the expected number of benign clients. The server then retains the $K_b$ clients with the smallest maliciousness scores as the benign set used for quantile estimation.

Lemma 6 (Histogram concentration for benign clients): Let a benign client $k$ have $N_k$ IID calibration residuals, binned into $H$ fixed intervals $[a_{h-1}, a_h)$ to form the empirical histogram $\mathbf{v}_k \in \Delta^H$, with population vector $\mathbf{p}_k = \mathbb{E}[\mathbf{v}_k]$. Then, for any $\delta \in (0, 1)$, with probability at least $1 - \delta$, we have

$$\|\mathbf{v}_k - \mathbf{p}_k\|_2 \le C_H \sqrt{\frac{\log(2H/\delta)}{N_k}}, \tag{26}$$

where one can take $C_H = \sqrt{3}$. The constant depends only on the boundedness of the one-hot bin indicators and not on $N_k$.

Proof: Let $\mathbf{z}_j \in \{\mathbf{e}_1, \dots, \mathbf{e}_H\}$ be the one-hot vector indicating the bin of the $j$-th residual. Then, $\mathbf{v}_k = \frac{1}{N_k} \sum_{j=1}^{N_k} \mathbf{z}_j$ and $\mathbf{p}_k = \mathbb{E}[\mathbf{z}_1]$. Define $\boldsymbol{\xi}_j = \mathbf{z}_j - \mathbf{p}_k$, which are independent, mean-zero, and satisfy $\|\boldsymbol{\xi}_j\|_2 \le \sqrt{2}$, since $\|\boldsymbol{\xi}_j\|_2^2 = \|\mathbf{z}_j\|_2^2 - 2\mathbf{z}_j^\intercal \mathbf{p}_k + \|\mathbf{p}_k\|_2^2 \le 1 + 1 = 2$. Applying a vector Bernstein (or Hilbert-space Hoeffding) inequality [44] to $\boldsymbol{\xi} = \sum_{j=1}^{N_k} \boldsymbol{\xi}_j$ yields

$$\mathbb{P}(\|\mathbf{v}_k - \mathbf{p}_k\|_2 \ge \varepsilon) = \mathbb{P}(\|\boldsymbol{\xi}\|_2 \ge N_k \varepsilon) \le 2H \exp\!\left(-\frac{N_k \varepsilon^2}{3}\right). \tag{27}$$

Setting $\varepsilon = \sqrt{\frac{3}{N_k} \log(2H/\delta)}$ completes the proof.
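The farthest-neighbor scoring and retention rule described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`histogram`, `maliciousness_scores`, `retain_benign`) are hypothetical, scores are assumed to be already normalized to $[0, 1]$ with equal-width bins, and the toy parameters ($H = 10$, 8 benign and 2 Byzantine clients, 500 scores each, all-zero Byzantine scores as in an efficiency attack) are chosen only for illustration.

```python
import math
import random


def histogram(scores, num_bins):
    """Characterization vector: normalized histogram of scores clipped to [0, 1]."""
    counts = [0] * num_bins
    for s in scores:
        s = min(max(s, 0.0), 1.0)
        # A score of exactly 1.0 is assigned to the last bin.
        counts[min(int(s * num_bins), num_bins - 1)] += 1
    return [c / len(scores) for c in counts]


def maliciousness_scores(hists, num_benign):
    """m_k: sum of the (num_benign - 1) largest l2 distances from client k."""
    scores = []
    for k, v_k in enumerate(hists):
        dists = sorted(
            (math.dist(v_k, v_j) for j, v_j in enumerate(hists) if j != k),
            reverse=True,
        )
        scores.append(sum(dists[: num_benign - 1]))
    return scores


def retain_benign(hists, num_benign):
    """Keep the num_benign clients with the smallest maliciousness scores."""
    m = maliciousness_scores(hists, num_benign)
    ranked = sorted(range(len(hists)), key=lambda k: m[k])
    return sorted(ranked[:num_benign]), m


# Toy setting (all values are illustrative assumptions, not the paper's setup).
random.seed(0)
H, K_b, B, N = 10, 8, 2, 500
benign = [[min(abs(random.gauss(0.0, 0.2)), 1.0) for _ in range(N)] for _ in range(K_b)]
byzantine = [[0.0] * N for _ in range(B)]  # efficiency attack: all-zero scores
hists = [histogram(s, H) for s in benign + byzantine]
retained, m = retain_benign(hists, K_b)
print("retained clients:", retained)
print("scores:", [round(x, 3) for x in m])
```

In this toy setting the Byzantine histograms collapse onto the first bin, so each Byzantine client is far from all benign clients, whereas each benign client is far from only the two Byzantine ones. The Byzantine scores should therefore be clearly larger, which is the separation that Lemma 6 and the subsequent results quantify.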
Lemma 7 (Training error causes benign histogram drift): Let $r(\mathbf{w}) := |y - \mathbf{x}^\intercal \mathbf{w}|$ be the residual of linear regression and $L_x := \sqrt{\mathbb{E}\|\mathbf{x}_{k,n}\|_2^2}$. Fix $H$ bins with boundaries $\{a_h\}_{h=0}^{H}$ and let $\mathbf{p}^\star$ be the benign population histogram under $\mathbf{w}^\star$, and $\mathbf{p}_k$ the population histogram under $\mathbf{w} = \mathbf{w}^\star + \mathbf{e}_k$. Assume the benign residual distribution under $\mathbf{w}^\star$ admits a density $f^\star$ such that $\sup_{h = 0, \dots, H} f^\star(a_h) \le f_{\max} < \infty$. Then, we have

$$\|\mathbf{p}_k - \mathbf{p}^\star\|_2 \le 4\sqrt{H + 1} \, f_{\max} L_x \|\mathbf{e}_k\|_2. \tag{28}$$

Equivalently, $\|\mathbf{p}_k - \mathbf{p}^\star\|_2 \le C_{\mathrm{bin}} f_{\max} L_x \|\mathbf{e}_k\|_2$, where $C_{\mathrm{bin}} := 4\sqrt{H + 1}$ depends only on the binning.

Proof: Let $r^\star := r(\mathbf{w}^\star) = |y - \mathbf{x}^\intercal \mathbf{w}^\star|$ and $r_k := r(\mathbf{w}^\star + \mathbf{e}_k) = |y - \mathbf{x}^\intercal(\mathbf{w}^\star + \mathbf{e}_k)|$, with CDFs $F^\star$ and $F_k$, respectively. By Lemma 5, we have

$$|r_k - r^\star| \le \|\mathbf{x}\|_2 \|\mathbf{e}_k\|_2 =: \Delta(\mathbf{x}).$$

Hence, for any $t \ge 0$,

$$\{r^\star \le t - \Delta(\mathbf{x})\} \subseteq \{r_k \le t\} \subseteq \{r^\star \le t + \Delta(\mathbf{x})\}.$$

Taking probabilities over $(\mathbf{x}, y)$ yields $F^\star(t - \Delta(\mathbf{x})) \le F_k(t) \le F^\star(t + \Delta(\mathbf{x}))$. Therefore,

$$|F_k(t) - F^\star(t)| \le F^\star(t + \Delta(\mathbf{x})) - F^\star(t - \Delta(\mathbf{x})).$$

Assuming $F^\star$ admits a density $f^\star$ with $\sup_{u \in \mathbb{R}} f^\star(u) \le f_{\max}$ (in particular at the bin edges), the mean-value bound gives $F^\star(t + \Delta(\mathbf{x})) - F^\star(t - \Delta(\mathbf{x})) \le 2 f_{\max} \Delta(\mathbf{x})$. Taking expectations over $\mathbf{x}$ and using Cauchy-Schwarz,

$$|F_k(t) - F^\star(t)| \le 2 f_{\max} \mathbb{E}[\Delta(\mathbf{x})] = 2 f_{\max} \|\mathbf{e}_k\|_2 \, \mathbb{E}\|\mathbf{x}\|_2 \le 2 f_{\max} \|\mathbf{e}_k\|_2 \sqrt{\mathbb{E}\|\mathbf{x}\|_2^2} = 2 f_{\max} L_x \|\mathbf{e}_k\|_2. \tag{29}$$

Now define the edge deviations $g_h := F_k(a_h) - F^\star(a_h)$ for $h = 0, \dots, H$, so that each bin-mass difference is

$$(\mathbf{p}_k - \mathbf{p}^\star)_h = \big[F_k(a_h) - F_k(a_{h-1})\big] - \big[F^\star(a_h) - F^\star(a_{h-1})\big] = g_h - g_{h-1}, \qquad h = 1, \dots, H. \tag{30}$$

Let $\mathbf{g} := (g_0, \dots, g_H)^\intercal$ and let $\mathbf{B} \in \mathbb{R}^{H \times (H+1)}$ be the first-difference matrix such that $\mathbf{p}_k - \mathbf{p}^\star = \mathbf{B}\mathbf{g}$. Each row of $\mathbf{B}$ has exactly one $+1$ and one $-1$, so $\mathbf{B}\mathbf{B}^\intercal$ is tridiagonal with $2$ on the diagonal and $-1$ on the off-diagonals; its eigenvalues are at most $4$, which gives $\|\mathbf{B}\|_2 \le 2$. Hence,

$$\|\mathbf{p}_k - \mathbf{p}^\star\|_2 \le \|\mathbf{B}\|_2 \|\mathbf{g}\|_2 \le 2\|\mathbf{g}\|_2 \le 2\sqrt{H + 1} \, \|\mathbf{g}\|_\infty.$$

Using the CDF bound at $t = a_h$ gives $\|\mathbf{g}\|_\infty = \max_h |g_h| \le 2 f_{\max} L_x \|\mathbf{e}_k\|_2$, and therefore

$$\|\mathbf{p}_k - \mathbf{p}^\star\|_2 \le 4\sqrt{H + 1} \, f_{\max} L_x \|\mathbf{e}_k\|_2, \tag{31}$$

which proves the claim.

Proposition 2 (Separation margin improves under partial sharing): Let $\Delta := \|\mathbf{q} - \mathbf{p}^\star\|_2$ denote the (attack-dependent) separation between the adversarial mean histogram $\mathbf{q}$ and the benign population histogram $\mathbf{p}^\star$. Let $K_b$ be the number of benign clients and $N_{\min} := \min_{k \in \mathcal{B}} N_k$. Assume Lemma 7 gives $\|\mathbf{p}_k - \mathbf{p}^\star\|_2 \le C_{\mathrm{drift}} L_x \|\mathbf{e}_k\|_2$ for benign $k$ (with $C_{\mathrm{drift}}$ depending only on the binning and $f_{\max}$), and define $e_{\mathrm{rms}} := \max_{k \in \mathcal{B}} \sqrt{\mathbb{E}\|\mathbf{e}_k\|_2^2}$. Then, with probability at least $1 - \delta$ over benign sampling,

$$\min_{\text{Byz } j} \ \min_{\text{benign } k} \|\mathbf{v}_j - \mathbf{v}_k\|_2 \ge \Delta - r_b - r_a, \tag{32}$$

where $r_b := C_H \sqrt{\frac{\log(2HK_b/\delta)}{N_{\min}}} + C_{\mathrm{drift}} L_x e_{\mathrm{rms}}$ and $r_a$ is a radius controlling adversarial concentration around $\mathbf{q}$ (e.g., analogous to the first term if the attack is stochastic). Moreover, partial sharing reduces the Byzantine-induced steady-state error component $\mathcal{E}_\omega(M)$ (Lemma 4), which decreases $e_{\mathrm{rms}}$ in attack-dominated regimes, thereby shrinking $r_b$ and increasing the separation margin $\Delta - r_b - r_a$.

Proof (sketch): For any Byzantine $j$ and benign $k$, by the triangle inequality,

$$\|\mathbf{v}_j - \mathbf{v}_k\|_2 \ge \|\mathbf{q} - \mathbf{p}^\star\|_2 - \|\mathbf{v}_j - \mathbf{q}\|_2 - \|\mathbf{v}_k - \mathbf{p}^\star\|_2.$$

The benign term satisfies $\|\mathbf{v}_k - \mathbf{p}^\star\|_2 \le \|\mathbf{v}_k - \mathbf{p}_k\|_2 + \|\mathbf{p}_k - \mathbf{p}^\star\|_2$. Apply Lemma 6 with a union bound over $K_b$ benign clients to bound $\max_{k \in \mathcal{B}} \|\mathbf{v}_k - \mathbf{p}_k\|_2$ by the first term in $r_b$, and apply Lemma 7 plus Jensen to bound $\max_{k \in \mathcal{B}} \|\mathbf{p}_k - \mathbf{p}^\star\|_2$ by $C_{\mathrm{drift}} L_x e_{\mathrm{rms}}$. The adversarial term is controlled by $r_a$. Taking minima yields the claim.
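To make the dependence of the margin in (32) on training error concrete, the following sketch evaluates $r_b$ and $\Delta - r_b - r_a$ for two error levels. It is only a numerical illustration: the function names are hypothetical, $C_H = \sqrt{3}$ is taken from Lemma 6, and the values of $C_{\mathrm{drift}}$, $L_x$, $\Delta$, $r_a$, and both $e_{\mathrm{rms}}$ levels are assumed rather than taken from the experiments.

```python
import math


def benign_radius(num_bins, num_benign, delta, n_min, c_drift, l_x, e_rms):
    """r_b = C_H * sqrt(log(2 H K_b / delta) / N_min) + C_drift * L_x * e_rms."""
    c_h = math.sqrt(3.0)  # constant C_H from Lemma 6
    sampling = c_h * math.sqrt(math.log(2 * num_bins * num_benign / delta) / n_min)
    drift = c_drift * l_x * e_rms  # histogram drift caused by training error
    return sampling + drift


def separation_margin(sep, r_a, r_b):
    """Lower bound Delta - r_b - r_a on the Byzantine-to-benign histogram distance."""
    return sep - r_b - r_a


# Illustrative numbers only: c_drift, l_x, sep, r_a and both e_rms values are assumed.
common = dict(num_bins=100, num_benign=80, delta=0.05, n_min=1000, c_drift=1.0, l_x=1.0)
for label, e_rms in [("larger training error", 0.20), ("smaller training error", 0.05)]:
    r_b = benign_radius(e_rms=e_rms, **common)
    margin = separation_margin(sep=1.0, r_a=0.0, r_b=r_b)
    print(label, "r_b =", round(r_b, 3), "margin =", round(margin, 3))
```

With $H = 100$, $K_b = 80$, $\delta = 0.05$, and $N_{\min} = 1000$, the sampling term alone is about $0.195$, so in this assumed configuration any reduction in $e_{\mathrm{rms}}$ translates one-for-one (scaled by $C_{\mathrm{drift}} L_x$) into a larger margin.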
Theorem 3 (Distance-based filtering under partial sharing): Consider the calibration-stage filtering in Section III-B, where maliciousness scores $\{m_k\}_{k=1}^{K}$ are computed according to (12) and the $B := |\mathcal{S}_B|$ clients with the largest scores are removed. Let $K_b := K - B$ and define $\Delta := \|\mathbf{q} - \mathbf{p}^\star\|_2$, where $\mathbf{p}^\star$ is the benign population histogram and $\mathbf{q}$ is the adversarial mean histogram. Let $N_{\min} := \min_{k \in \mathcal{B}} N_k$ and define

$$r_b := C_H \sqrt{\frac{\log(2HK_b/\delta)}{N_{\min}}} + C_{\mathrm{drift}} L_x e_{\mathrm{rms}}, \tag{33}$$

where $e_{\mathrm{rms}}$ upper bounds benign regression error (as defined in Proposition 2) and $C_{\mathrm{drift}}$ is the drift constant from Lemma 7. Assume an adversarial concentration radius $r_a$ such that $\max_{j \in \mathcal{S}_B} \|\mathbf{v}_j - \mathbf{q}\|_2 \le r_a$ holds with probability at least $1 - \delta_a$, and assume $B < K_b - 1$. Define $\gamma := \Delta - r_a - r_b$ and $\Gamma := \Delta + r_a + r_b$. If the score-separation condition

$$(K_b - 1)\gamma > B\Gamma + (K_b - 1 - B) \, 2r_b \tag{34}$$

holds, then with probability at least $1 - (\delta + \delta_a)$ the filtering is exact (no benign client is removed and no Byzantine client is retained). In particular, $\mathbb{P}(\text{misfiltering}) \le \delta + \delta_a$. Moreover, under partial sharing, the Byzantine-induced steady-state training error decreases (Lemma 4), which reduces $e_{\mathrm{rms}}$ (in attack-dominated regimes), shrinks $r_b$ in (33), and makes (34) easier to satisfy.

Proof: See Appendix A.

Building on the filtering guarantee of Theorem 3, we obtain the following corollaries.

Corollary 3 (Post-filtering quantile bias): Let $\widehat{\mathcal{B}}$ denote the set of clients retained by the calibration-stage filter (Section III-B). Let $\hat{q}_{1-\alpha}$ be the $(1-\alpha)$ conformal quantile computed from the scores (or sketches) contributed by clients in $\widehat{\mathcal{B}}$, and let $q^\star_{1-\alpha}$ denote the corresponding benign quantile under $\mathbf{w}^\star$. Define the filtering failure probability $\varepsilon := \mathbb{P}(\widehat{\mathcal{B}} \ne \mathcal{B})$. Under the hypotheses of Theorem 3, $\varepsilon \le \delta + \delta_a$ (and, for the standard parameter choices in Lemma 6, it decays exponentially in $N_{\min}$). Assume the local density conditions of Theorem 1 hold around $q^\star_{1-\alpha}$. Then

$$|\hat{q}_{1-\alpha} - q^\star_{1-\alpha}| \le \underbrace{C_q \big(\mathbb{E}\|\mathbf{e}_k\|_2^2\big)^{1/4}}_{\text{training-phase effect}} + \underbrace{C_{\mathrm{adv}} \, \varepsilon}_{\text{residual adversaries}}, \tag{35}$$

where $C_q$ is the constant from Corollary 1 (or Corollary 2), and $C_{\mathrm{adv}}$ depends only on the score range (e.g., $C_{\mathrm{adv}} = 1$ if scores are normalized to $[0, 1]$). Consequently, partial sharing reduces the first term via training MSE attenuation and, by increasing the separation margin $\gamma$, suppresses $\varepsilon$, tightening the overall calibration quantile.

Corollary 4 (Coverage bounds under Byzantine attacks): Let $K$ be the total number of clients, $K_b = K - |\mathcal{S}_B|$ the number of benign clients, and $n_b$ the number of calibration samples per benign client. Let $\varepsilon$ denote the filtering failure probability (a Byzantine client passes the filter). For the post-filtering empirical coverage $\widehat{\mathrm{Cov}}$, following the certification framework of [37], with probability at least $1 - \beta$, we have $1 - \alpha - \delta_L \le \widehat{\mathrm{Cov}} \le 1 - \alpha + \delta_U$, where

$$\delta_L = \frac{\varepsilon n_b + 1}{n_b + K_b} + \frac{H \cdot \Phi^{-1}\big(1 - \beta/(2HK_b)\big)}{2\sqrt{n_b}} \cdot \frac{1 + \tau}{1 - \tau}, \qquad \delta_U = \varepsilon + \frac{K_b}{n_b + K_b} + \frac{H \cdot \Phi^{-1}\big(1 - \beta/(2HK_b)\big)}{2\sqrt{n_b}} \cdot \frac{1 + \tau}{1 - \tau}, \tag{36}$$

with $\tau = (K - K_b)/K_b$ and $\Phi^{-1}$ the standard normal quantile function.

Corollary 5 (Tighter bounds under partial sharing): Under PRISM-FCP with partial sharing ratio $M/D < 1$, the coverage bounds in Corollary 4 are tightened through a reduction in the filtering failure probability $\varepsilon$.

Mechanism. By Theorem 3, filtering succeeds with probability at least $1 - (\delta + \delta_a)$ whenever the score-separation condition holds, with benign radius $r_b$ and error level $e_{\mathrm{rms}}$ as defined therein. Partial sharing attenuates Byzantine influence during training (Lemma 4), reducing $e_{\mathrm{rms}}$ (in attack-dominated regimes) and hence shrinking $r_b$, which enlarges the separation margin $\gamma = \Delta - r_a - r_b$.
This decreases the failure probability $\varepsilon$ and tightens both $\delta_L$ and $\delta_U$, since they are monotone increasing in $\varepsilon$.

V. SIMULATION RESULTS

A. Synthetic-Data Experiments

Experimental setup. We consider a federated network of $K = 100$ clients. At each training iteration, the server uniformly samples $|\mathcal{S}_n| = 10$ clients to participate. Each client $k$ observes a non-IID stream $(\mathbf{x}_{k,n}, y_{k,n})$ generated by the linear model (1), with the ground-truth parameter vector normalized as $\|\mathbf{w}^\star\|_2 = 1$. We set the model dimension to $D = 50$ and apply partial sharing with $M = 15$ parameters per iteration (sharing ratio $M/D = 0.3$), which corresponds to a 70% reduction in the number of exchanged parameters. The entries of $\mathbf{x}_{k,n}$ are drawn from zero-mean Gaussian distributions with client-specific variances $\varsigma_k^2 \sim \mathcal{U}(0.2, 1.2)$, while the observation noise is Gaussian with variance $\sigma_{\nu_k}^2 \sim \mathcal{U}(0.005, 0.025)$. All results are averaged over 100 independent Monte Carlo trials.

Byzantine model. We consider $|\mathcal{S}_B| = 20$ Byzantine clients (20% of the network). During training, these clients inject additive Gaussian perturbations into their transmitted model updates with probability $p_a = 0.25$, using variance $\sigma_B^2 = 0.1$ (cf. Section II-C). During calibration, the same Byzantine clients submit adversarial nonconformity scores according to three attack scenarios (Fig. 2): (i) efficiency attack (deflating scores to shrink intervals by sending all-zero scores), (ii) coverage attack (inflating scores to widen intervals by sending scores $10\times$ the benign mean), and (iii) random attack (adding zero-mean Gaussian noise with variance $\sigma_C^2 = 0.5$ to original scores while keeping them nonnegative). We set the size of the characterization vectors to $H = 100$ to balance histogram resolution and communication cost, and target 90% coverage (i.e., $\alpha = 0.1$ in (8)).

Fig. 3. Distribution of maliciousness scores $m_k$ (cf. (12)) under different calibration-phase Byzantine attacks (efficiency, coverage, random). Byzantine clients (red) attain markedly larger scores than benign clients, enabling reliable outlier filtering.

Maliciousness-score separation. In Fig. 3, we show the maliciousness scores of all clients under the three calibration attacks. In each case, Byzantine clients concentrate on substantially larger scores than benign clients, making them clear outliers and enabling reliable filtering prior to quantile estimation. Random attacks are the hardest of the three to mitigate: as the variance of the added Gaussian noise decreases, specifically when $\sigma_C^2 \le 0.2$, the perturbed scores become harder to distinguish from benign ones and detection becomes more difficult. Handling such low-variance random attacks calls for more sophisticated detection approaches, which we leave to future work.

We report the marginal coverage and average prediction-interval width of FCP, Rob-FCP, and PRISM-FCP under these Byzantine attack scenarios in Fig. 4 and Table I. We train for 1,000 iterations, then perform conformal calibration using 1,000 samples and evaluate on 1,000 test samples per client. For clarity, in Fig. 4, we only visualize the first 30 test samples.

TABLE I: Marginal coverage and average interval width under different Byzantine attacks ($K = 100$ clients, $|\mathcal{S}_B| = 20$ Byzantine, $D = 50$, $\alpha = 0.1$).

| Attack | Method | $M/D$ | Coverage (%) | Interval Width |
|---|---|---|---|---|
| Efficiency | PRISM-FCP | 0.3 | 90.0 ± 0.1 | 0.91 ± 0.13 |
| Efficiency | Rob-FCP | 1.0 | 90.0 ± 0.1 | 1.68 ± 0.28 |
| Efficiency | FCP | 1.0 | 87.5 ± 0.2 | 1.58 ± 0.25 |
| Coverage | PRISM-FCP | 0.3 | 90.0 ± 0.1 | 0.91 ± 0.13 |
| Coverage | Rob-FCP | 1.0 | 90.0 ± 0.1 | 1.68 ± 0.28 |
| Coverage | FCP | 1.0 | 100.0 ± 0.0 | 7.67 ± 1.23 |
| Random | PRISM-FCP | 0.3 | 90.0 ± 0.1 | 0.91 ± 0.13 |
| Random | Rob-FCP | 1.0 | 90.0 ± 0.1 | 1.68 ± 0.28 |
| Random | FCP | 1.0 | 91.9 ± 0.6 | 1.87 ± 0.27 |

In Table I, we compare FCP, Rob-FCP, and PRISM-FCP under Byzantine attacks that affect both the training and calibration phases. Rob-FCP uses full model sharing ($M/D = 1$) with Byzantine filtering, while FCP uses full sharing without filtering. Under the coverage attacks, FCP exhibits inflated coverage (100.0%) together with interval widths that are $8.4\times$ larger than PRISM-FCP, while both PRISM-FCP and Rob-FCP remain close to the nominal 90% target. Under the efficiency and random attacks, the three methods achieve similar coverage, but PRISM-FCP consistently produces tighter intervals, reflecting its lower training error. Overall, these results show that calibration-phase filtering is necessary for reliable uncertainty quantification under adversarial manipulation.

PRISM-FCP further improves over Rob-FCP by substantially tightening the prediction intervals. Although both methods filter Byzantine clients during calibration, PRISM-FCP yields intervals that are $1.8\times$ narrower than Rob-FCP by additionally mitigating Byzantine influence during training via partial sharing. This agrees with Theorem 2, which predicts an $M/D$ attenuation of injected perturbation energy. The training MSE corroborates this effect: PRISM-FCP achieves an MSE of $-26.4$ dB compared to Rob-FCP's MSE of $-21.4$ dB (a 5 dB improvement), consistent with the MSE decomposition in Lemma 3.

Fig. 5 corroborates Corollary 2 by reporting the calibration-quantile deviation across sharing ratios. The deviation decreases monotonically as $M/D$ decreases, and at $M/D = 0.1$ it is roughly 70% smaller than under full sharing ($M/D = 1$).
This trend aligns with the MSE decomposition in Lemma 3: partial sharing attenuates the Byzantine-induced term $\mathcal{E}_\omega$ (Lemma 4), which in turn reduces the perturbation of residuals and the resulting conformal quantile.

Robustness to unknown $|\mathcal{S}_B|$. PRISM-FCP can be deployed either with a known Byzantine count $|\mathcal{S}_B|$, where score-based filtering excludes exactly $|\mathcal{S}_B|$ clients, or without this knowledge, by flagging outliers with a robust MAD-based rule (Remark 3) that uses a scale factor of 1.4826 and a threshold of 2.5. Across all three attack types, the known-$|\mathcal{S}_B|$ setting achieves perfect detection (20/20 true positives and no false positives). The MAD rule also attains 100% recall, while incurring roughly one false positive on average. Importantly, both settings preserve nominal coverage (90%), indicating that PRISM-FCP remains robust even when $|\mathcal{S}_B|$ is not known a priori.

Fig. 4. Illustrative prediction intervals under (a) efficiency, (b) coverage, and (c) random attacks, plotted as target value $y$ versus time index $n$. The true target values are shown as dashed lines, while prediction intervals from FCP and PRISM-FCP ($M/D = 0.3$) are shown in red and green, respectively.

Fig. 5. Quantile deviation $|\hat{q}_{1-\alpha} - q^\star_{1-\alpha}|$ of PRISM-FCP versus sharing ratio $M/D$ under different types of Byzantine attacks.

B. Real-Data Experiments (UCI Superconductivity Dataset)

To demonstrate practical applicability, we evaluate PRISM-FCP on the UCI Superconductivity dataset [45], which contains 21,263 samples with $D = 81$ features describing material properties, with the target being the critical temperature, in Kelvin (K), at which superconductivity occurs.

Experimental setup. We distribute the dataset across $K = 100$ clients, including $|\mathcal{S}_B| = 20$ Byzantine clients. Each client is assigned 3,000 samples, split into 1,000 training, 1,000 calibration, and 1,000 test samples. (To simulate non-IID data heterogeneity, we partition the target variable (critical temperature) into 10 equal-frequency quantile bins and draw each client's mixing proportions over these bins from a $\mathrm{Dir}(0.5)$ distribution [46]. Each client receives 3,000 samples accordingly, with overlap permitted across clients.) At each training iteration, the server uniformly samples $|\mathcal{S}_n| = 20$ clients to participate. Features are standardized to zero mean and unit variance, and targets are normalized similarly. As before, we compare: (i) PRISM-FCP with partial sharing ($M < D$) and Byzantine filtering, (ii) Rob-FCP with full sharing ($M = D$) and Byzantine filtering, and (iii) FCP with full sharing and no filtering.

TABLE II: Evaluation results on the UCI Superconductivity dataset with $D = 81$.

| Attack | Method | $M/D$ | Coverage (%) | Interval Width (K) |
|---|---|---|---|---|
| Efficiency | PRISM-FCP | 0.25 | 90.0 ± 0.1 | 64.09 ± 1.25 |
| Efficiency | PRISM-FCP | 0.49 | 90.0 ± 0.1 | 66.43 ± 1.99 |
| Efficiency | PRISM-FCP | 0.74 | 90.0 ± 0.2 | 69.87 ± 2.81 |
| Efficiency | PRISM-FCP | 1.0 | 90.0 ± 0.2 | 74.33 ± 4.37 |
| Efficiency | Rob-FCP | 1.0 | 90.0 ± 0.2 | 74.33 ± 4.37 |
| Efficiency | FCP | 1.0 | 87.5 ± 0.2 | 69.36 ± 4.10 |
| Coverage | PRISM-FCP | 0.25 | 90.0 ± 0.1 | 64.09 ± 1.25 |
| Coverage | PRISM-FCP | 0.49 | 90.0 ± 0.1 | 66.43 ± 1.99 |
| Coverage | PRISM-FCP | 0.74 | 90.0 ± 0.2 | 69.87 ± 2.81 |
| Coverage | PRISM-FCP | 1.0 | 90.0 ± 0.2 | 74.33 ± 4.37 |
| Coverage | Rob-FCP | 1.0 | 90.0 ± 0.2 | 74.33 ± 4.37 |
| Coverage | FCP | 1.0 | 100.0 ± 0.0 | 364.98 ± 22.33 |
| Random | PRISM-FCP | 0.25 | 90.0 ± 0.1 | 64.33 ± 1.28 |
| Random | PRISM-FCP | 0.49 | 90.0 ± 0.1 | 66.66 ± 2.04 |
| Random | PRISM-FCP | 0.74 | 90.0 ± 0.2 | 70.09 ± 2.86 |
| Random | PRISM-FCP | 1.0 | 90.0 ± 0.2 | 74.52 ± 4.39 |
| Random | Rob-FCP | 1.0 | 90.0 ± 0.2 | 74.52 ± 4.39 |
| Random | FCP | 1.0 | 91.7 ± 0.3 | 78.43 ± 4.17 |

We sweep sharing ratios $M/D \in \{0.25, 0.49, 0.74, 1.0\}$ (i.e., $M \in \{20, 40, 60, 81\}$) and average results over 100 independent trials. The target coverage is $1 - \alpha = 90\%$. In Table II, we present marginal coverage and average interval width under the three calibration-phase attack scenarios. The results corroborate the synthetic experiments and validate PRISM-FCP on real-world data:

Coverage validity. Across all three attacks and all sharing ratios, PRISM-FCP stays close to the 90% coverage target, indicating that partial sharing does not compromise conformal validity in practice. In contrast, FCP without Byzantine filtering fails under adversarial calibration: it substantially inflates intervals under coverage attacks (reaching 100.0% coverage with widths more than $5\times$ those of PRISM-FCP with partial sharing) and undercovers under efficiency attacks (e.g., 87.5% coverage).

Interval efficiency. PRISM-FCP yields tighter intervals than Rob-FCP. For example, at $M/D = 0.25$ ($M = 20$), PRISM-FCP produces intervals that are about 14% narrower than Rob-FCP across all three attacks. This improvement is attributable to reduced training error from partial sharing, which attenuates Byzantine influence during training.

Communication-accuracy trade-off. Smaller sharing ratios generally tighten intervals due to stronger Byzantine attenuation, but the benefit saturates around $M/D \approx 0.5$. In particular, reducing $M/D$ from 0.49 to 0.25 provides only marginal additional width reduction while slightly slowing convergence, suggesting $0.3 \le M/D \le 0.5$ as a practical operating range that balances efficiency and communication cost.

Attack-type invariance.
The relative advantage of PRISM-FCP over Rob-FCP is stable across efficiency, coverage, and random attacks, consistent with the interpretation that training-stage mitigation via partial sharing complements the calibration-phase filtering.

Overall, PRISM-FCP provides two complementary benefits: (i) resilience to Byzantine behavior in both training and calibration, and (ii) communication efficiency through partial model parameter sharing and client subsampling, making it a practical approach for scalable FCP.

VI. CONCLUSION AND FUTURE WORK

We proposed PRISM-FCP to mitigate Byzantine attacks in both training and calibration phases of federated conformal prediction. We showed that partial sharing attenuates adversarial perturbation energy during training, reducing steady-state error and tightening prediction intervals, while histogram-based filtering strengthens robustness during calibration. Comprehensive experiments on both synthetic and real data corroborated our theoretical analysis. Several directions merit future investigation. Extending the theory beyond linear regression to nonlinear models and deep networks is an important next step. In addition, analyzing robustness against fully adaptive adversaries that exploit partial-sharing patterns will further strengthen the guarantees.

APPENDIX A
PROOF OF THEOREM 3

We prove that on a high-probability event, every Byzantine client has a strictly larger maliciousness score than every benign client, hence removing the $B$ largest scores recovers exactly the Byzantine set.

Step 1: Benign histogram concentration. For each benign client $k \in \mathcal{B}$, Lemma 6 implies that for any $\delta' \in (0, 1)$,

$$\mathbb{P}\left(\|\mathbf{v}_k - \mathbf{p}_k\|_2 > C_H \sqrt{\frac{\log(2H/\delta')}{N_k}}\right) \le \delta'. \tag{37}$$

Choose $\delta' = \delta/K_b$ and use $N_k \ge N_{\min}$ to get

$$\mathbb{P}\left(\|\mathbf{v}_k - \mathbf{p}_k\|_2 > C_H \sqrt{\frac{\log(2HK_b/\delta)}{N_{\min}}}\right) \le \frac{\delta}{K_b}. \tag{38}$$

A union bound over all benign clients shows that the event

$$E_b := \left\{\max_{k \in \mathcal{B}} \|\mathbf{v}_k - \mathbf{p}_k\|_2 \le C_H \sqrt{\frac{\log(2HK_b/\delta)}{N_{\min}}}\right\} \tag{39}$$

satisfies $\mathbb{P}(E_b) \ge 1 - \delta$.

Step 2: Drift due to training error. Lemma 7 gives, for each benign $k$, $\|\mathbf{p}_k - \mathbf{p}^\star\|_2 \le C_{\mathrm{drift}} L_x \|\mathbf{e}_k\|_2$. By the definition of $e_{\mathrm{rms}}$ used in the manuscript (as an upper bound on benign regression error), we have $\|\mathbf{e}_k\|_2 \le e_{\mathrm{rms}}$ for benign $k$, hence

$$\max_{k \in \mathcal{B}} \|\mathbf{p}_k - \mathbf{p}^\star\|_2 \le C_{\mathrm{drift}} L_x e_{\mathrm{rms}}.$$

Combining this with $E_b$ and the triangle inequality,

$$\max_{k \in \mathcal{B}} \|\mathbf{v}_k - \mathbf{p}^\star\|_2 \le \max_{k \in \mathcal{B}} \|\mathbf{v}_k - \mathbf{p}_k\|_2 + \max_{k \in \mathcal{B}} \|\mathbf{p}_k - \mathbf{p}^\star\|_2 \le r_b \quad \text{on } E_b,$$

where $r_b$ is defined in (33).

Step 3: Adversarial concentration and distance bounds. Define the adversarial concentration event $E_a := \{\max_{j \in \mathcal{S}_B} \|\mathbf{v}_j - \mathbf{q}\|_2 \le r_a\}$, which holds with probability at least $1 - \delta_a$ by the assumption of Theorem 3. Let $E := E_b \cap E_a$, thus $\mathbb{P}(E) \ge 1 - (\delta + \delta_a)$. Conditioning on $E$, for any two benign clients $k, k'$, we have

$$\|\mathbf{v}_k - \mathbf{v}_{k'}\|_2 \le \|\mathbf{v}_k - \mathbf{p}^\star\|_2 + \|\mathbf{v}_{k'} - \mathbf{p}^\star\|_2 \le 2r_b.$$

For any Byzantine client $j$ and benign client $k$, the triangle inequality yields the lower bound

$$\|\mathbf{v}_j - \mathbf{v}_k\|_2 \ge \|\mathbf{q} - \mathbf{p}^\star\|_2 - \|\mathbf{v}_j - \mathbf{q}\|_2 - \|\mathbf{v}_k - \mathbf{p}^\star\|_2 \ge \Delta - r_a - r_b =: \gamma, \tag{40}$$

and similarly, the upper bound

$$\|\mathbf{v}_j - \mathbf{v}_k\|_2 \le \|\mathbf{v}_j - \mathbf{q}\|_2 + \|\mathbf{q} - \mathbf{p}^\star\|_2 + \|\mathbf{v}_k - \mathbf{p}^\star\|_2 \le \Delta + r_a + r_b =: \Gamma. \tag{41}$$

Step 4: Lower bound on Byzantine scores. Fix any Byzantine client $j \in \mathcal{S}_B$. There are $K_b$ benign clients, and by the bound above, each benign $k$ satisfies $\|\mathbf{v}_j - \mathbf{v}_k\|_2 \ge \gamma$. Consider any subset $T \subseteq \mathcal{B}$ with $|T| = K_b - 1$. Then,

$$\sum_{k \in T} \|\mathbf{v}_j - \mathbf{v}_k\|_2 \ge (K_b - 1)\gamma.$$

Since $\mathrm{FN}(j, K_b - 1)$ contains the $K_b - 1$ largest distances from $j$, its sum is at least the sum over any $K_b - 1$ clients, hence

$$m_j = \sum_{k' \in \mathrm{FN}(j, K_b - 1)} \|\mathbf{v}_j - \mathbf{v}_{k'}\|_2 \ge (K_b - 1)\gamma.$$

Step 5: Upper bound on benign scores. Fix any benign client $k \in \mathcal{B}$. Among its $K_b - 1$ farthest neighbors, at most $B$ can be Byzantine (there are only $B$ Byzantine clients in total).
For any benign neighbor $k'$ we have $\|\mathbf{v}_k - \mathbf{v}_{k'}\|_2 \le 2r_b$, and for any Byzantine neighbor $j$ we have $\|\mathbf{v}_k - \mathbf{v}_j\|_2 \le \Gamma$. Therefore,

$$m_k = \sum_{k' \in \mathrm{FN}(k, K_b - 1)} \|\mathbf{v}_k - \mathbf{v}_{k'}\|_2 \le B\Gamma + (K_b - 1 - B) \, 2r_b.$$

Step 6: Score separation implies exact filtering. By Steps 4–5, on $E$ we have for every Byzantine $j$ and benign $k$,

$$m_j \ge (K_b - 1)\gamma, \qquad m_k \le B\Gamma + (K_b - 1 - B) \, 2r_b.$$

Under condition (34), this yields $m_j > m_k$ for all Byzantine $j$ and benign $k$. Hence the $B$ largest scores $\{m_k\}$ belong exactly to the Byzantine clients, so filtering by (12) is exact on $E$.

Step 7: Misfiltering probability. Since misfiltering can occur only if $E$ fails,

$$\mathbb{P}(\text{misfiltering}) \le \mathbb{P}(E^c) \le \mathbb{P}(E_b^c) + \mathbb{P}(E_a^c) \le \delta + \delta_a,$$

which completes the proof.

REFERENCES

[1] H. B. McMahan, E. Moore, D. Ramage, S. Hampson, and B. A. y Arcas, “Communication-efficient learning of deep networks from decentralized data,” in Proc. Int. Conf. Artif. Intell. Stat., 2017, pp. 1273–1282.
[2] Q. Yang, Y. Liu, T. Chen, and Y. Tong, “Federated machine learning: Concept and applications,” ACM Trans. Intell. Syst. Technol., vol. 10, no. 2, pp. 1–19, 2019.
[3] T. Li, A. K. Sahu, A. Talwalkar, and V. Smith, “Federated learning: Challenges, methods, and future directions,” IEEE Signal Process. Mag., vol. 37, no. 3, pp. 50–60, 2020.
[4] P. Kairouz, H. B. McMahan, B. Avent, A. Bellet, M. Bennis, A. N. Bhagoji, K. Bonawitz, Z. Charles, G. Cormode, R. Cummings et al., “Advances and open problems in federated learning,” Found. Trends Mach. Learn., vol. 14, no. 1–2, pp. 1–210, 2021.
[5] E. Lari, V. C. Gogineni, R. Arablouei, and S. Werner, “Noise-robust and resource-efficient ADMM-based federated learning,” Signal Process., vol. 233, p. 109988, 2025.
[6] V. Smith, C. Chiang, M. Sanjabi, and A. S. Talwalkar, “Federated multi-task learning,” in Proc. Adv. Neural Inf. Process. Syst., 2017.
[7] J. Wang, Q. Liu, H. Liang, G. Joshi, and H. V. Poor, “Tackling the objective inconsistency problem in heterogeneous federated optimization,” in Proc. Adv. Neural Inf. Process. Syst., vol. 33, 2020, pp. 7611–7623.
[8] S. Reddi, Z. Charles, M. Zaheer, Z. Garrett, K. Rush, J. Konečný, S. Kumar, and H. B. McMahan, “Adaptive federated optimization,” in Proc. Int. Conf. Learn. Represent., 2021.
[9] K. Bonawitz, H. Eichner, W. Grieskamp, D. Huba, A. Ingerman, V. Ivanov, C. Kiddon, J. Konečný, S. Mazzocchi, B. McMahan et al., “Towards federated learning at scale: System design,” in Proc. MLSys, 2019, pp. 374–388.
[10] J. Wang, Z. Charles, Z. Xu, G. Joshi, H. B. McMahan, M. Al-Shedivat, G. Andrew, S. Avestimehr, K. Deng, J. Duchi et al., “A field guide to federated optimization,” in Proc. Int. Conf. Learn. Represent., 2024.
[11] Z. Charles, N. Garrett, Z. Xu, and G. Joshi, “Towards federated foundation models: Scalable dataset pipelines for group-structured learning,” in Proc. Adv. Neural Inf. Process. Syst., 2024.
[12] Q. Yang, Y. Liu, Y. Cheng, Y. Kang, T. Chen, and H. Yu, “Federated learning,” IEEE Trans. Big Data, vol. 6, no. 4, pp. 673–688, 2020.
[13] W. Y. B. Lim, N. C. Luong, D. T. Hoang, Y. Jiao, Y.-C. Liang, Q. Yang, D. Niyato, and C. Miao, “Federated learning in mobile edge networks: A comprehensive survey,” IEEE Commun. Surv. Tutor., vol. 22, no. 3, pp. 2031–2063, 2020.
[14] T. Gafni, N. Shlezinger, K. Cohen, Y. C. Eldar, and H. V. Poor, “Federated learning: A signal processing perspective,” IEEE Signal Process. Mag., vol. 39, no. 3, pp. 14–41, 2022.
[15] E. Lari, V. C. Gogineni, R. Arablouei, and S. Werner, “On the resilience of online federated learning to model poisoning attacks through partial sharing,” in Proc. IEEE Int. Conf. Acoust. Speech Signal Process., 2024, pp. 9201–9205.
[16] E. Lari, R. Arablouei, V. C. Gogineni, and S. Werner, “Resilience in online federated learning: Mitigating model-poisoning attacks via partial sharing,” IEEE Trans. Signal Inf. Process. Netw., vol. 11, pp. 388–400, 2025.
[17] V. Vovk, A. Gammerman, and G. Shafer, Algorithmic learning in a random world. Springer, 2005.
[18] V. Plassier, N. Kotelevskii, A. Rubashevskii, F. Noskov, M. Velikanov, A. Fishkov, S. Horvath, M. Takac, E. Moulines, and M. Panov, “Efficient conformal prediction under data heterogeneity,” in Proc. Int. Conf. Artif. Intell. Stat. PMLR, 2024, pp. 4879–4887.
[19] M. Zhu, M. Zecchin, S. Park et al., “Federated inference with reliable uncertainty quantification over wireless channels via conformal prediction,” IEEE Trans. Signal Process., vol. 72, pp. 1235–1250, 2024.
[20] F. Ye, M. Yang, J. Pang, L. Wang, D. Wong, E. Yilmaz, S. Shi, and Z. Tu, “Benchmarking LLMs via uncertainty quantification,” Proc. Adv. Neural Inf. Process. Syst., vol. 37, pp. 15 356–15 385, 2024.
[21] X. Zhou, B. Chen, Y. Gui, and L. Cheng, “Conformal prediction: A data perspective,” ACM Comput. Surv., vol. 58, no. 2, pp. 1–37, 2025.
[22] J. Lei, M. G’Sell, A. Rinaldo, R. J. Tibshirani, and L. Wasserman, “Distribution-free predictive inference for regression,” J. Am. Stat. Assoc., vol. 113, no. 523, pp. 1094–1111, 2018.
[23] J. Ai and Z. Ren, “Not all distributional shifts are equal: Fine-grained robust conformal inference,” in Proc. Int. Conf. Mach. Learn., 2024, pp. 641–665.
[24] C. Lu, Y. Yu, S. P. Karimireddy, M. Jordan, and R. Raskar, “Federated conformal predictors for distributed uncertainty quantification,” in Proc. Int. Conf. Mach. Learn. PMLR, 2023, pp. 22 942–22 964.
[25] P. Humbert, B. Le Bars, A. Bellet, and S. Arlot, “One-shot federated conformal prediction,” in Proc. Int. Conf. Mach. Learn. PMLR, 2023, pp. 14 153–14 177.
[26] V. Plassier, M. Makni, A. Rubashevskii, E. Moulines, and M. Panov, “Conformal prediction for federated uncertainty quantification under label shift,” in Proc. Int. Conf. Mach. Learn., 2023, pp. 28 101–28 139.
[27] Y. Min, C. Zhang, L. Peng, and C. Zou, “Personalized federated conformal prediction with localization,” in Proc. Adv. Neural Inf. Process. Syst., 2025.
[28] N. Koutsoubis, A. Waqas, Y. Yilmaz et al., “Privacy-preserving federated learning and uncertainty quantification in medical imaging,” Radiol.: Artif. Intell., vol. 7, no. 4, 2025.
[29] P. Blanchard, E. M. El Mhamdi, R. Guerraoui, and J. Stainer, “Machine learning with adversaries: Byzantine tolerant gradient descent,” Proc. Adv. Neural Inf. Process. Syst., vol. 30, 2017.
[30] E. M. E. Mhamdi, R. Guerraoui, and S. Rouault, “The hidden vulnerability of distributed learning in byzantium,” in Proc. Int. Conf. Mach. Learn., 2018, pp. 3521–3530.
[31] M. Fang, X. Cao, J. Jia, and N. Gong, “Local model poisoning attacks to Byzantine-Robust federated learning,” in USENIX Security Symp., Aug. 2020, pp. 1605–1622.
[32] W. Huang, Z. Shi, M. Ye, H. Li, and B. Du, “Self-driven entropy aggregation for Byzantine-robust heterogeneous federated learning,” in Proc. Int. Conf. Mach. Learn., 2024, pp. 20 096–20 110.
[33] D. Yin, Y. Chen, R. Kannan, and P. Bartlett, “Byzantine-robust distributed learning: Towards optimal statistical rates,” in Proc. Int. Conf. Mach. Learn., 2018, pp. 5650–5659.
[34] K. Pillutla, S. M. Kakade, and Z. Harchaoui, “Robust aggregation for federated learning,” IEEE Trans. Signal Process., vol. 70, pp. 1142–1154, 2022.
[35] C. Qian, M. Wang, H. Ren, and C. Zou, “ByMI: Byzantine machine identification with false discovery rate control,” in Proc. Int. Conf. Mach. Learn., 2024, pp. 41 357–41 382.
[36] B. Zhang, M. Fang, Z. Liu, B. Yi, P. Zhou, Y. Wang, T. Li, and Z. Liu, “Practical framework for privacy-preserving and Byzantine-robust federated learning,” IEEE Trans. Inf. Forensics Security, 2025.
[37] M. Kang, Z. Lin, J. Sun, C. Xiao, and B. Li, “Certifiably byzantine-robust federated conformal prediction,” in Proc. Int. Conf. Mach. Learn. PMLR, 2024, pp. 23 022–23 057.
[38] V. C. Gogineni, S. Werner, Y.-F. Huang, and A. Kuh, “Communication-efficient online federated learning framework for nonlinear regression,” in Proc. IEEE Int. Conf. Acoust. Speech Signal Process., 2022, pp. 5228–5232.
[39] V. C. Gogineni, S. Werner, Y.-F. Huang, and A. Kuh, “Communication-efficient online federated learning strategies for kernel regression,” IEEE Internet Things J., vol. 10, pp. 4531–4544, 2023.
[40] B. Kailkhura, S. Brahma, and P. K. Varshney, “Data falsification attacks on consensus-based detection systems,” IEEE Trans. Signal Inf. Process. Netw., vol. 3, no. 1, pp. 145–158, 2017.
[41] B. De Finetti, “On the condition of partial exchangeability,” Stud. Inductive Logic Probab., vol. 2, pp. 193–205, 1980.
[42] T. Dunning, “The T-Digest: Efficient estimates of distributions,” Software Impacts, vol. 7, p. 100049, 2021.
[43] C. Leys, C. Ley, O. Klein, P. Bernard, and L. Licata, “Detecting outliers: Do not use standard deviation around the mean, use absolute deviation around the median,” J. Exp. Soc. Psychol., vol. 49, pp. 764–766, 2013.
[44] J. A. Tropp, “User-friendly tail bounds for sums of random matrices,” Found. Comput. Math., vol. 12, no. 4, pp. 389–434, 2012.
[45] K. Hamidieh, “A data-driven statistical model for predicting the critical temperature of a superconductor,” Comput. Mater. Sci., vol. 154, pp. 346–354, 2018.
[46] T.-M. H. Hsu, H. Qi, and M. Brown, “Measuring the effects of non-identical data distribution in federated optimization,” arXiv preprint arXiv:1909.06335, 2019.
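
Supplementary numerical sketch for Steps 5–7 of the proof. The short Python example below only illustrates the arithmetic of the score-separation argument; it is not the authors' implementation. It assumes that condition (34), which is not restated here, amounts to the strict inequality $(K_b - 1)\gamma > B\Gamma + (K_b - 1 - B)\,2 r_b$, and that filtering by (12) discards the $B$ clients with the largest maliciousness scores, as Step 6 describes. The function names and all numerical values are hypothetical and chosen only for illustration.

```python
import numpy as np


def score_bounds(K_b, B, gamma, Gamma, r_b):
    """Bounds used in Step 6 (hypothetical helper, not from the paper).

    Returns (byz_lower, benign_upper), where
      byz_lower    = (K_b - 1) * gamma                      lower bound on m_j
      benign_upper = B * Gamma + (K_b - 1 - B) * 2 * r_b    upper bound on m_k
    """
    byz_lower = (K_b - 1) * gamma
    benign_upper = B * Gamma + (K_b - 1 - B) * 2.0 * r_b
    return byz_lower, benign_upper


def scores_separated(K_b, B, gamma, Gamma, r_b):
    """True when every Byzantine score must exceed every benign score.

    Assumption: this strict inequality is what condition (34) provides.
    """
    byz_lower, benign_upper = score_bounds(K_b, B, gamma, Gamma, r_b)
    return byz_lower > benign_upper


def flag_largest(scores, B):
    """Indices of the B largest scores, i.e. the clients to be filtered."""
    if B <= 0:
        return set()
    order = np.argsort(np.asarray(scores, dtype=float))  # ascending order
    return set(order[-B:].tolist())


def misfilter_bound(delta, delta_a):
    """Union bound of Step 7, clipped to 1 since it bounds a probability."""
    return min(1.0, delta + delta_a)


if __name__ == "__main__":
    # Illustrative values only: 6 benign clients, 2 Byzantine clients.
    K_b, B = 6, 2
    print(score_bounds(K_b, B, gamma=5.0, Gamma=6.0, r_b=0.5))
    print(scores_separated(K_b, B, gamma=5.0, Gamma=6.0, r_b=0.5))
    # Scores consistent with the bounds: clients 6 and 7 are Byzantine.
    scores = [3.0, 4.5, 15.0, 2.0, 9.0, 7.5, 25.0, 31.0]
    print(flag_largest(scores, B))
    print(misfilter_bound(delta=0.05, delta_a=0.01))
```

When the separation check holds, any score vector that respects the two bounds places the Byzantine clients strictly above all benign ones, so selecting the $B$ largest scores recovers exactly the Byzantine set. This is the sense in which filtering is exact on $\mathcal{E}$.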
