Learning to Tune Pure Pursuit in Autonomous Racing: Joint Lookahead and Steering-Gain Control with PPO


Authors: Mohamed Elgouhary, Amr S. El-Wakeel

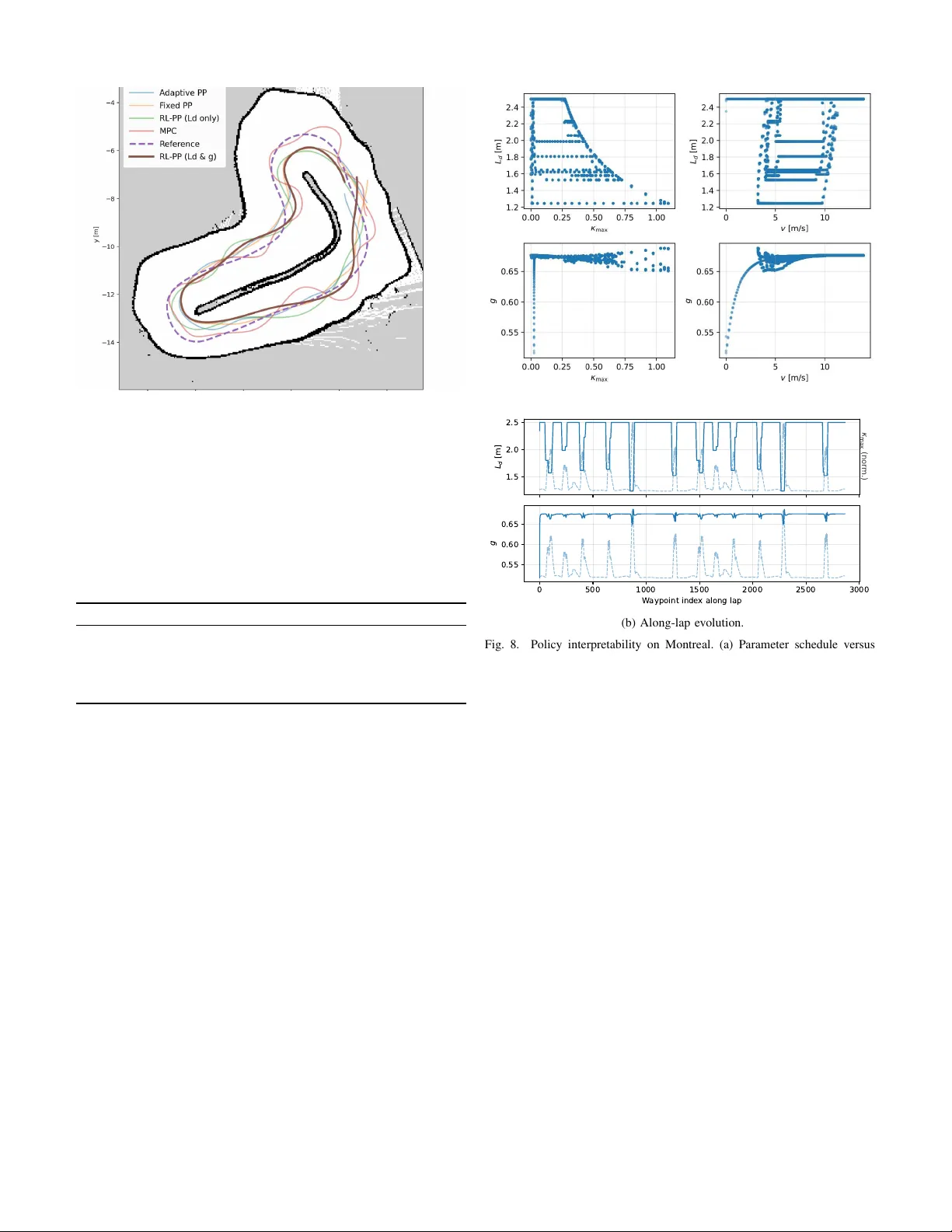

Abstract: Pure Pursuit (PP) is widely used in autonomous racing for real-time path tracking due to its efficiency and geometric clarity, yet performance is highly sensitive to how key parameters (lookahead distance and steering gain) are chosen. Standard velocity-based schedules adjust these only approximately and often fail to transfer across tracks and speed profiles. We propose a reinforcement-learning (RL) approach that jointly chooses the lookahead $L_d$ and a steering gain $g$ online using Proximal Policy Optimization (PPO). The policy observes compact state features (speed and curvature taps) and outputs $(L_d, g)$ at each control step. Trained in F1TENTH Gym and deployed in a ROS 2 stack, the policy drives PP directly (with light smoothing) and requires no per-map retuning. Across simulation and real-car tests, the proposed RL–PP controller that jointly selects $(L_d, g)$ consistently outperforms fixed-lookahead PP, velocity-scheduled adaptive PP, and an RL lookahead-only variant, and it also exceeds a kinematic MPC raceline tracker under our evaluated settings in lap time, path-tracking accuracy, and steering smoothness, demonstrating that policy-guided parameter tuning can reliably improve classical geometry-based control.

Index Terms: Autonomous racing; Path tracking; Pure Pursuit; Reinforcement learning; Proximal Policy Optimization (PPO); Dynamic lookahead; Steering gain; Sim-to-real transfer; ROS 2; F1TENTH

I. INTRODUCTION

Intelligent and connected vehicles must track reference paths accurately across widely varying speeds and curvatures while preserving stability and comfort.
Geometric controllers remain attractive in this setting for their transparency and low computational cost, with Pure Pursuit (PP) [1] directing the vehicle toward a lookahead point and converting geometry into a steering command suitable for real-time use. A longstanding limitation of PP is its sensitivity to important parameters, most notably the lookahead distance and the effective steering gain. Small lookahead values yield agile cornering but can excite oscillations on straights; large values smooth the motion yet understeer in tight bends. Likewise, an overly aggressive steering gain can induce noisy steering, whereas a conservative gain slows convergence. Rule-based schedules that scale lookahead (and sometimes gain) with velocity or curvature partially address these effects, but their fixed functional forms and tuned coefficients often fail to transfer across tracks, speed profiles, and platforms. Model-predictive control (MPC) can achieve strong performance, but typically requires more modeling effort and online optimization. Instead of replacing PP, we keep its geometric steering law and learn to tune a small set of parameters, improving performance while preserving simplicity and interpretability. We propose a data-driven alternative in which a reinforcement learning (RL) policy jointly sequences the lookahead distance $L_d$ and a steering gain $g$ online. We formulate parameter sequencing as a continuous control problem and train with Proximal Policy Optimization (PPO) [2] to map compact state features (vehicle speed and curvature taps at near/mid/far horizons) into a 2-D action $(L_d, g)$.

The authors are with the Lane Department of Computer Science and Electrical Engineering, West Virginia University, Morgantown, WV, USA (mae00018@mix.wvu.edu, amr.elwakeel@mail.wvu.edu).
The policy is trained in the F1TENTH Gym [3] using a minimum-curvature raceline [4] to supply waypoints and curvature; optimization is stabilized through KL-constrained PPO updates, observation/return normalization, gradient clipping, and a scheduled learning rate chosen via Optuna [5]. In simulation, we increase task difficulty by uniformly scaling the raceline speed profile so that the policy must learn a curvature- and speed-aware schedule rather than overfitting to a single fixed speed profile. The learned policy is integrated into a ROS 2 control stack that preserves PP's geometric steering law and exposes two lightweight interfaces (topics) for $(L_d, g)$. A first-order smoother mitigates sudden action changes, and a safety "teacher" (linear $v \mapsto L_d$ and $v \mapsto g$) serves as a fallback if RL commands become stale.

To focus on the effect of learned parameter tuning while preserving PP's interpretability and runtime efficiency, we evaluate against multiple PP baselines, including fixed-lookahead and velocity-scheduled variants, as well as an MPC raceline tracker. We evaluate in simulation (zero-shot across unseen tracks) and on the physical F1TENTH platform at speeds limited by safety and track size, and we monitor PPO diagnostics (approximate KL, clip fraction, action standard deviation, and value loss, as illustrated in Figs. 2 and 3) to verify stable optimization consistent with the chosen learning-rate settings. The main contributions of this paper are:

• An RL–Pure Pursuit framework that adapts the lookahead distance $L_d$ and steering gain $g$ online while preserving PP's simplicity and interpretability.
• A practical training recipe (reward design, hyperparameter tuning, and stability techniques) that learns a curvature–speed-aware $(L_d, g)$ policy with smooth, accurate tracking.
• A simulation and real-car evaluation in a ROS 2 F1TENTH stack, including ablations and comparisons to fixed PP, velocity-scheduled PP, and a kinematic MPC tracker, showing improved lap time, lateral error, and steering smoothness.
• An interpretability study that visualizes how $L_d$ and $g$ vary with track geometry and speed along the raceline.

This work shows how deep reinforcement learning can complement geometric control to improve adaptability and robustness while preserving interpretable, real-time path tracking.

II. RELATED WORK

Path tracking is a core problem in autonomous driving, addressed by classical methods [6] and learning-based approaches [7]. Among geometric controllers, Pure Pursuit (PP) [1] and Stanley [8] remain popular due to their simplicity and real-time performance, including in racing settings [9]. A central limitation of PP is its sensitivity to the lookahead distance, which trades responsiveness against smoothness. Consequently, many works propose adaptive lookahead schemes based on speed and/or curvature [1], [10], but these still rely on hand-designed functional forms and tuned coefficients.

Model predictive control (MPC) optimizes over a finite horizon with dynamics and constraints and is widely used as a strong baseline in autonomous racing. While MPC can achieve precise tracking and constraint handling, it typically requires accurate modeling, online optimization, and careful tuning of costs and constraints, which may be challenging on resource-limited platforms.

Reinforcement learning (RL) provides a data-driven alternative for context-dependent control [11], with methods such as DQN [12], DDPG [13], and PPO [2] applied across robotics and autonomous driving. End-to-end policies [14] can be effective but often face interpretability and transfer challenges [15]–[17].
To balance adaptability with structure, hybrid approaches use RL to tune parameters of classical controllers rather than replace them outright [18]–[20]. However, most prior work focuses on throttle or full-actuation policies, with less emphasis on learning to tune the core geometric path-following parameters in PP. While RL-driven lookahead adaptation has been explored in other domains (e.g., heavy trucks) [21], we explicitly benchmark against an adaptive PP baseline (a velocity-linear Pure Pursuit schedule) and include an MPC raceline tracker as a stronger model-based reference in both simulation and hardware experiments. Finally, we use minimum-curvature racelines from TUM's global_racetrajectory_optimization [4] as the shared reference for all controllers.

Prior adaptive PP methods adjust $L_d$ with hand-designed rules (speed, curvature, or preview), while RL-based tuning has been used to adapt gains of classical controllers or to learn end-to-end tracking. Our contribution differs in that we (i) keep the PP steering law fixed and interpretable, (ii) learn a joint continuous schedule for both $L_d$ and a gain $g$ using compact curvature-preview features, and (iii) evaluate zero-shot generalization to unseen tracks and deploy within a ROS 2 stack on a real F1TENTH vehicle.

[Fig. 1 diagram. Data/Maps: Hockenheim_map; minimum-curvature raceline with speed profile (+30% in sim). Learning (PPO): environment (ROS 2/Gym); Obs: [v, κ₀, κ₁, κ₂, Δκ]; VecNormalize (obs & rewards); PPO policy π_θ; outputs (L_d, g). Control/Deployment: ROS topics /lookahead_distance and /steering_gain; Pure Pursuit γ = g · 2y / L_d²; speed: raceline profile; simulation & real F1TENTH.]

Fig. 1. Pipeline: mapping and minimum-curvature raceline feed the simulator and a baseline PP controller. A PPO agent learns $(L_d, g)$ and integrates with PP via ROS topics.
We validate in simulation and deploy on a real F1TENTH car.

III. FRAMEWORK AND METHODOLOGY

This section details the full stack used in our study. We estimate pose with a LiDAR-based Monte Carlo localization (MCL) filter, generate a global reference using minimum-curvature optimization [4], and couple a classical Pure Pursuit (PP) tracker with a learned policy that selects the lookahead distance and the steering gain online. We then specify the observation and action spaces, the reward, PPO training, and how these components integrate in simulation and on the real F1TENTH platform [3] for fair comparison against multiple PP baselines (fixed-lookahead and velocity-scheduled variants) and an MPC raceline tracker.

From a controller-design perspective, our goal is to investigate how far a lightweight geometric Pure Pursuit approach can be pushed by exposing only a small set of parameters to learning. We therefore build our stack around PP and evaluate against standard tracking baselines under a common reference trajectory and localization stack.

A. Localization and Global Reference

We estimate pose using a standard LiDAR-based Monte Carlo localization (MCL) particle filter [22] over a prebuilt occupancy grid. Particles are propagated with a kinematic motion model, reweighted by a beam-based scan likelihood using grid ray-casting, and resampled when the effective sample size drops; the state estimate is taken as the weighted mean (circular mean for heading). For the global reference, we use TUM's global_racetrajectory_optimization [4] to compute a smooth minimum-curvature raceline within track boundaries. The optimizer returns a closed-loop trajectory with associated curvature $\kappa(s)$ and a friction-limited speed profile $v_{\max}(s)$, which we export as waypoints $\{x(s), y(s), \kappa(s), v_{\max}(s)\}$.
This globally smooth geometry provides both the tracking reference and the curvature features used by the controller and the RL policy.

B. System Overview and Experimental Setup

Fig. 1 summarizes the components. Using the F1TENTH Hockenheim map, we load a raceline with curvature and a reference speed profile. The raceline drives both the environment and a baseline PP controller. Rollouts train a PPO agent that outputs $(L_d, g)$ at each step; these are lightly smoothed and published on ROS topics, and PP then computes steering and speed commands.

C. Observation and Action Spaces

At time $t$ the agent observes

$$ s_t = \begin{bmatrix} v_t & \kappa_{0,t} & \kappa_{1,t} & \kappa_{2,t} & \Delta\kappa_t \end{bmatrix}^\top, \tag{1} $$

where $v_t$ is speed, $\kappa_{0,1,2}$ are absolute curvatures at near/mid/far horizon taps along the raceline, and $\Delta\kappa_t = \kappa_{1,t} - \kappa_{0,t}$. The action is a 2-D continuous vector,

$$ a_t = \begin{bmatrix} L_{t+1} \\ g_{t+1} \end{bmatrix}, \qquad L_{t+1} \in [0.35,\, 4.0]\ \text{m}, \qquad g_{t+1} \in [0.45,\, 1.15], \tag{2} $$

followed by a per-component first-order exponential smoother before publishing:

$$ \tilde L_{t+1} = \beta_L L_{t+1} + (1 - \beta_L)\,\tilde L_t, \qquad \tilde g_{t+1} = \beta_g g_{t+1} + (1 - \beta_g)\,\tilde g_t, \tag{3} $$

with $\beta_L = \beta_g = 0.2$. Conceptually, the policy implements a mapping $s_t \mapsto (L_{t+1}, g_{t+1})$ that uses the current speed and previewed curvature to adjust the PP lookahead distance and steering gain before they are smoothed and applied.

Curvature preview taps. Let the raceline be discretized into $N$ waypoints $\{p_i = (x_i, y_i)\}_{i=0}^{N-1}$ with associated curvature samples $\{\kappa_i\}_{i=0}^{N-1}$. At time $t$, we find the nearest waypoint index $i^\star_t = \arg\min_i \lVert p_t - p_i \rVert_2$, where $p_t$ is the current vehicle position. We then form three fixed preview taps using waypoint-index offsets $k \in \{0, 5, 12\}$ (with wrap-around modulo $N$): $I_t(k) = (i^\star_t + k) \bmod N$. The curvature features are the absolute curvatures at these taps, $\kappa_{j,t} = \lvert \kappa_{I_t(k_j)} \rvert$ for $k_0 = 0$, $k_1 = 5$, $k_2 = 12$, and $\Delta\kappa_t = \kappa_{1,t} - \kappa_{0,t}$.
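The tap construction, the action bounds of Eq. (2), and the smoother of Eq. (3) can be sketched with NumPy as follows. This is an illustrative sketch, not the authors' implementation: it assumes the raceline is stored as an (N, 2) waypoint array with a matching curvature array, and `preview_observation`, `clip_action`, and `ExponentialSmoother` are hypothetical names.

```python
import numpy as np

# Hypothetical helper names; values taken from Eqs. (1)-(3) and the tap offsets.
TAP_OFFSETS = (0, 5, 12)      # waypoint-index offsets k_0, k_1, k_2
L_BOUNDS = (0.35, 4.0)        # lookahead bounds [m], Eq. (2)
G_BOUNDS = (0.45, 1.15)       # steering-gain bounds, Eq. (2)
BETA = 0.2                    # beta_L = beta_g, Eq. (3)


def preview_observation(position, speed, waypoints, curvature):
    """Build s_t = [v, kappa_0, kappa_1, kappa_2, delta_kappa] from index taps."""
    dists = np.linalg.norm(waypoints - position, axis=1)
    i_star = int(np.argmin(dists))                    # nearest waypoint index
    n = len(waypoints)
    taps = [abs(curvature[(i_star + k) % n]) for k in TAP_OFFSETS]  # wrap modulo N
    delta_kappa = taps[1] - taps[0]
    return np.array([speed, *taps, delta_kappa], dtype=np.float64)


def clip_action(action):
    """Clip a raw policy output (L, g) to the bounds of Eq. (2)."""
    L, g = action
    return np.array([np.clip(L, *L_BOUNDS), np.clip(g, *G_BOUNDS)])


class ExponentialSmoother:
    """Per-component first-order filter: y <- beta * x + (1 - beta) * y."""

    def __init__(self, initial, beta=BETA):
        self.state = np.asarray(initial, dtype=np.float64)
        self.beta = beta

    def update(self, action):
        x = np.asarray(action, dtype=np.float64)
        self.state = self.beta * x + (1.0 - self.beta) * self.state
        return self.state
```

With $\beta = 0.2$, each new action moves the published value only a fifth of the way toward the policy output, which is what damps step changes in $(L_d, g)$.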
With our waypoint spacing, offsets $k = 5$ to $k = 12$ correspond to approximately 1 to 4 m of lookahead.

D. Reward Design

The reward balances speed, agreement with teacher targets, smoothness, curvature exposure, straight-line behavior, progress, and safety:

$$
\begin{aligned}
R_t ={}& w_v v_t - w_L \lvert \tilde L_t - L^\ast_t \rvert - w_G \lvert \tilde g_t - g^\ast_t \rvert - w_{jL} \lvert \tilde L_t - \tilde L_{t-1} \rvert - w_{jG} \lvert \tilde g_t - \tilde g_{t-1} \rvert \\
&- w_k \lvert \kappa_t \rvert - w_\times \tilde L_t \kappa^{\max}_t + w_{\mathrm{pre}}\, \mathbb{I}_{\mathrm{bend}}(\kappa^{\max}_t)\, \mathbb{I}\big[\tilde L_t \le \ell(v_t)\big] \\
&- w_c\, \mathbb{I}_{\mathrm{collision}} - w_s\, \mathbb{I}_{\mathrm{slow}} + w_p\, \Delta p_t,
\end{aligned} \tag{4}
$$

where $\kappa^{\max}_t = \max(\kappa_{0,t}, \kappa_{1,t}, \kappa_{2,t})$, $\kappa_t$ is a smoothed local curvature, and $\Delta p_t$ counts newly passed waypoints. The teacher targets are

$$ L^\ast_t = \operatorname{clip}\big(0.50 + 0.28\, v_t - 3.5\, \kappa^{\max}_t,\ 0.35,\ 4.0\big), \qquad g^\ast_t = \operatorname{clip}\big(m v_t + b,\ 0.45,\ 1.15\big), \tag{5} $$

with $m = (g_{\min} - g_{\max})/(v_{\max} - v_{\min})$ and $b = g_{\max} - m v_{\min}$ (we use $v_{\min} = 3$, $v_{\max} = 18$, $g_{\max} = 0.9$, $g_{\min} = 0.65$). We give a small bonus ($w_{\mathrm{pre}}$) for shortening the lookahead before pronounced bends, and gently discourage overly large $g$ on straights. A collision is detected when the minimum LiDAR range falls below 0.2 m; we also penalize low speed and stalling. Rewards are clipped to $R_t \in [-30, 100]$ for stability.

Reward weights. Table I lists the weight values used for all PPO training runs.

TABLE I
Reward weights used for PPO training.

| Term | Weight |
|---|---|
| $w_v$ | 1.8 |
| $w_L$ | 3.0 |
| $w_G$ | 0 |
| $w_{jL}$ | 0.4 |
| $w_{jG}$ | 0 |
| $w_k$ | 1.5 |
| $w_\times$ | 2.0 |
| $w_{\mathrm{pre}}$ | 1.5 |
| $w_c$ | 10.0 |
| $w_s$ | 0.5 |
| $w_p$ | 1.0 |

E. Pure Pursuit Control

Given the target point at distance $L_d$ along the raceline, transformed to the vehicle frame as $(x', y')$, PP computes

$$ \gamma = g \cdot \frac{2 y'}{L_d^2}, \qquad \gamma \in [-0.35,\, 0.35], \tag{6} $$

and publishes the steering command (we use $\gamma$ directly as the steering-angle command in our setup). The speed command follows the raceline's reference profile (capped on the real car for safety). If RL commands are stale beyond a short timeout, PP reverts to a linear teacher for $(L_d, g)$ until fresh actions resume.
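The steering law of Eq. (6) and the stale-command fallback can be sketched as below. This is a minimal illustration under stated assumptions, not the deployed node: the target-point rule (first waypoint ahead of the nearest one at Euclidean distance of at least $L_d$), the 0.2 s timeout, and all function names are hypothetical, and we assume the fallback reuses Eq. (5) with $\kappa^{\max} = 0$, which makes both targets linear in speed as the "linear $v \mapsto L_d$, $v \mapsto g$" description suggests.

```python
import math

import numpy as np

GAMMA_LIMIT = 0.35  # steering clip of Eq. (6), used directly as the steering angle


def teacher_targets(v, kappa_max=0.0, v_min=3.0, v_max=18.0, g_max=0.9, g_min=0.65):
    """Teacher targets of Eq. (5); with kappa_max = 0 both are linear in speed."""
    L_star = float(np.clip(0.50 + 0.28 * v - 3.5 * kappa_max, 0.35, 4.0))
    m = (g_min - g_max) / (v_max - v_min)
    b = g_max - m * v_min
    g_star = float(np.clip(m * v + b, 0.45, 1.15))
    return L_star, g_star


def target_point(pose, waypoints, L_d):
    """Hypothetical rule: first waypoint ahead of the nearest one whose
    Euclidean distance from the vehicle is at least L_d."""
    x, y, _ = pose
    d = np.hypot(waypoints[:, 0] - x, waypoints[:, 1] - y)
    i0 = int(np.argmin(d))
    n = len(waypoints)
    for k in range(n):
        j = (i0 + k) % n
        if d[j] >= L_d:
            return waypoints[j]
    return waypoints[(i0 + 1) % n]  # no waypoint far enough: take the next one


def pure_pursuit_steer(pose, target, L_d, g):
    """Eq. (6): rotate the target into the vehicle frame, then gamma = g * 2 y' / L_d^2."""
    x, y, theta = pose
    dx, dy = target[0] - x, target[1] - y
    y_local = -math.sin(theta) * dx + math.cos(theta) * dy  # lateral offset y'
    gamma = g * 2.0 * y_local / L_d**2
    return float(np.clip(gamma, -GAMMA_LIMIT, GAMMA_LIMIT))


def select_parameters(rl_action, rl_stamp, now, speed, timeout=0.2):
    """Use the RL (L_d, g) if it is fresh; otherwise fall back to the teacher."""
    if rl_action is not None and (now - rl_stamp) <= timeout:
        return rl_action
    return teacher_targets(speed)
```

For example, a vehicle at the origin heading along $+x$ with a target at $(2.0, 0.5)$, $L_d = 2$ m, and $g = 1$ gets $\gamma = 2 \cdot 0.5 / 4 = 0.25$ rad; doubling $g$ would request 0.5 rad, which the clip limits to 0.35 rad.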
Here $L_d$ controls the lookahead distance and therefore the trade-off between responsiveness and smoothness, while $g$ scales the curvature implied by the lookahead geometry and sets how aggressively the vehicle turns toward the target point.

Why $L_d$ and $g$ are non-redundant. $L_d$ determines the target point (preview geometry), while $g$ scales the resulting curvature command after the target is fixed. Hence $g$ acts as a pure multiplier, whereas $L_d$ also moves the target point:

$$ \frac{\partial \gamma}{\partial g} = \frac{2 y'}{L_d^2}, \qquad \frac{d\gamma}{dL_d} = -\frac{4 g y'}{L_d^3} + \frac{2 g}{L_d^2}\,\frac{dy'}{dL_d}, $$

where the first term of $d\gamma/dL_d$ is explicit and the second is implicit, arising because the target point $(x', y')$ depends on $L_d$. Ablations confirm the benefit of jointly adapting $(L_d, g)$.

F. Vehicle Dynamics Model

We model the platform with the standard kinematic bicycle dynamics:

$$ \dot x = v \cos\theta, \qquad \dot y = v \sin\theta, \tag{7} $$

$$ \dot\theta = \frac{v}{L}\tan\delta, \qquad \dot v = a, \tag{8} $$

where $(x, y)$ denotes the position, $\theta$ the heading, $v$ the longitudinal speed, $a$ the commanded acceleration, $\delta$ the steering input, and $L$ the wheelbase. Under small-slip conditions, this simplified nonholonomic model provides an accurate description for our high-rate path-tracking tasks in simulation and is sufficiently faithful for transfer to the real F1TENTH platform.

G. Proximal Policy Optimization (PPO)

We train the stochastic policy $\pi_\theta(a_t \mid s_t)$, which outputs the 2-D action $(L_{t+1}, g_{t+1})$, using Proximal Policy Optimization (PPO) from Stable-Baselines3 [23]. PPO is a first-order policy-gradient method that constrains each update via a clipped surrogate objective, which helps prevent large, destabilizing policy shifts between iterations. Our training setup uses on-policy rollouts of length $n_{\text{steps}} = 4096$ with a minibatch size of 256 and $n_{\text{epochs}} = 5$ optimization passes per update. We adopt a discount factor $\gamma = 0.99$ (not to be confused with the steering command $\gamma$ of Eq. (6)) and Generalized Advantage Estimation with $\lambda = 0.98$. The probability ratio is clipped with $\epsilon = 0.2$, and we monitor a target KL of 0.015 to guard against overly aggressive updates. We include an entropy bonus (coefficient 0.02) to encourage exploration and weight the value-function loss with 0.6. Gradients are clipped at a global norm of 0.7. The default learning rate follows a linear decay $\ell(f) = \ell_0 f$ with $\ell_0 = 2.4 \times 10^{-4}$. We also evaluate a cosine schedule (enabled via --lr-schedule cosine), implemented as

$$ \ell_{\cos}(f) = \frac{\ell_0}{2}\Big(1 + \cos\big(\pi (1 - f)\big)\Big), $$

where $f$ is the remaining training progress provided by SB3, decreasing from 1 at the start of training to 0 at the end. Observations and returns are normalized online with VecNormalize for stability. PPO maximizes the clipped surrogate

$$ L^{\text{clip}}(\theta) = \mathbb{E}_t\Big[\min\big(r_t(\theta)\,\hat A_t,\ \operatorname{clip}(r_t(\theta), 1 - \epsilon, 1 + \epsilon)\,\hat A_t\big)\Big], \tag{9} $$

where $r_t(\theta) = \pi_\theta(a_t \mid s_t) / \pi_{\theta_{\text{old}}}(a_t \mid s_t)$ and $\hat A_t$ denotes the advantage. The full loss, which is minimized, combines policy, value, and entropy terms:

$$ L^{\text{ppo}}(\theta, \phi) = -L^{\text{clip}}(\theta) + c_v\, \mathbb{E}_t\big[(V_\phi(s_t) - \hat R_t)^2\big] - c_s\, \mathbb{E}_t\big[\mathcal{H}(\pi_\theta(\cdot \mid s_t))\big], \tag{10} $$

with $c_v = 0.6$ and $c_s = 0.02$ in our implementation. During training we evaluate the policy periodically on a separate evaluation environment that shares (but does not update) the normalization statistics, and we save both best-model checkpoints and intermediate snapshots.

H. Implementation and Training

Our pipeline is implemented in ROS 2 using rclpy and trained with Stable-Baselines3 PPO. The policy outputs a two-dimensional action $(L_{t+1}, g_{t+1})$ at each control step; both components are lightly filtered and published on ROS topics consumed by the Pure Pursuit (PP) controller. PP then computes steering directly from the geometric relation $\gamma = g \cdot 2y'/L_d^2$ of Eq. (6) and applies the raceline speed profile (safely capped on hardware). If RL messages become stale, PP falls back to a linear "teacher" rule for $(L_d, g)$ until fresh actions arrive.

Runs comprise up to 1.2M environment steps. We log to TensorBoard and evaluate every 5,000 steps on a separate evaluation environment that shares (but does not update) the normalization statistics. The best-performing snapshot is saved automatically, and additional checkpoints are written every 25,000 steps to enable recovery and ablation comparisons. As training proceeds (Figs. 2 and 3), the approximate KL and clip fraction remain small after an initial spike and trend downward later in training, the policy's action standard deviation increases gradually, and the critic's value loss decreases and stabilizes, together indicating conservative updates with a stabilizing critic.

[Fig. 2 panels: (a) Approx. KL (target 0.015); (b) Clip fraction; both plotted against environment steps from 0 to 1.2M.]

Fig. 2. PPO diagnostics (1/2): update stability metrics. Approximate KL and clip fraction remain small after the initial transient, indicating conservative policy updates.

[Fig. 3 panels: (a) Policy action std; (b) Value loss (critic MSE); both plotted against environment steps from 0 to 1.2M.]

Fig. 3. PPO diagnostics (2/2): exploration and critic learning. The policy action standard deviation decreases as exploration reduces, while the critic loss stabilizes as value estimates improve.

The full loop executes entirely in simulation (F1TENTH Gym + ROS 2), and we repeat runs to check consistency and generalization. The agent emits $(L_d, g)$, which are relayed to PP; PP issues steering and speed commands, and the environment returns the next state and reward. This closed-loop design allows the policy to learn curvature- and speed-aware adjustments for both lookahead and steering gain, and it transfers readily to the real vehicle via the same ROS interfaces.

All experiments were executed on a workstation-class desktop (Dell Precision 3660, Intel Core i9-13900, 32 GiB RAM) running Ubuntu 22.04.5 LTS (64-bit, X11).
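The PPO settings of Section III-G can be gathered into keyword arguments for Stable-Baselines3's `PPO` class, with the two learning-rate schedules written as functions of SB3's remaining-progress value $f$. This is a sketch under our own assumptions rather than the authors' training script: `ppo_kwargs`, `linear_schedule`, and `cosine_schedule` are hypothetical names, and the text does not say whether the target KL was passed to SB3 (which then stops an update early) or only monitored.

```python
import math

L0 = 2.4e-4  # initial learning rate l_0 (Section III-G)


def linear_schedule(progress_remaining: float) -> float:
    """l(f) = l_0 * f, where f goes from 1 (start) to 0 (end of training)."""
    return L0 * progress_remaining


def cosine_schedule(progress_remaining: float) -> float:
    """l_cos(f) = (l_0 / 2) * (1 + cos(pi * (1 - f)))."""
    return 0.5 * L0 * (1.0 + math.cos(math.pi * (1.0 - progress_remaining)))


def ppo_kwargs(schedule: str = "linear") -> dict:
    """Keyword arguments for stable_baselines3.PPO built from the values in the text."""
    lr = cosine_schedule if schedule == "cosine" else linear_schedule
    return dict(
        learning_rate=lr,    # SB3 calls a callable schedule with progress_remaining
        n_steps=4096,        # rollout length per update
        batch_size=256,      # minibatch size
        n_epochs=5,          # optimization passes per update
        gamma=0.99,          # discount factor
        gae_lambda=0.98,     # GAE lambda
        clip_range=0.2,      # PPO ratio clip epsilon
        target_kl=0.015,     # SB3 stops an update's epochs once approx. KL > 1.5 * target_kl
        ent_coef=0.02,       # entropy coefficient c_s
        vf_coef=0.6,         # value-loss coefficient c_v
        max_grad_norm=0.7,   # global gradient-norm clip
    )
```

With Stable-Baselines3 installed, the dictionary would be used roughly as `PPO("MlpPolicy", VecNormalize(venv, norm_obs=True, norm_reward=True), **ppo_kwargs("cosine"))`, where `venv` stands for a vectorized F1TENTH environment that is not reproduced here. Both schedules start at $\ell_0$ and reach zero at the end of training; the cosine variant holds the rate higher early and drops it faster late.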
We observed typical wall-clock training times on the order of a few hours for runs of ∼1.2M environment steps.

IV. EXPERIMENTAL RESULTS

We evaluate both in simulation and on a physical F1TENTH vehicle. Across all experiments, we compare five controllers: (i) Fixed PP, (ii) Adaptive PP (linear $v \mapsto L_d$), (iii) RL–PP ($L_d$ only), (iv) RL–PP (joint $(L_d, g)$), and (v) a kinematic MPC raceline tracker. All methods track the same minimum-curvature raceline and share the same localization and interfaces for fair comparison.

Fig. 4. Classical Pure Pursuit failure modes with fixed lookahead. (a) Fixed $L$ too small: weaving on a straight. (b) Fixed $L$ too large: corner cutting / wall impact.

A. Baseline: Kinematic MPC

Kinematic MPC raceline tracker (baseline). As a stronger model-based reference, we implement a kinematic MPC waypoint tracker that follows the same minimum-curvature raceline used by PP. The MPC state is $\mathbf{x} = [x, y, v, \psi]^\top$ and the control is $\mathbf{u} = [a, \delta]^\top$. We use a horizon of $T_K = 8$ with timestep $\Delta t = 0.1$ s (0.8 s lookahead). The horizon reference $\{\mathbf{x}^{\text{ref}}_t\}_{t=0}^{T_K}$ is generated by advancing along the raceline proportionally to the current speed. At each control step, we solve a QP that penalizes tracking error, control effort, and control-rate changes:

$$ \min_{\mathbf{x}_{0:T_K},\, \mathbf{u}_{0:T_K-1}} \ \sum_{t=0}^{T_K-1} \lVert \mathbf{x}_t - \mathbf{x}^{\text{ref}}_t \rVert_Q^2 + \lVert \mathbf{x}_{T_K} - \mathbf{x}^{\text{ref}}_{T_K} \rVert_{Q_f}^2 + \sum_{t=0}^{T_K-1} \lVert \mathbf{u}_t \rVert_R^2 + \sum_{t=0}^{T_K-2} \lVert \mathbf{u}_{t+1} - \mathbf{u}_t \rVert_{R_\Delta}^2, \tag{11} $$

with $Q = Q_f = \operatorname{diag}(13.5, 13.5, 5.5, 13.0)$ for $(x, y, v, \psi)$, $R = \operatorname{diag}(0.01, 5.0)$ for $(a, \delta)$, and $R_\Delta = \operatorname{diag}(0.01, 5.0)$. We impose actuator and rate limits consistent with our platform:

$$ \delta \in [-0.4189,\, 0.4189]\ \text{rad}, \qquad \lvert a \rvert \le 3.0\ \text{m/s}^2, \qquad \lvert \delta_{t+1} - \delta_t \rvert \le \dot\delta_{\max}\,\Delta t, \quad \dot\delta_{\max} = 180^\circ/\text{s}. \tag{12} $$

The QP is solved online using CVXPY with OSQP.
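Two pieces of the MPC baseline can be made concrete without a solver: the speed-proportional horizon reference and the objective of Eq. (11). The sketch below builds the reference, evaluates that objective for a candidate trajectory, and checks the limits of Eq. (12); it does not solve the QP, which in the paper is done with CVXPY and OSQP on a model whose linearization is not described here. It assumes a raceline stored as an (N, 4) array of [x, y, v, psi] with cumulative arc length `s`, picks the first waypoint at or beyond each target arc length without interpolation, and uses hypothetical function names.

```python
import numpy as np

TK, DT = 8, 0.1                        # horizon steps and timestep from the text
Q = np.diag([13.5, 13.5, 5.5, 13.0])   # state weights for (x, y, v, psi); Q_f = Q
R = np.diag([0.01, 5.0])               # input weights for (a, delta)
R_DELTA = np.diag([0.01, 5.0])         # input-rate weights


def horizon_reference(raceline, s, i0, speed, tk=TK, dt=DT):
    """Reference states x_ref_0..x_ref_TK, advancing speed * dt of arc length per step.
    raceline: (N, 4) array of [x, y, v, psi]; s: increasing cumulative arc length."""
    total = s[-1]
    out = []
    for t in range(tk + 1):
        target_s = (s[i0] + speed * dt * t) % total
        j = int(np.searchsorted(s, target_s))   # first waypoint with s[j] >= target_s
        out.append(raceline[min(j, len(s) - 1)])
    return np.array(out)


def mpc_cost(X, U, X_ref):
    """Objective of Eq. (11) for X: (TK+1, 4) states and U: (TK, 2) inputs.
    Heading error is not wrapped here; a practical tracker should wrap psi."""
    E = X - X_ref
    state_cost = sum(e @ Q @ e for e in E)             # includes terminal term (Q_f = Q)
    input_cost = sum(u @ R @ u for u in U)
    rate_cost = sum(du @ R_DELTA @ du for du in np.diff(U, axis=0))
    return float(state_cost + input_cost + rate_cost)


def within_limits(U, delta_max=0.4189, a_max=3.0, rate_max=np.deg2rad(180.0), dt=DT):
    """Check the actuator and steering-rate limits of Eq. (12) along the horizon."""
    a, delta = U[:, 0], U[:, 1]
    rate_ok = np.all(np.abs(np.diff(delta)) <= rate_max * dt + 1e-12)
    return bool(np.all(np.abs(delta) <= delta_max) and np.all(np.abs(a) <= a_max) and rate_ok)
```

For instance, a one-meter lateral error at the first horizon step costs 13.5, while holding $\delta = 0.1$ rad over all eight steps costs only $8 \cdot 5.0 \cdot 0.01 = 0.4$, which shows how strongly the chosen weights favor position tracking over steering effort.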
Fixed-lookahead failure modes. Classical Pure Pursuit (PP) with a fixed lookahead $L$ is highly sensitive to the track geometry and the chosen speed profile. If $L$ is set too small, the vehicle tends to oscillate on straights and cut inside through bends; if $L$ is set too large, straight-line tracking appears stable, but the car understeers and struggles in tighter corners, as shown in Fig. 4. In practice, deploying PP on a new track often requires retuning $L$, and performance can remain sensitive despite this effort. In our setting, these limitations motivate learning to adapt not only $L$ but also the steering gain $g$ online.

Challenges. Although our hardware experiments reach $v_{\max} = 6$ m/s, they still operate below the most aggressive dynamics explored in simulation (via speed-profile scaling). At higher speeds, tire slip and unmodeled nonlinearities become more prominent; we therefore use high-speed simulation stress tests to complement the real-car validation while maintaining safety on the physical track.

Setup and zero-shot evaluation. We train a PPO policy that outputs both $(L, g)$ entirely in simulation on the Hockenheim F1TENTH racetrack, then evaluate it without any fine-tuning on two unseen layouts, Montreal and Yas Marina, from the f1tenth_racetracks collection [24]. To increase task difficulty and encourage generalization, we train with the raceline speed profile uniformly scaled by +30%, which yields a maximum reference speed of 15.6 m/s and a minimum of 4.16 m/s.

Track selection. Training uses Hockenheim; Montreal and Yas Marina are held out for zero-shot tests (Fig. 5).

Safety-teacher activation rate. To confirm that results are driven by the learned policy rather than the linear fallback, we log at each control step whether the controller uses PPO outputs (RL mode) or the linear teacher (teacher mode); teacher mode triggers only if RL outputs are unavailable or stale beyond the timeout.
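The statistics reported for the fallback (share of steps in teacher mode, number of activation events, and longest continuous activation) can all be derived from a per-step mode log. A minimal sketch, assuming the log is a boolean array sampled at a fixed control period `dt` (the period is not stated in the text, so it is a parameter here); `teacher_stats` is a hypothetical helper.

```python
import numpy as np


def teacher_stats(teacher_mode, dt):
    """Summarize a per-step boolean log (True = teacher mode).
    Returns (teacher_steps, total_steps, rate_percent, n_events, max_duration_s)."""
    mode = np.asarray(teacher_mode, dtype=bool)
    total = mode.size
    steps = int(mode.sum())
    # An event starts wherever teacher mode switches on (including at step 0).
    previous = np.concatenate(([False], mode[:-1]))
    starts = np.flatnonzero(mode & ~previous)
    # Longest run of consecutive teacher-mode steps.
    longest, run = 0, 0
    for m in mode:
        run = run + 1 if m else 0
        longest = max(longest, run)
    rate = 100.0 * steps / total if total else 0.0
    return steps, total, rate, len(starts), longest * dt
```

Counting events separately from steps distinguishes one long dropout from many brief ones, which the step share alone cannot do.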
Across our evaluation runs, the teacher was never triggered on either track: Montreal 0/8261 steps (0.000%), 0 events; Yas Marina 0/11228 steps (0.000%), 0 events (maximum continuous teacher duration 0.000 s).

Qualitative robustness. Fig. 6 compares representative challenging regions on Montreal and Yas Marina. For each track, the full-track plot marks the area enlarged below, where we overlay the reference raceline and all five controllers. Across both tracks, the learned RL–PP policies follow the reference closely through corner entry, mid-corner, and exit while staying within the track boundaries; the joint $(L_d, g)$ variant is typically the closest match, while the $L_d$-only policy shows slightly larger offsets in the highest-curvature parts of the turn. In contrast, fixed-lookahead PP and the velocity-linear adaptive PP exhibit larger geometry-dependent deviations in the highlighted regions (e.g., earlier inside cutting or larger exit drift), consistent with their reliance on a single hand-designed scheduling rule. The kinematic MPC tracker follows the same reference and provides a model-based comparison; under our horizon and constraints it shows modest rounding and offset in the highlighted regions. Overall, these qualitative observations align with the lap-time results: adapting both lookahead and steering gain improves robustness relative to fixed-parameter and single-rule baselines.

B. Ablation Study: Benefit of Joint $(L_d, g)$ Tuning

A key design choice in our framework is to adapt both the Pure Pursuit lookahead distance $L_d$ and the steering gain $g$. To isolate the value of learning $g$ in addition to $L_d$, we compare two learned variants trained under the same PPO setup and observation space:

• RL–PP (joint): the policy outputs $(L_d, g)$.
• RL–PP ($L_d$ only): the policy outputs $L_d$ while $g$ is held fixed at a constant $g = g_0$.

Fig. 5. Simulator maps used in our study: (a) Hockenheim for training, (b) Montreal, and (c) Yas Marina for evaluation.

Fig. 6. Qualitative comparison in highlighted regions on two unseen tracks: (a) Montreal and (b) Yas Marina. For each track, the full-layout plot (top) indicates the zoom window shown below. The zoom overlays the reference raceline and all five controllers. The learned RL–PP policies follow the reference closely while remaining within the track boundaries, whereas Fixed PP and Adaptive PP (linear $v \mapsto L_d$) show larger deviations in these regions. The kinematic MPC tracker provides a model-based comparison under the same reference and constraints. Colors follow the legend.

Choice of $g_0$. We set $g_0$ to the best-performing fixed gain selected by validation in simulation (i.e., a short sweep over a small grid of constant $g$ values, evaluated under the same completion criterion used in our main results). This ensures the $L_d$-only ablation is not artificially weakened by a poor fixed gain. Both variants are trained on Hockenheim and evaluated zero-shot on Montreal and Yas Marina under the same protocol as the main results. The results are reported in Tables II and III (rows RL–PP (joint) and RL–PP ($L_d$ only)) and, for the real car, in Table IV. Overall, the joint policy achieves the best combination of lap time and stability, indicating that learning $g$ in addition to $L_d$ provides a measurable benefit beyond adaptive lookahead alone.

Policy interpretability. To understand what the learned policy encodes, we log $(L_d, g)$ along the raceline and correlate them with curvature and speed. Empirically, the policy reduces $L_d$ in high-curvature segments (tighter preview) and increases $L_d$ on straights. The gain $g$ varies more mildly, remaining within a narrow range while adjusting slightly with context. We provide plots of $(L_d, g)$ versus $\kappa^{\max}_t$ and $v_t$ in Fig. 8(a), and show the along-lap evolution of $(L_d, g)$ in Fig. 8(b).
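One simple way to quantify the trends described above is a Pearson correlation between the logged parameters and the curvature and speed signals at the same control steps. A minimal sketch, assuming the logs are equal-length 1-D arrays; `schedule_correlations` is a hypothetical helper, and the paper does not state which statistic (if any) it computed beyond the plots in Fig. 8.

```python
import numpy as np


def schedule_correlations(L_d, g, kappa_max, speed):
    """Pearson correlations of logged (L_d, g) with curvature and speed.
    Inputs are 1-D arrays sampled at the same control steps; a constant
    input has zero variance and yields nan."""
    L_d, g = np.asarray(L_d, float), np.asarray(g, float)
    kappa_max, speed = np.asarray(kappa_max, float), np.asarray(speed, float)

    def r(a, b):
        return float(np.corrcoef(a, b)[0, 1])

    return {
        "L_d~kappa_max": r(L_d, kappa_max),
        "L_d~speed": r(L_d, speed),
        "g~kappa_max": r(g, kappa_max),
        "g~speed": r(g, speed),
    }
```

Under the behavior described in the text, one would expect a negative `L_d~kappa_max` correlation (shorter preview in bends) and a weaker dependence for `g`, since the gain stays within a narrow range.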
T ABLE II M O N T R E A L F 1 T E N T H R AC E T R A C K : L A P TI M E S OV E R 1 0 C O N S E C U T I V E L A P S . F O R T H E 1 0 / 1 0 C O M P L E T I O N O B J E C T I V E , E AC H C O N T RO L L E R U S E S T H E B E S T S P E E D M U LT I P L I E R T H AT Y I E L D S 1 0 / 1 0 L A P S I N O U R S E T U P . Adaptive PP (linear) A P P L I E S A L I N E A R v 7→ L d RU L E W I T H B O U N D S L d ∈ [1 . 0 , 2 . 5] m . Controller Mean Std Min Max RL–PP (joint L d , g , +16% ) 32.85 0 . 24 32 . 18 32 . 88 RL–PP ( L d only , +13% ) 33 . 23 0 . 13 33 . 05 33 . 49 Adaptiv e PP (linear v 7→ L d , +10% ) 34 . 25 0 . 04 34 . 18 34 . 34 Fixed PP ( L d fixed, − 10% ) 41 . 35 0 . 09 41 . 24 41 . 57 MPC raceline tracker ( − 15% ) 48 . 06 0 . 08 47 . 96 48 . 25 Montr eal F1TENTH racetrac k: quantitative results (10 laps).: W e e valuate five controllers on Montreal over 10 consecutiv e laps. T o compare methods under a common com- pletion criterion, each controller uses the best speed-profile multiplier that achieves 10/10 completed laps in our setup: RL–PP (joint ( L d , g ) ) at +16% , RL–PP ( L d only) at +13% , Adaptiv e PP (linear v 7→ L d ) at +10% , Fixed PP at − 10% , and the MPC raceline tracker at − 15% . The resulting lap-time statistics are summarized in T able II. From T able II, RL–PP (joint ( L d , g ) ) achiev es a mean lap time of 32 . 85 ± 0 . 24 s over ten laps at the +16% profile (range 7 Fig. 7. Real-car tracking comparison using a shared raceline speed profile capped at v max = 6 m / s : overlaid trajectories for all fi ve controllers against the same reference raceline. Using the same capped profile emphasizes differ- ences in lateral tracking and stability while keeping longitudinal commands comparable across controllers. T ABLE III Y A S M A R I N A F 1 T E N T H RA C E T R AC K : L A P TI M E S OV E R 10 C O N S E C U T I V E L A P S . 
F O R T H E 1 0 / 1 0 C O M P L E T I O N O B J E C T I V E , E AC H C O N T RO L L E R U S E S T H E B E S T S P E E D M U LT I P L I E R T H AT Y I E L D S 1 0 / 1 0 L A P S I N O U R S E T U P . Adaptive PP (linear) A P P L I E S A L I N E A R v 7→ L d RU L E W I T H B O U N D S L d ∈ [1 . 0 , 2 . 5] m . Controller Mean Std Min Max RL–PP (joint L d , g , +17 . 2% ) 45.10 0 . 07 44 . 96 45 . 20 RL–PP ( L d only , +15% ) 46 . 04 0 . 10 45 . 93 46 . 21 Adaptiv e PP (linear v 7→ L d , [+15]%) 46 . 41 0 . 12 46 . 24 46 . 57 Fixed PP ( L d fixed, orig.) 52 . 97 0 . 21 52 . 79 53 . 44 MPC raceline tracker ([-10]%) 64 . 01 0 . 25 63 . 60 64 . 51 32 . 18 – 32 . 88 s). The RL–PP ( L d only) v ariant at +13% records 33 . 23 ± 0 . 13 s (range 33 . 05 – 33 . 49 s), and the Adaptiv e PP (linear v 7→ L d ) baseline at +10% achieves 34 . 25 ± 0 . 04 s (range 34 . 18 – 34 . 34 s). Fixed PP requires a reduced profile ( − 10% ) to complete all laps and yields 41 . 35 ± 0 . 09 s. The MPC raceline tracker attains 10/10 completion at − 15% with a mean time of 48 . 06 ± 0 . 08 s. Ov erall, the joint RL–PP variant provides the fastest times under our completion criterion, improving over the linear adaptive baseline while maintaining low lap-to-lap variability . Y as Marina F1TENTH racetr ack: quantitative results (10 laps).: W e apply the same protocol as in Montreal: each controller uses the best speed-profile multiplier that achieves 10/10 completed laps in our setup. On Y as Marina , RL– PP (joint ( L d , g ) ) runs at +17 . 2% , RL–PP ( L d only) at +15% , Adaptive PP (linear v 7→ L d ) at +15% , Fixed PP uses the original (non-accelerated) profile, and the MPC raceline tracker completes 10/10 laps at − 10% . The lap-time statistics (seconds) are reported in T able III. Across ten laps, the RL policy again leads, with a lower mean time and comparably small dispersion relativ e to the lin- ear dynamic baseline, and a substantial margin over fixed– L . (a) Schedule vs. 
[Fig. 8 panels: (a) Schedule vs. curvature/speed. (b) Along-lap evolution; axes: L_d [m] and g_max (norm.) versus waypoint index along lap.]
Fig. 8. Policy interpretability on Montreal. (a) Parameter schedule versus curvature and speed along the raceline. (b) Along-lap evolution of L_d and g plotted against waypoint index, with normalized κ_max (dashed) highlighting high-curvature segments.

Real-car validation (raceline speed profiles capped for safety). We evaluated the learned controller on a physical 1:10 racecar (F1TENTH class) [3] equipped with a VESC-based drivetrain, a 2D LiDAR (UST-10LX class), and onboard IMU/odometry, running Ubuntu 20.04 with ROS 2 Foxy. A SLAM-based map [25] was first generated for the test track; during experiments, the vehicle localized against this map using scan matching and an MCL particle filter [22]. A raceline for the test track was computed offline using a minimum-curvature optimizer [4] and converted into a reference speed profile. A lightweight ROS 2 wrapper supplies the policy with the same observation channels as in simulation (speed, curvature along the raceline, and heading error to the lookahead point) and returns the actions (L_d, g), preserving observation/action parity with the simulator and reducing sim-to-real drift caused by interface mismatch.

Lap-time evaluation (Table IV). All controllers track the same raceline speed profile, capped at v_max = 6 m/s for safety and a fair comparison. Table IV summarizes the resulting lap times: RL–PP (joint L_d, g) achieves the best mean performance (9.46 ± 0.23 s; min 9.09 s, max 9.82 s), followed by RL–PP (L_d only; 9.61 s) and Adaptive PP (linear v ↦ L_d; 9.72 s). Fixed PP is slightly slower (9.85 s), while the MPC raceline tracker is substantially slower (15.42 s) in our hardware setting.

TABLE IV
REAL-CAR LAP TIMES (s) USING A RACELINE SPEED PROFILE UNIFORMLY CAPPED AT v_max = 6 m/s.
Controller                             Mean    Std    Min     Max
RL–PP (joint L_d, g)                   9.46    0.23   9.09    9.82
RL–PP (L_d only)                       9.61    0.58   8.94    10.51
Adaptive PP (linear v ↦ L_d)           9.72    0.27   9.34    10.40
Fixed PP (L_d fixed)                   9.85    0.43   9.32    10.55
MPC raceline tracker                   15.42   0.47   14.48   16.17

Trajectory overlays (Fig. 7). To visualize tracking behavior under this conservative cap, we generate the overlays in Fig. 7 using the same capped raceline speed profile (v_max = 6 m/s) as in Table IV. All five controllers are overlaid against the reference raceline; the learned policies follow the reference smoothly with small lateral deviation.

V. CONCLUSION

We presented a hybrid Pure Pursuit (PP) controller in which a PPO policy jointly selects the lookahead L_d and steering gain g from curvature–speed features. The design preserves PP's geometric steering law and adds only lightweight interfaces (two ROS topics) with a linear fallback, yielding a deployable and interpretable module.

Across simulation tracks, the RL-tuned PP consistently improves lap time, path-tracking accuracy, and steering smoothness over the fixed-L baseline, the velocity-linear baseline, and the MPC raceline tracker (see Tables II and III). On hardware with a capped raceline speed profile, RL–PP (joint (L_d, g)) achieves the best mean lap time among the evaluated controllers (Table IV) and exhibits smooth tracking behavior in the qualitative zooms and trajectory overlays (Fig. 7).

Overall, adaptive selection of (L_d, g) reduces per-track retuning and transfers from simulation to the real car, though performance depends on a high-quality global reference raceline and localization and may degrade under extreme dynamics, motivating future work on robustness to imperfect references and highly dynamic scenarios.

REFERENCES

[1] A. L. Garrow, D. L. Peters, and T. A. Panchal, "Curvature sensitive modification of pure pursuit control," ASME Letters in Dynamic Systems and Control, vol. 4, no. 2, p. 021002, 2024.
[2] J. Schulman, F. Wolski, P. Dhariwal, A. Radford, and O. Klimov, "Proximal policy optimization algorithms," arXiv:1707.06347, 2017.
[3] M. O'Kelly, H. Zheng, D. Karthik, and R. Mangharam, "F1TENTH: An open-source evaluation environment for continuous control and reinforcement learning," in Proc. NeurIPS Competition and Demonstration Track, vol. 123, 2020, pp. 77–89.
[4] A. Heilmeier, A. Wischnewski, L. Hermansdorfer, J. Betz, M. Lienkamp, and B. Lohmann, "Minimum curvature trajectory planning and control for an autonomous race car," Vehicle System Dynamics, vol. 58, no. 10, pp. 1497–1527, 2020.
[5] T. Akiba, S. Sano, T. Yanase, T. Ohta, and M. Koyama, "Optuna: A next-generation hyperparameter optimization framework," in Proc. ACM SIGKDD, 2019, pp. 2623–2631.
[6] S. Rahman, X. Jiang, Y. Zhang, M. Wang, and C. Chen, "A survey on trajectory planning and path tracking for autonomous vehicles," Results in Engineering, vol. 23, p. 102439, 2025.
[7] Y. Beycimen and A. Duran, "Unmanned ground vehicles: A comprehensive review of perception, planning, and control," Journal of Field Robotics, 2023.
[8] A. AbdElmoniem and M. Osama, "A path-tracking algorithm using predictive Stanley lateral controller," International Journal of Advanced Robotic Systems, vol. 17, no. 6, pp. 1–14, 2020.
[9] R. Al Fata and N. Daher, "Adaptive pure pursuit with deviation model regulation for trajectory tracking in small-scale racecars," in Proc. Int. Conf. Control, Automation, and Instrumentation (IC2AI), 2025, pp. 1–6.
[10] F. Tarhini, R. Talj, and M. Doumiati, "Adaptive look-ahead distance based on an intelligent fuzzy decision for an autonomous vehicle," in Proc. IEEE Intelligent Vehicles Symp. (IV), 2023, pp. 1–8.
[11] J. B. Kimball, B. DeBoer, and K. Bubbar, "Adaptive control and reinforcement learning for vehicle suspension control: A review," Annual Reviews in Control, vol. 58, p. 100974, 2024.
[12] V. Mnih, A. P. Badia, M. Mirza et al., "Asynchronous methods for deep reinforcement learning," in Proc. Int. Conf. Machine Learning (ICML), 2016, pp. 1928–1937.
[13] T. P. Lillicrap, J. J. Hunt, A. Pritzel, N. Heess, T. Erez, Y. Tassa, D. Silver, and D. Wierstra, "Continuous control with deep reinforcement learning," arXiv:1509.02971, 2015.
[14] M. Ganesan, S. Kandhasamy, B. Chokkalingam, and L. Mihet-Popa, "A comprehensive review on deep learning-based motion planning and end-to-end learning for self-driving vehicle," IEEE Access, vol. 12, pp. 66031–66067, 2024.
[15] S. Milani, N. Topin, M. Veloso, and F. Fang, "Explainable reinforcement learning: A survey and comparative review," ACM Comput. Surv., vol. 56, no. 7, 2024.
[16] A. Wachi, W. Hashimoto, X. Shen, and K. Hashimoto, "A generalized formulation and algorithms for safe exploration in reinforcement learning," in Proc. Int. Joint Conf. Artificial Intelligence (IJCAI), 2024.
[17] X. Hu, S. Li, T. Huang, B. Tang, R. Huai, and L. Chen, "How simulation helps autonomous driving: A survey of sim2real, digital twins, and parallel intelligence," IEEE Trans. Intelligent Vehicles, vol. 9, no. 2, pp. 593–616, 2024.
[18] R. Muduli, D. Jena, and T. Moger, "Application of reinforcement learning-based adaptive PID controller for automatic generation control of multi-area power system," IEEE Trans. Automation Science and Engineering, vol. 22, pp. 1057–1068, 2025.
[19] T. Kobayashi, "Reward bonuses with gain scheduling inspired by iterative deepening search," Results in Control and Optimization, vol. 12, p. 100244, 2023.
[20] B. D. Evans, H. W. Jordaan, and H. A. Engelbrecht, "Comparing deep reinforcement learning architectures for autonomous racing," Machine Learning with Applications, vol. 14, p. 100496, 2023.
[21] Z. Han, P. Chen, B. Zhou, and G. Yu, "Hybrid path tracking control for autonomous trucks: Integrating pure pursuit and deep reinforcement learning with adaptive look-ahead mechanism," IEEE Trans. Intelligent Transportation Systems, vol. 26, no. 5, pp. 7098–7112, 2025.
[22] C. Walsh and S. Karaman, "CDDT: Fast approximate 2D ray casting for accelerated localization," arXiv:1705.01167, 2017.
[23] A. Raffin, A. Hill, A. Gleave, A. Kanervisto, M. Ernestus, and N. Dormann, "Stable-Baselines3: Reliable reinforcement learning implementations," J. Mach. Learn. Res., vol. 22, no. 268, pp. 1–8, 2021.
[24] "F1TENTH racetracks," GitHub repository, 2020, accessed 2025.
[25] W. Hess, D. Kohler, H. Rapp, and D. Andor, "Real-time loop closure in 2D LiDAR SLAM," in Proc. IEEE Int. Conf. Robotics and Automation (ICRA), 2016, pp. 1271–1278.
