Decision Support under Prediction-Induced Censoring

In many data-driven online decision systems, actions determine not only operational costs but also the data availability for future learning -- a phenomenon termed Prediction-Induced Censoring (PIC). This challenge is particularly acute in large-scal…

Authors: Yan Chen, Ruyi Huang, Cheng Liu

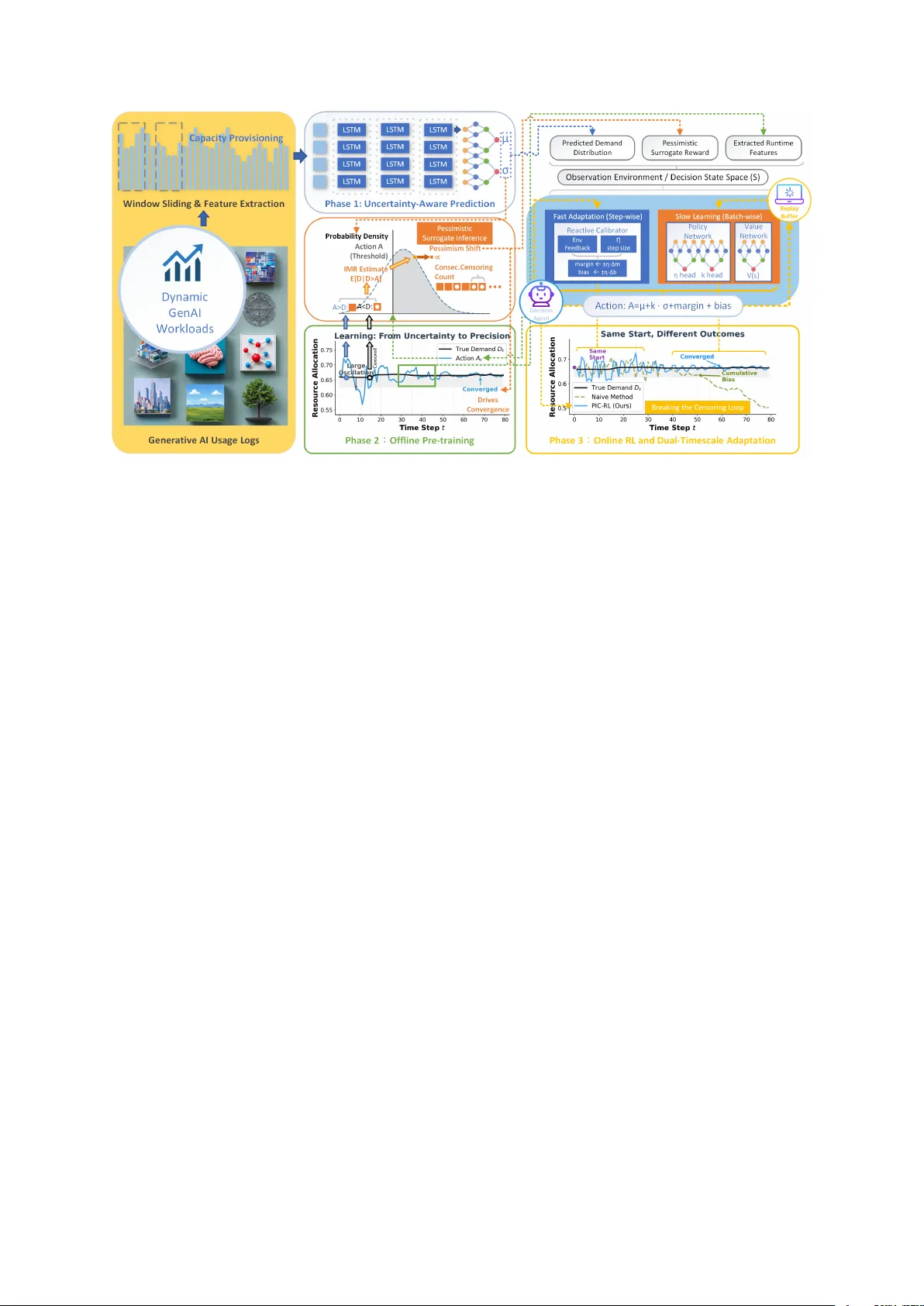

Yan Chen¹, Ruyi Huang¹, and Cheng Liu*¹
¹Department of Systems Engineering, City University of Hong Kong, Hong Kong SAR, China

Abstract

In many data-driven online decision systems, actions determine not only operational costs but also the data availability for future learning, a phenomenon termed Prediction-Induced Censoring (PIC). This challenge is particularly acute in large-scale resource allocation for generative AI (GenAI) serving: insufficient capacity triggers shortages but hides the true demand, leaving the system with only a “greater-than” constraint. Standard decision-making approaches that rely on uncensored data suffer from selection bias, often locking the system into a self-reinforcing low-provisioning trap. To break this loop, this paper proposes an adaptive approach named PIC-Reinforcement Learning (PIC-RL), a closed-loop framework that transforms censoring from a data quality problem into a decision signal. PIC-RL integrates (1) Uncertainty-Aware Demand Prediction to manage the information–cost trade-off, (2) Pessimistic Surrogate Inference to construct decision-aligned conservative feedback from shortage events, and (3) Dual-Timescale Adaptation to stabilize online learning against distribution drift. The analysis provides theoretical guarantees that the feedback design corrects the selection bias inherent in naive learning. Experiments on production Alibaba GenAI traces demonstrate that PIC-RL consistently outperforms state-of-the-art baselines, reducing service degradation by up to 50% while maintaining cost efficiency.

Keywords: Online Decision Support, Resource Management, Censored Information, Reinforcement Learning, Generative AI

1 Introduction

Large-scale cloud services typically rely on the pipeline “online demand forecasting → capacity provisioning” to balance utilization and service-level objectives (SLOs).
This tension is acute for GenAI and large language model (LLM) inference serving, where providers must meet stringent latency targets while controlling expensive GPU costs. Under volatile workloads, practitioners often over-provision to avoid risks [1], yet static allocations or black-box scheduling struggle to match heterogeneous resource demands [2, 3, 4]. Recent works explore pooling or peak-shaping to improve efficiency [5, 6], but they fundamentally rely on feedback loops that break under shortage. In provisioning, actions determine not only cost but also feedback observability: when resources are insufficient ($a_t < d_t$), operators observe only the capacity limit rather than the true demand [3]. This motivates a fundamental question: how should learning proceed when online actions shape the data being observed?

Figure 1: The PIC-RL Framework. A three-phase architecture transforming censoring from a missing-label problem into a supervision signal: (1) Uncertainty-Aware Prediction, (2) Offline Pre-training, and (3) Online RL and Dual-Timescale Online Adaptation.

*Corresponding author. Email: cliu647@cityu.edu.hk

This paper studies this problem through the lens of PIC. Unlike standard supervised learning, PIC creates a self-reinforcing loop: underestimation → censored feedback → biased learning → persistent failure [7]. The action-as-threshold mechanism introduces a unique information–cost coupling: acquiring informative labels requires paying higher resource costs [7]. While censored demand is well studied in inventory management [8, 9, 10] and econometrics [11, 12], these fields predominantly assume exogenous censoring driven by external constraints rather than by the learner's policy.
Consequently, classical base-stock policies [13, 14] or regression methods (e.g., Tobit [15]) [16, 17] fail to model the causal feedback loop in which the decision itself induces the data distribution shift. Under nonstationary workloads, the inability to actively manage the information–cost trade-off leads to slow adaptation or collapse [18, 19, 20].

To break this loop, PIC-RL is introduced (Figure 1), transforming censoring from a “missing label” problem into a supervision signal. The framework rests on two PIC-aligned innovations. First, Pessimistic Surrogate Inference (Phase 2, Figure 1, Middle) synthesizes conservative rewards from censored events to correct the selection bias inherent in uncensored-only learning (Propositions 1–2; see Section 3). Second, Dual-Timescale Adaptation (Phase 3, Figure 1, Right) couples a fast Reactive Calibrator for immediate volatility with a slow Policy Network for robust strategy refinement. Experiments on Alibaba GenAI traces show that PIC-RL prevents the passive service degradation observed in baselines [21], providing a foundation for learning under endogenous feedback constraints.

2 Problem Definition

Consider a discrete-time horizon $t = 1, 2, \ldots, T$. At each time step, there is a latent normalized demand $d_t \in [0, 1]$, and the system chooses an action $a_t \in [0, 1]$, interpreted as a provisioning level. The action affects cost immediately and, crucially in PIC, determines the feedback observability. Let $H_t$ denote the history available before acting at time $t$, and let $\pi$ be a policy that selects actions as $a_t = \pi(H_t)$.

The observation model follows the action-as-threshold structure. After choosing $a_t$, the system observes the truncated value $y_t = \min(d_t, a_t)$ together with a censoring indicator $c_t = \mathbb{1}[d_t > a_t]$.
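The action-as-threshold observation model can be simulated in a few lines. The sketch below is illustrative rather than the authors' code: the function names and the uniform demand process are assumptions, chosen only to show that averaging the uncensored observations at a fixed threshold underestimates mean demand, which is the selection bias described in the Introduction.

```python
import random
import statistics


def pic_feedback(d_t: float, a_t: float) -> tuple[float, int]:
    """Return the PIC observation (y_t, c_t) for latent demand d_t and action a_t."""
    y_t = min(d_t, a_t)           # truncated value that is actually logged
    c_t = 1 if d_t > a_t else 0   # censoring indicator 1[d_t > a_t]
    return y_t, c_t


def naive_uncensored_mean(demands: list[float], a_t: float) -> float:
    """Average demand over uncensored steps only (censored steps are skipped)."""
    kept = []
    for d_t in demands:
        y_t, c_t = pic_feedback(d_t, a_t)
        if c_t == 0:              # here y_t equals the true demand d_t
            kept.append(y_t)
    return statistics.mean(kept)


if __name__ == "__main__":
    print(pic_feedback(0.7, 0.5))  # (0.5, 1): censored, only the capacity a_t is seen
    print(pic_feedback(0.3, 0.5))  # (0.3, 0): uncensored, the true demand is seen

    # Hypothetical demand process: i.i.d. Uniform(0, 1), so E[d] = 0.5.
    rng = random.Random(0)
    demands = [rng.random() for _ in range(100_000)]
    # Close to 0.25 (the mean of Uniform(0, 0.5)), well below E[d] = 0.5.
    print(naive_uncensored_mean(demands, a_t=0.5))
```

Raising $a_t$ in this toy setting shrinks the gap between the naive mean and $\mathbb{E}[d]$, but only by provisioning more, which is the information–cost coupling PIC-RL is designed to manage.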
When $c_t = 0$, the system observes the ground truth $y_t = d_t$; when $c_t = 1$, it observes only $y_t = a_t$ and the inequality $d_t > a_t$. Thus, the online feedback is $(y_t, c_t)$, meaning that the availability of ground-truth labels is causally coupled to the decision itself.

Decision quality is evaluated using an asymmetric linear cost. For any $(a_t, d_t)$, the per-step cost is

$$\ell(a_t, d_t) = c_{\text{under}}\,(d_t - a_t)_+ + c_{\text{over}}\,(a_t - d_t)_+, \tag{1}$$

where $(x)_+ = \max(x, 0)$ and $c_{\text{under}} > c_{\text{over}}$ (typically $c_{\text{under}} = 2$, $c_{\text{over}} = 1$). The primary metric is the cumulative regret, defined here as the total cost incurred by $\pi$:

$$\mathrm{Regret}(\pi) \triangleq \sum_{t=1}^{T} \ell(a_t, d_t). \tag{2}$$

The Mean Absolute Error (MAE) is also reported to measure tracking accuracy.

Protocol: Offline-to-Online under Drift. Access to historical data is assumed for offline pretraining. However, the online demand process may undergo distribution shift (concept drift). The system must adapt online using only the partial PIC feedback $(y_t, c_t)$, without access to immediate ground truth when censored. This setting captures the core challenge: warm-starting from historical logs while adapting to nonstationary demand under action-dependent censoring.

3 Methodology

PIC-RL is a closed-loop framework designed to realign the chain “decision → observability → learning signal” under PIC. As illustrated in Figure 1, it operates in three progressive phases. Phase 1 (Uncertainty-Aware Prediction) leverages historical logs to train an uncertainty-aware demand predictor. Phase 2 (Offline Pre-training) tackles censoring by constructing a Pessimistic Surrogate Inference mechanism, converting censoring constraints into directionally correct supervision signals for policy initialization.
Finally, Phase 3 (Online Reinforcement Learning (RL) and Dual-Timescale Adaptation) deploys a dual-timescale mechanism: a Reactive Calibrator (fast loop) handles immediate feedback volatility, while a Slow Policy Network (outer loop) refines the strategy using replayed experiences.

3.1 Phase 1: Uncertainty-Aware Prediction

Standard point predictors are insufficient under PIC because they fail to quantify the risk of censoring. To enable risk-aware provisioning, a probabilistic predictor $f_\theta$ is trained to output a distribution over future demand, parameterized by a mean $\mu_t$ and a standard deviation $\sigma_t$. Implemented as an LSTM [22] with a Gaussian likelihood head, the model minimizes the negative log-likelihood (NLL) on historical data:

$$\mathcal{L}_{\text{pred}} = \frac{1}{N} \sum_{i=1}^{N} \left[ \log \sigma_i + \frac{1}{2} \left( \frac{d_i - \mu_i}{\sigma_i} \right)^2 \right]. \tag{3}$$

Crucially, the learned uncertainty $\sigma_t$ serves as a dynamic signal for the downstream policy: when $\sigma_t$ is high, the system can choose to pay a higher resource cost (via a safety buffer) to reduce censoring risk and acquire more informative feedback.

3.2 Phase 2: Offline Pretraining

Phase 2 pretrains a policy network using offline rollouts on the training data. The key innovation is a theoretically grounded surrogate reward for censored steps, which allows the policy to learn from censoring events rather than ignoring them.

3.2.1 Policy and Value Networks

The policy network $\pi_\phi$ takes a state vector $s_t$ as input and outputs two quantities:

$$(\eta_t, k_t) = \pi_\phi(s_t), \tag{4}$$

where:
• $\eta_t \in [0.5, 3.0]$ is a step-size multiplier that controls the speed of fast calibration updates in Phase 3.
• $k_t \in [0, 2.0]$ is the uncertainty utilization coefficient that determines how much of $\sigma_t$ to add as a safety buffer.

A value network $V_\psi(s_t)$ provides a baseline for variance reduction in policy gradient updates.
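As a rough illustration of Eqs. (3) and (4), the sketch below computes the Gaussian NLL on plain Python lists and maps two unbounded network outputs into the stated ranges $\eta_t \in [0.5, 3.0]$ and $k_t \in [0, 2.0]$. The sigmoid squashing is an assumption (the text does not say how the bounds are enforced), and the helper names are hypothetical. Like Eq. (3), the loss omits the constant $\frac{1}{2}\log 2\pi$, which does not affect optimization.

```python
import math


def gaussian_nll(d: list[float], mu: list[float], sigma: list[float]) -> float:
    """Eq. (3): mean of log(sigma_i) + 0.5 * ((d_i - mu_i) / sigma_i) ** 2."""
    if not (len(d) == len(mu) == len(sigma)) or not d:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for d_i, mu_i, s_i in zip(d, mu, sigma):
        if s_i <= 0.0:
            raise ValueError("sigma must be strictly positive")
        total += math.log(s_i) + 0.5 * ((d_i - mu_i) / s_i) ** 2
    return total / len(d)


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0.0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def policy_head(raw_eta: float, raw_k: float) -> tuple[float, float]:
    """Hypothetical bounded head for Eq. (4): eta_t in [0.5, 3.0], k_t in [0, 2.0]."""
    eta_t = 0.5 + (3.0 - 0.5) * sigmoid(raw_eta)  # step-size multiplier
    k_t = 2.0 * sigmoid(raw_k)                     # uncertainty utilization coefficient
    return eta_t, k_t


if __name__ == "__main__":
    print(gaussian_nll([0.5], [0.5], [1.0]))  # 0.0: exact mean, unit sigma
    print(policy_head(0.0, 0.0))              # (1.75, 1.0): midpoints of both ranges
```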
3.2.2 State Design

The RL agent operates on a rich state space $s_t$ constructed from extracted runtime features (Figure 1, top-right), providing the policy with sufficient information to make informed decisions under PIC. It includes three categories of features:
1. Calibration and feedback statistics: current margin, bias, recent censoring rate, consecutive censoring count, and consecutive over-provisioning count.
2. Sequence statistics: mean and standard deviation of recent observations, and progress through the episode.
3. Prediction and uncertainty estimates: $\mu_t$ and $\sigma_t$ from the predictor, plus outputs from a censored-demand estimator (estimated mean, estimated standard deviation, pessimism factor, and uncertainty measure).

This design ensures that the policy can “see” its own uncertainty and the current feedback regime, enabling it to adapt its behavior (e.g., become more conservative when information is scarce).

3.2.3 Learnable Feedback Under Censoring

A central challenge in Phase 2 is how to assign rewards when censoring occurs and the true demand $d_t$ is not observed. This is addressed through a theoretically motivated surrogate reward.

The Bias of Uncensored-Only Learning. A natural but flawed approach is to simply skip censored steps or impute the censored demand. The following result formalizes why this is problematic.

Proposition 1 (Instability under Mixture). Consider a naive learner that fits demand using a mixture of (i) historical uncensored observations and (ii) current censored observations under threshold action $A_t$, with mixture weight $\rho \in (0, 1]$ on the censored stream. Its effective target mean is

$$\mu_{\text{mix}}(A_t) = (1 - \rho)\,\mathbb{E}[D] + \rho\,\mathbb{E}[D \mid D \le A_t].$$

If censoring is active ($P(D > A_t) > 0$), then $\mu_{\text{mix}}(A_t) < \mathbb{E}[D]$.
Consequently, any update rule that tracks this target (e.g., setting the next base level to $\mu_{\text{mix}}$) exhibits a strictly negative drift even at the unbiased point $A_t = \mathbb{E}[D]$. In particular, this covers stochastic approximation updates of the form $A_{t+1} = A_t + \gamma_t\,(\mu_{\text{mix}}(A_t) - A_t)$ with $\gamma_t > 0$.

Figure 2: Verification of Proposition 1 (Instability). (a) Naive learning exhibits systematic negative bias. (b) Cumulative error confirms that the system inevitably drifts into a “censoring trap,” even with historical data replay.

Proof. Let the true demand expectation be $\mu^* = \mathbb{E}[D]$. The learner's target minimizes risk over the mixed distribution:

$$\mathbb{E}_{\text{train}}[D] = (1 - \rho)\,\mu^* + \rho\,\mathbb{E}[D \mid D \le A_t]. \tag{5}$$

By the law of total expectation, $\mu^* = \mathbb{E}[D \mid D \le A_t]\,P(D \le A_t) + \mathbb{E}[D \mid D > A_t]\,P(D > A_t)$. Since every value in the event $\{D > A_t\}$ exceeds every value in the observed set $\{D \le A_t\}$, it holds that $\mathbb{E}[D \mid D > A_t] > \mathbb{E}[D \mid D \le A_t]$. Because $\mu^*$ is a convex combination of these two conditional means with positive weight $P(D > A_t)$ on the larger one, $\mu^* > \mathbb{E}[D \mid D \le A_t]$. Let the bias gap be $\Delta(A_t) = \mu^* - \mathbb{E}[D \mid D \le A_t] > 0$. Substituting back:

$$\mathbb{E}_{\text{train}}[D] = (1 - \rho)\,\mu^* + \rho\,(\mu^* - \Delta(A_t)) = \mu^* - \rho\,\Delta(A_t). \tag{6}$$

Thus, $\mathbb{E}_{\text{train}}[D] < \mu^*$. If $A_t = \mu^*$, the new target is strictly lower, creating a negative drift force $-\rho\,\Delta(A_t)$.

Proposition 2 (Consistency and Escape of Surrogate Reward). Let the surrogate reward be $r(a) \propto -(\hat\mu + \hat\sigma\,\lambda(z) - a)\cdot\Psi(n)$ with a positive proportionality constant, where $z \triangleq (a - \hat\mu)/\hat\sigma$ and $\lambda(z) = \phi(z)/(1 - \Phi(z))$ is the inverse Mills ratio of the standard normal. Under the Gaussian assumption:
1. Gradient Consistency: $\partial r/\partial a > 0$ for all $a$. The gradient consistently incentivizes increasing the action when censored.
2. Pessimistic Escape: For a fixed action $a$, as the consecutive censoring count $n$ increases, the gradient magnitude $|\partial r/\partial a|$ scales with $\Psi(n)$, guaranteeing a growing force to escape persistent under-provisioning traps.

Proof. Let $G(a) = \hat\mu + \hat\sigma\,\lambda\!\left(\frac{a - \hat\mu}{\hat\sigma}\right) - a$.
The reward is $r(a) = -C \cdot G(a) \cdot \Psi$, where $C > 0$ is a constant. Differentiating $G(a)$ with respect to $a$:

$$\frac{\partial G}{\partial a} = \hat\sigma\,\lambda'(z)\,\frac{1}{\hat\sigma} - 1 = \lambda'(z) - 1. \tag{7}$$

A key property of the Inverse Mills Ratio is that $0 < \lambda'(z) < 1$ for all $z \in \mathbb{R}$ [23]. Therefore, $\partial G/\partial a \in (-1, 0)$, meaning the estimated gap strictly decreases as the action increases. Consequently, the reward gradient is

$$\frac{\partial r}{\partial a} = -C\,\Psi\,(\lambda'(z) - 1) = C\,\Psi\,(1 - \lambda'(z)) > 0. \tag{8}$$

Figure 3: Mechanisms of Proposition 2. (a) Strict monotonicity of the surrogate gap ensures gradient consistency ($\partial r/\partial a > 0$). (b) The pessimism factor $\Psi(n)$ amplifies reward signals super-linearly to enable escape from censoring traps.

This confirms Consistency: the policy always receives a positive reward gradient for raising actions. Furthermore, since $1 - \lambda'(z)$ is bounded away from zero locally, the gradient magnitude is proportional to $\Psi(n)$. As $n \to N_{\max}$, $\Psi(n)$ grows, providing the Escape property.

Surrogate Reward Construction (Pessimistic Surrogate Inference). For censored steps, the Pessimistic Surrogate Inference mechanism (Figure 1, Middle) uses the Inverse Mills Ratio (IMR) [24] to define the surrogate reward as

$$r^{\text{cens}}_t = -c_{\text{under}} \cdot \underbrace{\left(\hat\mu_t + \hat\sigma_t\,\lambda(\hat\alpha_t) - a_t\right)}_{\text{Expected Gap (IMR)}} \cdot \underbrace{\Psi(n_t)}_{\text{Pessimism}}, \tag{9}$$

where $\hat\alpha_t = (a_t - \hat\mu_t)/\hat\sigma_t$ is the standardized threshold (the $z$ of Proposition 2), and $\Psi(n_t) = 1 + \beta \cdot \min(n_t, N_{\max})$ grows with the duration $n_t$ of the information blackout, linearly in the number of consecutive censored steps until it saturates at $N_{\max}$. For uncensored steps, the true asymmetric cost is used:

$$r^{\text{uncens}}_t = -\ell(a_t, d_t) = -c_{\text{under}}\,(d_t - a_t)_+ - c_{\text{over}}\,(a_t - d_t)_+. \tag{10}$$

This surrogate reward allows the policy to receive informative gradients even when ground truth is hidden, addressing the core PIC challenge. Here $\Psi(n_t)$ represents the pessimism factor that creates a “break-out” gradient: if the system gets stuck in a censoring loop, the surging pessimism forces the policy to drastically raise actions, re-acquiring ground truth.
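The surrogate reward of Eqs. (9)–(10) and the claims of Propositions 1–2 can be checked numerically under a Gaussian demand model. The sketch below is an illustration under stated assumptions, not the authors' implementation: $\beta = 0.5$ and $N_{\max} = 10$ are placeholder values (the text does not fix them), $c_{\text{under}} = 2$ and $c_{\text{over}} = 1$ follow Section 2, and $\lambda(z) = \phi(z)/(1 - \Phi(z))$ is computed with `math.erfc` to stay accurate in the upper tail. `mixture_target` uses the standard lower-truncated normal mean $\mathbb{E}[D \mid D \le a] = \mu - \sigma\,\phi(\alpha)/\Phi(\alpha)$ with $\alpha = (a - \mu)/\sigma$.

```python
import math


def norm_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def norm_cdf(z: float) -> float:
    return 0.5 * math.erfc(-z / math.sqrt(2.0))


def norm_sf(z: float) -> float:
    """Upper tail 1 - Phi(z), computed directly to avoid cancellation."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))


def inverse_mills(z: float) -> float:
    """lambda(z) = phi(z) / (1 - Phi(z)), so E[D | D > a] = mu + sigma * lambda(z)."""
    return norm_pdf(z) / norm_sf(z)


def pessimism(n: int, beta: float = 0.5, n_max: int = 10) -> float:
    """Psi(n) = 1 + beta * min(n, N_max); beta and n_max are placeholder values."""
    return 1.0 + beta * min(n, n_max)


def censored_reward(a, mu_hat, sigma_hat, n, c_under=2.0):
    """Eq. (9): -c_under * (E[D | D > a] - a) * Psi(n) under a Gaussian model."""
    alpha = (a - mu_hat) / sigma_hat
    expected_gap = mu_hat + sigma_hat * inverse_mills(alpha) - a
    return -c_under * expected_gap * pessimism(n)


def uncensored_reward(a, d, c_under=2.0, c_over=1.0):
    """Eq. (10): negative asymmetric cost -l(a, d)."""
    return -(c_under * max(d - a, 0.0) + c_over * max(a - d, 0.0))


def mixture_target(a, mu, sigma, rho):
    """Prop. 1: (1 - rho) E[D] + rho E[D | D <= a] for D ~ N(mu, sigma^2)."""
    alpha = (a - mu) / sigma
    lower_mean = mu - sigma * norm_pdf(alpha) / norm_cdf(alpha)
    return (1.0 - rho) * mu + rho * lower_mean


if __name__ == "__main__":
    mu_hat, sigma_hat = 0.5, 0.1
    grid = [0.3 + 0.05 * i for i in range(9)]          # actions 0.30 ... 0.70
    rewards = [censored_reward(a, mu_hat, sigma_hat, n=0) for a in grid]
    # Prop. 2 (consistency): the censored reward rises with the action.
    print(all(r2 > r1 for r1, r2 in zip(rewards, rewards[1:])))  # True
    # Prop. 2 (escape): after 5 censored steps the signal is scaled by Psi(5).
    ratio = censored_reward(0.5, mu_hat, sigma_hat, n=5) / rewards[4]
    print(round(ratio, 6))                              # 3.5
    # Prop. 1: at the unbiased point a = E[D], the naive target drifts downward.
    print(mixture_target(0.5, 0.5, 0.1, rho=0.5))       # about 0.460 < 0.5
```

The censored reward is always negative (the expected gap is positive), so "consistency" here means it moves toward zero as the action rises, which is exactly the sign of the gradient in Eq. (8).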
3.2.4 Offline RL Training

Rollouts are performed on the training data to simulate the PIC observation mechanism: at each step, censoring is recorded and the observation sequence is updated accordingly (appending $d_t$ if uncensored, $a_t$ if censored). The policy and value networks are updated using an Actor–Critic algorithm [25] with the surrogate rewards defined above. The key benefit of Phase 2 is that the policy learns “how to behave under censoring” before facing real PIC feedback. This warm start is critical: without it, Phase 3 would begin with a randomly initialized policy that may get trapped in the self-reinforcing underestimation loop.

3.3 Phase 3: Online RL and Dual-Timescale Adaptation

In online deployment, the system must adapt to distribution drift while managing the immediate feedback loop. The provisioning action $a_t$ is formulated as a hierarchical composition of three components:

$$a_t = \underbrace{\mu_t}_{\text{Base}} + \underbrace{k_t(s_t)\cdot\sigma_t}_{\text{Risk Buffer}} + \underbrace{\Delta_t}_{\text{Reactive Correction}}. \tag{11}$$

Here, $(\mu_t, \sigma_t)$ come from the frozen predictor. The Risk Buffer is controlled by the RL policy output $k_t$, allowing the agent to dynamically trade cost for information based on state uncertainty. The Reactive Correction $\Delta_t = m_t + b_t$ is updated by the Reactive Calibrator (Figure 1, Right) at every step:

$$\begin{aligned} m_{t+1} &= m_t + \eta_t(s_t)\,\delta_m \cdot \left[\mathbb{1}(c_t) - \mathbb{1}(\neg c_t \wedge a_t > y_t)\right],\\ b_{t+1} &= b_t + \eta_t(s_t)\,\delta_b \cdot \left[\mathbb{1}(c_t) - \gamma\,\mathbb{1}(\neg c_t \wedge a_t > y_t)\right]. \end{aligned} \tag{12}$$

This dual-timescale design ensures stability: the fast inner loop ($\Delta_t$) handles high-frequency volatility, while the slow outer loop (policy $\pi_\phi$) optimizes the structural parameters $(\eta_t, k_t)$.

Remark (Dual-Timescale Stability). The interaction between the fast calibrator ($\Delta_t$) and the slow policy ($\pi_\phi$) forms a two-timescale stochastic approximation system.
Under standard Lipschitz assumptions, the separation of time scales ensures asymptotic stability [26] (Theorem 3.3).

Theorem 1 (Dual-Timescale Stability). For analysis, consider diminishing step sizes (standard in stochastic approximation); in implementation, small constants are used to track drift. Let $x_t$ denote the slow policy parameters and $y_t \triangleq (m_t, b_t)$ denote the fast calibration variables (Eq. 12). Consider the coupled updates

$$x_{t+1} = x_t + \alpha_t\,[H(x_t, y_t) + M_{t+1}], \tag{13}$$

$$y_{t+1} = y_t + \beta_t\,[G(x_t, y_t) + N_{t+1}], \tag{14}$$

where $\{M_t\}$ and $\{N_t\}$ are martingale difference sequences.

Assumptions.
(A1) The step sizes satisfy the Robbins–Monro conditions:
$$\sum_t \alpha_t = \sum_t \beta_t = \infty, \qquad \sum_t (\alpha_t^2 + \beta_t^2) < \infty, \qquad \alpha_t/\beta_t \to 0. \tag{15}$$
(A2) The functions $H$ and $G$ are bounded and locally Lipschitz almost everywhere; the fast loop admits an ordinary differential equation (ODE) (or differential inclusion) representation.
(A3) For any fixed $x$, the fast-dynamics ODE $\dot y(\tau) = G(x, y(\tau))$ has a unique globally asymptotically stable equilibrium $\lambda(x)$.

Proof sketch. Consider the Lyapunov function $V_x(y) = \frac{1}{2}\|y - \lambda(x)\|^2$. Under (A2)–(A3), $V_x$ strictly decreases along trajectories of the fast ODE whenever $y \neq \lambda(x)$; hence $y_t$ tracks $\lambda(x_t)$ on the fast timescale [26, 27]. Given this tracking, the slow dynamics asymptotically follow the reduced ODE $\dot x(t) = H(x(t), \lambda(x(t)))$, and the two-timescale theorem implies that the coupled iterates $(x_t, y_t)$ converge to (or, under nonstationarity, track) the stable invariant set of this reduced system [26, 27].

4 Experiments

PIC-RL is evaluated on three production traces against state-of-the-art forecasting, RL, and decision-focused learning baselines. Results show superior tracking accuracy and cost efficiency achieved by learning from censored feedback.

Table 1: Characteristics of Production Workloads.
High peak-to-mean ratio (PMR) and coefficient of variation (CV) highlight the extreme stochasticity of GenAI traces compared to traditional DLRM.

| Dataset | Length | PMR | CV | Workload Dynamics |
|---|---|---|---|---|
| DLRM | 31 days | 2.99 | 0.69 | Strong seasonality, predictable |
| GenAI-Mem | 23 hrs | 5.20 | 0.87 | Heavy-tailed, quasi-periodic spikes |
| GenAI-Ctx | 23 hrs | 5.20 | 0.87 | Multivariate, context-rich |

4.1 Experimental Setup

4.1.1 Production Workloads

The evaluation uses three distinct industrial traces from Alibaba Cloud [29, 30], representing a spectrum from predictable patterns to highly stochastic dynamics. Table 1 summarizes their statistical characteristics.
• Dataset A (Industrial DLRM): GPU request logs from large-scale recommendation services [29]. This workload exhibits strong diurnal seasonality (autocorrelation ≈ 1.0) and moderate variability, serving as a baseline for predictable demand.
• Dataset B (GenAI-Mem): GPU memory usage from production Stable Diffusion serving clusters [30]. Unlike DLRM, this workload is characterized by extreme non-stationarity (coefficient of variation, CV = 0.87) and heavy-tailed bursts driven by batched inference scheduling, posing a severe challenge for censoring handling.
• Dataset C (Context-Rich GenAI): An augmented version of Dataset B that includes GPU duty-cycle metrics as auxiliary context features [30], testing the algorithm's ability to leverage multi-modal signals under partial observability.

The resource domain is mapped to $A_t \in [0, 1]$ via min-max normalization using statistics computed solely on the training partition; the same scaling is applied to the validation and test partitions to prevent look-ahead bias. Values exceeding the training range are clipped to $[0, 1]$, simulating continuous resource partitioning mechanisms (e.g., MPS or MIG). Chronological splitting (60/20/20) is used for training, validation, and testing to strictly prevent temporal leakage.
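The preprocessing just described (chronological 60/20/20 split, min-max statistics taken from the training partition only, clipping to $[0, 1]$) can be sketched as follows. The function names are illustrative assumptions, and integer arithmetic is used for the split indices to avoid floating-point rounding at the partition boundaries.

```python
def chronological_split(series: list[float], train_pct: int = 60, val_pct: int = 20):
    """Split a time series in order, without shuffling, into train/val/test."""
    n = len(series)
    i_train = n * train_pct // 100
    i_val = n * (train_pct + val_pct) // 100
    return series[:i_train], series[i_train:i_val], series[i_val:]


def fit_minmax(train: list[float]) -> tuple[float, float]:
    """Scaling statistics computed on the training partition only."""
    lo, hi = min(train), max(train)
    if hi == lo:
        raise ValueError("constant training partition cannot be min-max scaled")
    return lo, hi


def transform(values: list[float], lo: float, hi: float) -> list[float]:
    """Apply the training-set scaling, clipping out-of-range values to [0, 1]."""
    return [min(1.0, max(0.0, (v - lo) / (hi - lo))) for v in values]


if __name__ == "__main__":
    series = [float(v) for v in range(10)]       # toy trace: 0.0, 1.0, ..., 9.0
    train, val, test = chronological_split(series)
    lo, hi = fit_minmax(train)                   # (0.0, 5.0): later values are unseen
    print(transform(val, lo, hi))                # [1.0, 1.0]: 6.0 and 7.0 are clipped
    print(transform([2.5], lo, hi))              # [0.5]
```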
4.1.2 Protocol: Endogenous Censoring Simulation

To systematically benchmark learning efficacy under endogenous censoring, a Counterfactual Simulation Environment is used. Unlike static supervised evaluation, this environment enforces a dynamic feedback loop: the agent's chosen provisioning level $A_t$ instantly determines the data availability for future training steps. Formally, let $D^*_t$ denote the unobserved oracle demand (from the trace). At each step $t$, the environment reveals only the censored feedback:

$$Y_t = \min(D^*_t, A_t), \qquad C_t = \mathbb{1}(D^*_t > A_t). \tag{16}$$

The agent must update its policy solely based on $(Y_t, C_t)$; the oracle demand $D^*_t$ is never revealed directly to the learner (it is observed only through $Y_t$ when $C_t = 0$) and is otherwise used only for offline performance evaluation. This protocol rigorously tests the algorithm's ability to break the self-reinforcing bias loop, a capability that cannot be assessed via standard metrics on fixed test sets.

4.1.3 Baselines

PIC-RL is compared against state-of-the-art methods via a Component-wise Substitution protocol whenever a baseline targets a specific module. The key challenge is that PIC tightly couples forecasting, control, and observability: many baselines are designed for a subsystem (e.g., forecasting or offline RL) and do not specify an end-to-end procedure under endogenous censoring. Running them “as-is” would therefore confound missing components with algorithmic weakness. To isolate each baseline's core mechanism under the same PIC loop, only the intended phase(s) are substituted while the remaining phases and the counterfactual simulator are kept fixed. For methods that define an end-to-end controller, evaluation is performed as a full-pipeline baseline under the same simulator and cost weights.
• Phase 1 (Predictor) Substitution: Replace the Phase-1 probabilistic predictor with Temporal Fusion Transformer (TFT) [31], Informer [32], or Autoformer [33] (with output heads adapted to Gaussian $(\mu_t, \sigma_t)$ for interface compatibility), while keeping Phases 2 and 3 unchanged.
• Phase 2 (Offline RL) Substitution: Replace Phase-2 actor–critic pretraining with Conservative Q-Learning (CQL) [34], while keeping Phases 1 and 3 unchanged.
• Phase 3 (Online Calibration) Substitution: Replace Phase-3 margin/bias adaptation with Conformal Prediction [35, 36] built on the same Phase-1 predictor; Phase 2 is omitted because Conformal Prediction does not define an offline RL pretraining component. We use one-sided (upper) calibration to match the newsvendor-style asymmetric decision objective.
• Phase 2+3 (Decision Module) Substitution: Replace the Phase-2/3 decision module with (i) Thompson Sampling (TS) [37] as a Bayesian exploration–exploitation controller, or (ii) a Pensieve-style actor–critic controller [38], substituting the policy/value networks (including a 1D-CNN state encoder) in both Phase 2 and Phase 3. For fairness, both controllers are adapted only at the interface level to our continuous decision parameterization and state features, while preserving their core exploration/actor–critic mechanisms.
• Full-Pipeline Baselines: SPO+ (decision-focused training) [39] and Autopilot (rule-based scaling) [40].

4.1.4 Metrics

MAE is reported as the primary metric for provisioning accuracy: $\mathrm{MAE} = \frac{1}{T}\sum_{t=1}^{T} |A_t - D^*_t|$. Secondary metrics include Regret (Eq. 2), which captures the asymmetric cost of over- and under-provisioning. Lower values indicate better decision quality for both MAE and Regret. Note that Regret depends on the specific cost weights ($c_{\text{under}} = 2$, $c_{\text{over}} = 1$), while MAE provides a generic, transferable measure of tracking quality.
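To make the two metrics concrete, the sketch below computes MAE and Regret (Eq. 2, with the per-step cost of Eq. 1) for a toy trajectory. The weights $c_{\text{under}} = 2$ and $c_{\text{over}} = 1$ follow Section 2; the function names are illustrative. The example shows why the two metrics can disagree: two errors of equal size contribute equally to MAE, but the under-provisioned step costs twice as much in Regret.

```python
def mae(actions: list[float], demands: list[float]) -> float:
    """Mean absolute error between provisioning levels and oracle demand."""
    if len(actions) != len(demands) or not actions:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(abs(a - d) for a, d in zip(actions, demands)) / len(actions)


def step_cost(a: float, d: float, c_under: float = 2.0, c_over: float = 1.0) -> float:
    """Eq. (1): asymmetric linear cost of one provisioning decision."""
    return c_under * max(d - a, 0.0) + c_over * max(a - d, 0.0)


def regret(actions: list[float], demands: list[float],
           c_under: float = 2.0, c_over: float = 1.0) -> float:
    """Eq. (2): cumulative asymmetric cost over the horizon."""
    if len(actions) != len(demands):
        raise ValueError("sequences must have equal length")
    return sum(step_cost(a, d, c_under, c_over) for a, d in zip(actions, demands))


if __name__ == "__main__":
    actions = [0.5, 0.5]
    demands = [0.75, 0.25]           # one shortage of 0.25, one surplus of 0.25
    print(mae(actions, demands))     # 0.25
    print(regret(actions, demands))  # 0.75 = 2 * 0.25 (shortage) + 1 * 0.25 (surplus)
```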
4.2 Internal Validation and Dynamics

Learning dynamics across the three training phases are analyzed on the GenAI-Mem dataset to validate the internal mechanisms of PIC-RL.

Phase 1: Calibrated Uncertainty Quantification. The foundation of risk-aware decision-making is a well-calibrated probabilistic predictor. Figure 4 shows rapid NLL stabilization and a validation MAE of about 0.0752, indicating reliable estimation of both the conditional mean and dispersion. This yields a point forecast $\mu_t$ together with an uncertainty signal $\sigma_t$, which operationalizes the information–cost trade-off by controlling how aggressively the system buys observability under PIC.

Figure 4: Phase 1 Training Dynamics. (a) Rapid NLL convergence confirms the model learns the full demand distribution. (b) Stable validation MAE demonstrates robust generalization without overfitting.

Figure 5: Phase 2 Offline Pretraining. (a) 79% reduction in value loss validates the critic's ability to learn from censored feedback. (b) The derived policy effectively anticipates demand spikes (red crosses indicate censored events).

Phase 2: Pessimistic Policy Crystallization. In Phase 2, the policy is pretrained with the surrogate reward to warm-start decision-making under censoring. In Figure 5, the critic value loss drops by 79% over 150 iterations, consistent with the directionally correct learning signal induced by the IMR-based surrogate (Proposition 2). The resulting policy tracks bursty spikes while maintaining a modest buffer, reducing shortages without resorting to persistent over-provisioning.

Phase 3: Dual-Timescale Adaptation. Phase 3 evaluates online adaptation under endogenous censoring. Figure 6 shows sub-linear growth of cumulative regret under censored feedback, indicating sustained improvement in decision quality.
The dual-timescale dynamics separate fast responsiveness (margin) from slow drift tracking (bias), stabilizing the closed loop while keeping the error distribution $A_t - D_t$ balanced rather than persistently biased toward over-provisioning.

4.3 Main Comparison Results

Table 2 compares PIC-RL against baselines substituted at different phases under the same PIC loop. The results show that each phase contributes to robust performance under endogenous censoring.

Figure 6: Phase 3 Online Performance. (a) Deployment trajectory showing effective tracking within $\mu \pm \sigma$ bounds. (b) Balanced error distribution. (c) Distinct evolution of Fast (margin) and Slow (bias) variables stabilizing the feedback loop. (d) Sub-linear regret growth ($O(\sqrt{T})$) confirming successful adaptation.

Impact of Phase 1 Substitution. On GenAI-Mem, replacing our probabilistic predictor with standard forecasters (TFT, Informer) yields similar MAE (0.0113 and 0.0124 vs. 0.0104) but higher Regret (e.g., TFT Regret +175%). This reflects prediction–decision misalignment: forecasting losses under censoring fail to capture tail risk. In contrast, our NLL-based predictor provides calibrated uncertainty ($\sigma_t$) for risk-aware buffering.

Impact of Phase 3 Substitution. Conformal Prediction (Phase 3 Subst.; no Phase-2 pretraining) underperforms (Regret +1279% on GenAI-Mem) because one-sided calibration on censored residuals inherits the selection bias in Proposition 1. PIC-RL instead uses the IMR to correct the censored feedback signal.

Impact of Phase 2/3 Substitution. Substituting the RL module reveals two distinct failure modes. CQL (Phase 2 Subst.) exhibits “conservative collapse” (Figure 7d): without dual-timescale calibration, it converges to a static policy that misses bursts. Pensieve-style Actor–Critic and Thompson Sampling (Phase 2+3 Subst.) degrade under censoring (e.g., TS Regret +525% on GenAI-Mem) because generic exploration/control (even with interface-compatible state/action parameterizations) lacks IMR-based counterfactual learning from censored events and can be destabilized by prolonged information blackouts.

Table 2: Main Comparison Results. PIC-RL attains the lowest MAE on all three datasets and the lowest Regret in all but one column (Informer attains lower Regret on GenAI-Ctx). By systematically substituting key modules (Phase 1, Phase 2, Phase 2+3, or Phase 3), we observe that no single component is sufficient on its own.

| Method | DLRM-GPU MAE | DLRM-GPU Regret | GenAI-Ctx MAE | GenAI-Ctx Regret | GenAI-Mem MAE | GenAI-Mem Regret |
|---|---|---|---|---|---|---|
| PIC-RL (Ours) | 0.0058 | 22.8 | 0.0112 | 5.4 | 0.0104 | 2.4 |
| Phase 1 Substitution (Predictor) | | | | | | |
| TFT [31] | 0.0085 | 30.3 | 0.0238 | 6.2 | 0.0113 | 6.6 |
| Informer [32] | 0.0129 | 37.5 | 0.0195 | 4.3 | 0.0124 | 2.5 |
| Autoformer [33] | 0.0112 | 29.0 | 0.1285 | 29.9 | 0.2567 | 54.6 |
| Phase 2 Substitution (Offline RL) | | | | | | |
| CQL [34] | 0.0098 | 27.5 | 0.0385 | 8.3 | 0.0162 | 5.5 |
| Phase 3 Substitution (Online Calibration; no Phase-2 pretraining) | | | | | | |
| Conformal [36] | 0.0072 | 34.4 | 0.0456 | 38.1 | 0.0637 | 33.1 |
| Phase 2+3 Substitution (Decision Module) | | | | | | |
| Pensieve [38] | 0.0104 | 25.3 | 0.0184 | 6.0 | 0.0154 | 5.0 |
| TS [37] | 0.0112 | 31.8 | 0.2070 | 11.7 | 0.0261 | 15.0 |
| Full-Pipeline Baselines | | | | | | |
| SPO+ [39] | 0.0158 | 54.1 | 0.0467 | 23.4 | 0.0466 | 23.5 |
| Autopilot [40] | 0.0829 | 149.5 | 0.0836 | 32.7 | 0.0836 | 32.7 |

Full-Pipeline Analysis. Full-stack baselines exhibit structural deficits. SPO+ drifts under online censoring because its decision-focused loss assumes fully observed labels. Autopilot over-provisions to reduce shortages, yielding the highest Regret and MAE on DLRM-GPU (Figure 7b).

4.4 Ablation Study

An ablation study is conducted to attribute performance gains to individual components. The results (Table 3 and Figure 8) are consistent with the three-phase design: Foundational (Phase 1), Structural (Phase 2), and Stabilizing (Phase 3).

Foundational Role of Uncertainty (A1). Removing uncertainty quantification (A1: w/o Uncertainty) yields the largest degradation.
As shown in Figure 8, dropping the probabilistic output $\sigma_t$ increases MAE by 646% on GenAI-Ctx and 328% on GenAI-Mem (relative to Full). Without $\sigma_t$, the controller cannot form an uncertainty-based buffer or compute the IMR-based surrogate term, effectively reducing decisions to a point estimate under asymmetric costs. This supports the necessity of uncertainty quantification for decision-making under endogenous censoring.

Structural Necessity of Pessimism (A2–A3). Even with access to uncertainty, the agent requires a mechanism to translate risk into correct gradients.
• w/o Censored Reward (A2): Removing the IMR-based surrogate reward increases MAE by +57% (DLRM), +115% (GenAI-Ctx), and +55% (GenAI-Mem), indicating that censored steps provide insufficient directional learning signal without counterfactual surrogate feedback.
• w/o Pessimism (A3): Removing the pessimism factor $\Psi(n)$ increases MAE by +53% (DLRM), +113% (GenAI-Ctx), and +40% (GenAI-Mem), indicating that gradient amplification is needed to escape persistent censoring traps.

Figure 7: Trajectory Comparison (GenAI-Mem). PIC-RL (top) tightly tracks demand. In contrast, CQL (Phase 2 Subst.) exhibits “conservative collapse,” while TFT (Phase 1 Subst.) captures periodicity but underestimates stochastic bursts.

Control-Theoretic Stability (A4–A7). The remaining ablations (w/o $k\sigma$, KL regularization, exponential moving average (EMA), and Pretraining) are stability refinements. Relative to Full, these changes increase MAE by 9%–102% across datasets (Table 3). For example, on GenAI-Ctx, removing KL (A5) increases MAE by 102%, whereas on GenAI-Mem, removing EMA (A6) increases MAE by 9%. Removing KL regularization (A5) or EMA (A6) also increases action variance, consistent with the role of dual-timescale stabilization.
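The relative changes quoted in Sections 4.3 and 4.4 follow from the entries of Tables 2 and 3 as $100 \times (\text{variant} - \text{full}) / \text{full}$. The short check below recomputes them; it is an arithmetic aid only, and the variable names are illustrative.

```python
def pct_increase(full: float, variant: float) -> float:
    """Relative increase of a metric over the full PIC-RL value, in percent."""
    return 100.0 * (variant - full) / full


# (description, full PIC-RL value, variant value, percentage quoted in the text)
CHECKS = [
    ("A1 MAE, GenAI-Ctx", 0.0112, 0.0836, 646),
    ("A1 MAE, GenAI-Mem", 0.0104, 0.0445, 328),
    ("A2 MAE, DLRM", 0.0058, 0.0091, 57),
    ("A2 MAE, GenAI-Ctx", 0.0112, 0.0241, 115),
    ("A2 MAE, GenAI-Mem", 0.0104, 0.0161, 55),
    ("A3 MAE, DLRM", 0.0058, 0.0089, 53),
    ("A3 MAE, GenAI-Ctx", 0.0112, 0.0239, 113),
    ("A3 MAE, GenAI-Mem", 0.0104, 0.0146, 40),
    ("A5 MAE, GenAI-Ctx", 0.0112, 0.0226, 102),
    ("A6 MAE, GenAI-Mem", 0.0104, 0.0113, 9),
    ("TFT Regret, GenAI-Mem", 2.4, 6.6, 175),
    ("Conformal Regret, GenAI-Mem", 2.4, 33.1, 1279),
    ("TS Regret, GenAI-Mem", 2.4, 15.0, 525),
]

if __name__ == "__main__":
    for name, full, variant, quoted in CHECKS:
        computed = pct_increase(full, variant)
        print(f"{name}: {computed:+.1f}% (quoted {quoted}%)")
        assert round(computed) == quoted
```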
Table 3: Complete Ablation Results. Metrics degrade consistently across ablations. Uncertainty (A1) and Censored Reward (A2) are the most critical components.

Method | DLRM MAE | DLRM Regret | GenAI-Ctx MAE | GenAI-Ctx Regret | GenAI-Mem MAE | GenAI-Mem Regret
PIC-RL (Full) | 0.0058 | 22.8 | 0.0112 | 5.4 | 0.0104 | 2.4
Foundational & Structural
A1: w/o Uncert. | 0.0086 | 25.6 | 0.0836 | 7.3 | 0.0445 | 3.4
A2: w/o CensRwd | 0.0091 | 28.2 | 0.0241 | 7.4 | 0.0161 | 3.8
A3: w/o Pessimism | 0.0089 | 26.3 | 0.0239 | 6.8 | 0.0146 | 3.1
Control Stability
A4: w/o k·σ | 0.0085 | 24.6 | 0.0193 | 6.1 | 0.0136 | 3.5
A5: w/o KL | 0.0082 | 24.1 | 0.0226 | 5.8 | 0.0119 | 2.7
A6: w/o EMA | 0.0079 | 23.3 | 0.0220 | 5.7 | 0.0113 | 2.6
A7: w/o Pretrain | 0.0078 | 23.5 | 0.0213 | 5.6 | 0.0118 | 2.7

5 Conclusion

This work formalizes Prediction-Induced Censoring (PIC), a pervasive challenge in online decision support systems where decisions shape which data become observable. Under PIC, standard learning pipelines can enter a self-reinforcing loop: under-provisioning reduces label availability, amplifies selection bias, and leads to persistent service degradation. To break this loop, PIC-RL is introduced as a closed-loop decision support framework that transforms censoring from a data quality issue into a usable decision signal. The design couples uncertainty-aware prediction for managing the information–cost trade-off, conservative surrogate feedback for learning directionally correct updates from shortage events (Prop. 1–2), and dual-timescale online adaptation for stable operation under drift. Experiments on production GenAI traces show consistent improvements over strong baselines, reducing service degradation by up to 50% while maintaining cost efficiency.

Future Directions. Future work includes extending the framework to multi-resource coupled constraints (e.g., joint GPU memory and compute censoring) and strengthening operational governance for decision support, such as monitoring, auditability, and safe adaptation under distribution shift.
References

[1] Arpan Gujarati, Reza Karimi, Safya Alzayat, Wei Hao, Antoine Kaufmann, Ymir Vigfusson, and Jonathan Mace. Serving DNNs like Clockwork: Performance predictability from the bottom up. In 14th USENIX Symposium on Operating Systems Design and Implementation (OSDI), pages 443–462, Berkeley, CA, USA, 2020. USENIX Association.
[2] Daniel Crankshaw, Xin Wang, Michael J. Franklin, Joseph E. Gonzalez, and Ion Stoica. Clipper: A low-latency online prediction serving system. In 14th USENIX Symposium on Networked Systems Design and Implementation (NSDI), pages 613–627, Berkeley, CA, USA, 2017. USENIX Association.
[3] Wencong Xiao, Romil Bhardwaj, Ramachandran Ramjee, Muthian Sivathanu, Nipun Kwatra, et al. Gandiva: Introspective cluster scheduling for deep learning. In 13th USENIX Symposium on Operating Systems Design and Implementation (OSDI), pages 595–610, Berkeley, CA, USA, 2018. USENIX Association.
[4] Jianyong Yuan, Jiayi Zhang, Zinuo Cai, and Junchi Yan. Towards variance reduction for reinforcement learning of industrial decision-making tasks: A bi-critic based demand-constraint decoupling approach. In Proceedings of the 29th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, KDD '23, pages 3162–3173, New York, NY, USA, 2023. ACM.
[5] Pratyush Patel, Esha Choukse, Chaojie Zhang, Íñigo Goiri, Brijesh Warrier, Nithish Mahalingam, and Ricardo Bianchini. POLCA: Power oversubscription in LLM cloud providers, 2023. arXiv preprint.
[6] Chengyi Nie, Rodrigo Fonseca, and Zhenhua Liu. Aladdin: Joint placement and scaling for SLO-aware LLM serving, 2024. arXiv preprint.
[7] Jingying Ding, Woonghee Tim Huh, and Ying Rong. Feature-based inventory control with censored demand. Manufacturing and Service Operations Management, 26(3):1157–1172, 2024.
[8] Omar Besbes and Alp Muharremoglu. On implications of demand censoring in the newsvendor problem. Management Science, 59(6):1407–1424, 2013.
[9] Woonghee Tim Huh, Ganesh Janakiraman, John A. Muckstadt, and Paat Rusmevichientong. An adaptive algorithm for finding the optimal base-stock policy in lost sales inventory systems with censored demand. Mathematics of Operations Research, 34(2):397–416, 2009.
[10] Manwei Li, Detao Lv, Yao Yu, and Zihao Jiao. Contrastive learning for inventory add prediction at Fliggy. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining, KDD '25, pages 1–10, New York, NY, USA, 2025. ACM.
[11] Shujaat Khan and Elie Tamer. Inference on endogenously censored regression models using conditional moment inequalities. Journal of Econometrics, 152(2):104–119, 2009.
[12] Victor Chernozhukov, Sokbae Lee, and Adam M. Rosen. Intersection bounds: Estimation and inference. Econometrica, 81(2):667–737, 2013.
[13] Woonghee Tim Huh, Retsef Levi, Paat Rusmevichientong, and James B. Orlin. Adaptive data-driven inventory control with censored demand based on Kaplan-Meier estimator. Operations Research, 59(4):929–941, 2011.
[14] Boxiao Chen, Xiuli Chao, and Yining Wang. Technical note—Data-based dynamic pricing and inventory control with censored demand and limited price changes. Operations Research, 68(5):1445–1456, 2020.
[15] Eoghan O'Neill. Type I Tobit Bayesian additive regression trees for censored outcome regression. Statistics and Computing, 34(4):123, 2024.
[16] Yangyang Wang, Jiawei Gu, Li Long, Xin Li, Li Shen, Zhouyu Fu, Xiangjun Zhou, and Xu Jiang. FreshRetailNet-50K: A stockout-annotated censored demand dataset for latent demand recovery and forecasting in fresh retail, 2025. arXiv preprint.
[17] R. Sousa et al. Predicting demand for new products in fashion retailing using censored data. Expert Systems with Applications, 259:125313, 2025.
[18] Lin An, Andrew A. Li, Benjamin Moseley, and R. Ravi. The nonstationary newsvendor with (and without) predictions. Manufacturing and Service Operations Management, 27(3):881–896, 2025.
[19] Xin Chen, Jiameng Lyu, Shilin Yuan, and Yuan Zhou. Learning when to restart: Nonstationary newsvendor from uncensored to censored demand, 2025. arXiv preprint.
[20] Yinfeng Xiang, Jiangyi Fang, Chao Li, Haitao Yuan, Yiwei Song, and Jiming Chen. Effective AOI-level parcel volume prediction: When look ahead parcels matter. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining, KDD '25, pages 1–12, New York, NY, USA, 2025. ACM.
[21] Yanying Lin, Shuaipeng Wu, Shutian Luo, Hong Xu, Haiying Shen, Chong Ma, Min Shen, et al. Understanding diffusion model serving in production: A top-down analysis of workload, scheduling, and resource efficiency. In Proceedings of the 2025 ACM Symposium on Cloud Computing, pages 1–15, New York, NY, USA, 2025. ACM.
[22] Xiaowei Jia, Ankush Khandelwal, Guruprasad Nayak, James Gerber, Kimberly Carlson, Paul West, and Vipin Kumar. Incremental dual-memory LSTM in land cover prediction. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD '17, pages 867–876, New York, NY, USA, 2017. ACM.
[23] Árpád Baricz. Mills' ratio: Monotonicity patterns and functional inequalities. Journal of Mathematical Analysis and Applications, 340(2):1362–1370, 2008.
[24] Dale Heien and Cathy Roheim Wesseils. Demand systems estimation with micro data: A censored regression approach. Journal of Business & Economic Statistics, 8(3):365–371, 1990.
[25] Ivo Grondman, Lucian Busoniu, Gabriel A. D. Lopes, and Robert Babuska. A survey of actor-critic reinforcement learning: Standard and natural policy gradients. IEEE Transactions on Systems, Man, and Cybernetics, Part C (Applications and Reviews), 42(6):1291–1307, 2012.
[26] Vivek S. Borkar. Stochastic Approximation: A Dynamical Systems Viewpoint. Cambridge University Press, Cambridge, UK, 2008.
[27] Harold J. Kushner and G. George Yin. Stochastic Approximation and Recursive Algorithms and Applications. Stochastic Modelling and Applied Probability. Springer, New York, NY, USA, 2nd edition, 2003.
[28] Nino Vieillard, Tadashi Kozuno, Bruno Scherrer, Olivier Pietquin, Rémi Munos, and Matthieu Geist. Leverage the average: An analysis of KL regularization in reinforcement learning. In Advances in Neural Information Processing Systems, volume 33, pages 12163–12174, Red Hook, NY, USA, 2020. Curran Associates, Inc.
[29] Lingyun Yang, Yongchen Wang, Yinghao Yu, Qizhen Weng, Jianbo Dong, Kan Liu, Chi Zhang, Yanyi Zi, Hao Li, Zechao Zhang, Nan Wang, Yu Dong, Menglei Zheng, Lanlan Xi, Xiaowei Lu, Liang Ye, Guodong Yang, Binzhang Fu, Tao Lan, Liping Zhang, Lin Qu, and Wei Wang. GPU-disaggregated serving for deep learning recommendation models at scale. In 22nd USENIX Symposium on Networked Systems Design and Implementation (NSDI 25), USENIX NSDI '25, Berkeley, CA, USA, 2025. USENIX Association.
[30] Yanying Lin, Shijie Peng, Chengzhi Lu, Chengzhong Xu, and Kejiang Ye. FlexPipe: Adapting dynamic LLM serving through inflight pipeline refactoring in fragmented serverless clusters. In European Conference on Computer Systems (EuroSys '26), pages 1–17. ACM, 2026.
[31] Bryan Lim, Sercan Ö. Arık, Nicolas Loeff, and Tomas Pfister. Temporal fusion transformers for interpretable multi-horizon time series forecasting. International Journal of Forecasting, 37(4):1748–1764, 2021.
[32] Haoyi Zhou, Shanghang Zhang, Jieqi Peng, Shuai Zhang, Jianxin Li, Hui Xiong, and Wancai Zhang. Informer: Beyond efficient transformer for long sequence time-series forecasting. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 35, pages 11106–11115, 2021.
[33] Haixu Wu, Jiehui Xu, Jianmin Wang, and Mingsheng Long. Autoformer: Decomposition transformers with auto-correlation for long-term series forecasting. In Advances in Neural Information Processing Systems (NeurIPS), volume 34, pages 22419–22430, 2021.
[34] Aviral Kumar, Aurick Zhou, George Tucker, and Sergey Levine. Conservative Q-learning for offline reinforcement learning. In Advances in Neural Information Processing Systems (NeurIPS), volume 33, pages 1179–1191, 2020.
[35] Anastasios N. Angelopoulos and Stephen Bates. Conformal prediction: A gentle introduction. Foundations and Trends in Machine Learning, 16(4):494–591, 2023.
[36] Junyu Cao. A conformal approach to feature-based newsvendor under model misspecification. arXiv preprint arXiv:2412.13159, 2024.
[37] Shipra Agrawal and Navin Goyal. Thompson sampling for contextual bandits with linear payoffs. In International Conference on Machine Learning (ICML), pages 127–135. PMLR, 2013.
[38] Hongzi Mao, Ravi Netravali, and Mohammad Alizadeh. Neural adaptive video streaming with Pensieve. In Proceedings of the Conference of the ACM Special Interest Group on Data Communication (SIGCOMM), pages 197–210. ACM, 2017.
[39] Adam N. Elmachtoub and Paul Grigas. Smart "predict, then optimize". Management Science, 68(1):9–26, 2022.
[40] Krzysztof Rzadca, Pawel Findeisen, Jacek Swiderski, et al. Autopilot: Workload autoscaling at Google. In Proceedings of the Fifteenth European Conference on Computer Systems (EuroSys), pages 1–16. ACM, 2020.
Algorithm 1 PIC-RL: Learning with Prediction-Induced Censoring
Require: Historical data $\mathcal{D}_{\text{train}}$, online horizon $T$, hyperparameters $\delta_m, \delta_b, \gamma, N_{\text{update}}$
Ensure: Online provisioning decisions $a_{1:T}$

Phase 1: Uncertainty-Aware Prediction (Offline)
  Train predictor $f_\theta$ via NLL minimization:
  $\theta^* \leftarrow \arg\min_\theta \sum_{(x_i, d_i) \in \mathcal{D}_{\text{train}}} \mathcal{L}_{\text{NLL}}(d_i; f_\theta(x_i))$

Phase 2: Offline Pre-training with Pessimistic Surrogate Inference (Offline)
  Initialize policy $\pi_\phi$, value network $V_\psi$
  for epoch $= 1, \dots, E$ do
    Roll out a trajectory in the simulator with actions $a_\tau \sim \pi_\phi(s_\tau)$
    for each step $\tau$ in the rollout do
      if censored ($c_\tau = 1$) then
        Construct Pessimistic Surrogate Reward:
        $r_\tau \leftarrow -c_{\text{under}} \cdot \widehat{\text{gap}}(a_\tau; \hat{\mu}_\tau, \hat{\sigma}_\tau) \cdot \text{pess}(n_{\text{cens}})$   ▷ Prop. 2
      else
        Use true cost: $r_\tau \leftarrow -\ell(a_\tau, d_\tau)$
      end if
    end for
    Update $\phi, \psi$ via Actor-Critic on the $\{r_\tau\}$ sequences
  end for

Phase 3: Online RL and Dual-Timescale Adaptation (Online)
  Initialize fast calibration terms: $m_0 \leftarrow 0$, $b_0 \leftarrow 0$
  Initialize replay buffer $\mathcal{B}$
  for time step $t = 1, \dots, T$ do
    Predict: $(\mu_t, \sigma_t) \leftarrow f_{\theta^*}(\text{window}_t)$
    Plan: $(\eta_t, k_t) \leftarrow \pi_\phi(s_t)$   ▷ Slow variable: policy output
    Act: $a_t \leftarrow \text{clip}(\mu_t + k_t \sigma_t + m_t + b_t, 0, 1)$
    Observe: censored feedback $(y_t, c_t)$ under the action-as-threshold model
    Fast Update (Event-Driven Calibration):
    if $c_t = 1$ then   ▷ Under-provisioning (shortage)
      $m_{t+1} \leftarrow m_t + \eta_t \delta_m$, $b_{t+1} \leftarrow b_t + \eta_t \delta_b$
    else   ▷ Over-provisioning
      $m_{t+1} \leftarrow m_t - \eta_t \delta_m$, $b_{t+1} \leftarrow b_t - \gamma \eta_t \delta_b$
    end if
    Reward & Store:
    if $c_t = 1$ then
      $r_t \leftarrow -c_{\text{under}} \cdot \widehat{\text{gap}}(a_t; \hat{\mu}_t, \hat{\sigma}_t) \cdot \text{pess}(n_{\text{cens}})$   ▷ Prop. 2
    else
      $r_t \leftarrow -\ell(a_t, y_t)$
    end if
    Append $(s_t, a_t, r_t, s_{t+1})$ to $\mathcal{B}$
    Slow Update (Policy Refinement):
    if $t \bmod N_{\text{update}} = 0$ then
      Update $\pi_\phi$ using $\mathcal{B}$ with KL regularization [28]
    end if
    Update observation window with $(y_t, c_t)$
  end for

Figure 8: Ablation Study (MAE Impact). Uncertainty removal (A1) yields the largest degradation, while removing pessimism (A3) or IMR (A2) weakens adaptation. Stability refinements (A4–A7) provide consistent robustness gains.
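As an illustration of the per-step quantities in Phase 3 of Algorithm 1, the following Python sketch implements the action rule, the event-driven fast update of (m_t, b_t), and the censored-step surrogate reward. It is a minimal sketch under stated assumptions, not the authors' implementation. Two pieces are assumptions: the surrogate gap is taken to be the Gaussian expected shortfall E[d − a | d > a] = µ̂ + σ̂·λ(z) − a, with z = (a − µ̂)/σ̂ and inverse Mills ratio λ(z) = φ(z)/(1 − Φ(z)), which is one natural reading of "IMR-based surrogate"; and pess(n_cens) uses a hypothetical form 1 + β·log(1 + n_cens) with an arbitrary β. All function names, the placeholder cost, and the demo numbers are hypothetical, and the KL-regularized slow policy update is omitted.

```python
import math


def inverse_mills_ratio(z: float) -> float:
    """lambda(z) = phi(z) / (1 - Phi(z)) for the standard normal distribution."""
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    sf = 0.5 * math.erfc(z / math.sqrt(2.0))  # 1 - Phi(z), computed without cancellation
    if sf == 0.0:
        # Far upper tail: both terms underflow, so fall back to lambda(z) ~ z + 1/z.
        return z + 1.0 / z
    return pdf / sf


def expected_gap(a: float, mu_hat: float, sigma_hat: float) -> float:
    """ASSUMED gap_hat: E[d - a | d > a] for d ~ N(mu_hat, sigma_hat^2), sigma_hat > 0."""
    z = (a - mu_hat) / sigma_hat
    return mu_hat + sigma_hat * inverse_mills_ratio(z) - a


def pess(n_cens: int, beta: float = 0.5) -> float:
    """HYPOTHETICAL pessimism factor: equals 1 at n_cens = 0 and grows with censored steps."""
    return 1.0 + beta * math.log1p(n_cens)


def surrogate_reward(a, mu_hat, sigma_hat, n_cens, c_under=1.0, beta=0.5):
    """Censored-step reward: -c_under * gap_hat(a; mu_hat, sigma_hat) * pess(n_cens)."""
    return -c_under * expected_gap(a, mu_hat, sigma_hat) * pess(n_cens, beta)


def act(mu, sigma, k, m, b):
    """Provisioning action a_t = clip(mu_t + k_t * sigma_t + m_t + b_t, 0, 1)."""
    return min(max(mu + k * sigma + m + b, 0.0), 1.0)


def fast_update(m, b, censored, eta, delta_m, delta_b, gamma):
    """Event-driven update of the fast calibration terms (m_t, b_t), as in Algorithm 1."""
    if censored:
        # Shortage (c_t = 1): push both calibration terms up.
        return m + eta * delta_m, b + eta * delta_b
    # Over-provisioning (c_t = 0): lower m at the same rate and b at a gamma-scaled rate.
    return m - eta * delta_m, b - gamma * eta * delta_b


if __name__ == "__main__":
    # Hypothetical single step with made-up numbers.
    m, b = 0.0, 0.0
    mu_t, sigma_t, k_t, eta_t = 0.5, 0.1, 1.0, 0.5
    a_t = act(mu_t, sigma_t, k_t, m, b)
    demand = 0.72                      # hidden true demand
    censored = demand > a_t            # action-as-threshold: only min(demand, a_t) is seen
    y_t = min(demand, a_t)
    if censored:
        r_t = surrogate_reward(a_t, mu_t, sigma_t, n_cens=1)
    else:
        r_t = -abs(a_t - y_t)          # placeholder for the paper's cost l(a_t, y_t)
    m, b = fast_update(m, b, censored, eta_t, delta_m=0.02, delta_b=0.01, gamma=0.5)
    print(f"a_t={a_t:.3f} censored={censored} r_t={r_t:.4f} m={m:.3f} b={b:.3f}")
```

In this reading, the fast terms move by policy-scaled steps on every shortage or surplus event, while the policy that emits k_t and η_t is only refreshed every N_update steps, which is one way to see the two timescales.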
