PyTrim: A Practical Tool for Reducing Python Dependency Bloat

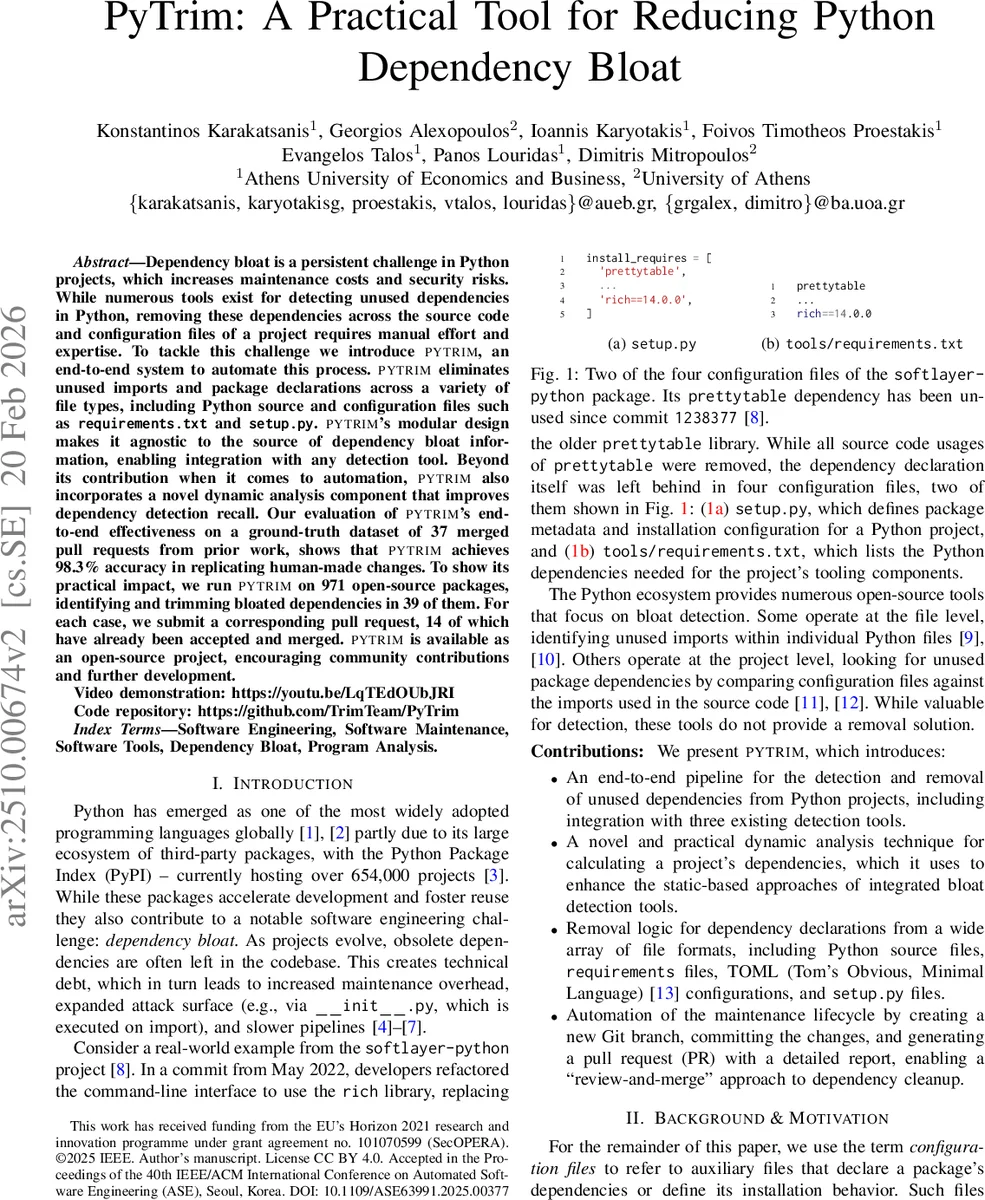

Dependency bloat is a persistent challenge in Python projects, which increases maintenance costs and security risks. While numerous tools exist for detecting unused dependencies in Python, removing these dependencies across the source code and configuration files of a project requires manual effort and expertise. To tackle this challenge we introduce PYTRIM, an end-to-end system to automate this process. PYTRIM eliminates unused imports and package declarations across a variety of file types, including Python source and configuration files such as requirements.txt and setup.py. PYTRIM’s modular design makes it agnostic to the source of dependency bloat information, enabling integration with any detection tool. Beyond its contribution when it comes to automation, PYTRIM also incorporates a novel dynamic analysis component that improves dependency detection recall. Our evaluation of PYTRIM’s end-to-end effectiveness on a ground-truth dataset of 37 merged pull requests from prior work, shows that PYTRIM achieves 98.3% accuracy in replicating human-made changes. To show its practical impact, we run PYTRIM on 971 open-source packages, identifying and trimming bloated dependencies in 39 of them. For each case, we submit a corresponding pull request, 6 of which have already been accepted and merged. PYTRIM is available as an open-source project, encouraging community contributions and further development. Video demonstration: https://youtu.be/LqTEdOUbJRI Code repository: https://github.com/TrimTeam/PyTrim

💡 Research Summary

PyTrim is an end‑to‑end system that automates the detection and removal of unused Python package dependencies and import statements across a project’s source code and configuration files. While many existing tools can identify unused dependencies, they stop short of actually cleaning up the declarations in files such as requirements.txt, setup.py, or pyproject.toml, leaving developers to perform error‑prone manual edits. PyTrim bridges this gap with three core components.

First, a dynamic dependency resolver installs the target project in an isolated environment using pip -t and then runs pipdeptree to extract the exact dependency graph that results from the actual installation process. This dynamic step captures dependencies that are constructed programmatically (e.g., reading external files in setup.py) and therefore missed by static parsers. In the authors’ evaluation, the dynamic resolver uncovered missed dependencies in 48 out of 971 projects (≈5 %).

Second, PyTrim is detector‑agnostic. It ships with out‑of‑the‑box adapters for three popular static analysis tools: a call‑graph based detector built on PyCG, FawltyDeps, and deptry. Each detector’s static dependency list is merged with the dynamic list, producing a superset that dramatically reduces false negatives.

Third, the remover module handles a wide variety of file formats. Python source files are processed with AST analysis to delete unused import statements. Structured configuration files (TOML, YAML, INI) are parsed with appropriate libraries (toml, PyYAML, configparser). Line‑oriented files such as requirements.txt and its .in variants are edited using robust regular‑expression patterns. Executable setup.py files are also transformed via AST to avoid syntactic breakage. When a lock file (e.g., poetry.lock) becomes out‑of‑sync, PyTrim warns the user rather than attempting an unsafe automatic regeneration.

The workflow is fully automated: given a project path, PyTrim (1) resolves dependencies dynamically, (2) invokes the chosen detector to flag unused packages, (3) removes the corresponding declarations and imports, (4) creates a new Git branch, commits the changes, and (5) opens a pull request with a detailed markdown report. This “review‑and‑merge” pipeline eliminates the manual remediation step that is the primary source of human error.

Evaluation was performed on two fronts. For replication accuracy, the authors used a curated dataset of 37 pull requests from prior work where developers manually removed bloated dependencies. PyTrim reproduced the final state of 59 out of 60 relevant files, achieving 98.33 % accuracy. The single mismatch involved a semantic refactoring (changing pyjwt to `pyjwt

Comments & Academic Discussion

Loading comments...

Leave a Comment