I, Robot? Exploring Ultra-Personalized AI-Powered AAC; an Autoethnographic Account

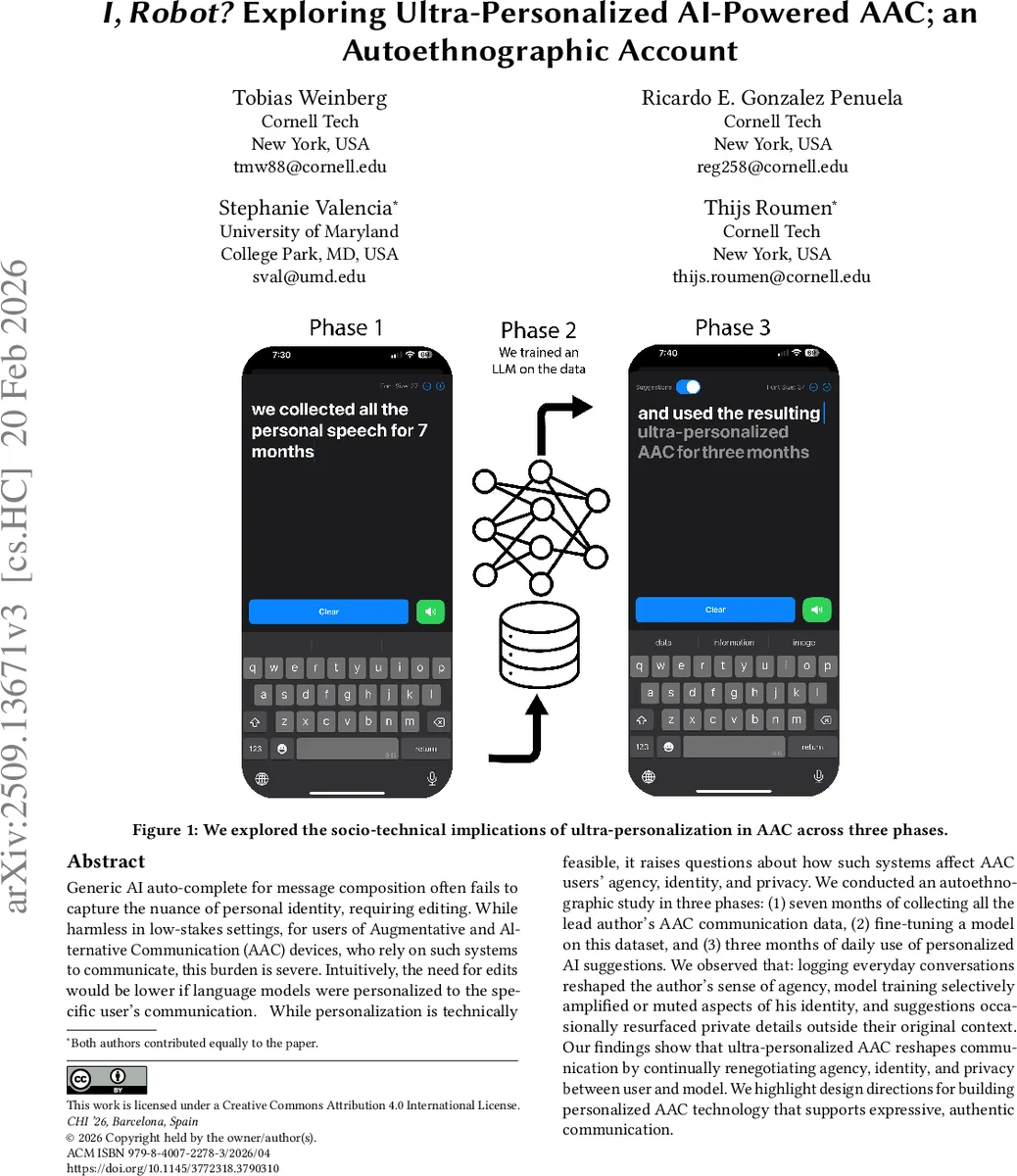

Generic AI auto-complete for message composition often fails to capture the nuance of personal identity, requiring editing. While harmless in low-stakes settings, for users of Augmentative and Alternative Communication (AAC) devices, who rely on such systems to communicate, this burden is severe. Intuitively, the need for edits would be lower if language models were personalized to the specific user’s communication. While personalization is technically feasible, it raises questions about how such systems affect AAC users’ agency, identity, and privacy. We conducted an autoethnographic study in three phases: (1) seven months of collecting all the lead author’s AAC communication data, (2) fine-tuning a model on this dataset, and (3) three months of daily use of personalized AI suggestions. We observed that: logging everyday conversations reshaped the author’s sense of agency, model training selectively amplified or muted aspects of his identity, and suggestions occasionally resurfaced private details outside their original context. We find that ultra-personalized AAC reshapes communication by continually renegotiating agency, identity, and privacy between user and model. We highlight design directions for building personalized AAC technology that supports expressive, authentic communication.

💡 Research Summary

The paper “I, Robot? Exploring Ultra‑Personalized AI‑Powered AAC; an Autoethnographic Account” presents a three‑phase, longitudinal autoethnographic study of a single AAC (Augmentative and Alternative Communication) user who is also the lead author. The motivation is that generic AI autocomplete tools often fail to capture the nuance of a user’s personal identity, forcing extensive editing—a burden that is especially severe for AAC users who rely on these systems for everyday speech. The authors hypothesize that ultra‑personalized language models—LLMs fine‑tuned on an individual’s own communication history—could reduce editing effort, but they also raise concerns about agency, identity, and privacy.

Phase 1 – Data Collection (7 months).

The author logged every message typed on his AAC device using a custom note‑taking app. The act of continuous logging itself altered his behavior: he began to self‑censor, avoiding profanity, dark humor, and certain slang because he knew everything would be stored. This self‑censorship already reduced his sense of agency and limited the expressive range of the dataset.

Phase 2 – Data Curation and Model Fine‑tuning.

From the raw logs the author removed expressions he felt uncomfortable delegating to an AI (e.g., highly personal jokes, controversial statements). The curated corpus was then used to fine‑tune a pre‑trained LLM, producing a model that could generate suggestions reflecting his multicultural vocabulary, code‑switching patterns, and preferred phrasing. However, the curation introduced a bias: the model learned only the “socially well‑behaved” portion of his voice, effectively erasing parts of his identity that he deemed unsuitable for automation.

Phase 3 – Deployment and Daily Use (3 months).

The fine‑tuned model was embedded in a simple AAC app that presented real‑time text suggestions as the author composed messages. Over three months the author used this system as his primary communication tool, logging acceptance/rejection decisions and reflecting on each interaction in diary entries. The model succeeded in reproducing his cultural references and multilingual style, markedly increasing typing speed and fluency. Yet, occasional “contextual leakage” occurred: the model resurfaced intimate biographical details (e.g., medical history, family issues) in unrelated conversations, leading to privacy breaches and social awkwardness. These incidents highlighted that the model, lacking robust context awareness, could over‑generalize from past data and violate situational appropriateness.

Key Findings.

- Agency: Continuous logging and the presence of AI suggestions reshaped the author’s sense of control. While the model reduced mechanical effort, it introduced a new decision layer—whether to accept, edit, or reject a suggestion—adding cognitive load.

- Identity: Fine‑tuning amplified certain identity cues (multilingual code‑switching, cultural idioms) while muting others (irreverent humor, controversial opinions). The resulting persona presented to interlocutors is a filtered version of the user’s authentic self.

- Privacy: The model’s propensity to reuse stored personal facts in inappropriate contexts demonstrates a privacy risk unique to ultra‑personalized AAC: the system can unintentionally become a “memory dump” that discloses sensitive information without user intent.

Design Implications.

- Context‑Sensitive Filtering: Implement real‑time context detection to suppress suggestions containing private or situationally inappropriate content.

- User‑Centric Control UI: Provide transparent mechanisms for users to quickly accept, edit, or block suggestions, and to give feedback that continuously refines the model’s behavior.

- Transparent Data Governance: Allow users to review, edit, or delete portions of their logged corpus, and to decide which subsets are used for model training.

- On‑Device or Hybrid Inference: Reduce latency and dependence on network connectivity, which can impair responsiveness—a critical factor for AAC users who need immediate feedback.

Limitations.

The study is based on a single, literate, keyboard‑based AAC user with motor but not cognitive impairments, limiting generalizability to other AAC modalities (e.g., eye‑gaze, switch‑based input) or to users with additional cognitive challenges. The technical specifics of the model (architecture, training hyper‑parameters) are not the focus; the contribution lies in the lived experience and sociotechnical insights. The authors acknowledge that latency, system lag, and the lack of quantitative measures of cognitive load are areas for future work.

Future Directions.

- Conduct multi‑participant longitudinal studies across diverse AAC technologies and cultural backgrounds.

- Explore hybrid on‑device models that balance personalization with privacy (e.g., federated learning).

- Develop metrics for agency (e.g., proportion of user‑generated vs. AI‑generated tokens), identity fidelity (semantic similarity to self‑reported style), and privacy risk (frequency of contextual leakage).

- Investigate policy frameworks and ethical guidelines for data ownership, consent, and the right to be forgotten in the context of ultra‑personalized assistive AI.

Conclusion.

Ultra‑personalized AI for AAC holds promise for dramatically increasing communication speed and linguistic relevance, but it simultaneously reshapes the user’s agency, identity, and privacy in complex ways. Designers must treat personalization not merely as a technical optimization but as a sociotechnical negotiation, embedding safeguards, user control, and transparent data practices to ensure that the technology amplifies, rather than diminishes, the authentic voice of AAC users.

Comments & Academic Discussion

Loading comments...

Leave a Comment