Learning from Biased and Costly Data Sources: Minimax-optimal Data Collection under a Budget

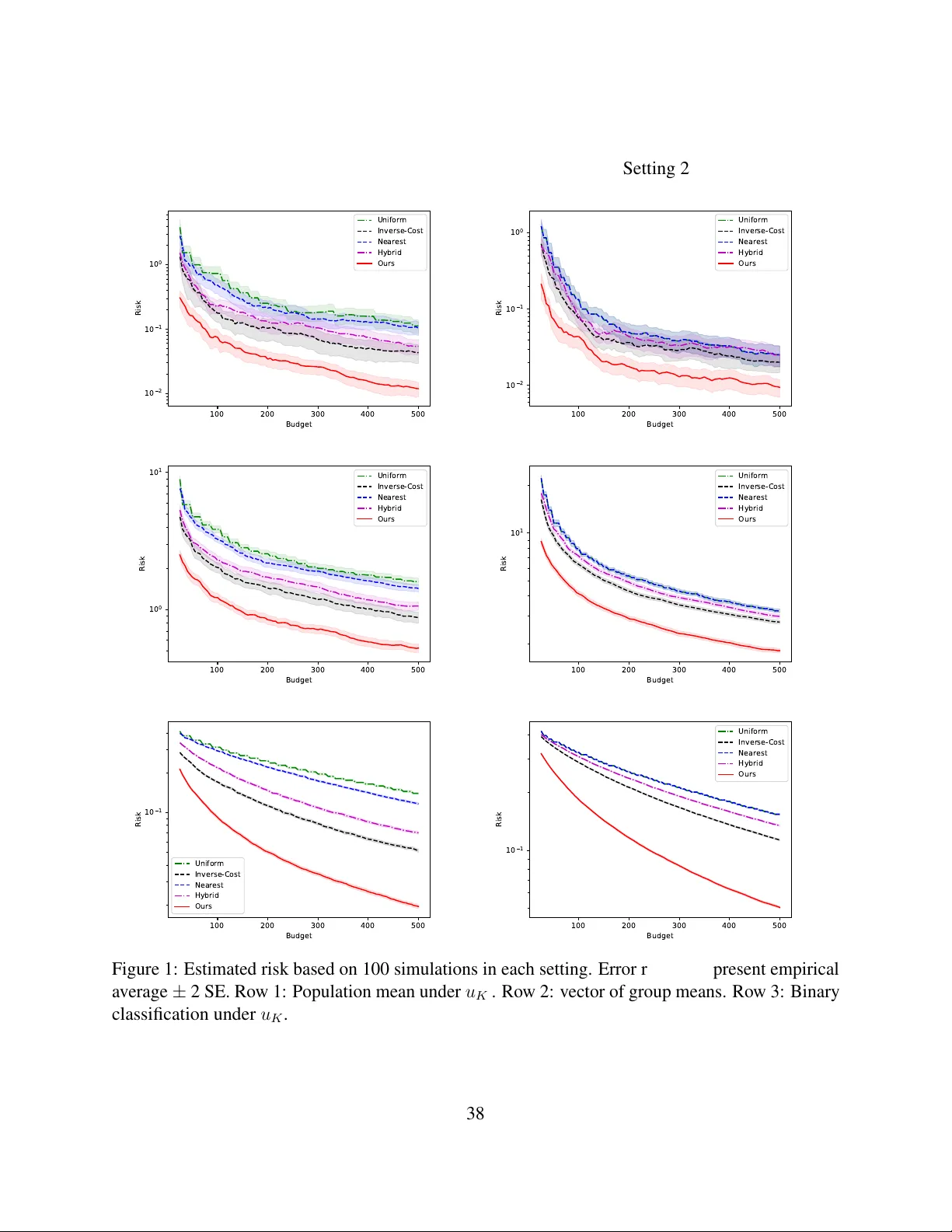

Data collection is a critical component of modern statistical and machine learning pipelines, particularly when data must be gathered from multiple heterogeneous sources to study a target population of interest. In many use cases, such as medical stu…

Authors: Michael O. Harding, Vikas Singh, Kirthevasan K