Deep Learning for Dermatology: An Innovative Framework for Approaching Precise Skin Cancer Detection

Skin cancer, a prevalent yet preventable disease, can be life-threatening if not diagnosed early. Globally, skin cancer is among the most prevalent cancers, and millions of people are diagnosed each year. For the classification of benign and ma…

Authors: Mohammad Tahmid Noor, B. M. Shahria Alam, Tasmiah Rahman Orpa

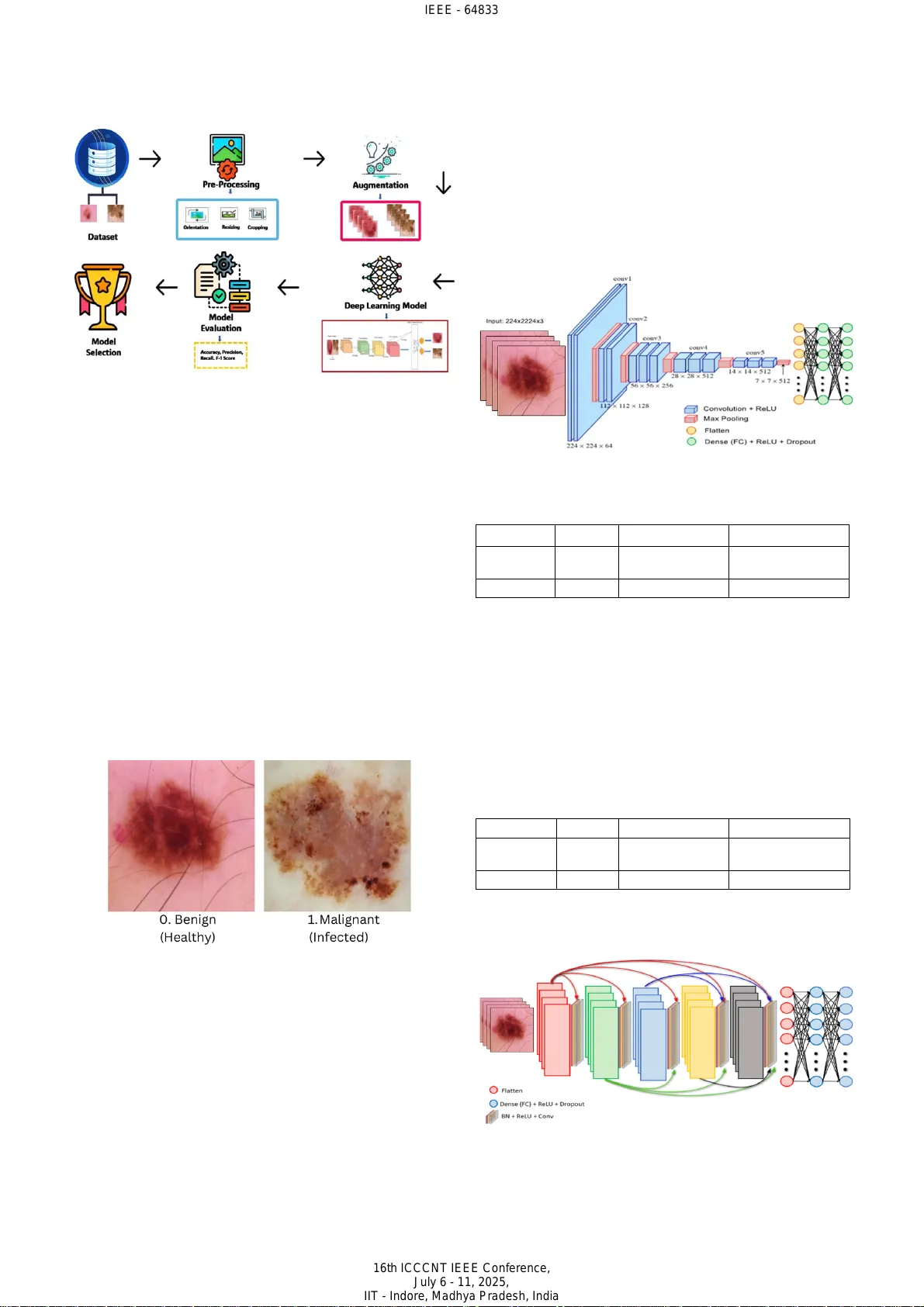

Mohammad Tahmid Noor (tahmidnoor770@gmail.com), Shaila Afroz Anika (anikaafroz2002@gmail.com), B. M. Shahria Alam (bmshahria@gmail.com), Mahjabin Tasnim Samiha (tasnimsamiha864@gmail.com), Tasmiah Rahman Orpa (tasmiahorpa376@gmail.com), Fahad Ahammed (fahadahbd@gmail.com)

Department of Computer Science and Engineering, East West University, Dhaka, Bangladesh

Abstract: Skin cancer, a prevalent yet preventable disease, can be life-threatening if not diagnosed early. Globally, skin cancer is among the most prevalent cancers, and millions of people are diagnosed each year. This paper investigates two prominent deep learning models, VGG16 and DenseNet201, for classifying skin spots as benign or malignant, an area of critical importance in dermatological diagnostics. We evaluate these CNN architectures for their efficacy in differentiating benign from malignant skin lesions, leveraging advances in deep learning applied to skin cancer detection. Our objective is to assess model accuracy and computational efficiency, offering insights into how these models could assist in early detection, diagnosis, and streamlined workflows in dermatology. We applied the DenseNet201 and VGG16 models to a binary-class dataset containing 3297 images. The best result, an accuracy of 93.79%, was achieved by DenseNet201.
All images were resized to 224 × 224 by rescaling. Although both models provide excellent accuracy, there is still room for improvement. In future work, we intend to improve our results using new datasets.

Keywords: CNN, Skin, Cancer, Machine learning, Image classification, Grad-CAM, SHAP

I. INTRODUCTION

Skin cancer manifests in different types and is among the most concerning and common forms of cancer worldwide. Among the types of skin cancer, melanoma is the most aggressive due to its potential to spread rapidly. Prolonged exposure to ultraviolet radiation is mainly responsible for the condition, which leads to abnormal cell growth in the skin. Early detection is critical to substantially improving survival rates. The challenging part is distinguishing benign from malignant lesions, because of the visual variability and overlapping characteristics of these lesions.

Skin cancer arises from abnormal changes in skin cells that proliferate uncontrollably. These cancers form tumours that can be either malignant or benign. Basal cell carcinoma and squamous cell carcinoma are two other varieties of skin cancer that tend to grow slowly and rarely spread. However, skin cancer is a leading form of cancer worldwide and affects areas exposed to ultraviolet radiation, such as the face, arms, and legs. UV rays from the sun or from artificial sources such as tanning beds are mainly responsible for causing skin cancer. Damage to the DNA in skin cells leads to mutations and causes cells to grow uncontrollably. Excessive unprotected sun exposure, a weakened immune system, and a family history of skin cancer can also increase an individual's risk of skin cancer. Minimizing exposure to UV radiation is the main way to prevent skin cancer [1].
Wearing protective clothing, using broad-spectrum sunscreen with a high SPF, and seeking shade during intense sunlight and heat can help protect every individual. Regular dermatologist screenings are crucial for catching early signs of dermatological concerns. To significantly reduce the risk of abnormal skin growth, every individual should adopt these preventive measures, as recommended by public health campaigns.

In medical imaging, and especially in skin lesion classification, convolutional neural networks (CNNs), particularly VGG16 and DenseNet201, have demonstrated strong performance [2]. These models are designed to extract and analyze intricate patterns from high-resolution dermoscopic images. VGG16 and DenseNet201 are well known for their architectural depth and feature extraction capability. This quality allows them to process the complex texture, color, and characteristics that distinguish healthy skin.

In image classification, VGG16, a 16-layer deep CNN, set a high standard due to its simplicity and high performance [3]. Its architecture consists of sequential convolutional layers with a uniform kernel size, which allows it to maintain computational efficiency and capture essential visual features. VGG16 is particularly strong in hierarchical feature extraction, learning low-level edges and textures in its early layers. Its structure enables it to discern the subtle textural differences between benign and malignant lesions by focusing on details within the image.

DenseNet201 represents a more advanced CNN architecture that emphasizes extensive interconnections between layers. In traditional models, each layer passes information only to the next, whereas DenseNet201 connects each layer to every subsequent layer within a dense block.
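To make the dense connectivity pattern concrete, the following minimal sketch counts the direct connections in a dense block and tracks how the number of feature channels grows as each layer appends its output to everything before it. The block sizes, growth rate, initial channel count, and compression factor are taken from the published DenseNet-201 configuration, not from this paper, and the function names are hypothetical.

```python
def dense_block_channels(in_channels, num_layers, growth_rate):
    """Channels leaving a dense block: each layer appends growth_rate feature maps."""
    return in_channels + num_layers * growth_rate


def direct_connections(num_layers):
    """Direct connections when every layer feeds all later layers: L(L+1)/2."""
    return num_layers * (num_layers + 1) // 2


def densenet_feature_channels(block_layers, init_channels=64,
                              growth_rate=32, compression=0.5):
    """Channel count after the last dense block, with a transition between blocks."""
    channels = init_channels
    for index, num_layers in enumerate(block_layers):
        channels = dense_block_channels(channels, num_layers, growth_rate)
        # A transition layer compresses channels after every block except the last.
        if index < len(block_layers) - 1:
            channels = int(channels * compression)
    return channels


# Assumed DenseNet-201 layout: four dense blocks with 6, 12, 48, and 32 layers.
DENSENET201_BLOCKS = (6, 12, 48, 32)

print(direct_connections(6))                          # 21
print(densenet_feature_channels(DENSENET201_BLOCKS))  # 1920
```

A plain chain of 6 layers would have 6 connections, against 21 in the densely connected block. Each layer only adds 32 new feature maps, which is how the design keeps the parameter count moderate despite the extra connections.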
Dense connectivity promotes feature reuse and reduces the number of parameters required. DenseNet201 appears to capture complex, nuanced patterns within dermoscopic images, enhancing its ability to distinguish between different skin irregularities. The model can analyze intricate structures within malignant spots, including varied pigmentation and asymmetric shapes. Another paper combined U-Net with an improved MobileNet-V3 architecture, enhanced through hyperparameter optimization, to diagnose cancer more precisely [4]. The development and application of these models highlight the transformative potential of AI in healthcare. They promise early detection and meticulous diagnosis of skin cancer, which could become routine and improve patient outcomes globally.

IEEE-64833 16th ICCCNT IEEE Conference, July 6-11, 2025, IIT-Indore, Madhya Pradesh, India

In skin cancer diagnosis, both VGG16 and DenseNet201 have become formidable automated tools, offering distinct advantages that cater to different clinical needs. The VGG16 architecture is simple and has moderate resource demands, which makes it well suited to fast, scalable applications where speed and efficiency are paramount. DenseNet201, on the other hand, provides deeper feature insights, which is particularly effective in cases requiring high sensitivity. By combining these models according to clinical requirements, healthcare providers can optimize diagnostic accuracy. The development and application of these models reinforce the transformative potential of AI in healthcare, promising a future where early detection and meticulous diagnosis of skin cancer are routine and improve outcomes on a global scale.
By leveraging a larger and more diverse dataset, our approach has the potential to attain higher diagnostic accuracy, improving its robustness, generalizability, and reliability in recognizing intricate and subtle patterns across a broad spectrum of skin cancer cases. This advancement underscores the capacity of our method to transform diagnostic practices, paving the way for more rigorous and versatile approaches to the detection and management of skin cancer.

II. RELATED WORKS

To place our proposed work in context, we review several related studies. Hasan et al. take an inventive approach to skin cancer diagnosis by leveraging convolutional neural networks to automate the detection process [5]. Their study introduces a CNN architecture that processes dermoscopic images to classify skin lesions as benign or malignant. The model achieves an accuracy of 89.5% by segmenting images and extracting defining features. The paper emphasizes the efficiency and precision of CNNs in medical imaging and proposes an alternative pathway to expedite diagnosis and reduce diagnostic errors in skin cancer detection.

Battle et al. used Siamese Neural Networks (SNNs) to classify skin lesions and detect novel classes of skin cancer using clinical and dermoscopic images [6]. Their approach achieved a classification accuracy of 74.33% on clinical images and 85.61% on dermoscopic images. This accuracy demonstrates the model's ability to distinguish between highly similar lesion classes. The study showcases the potential of SNNs to address the intricacy inherent in dermatological diagnosis, particularly when novel or rare lesion categories emerge.
By learning discriminative features between image pairs, this model provides a robust framework and identifies skin cancer types with greater precision.

Krohling et al. train a convolutional deep learning model on images combined with lesion-specific data and patient demographics [7]. Their study achieved a balanced accuracy of 85%, and the model was integrated into a smartphone-based application aimed at making skin cancer screening more accessible and convenient. This integration of deep learning into a mobile platform highlights its potential to enhance the reach and effectiveness of dermatological assessments. By leveraging both image data and patient context, the CNN-based approach delivers reliable and sensitive classification. This application demonstrates the power of combining deep learning and mobile technology to democratize healthcare and foster early detection.

Shaikh et al. emphasize the role of CNNs in transforming diagnostic practices and explore the potential of AI-driven solutions for skin cancer detection [8]. Their study presents a CNN-based approach that automates lesion analysis and reduces reliance on manual examination. By segmenting dermoscopic images and isolating key features, the model effectively differentiates between benign and malignant skin lesions. Using the CNN architecture, they obtained an accuracy of 91%, which demonstrates the capacity of CNNs to provide reliable and timely diagnoses.

Ganthya's work presents a novel CNN model designed to advance skin cancer detection through enhanced image classification techniques [9]. The model achieves an accuracy of 91% by leveraging advanced data preprocessing methods, including data augmentation. The work emphasizes reducing diagnostic errors and presents the model as a promising tool to assist doctors in the prompt recognition and diagnosis of skin conditions.

III. METHODOLOGY

This section provides a detailed description of our proposed work. We applied deep learning models to our preprocessed dataset. For VGG16, we used a learning rate of 0.0002 with the Adam optimizer for efficient convergence. The batch size was set to 20 to balance memory use and stable gradient updates. We trained the model for 108 epochs, with early stopping to prevent overfitting. For DenseNet201, we used 80 epochs, a patience of 15, a learning rate of 0.0001, and a batch size of 20. Data augmentation methods such as rotation, flipping, and contrast adjustment helped improve generalization. We also fine-tuned the hyperparameters and introduced dropout layers to enhance model robustness.

We trained the deep CNN model using transfer learning to extract deep features, which we use to recognize patterns. The deep CNN represents an object through its shape, color, pattern, and appearance. The model consists of several layers: the input image is passed to the first layer, and the convolutional layers compute the dot product of the weights with their inputs. In the ReLU layer, inactive neurons are zeroed out, and pooling then reduces the feature maps. Softmax is used to classify the computed features. We also used VGG16, a pre-trained deep CNN model, to extract features. Fig. 1 shows the proposed methodology.

Fig. 1. Methodology

A. Dataset

We used the proposed models for disease detection. For this research, we use a dataset with two classes, benign (healthy) and malignant (infected) skin spots, containing 1800 and 1497 images, respectively [10]. We collected the dataset from online sources. The class distribution is somewhat imbalanced, with fewer malignant cases than benign.
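The size of this imbalance can be quantified directly from the reported class counts. The sketch below is illustrative only: it computes the usual "balanced" class weights and the number of extra malignant samples that simple oversampling would need to reach parity. The helper names are hypothetical, and the paper does not state which weighting or oversampling scheme was actually used.

```python
def balanced_class_weights(counts):
    """Weight each class by total / (num_classes * class_count)."""
    total = sum(counts.values())
    return {label: total / (len(counts) * n) for label, n in counts.items()}


def oversampling_deficit(counts):
    """Extra samples each class needs to match the largest class."""
    largest = max(counts.values())
    return {label: largest - n for label, n in counts.items()}


# Class counts as reported for the dataset.
counts = {"benign": 1800, "malignant": 1497}

weights = balanced_class_weights(counts)
deficit = oversampling_deficit(counts)

print({label: round(w, 3) for label, w in weights.items()})
print(deficit)  # {'benign': 0, 'malignant': 303}
```

With these counts the malignant class receives a weight slightly above 1 and the benign class slightly below 1, so either weighting the loss or adding about 300 malignant samples would offset the imbalance.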
We applied preprocessing steps, including resizing, normalization, data augmentation, and noise reduction, to enhance model performance. We faced challenges including dataset imbalance and varying image quality, which we addressed using oversampling, augmentation, and filtering of low-quality images. The proposed methods were run on a 15-inch MacBook Air M2. Fig. 2 shows sample inputs from the dataset. This balanced and comprehensive dataset forms the foundation of our analysis, enabling the model to consistently learn subtle distinctions between healthy and cancerous skin conditions and thereby produce fast and accurate predictions.

Fig. 2. Sample inputs of the skin cancer dataset

Image 0 shows a benign skin spot. Benign skin spots are generally harmless and noncancerous. They are typically consistent in color and shape, with a slightly raised texture. Image 1 shows a malignant skin spot, which is potentially cancerous. Such lesions show asymmetry and irregular borders, and may also itch or bleed. These discernible patterns strengthen the dataset's ability to convey critical diagnostic indications, providing a comprehensive foundation for training a model.

B. VGG-16

VGG16 has performed impressively in image classification. It is a convolutional neural network pre-trained on a massive dataset. After extracting features with the pre-trained architecture, we fine-tune the entire model. VGG16 increases network depth by stacking more convolutional layers while using very small convolutional filters in all layers. The hyperparameters for VGG16 are shown in Table I, and Fig. 3 shows the structure of the VGG16 architecture.

Fig. 3. Structure of the VGG16 model

TABLE I. HYPERPARAMETER TUNING FOR VGG16

| Hyperparameter | Value |
|---|---|
| Batch size | 20 |
| Loss function | binary_crossentropy |
| Learning rate | 0.0002 |
| Number of epochs | 108 |
| Optimizer | Adam |
| Patience | 20 |

C. DenseNet201

DenseNet201, a convolutional neural network, establishes dense connections between layers. The model comprises convolutional layers, pooling layers, and fully connected layers. Its bottleneck and transition layers improve computational efficiency. This model is a powerful tool for detecting skin cancer. The hyperparameters for DenseNet201 are shown in Table II.

TABLE II. HYPERPARAMETER TUNING FOR DENSENET201

| Hyperparameter | Value |
|---|---|
| Batch size | 20 |
| Loss function | binary_crossentropy |
| Learning rate | 0.0001 |
| Number of epochs | 62 |
| Optimizer | Adam |
| Patience | 15 |

An advanced deep learning model like DenseNet201 promises to enhance crop health and productivity in agricultural sectors. Fig. 4 shows the architecture of DenseNet201.

Fig. 4. Structure of the DenseNet201 model

IV. EXPERIMENTAL RESULT AND ANALYSIS

A. VGG-16

The VGG16 model achieved an accuracy of 87.49%. Training ran for 108 epochs and took 330 seconds per step. Fig. 5 shows the training curves over the 108 epochs, and Table III shows the class-wise results for VGG16.

Fig. 5. VGG16 model: quality metrics evaluation during training: (a) accuracy (b) loss

TABLE III. CLASSIFICATION WISE ACCURACY TABLE FOR VGG16

| Classes | Precision | Recall | F1-score | Support | Accuracy |
|---|---|---|---|---|---|
| Benign | 0.95 | 0.78 | 0.86 | 180 | 0.86 |
| Malignant | 0.78 | 0.95 | 0.86 | 149 | |

Fig. 6 shows the confusion matrix for the VGG16 model in classifying benign and malignant skin spots. The model correctly classified 140 benign and 142 malignant cases, which indicates high accuracy. However, 40 benign cases were classified as malignant, which can lead to unnecessary tests, and 7 malignant cases were misclassified as benign.
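The class-wise figures in Table III can be reproduced from these four confusion-matrix counts. The following short sketch, with hypothetical variable and function names, recomputes precision, recall, F1-score, and overall accuracy for VGG16 from the counts stated above.

```python
def class_metrics(correct, missed, false_alarms):
    """Precision, recall, and F1 for one class from raw confusion-matrix counts."""
    precision = correct / (correct + false_alarms)
    recall = correct / (correct + missed)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# VGG16 confusion-matrix counts as reported in the text.
benign_ok, benign_as_malignant = 140, 40
malignant_ok, malignant_as_benign = 142, 7

# For each class, the other class's misclassifications are its false alarms.
benign = class_metrics(benign_ok, benign_as_malignant, malignant_as_benign)
malignant = class_metrics(malignant_ok, malignant_as_benign, benign_as_malignant)

total = benign_ok + benign_as_malignant + malignant_ok + malignant_as_benign
accuracy = (benign_ok + malignant_ok) / total

print("Benign   ", [round(v, 2) for v in benign])     # [0.95, 0.78, 0.86]
print("Malignant", [round(v, 2) for v in malignant])  # [0.78, 0.95, 0.86]
print("Accuracy ", round(accuracy, 2))                # 0.86
```

The benign recall of 0.78 is the model's specificity when malignant is treated as the positive class, which is why the 40 false positives dominate the error profile.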
Overall, the model is effective but could benefit from reducing false positives and improving specificity.

Fig. 6. Confusion matrix of VGG16 model

B. DenseNet201

The DenseNet201 model achieved a training accuracy of 93.79% after training for 80 epochs, at 374 seconds per step. The training curves are displayed in Fig. 7, and the class-wise results are shown in Table IV.

Fig. 7. DenseNet201 model: quality metrics evaluation during training: (a) accuracy (b) loss

TABLE IV. CLASSIFICATION WISE ACCURACY TABLE FOR DENSENET201

| Classes | Precision | Recall | F1-score | Support | Accuracy |
|---|---|---|---|---|---|
| Benign | 0.98 | 0.89 | 0.94 | 180 | 0.93 |
| Malignant | 0.88 | 0.98 | 0.93 | 149 | |

The confusion matrix in Fig. 8 demonstrates improved classification, with 161 benign and 146 malignant cases correctly identified. Only 19 benign cases were misclassified as malignant, and the false negative count (malignant classified as benign) is very low, at only 3. The model thus improves on both sensitivity and specificity, showing that it is more accurate in identifying both benign and malignant cases.

Fig. 8. Confusion matrix of DenseNet201 architecture

C. SHAP

Fig. 9 shows skin cancer identification using DenseNet201. SHAP assigns a value to each feature and explains how much each contributes to the prediction. A related technique used here is Grad-CAM. The figure shows a Grad-CAM output in which the heatmap highlights the most influential regions that contributed to the deep learning model's prediction. The heatmap highlights the spot on the skin that belongs to the benign class, confirming that the model identifies these areas as important features.
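The paper does not describe how its Grad-CAM maps were computed. As a rough illustration, the standard Grad-CAM computation averages the gradients of the class score over each feature map to obtain channel weights, takes the weighted sum of the feature maps, and applies a ReLU. The pure-Python sketch below uses toy 2 × 2 inputs and a hypothetical function name; a real pipeline would obtain the activations and gradients from the last convolutional layer of the trained network.

```python
def grad_cam(activations, gradients):
    """Grad-CAM heatmap from K feature maps and their gradients (K x H x W lists)."""
    height, width = len(activations[0]), len(activations[0][0])
    # Channel weights: global average of each gradient map.
    weights = [sum(map(sum, grad)) / (height * width) for grad in gradients]
    # Weighted sum over channels, followed by ReLU.
    cam = [[max(0.0, sum(w * act[i][j] for w, act in zip(weights, activations)))
            for j in range(width)]
           for i in range(height)]
    peak = max(max(row) for row in cam)
    # Scale to [0, 1] unless the map is all zeros.
    return [[v / peak for v in row] for row in cam] if peak > 0 else cam


# Toy inputs (assumed values): channel 0 supports the class, channel 1 opposes it.
activations = [[[1.0, 0.0], [0.0, 2.0]],
               [[0.0, 3.0], [1.0, 0.0]]]
gradients = [[[1.0, 1.0], [1.0, 1.0]],
             [[-1.0, -1.0], [-1.0, -1.0]]]

heatmap = grad_cam(activations, gradients)
print(heatmap)  # [[0.5, 0.0], [0.0, 1.0]]
```

Values near 1 correspond to the warm regions of the rendered heatmap. Locations where the opposing channel dominates are clipped to 0 by the ReLU.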
In the heatmap, warm red areas indicate regions of greater significance. This indicates that the model makes decisions based on relevant visual cues.

Fig. 9. Skin Cancer Identification using SHAP

V. COMPARISON

Training took 9 hours 23 minutes for VGG16 and 8 hours 31 minutes for DenseNet201. The training and test accuracies are compared in Table V. The two models achieved accuracies of 87.49% and 93.79%, respectively, and were trained for different numbers of epochs. Despite its 93.79% accuracy, DenseNet201 struggles with ambiguous lesions where the features of the two classes overlap, leading to misclassification. Generalization to real-world cases is limited by the dataset's limited diversity. We aim to use more advanced augmentation techniques to improve diagnostic accuracy.

TABLE V. ACCURACY COMPARISON BETWEEN THE TWO MODELS

| Algorithm | VGG16 | DenseNet201 |
|---|---|---|
| Training accuracy (%) | 87.49 | 93.79 |
| Test accuracy (%) | 86.93 | 93.31 |

To show how our method compares with others, we gathered results from previous works in Table VI, which compares various skin cancer classification methods in terms of accuracy. Using convolutional neural networks (CNNs), Hasan et al. achieved 89.50% accuracy [5]. Wu attained 83.10% accuracy with a ResNet-50 model [11], and Houssein's deep CNN approach achieved 87.90% [12], also under 90%. Battle et al. used SNNs to achieve 85.61% [6]. Krohling et al. achieved an accuracy of 85% using a CNN architecture [7]. In comparison, our VGG16 model achieves 87.49% accuracy and DenseNet201 achieves 93.79%, the highest accuracy among the methods compared. The F1 score ensures balanced performance across the two classes.
The AUC-ROC measures the model's ability to distinguish between benign and malignant cases. By analyzing precision and recall, we aim to minimize false negatives in malignant detection. Our DenseNet201 model achieved higher accuracy than the other methods compared, underscoring its efficacy.

TABLE VI. COMPARISON OF THE PROPOSED MODEL WITH PREVIOUS WORKS

| Author | Method | Accuracy (%) |
|---|---|---|
| Hasan et al. [5] | Convolutional neural networks | 89.50 |
| Battle et al. [6] | Siamese Neural Networks | 85.61 |
| Wu [11] | ResNet-50 | 83.10 |
| H. Houssein [12] | Deep Convolutional Neural Network | 87.90 |
| Krohling et al. [7] | CNN | 85.00 |
| Proposed model | CNN (VGG16) | 87.49 |
| Proposed model | CNN (DenseNet201) | 93.79 |

VI. CONCLUSION

Skin cancer ranks among the most widespread and rapidly growing cancers worldwide, posing a significant health challenge, especially given its increasing incidence driven by factors such as persistent UV exposure. Early and accurate diagnosis is crucial, particularly for malignant varieties like melanoma, where timely intervention can greatly improve patient outcomes. In this article, we explored the effectiveness of deep learning algorithms, specifically VGG16 and DenseNet201, in classifying skin abnormalities as either malignant or benign. The models were trained and assessed on a dataset to evaluate their efficacy in accurately identifying skin cancer, a fundamental application within dermatology and oncology. VGG16 achieved an accuracy of 87.49%, while DenseNet201 performed best with an accuracy of 93.79%, highlighting its potential for enhanced diagnostic accuracy in clinical practice. The method's high accuracy after 80 epochs supports its role as a scalable and viable solution for prompt detection.
By combining DenseNet201's dense connectivity with VGG16's proven efficiency, this method offers a strong reference point for accessible and comprehensive cancer diagnostics. In the future, we hope to use vision transformers for improved feature extraction and to integrate explainable AI for better interpretability. Thus, the proposed approach is not merely a technical achievement but a step toward democratizing access to accurate skin cancer diagnostics globally. Finally, our study sheds light on the critical role of convolutional neural networks in dermatological diagnostic assessments. These insights reinforce the transformative potential of deep learning in medical diagnostics, offering a pathway toward more precise, accessible, and life-saving tools for skin cancer detection.

REFERENCES

[1] Khan, M. A., Hussain, A., Rehman, Z. U., Fayyaz, M., Khan, M. S., & Ali, A. (2019). Classification of Melanoma and Nevus in Digital Images for Diagnosis of Skin Cancer. Informatics in Medicine Unlocked, 16, 100239. https://doi.org/10.1016/j.imu.2019.100239

[2] Kumar, A., Kumar, M., Bhardwaj, V.P. et al. A novel skin cancer detection model using modified finch deep CNN classifier model. Sci Rep 14, 11235 (2024). https://doi.org/10.1038/s41598-024-60954-2

[3] Jaber, N.J.F., Akbas, A. Melanoma skin cancer detection based on deep learning methods and binary Harris Hawk optimization. Multimed Tools Appl (2024). https://doi.org/10.1007/s11042-024-19864-8

[4] Kumar Lilhore, U., Simaiya, S., Sharma, Y.K. et al. A precise model for skin cancer diagnosis using hybrid U-Net and improved MobileNet-V3 with hyperparameters optimization. Sci Rep 14, 4299 (2024). https://doi.org/10.1038/s41598-024-54212-8

[5] Mahamudul Hasan, Surajit Das Barman, Samia Islam, and Ahmed Wasif Reza. 2019. Skin Cancer Detection Using Convolutional Neural Network. In Proceedings of the 2019 5th International Conference on Computing and Artificial Intelligence (ICCAI '19). Association for Computing Machinery, New York, NY, USA, 254-258. https://doi.org/10.1145/3330482.3330525

[6] Battle, M. L., Wilson, P., Mendez, A., & Roberts, A. (2022). Siamese Neural Networks for Skin Cancer Classification and New Class Detection using Clinical and Dermoscopic Image Datasets. arXiv preprint arXiv:2212.06130. Retrieved from https://arxiv.org/abs/2212.06130

[7] Krohling, B., Luz, E., Lima, G., & Jung, C. (2021). A Smartphone-based Application for Skin Cancer Classification Using Deep Learning with Clinical Images and Lesion Information. arXiv preprint arXiv:2104.14353. Retrieved from https://arxiv.org/abs/2104.14353

[8] Shaikh, J., Khan, R., Ingle, Y., & Shaikh, N. (2022). Skin cancer detection: A review using AI techniques. International Journal of Health Sciences, 6(S2), 14339-14346. https://doi.org/10.53730/ijhs.v6nS2.8761

[9] Manoj Ganthya. Convolutional Neural Networks in Dermatology: Skin Cancer Detection and Analysis, 27 August 2024, PREPRINT (Version 1) available at Research Square [https://doi.org/10.21203/rs.3.rs-4833522/v1]

[10] Mandal, R. (n.d.). Skin Cancer ISIC Images Dataset. Retrieved from Kaggle: https://www.kaggle.com/datasets/rm1000/skin-cancer-isic-images/data

[11] Wu, Y., Liu, J., Ma, L., Zhao, Y., & Yu, W. (2022). Skin Cancer Classification With Deep Learning: A Systematic Review. Frontiers in Oncology. https://doi.org/10.3389/fonc.2022.893972

[12] Houssein, E.H., Abdelkareem, D.A., Hu, G. et al. An effective multiclass skin cancer classification approach based on deep convolutional neural network. Cluster Comput 27, 12799-12819 (2024). https://doi.org/10.1007/s10586-024-04540-1
