Learning Flow Distributions via Projection-Constrained Diffusion on Manifolds

We present a generative modeling framework for synthesizing physically feasible two-dimensional incompressible flows under arbitrary obstacle geometries and boundary conditions. Whereas existing diffusion-based flow generators either ignore physical …

Authors: Noah Trupin, Rahul Ghosh, Aadi Jangid

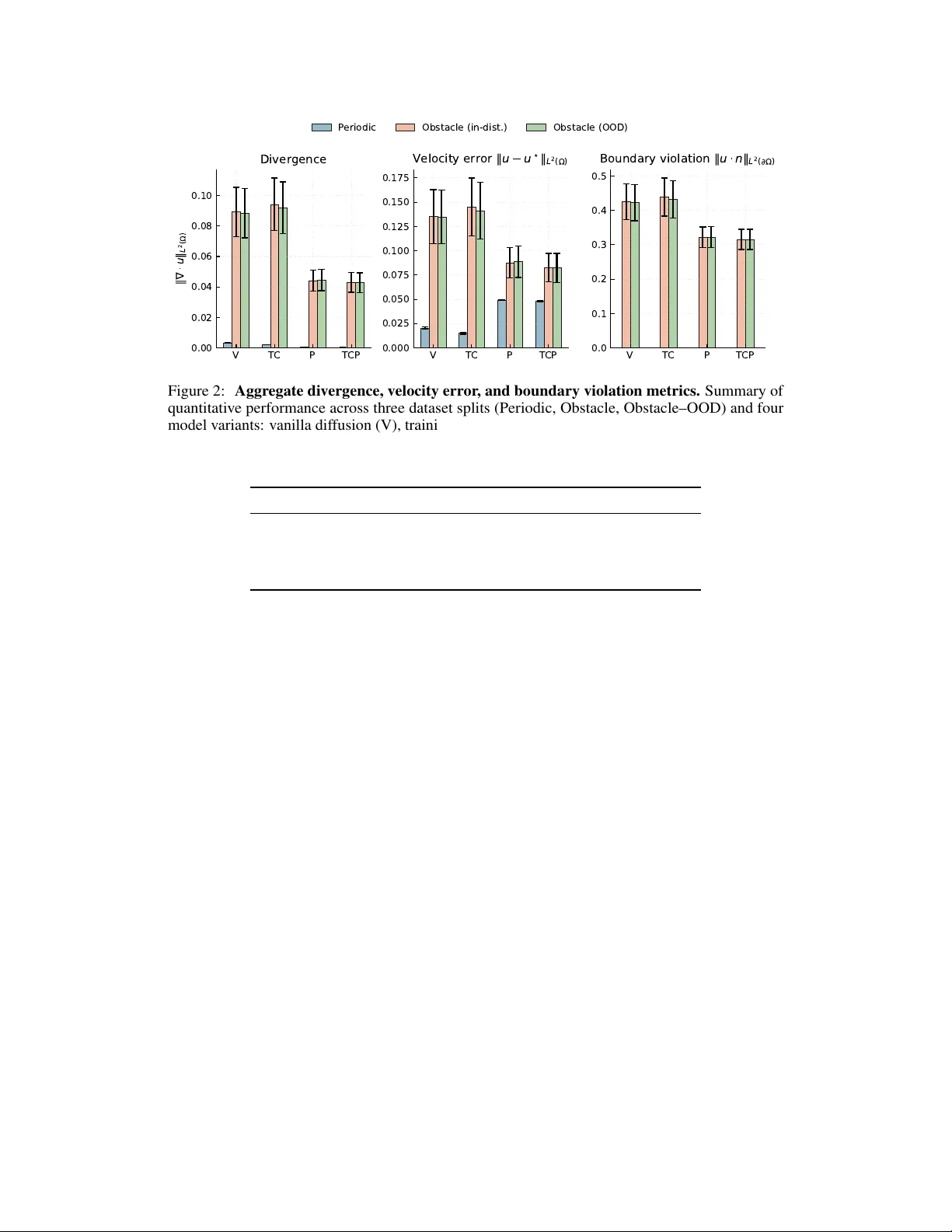

Noah Trupin, Rahul Ghosh, Aadi Jangid
Department of Computer Science, Purdue University
{ntrupin, ghosh126, ajangid}@purdue.edu

Abstract

We present a generative modeling framework for synthesizing physically feasible two-dimensional incompressible flows under arbitrary obstacle geometries and boundary conditions. Whereas existing diffusion-based flow generators either ignore physical constraints, impose soft penalties that do not guarantee feasibility, or specialize to fixed geometries, our approach integrates three complementary components: (1) a boundary-conditioned diffusion model operating on velocity fields; (2) a physics-informed training objective incorporating a divergence penalty; and (3) a projection-constrained reverse diffusion process that enforces exact incompressibility through a geometry-aware Helmholtz–Hodge operator. We derive the method as a discrete approximation to constrained Langevin sampling on the manifold of divergence-free vector fields, providing a connection between modern diffusion models and geometric constraint enforcement in incompressible flow spaces. Experiments on analytic Navier–Stokes data and obstacle-bounded flow configurations demonstrate significantly improved divergence, spectral accuracy, vorticity statistics, and boundary consistency relative to unconstrained, projection-only, and penalty-only baselines. Our formulation unifies soft and hard physical structure within diffusion models and provides a foundation for generative modeling of incompressible fields in robotics, graphics, and scientific computing.

1 Introduction

Diffusion models have enabled unprecedented progress in scientific generative modeling, including images, audio, molecular structure, spatiotemporal dynamics, surrogate PDE modeling, and high-dimensional dynamical forecasting [8, 19, 6, 20, 21, 7].
Yet generative modeling of incompressible vector fields remains limited. Velocity fields governed by the Navier–Stokes equations must satisfy the geometric constraint $\nabla \cdot u = 0$, along with geometry-dependent boundary conditions (periodic, no-slip, inflow and outflow, or mixed). Even small violations of incompressibility can cause numerical instabilities, unphysical transport, and incompatibility with downstream tasks in robotics, planning, or simulation-based inference. Existing approaches face three limitations:

1. Image-based diffusion treats velocity fields as RGB images, ignoring divergence constraints;
2. Penalty-based physics-informed diffusion [3, 18] reduces divergence on average but cannot enforce feasibility at sample time;
3. Geometry-specialized architectures cannot generalize across obstacle configurations or boundary conditions.

At the same time, emerging robotics methods rely on global vector fields and potential-free flows for planning [16], motivating a general model that respects both local geometry and global physical laws.

Our approach. We propose a projected, boundary-conditioned diffusion model for incompressible flows. The method integrates:

• A geometry-aware score network that conditions directly on obstacle masks and boundary embeddings;
• A physics-informed training objective with a divergence penalty applied to the network's denoised velocity estimate;
• A projection-constrained reverse diffusion step, enforcing exact incompressibility and geometry-consistent boundary conditions at every sampling iteration.

The projection operator implements a discrete Helmholtz–Hodge decomposition [4], using a periodic FFT-based solver in obstacle-free settings and a masked Poisson solver for arbitrary geometries. This transforms the reverse diffusion chain into a constrained sampler operating on the manifold of incompressible flows.

Our main contributions are:

1. Boundary-conditioned diffusion for vector fields. We introduce a DDPM architecture conditioned jointly on spatial geometry masks and boundary-regime embeddings, enabling generation across obstacle configurations unseen during training.
2. Hybrid physical constraints: soft during training, hard during sampling. A divergence penalty shapes the score model's denoised predictions, while a geometry-aware Helmholtz–Hodge projection applied at every reverse step ensures exactly divergence-free samples.
3. A theoretical derivation of projected diffusion as manifold-constrained sampling. We show that the projected reverse chain approximates constrained Langevin dynamics on the incompressible manifold, linking diffusion with classical geometric PDE operators.
4. Conditioning across geometries and regimes. The separation of stochastic velocity generation from deterministic boundary conditioning allows the model to synthesize physically consistent flows under previously unseen obstacle layouts and boundary condition types.
5. Empirical validation on periodic and obstacle-bounded Navier–Stokes data. The proposed method achieves lower divergence, improved vortex and spectral statistics, and better boundary consistency than unconstrained or physics-only baselines.

Together, these advances position projected diffusion as a principled and practical tool for generative incompressible flow modeling.

2 Related Works

Physics-informed diffusion and generative PDE surrogates. Several works augment diffusion models with soft physical constraints. Physics-Informed Diffusion Models (PIDMs) introduce PDE-residual terms into the diffusion loss to enforce governing equations during training and demonstrate large reductions in residual error on flow and topology optimization problems, while maintaining standard sampling procedures [2].
Closely related physics-informed surrogates for PDEs, such as neural PDE solvers with message passing or operator-learning architectures, similarly rely on penalizing residuals or embedding inductive biases rather than guaranteeing hard constraint satisfaction at sample time [5]. Our formulation also employs a divergence penalty in the training objective, but differs in two respects: (i) the loss is defined directly on the network's denoised velocity estimate on a discrete incompressible manifold, and (ii) soft penalties are coupled with a Helmholtz–Hodge projection at every reverse step, yielding exactly divergence-free samples rather than approximate constraint satisfaction.

Constraint-enforcing generative sampling. A second line of work enforces hard constraints during sampling. Constrained Synthesis with Projected Diffusion Models (PDMs) recasts each reverse diffusion step as a constrained optimization problem, projecting the sample onto a user-defined feasible set using generic solvers [5]. Physics-Constrained Flow Matching (PCFM) wraps pretrained flow-matching models with physics-based corrections that enforce nonlinear PDE constraints, such as conservation laws and boundary conditions, through continuous guidance of the flow during sampling [22].

At the MCMC level, projected and constrained Langevin algorithms have been analyzed as principled methods for sampling from distributions restricted to convex sets or manifolds by alternating unconstrained Langevin updates with projection or constrained dynamics [11, 1]. Our projected reverse diffusion can be viewed as a discrete analogue of projected Langevin dynamics on the manifold of incompressible flows: each reverse DDPM step approximates an unconstrained Langevin move in velocity space, and our discrete Helmholtz–Hodge operator realizes the projection onto the divergence-free, boundary-consistent manifold.
Unlike generic PDMs, the constraint set here is the solution space of the incompressible Navier–Stokes constraint with geometry-dependent boundary conditions, for which we design specialized projectors.

Diffusion models for incompressible and geometry-conditioned flows. Diffusion models have recently been applied directly to incompressible or near-incompressible flow generation. Hu et al. condition a latent diffusion model on obstacle geometry to predict flow fields around bluff bodies, and apply a post-hoc projection to reduce divergence; while they demonstrated improved accuracy, the generative process itself is not derived as sampling on a constrained manifold, and projection is decoupled from the diffusion dynamics [9]. Miyauchi et al. use a two-stage pipeline where user sketches are converted into target vorticity distributions and then refined via Helmholtz–Hodge decomposition to obtain visually plausible, approximately incompressible flows for graphics applications [15]. In contrast, our model (i) conditions explicitly on obstacle masks and boundary-regime embeddings, (ii) uses a geometry- and BC-aware projection operator at each reverse step, and (iii) provides a formal connection between projected reverse diffusion and constrained Langevin dynamics on the manifold.

Flow-field representations for robotics and physics-informed planning. In robotics, several works employ continuous fields governed by PDEs as planning substrates. NTFields represent solutions of the Eikonal equation as neural time fields and use them as cost functions for robot motion planning in cluttered environments, providing a physics-informed alternative to grid-based planning [16]. Follow-up work extends this paradigm to active exploration, mapping, and planning in unknown environments with physics-informed neural fields [12].
These methods highlight the utility of globally consistent fields for planning, but they focus on scalar arrival-time fields rather than vector-valued flows and rely on deterministic PDE solvers or PINN-style training rather than generative modeling. Our approach is complementary: by learning a boundary-conditional diffusion model over velocity fields and interpreting the reverse process as constrained sampling on the incompressible manifold, we provide a generative primitive for sampling physically feasible flow fields across geometries. This can serve as a building block for planning and control methods that require diverse, physically consistent flow distributions rather than single deterministic solutions.

3 Methods

Let

• $u_0 \in \mathbb{R}^{2 \times H \times W}$ denote a 2-D velocity field,
• $m \in \{0, 1\}^{1 \times H \times W}$ a binary obstacle mask identifying solid regions, and
• $c \in \mathbb{R}^{K}$ a boundary-condition or flow-regime embedding.

We seek to learn the conditional distribution $p_\theta(u_0 \mid m, c)$ subject to the physical constraint that $u_0$ be incompressible and consistent with the geometry encoded in $m$. We achieve this by integrating (1) boundary-conditioned diffusion over velocity fields, (2) a divergence-aware objective, and (3) projection-constrained reverse diffusion onto the manifold of feasible flows.

3.1 Forward Diffusion on Vector Fields

We adopt a standard DDPM forward process [8] applied solely to the velocity field. Geometry $m$ and regime embedding $c$ remain deterministic and uncorrupted throughout. For a noise schedule $\{\beta_t\}_{t=1}^{T}$ with $\bar{\alpha}_t = \prod_{s=1}^{t} (1 - \beta_s)$, the forward kernel is

$$q(u_t \mid u_0) = \mathcal{N}\big(\sqrt{\bar{\alpha}_t}\, u_0,\ (1 - \bar{\alpha}_t) I\big), \qquad u_t = \sqrt{\bar{\alpha}_t}\, u_0 + \sqrt{1 - \bar{\alpha}_t}\, \varepsilon, \quad \varepsilon \sim \mathcal{N}(0, I).$$

The independence structure

$$(u_t, m, c) \sim q(u_t \mid u_0) \times \delta(m) \times \delta(c)$$

separates the stochastic variable (velocity) from deterministic conditioning variables. This allows a single diffusion model to generalize across heterogeneous geometries.
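The forward kernel above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the linear schedule, its endpoint values, the grid size, and the function names `make_alpha_bar` and `forward_noise` are all assumptions introduced here.

```python
import numpy as np

def make_alpha_bar(betas):
    """Cumulative product alpha_bar_t = prod_{s<=t} (1 - beta_s)."""
    return np.cumprod(1.0 - betas)

def forward_noise(u0, t, alpha_bar, rng):
    """Sample u_t ~ q(u_t | u_0) for a velocity field u0 of shape (2, H, W).

    t is a 0-based index into alpha_bar. The mask m and the embedding c are
    deliberately not arguments: only the velocity field is noised.
    """
    eps = rng.standard_normal(u0.shape)
    a = alpha_bar[t]
    u_t = np.sqrt(a) * u0 + np.sqrt(1.0 - a) * eps
    return u_t, eps

# Hypothetical linear schedule; the values are illustrative, not from the paper.
T = 1000
betas = np.linspace(1e-4, 2e-2, T)
alpha_bar = make_alpha_bar(betas)

rng = np.random.default_rng(0)
u0 = rng.standard_normal((2, 16, 16))   # stand-in for a training velocity field
u_t, eps = forward_noise(u0, t=T - 1, alpha_bar=alpha_bar, rng=rng)
```

Because $m$ and $c$ never enter `forward_noise`, the same noising routine can be reused unchanged for every geometry, which is the separation the independence structure expresses.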
3.1.1 Geometry-aware training data

For obstacle-bounded domains, an analytic velocity field (e.g., a Taylor–Green vortex) is first restricted to fluid cells via no-slip enforcement and then projected using the masked Poisson operator (Sec. 3.4) to obtain an incompressible field consistent with the geometry. The forward diffusion is applied to this field while leaving $m$ and $c$ unchanged.

3.2 Boundary-Conditioned Score Network

The reverse kernel $p_\theta(u_{t-1} \mid u_t, m, c)$ is parameterized by a U-Net-based noise estimator [13] $\varepsilon_\theta(u_t, t, m, c)$. Conditioning enters through:

1. Local geometry: The mask $m$ is concatenated as a spatial input channel.
2. Global BC: The embedding $c$ is transformed via an MLP into a feature map broadcast across the grid.
3. Temporal structure: Timestep embeddings modulate each block.
4. Velocity-only prediction: The model outputs a 2-channel noise field; we do not diffuse or predict geometry.

The denoised estimate is

$$\hat{u}_0(u_t, t) = \frac{1}{\sqrt{\bar{\alpha}_t}}\, u_t - \sqrt{\frac{1 - \bar{\alpha}_t}{\bar{\alpha}_t}}\, \varepsilon_\theta(u_t, t, m, c).$$

This maintains the standard DDPM form while allowing geometry to affect the score field.

3.3 Physics-Informed Objective

We augment the DDPM loss with a divergence penalty:

$$\mathcal{L}(\theta) = \mathbb{E}_{u_0, t, \varepsilon}\Big[\underbrace{\lVert \varepsilon_\theta(u_t, t, m, c) - \varepsilon \rVert_2^2}_{\text{diffusion loss}} + \lambda_{\text{div}}\, \underbrace{\lVert D(\hat{u}_0(u_t, t)) \rVert_2^2}_{\text{divergence penalty}}\Big],$$

where $D(\cdot)$ is a discrete divergence operator applied only over fluid cells (i.e., where $m = 0$). This encourages, but does not enforce, membership in the manifold of divergence-free fields. Soft divergence constraints shape the score network so that the reverse diffusion trajectory remains close to the feasible region, improving stability of the subsequent projection step.

3.4 Projection-Constrained Reverse Diffusion

For a velocity field $v$, the Helmholtz–Hodge decomposition asserts that

$$v = u + \nabla \phi, \qquad u \in \mathcal{M}, \qquad D(u) = 0,$$

and that $u$ is unique given appropriate boundary conditions.
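The denoised estimate of Sec. 3.2 and the divergence-penalized objective of Sec. 3.3 can be sketched as follows. The paper does not specify the discrete divergence stencil or the loss normalization, so the periodic central differences, the use of means instead of sums, and all function names here are assumptions made for illustration; the noise prediction is passed in as an array rather than produced by a network.

```python
import numpy as np

def ddx(f):
    """Central difference along x (last axis), periodic wrap, unit spacing."""
    return (np.roll(f, -1, axis=-1) - np.roll(f, 1, axis=-1)) / 2.0

def ddy(f):
    """Central difference along y (second-to-last axis), periodic wrap."""
    return (np.roll(f, -1, axis=-2) - np.roll(f, 1, axis=-2)) / 2.0

def divergence(u):
    """Discrete divergence D(u) of a field u with shape (2, H, W)."""
    return ddx(u[0]) + ddy(u[1])

def denoise_estimate(u_t, eps_pred, alpha_bar_t):
    """u0_hat = u_t / sqrt(abar) - sqrt((1 - abar) / abar) * eps_pred."""
    scale = np.sqrt((1.0 - alpha_bar_t) / alpha_bar_t)
    return u_t / np.sqrt(alpha_bar_t) - scale * eps_pred

def physics_informed_loss(eps_pred, eps, u_t, alpha_bar_t, mask, lam_div):
    """Diffusion loss plus lam_div times the divergence penalty on fluid cells.

    Means are used instead of squared norms (an assumed normalization that
    rescales the effective lam_div). mask has shape (1, H, W); 0 marks fluid.
    """
    diffusion = np.mean((eps_pred - eps) ** 2)
    u0_hat = denoise_estimate(u_t, eps_pred, alpha_bar_t)
    fluid = mask[0] == 0
    penalty = np.mean(divergence(u0_hat)[fluid] ** 2)
    return diffusion + lam_div * penalty

# Demo: a field built from a stream function is divergence-free for this stencil.
H = W = 16
X, Y = np.meshgrid(2 * np.pi * np.arange(W) / W, 2 * np.pi * np.arange(H) / H)
psi = np.sin(X) * np.sin(Y)
u0 = np.stack([ddy(psi), -ddx(psi)])
mask = np.zeros((1, H, W))            # no obstacles in this toy case
rng = np.random.default_rng(0)
eps = rng.standard_normal(u0.shape)
abar = 0.5
u_t = np.sqrt(abar) * u0 + np.sqrt(1.0 - abar) * eps
perfect = physics_informed_loss(eps, eps, u_t, abar, mask, lam_div=1.0)
```

With a perfect noise prediction the estimate recovers `u0` and both loss terms vanish up to rounding; any error in `eps_pred` shows up in the diffusion term and, through `u0_hat`, generally in the divergence term as well.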
3.4.1 Periodic Domains

For periodic geometries, the potential $\phi$ solves

$$\hat{\phi}(k) = -\frac{i\, k \cdot \hat{v}(k)}{\lVert k \rVert^2}, \qquad \hat{u}(k) = \hat{v}(k) - i\, k\, \hat{\phi}(k) = \hat{v}(k) - \frac{k\, (k \cdot \hat{v}(k))}{\lVert k \rVert^2}, \qquad k \neq 0,$$

yielding a closed-form Fourier-space projection onto the divergence-free subspace.

3.4.2 Obstacle-Bounded Domains

Periodic projectors fail when $m$ contains solid regions. We therefore solve the Poisson equation

$$\Delta \phi = D(v) \quad \text{on fluid cells } (1 - m),$$

with Dirichlet no-slip inside solids ($u = 0$), Neumann interface conditions between fluid and solid, and Jacobi iterations to solve the discretized system. The projected velocity is

$$u = v - \nabla \phi,$$

restricted to fluid cells. In domains without solids, this reduces to the FFT-based projector. We denote the full geometric projection by

$$u = \Pi_{\mathcal{M}}(v), \qquad \mathcal{M} = \{u \in \mathbb{R}^{2 \times H \times W} : C(u) = 0\},$$

with $C(u)$ collecting linear PDE constraints (e.g., divergence). Under mild smoothness assumptions on $C$, $\mathcal{M}$ forms a differentiable submanifold whose tangent space is

$$T_u \mathcal{M} = \ker DC(u).$$

The projector $\Pi : u \mapsto \arg\min_{v \in \mathcal{M}} \lVert v - u \rVert^2$ is precisely the Helmholtz (periodic) or masked-Poisson (obstacle) projection we implement numerically.

3.4.3 Projected Reverse Step

Given the unconstrained DDPM update

$$\tilde{u}_{t-1} \sim p_\theta(u_{t-1} \mid u_t, m, c),$$

the physically consistent update is

$$u_{t-1} = \Pi_{\mathcal{M}}(\tilde{u}_{t-1}).$$

Applied at every timestep, this guarantees that all samples remain exactly divergence-free, irrespective of model imperfections.

3.5 Projected Reverse Diffusion as Constrained Sampling

Let the unconstrained reverse SDE for DDPMs be

$$\mathrm{d}u_t = f_t(u_t)\, \mathrm{d}t + g_t\, \mathrm{d}W_t.$$

The true reverse process restricted to the manifold $\mathcal{M}$ is

$$\mathrm{d}u_t = \big[f_t(u_t) - \nabla \log Z_t(u_t)\big]\, \mathrm{d}t + \Pi_{T_u \mathcal{M}} \circ \mathrm{d}W_t,$$

where $\Pi_{T_u \mathcal{M}}$ is the projection onto the tangent space of $\mathcal{M}$ and $Z_t$ normalizes the constrained density. This SDE is analytically intractable.
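The periodic projector of Sec. 3.4.1 can be sketched with NumPy's FFT routines. This is a sketch under stated assumptions, not the authors' solver: it uses spectral derivatives on a unit-spaced grid, zeroes the Nyquist wavenumber on even grids so the output stays real, and leaves the mean flow untouched. The names `project_periodic` and `spectral_divergence` are hypothetical.

```python
import numpy as np

def _wavenumbers(n):
    """Angular wavenumbers for n unit-spaced points; Nyquist mode dropped."""
    k = 2.0 * np.pi * np.fft.fftfreq(n)
    if n % 2 == 0:
        k[n // 2] = 0.0   # keeps the projector Hermitian-symmetric
    return k

def _grid(shape):
    """Wavenumber grids KX, KY of shape (H, W) for a field of shape (2, H, W)."""
    _, H, W = shape
    return np.meshgrid(_wavenumbers(W), _wavenumbers(H))

def spectral_divergence(u):
    """Divergence of u computed with spectral derivatives."""
    KX, KY = _grid(u.shape)
    div_hat = 1j * (KX * np.fft.fft2(u[0]) + KY * np.fft.fft2(u[1]))
    return np.fft.ifft2(div_hat).real

def project_periodic(v):
    """Apply u_hat = v_hat - k (k . v_hat) / |k|^2 mode by mode."""
    KX, KY = _grid(v.shape)
    k2 = KX**2 + KY**2
    k2[k2 == 0.0] = 1.0   # k = 0 modes carry no divergence and pass through
    vx_hat = np.fft.fft2(v[0])
    vy_hat = np.fft.fft2(v[1])
    k_dot_v = KX * vx_hat + KY * vy_hat
    ux_hat = vx_hat - KX * k_dot_v / k2
    uy_hat = vy_hat - KY * k_dot_v / k2
    return np.stack([np.fft.ifft2(ux_hat).real, np.fft.ifft2(uy_hat).real])

rng = np.random.default_rng(0)
v = rng.standard_normal((2, 16, 16))   # stand-in for an unconstrained update
u = project_periodic(v)
```

In a projected reverse step, `v` would be the unconstrained DDPM update $\tilde{u}_{t-1}$ and `u` the iterate passed to the next step. The result is divergence-free with respect to the spectral derivative used here; a finite-difference divergence operator would need a projector built from the matching stencil.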
3.5.1 Projected Langevin Approximation

Projected sampling corresponds to one step of an implicit constrained Langevin integrator:

$$u_{t+1} = u_t + \eta\, \nabla \log p(u_t) + \sqrt{2\eta}\, \xi_t, \quad \xi_t \sim \mathcal{N}(0, I), \qquad \text{followed by} \qquad u_{t+1} \leftarrow \Pi_{\mathcal{M}}(u_{t+1}),$$

with $\eta > 0$ a discrete Langevin step size and $p$ the target unconstrained density whose score the DDPM reverse process approximates. Our method realizes a diffusion-model analogue of this scheme, where each DDPM reverse step acts as an approximate Langevin update in the ambient space and the projection $\Pi_{\mathcal{M}}$ enforces feasibility. Thus the reverse chain

$$u_T \sim \mathcal{N}(0, I), \qquad \tilde{u}_{t-1} \sim p_\theta(u_{t-1} \mid u_t, m, c), \qquad u_{t-1} = \Pi_{\mathcal{M}}(\tilde{u}_{t-1})$$

approximates sampling from $p(u_0 \mid m, c)$ restricted to the incompressible manifold.

This theoretical link clarifies why training-time penalties alone cannot ensure correctness, and why projection alone can distort statistical structure. The combination approximates the geometry of the constrained score field.

[Figure 1 plot: "Periodic energy spectra"; x-axis: wavenumber $k$; y-axis: energy spectrum $E(k)$; curves: V, TCP]

Figure 1: Periodic energy spectra. Comparison of the learned velocity-field energy spectra $E(k)$ under the vanilla diffusion model (V) and the full physically constrained model (TCP). Both models recover physically plausible $k$-decay, while TCP exhibits slightly improved mid–high frequency attenuation, consistent with enforcing incompressibility and boundary correctness.

3.6 Soft Constraints, Projection, and Stability

The divergence penalty biases the learned score such that unconstrained updates already lie near $\mathcal{M}$. Projection therefore requires smaller corrections, reducing geometric distortion. Conversely, projection ensures that accumulated divergence errors do not amplify along the reverse trajectory.
The resulting chain is both numerically stable, even under model imperfections, and physically faithful, producing exactly divergence-free samples in any geometry $m$.

4 Experiments

We evaluate the four diffusion variants defined in App. B, vanilla (V), training-constrained (TC), projection-only (P), and fully constrained (TCP), on both periodic and obstacle-bounded Navier–Stokes datasets (App. A). All models share identical architecture and training hyperparameters, differing only in physical constraints. This controlled ablation isolates the effects of (i) soft divergence shaping during training and (ii) hard geometric projection during sampling.

The evaluation addresses three questions:

1. Physical correctness: Does the model produce divergence-free, boundary-consistent flows?
2. Distributional fidelity: Do generated fields preserve vortex structure, spectral decay, and smoothness of the underlying distribution?
3. Generalization: Does the approach transfer to obstacle configurations unseen during training?

Across all experiments, we report means over 2000 samples per split, with all metrics computed exclusively over fluid cells.

4.1 Evaluation Protocol

Given a generated field $u \in \mathbb{R}^{2 \times H \times W}$ and obstacle mask $m$, we measure:

Divergence.

$$\lVert D(u) \rVert_{L^2(\Omega_f)} = \Big(\sum_{x \in \Omega_f} D(u)(x)^2\Big)^{1/2},$$

quantifying incompressibility.

[Figure 2 plot: three bar-chart panels over the variants V, TC, P, TCP: divergence $\lVert \nabla \cdot u \rVert_{L^2(\Omega)}$, velocity error $\lVert u - u^\star \rVert_{L^2(\Omega)}$, and boundary violation $\lVert u \cdot n \rVert_{L^2(\partial\Omega)}$; legend: Periodic, Obstacle (in-dist.), Obstacle (OOD)]

Figure 2: Aggregate divergence, velocity error, and boundary violation metrics.
Summary of quantitative performance across three dataset splits (Periodic, Obstacle, Obstacle–OOD) and four model variants: vanilla diffusion (V), training-constrained diffusion (TC), projection-only (P), and the fully constrained method (TCP). TCP and P achieve the lowest divergence and boundary error, while TCP provides consistently strong generalization across obstacles.

| Method | $\lVert \nabla \cdot u \rVert_{L^2(\Omega)}$ ↓ | $\lVert u - u^\star \rVert_{L^2(\Omega)}$ ↓ | $\int_\Omega \lvert \nabla \cdot u \rvert \, dx$ ↓ |
|---|---|---|---|
| V | 0.0033 ± 1.8e-04 | 0.0206 ± 2.0e-03 | 0.0035 ± 1.9e-04 |
| TC | 0.0021 ± 1.1e-04 | 0.0151 ± 2.0e-03 | 0.0022 ± 1.2e-04 |
| P | 0.0005 ± 1.5e-05 | 0.0493 ± 7.9e-04 | 0.0005 ± 1.6e-05 |
| TCP | 0.0004 ± 1.9e-05 | 0.0480 ± 6.1e-04 | 0.0004 ± 2.1e-05 |

Table 1: Periodic incompressible flows. Mean $L^2$ divergence, velocity error, and integrated divergence over the periodic test set.

Boundary violation. $\lVert u \cdot n \rVert_{L^2(\partial\Omega)}$, using normals estimated from mask gradients; this measures the wall-normal component of the no-slip condition for obstacle domains.

Velocity error. $\lVert u - u^\star \rVert_{L^2(\Omega_f)}$, computed against the analytic Navier–Stokes field. Although the model is generative, the conditional distribution is sufficiently concentrated for this to act as a fidelity metric.

Spectral energy and vorticity. We compute the kinetic energy spectrum $E(k)$ and vorticity histograms to assess structural realism of turbulence-like features. Fig. 1 displays the periodic-domain energy spectra for V and TCP.

4.2 Periodic Flow

Tab. 1 reports divergence, integrated divergence, and $L^2$ velocity error for periodic samples.

4.2.1 Divergence Reduction and the Role of Projection

The unconstrained V model exhibits the largest divergence of the four variants. Adding a divergence penalty (TC) reduces this by roughly 36% (0.0033 to 0.0021), confirming that soft constraints help shape the score field in the ambient space. However, TC still accumulates divergence during sampling because the reverse chain does not remain on the constraint manifold.
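As a quick arithmetic check, the relative divergence reductions on the periodic split can be computed directly from the Table 1 means. The snippet below is only a worked calculation on the reported values; the helper name `relative_reduction` is introduced here for illustration.

```python
# Mean L2 divergence on the periodic split, copied from Table 1.
div_l2 = {"V": 0.0033, "TC": 0.0021, "P": 0.0005, "TCP": 0.0004}

def relative_reduction(baseline, value):
    """Fractional reduction of `value` relative to `baseline`."""
    return (baseline - value) / baseline

reductions = {
    name: relative_reduction(div_l2["V"], d)
    for name, d in div_l2.items()
    if name != "V"
}

for name, r in reductions.items():
    print(f"{name}: {100 * r:.0f}% lower divergence than V")
# TC: 36% lower divergence than V
# P: 85% lower divergence than V
# TCP: 88% lower divergence than V
```

The same calculation applies to the other columns and to the obstacle splits by swapping in the corresponding table values.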
Projection-based variants (P, TCP) reduce divergence by nearly an order of magnitude relative to V (0.0033 to 0.0004–0.0005). This directly validates the geometric argument in Sec. 3: the projection acts as an orthogonal map onto the manifold of incompressible fields, correcting deviations introduced by imperfect score estimates.

4.2.2 Fidelity and Spectral Structure

Interestingly, projection increases $L^2$ reconstruction error for P and TCP (0.0493 and 0.0480, versus 0.0206 for V). This is expected: the projector removes high-frequency divergence modes, and these corrections shift samples slightly off the unconstrained denoiser's optimum. Fig. 1 shows that TCP nonetheless yields more accurate mid–high-frequency spectral decay, consistent with smoother, physically plausible velocity fields.

4.3 Obstacle-Bounded Flows

Tab. 2 and Tab. 3 report results for in-distribution obstacle layouts and held-out obstacle layouts, respectively.

| Method | $\lVert \nabla \cdot u \rVert_{L^2(\Omega)}$ ↓ | $\lVert u - u^\star \rVert_{L^2(\Omega)}$ ↓ | $\int_\Omega \lvert \nabla \cdot u \rvert \, dx$ ↓ | $\lVert u \cdot n \rVert_{L^2(\partial\Omega)}$ ↓ |
|---|---|---|---|---|
| V | 0.0896 ± 2.99e-02 | 0.1366 ± 5.04e-02 | 0.0856 ± 3.02e-02 | 0.4245 ± 9.46e-02 |
| TC | 0.0929 ± 2.95e-02 | 0.1419 ± 5.16e-02 | 0.0884 ± 2.91e-02 | 0.4345 ± 9.65e-02 |
| P | 0.0445 ± 1.19e-02 | 0.0889 ± 2.66e-02 | 0.0365 ± 1.03e-02 | 0.3219 ± 5.17e-02 |
| TCP | 0.0432 ± 1.17e-02 | 0.0840 ± 2.68e-02 | 0.0352 ± 9.96e-03 | 0.3180 ± 5.10e-02 |

Table 2: Obstacle flows (in-distribution geometries). Mean divergence, velocity error, integrated divergence over the fluid region, and boundary violation $\lVert u \cdot n \rVert_{L^2(\partial\Omega)}$.

| Method | $\lVert \nabla \cdot u \rVert_{L^2(\Omega)}$ ↓ | $\lVert u - u^\star \rVert_{L^2(\Omega)}$ ↓ | $\int_\Omega \lvert \nabla \cdot u \rvert \, dx$ ↓ | $\lVert u \cdot n \rVert_{L^2(\partial\Omega)}$ ↓ |
|---|---|---|---|---|
| V | 0.0869 ± 2.68e-02 | 0.1312 ± 4.54e-02 | 0.0830 ± 2.74e-02 | 0.4190 ± 8.80e-02 |
| TC | 0.0939 ± 3.10e-02 | 0.1451 ± 5.37e-02 | 0.0897 ± 3.10e-02 | 0.4400 ± 9.82e-02 |
| P | 0.0445 ± 1.22e-02 | 0.0885 ± 2.78e-02 | 0.0366 ± 1.04e-02 | 0.3217 ± 5.35e-02 |
| TCP | 0.0423 ± 1.15e-02 | 0.0818 ± 2.62e-02 | 0.0347 ± 9.95e-03 | 0.3153 ± 5.07e-02 |

Table 3: Generalization to held-out obstacle geometries. Same metrics as Table 2. Models are evaluated on unseen obstacle masks.

4.3.1 Divergence and Boundary Conditions

Across both splits, projection (P, TCP) delivers the dominant gain, with divergence dropping by roughly a factor of 2 and boundary violation falling by approximately 25%. The masked Poisson projection guarantees that every reverse step yields a flow with zero normal velocity on $\partial\Omega$, thereby preventing the accumulation of boundary errors common in unconstrained diffusion.

The soft divergence penalty alone (TC) has negligible effect in obstacle domains (Table 2: divergence 0.0929 for TC versus 0.0896 for V). This further confirms that the geometry must be handled during sampling.

4.3.2 Reconstruction and Flow Structure

Both projection-based variants substantially improve $L^2$ velocity error (e.g., 0.0840 for TCP versus 0.1366 for V in Table 2). This reduction reflects two coupled phenomena: (1) projection prevents the reverse diffusion chain from drifting, and (2) soft penalties guide the score toward the tangent space of the target manifold, reducing the magnitude of each projection correction.

4.3.3 Unified Metric Comparison

Fig. 2 aggregates divergence, reconstruction error, and boundary violation across all splits and model variants. The pattern is immediately visible:

• Projection is essential (P, TCP outperform V, TC).
• Soft and hard constraints combine effectively (TCP yields the most stable performance across all metrics and domains).

This mirrors the previous theoretical decomposition: projection enforces global feasibility while divergence-aware training reduces local distortion.

4.4 Generalization to Unseen Geometries

Tab. 3 evaluates models on obstacle masks not present during training (shifted centers, different radii, elliptical shapes). The results parallel the in-distribution case, with improvements in divergence, boundary violation, and $L^2$ error from V to TCP.
This robust generalization is a key finding: the diffusion model learns a geometry-conditioned prior, while the projector handles exact enforcement, enabling transfer across obstacle layouts without architecture changes or re-training. This supports the claim from Sec. 1 that diffusion provides a reusable generative primitive for flow synthesis under heterogeneous geometries.

4.5 Spectral and Vorticity Analysis

Periodic energy spectra in Fig. 1 and vorticity statistics illustrate that non-projection variants preserve overall $k$-decay but introduce surplus high-frequency energy, indicating compressible or noisy reconstruction artifacts. Projection variants better match the target spectrum, with TCP providing the smoothest tail behavior. This aligns with the theoretical insight that projection removes spurious divergence-carrying modes generated during reverse diffusion.

Combined with the tables, these structural metrics provide strong evidence that projection reshapes the diffusion geometry in a way that stabilizes fine-scale flow behavior rather than just global divergence.

4.6 Summary of Empirical Findings

Across all datasets and metrics, we draw three conclusions:

• Training-time divergence penalties improve the score field but do not guarantee physical correctness. Soft constraints bias the network toward the tangent space but remain insufficient as a standalone mechanism.
• Sampling-time projection is necessary and overwhelmingly effective. Projection onto $\mathcal{M}$ stabilizes the reverse chain, enforces incompressibility and no-slip conditions, and dramatically reduces divergence and boundary violations.
• Combining soft and hard constraints (TCP) yields the most consistent and physically faithful flows. TCP balances fidelity and feasibility, achieving the best or near-best performance on every metric, and generalizing robustly to unseen obstacle geometries.

Together with the theoretical analysis of Sec. 3, these results support the interpretation of our method as a discrete approximation to constrained Langevin sampling on the incompressible manifold, with projection providing the essential geometric correction missing from unconstrained diffusion.

5 Discussion & Conclusion

This work demonstrates that geometric constraint enforcement is fundamental for generative modeling of incompressible flows. Across periodic and obstacle-bounded geometries, unconstrained diffusion models consistently drift away from the manifold of admissible solutions. Even when trained exclusively on divergence-free data, the reverse diffusion dynamics accumulate divergence, violate no-slip boundaries, and distort small-scale features. These failures reflect a structural limitation: the denoising network learns a score in the ambient velocity space rather than on the constraint manifold $\mathcal{M}$.

By contrast, models incorporating sampling-time projection maintain exact incompressibility at every step through a geometry-aware Helmholtz–Hodge operator. This modification alters the geometry of the diffusion chain itself. Instead of evolving in the unconstrained space, the sampler becomes a discrete analogue of constrained Langevin dynamics on $\mathcal{M}$. Theoretical analysis in Sec. 3 explains this behavior: projection ensures each iterate lies in the correct tangent space, preventing divergence accumulation and stabilizing the flow of probability mass.

Empirically, projection is the dominant factor in achieving physical correctness, yielding order-of-magnitude improvements in divergence and boundary consistency, while the training-time divergence penalty contributes a complementary benefit: it shapes the score so that projection requires smaller corrections and better preserves distributional fidelity. The full TCP model achieves the best performance across all metrics, including spectral accuracy, vorticity statistics, and generalization to unseen geometries.
These results highlight a division of labor that appears essential in PDE-constrained generative modeling: the score network learns a geometry-conditioned prior, while the projector guarantees physical feasibility.

More broadly, our findings indicate that diffusion models for scientific and robotics applications must incorporate physics-aware operators into the sampling algorithm, not just as losses or architectural features. Projection-based diffusion provides a principled mechanism for embedding PDE constraints directly into the generative process, and our results show it scales naturally to arbitrary obstacle layouts through masked Poisson solves.

Future extensions include time-dependent sampling for unsteady Navier–Stokes flows, 3D geometries and boundary conditions, incorporating learned or adaptive projection operators, and investigating fully continuous score-based SDEs. These directions aim toward a general methodology for manifold-constrained generative modeling, where the sampler evolves on a physically meaningful space rather than in an unconstrained Euclidean ambient domain. Our results show that such structure has both theoretical justification and practical necessity for high-fidelity generative flow modeling.

References

[1] Kwangjun Ahn and Sinho Chewi. Efficient constrained sampling via the mirror-Langevin algorithm. In A. Beygelzimer, Y. Dauphin, P. Liang, and J. Wortman Vaughan, editors, Advances in Neural Information Processing Systems, 2021. URL https://openreview.net/forum?id=vh7qBSDZW3G.

[2] Jan-Hendrik Bastek, WaiChing Sun, and Dennis M. Kochmann. Physics-informed diffusion models. In International Conference on Learning Representations, 2025. URL https://openreview.net/forum?id=tpYeermigp.

[3] Johannes Brandstetter, Daniel Worrall, and Max Welling. Message passing neural PDE solvers. arXiv preprint arXiv:2202.03376, 2022.

[4] Alexandre J. Chorin and Jerrold E. Marsden. A Mathematical Introduction to Fluid Mechanics, volume 4 of Texts in Applied Mathematics. Springer-Verlag, New York, 3rd edition, 1993. ISBN 978-0-387-97918-2. doi: 10.1007/978-1-4612-0883-9. URL https://doi.org/10.1007/978-1-4612-0883-9.

[5] Jacob K. Christopher, Stephen Baek, and Ferdinando Fioretto. Constrained synthesis with projected diffusion models. In Advances in Neural Information Processing Systems, 2024. URL https://arxiv.org/abs/2402.03559.

[6] Prafulla Dhariwal and Alexander Quinn Nichol. Diffusion models beat GANs on image synthesis. In A. Beygelzimer, Y. Dauphin, P. Liang, and J. Wortman Vaughan, editors, Advances in Neural Information Processing Systems, 2021. URL https://openreview.net/forum?id=AAWuCvzaVt.

[7] Jayesh K Gupta and Johannes Brandstetter. Towards multi-spatiotemporal-scale generalized PDE modeling. Transactions on Machine Learning Research, 2023. ISSN 2835-8856. URL https://openreview.net/forum?id=dPSTDbGtBY.

[8] Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. arXiv preprint arXiv:2006.11239, 2020.

[9] Jiajun Hu, Zhen Lu, and Yue Yang. Generative prediction of flow fields around an obstacle using the diffusion model. Physical Review Fluids, 2025. URL 2407.00735. To appear.

[10] Diederik P. Kingma and Jimmy Ba. Adam: A method for stochastic optimization, 2017. URL https://arxiv.org/abs/1412.6980.

[11] Andrew Lamperski. Projected stochastic gradient Langevin algorithms for constrained sampling and non-convex learning. In Conference on Learning Theory, volume 134 of Proceedings of Machine Learning Research, pages 2891–2937, 2021. URL 12137.

[12] Yuchen Liu, Ruiqi Ni, and Ahmed H. Qureshi. Physics-informed neural mapping and motion planning in unknown environments. IEEE Transactions on Robotics, 41:2200–2212, 2025. ISSN 1941-0468. doi: 10.1109/tro.2025.3548495. URL http://dx.doi.org/10.1109/TRO.2025.3548495.
[13] Jonathan Long, Evan Shelhamer, and Trevor Darrell. Fully convolutional networks for semantic segmentation. In 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 3431–3440, Los Alamitos, CA, USA, June 2015. IEEE Computer Society. doi: 10.1109/CVPR.2015.7298965. URL https://doi.ieeecomputersociety.org/10.1109/CVPR.2015.7298965.
[14] lucidrains. denoising-diffusion-pytorch: Implementation of denoising diffusion probabilistic model in PyTorch. https://github.com/lucidrains/denoising-diffusion-pytorch, 2025. commit revision XYZ, accessed February 23, 2026.
[15] Ryuichi Miyauchi, Hengyuan Chang, Tsukasa Fukusato, Kazunori Miyata, and Haoran Xie. Physics-aware fluid field generation from user sketches using Helmholtz-Hodge decomposition. CoRR, abs/2507.09146, 2025.
[16] Ruiqi Ni and Ahmed H Qureshi. NTFields: Neural time fields for physics-informed robot motion planning. In International Conference on Learning Representations, 2023. URL https://openreview.net/forum?id=ApF0dmi1_9K.
[17] Olaf Ronneberger, Philipp Fischer, and Thomas Brox. U-net: Convolutional networks for biomedical image segmentation. In Nassir Navab, Joachim Hornegger, William M. Wells, and Alejandro F. Frangi, editors, Medical Image Computing and Computer-Assisted Intervention – MICCAI 2015, pages 234–241, Cham, 2015. Springer International Publishing. ISBN 978-3-319-24574-4.
[18] Pedro Sanchez, Xiao Liu, Alison Q O’Neil, and Sotirios A. Tsaftaris. Diffusion models for causal discovery via topological ordering. In The Eleventh International Conference on Learning Representations, 2023. URL https://openreview.net/forum?id=Idusfje4-Wq.
[19] Yang Song, Jascha Sohl-Dickstein, Diederik P Kingma, Abhishek Kumar, Stefano Ermon, and Ben Poole. Score-based generative modeling through stochastic differential equations. In International Conference on Learning Representations, 2021. URL https://openreview.net/forum?id=PxTIG12RRHS.
[20] Alexander Tong, Nikolay Malkin, Kilian Fatras, Lazar Atanackovic, Yanlei Zhang, Guillaume Huguet, Guy Wolf, and Yoshua Bengio. Simulation-free Schrödinger bridges via score and flow matching. arXiv preprint arXiv:2307.03672, 2023.
[21] Alexander Tong, Kilian Fatras, Nikolay Malkin, Guillaume Huguet, Yanlei Zhang, Jarrid Rector-Brooks, Guy Wolf, and Yoshua Bengio. Improving and generalizing flow-based generative models with minibatch optimal transport. Transactions on Machine Learning Research, 2024. ISSN 2835-8856. URL https://openreview.net/forum?id=CD9Snc73AW. Expert Certification.
[22] Utkarsh Utkarsh, Pengfei Cai, Alan Edelman, Rafael Gómez-Bombarelli, and Christopher Vincent Rackauckas. Physics-constrained flow matching: Sampling generative models with hard constraints. CoRR, abs/2506.04171, 2025.

A Data Generation

We construct training and evaluation data from analytic Navier–Stokes solutions under both periodic and obstacle-bounded geometries. Periodic samples use the Taylor–Green vortex at randomly sampled viscosities and times. For obstacle-bounded domains, we randomly generate between one and four circular obstacles with radii sampled relative to the domain size. A binary mask $m$ marks solid regions, and a no-slip constraint $u = 0$ is imposed inside obstacles. The initial analytic velocity is then projected using the masked Poisson operator (Sec. 3.4) to obtain an incompressible field consistent with the geometry. Each sample therefore contains $(u_0, m, c)$, where $c$ indexes the boundary condition regime (periodic vs. no-slip). This dataset enables training and evaluation of models under heterogeneous, previously unseen geometries.

B Training Protocol

We evaluate four diffusion variants in a controlled ablation (as described in Sec. 4), isolating the contribution of training-time penalties and sample-time projection:

• V (Vanilla). A baseline Gaussian diffusion model [14] with neither physical penalties nor projection.
• TC (Training-Constrained). Identical to V, but augmented with an auxiliary divergence penalty during training.
• P (Projection-Only). Our Flow-Gaussian diffusion model equipped with hard projection at sample time but no training-time penalties.
• TCP (Training-Constrained + Projection). The full pipeline: soft training-time divergence penalties together with hard projection at sample time.

All models use a 200-step diffusion schedule with a linear noise variance ranging from $\beta_1 = 10^{-4}$ to $\beta_2 = 2 \times 10^{-2}$. For the TC and TCP variants, we apply a divergence-penalty weight of $\lambda = 10^{-2}$. The embedded UNet backbone [17] employs a base channel width of 32 with hierarchical multipliers $(1, 2, 4)$. Training is performed on 20,000 synthetic Navier–Stokes fields (resolution $32 \times 32$), generated as described in App. A. The dataset is evenly split between obstacle-free and obstacle-laden domains. Taylor–Green vortex samples use decay parameter $\nu = 0.01$ to emphasize advective structure and increase conditioning difficulty. Models are trained for 10 epochs using Adam [10] with learning rate $\eta = 10^{-4}$.

This protocol ensures that (i) each ablation is trained under identical architectural and optimization conditions, (ii) divergence-related effects can be cleanly attributed, and (iii) projection mechanisms are evaluated in isolation and in conjunction with training-time regularization.
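The 200-step linear noise schedule used in this protocol can be illustrated with a short sketch. This is not the authors' code. It assumes that the two stated endpoints, $10^{-4}$ and $2 \times 10^{-2}$, are the first and last of the 200 variances, that the spacing is linear in $\beta$, and that the cumulative signal fraction follows the standard DDPM convention of [8]. The function names `linear_beta_schedule` and `cumulative_alphas` are hypothetical.

```python
import math


def linear_beta_schedule(num_steps=200, beta_start=1e-4, beta_end=2e-2):
    """Return linearly spaced noise variances.

    Assumption: both endpoints are included, so the first entry equals
    beta_start and the last entry equals beta_end.
    """
    if num_steps < 2:
        raise ValueError("num_steps must be at least 2")
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """Return alpha_bar_t = prod_{s <= t} (1 - beta_s) for every step t.

    Assumption: this is the standard DDPM definition; the paper's exact
    parameterization is not given in this appendix.
    """
    alpha_bars = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


betas = linear_beta_schedule()
alpha_bars = cumulative_alphas(betas)

# Forward noising at step t mixes data and Gaussian noise as
#   x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps.
signal_scale = math.sqrt(alpha_bars[-1])
noise_scale = math.sqrt(1.0 - alpha_bars[-1])
print(f"final alpha_bar = {alpha_bars[-1]:.4f}")
print(f"final signal scale = {signal_scale:.4f}, noise scale = {noise_scale:.4f}")
```

Under these assumptions the final cumulative signal fraction is about 0.13 rather than near zero, so a schedule this short leaves some signal in the terminal sample. Whether the actual implementation behaves the same way depends on details not stated here.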
