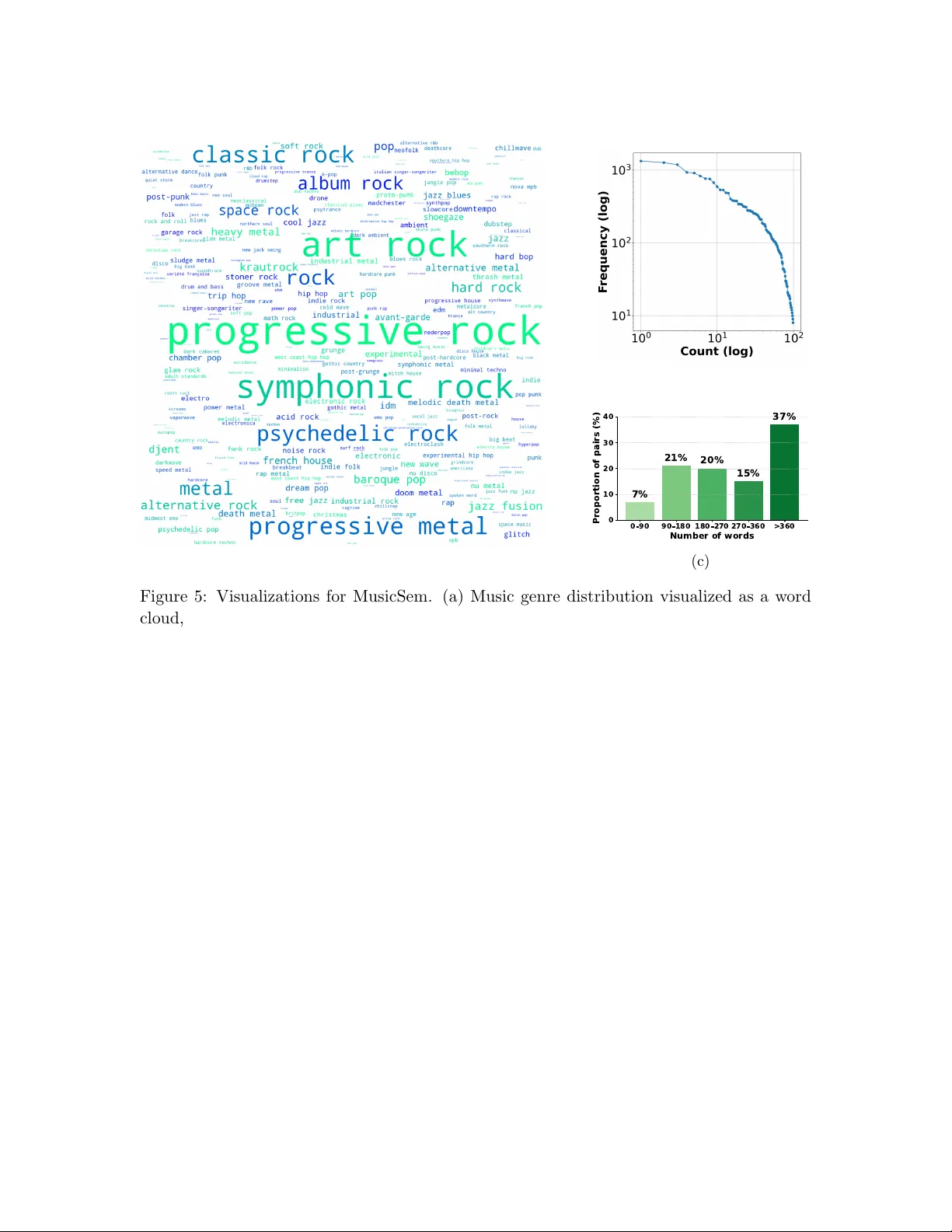

MusicSem: A Semantically Rich Language–Audio Dataset of Natural Music Descriptions

Music representation learning is central to music information retrieval and generation. While recent advances in multimodal learning have improved alignment between text and audio for tasks such as cross-modal music retrieval, text-to-music generatio…

Authors: Rebecca Salganik, Teng Tu, Fei-Yueh Chen