Inelastic Constitutive Kolmogorov-Arnold Networks: A generalized framework for automated discovery of interpretable inelastic material models

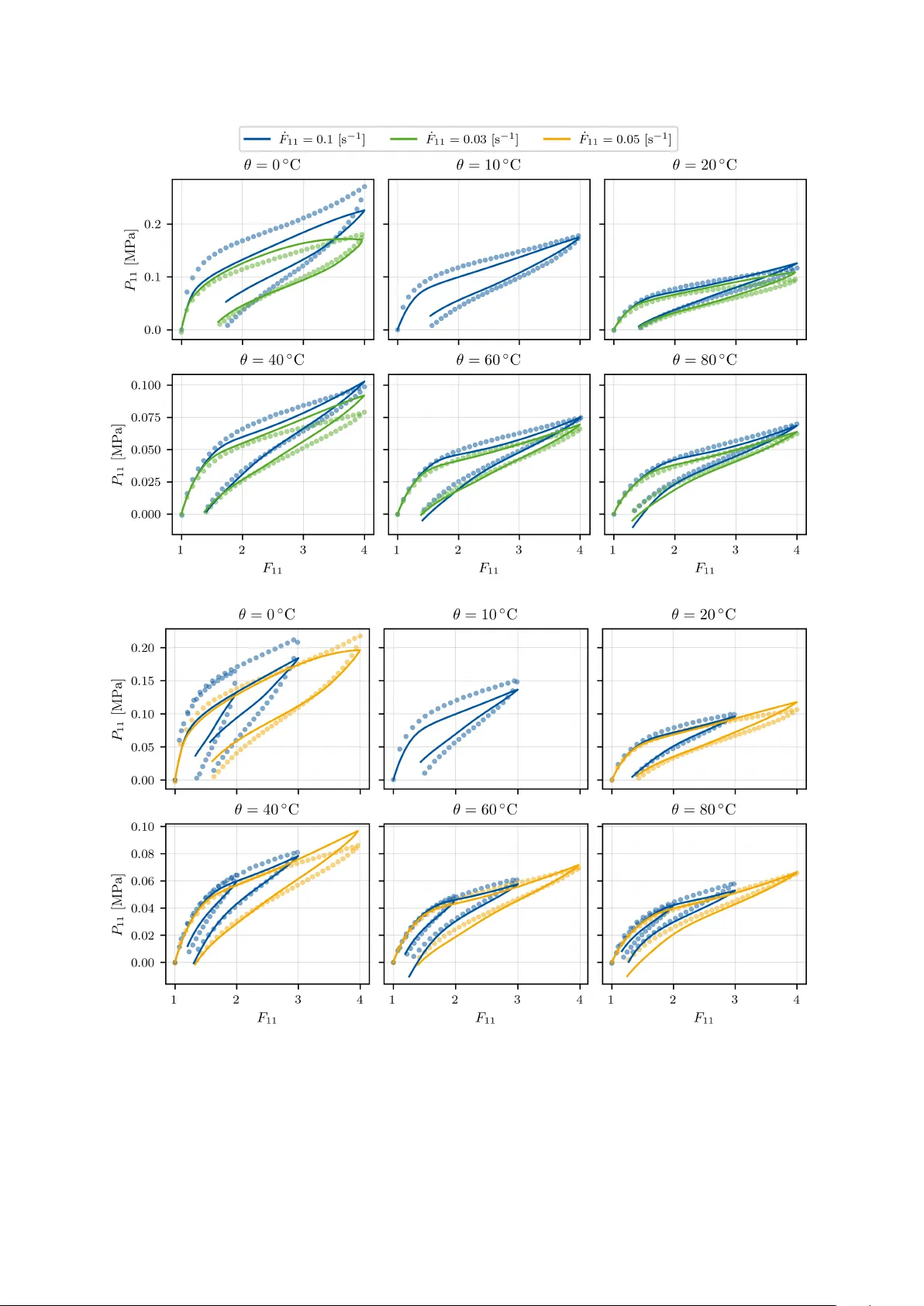

A key problem of solid mechanics is the identification of the constitutive law of a material, that is, the relation between strain and stress. Machine learning has lead to considerable advances in this field lately. Here we introduce inelastic Consti…

Authors: Chenyi Ji, Kian P. Abdolazizi, Hagen Holthusen