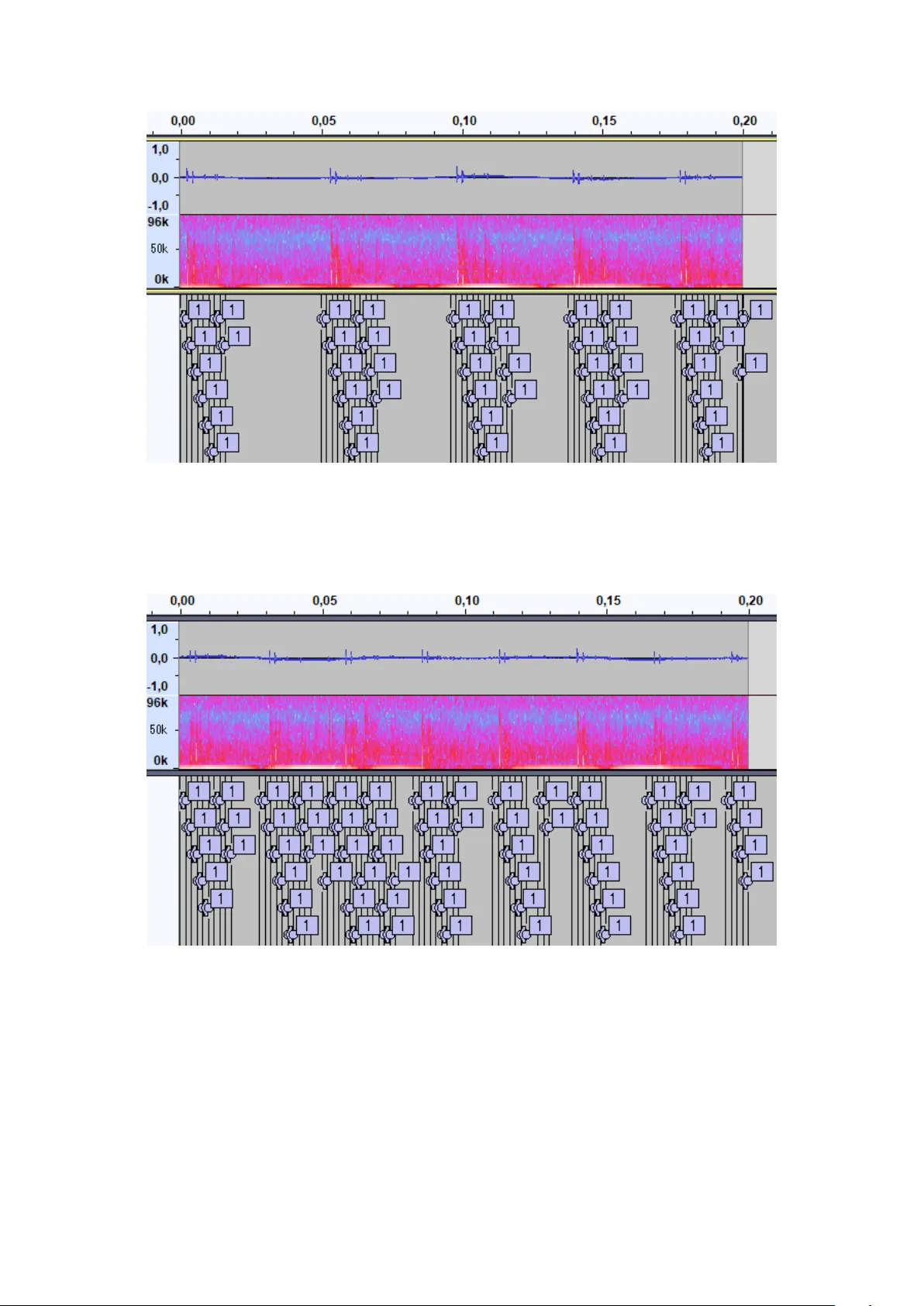

Detection and Classification of Cetacean Echolocation Clicks using Image-based Object Detection Methods applied to Advanced Wavelet-based Transformations

A challenge in marine bioacoustic analysis is the detection of animal signals, such as calls, whistles, and clicks, for behavioral studies. Manual labeling is too time-consuming to process enough data to obtain reasonable results. Thus, an automatic sol…

Authors: Christopher Hauer