Reasoning-Native Agentic Communication for 6G

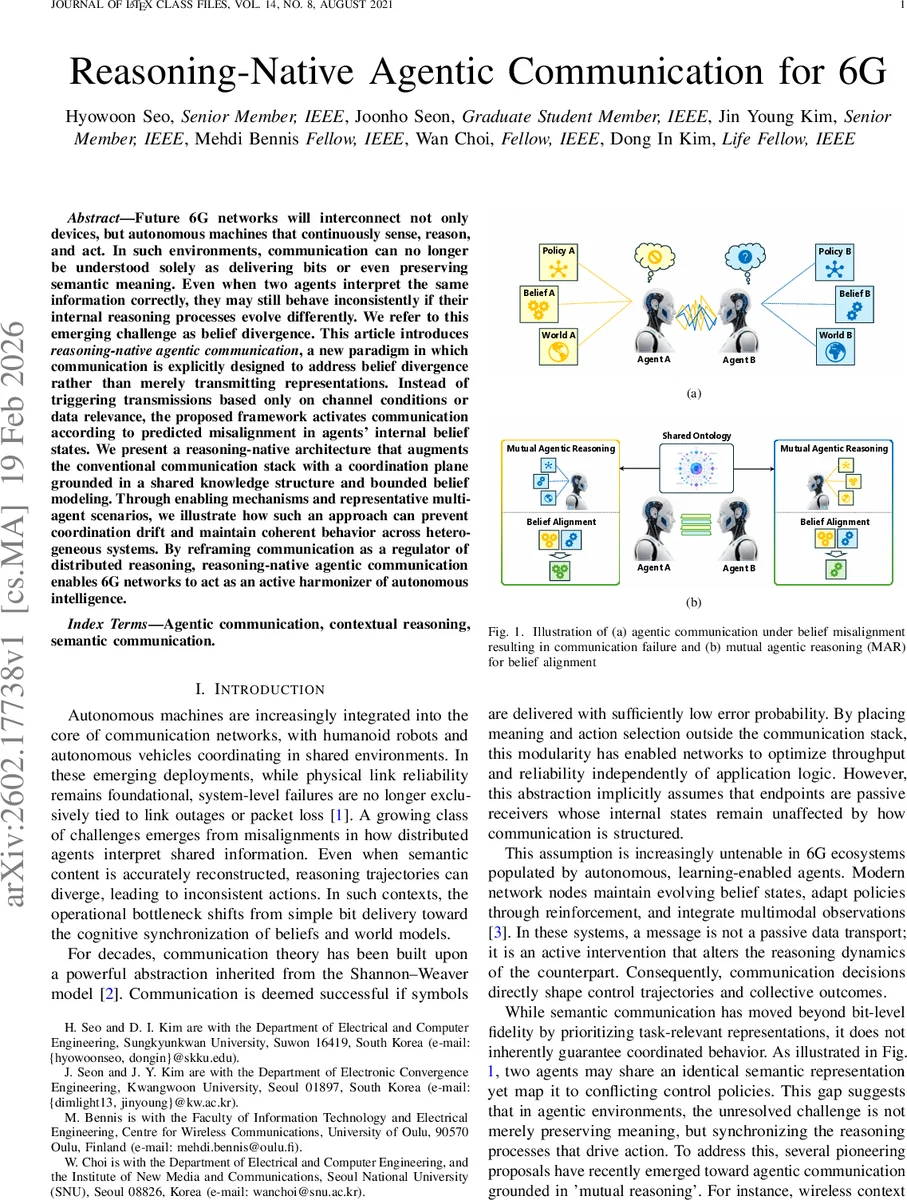

Future 6G networks will interconnect not only devices but also autonomous machines that continuously sense, reason, and act. In such environments, communication can no longer be understood solely as delivering bits or even preserving semantic meaning. Even when two agents interpret the same information correctly, they may still behave inconsistently if their internal reasoning processes evolve differently. We refer to this emerging challenge as belief divergence. This article introduces reasoning-native agentic communication, a new paradigm in which communication is explicitly designed to address belief divergence rather than merely to transmit representations. Instead of triggering transmissions based only on channel conditions or data relevance, the proposed framework activates communication according to predicted misalignment in agents' internal belief states. We present a reasoning-native architecture that augments the conventional communication stack with a coordination plane grounded in a shared knowledge structure and bounded belief modeling. Through enabling mechanisms and representative multi-agent scenarios, we illustrate how such an approach can prevent coordination drift and maintain coherent behavior across heterogeneous systems. By reframing communication as a regulator of distributed reasoning, reasoning-native agentic communication enables 6G networks to act as an active harmonizer of autonomous intelligence.

💡 Research Summary

The paper addresses a fundamental challenge that will arise in future 6G networks: the divergence of internal belief states among autonomous agents, even when they correctly interpret the same semantic information. The authors term this phenomenon “belief divergence” and argue that traditional communication theory—rooted in the Shannon‑Weaver model and focused on reliable bit delivery—cannot guarantee coordinated behavior in environments populated by learning‑enabled robots, autonomous vehicles, and edge AI systems. To bridge the gap between semantic alignment and behavioral alignment, the authors propose a new paradigm called reasoning‑native agentic communication.

The cornerstone of this paradigm is Mutual Agentic Reasoning (MAR). MAR equips each agent with a Recursive Belief Engine (RBE) that maintains an estimate of the counterpart’s belief vector, policy parameters, and world‑model state. Before transmitting any message, an agent simulates how the candidate message would update the counterpart’s belief and, consequently, its decision‑making policy. If the predicted belief shift is below a predefined threshold, the transmission is suppressed—embodying the principle “silence is information.” Conversely, if a potential misalignment is detected, the agent enriches or reformulates the message to explicitly resolve the ambiguity. This anticipatory, theory‑of‑mind‑like process enables agents to coordinate with minimal signaling, reducing bandwidth usage while improving safety and task success.
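The suppression rule described above can be sketched in a few lines. This is an illustration under stated assumptions, not the authors' implementation: the counterpart's belief is modeled as a discrete probability distribution over hypotheses, the simulated update is a plain Bayes step, and the "belief shift" is measured as a KL divergence. The function names (`bayes_update`, `kl_divergence`, `should_transmit`) and the choice of KL are hypothetical; the summary does not specify how the Recursive Belief Engine measures a shift.

```python
import math


def bayes_update(prior, likelihood):
    """Posterior over hypotheses, given a prior and the message's likelihood under each."""
    unnormalized = [p * l for p, l in zip(prior, likelihood)]
    total = sum(unnormalized)
    if total == 0:
        raise ValueError("message has zero likelihood under every hypothesis")
    return [u / total for u in unnormalized]


def kl_divergence(p, q):
    """KL(p || q) in nats; terms with p_i == 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def should_transmit(estimated_peer_belief, message_likelihood, threshold):
    """Simulate the peer's update and send only if the predicted shift reaches the threshold.

    Assumption: shift = KL(predicted posterior || current estimate of the peer's belief).
    Returns (send_decision, predicted_shift).
    """
    predicted = bayes_update(estimated_peer_belief, message_likelihood)
    shift = kl_divergence(predicted, estimated_peer_belief)
    return shift >= threshold, shift


# A message that would not change the peer's belief is suppressed;
# a message that would sharply favor one hypothesis is sent.
uninformative = should_transmit([0.5, 0.5], [0.5, 0.5], threshold=0.1)
informative = should_transmit([0.5, 0.5], [0.9, 0.1], threshold=0.1)
```

In this sketch the threshold trades bandwidth against alignment: a larger value suppresses more messages but tolerates more drift between the sender's estimate and the peer's actual belief.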

To operationalize MAR, the authors introduce a dual‑plane architecture. The Data Delivery Plane handles conventional tasks such as semantic token extraction, encoding, error correction, and reliable transport over the physical channel. The Reasoning Coordination Plane sits above it and hosts the shared ontology, the RBE, and the MAR logic. The shared ontology provides a stable conceptual substrate—defining primitives, relational dependencies, and causal rules—that all heterogeneous agents can reference, ensuring that belief updates are expressed in a common structural language. This separation allows the network to preserve the efficiency of traditional data pipelines while adding a dedicated layer for belief synchronization.
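One way to picture the separation of the two planes is as two small components, with the upper one deciding whether anything is handed to the lower one. The sketch below is purely illustrative: the class names mirror the plane names in the text, but the interfaces (`deliver`, `submit`), the flat set used as an ontology, and the precomputed `predicted_shift` argument are all assumptions made for this example. Real encoding, error correction, and transport are replaced by a list that records payloads.

```python
from dataclasses import dataclass, field


@dataclass
class DataDeliveryPlane:
    """Stand-in for encoding and transport; it only records what was handed to it."""
    sent: list = field(default_factory=list)

    def deliver(self, payload: dict) -> None:
        self.sent.append(payload)


@dataclass
class ReasoningCoordinationPlane:
    """Gates messages using a shared ontology and a predicted belief shift."""
    ontology: set  # hypothetical: a flat set of shared concept names
    data_plane: DataDeliveryPlane

    def submit(self, concept: str, value, predicted_shift: float, threshold: float) -> bool:
        # Messages must be expressed in terms every agent can reference.
        if concept not in self.ontology:
            raise KeyError(f"concept {concept!r} is not in the shared ontology")
        # Below the threshold the message is suppressed and never reaches the data plane.
        if predicted_shift < threshold:
            return False
        self.data_plane.deliver({"concept": concept, "value": value})
        return True


data_plane = DataDeliveryPlane()
coordination = ReasoningCoordinationPlane({"load_tilt", "grip_force"}, data_plane)
coordination.submit("load_tilt", 0.2, predicted_shift=0.01, threshold=0.1)  # suppressed
coordination.submit("load_tilt", 4.5, predicted_shift=0.40, threshold=0.1)  # delivered
```

The point of the split is that the data plane needs no knowledge of beliefs or ontologies; it sees only the payloads the coordination plane chooses to pass down.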

The paper validates the approach through three representative scenarios: (1) cooperative lifting by heterogeneous humanoid robots, where semantic tokens alone lead the robots to take opposite corrective actions; (2) intersection negotiation among autonomous vehicles with differing control policies; and (3) distributed edge‑AI model synchronization, where agents have divergent learning histories. In each case, MAR‑enabled agents achieve a 30‑50% reduction in transmitted bits and a 15‑25% increase in policy coherence and task success rates compared with state‑of‑the‑art semantic communication schemes.

Beyond performance metrics, the authors propose new key performance indicators tailored to agentic communication: belief‑divergence metric, reasoning‑alignment latency, and policy‑coherence ratio. These metrics capture the quality of distributed reasoning, which traditional KPIs (throughput, latency, reliability) overlook.
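The summary names these indicators without defining them, so the following is only one plausible reading, offered to make the ideas concrete. It assumes that belief divergence is the Jensen-Shannon divergence between two agents' discrete belief distributions, that policy coherence is the fraction of time steps on which the agents' actions are judged compatible, and that alignment latency is the first step at which divergence falls to a tolerance. All three definitions and all function names are assumptions of this sketch.

```python
import math


def js_divergence(p, q):
    """Jensen-Shannon divergence (nats) between two discrete beliefs; symmetric and bounded by ln 2."""
    mid = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        return sum(xi * math.log(xi / yi) for xi, yi in zip(x, y) if xi > 0)

    return 0.5 * kl(p, mid) + 0.5 * kl(q, mid)


def policy_coherence_ratio(actions_a, actions_b, compatible):
    """Fraction of steps on which the two agents' actions are compatible."""
    if len(actions_a) != len(actions_b):
        raise ValueError("action traces must have the same length")
    if not actions_a:
        raise ValueError("action traces must be non-empty")
    matches = sum(1 for a, b in zip(actions_a, actions_b) if compatible(a, b))
    return matches / len(actions_a)


def alignment_latency(divergence_trace, tolerance):
    """Index of the first step with divergence <= tolerance, or None if never reached."""
    for step, divergence in enumerate(divergence_trace):
        if divergence <= tolerance:
            return step
    return None


# Toy traces for two lifting robots; here "compatible" simply means identical actions.
robot_a = ["lift", "hold", "lift", "lower"]
robot_b = ["lift", "hold", "lower", "lower"]
coherence = policy_coherence_ratio(robot_a, robot_b, lambda a, b: a == b)
latency = alignment_latency([0.4, 0.2, 0.05, 0.01], tolerance=0.1)
```

Unlike throughput or link latency, none of these quantities can be measured at the channel: each requires access to, or an estimate of, the agents' internal beliefs and chosen actions.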

Finally, the paper outlines open research directions: automated generation and evolution of shared ontologies, scalable learning of recursive belief models in large agent swarms, and privacy‑preserving mechanisms for belief‑state exchange. By reframing the network’s role from a passive data conduit to an active harmonizer of distributed intelligence, the work lays a conceptual and architectural foundation for the next generation of 6G systems.
