A Theoretical Framework for Modular Learning of Robust Generative Models

Training large-scale generative models is resource-intensive and relies heavily on heuristic dataset weighting. We address two fundamental questions: Can we train Large Language Models (LLMs) modularly, combining small, domain-specific experts to matc…

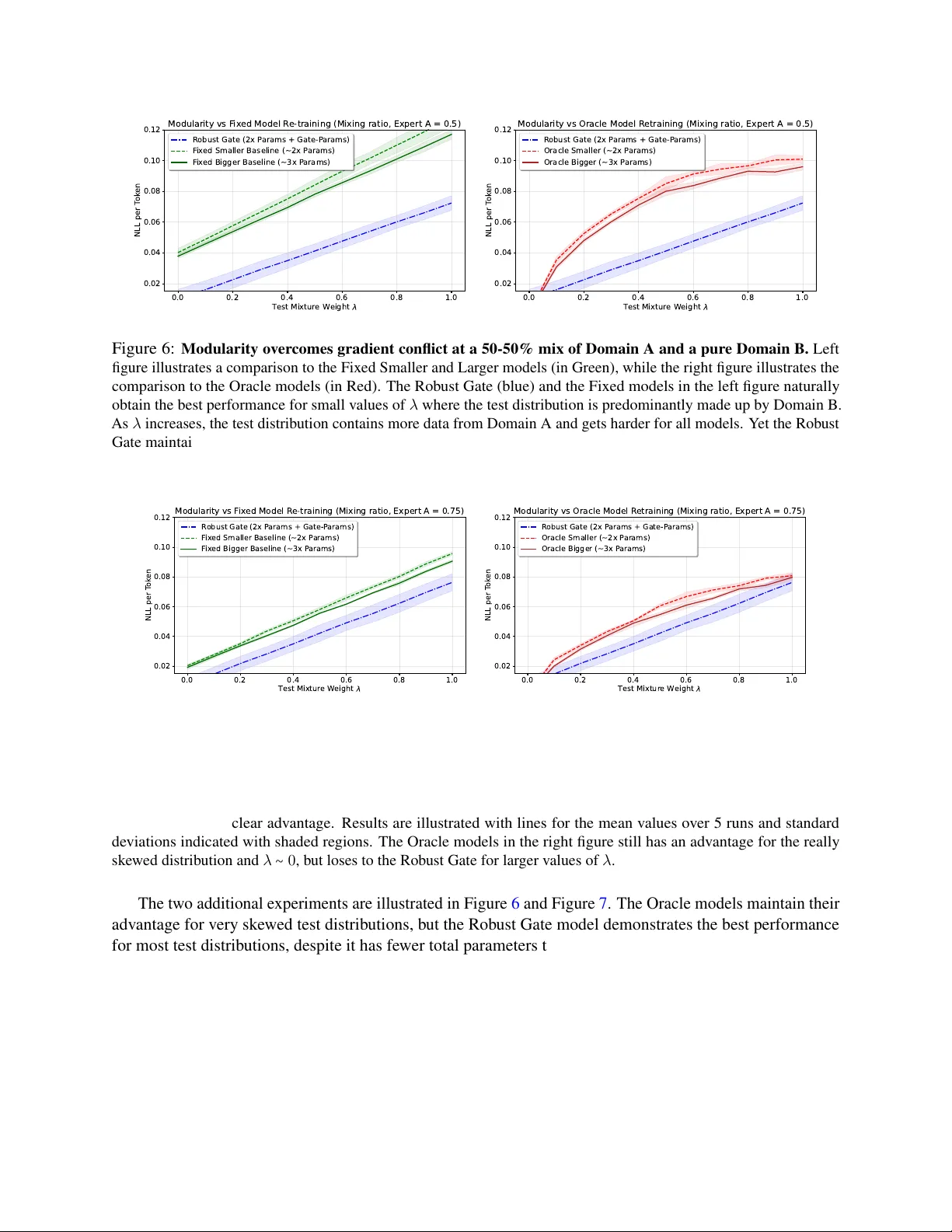

Authors: Refer to original PDF