Privacy in Theory, Bugs in Practice: Grey-Box Auditing of Differential Privacy Libraries

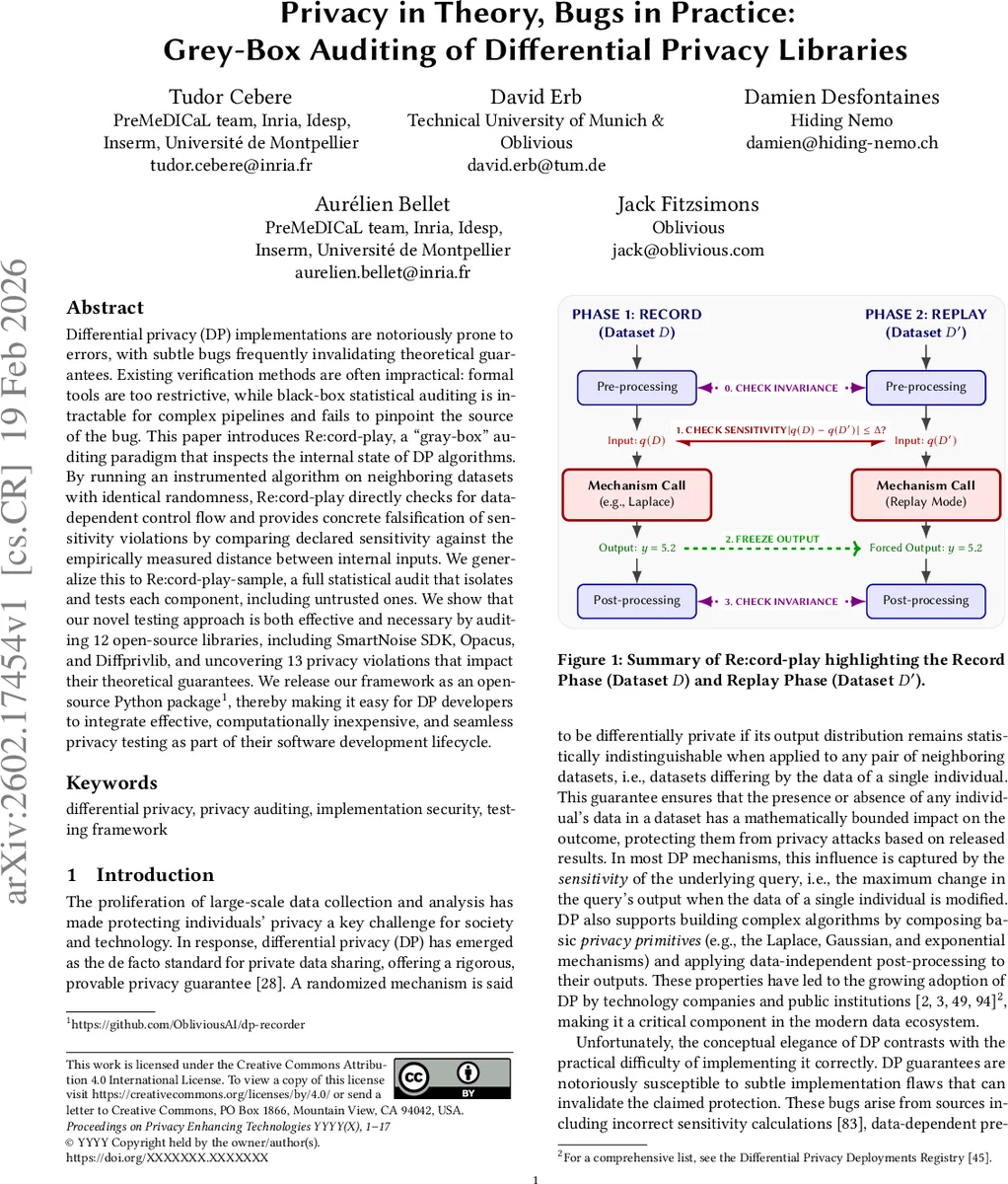

Differential privacy (DP) implementations are notoriously prone to errors, with subtle bugs frequently invalidating theoretical guarantees. Existing verification methods are often impractical: formal tools are too restrictive, while black-box statistical auditing is intractable for complex pipelines and fails to pinpoint the source of the bug. This paper introduces Re:cord-play, a gray-box auditing paradigm that inspects the internal state of DP algorithms. By running an instrumented algorithm on neighboring datasets with identical randomness, Re:cord-play directly checks for data-dependent control flow and provides concrete falsification of sensitivity violations by comparing declared sensitivity against the empirically measured distance between internal inputs. We generalize this to Re:cord-play-sample, a full statistical audit that isolates and tests each component, including untrusted ones. We show that our novel testing approach is both effective and necessary by auditing 12 open-source libraries, including SmartNoise SDK, Opacus, and Diffprivlib, and uncovering 13 privacy violations that impact their theoretical guarantees. We release our framework as an open-source Python package, thereby making it easy for DP developers to integrate effective, computationally inexpensive, and seamless privacy testing as part of their software development lifecycle.

💡 Research Summary

Differential privacy (DP) promises mathematically provable protection for individuals, yet real‑world implementations frequently contain subtle bugs that invalidate those guarantees. Existing verification approaches fall into two camps: formal methods that require rewriting code in restrictive domain‑specific languages, and black‑box statistical audits that compare output distributions on neighboring datasets. Formal tools are impractical for the large, heterogeneous Python and C++ libraries used today, while black‑box audits become statistically intractable for complex pipelines and provide no insight into the source of a failure.

This paper introduces a new “gray‑box” auditing paradigm called Re:cord‑play and its extension Re:cord‑play‑sample. The core idea is to exploit the natural separation in DP algorithms between data‑independent logic (post‑processing, control flow) and calls to privacy primitives (Laplace, Gaussian, Exponential mechanisms). By instrumenting the code to record the random seed, the query inputs, and any intermediate variables at each primitive call, and then replaying the same randomness on an adjacent dataset, the framework can directly check three classes of violations:

- Data‑dependent leakage – if intermediate variables differ despite identical primitive outputs, data has leaked into supposedly data‑independent code.

- Sensitivity mis‑specification – the declared sensitivity Δ is compared against the empirically measured distance |q(D) − q(D′)|.

- Noise mis‑calibration – assuming the primitive implementation is correct, a mismatch between the frozen noise and the measured sensitivity reveals under‑ or over‑scaled noise.

Because the primitive outputs are frozen, the audit does not rely on sampling the high‑dimensional final output distribution; a single deterministic run suffices to flag a bug and pinpoint its location.
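The record-and-replay check described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the API of the authors' package: the `RecordPlay` class, the `audit` helper, the two toy mechanisms, and the replace-one notion of neighboring datasets are all hypothetical names and choices introduced here.

```python
import numpy as np


class RecordPlay:
    """Hypothetical recorder: freezes primitive outputs and logs their inputs."""

    def __init__(self, seed=0):
        self.rng = np.random.default_rng(seed)
        self.outputs = []  # frozen primitive outputs from the recording run
        self.runs = []     # per run: list of (query value, declared sensitivity)

    def start_run(self):
        self.runs.append([])

    def laplace(self, value, sensitivity, epsilon):
        run = self.runs[-1]
        idx = len(run)
        run.append((float(value), float(sensitivity)))
        if idx == len(self.outputs):
            # First time this call index is seen: sample once and freeze the output.
            noise = self.rng.laplace(0.0, sensitivity / epsilon)
            self.outputs.append(float(value) + noise)
        # Every run returns the frozen output, so downstream code sees identical values.
        return self.outputs[idx]


def audit(mechanism, d, d_prime, tol=1e-9):
    """Run a mechanism on neighboring datasets with shared randomness."""
    rp = RecordPlay()
    for data in (d, d_prime):
        rp.start_run()
        mechanism(data, rp)
    recorded, replayed = rp.runs
    findings = []
    if len(recorded) != len(replayed):
        findings.append("number of primitive calls differs between runs")
    for i, ((q, s), (q2, s2)) in enumerate(zip(recorded, replayed)):
        if s != s2:
            findings.append(f"call {i}: declared sensitivity depends on the data")
        if abs(q - q2) > s + tol:
            findings.append(
                f"call {i}: |q(D) - q(D')| = {abs(q - q2):.2f} > declared {s:.2f}"
            )
    return findings


def clipped_sum(data, dp):
    # Values are clipped to [0, 1], so replacing one record moves the sum by at most 1.
    return dp.laplace(np.sum(np.clip(data, 0.0, 1.0)), sensitivity=1.0, epsilon=1.0)


def unclipped_sum(data, dp):
    # Bug: no clipping, yet the declared sensitivity is still 1.
    return dp.laplace(np.sum(data), sensitivity=1.0, epsilon=1.0)


d = np.array([0.2, 0.9, 0.5])
d_prime = np.array([0.2, 0.9, 5.0])  # one record replaced

print(audit(clipped_sum, d, d_prime))
print(audit(unclipped_sum, d, d_prime))
```

In this sketch a single deterministic pair of runs is enough to flag the unclipped sum, and the finding names the offending primitive call, which mirrors the localization property the summary attributes to the approach.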

When a library includes custom or third‑party primitives whose correctness cannot be assumed, the authors propose Re:cord‑play‑sample. Each primitive is isolated and subjected to a statistical audit similar to traditional distributional auditing, but on the primitive’s own privacy‑loss distribution (PLD). By estimating PLDs analytically for known mechanisms and empirically for black‑box primitives, the framework can compose them to obtain an end‑to‑end (ε, δ) bound, while still identifying the offending component.
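To give a feel for auditing a single isolated primitive, the sketch below estimates a rough empirical lower bound on ε from samples. It deliberately swaps in a much cruder technique than the PLD estimation described above: a threshold test on one-dimensional outputs using point estimates with no confidence intervals. The function names, the threshold grid, and the sample size are assumptions made for illustration only.

```python
import numpy as np


def empirical_epsilon(primitive, x, x_prime, n=200_000, delta=0.0, seed=0):
    """Crude point estimate of a lower bound on epsilon via threshold events."""
    rng = np.random.default_rng(seed)
    a = primitive(x, n, rng)        # samples of the primitive on input x
    b = primitive(x_prime, n, rng)  # samples on the neighboring input
    lo, hi = min(x, x_prime), max(x, x_prime)
    best = 0.0
    for t in np.linspace(lo - 1.0, hi + 1.0, 13):
        p, q = np.mean(a > t), np.mean(b > t)
        # Check the event {output > t}, its complement, and both directions.
        for num, den in ((p, q), (q, p), (1 - p, 1 - q), (1 - q, 1 - p)):
            if num - delta > 0 and den > 0:
                best = max(best, float(np.log((num - delta) / den)))
    return best


def laplace_ok(value, n, rng, sensitivity=1.0, epsilon=1.0):
    # Correctly calibrated Laplace noise: scale = sensitivity / epsilon.
    return value + rng.laplace(0.0, sensitivity / epsilon, size=n)


def laplace_bad(value, n, rng, sensitivity=1.0, epsilon=1.0):
    # Bug: the scale is halved, so the real privacy loss is about 2 * epsilon.
    return value + rng.laplace(0.0, 0.5 * sensitivity / epsilon, size=n)


print(empirical_epsilon(laplace_ok, 0.0, 1.0))
print(empirical_epsilon(laplace_bad, 0.0, 1.0))
```

With a claimed ε of 1, the estimate for the correct primitive should land near 1 and the estimate for the miscalibrated one near 2. A real audit would replace the point estimates with confidence bounds before declaring a violation.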

The authors implemented the entire methodology as an open‑source Python package (dp-recorder). They evaluated it on twelve widely used DP libraries, including SmartNoise SDK, Opacus, Diffprivlib, and Synthcity, automating the audit across many configurations. The audit uncovered 13 previously unknown privacy violations, spanning:

- Pre‑processing bugs where clipping thresholds were computed from the data, causing the declared L2‑sensitivity to be lower than the true sensitivity.

- Incorrect sensitivity declarations where the code claimed a smaller Δ than the measured |q(D) − q(D′)|.

- Noise scale errors such as using the wrong σ for Gaussian noise, so that the claimed ε is far smaller than the actual privacy loss.

- Randomness reuse that introduced unintended correlations between runs.

For each violation the tool produces a detailed report indicating the exact line of code, the mismatched values, and a suggested fix, making it suitable for integration into continuous integration/continuous deployment (CI/CD) pipelines as a lightweight unit test.
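As an illustration of how such a check could sit in a test suite, the sketch below writes the pre‑processing bug pattern (clipping bounds computed from the data) as plain test functions that a runner such as pytest would discover. Everything here is hypothetical: the helper names, the toy datasets, and the replace‑one adjacency are assumptions, and the code does not use the authors' package.

```python
import numpy as np


def sum_with_data_bounds(data):
    # Bug pattern: clipping bounds are computed from the private data.
    lo, hi = float(data.min()), float(data.max())
    return float(np.clip(data, lo, hi).sum()), hi - lo


def sum_with_fixed_bounds(data, lo=0.0, hi=1.0):
    # Bounds are public constants, so replacing one record
    # moves the sum by at most hi - lo.
    return float(np.clip(data, lo, hi).sum()), hi - lo


def sensitivity_violations(query_fn, d, d_prime, tol=1e-9):
    """Compare the declared sensitivity with the measured distance."""
    q, declared = query_fn(d)
    q_prime, declared_prime = query_fn(d_prime)
    problems = []
    if declared != declared_prime:
        problems.append("declared sensitivity changes with the data")
    if abs(q - q_prime) > declared + tol:
        problems.append("measured distance exceeds declared sensitivity")
    return problems


D = np.array([0.2, 0.9, 0.5])
D_PRIME = np.array([0.2, 0.9, 5.0])  # one record replaced


def test_fixed_bounds_pass():
    assert sensitivity_violations(sum_with_fixed_bounds, D, D_PRIME) == []


def test_data_bounds_are_flagged():
    assert len(sensitivity_violations(sum_with_data_bounds, D, D_PRIME)) == 2
```

Because the check is deterministic and needs only two evaluations of the query, it is cheap enough to run on every commit.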

The paper also discusses limitations. Re:cord‑play requires source‑level access; it cannot be applied to opaque services accessed only via API. It currently handles static sensitivity and single‑call primitives; adaptive mechanisms like DP‑SGD with dynamic clipping need further instrumentation. Future work includes automated sensitivity inference, richer dynamic analysis for adaptive algorithms, and hybrid approaches that combine formal verification with gray‑box auditing.

In summary, the work bridges the gap between theoretical DP guarantees and practical software correctness by providing a low‑overhead “privacy debugger.” It complements existing black‑box audits with precise, component‑level diagnostics, and its open‑source release lowers the barrier for DP developers to embed rigorous privacy testing into everyday development workflows.
