Continual learning and refinement of causal models through dynamic predicate invention

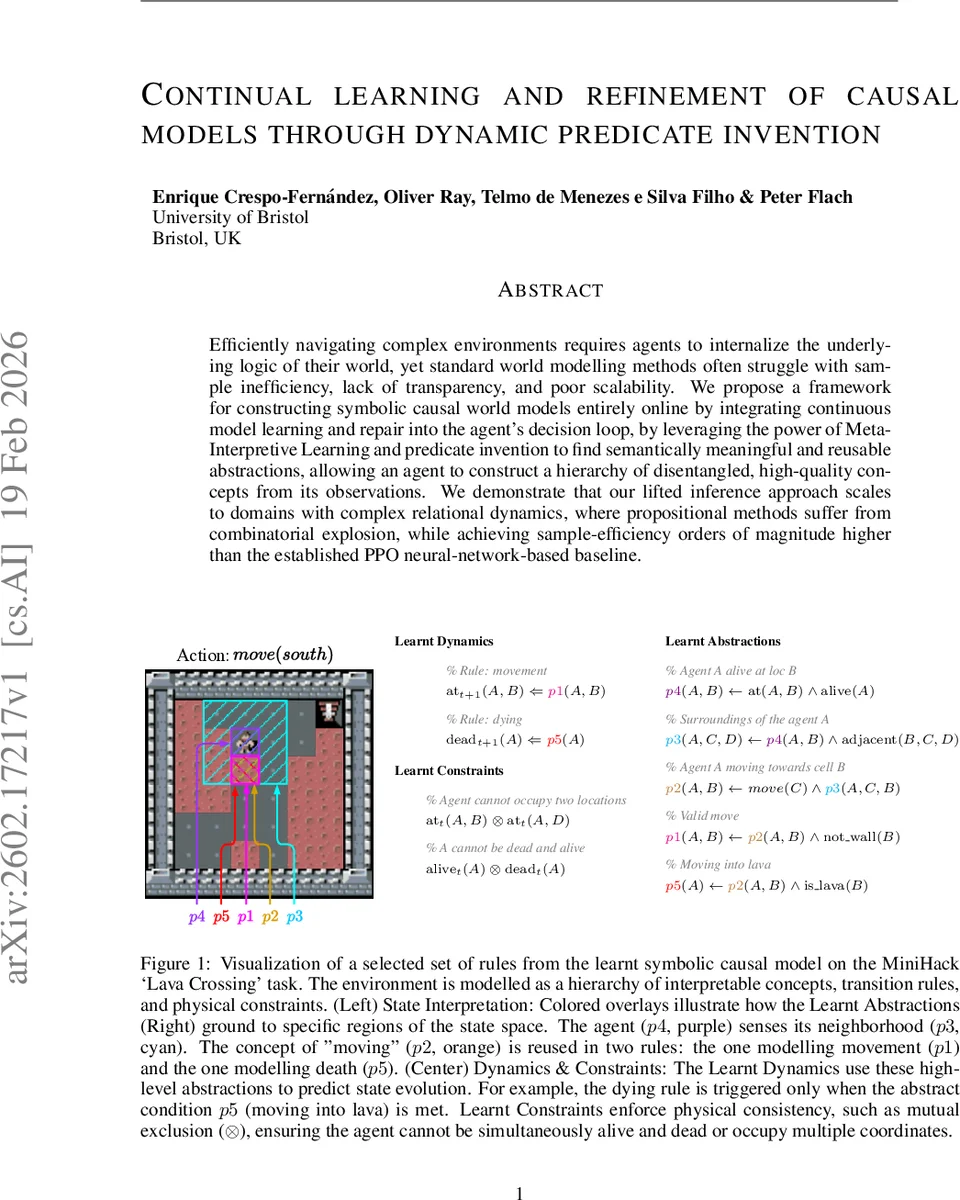

Efficiently navigating complex environments requires agents to internalize the underlying logic of their world, yet standard world modelling methods often struggle with sample inefficiency, lack of transparency, and poor scalability. We propose a framework for constructing symbolic causal world models entirely online by integrating continuous model learning and repair into the agent’s decision loop, by leveraging the power of Meta-Interpretive Learning and predicate invention to find semantically meaningful and reusable abstractions, allowing an agent to construct a hierarchy of disentangled, high-quality concepts from its observations. We demonstrate that our lifted inference approach scales to domains with complex relational dynamics, where propositional methods suffer from combinatorial explosion, while achieving sample-efficiency orders of magnitude higher than the established PPO neural-network-based baseline.

💡 Research Summary

The paper presents an online, continual learning framework that builds symbolic causal world models by integrating Meta‑Interpretive Learning (MIL) with dynamic predicate invention. Unlike conventional deep model‑based approaches, which are data‑hungry and opaque, or traditional symbolic planners that require hand‑crafted models, this method learns and refines a First‑Order Logic transition theory directly from streams of experience. The agent operates in a Predict‑Verify‑Refine loop: it predicts the next state using the current hypothesis H, compares the prediction with the observed state, and generates error signals. False negatives (unpredicted changes) trigger the abductive generation of new rules via metarule templates, possibly inventing new predicates that capture higher‑level abstractions. False positives (predicted but absent changes) cause specialization by pruning or deleting offending clauses. The search space is constrained by a fixed set of metarules, a strong type system, and a global predicate registry that reuses equivalent predicates, ensuring that computational complexity depends on logical depth rather than the size of the grounded state space. Complexity analysis yields O(|M|·|Typed|^k·D_max), demonstrating scale‑invariance. Experiments in the MiniHack “Lava Crossing” domain show that the symbolic agent solves the task after a single failure (episode 2), whereas a PPO baseline with pre‑trained CNN weights requires roughly 128 episodes for first success and many more for convergence. Moreover, the learned rule set transfers zero‑shot to a larger 100×100 grid, confirming that the abstractions are invariant to environment scale. Visualizations illustrate that invented predicates (e.g., p2 for “moving” and “dying”) align with human‑interpretable concepts and are reused across multiple dynamics, providing transparent hierarchical reasoning. Compared to batch ILP systems (FastLAS, Popper) and neurosymbolic hybrids that rely on expensive pre‑training, this approach offers real‑time model repair, full interpretability, and superior sample efficiency. The authors suggest future extensions to stochastic domains, multi‑agent settings, and hybrid neuro‑symbolic integration.

Comments & Academic Discussion

Loading comments...

Leave a Comment