EntropyPrune: Matrix Entropy Guided Visual Token Pruning for Multimodal Large Language Models

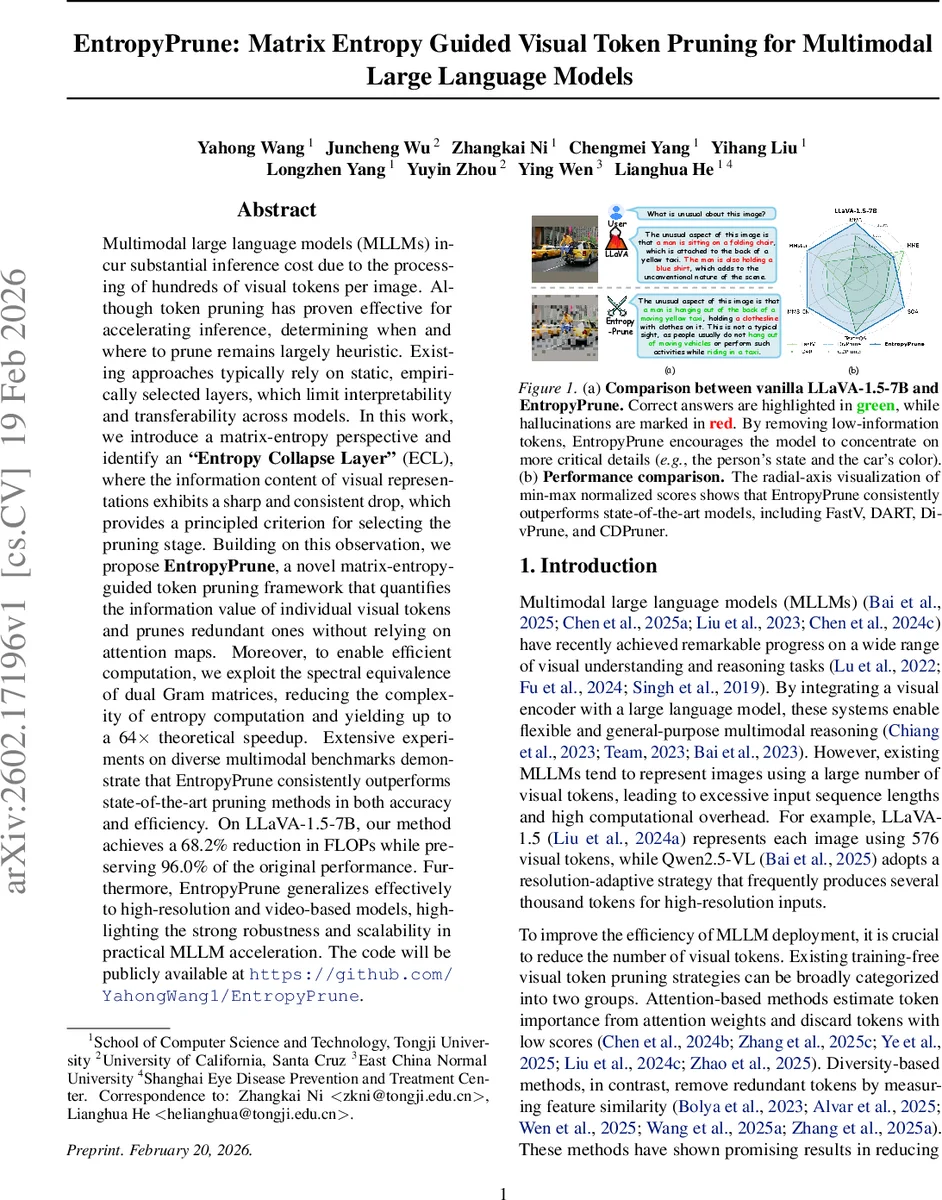

Multimodal large language models (MLLMs) incur substantial inference cost due to the processing of hundreds of visual tokens per image. Although token pruning has proven effective for accelerating inference, determining when and where to prune remains largely heuristic. Existing approaches typically rely on static, empirically selected layers, which limit interpretability and transferability across models. In this work, we introduce a matrix-entropy perspective and identify an “Entropy Collapse Layer” (ECL), where the information content of visual representations exhibits a sharp and consistent drop, which provides a principled criterion for selecting the pruning stage. Building on this observation, we propose EntropyPrune, a novel matrix-entropy-guided token pruning framework that quantifies the information value of individual visual tokens and prunes redundant ones without relying on attention maps. Moreover, to enable efficient computation, we exploit the spectral equivalence of dual Gram matrices, reducing the complexity of entropy computation and yielding up to a 64x theoretical speedup. Extensive experiments on diverse multimodal benchmarks demonstrate that EntropyPrune consistently outperforms state-of-the-art pruning methods in both accuracy and efficiency. On LLaVA-1.5-7B, our method achieves a 68.2% reduction in FLOPs while preserving 96.0% of the original performance. Furthermore, EntropyPrune generalizes effectively to high-resolution and video-based models, highlighting the strong robustness and scalability in practical MLLM acceleration. The code will be publicly available at https://github.com/YahongWang1/EntropyPrune.

💡 Research Summary

The paper addresses the high inference cost of multimodal large language models (MLLMs) that stem from processing hundreds of visual tokens per image. While token pruning has been shown to accelerate inference, existing methods rely on heuristic, static layer choices and often depend on attention maps or token similarity measures, limiting interpretability, transferability, and compatibility with fast attention implementations.

To overcome these limitations, the authors introduce a matrix‑entropy perspective. For a set of visual tokens X they compute a trace‑normalized covariance matrix Σ_X and define its entropy as the von Neumann entropy S(Σ_X)=−∑σ_i log σ_i, where σ_i are the eigenvalues of Σ_X. This formulation directly quantifies the information content of the token set without requiring probability density estimation.

Analyzing eight diverse multimodal benchmarks (SQA, MME, TextVQA, POPE, VizWiz, LLaVA‑Bench, MMVET, VQAV2) on two state‑of‑the‑art models (LLaVA‑1.5‑7B and LLaVA‑Next‑7B), the authors observe a consistent pattern: matrix entropy remains high in the early transformer layers and then drops sharply after a specific depth (the second layer for both models). They define this depth as the Entropy Collapse Layer (ECL). The abrupt entropy decline signals that visual representations have become highly compressed and many tokens are redundant, making ECL a principled, model‑agnostic criterion for when to prune.

Based on ECL, the proposed EntropyPrune framework proceeds in three steps: (1) locate ECL by monitoring layer‑wise entropy; (2) at ECL, reshape each visual token into a head‑wise matrix M_i (h heads, each of dimension d_h), compute a trace‑normalized covariance Σ_i across heads, and evaluate token‑wise entropy I(x_i)=−tr(Σ_i log Σ_i); (3) retain tokens with high entropy and discard low‑entropy ones, then continue inference with the compact token set.

A naïve eigen‑decomposition of Σ_i would cost O(d_h³) per token, which is prohibitive because d_h (e.g., 128) is much larger than the number of heads h (e.g., 32). The authors exploit the spectral equivalence between a matrix and its Gram counterpart: the non‑zero eigenvalues of Σ_i are identical to those of the h × h Gram matrix M_i M_iᵀ. By computing eigenvalues in the smaller h‑dimensional space, they achieve a theoretical 64× speedup, making the entropy computation practical for real‑time inference.

Empirical results on LLaVA‑1.5‑7B show that EntropyPrune removes 77.8 % of visual tokens, reduces FLOPs by 68.2 %, and retains 96.0 % of the original performance. Compared against recent pruning baselines (FastV, DART, DivPrune, CDPruner), EntropyPrune consistently yields higher accuracy and lower hallucination rates across all benchmarks. The method also generalizes to high‑resolution images and video inputs, where the same entropy‑collapse phenomenon appears, demonstrating robustness to longer sequences and larger token counts.

Importantly, EntropyPrune is training‑free: it can be applied to a pre‑trained MLLM without any fine‑tuning, preserving the original model’s weights and avoiding additional data or compute. The authors will release code and pretrained checkpoints on GitHub.

In summary, EntropyPrune introduces a theoretically grounded, interpretable token‑pruning strategy based on matrix entropy and a novel spectral acceleration technique. By identifying the Entropy Collapse Layer as a universal pruning point and scoring tokens via their intrinsic information content, the method delivers substantial inference speed‑ups while maintaining near‑original accuracy, advancing the practical deployment of large multimodal models.

Comments & Academic Discussion

Loading comments...

Leave a Comment