The Bots of Persuasion: Examining How Conversational Agents' Linguistic Expressions of Personality Affect User Perceptions and Decisions

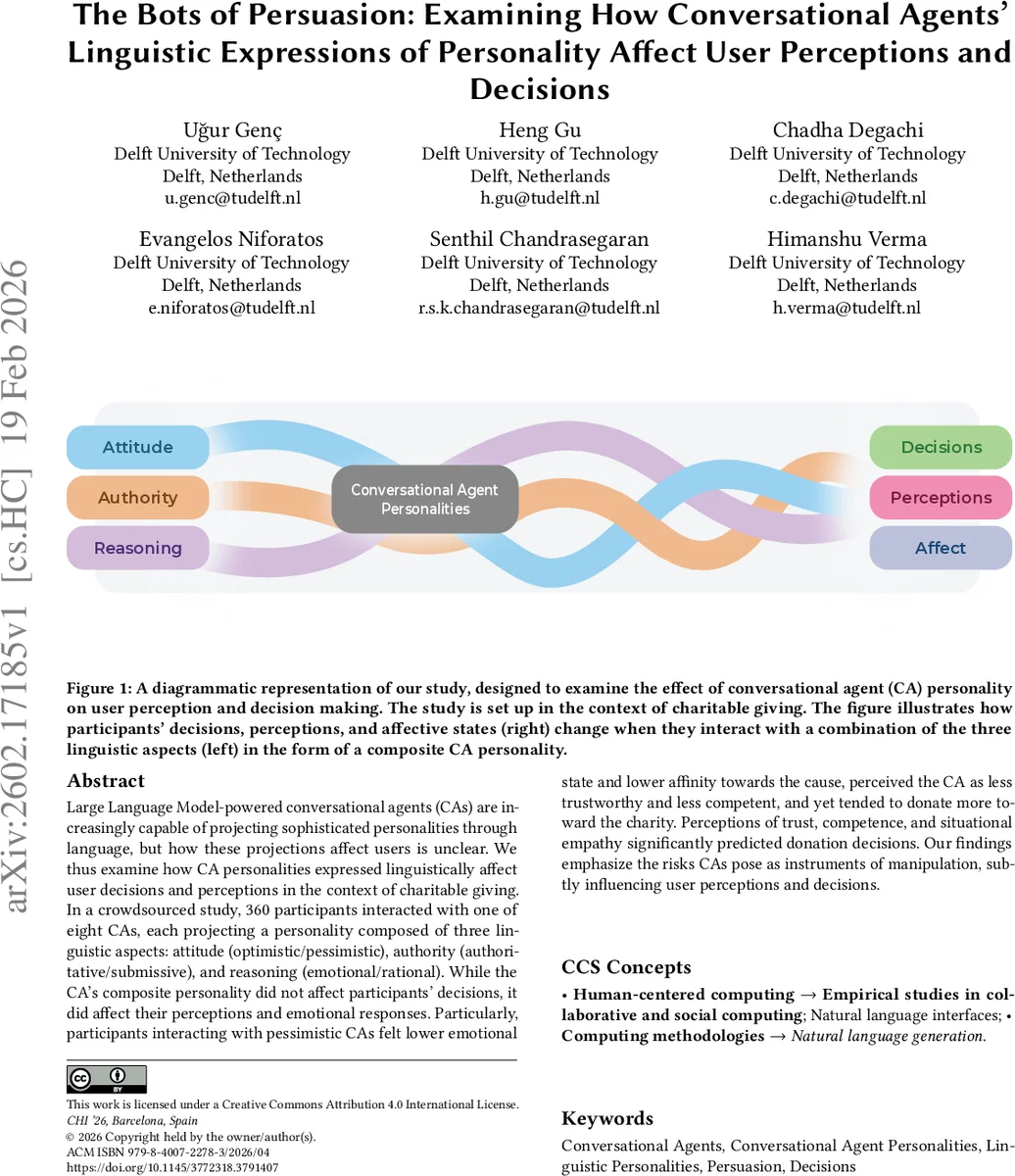

Large Language Model-powered conversational agents (CAs) are increasingly capable of projecting sophisticated personalities through language, but how these projections affect users is unclear. We thus examine how CA personalities expressed linguistically affect user decisions and perceptions in the context of charitable giving. In a crowdsourced study, 360 participants interacted with one of eight CAs, each projecting a personality composed of three linguistic aspects: attitude (optimistic/pessimistic), authority (authoritative/submissive), and reasoning (emotional/rational). While the CA’s composite personality did not affect participants’ decisions, it did affect their perceptions and emotional responses. In particular, participants interacting with pessimistic CAs reported a lower emotional state and lower affinity toward the cause, perceived the CA as less trustworthy and less competent, and yet tended to donate more to the charity. Perceptions of trust, competence, and situational empathy significantly predicted donation decisions. Our findings emphasize the risks CAs pose as instruments of manipulation, subtly influencing user perceptions and decisions.

💡 Research Summary

The paper investigates how the linguistic expression of personality in large‑language‑model (LLM) powered conversational agents (CAs) influences user perceptions, emotions, and charitable‑giving decisions. The authors define three binary personality dimensions—Attitude (optimistic vs. pessimistic), Authority (authoritative vs. submissive), and Reasoning (emotional vs. rational)—and combine them to create eight distinct CA personas. In a between‑subjects online experiment, 360 crowd‑sourced participants were randomly assigned to interact with one of the eight CAs in a short text‑based dialogue about a charitable cause. After the interaction, participants reported their current affective state, trust in the CA, perceived competence of the CA, situational empathy toward the cause, and their willingness to donate as well as the amount they would donate (using a virtual currency).
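The 2 × 2 × 2 factorial structure described above can be made concrete with a minimal sketch. The dimension names and levels come from the summary; the function names, the seeded shuffle, and the balanced round-robin assignment (45 participants per persona) are illustrative assumptions, not the authors' documented procedure.

```python
import itertools
import random

# Dimension names and levels as described in the study summary.
DIMENSIONS = {
    "attitude": ("optimistic", "pessimistic"),
    "authority": ("authoritative", "submissive"),
    "reasoning": ("emotional", "rational"),
}


def build_personas():
    """Return all 2 x 2 x 2 = 8 combinations of the three binary dimensions."""
    names = list(DIMENSIONS)
    return [
        dict(zip(names, levels))
        for levels in itertools.product(*DIMENSIONS.values())
    ]


def assign_participants(n_participants, personas, seed=0):
    """Hypothetical balanced between-subjects assignment.

    Shuffles participant IDs with a seeded RNG, then deals them
    round-robin across personas so group sizes differ by at most one.
    The paper may have used a different randomization scheme.
    """
    rng = random.Random(seed)
    ids = list(range(n_participants))
    rng.shuffle(ids)
    return {pid: personas[i % len(personas)] for i, pid in enumerate(ids)}


personas = build_personas()
assignment = assign_participants(360, personas)
```

Each participant ID maps to exactly one persona, which is what makes the design between-subjects: no participant sees more than one combination of attitude, authority, and reasoning.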

Statistical analysis (ANOVA and multiple regression) revealed that the composite CA personality did not have a direct effect on the binary donation decision or on the donation amount. However, the Attitude dimension had strong indirect effects: pessimistic CAs significantly lowered participants’ positive affect, reduced feelings of affinity toward the cause, and led to lower ratings of trustworthiness and competence. Paradoxically, participants who interacted with pessimistic CAs donated slightly more money (approximately a 12 % increase) than those who interacted with optimistic CAs. Regression models showed that trust, perceived competence, and situational empathy were significant predictors of donation amount, and mediation analyses indicated that these variables partially mediated the relationship between CA attitude and donation behavior.

The authors interpret these findings through the lens of “negative affect → reduced risk perception → compliance” mechanisms documented in persuasion literature. A pessimistic tone may create a sense of urgency or vulnerability, prompting users to compensate by donating, even while they feel less positively toward the agent and the cause. This indirect pathway highlights how CAs can influence behavior without overt persuasion, raising ethical concerns about “dark patterns” in conversational AI.

The paper contributes to HCI by (1) providing a systematic factorial manipulation of CA personality using LLM‑generated language, (2) demonstrating that personality cues shape user perceptions and emotions, which in turn affect prosocial behavior, and (3) flagging the potential for manipulative designs that exploit users’ psychological vulnerabilities. Limitations include the short, text‑only interaction, the single domain of charitable giving, and a participant pool drawn mainly from US‑based MTurk workers, which may limit generalizability across cultures and longer‑term engagements. Future work is suggested to explore other high‑stakes domains (healthcare, legal advice), longer multimodal interactions, and to develop automated tools for detecting manipulative linguistic patterns in AI‑generated dialogue. The authors call for design guidelines and policy measures that prioritize transparency, user autonomy, and safeguards against covert persuasion in conversational agents.
