B$^3$-Seg: Camera-Free, Training-Free 3DGS Segmentation via Analytic EIG and Beta-Bernoulli Bayesian Updates

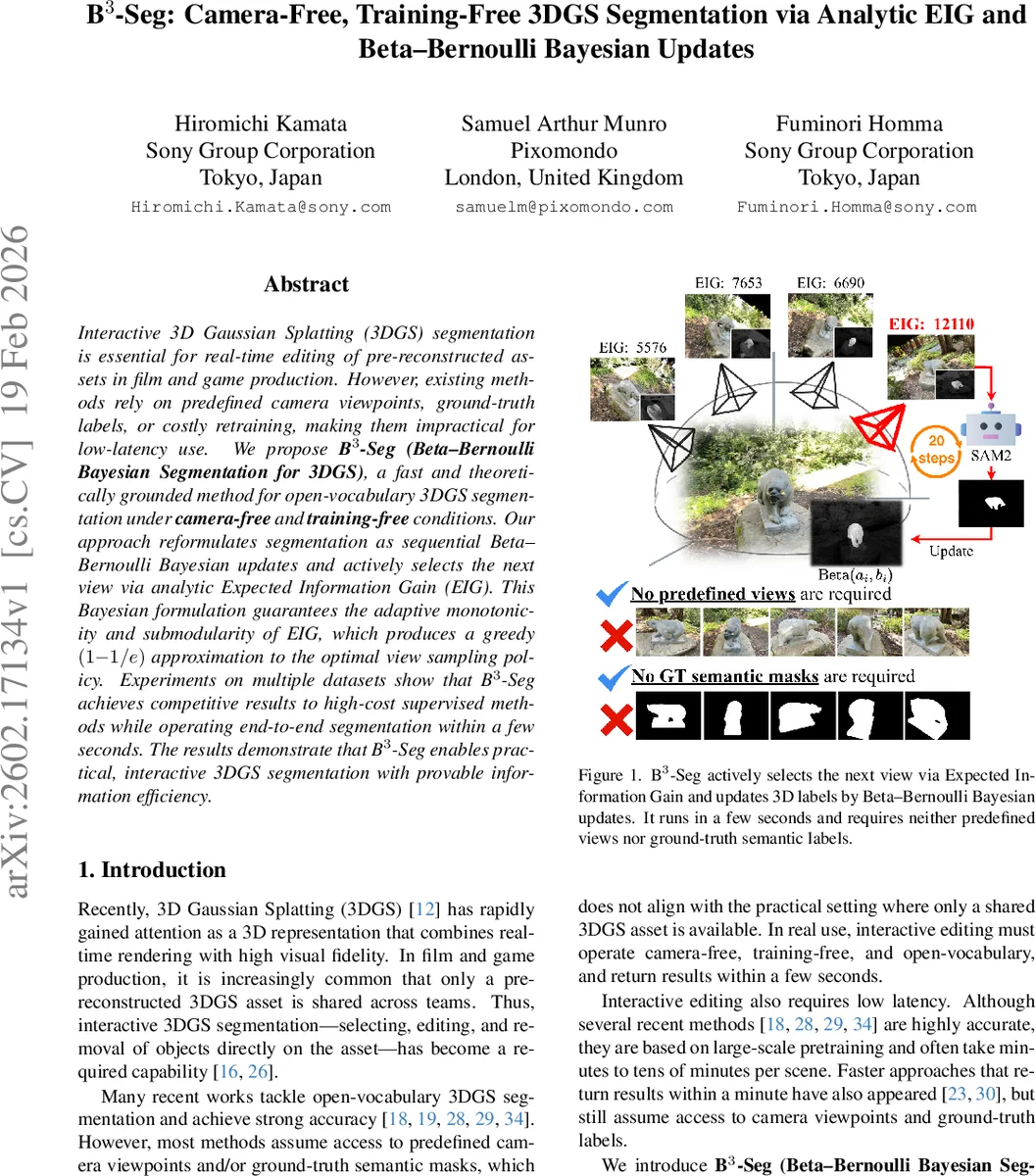

Interactive 3D Gaussian Splatting (3DGS) segmentation is essential for real-time editing of pre-reconstructed assets in film and game production. However, existing methods rely on predefined camera viewpoints, ground-truth labels, or costly retraining, making them impractical for low-latency use. We propose B$^3$-Seg (Beta-Bernoulli Bayesian Segmentation for 3DGS), a fast and theoretically grounded method for open-vocabulary 3DGS segmentation under camera-free and training-free conditions. Our approach reformulates segmentation as sequential Beta-Bernoulli Bayesian updates and actively selects the next view via analytic Expected Information Gain (EIG). This Bayesian formulation guarantees the adaptive monotonicity and submodularity of EIG, which produces a greedy $(1{-}1/e)$ approximation to the optimal view sampling policy. Experiments on multiple datasets show that B$^3$-Seg achieves competitive results to high-cost supervised methods while operating end-to-end segmentation within a few seconds. The results demonstrate that B$^3$-Seg enables practical, interactive 3DGS segmentation with provable information efficiency.

💡 Research Summary

**

The paper introduces B³‑Seg (Beta‑Bernoulli Bayesian Segmentation), a novel method for segmenting 3D Gaussian Splatting (3DGS) assets without requiring predefined camera viewpoints, ground‑truth masks, or any additional training. The authors reformulate the segmentation problem as a sequence of Bayesian updates on per‑Gaussian Bernoulli variables, each governed by a Beta prior. When a 2‑D view is rendered, the contribution of each Gaussian to the foreground or background is estimated using its opacity and transmittance; these contributions serve as pseudo‑counts of successes and failures, which update the Beta parameters analytically thanks to the conjugate Beta‑Bernoulli relationship.

To decide which view to render next, the method computes the Expected Information Gain (EIG) for a set of candidate viewpoints. EIG is defined as the expected reduction in the sum of Beta entropies after observing a view, and it can be evaluated analytically without actually segmenting the rendered image. The authors approximate the pseudo‑counts using the current Beta means, allowing a single render per candidate to estimate its EIG. The view with maximal EIG is selected greedily, rendered, and a high‑quality mask is obtained using a lightweight open‑vocabulary pipeline: Grounding DINO proposes text‑conditioned bounding boxes, SAM2 refines them into masks guided by a soft image generated from the current Beta means, and CLIP re‑ranks the masks against the user query. The resulting mask supplies exact pseudo‑counts for a final Bayesian update.

The paper provides rigorous theoretical analysis. Lemma 1 proves adaptive monotonicity: the expected information gain of any additional view is non‑negative because Beta entropy decreases as the total count increases. Lemma 2 establishes adaptive submodularity: the marginal gain of a view diminishes as more views have already been observed, due to the concave nature of the entropy reduction. Consequently, Theorem 3 shows that the greedy EIG‑based policy achieves a (1 − 1/e) approximation to the optimal adaptive view‑selection policy, invoking a classic result on adaptive submodular maximization.

Experiments are conducted on two public datasets, LERF‑Mask and 3D‑OVS, using a single RTX A6000 GPU. The pipeline runs for 20 iterations, sampling 20 candidate viewpoints per iteration, and initializes all Beta parameters to (1, 1). An initial mask is obtained from a canonical view using the same Grounding DINO + SAM2 + CLIP stack. Across all scenes, B³‑Seg attains Intersection‑over‑Union (IoU) scores comparable to or exceeding those of supervised methods such as Gaussian Grouping, OpenGaussian, and ObjectGS, while completing the entire process in a few seconds—orders of magnitude faster than methods that require minutes of optimization or multi‑view supervision. Qualitative results demonstrate cleaner, more complete masks, especially in cluttered environments where prior methods either miss parts of the object or include background noise.

Key contributions include: (1) a fully camera‑free, training‑free segmentation pipeline for 3DGS; (2) a unified probabilistic model that treats each Gaussian as a Bernoulli variable with a Beta posterior, enabling principled uncertainty handling; (3) an analytic EIG formulation that guides view selection efficiently and provably; (4) theoretical guarantees of adaptive monotonicity, submodularity, and a (1 − 1/e) greedy approximation; and (5) empirical evidence that the method matches high‑cost supervised baselines while operating in real‑time.

Limitations are acknowledged: the approach relies heavily on the quality of the 2‑D masks produced by the open‑vocabulary segmenter, and the choice of initial Beta hyper‑parameters can affect convergence speed. Future work may explore more robust mask generation, multi‑object extensions, automatic hyper‑parameter tuning, and tighter integration with interactive user interfaces. Overall, B³‑Seg offers a theoretically grounded, practically efficient solution for interactive 3DGS segmentation in production pipelines where speed and minimal supervision are paramount.

Comments & Academic Discussion

Loading comments...

Leave a Comment