Synergizing Transport-Based Generative Models and Latent Geometry for Stochastic Closure Modeling

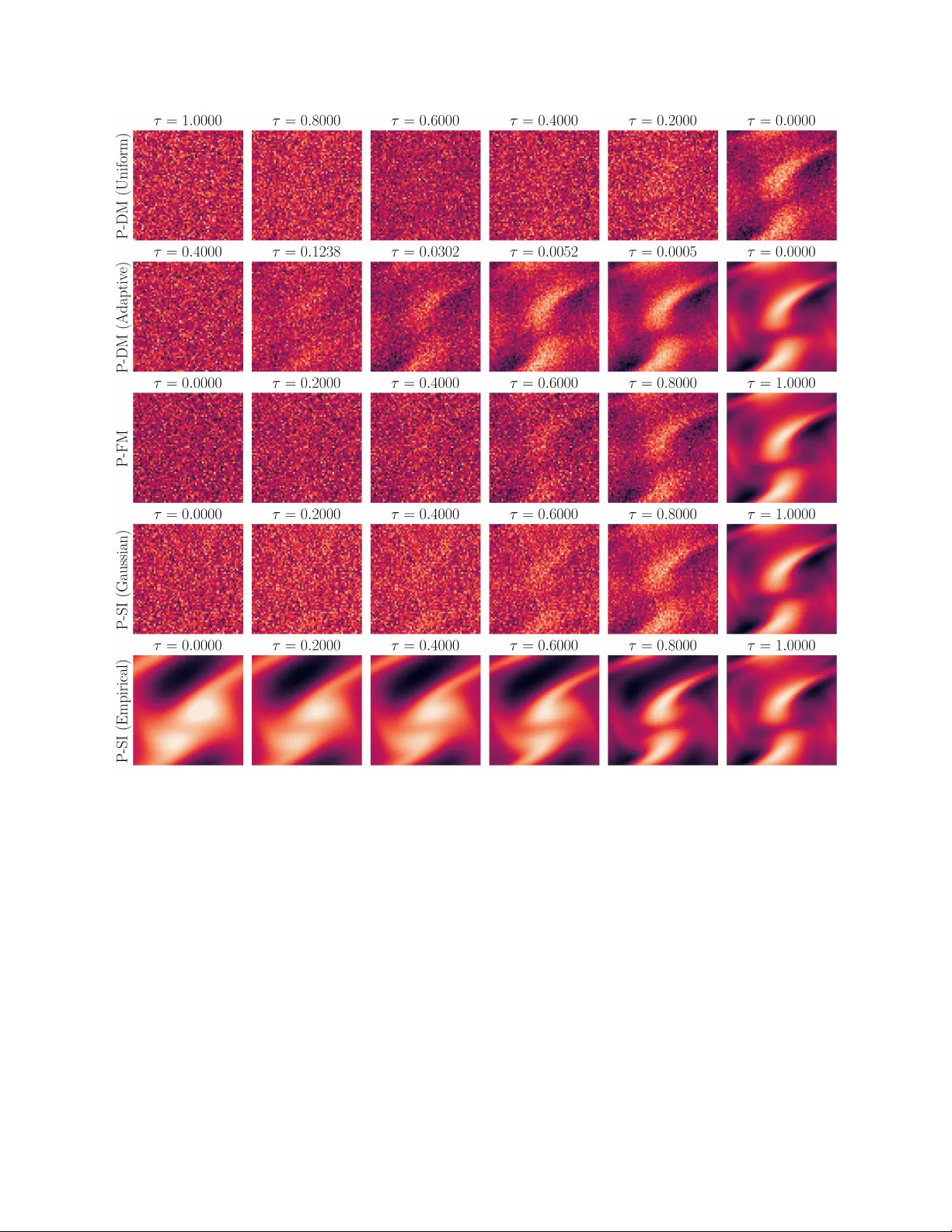

Diffusion models, recently developed for generative AI tasks, can produce high-quality samples while preserving sample diversity and thus mode coverage, which makes them a promising route to learning stochastic closure models. Compared to othe…

Authors: Xinghao Dong, Huchen Yang, Jin-long Wu