Calibrate-Then-Act: Cost-Aware Exploration in LLM Agents

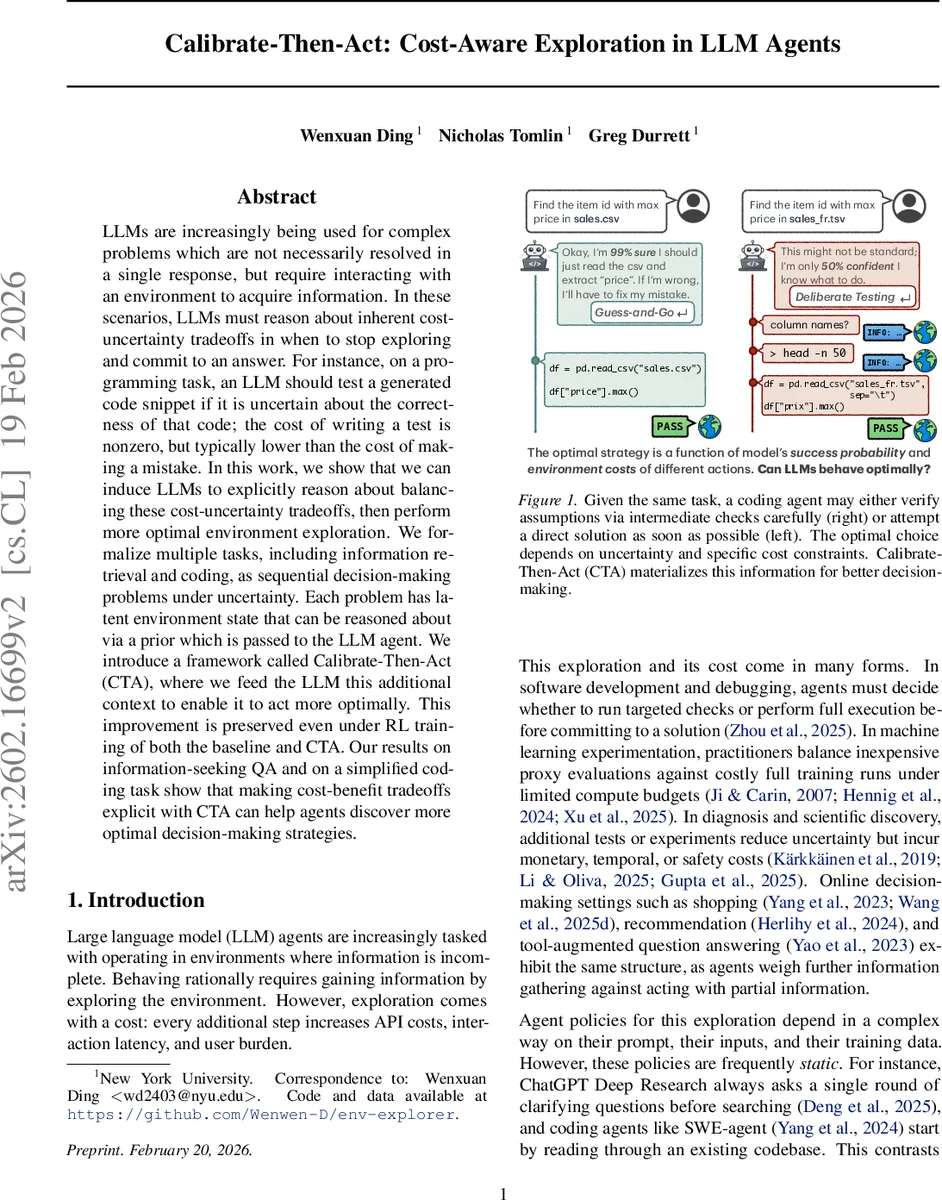

LLMs are increasingly being used for complex problems which are not necessarily resolved in a single response, but require interacting with an environment to acquire information. In these scenarios, LLMs must reason about inherent cost-uncertainty tradeoffs in when to stop exploring and commit to an answer. For instance, on a programming task, an LLM should test a generated code snippet if it is uncertain about the correctness of that code; the cost of writing a test is nonzero, but typically lower than the cost of making a mistake. In this work, we show that we can induce LLMs to explicitly reason about balancing these cost-uncertainty tradeoffs, then perform more optimal environment exploration. We formalize multiple tasks, including information retrieval and coding, as sequential decision-making problems under uncertainty. Each problem has latent environment state that can be reasoned about via a prior which is passed to the LLM agent. We introduce a framework called Calibrate-Then-Act (CTA), where we feed the LLM this additional context to enable it to act more optimally. This improvement is preserved even under RL training of both the baseline and CTA. Our results on information-seeking QA and on a simplified coding task show that making cost-benefit tradeoffs explicit with CTA can help agents discover more optimal decision-making strategies.

💡 Research Summary

The paper tackles a fundamental challenge for large language model (LLM) agents that must interact with an external environment: deciding when to keep exploring (incurring cost) and when to stop and commit to an answer. The authors formalize this as a sequential decision‑making problem under uncertainty, modeled as a partially observable Markov decision process (POMDP) with a discount factor Dθ that captures the cost of each exploratory action. The reward is given only upon a commit action and is multiplied by the accumulated discount, so the agent must balance information gain against exploration cost.

The central contribution is the Calibrate‑Then‑Act (CTA) framework. CTA separates (1) calibration, where an explicit prior distribution (\hat p(Z|x)) (or optionally a posterior estimate) about hidden world variables is computed by an auxiliary estimator and injected into the LLM’s prompt, and (2) action, where the LLM, now conditioned on this prior, selects actions using the same prompt‑based or RL‑based policy but with direct access to the quantitative uncertainty information. By making the prior explicit, the LLM can perform a form of Bayesian reasoning about the expected value of further exploration versus immediate commitment.

Three experimental domains are used to validate CTA:

-

Pandora’s Box Toy Problem – A classic optimal stopping problem where one of K boxes contains a prize of value 1, each inspection incurs a discount γ, and the agent knows the prior probabilities of each box containing the prize. The optimal policy is provably “inspect boxes in decreasing prior order, stop and commit when the posterior exceeds γ”. Using Qwen‑3‑8B, the authors show that a CTA‑prompted agent matches the oracle policy 94 % of the time and achieves an average reward of 0.625, whereas baseline prompted agents without priors (Prompted‑NT, Prompted) achieve near‑zero match rates and much lower rewards.

-

Knowledge‑QA with Optional Retrieval – The LLM receives a factual question and can either answer directly (using its parametric knowledge) or invoke a costly external retriever. The prior here is derived from the model’s own confidence score, interpreted as the probability of answering correctly without retrieval. CTA enables the model to defer to retrieval only when confidence is low, reducing API cost while maintaining or improving accuracy. The CTA‑prompted version outperforms a standard RL‑trained baseline (average reward 0.71 vs. 0.58) and shows more disciplined cost‑aware behavior.

-

Coding Task with Environmental Priors – In a simplified debugging scenario, the agent must decide whether to write and run unit tests, inspect file schemas, or directly output a fix. Priors about file structure, variable types, and typical bug patterns are estimated from past data and supplied to the prompt. CTA‑RL agents achieve a 15 % higher success rate than a baseline coding agent and demonstrate fewer unnecessary test executions, evidencing more efficient exploration.

Across all settings, the authors also evaluate a hybrid CTA‑RL approach, where the prior‑augmented prompt is used during reinforcement learning fine‑tuning. This yields consistent gains over pure RL, confirming that the explicit prior information is not easily learned end‑to‑end from reward signals alone.

Key insights include:

- Explicit priors dramatically improve LLM reasoning about cost‑benefit trade‑offs; without them, even strong LLMs fail to discover optimal stopping rules.

- CTA is lightweight: the prior estimator can be a simple classifier or confidence‑based model trained on existing data, and the augmented prompt can be used zero‑shot or with RL fine‑tuning.

- Cost functions are flexible; Dθ can encode latency, monetary API charges, or user fatigue, allowing CTA to be adapted to diverse real‑world deployment constraints.

- RL alone is insufficient for learning cost‑aware exploration when the underlying reward structure is sparse and the environment’s hidden state is complex.

The paper concludes that making uncertainty quantification explicit via priors is a practical and effective route to more rational, cost‑aware LLM agents. Future work may explore richer prior representations (e.g., hierarchical Bayesian models), automated prior‑estimation pipelines, and applications to domains such as scientific experimentation, recommendation systems, and autonomous tool use.

Comments & Academic Discussion

Loading comments...

Leave a Comment