SceneVGGT: VGGT-based online 3D semantic SLAM for indoor scene understanding and navigation

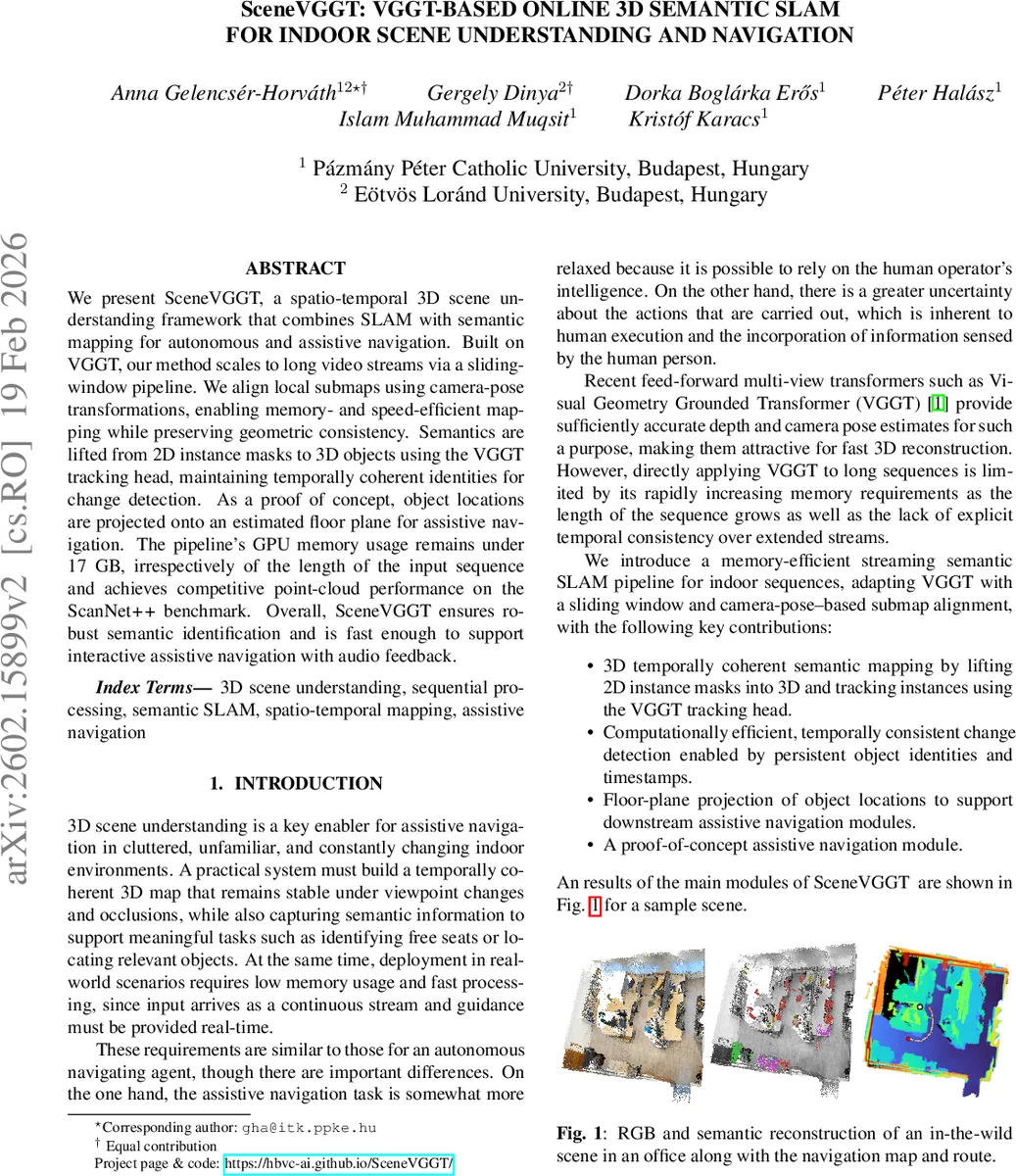

We present SceneVGGT, a spatio-temporal 3D scene understanding framework that combines SLAM with semantic mapping for autonomous and assistive navigation. Built on VGGT, our method scales to long video streams via a sliding-window pipeline. We align local submaps using camera-pose transformations, enabling memory- and speed-efficient mapping while preserving geometric consistency. Semantics are lifted from 2D instance masks to 3D objects using the VGGT tracking head, maintaining temporally coherent identities for change detection. As a proof of concept, object locations are projected onto an estimated floor plane for assistive navigation. The pipeline’s GPU memory usage remains under 17 GB, irrespectively of the length of the input sequence and achieves competitive point-cloud performance on the ScanNet++ benchmark. Overall, SceneVGGT ensures robust semantic identification and is fast enough to support interactive assistive navigation with audio feedback.

💡 Research Summary

SceneVGGT introduces a practical online 3D semantic SLAM system designed for indoor scene understanding and assistive navigation. Built upon the Visual Geometry Grounded Transformer (VGGT), the method overcomes VGGT’s inherent memory growth with sequence length by employing a sliding‑window streaming pipeline. Input video streams are partitioned into disjoint blocks of n frames; each block is processed together with k ≤ n overlapping keyframes from the previous block (the authors use k = n). Overlapping frames provide inter‑block pose constraints, allowing the system to compose relative transforms and align each new sub‑map with the accumulated global trajectory. Depth predictions from VGGT are scaled to metric units using concurrent LiDAR depth measurements; low‑confidence pixels and those outside the sensor’s reliable range are masked out to avoid corrupting alignment.

Semantic mapping is achieved by lifting 2‑D instance masks into 3‑D point clouds while preserving temporally consistent object identities. Instance segmentation masks are first eroded and uniformly sampled; the VGGT tracking head then propagates sampled points across frames within a block, assigning a persistent tracklet ID to each physical object. New objects appearing mid‑block are marked “untracked” until a future keyframe can initialize a tracklet. Tracklet matching across blocks uses Chamfer distance between per‑frame median point sets, with a 30 cm threshold for merging. Each tracklet stores its last observed frame index and a confidence score, enabling three states: RECENT (currently observed), REMOVED (confidence decayed to zero after missed detections), and RETAINED (out of view but still present). This mechanism provides change detection and temporal consistency without re‑projecting the entire accumulated foreground cloud.

For navigation, the system assumes a flat indoor floor. A RANSAC‑based plane estimator runs periodically on the reconstructed 3‑D cloud; if the plane changes beyond 5° or 0.1 m, the reference is updated and all points are re‑projected. The 3‑D cloud is then collapsed onto a 2‑D grid, where each cell stores the mean point density (points per observation) to reduce sensitivity to transient objects. Obstacles are derived from semantic masks; cells occupied by objects are dilated to account for the agent’s footprint, yielding a conservative free‑space map. The floor‑plane projection also produces a binary known/unknown mask based on height filtering; unknown cells are treated as non‑traversable. Goal selection for assistive navigation filters the map for a target class (e.g., chairs), discards small spurious components, and chooses the nearest centroid to the user. If no target is visible, frontier‑based exploration is invoked, while a human‑in‑the‑loop (e.g., a white cane) handles navigation in truly unknown regions. Audio feedback conveys the selected goal and navigation instructions.

Experimental evaluation on the 7‑Scenes dataset follows the StreamVGGT protocol, reporting Root Mean Square Error of the Average Trajectory Error (RMSE‑ATE) and reconstruction metrics (accuracy, completeness, normal consistency). SceneVGGT achieves comparable or better performance than prior streaming methods (StreamVGGT, InfiniteVGGT) while keeping peak GPU memory usage under 17 GB regardless of sequence length. Semantic performance is assessed on ScanNet++ validation and test splits using ground‑truth instance masks for nine frequent categories (aligned with COCO). ID consistency is measured by the dominance ratio, showing high stability of tracklet IDs across frames. A proof‑of‑concept assistive navigation demo demonstrates successful seat‑finding in office scenes, with real‑time audio guidance.

In summary, SceneVGGT delivers a memory‑bounded, computationally efficient pipeline that unifies dense 3‑D reconstruction, temporally coherent semantic instance tracking, and floor‑plane‑based 2‑D navigation. By leveraging VGGT’s depth and pose predictions, a sliding‑window alignment strategy, and a lightweight tracking head, the system scales to long video streams while maintaining geometric and semantic consistency. This makes it well‑suited for embodied agents, indoor robots, and assistive devices that require continuous, real‑time scene understanding and navigation support.

Comments & Academic Discussion

Loading comments...

Leave a Comment