Accelerating Large-Scale Dataset Distillation via Exploration-Exploitation Optimization

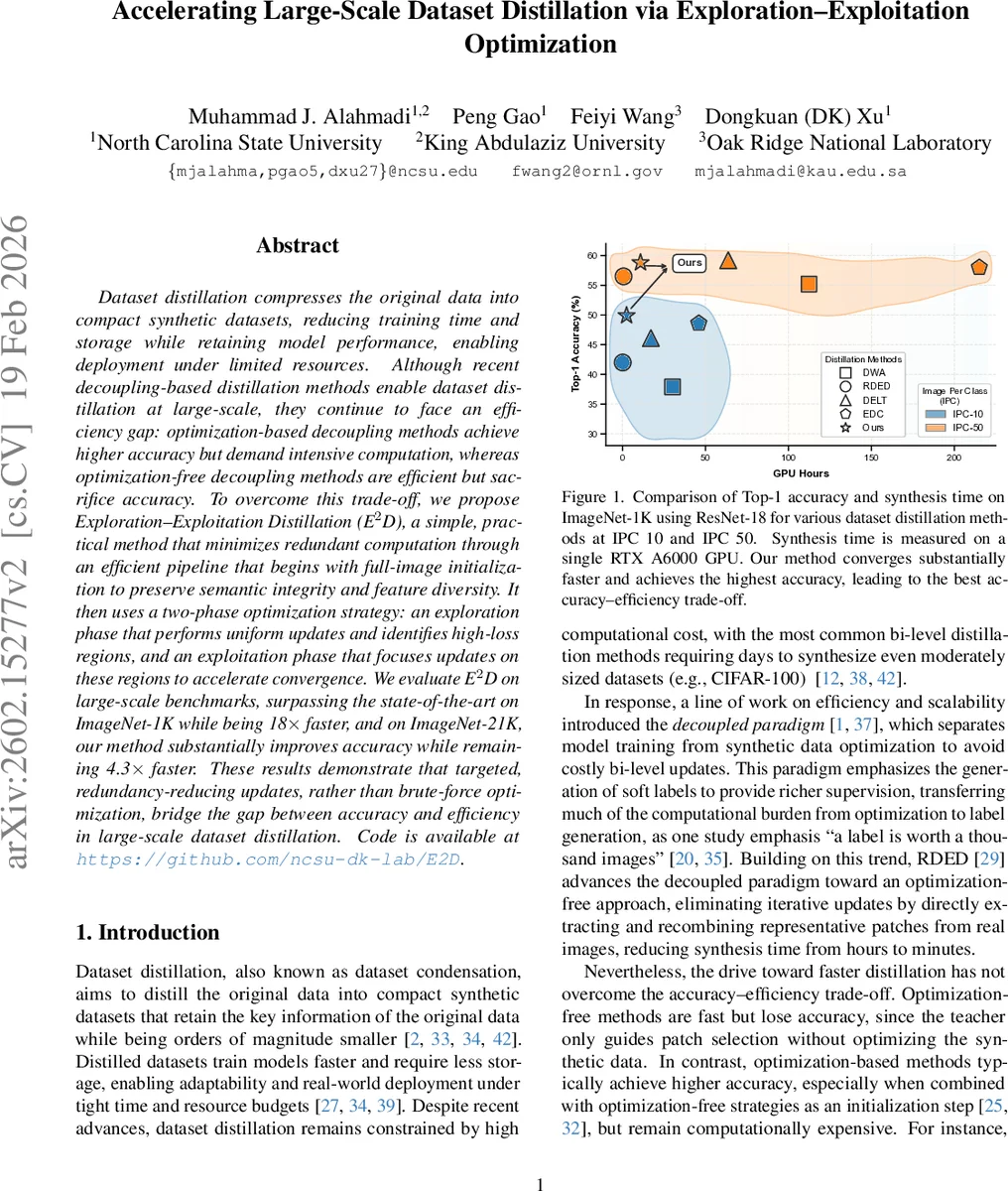

Dataset distillation compresses the original data into compact synthetic datasets, reducing training time and storage while retaining model performance, enabling deployment under limited resources. Although recent decoupling-based distillation methods enable dataset distillation at large scale, they continue to face an efficiency gap: optimization-based decoupling methods achieve higher accuracy but demand intensive computation, whereas optimization-free decoupling methods are efficient but sacrifice accuracy. To overcome this trade-off, we propose Exploration–Exploitation Distillation (E$^2$D), a simple, practical method that minimizes redundant computation through an efficient pipeline that begins with full-image initialization to preserve semantic integrity and feature diversity. It then uses a two-phase optimization strategy: an exploration phase that performs uniform updates and identifies high-loss regions, and an exploitation phase that focuses updates on these regions to accelerate convergence. We evaluate E$^2$D on large-scale benchmarks, surpassing the state-of-the-art on ImageNet-1K while being $18\times$ faster, and on ImageNet-21K, our method substantially improves accuracy while remaining $4.3\times$ faster. These results demonstrate that targeted, redundancy-reducing updates, rather than brute-force optimization, bridge the gap between accuracy and efficiency in large-scale dataset distillation. Code is available at https://github.com/ncsu-dk-lab/E2D.

💡 Research Summary

This paper addresses the long‑standing accuracy‑efficiency trade‑off in large‑scale dataset distillation. Existing approaches fall into two extremes: optimization‑based methods achieve high accuracy but require prohibitive GPU hours, while optimization‑free methods are fast but suffer substantial performance loss. The authors identify “redundancy” as the root cause of inefficiency, arising from (1) patch‑based initialization that generates many similar crops, reducing diversity, and (2) uniform gradient updates that treat every region of the synthetic data as equally important, leading to unnecessary computation. To eliminate these sources of redundancy, they propose Exploration‑Exploitation Distillation (E²D).

E²D introduces full‑image initialization instead of small patches. By starting from whole images, each synthetic sample preserves the semantic and structural information of the original data, providing strong class‑level signals from the outset and dramatically reducing the amount of corrective optimization needed later.

The core of E²D is a two‑phase optimization strategy. In the Exploration phase, the method performs K iterations of random multi‑crop updates on each synthetic image, computing the teacher model’s loss for each crop. Crops whose loss exceeds a predefined threshold ε are stored in a per‑image memory buffer together with their coordinates and loss values. This process builds an “uncertainty map” that highlights high‑loss regions needing further attention.

During the Exploitation phase, optimization shifts focus to these high‑loss regions. Crops are sampled from the buffer with probabilities proportional to their stored loss (softmax over loss values). Only the selected crops are updated, and after each update the loss is recomputed; crops whose loss falls below ε are removed from the buffer. This targeted refinement concentrates computation on the most informative parts of the synthetic data, accelerating convergence and preventing the wasteful repetition of low‑impact updates.

The authors also observe that global‑statistics alignment (e.g., matching batch‑norm means and variances) behaves differently depending on the state of the synthetic features. When features are far from the target distribution (as with patch‑based initialization), global alignment reduces redundancy. However, once the features are already well‑aligned (as with full‑image initialization), continued global alignment amplifies shared class‑level signals and erodes instance‑level diversity. Consequently, E²D limits global updates after the initial correction and relies on the focused exploitation of high‑loss regions to preserve diversity.

An accelerated learning schedule is applied during student model training, adjusting learning rates and epoch counts to further reduce total synthesis time.

Extensive experiments on ImageNet‑1K and ImageNet‑21K validate the approach. On ImageNet‑1K with ResNet‑18 teachers and students, E²D achieves state‑of‑the‑art Top‑1 accuracy at IPC 10 and IPC 50 while being up to 18× faster than the previous best method. On ImageNet‑21K, it improves accuracy by up to +9.6% and remains 4.3× faster. Cosine‑similarity analysis across classes shows that E²D consistently yields the lowest intra‑class similarity, indicating superior diversity.

In summary, E²D bridges the accuracy‑efficiency gap by (1) eliminating redundant initialization through full‑image seeding, and (2) employing a principled exploration‑exploitation optimization that concentrates updates on high‑loss regions. This results in a practical, scalable dataset distillation pipeline suitable for real‑world scenarios where computational resources are limited.

Comments & Academic Discussion

Loading comments...

Leave a Comment