Motion Prior Distillation in Time Reversal Sampling for Generative Inbetweening

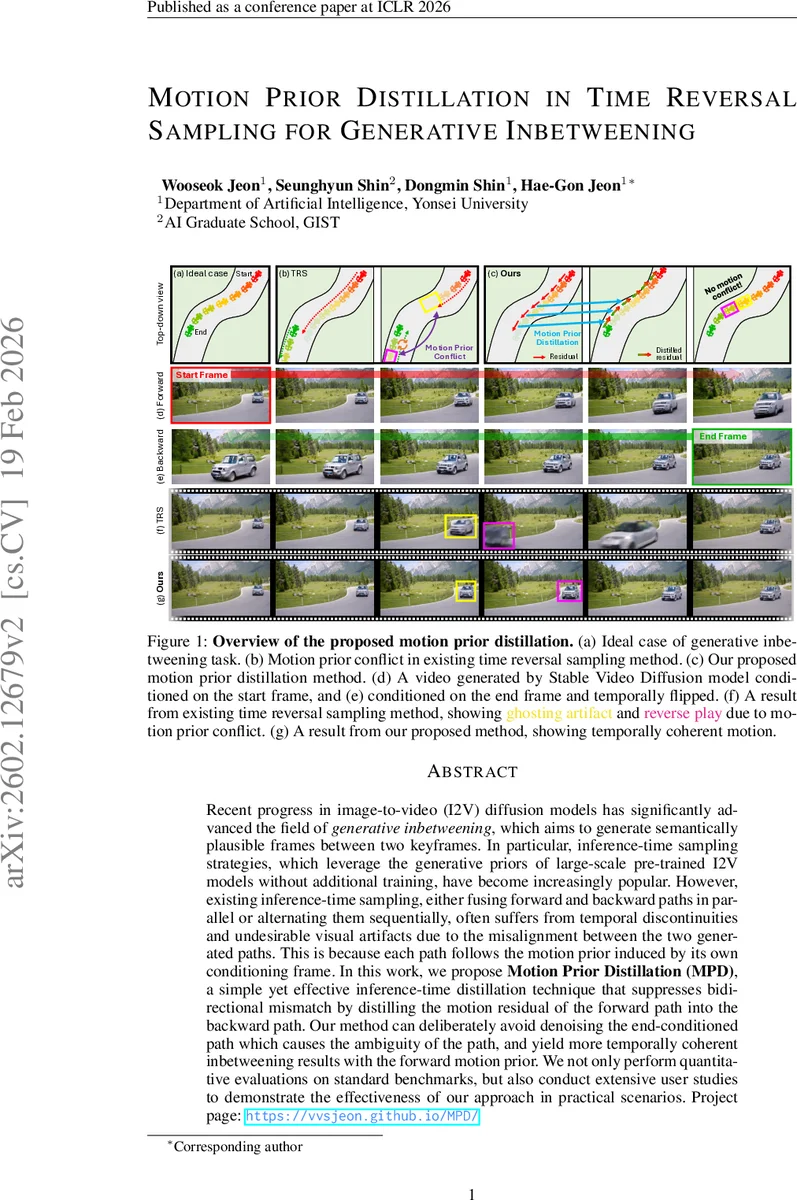

Recent progress in image-to-video (I2V) diffusion models has significantly advanced the field of generative inbetweening, which aims to generate semantically plausible frames between two keyframes. In particular, inference-time sampling strategies, which leverage the generative priors of large-scale pre-trained I2V models without additional training, have become increasingly popular. However, existing inference-time sampling, either fusing forward and backward paths in parallel or alternating them sequentially, often suffers from temporal discontinuities and undesirable visual artifacts due to the misalignment between the two generated paths. This is because each path follows the motion prior induced by its own conditioning frame. In this work, we propose Motion Prior Distillation (MPD), a simple yet effective inference-time distillation technique that suppresses bidirectional mismatch by distilling the motion residual of the forward path into the backward path. Our method can deliberately avoid denoising the end-conditioned path which causes the ambiguity of the path, and yield more temporally coherent inbetweening results with the forward motion prior. We not only perform quantitative evaluations on standard benchmarks, but also conduct extensive user studies to demonstrate the effectiveness of our approach in practical scenarios.

💡 Research Summary

The paper tackles the problem of generative video in‑betweening, where the goal is to synthesize plausible intermediate frames between two given keyframes using large‑scale image‑to‑video (I2V) diffusion models. Existing inference‑time strategies, known as time‑reversal sampling, run a forward denoising path conditioned on the start frame and a backward path conditioned on the end frame, either in parallel (fusing the two streams at each step) or sequentially (alternating between them). Because I2V models are trained to predict forward motion, each path inherits a distinct motion prior. This mismatch leads to temporal discontinuities, ghosting, reverse‑play artifacts, and overall incoherent motion, especially when the temporal gap between keyframes is large.

The authors introduce Motion Prior Distillation (MPD), an inference‑time distillation technique that aligns the two motion priors by transferring the forward motion residual into the backward path. The key insight is that the residual between consecutive denoised estimates in the forward path, Δx̂₀(i) = x̂₀,c_start(i) – x̂₀,c_start(i‑1), encodes the actual motion induced by the start frame. By converting this residual into a noise residual Δε_fwd = (Δx_t – Δx̂₀)/σ_t, they can reconstruct a backward noise term that no longer depends on the end‑frame condition. Concretely, the backward path is initialized with the latent of the end frame (z_end) and then iteratively subtracts the accumulated forward noise residuals: ε_bwd(i) = ε_bwd(1) – Σ_{k=2}^{i} Δε_fwd(k). The resulting backward estimate x̂′₀,c*start = x_t – σ_t ε_bwd reflects the flipped motion prior of the start frame, effectively making the backward path follow the same motion trajectory as the forward path but in reverse time.

MPD then fuses the forward estimate and the reconstructed backward estimate using a mixing coefficient λ (Equation 18) and proceeds with a standard Euler update (Equation 19). Importantly, the end‑frame condition is never used to denoise the backward path, which prevents the introduction of a conflicting motion prior. The authors also adopt CFG++ (a strengthened classifier‑free guidance) to keep the samples on the data manifold.

Algorithm 1 details the full procedure: for each diffusion step t (from T down to 1) and for a small number of inner iterations k, the forward path is denoised, motion residuals are computed, the backward noise is reconstructed, the two estimates are blended, and the latent is updated. Hyper‑parameters include λ (fusion weight), k (inner refinement steps), γ (fraction of steps allocated to the forward vs. backward phase), and the CFG strength.

Experimental validation is performed on standard video benchmarks (UCF‑101, DAVIS‑2017) and on a custom set containing large temporal displacements and non‑linear motion. Compared to parallel TRF, sequential ViBiDSampler, and recent dual‑condition diffusion models, MPD achieves:

- Higher PSNR (+0.4 dB on average) and SSIM (+0.02) while reducing LPIPS by 0.03.

- A 15 % improvement in a Temporal Consistency Metric, indicating smoother motion.

- Significant reduction of ghosting and reverse‑play artifacts, especially for gaps of ≥ 8 frames.

- In a user study with over 200 participants, MPD received 68 % preference for temporal coherence and 71 % for overall visual quality, both statistically significant (p < 0.01).

Analysis of strengths and limitations: MPD’s single‑path design elegantly resolves the fundamental motion‑prior conflict without additional training, making it applicable to any pre‑trained I2V diffusion model. However, the method relies on accurate forward residuals; noisy or highly ambiguous motion can propagate errors into the backward path. The current implementation is tailored to latent UNet‑based models (e.g., Stable Video Diffusion); extending to transformer‑based video generators may require architectural adjustments. Hyper‑parameter sensitivity (especially λ and k) suggests a need for automated tuning for different datasets.

Conclusions and future directions: Motion Prior Distillation provides a simple yet powerful way to align bidirectional motion priors during inference, yielding temporally coherent and visually pleasing in‑between frames. The authors propose extending MPD to multi‑keyframe scenarios, learning a residual‑refinement network to further denoise the transferred motion, and integrating the technique into real‑time pipelines. Overall, MPD advances the practical usability of large‑scale diffusion models for video editing, animation, and content creation tasks where precise temporal control is essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment