Learning Perceptual Representations for Gaming NR-VQA with Multi-Task FR Signals

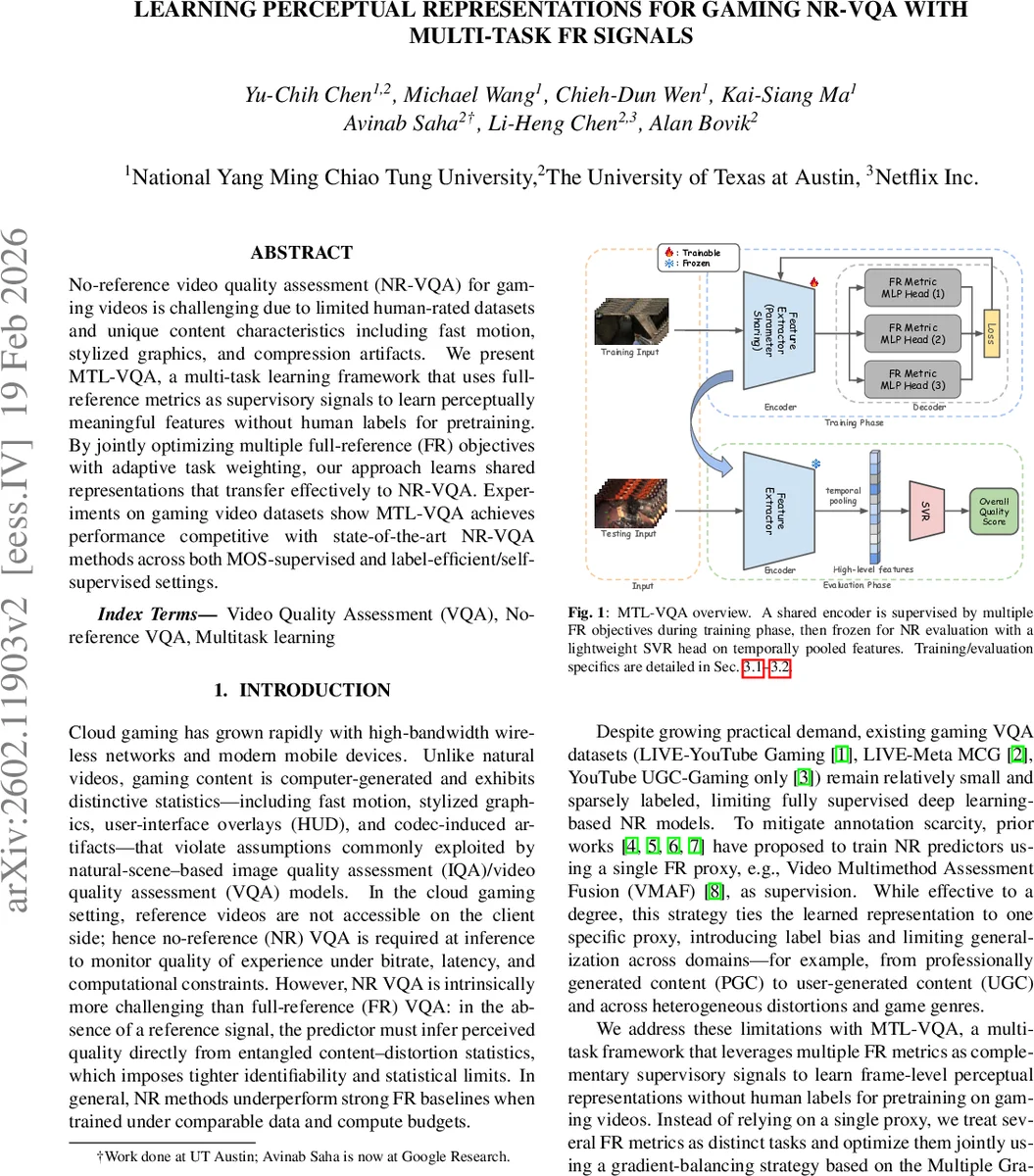

No-reference video quality assessment (NR-VQA) for gaming videos is challenging due to limited human-rated datasets and unique content characteristics including fast motion, stylized graphics, and compression artifacts. We present MTL-VQA, a multi-task learning framework that uses full-reference metrics as supervisory signals to learn perceptually meaningful features without human labels for pretraining. By jointly optimizing multiple full-reference (FR) objectives with adaptive task weighting, our approach learns shared representations that transfer effectively to NR-VQA. Experiments on gaming video datasets show MTL-VQA achieves performance competitive with state-of-the-art NR-VQA methods across both MOS-supervised and label-efficient/self-supervised settings.

💡 Research Summary

The paper tackles the problem of no‑reference video quality assessment (NR‑VQA) for gaming videos, a domain that suffers from scarce human‑rated datasets and distinctive visual characteristics such as rapid motion, stylized graphics, HUD overlays, and compression artifacts. To overcome the lack of MOS labels and the domain shift between natural‑scene models and computer‑generated game content, the authors propose MTL‑VQA, a multi‑task learning framework that uses multiple full‑reference (FR) quality metrics as supervisory signals during a pre‑training phase.

In the pre‑training stage, the authors collect three professional‑generated gaming video datasets (GamingVideoSET, KUGVD, CGVDS) and generate additional distorted versions by encoding each reference at five bitrate levels (0.25–5 Mbps) using ffmpeg. This yields roughly 885 000 frames, each annotated with four FR metrics: SSIM, MS‑SSIM, VMAF, and FovVideoVDP. A shared ResNet‑50 encoder extracts frame‑level embeddings, and lightweight MLP heads predict each FR metric from the shared representation. The loss for each task is a Smooth‑L1 regression loss. To prevent any single task from dominating the shared encoder, the authors compute per‑task gradients and solve a MinNorm optimization problem (the Multiple Gradient Descent Algorithm) to obtain non‑negative weights that minimize the L2 norm of the weighted gradient sum. The weighted sum of the task losses is then back‑propagated to update both the encoder and the task heads.

After this proxy‑supervised pre‑training, the encoder is frozen. For NR evaluation on target datasets, the authors apply a simple temporal mean pooling over frame embeddings to obtain a clip‑level descriptor, and train only a lightweight regressor (either an RBF‑kernel SVR or a Ridge regression) on a small number of MOS‑labeled clips. This design yields a highly label‑efficient adaptation: with as few as 10–100 labeled clips, the model reaches performance comparable to fully supervised NR‑VQA methods.

The evaluation uses three disjoint test sets: LIVE‑Meta MCG (PGC), LIVE‑YouTube Gaming (PGC), and YouTube UGC‑Gaming (user‑generated content). Three protocols are examined: zero‑shot (no target labels, only a source MOS head), few‑shot (K ∈ {10,20,50,100} target MOS samples), and standard 80/20 splits with cross‑validation. Across all protocols, MTL‑VQA consistently outperforms single‑proxy baselines (e.g., VMAF‑only) and matches or exceeds state‑of‑the‑art NR‑VQA models such as GAMIV‑AL, CONVIQT, and DO‑VER++. Notably, on the challenging YouTube UGC‑Gaming set, MTL‑VQA attains an SRCC of 0.8292 and a PLCC of 0.9301 with only 100 labeled clips, demonstrating strong transferability despite the domain shift from PGC to UGC.

Ablation studies confirm that adding complementary FR tasks (SSIM, MS‑SSIM, VMAF) yields an average SRCC gain of about 0.054 across benchmarks, validating the benefit of multi‑proxy supervision. The adaptive weighting via MinNorm proves more effective than fixed‑weight multi‑loss baselines, reducing proxy‑specific bias.

From a systems perspective, the authors keep the computational load low: frames are down‑sampled to 960 × 540, only one frame per two is processed, and the backbone is a standard ResNet‑50. At inference time, no reference video is required, and the lightweight SVR head enables real‑time quality monitoring suitable for cloud‑gaming QoE pipelines. The code and pretrained models will be released for reproducibility.

In summary, MTL‑VQA introduces a principled multi‑task pre‑training strategy that leverages multiple FR metrics to learn a robust, quality‑aware representation without human labels, and then adapts this representation to NR‑VQA with minimal MOS data. This approach addresses both the data scarcity and domain‑specific challenges of gaming video quality assessment, offering a practical solution for real‑world cloud‑gaming services.

Comments & Academic Discussion

Loading comments...

Leave a Comment