BrainRVQ: A High-Fidelity EEG Foundation Model via Dual-Domain Residual Quantization and Hierarchical Autoregression

Developing foundation models for electroencephalography (EEG) remains challenging due to the signal's low signal-to-noise ratio and complex spectro-temporal non-stationarity. Existing approaches often overlook the hierarchical latent structure inhere…

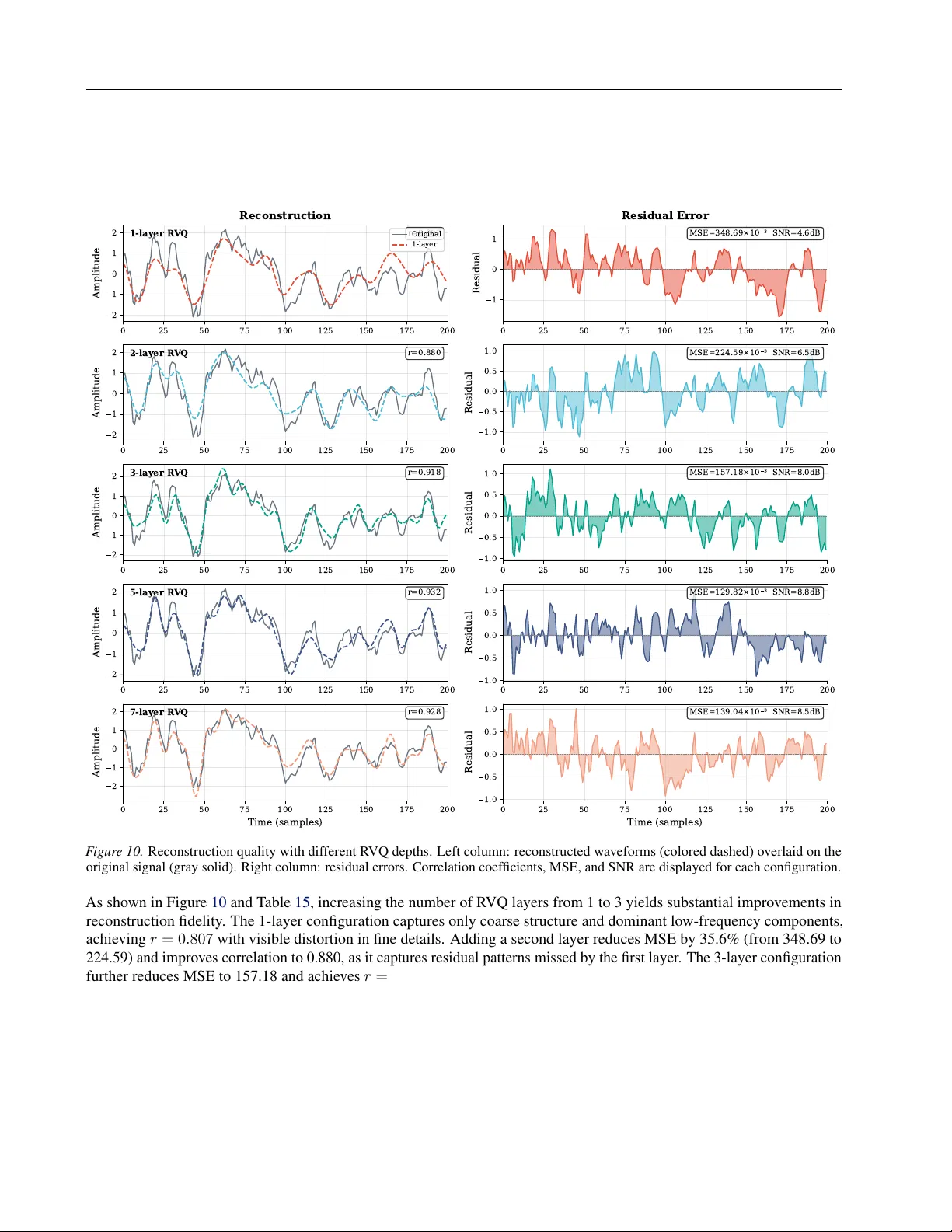

Authors: Mingzhe Cui, Tao Chen, Yang Jiao