Learning Distributed Equilibria in Linear-Quadratic Stochastic Differential Games: An $\alpha$-Potential Approach

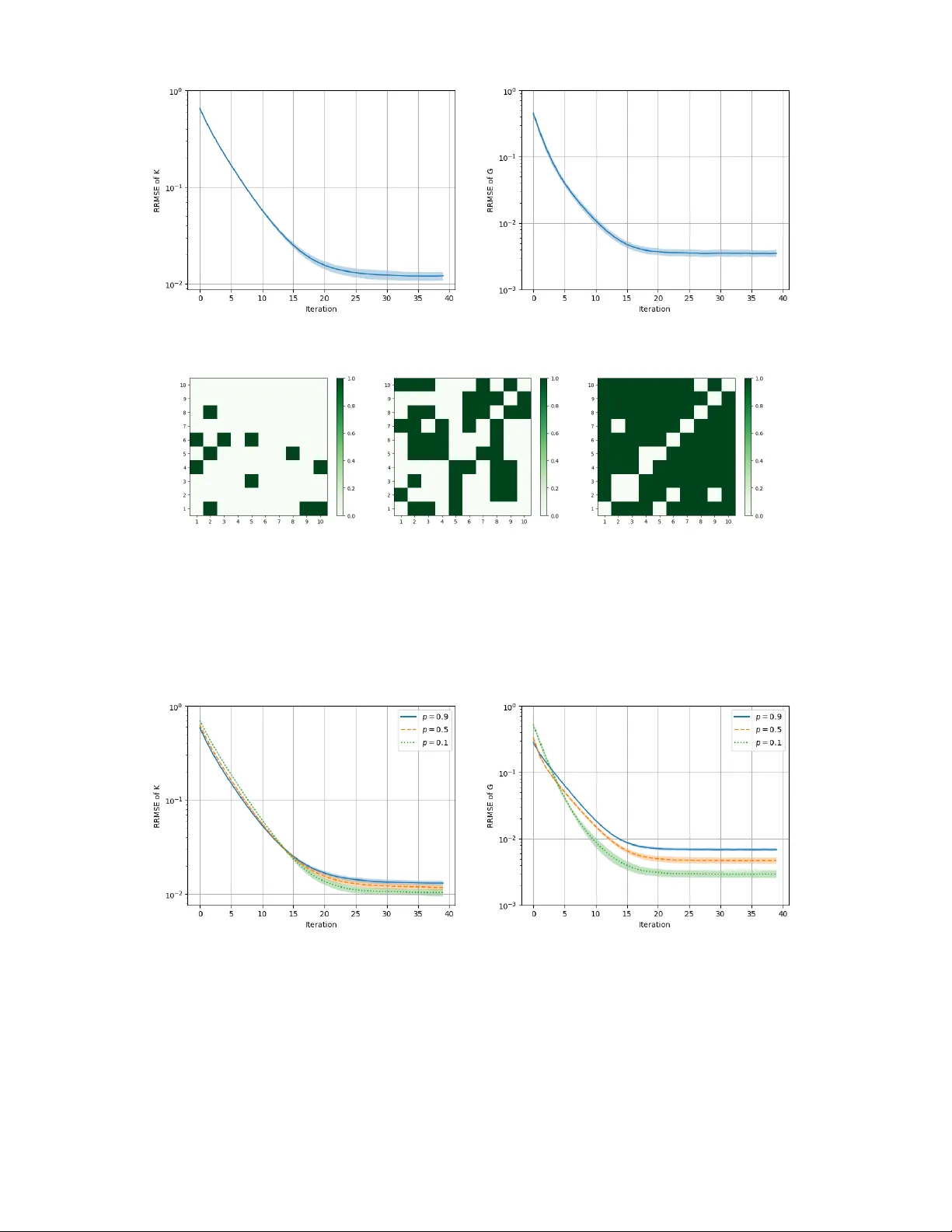

We analyze independent policy-gradient (PG) learning in $N$-player linear-quadratic (LQ) stochastic differential games. Each player employs a distributed policy that depends only on its own state and updates the policy independently using the gradien…

Authors: Philipp Plank, Yufei Zhang
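The abstract is cut off, so the following is only a toy illustration of independent policy-gradient play with distributed linear feedback policies. It is not the paper's model or algorithm. The scalar dynamics $dX_i = (aX_i + bU_i)\,dt + \sigma\,dW_i$, the policies $U_i = -k_i X_i$ with constant gains, the cost $J_i = \mathbb{E}\int_0^T \big(qX_i^2 + rU_i^2 + \lambda (X_i - \bar X)^2\big)\,dt$, all parameter values, and the function names (`moments`, `costs`, `own_gradients`, `independent_pg`) are hypothetical. The use of each player's *own*-cost gradient, estimated here by finite differences, is likewise an assumption. Because the policies are linear and the noises independent, each player's cost can be computed from closed-form means and variances, so the sketch needs no Monte Carlo simulation.

```python
import numpy as np

# Hypothetical toy model (not taken from the paper): scalar states
#   dX_i = (a X_i + b U_i) dt + sigma dW_i,  U_i = -k_i X_i  (distributed policy),
# with independent Brownian motions W_i and deterministic initial states x0_i.
A, B, SIGMA = 0.2, 1.0, 0.5
Q, R, LAM, T = 1.0, 1.0, 0.5, 1.0
X0 = np.array([1.0, -1.0, 0.8])
TGRID = np.linspace(0.0, T, 201)


def moments(k):
    """Mean and variance of each X_i(t) on TGRID under constant gains k (shape (N,))."""
    c = (A - B * k)[:, None]                  # closed-loop drift coefficient per player
    t = TGRID[None, :]
    mean = X0[:, None] * np.exp(c * t)
    z = 2.0 * c * t
    small = np.abs(z) < 1e-8
    z_safe = np.where(small, 1.0, z)          # avoid 0/0 at t = 0
    ratio = np.where(small, 1.0 + z / 2.0, np.expm1(z) / z_safe)  # (e^z - 1)/z
    var = SIGMA**2 * t * ratio                # = sigma^2 (e^{2ct} - 1) / (2c)
    return mean, var


def costs(k):
    """Expected cost J_i of every player i, via trapezoidal integration in time."""
    n = len(k)
    mean, var = moments(k)
    second = mean**2 + var                    # E[X_i(t)^2]
    # E[(X_i - Xbar)^2] = (mean_i - meanbar)^2 + Var(X_i - Xbar); noises independent
    dev_var = (1 - 1 / n) ** 2 * var + (var.sum(axis=0) - var) / n**2
    dev = (mean - mean.mean(axis=0)) ** 2 + dev_var
    running = (Q + R * k[:, None] ** 2) * second + LAM * dev  # E[U_i^2] = k_i^2 E[X_i^2]
    dt = np.diff(TGRID)
    return 0.5 * ((running[:, 1:] + running[:, :-1]) * dt).sum(axis=1)


def own_gradients(k, h=1e-5):
    """dJ_i/dk_i for each i: central differences in player i's own gain only."""
    grads = np.empty_like(k)
    for i in range(len(k)):
        e = np.zeros_like(k)
        e[i] = h
        grads[i] = (costs(k + e)[i] - costs(k - e)[i]) / (2 * h)
    return grads


def independent_pg(k0, lr=0.05, iters=2000):
    """Simultaneous independent steps: each player descends its own cost."""
    k = np.array(k0, dtype=float)
    for _ in range(iters):
        k = k - lr * own_gradients(k)
    return k


if __name__ == "__main__":
    k_star = independent_pg(np.zeros(3))
    print("gains:", k_star)
    print("own-cost gradients:", own_gradients(k_star))
```

Each player only perturbs its own gain $k_i$ when estimating its gradient, which mirrors the "independent" character of the updates. At a limit point, every player's own-cost gradient vanishes, so no player can lower its cost by a small unilateral change of gain. This is a Nash condition within the class of constant linear feedback policies, not a claim about the equilibria studied in the paper.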