Two-way Clustering Robust Variance Estimator in Quantile Regression Models

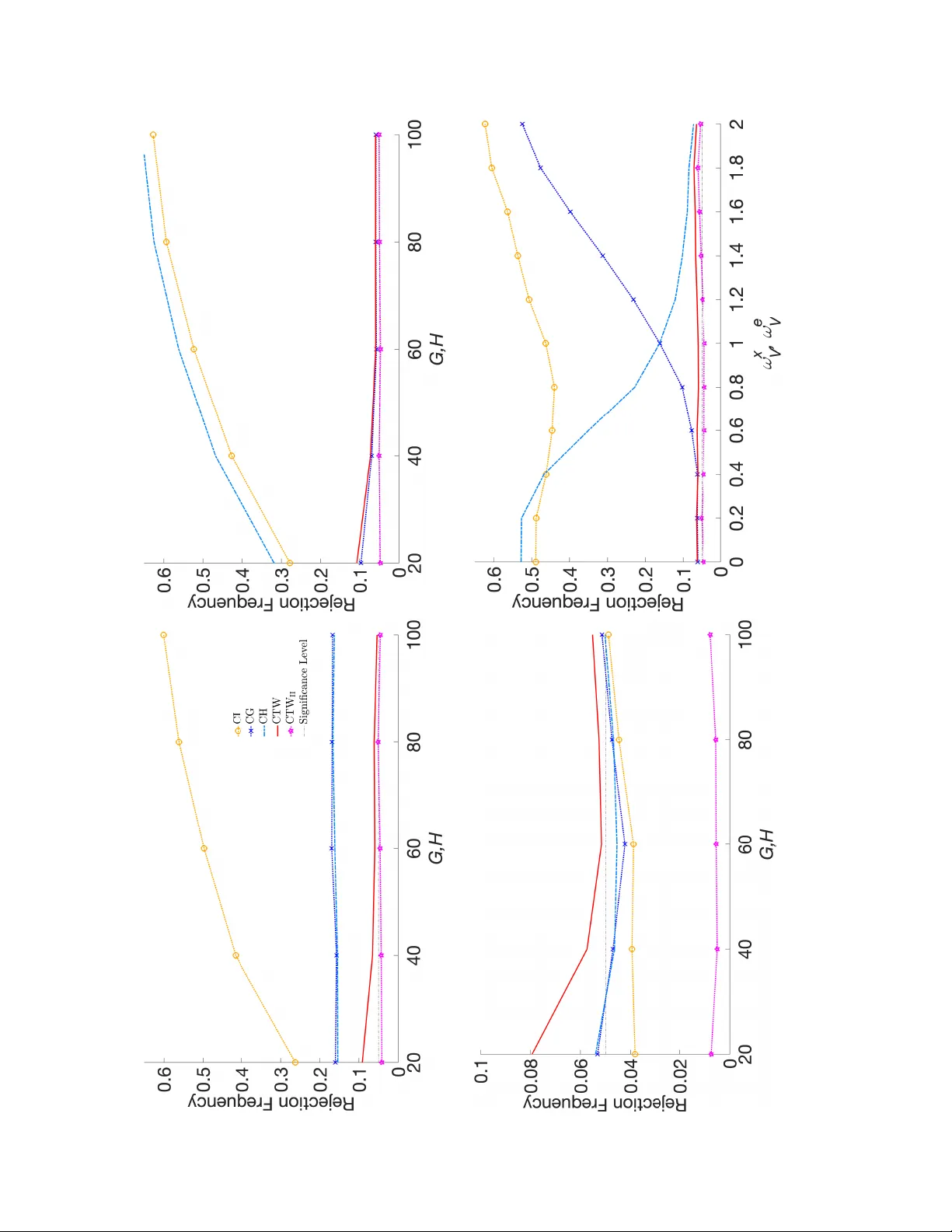

We study inference for linear quantile regression with two-way clustered data. Using a separately exchangeable array framework and a projection decomposition of the quantile score, we characterize regime-dependent convergence rates and establish a se…

Authors: Ulrich Hounyo, Jiahao Lin
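
The projection decomposition of the quantile score mentioned in the abstract can be illustrated with a generic sketch. The notation is an assumption for illustration and is not taken from the paper: one observation $(Y_{ij}, X_{ij})$ per cell with $i = 1, \dots, N$ and $j = 1, \dots, M$, latent row and column shocks $U_i$ and $V_j$ from an Aldous-Hoover-type representation of a separately exchangeable array, and a true coefficient $\beta_0(\tau)$. The authors' exact formulation may differ.

```latex
\begin{align*}
% Quantile score at the true coefficient (assumed notation)
s_{ij} &= X_{ij}\bigl(\tau - \mathbf{1}\{Y_{ij} \le X_{ij}'\beta_0(\tau)\}\bigr),
  \qquad \mathbb{E}[s_{ij}] = 0, \\
% Projections onto the row shock, the column shock, and the remainder
a_i &= \mathbb{E}[s_{ij} \mid U_i], \qquad
b_j = \mathbb{E}[s_{ij} \mid V_j], \qquad
e_{ij} = s_{ij} - a_i - b_j, \\
% Decomposition of the average score
\frac{1}{NM}\sum_{i=1}^{N}\sum_{j=1}^{M} s_{ij}
  &= \frac{1}{N}\sum_{i=1}^{N} a_i
   + \frac{1}{M}\sum_{j=1}^{M} b_j
   + \frac{1}{NM}\sum_{i=1}^{N}\sum_{j=1}^{M} e_{ij}, \\
% The three components are mutually uncorrelated, so variances add
\operatorname{Var}\Bigl(\frac{1}{NM}\sum_{i,j} s_{ij}\Bigr)
  &= \frac{\Sigma_a}{N} + \frac{\Sigma_b}{M} + \frac{\Sigma_e}{NM},
  \qquad \Sigma_a = \operatorname{Var}(a_i),\;
  \Sigma_b = \operatorname{Var}(b_j),\;
  \Sigma_e = \operatorname{Var}(e_{ij}).
\end{align*}
```

Under these assumptions, the average score converges at rate $\sqrt{\min(N, M)}$ when $\Sigma_a$ or $\Sigma_b$ is nonzero, and at the faster rate $\sqrt{NM}$ when both vanish. This is one way to read the abstract's "regime-dependent convergence rates".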