A general framework for modeling Gaussian process with qualitative and quantitative factors

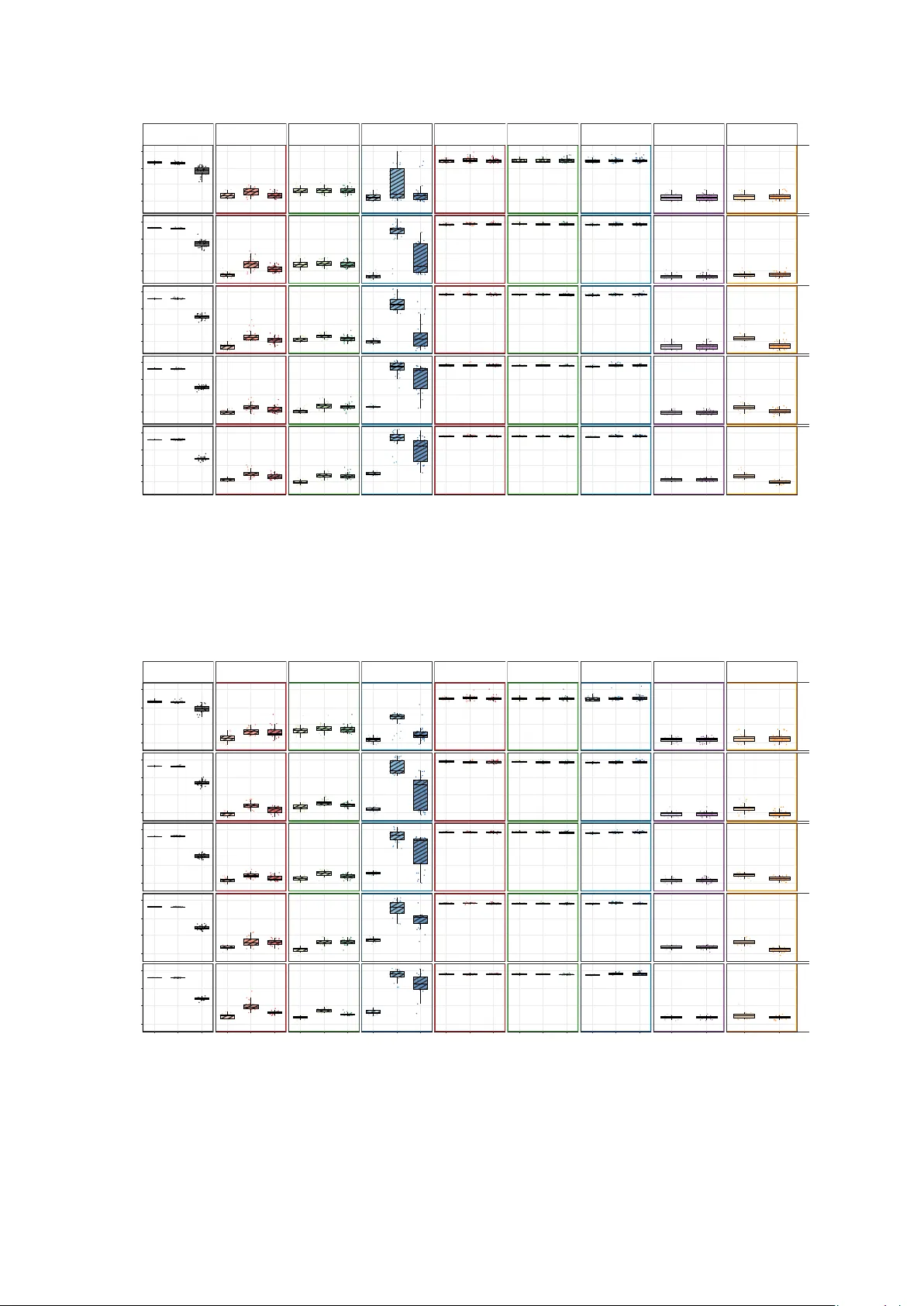

Computer experiments involving both qualitative and quantitative (QQ) factors have attracted increasing attention. Gaussian process (GP) models have proven effective in this setting when equipped with covariance functions specialized for QQ factors. In this…
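The abstract does not spell out which covariance functions are used. The sketch below shows one common way a GP covariance can handle QQ inputs. It assumes a Gaussian correlation on the quantitative coordinates multiplied by an exchangeable correlation on each qualitative factor, where two runs have correlation 1 at matching levels and `c_k` otherwise. This construction is only an illustration and is not the general framework proposed by the authors. The names `qq_kernel`, `cov_matrix`, `cholesky`, `theta`, and `c` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical design point: (quantitative coordinates, qualitative levels)
Point = Tuple[Sequence[float], Sequence[int]]


def qq_kernel(p: Point, q: Point, theta: Sequence[float],
              c: Sequence[float], sigma2: float = 1.0) -> float:
    """Illustrative QQ covariance: Gaussian correlation on the quantitative
    part times an exchangeable correlation on each qualitative factor."""
    x, w = p
    z, v = q
    # Gaussian (squared-exponential) correlation with one scale per input
    quant = math.exp(-sum(t * (a - b) ** 2 for t, a, b in zip(theta, x, z)))
    # Exchangeable correlation: 1 for matching levels, c_k (0 <= c_k < 1) otherwise
    qual = 1.0
    for ck, a, b in zip(c, w, v):
        qual *= 1.0 if a == b else ck
    return sigma2 * quant * qual


def cov_matrix(points: Sequence[Point], theta: Sequence[float],
               c: Sequence[float], sigma2: float = 1.0) -> List[List[float]]:
    """Covariance matrix of the GP at the given design points."""
    return [[qq_kernel(p, q, theta, c, sigma2) for q in points] for p in points]


def cholesky(a: List[List[float]]) -> List[List[float]]:
    """Lower-triangular Cholesky factor; fails if `a` is not positive definite."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0:
                    raise ValueError("matrix is not positive definite")
                L[i][j] = math.sqrt(d)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L


# Usage: two quantitative inputs and one three-level qualitative factor
points = [([0.1, 0.5], [0]), ([0.4, 0.2], [1]),
          ([0.9, 0.7], [2]), ([0.1, 0.5], [1])]
K = cov_matrix(points, theta=[2.0, 1.0], c=[0.3])
L = cholesky(K)  # succeeds, so K is a valid covariance matrix here
```

The product form is convenient because the product of two valid correlation functions is again a valid correlation function. The Cholesky factor is the usual route to the GP likelihood and to predictions at new QQ inputs.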

Authors: Linsui Deng, C. F. Jeff Wu