RefineFormer3D: Efficient 3D Medical Image Segmentation via Adaptive Multi-Scale Transformer with Cross Attention Fusion

Accurate and computationally efficient 3D medical image segmentation remains a critical challenge in clinical workflows. Transformer-based architectures often demonstrate superior global contextual modeling but at the expense of excessive parameter c…

Authors: Kavyansh Tyagi, Vishwas Rathi, Puneet Goyal

Kavyansh Tyagi
National Institute of Technology Kurukshetra, Department of Electronics and Communication Engineering
123109026@nitkkr.ac.in

Vishwas Rathi*
National Institute of Technology Kurukshetra, Department of Computer Engineering
vishwas@nitkkr.ac.in

Puneet Goyal
Indian Institute of Technology Ropar, Department of Computer Science and Engineering
puneet@iitrpr.ac.in

* Corresponding author

Abstract

Accurate and computationally efficient 3D medical image segmentation remains a critical challenge in clinical workflows. Transformer-based architectures often demonstrate superior global contextual modeling but at the expense of excessive parameter counts and memory demands, restricting their clinical deployment. We propose RefineFormer3D, a lightweight hierarchical transformer architecture that balances segmentation accuracy and computational efficiency for volumetric medical imaging. The architecture integrates three key components: (i) GhostConv3D-based patch embedding for efficient feature extraction with minimal redundancy, (ii) a MixFFN3D module with low-rank projections and depthwise convolutions for parameter-efficient feature extraction, and (iii) a cross-attention fusion decoder enabling adaptive multi-scale skip connection integration. RefineFormer3D contains only 2.94M parameters, substantially fewer than contemporary transformer-based methods. Extensive experiments on the ACDC and BraTS benchmarks demonstrate that RefineFormer3D achieves 93.44% and 85.9% average Dice scores respectively, outperforming or matching state-of-the-art methods while requiring significantly fewer parameters. Furthermore, the model achieves fast inference (8.35 ms per volume on GPU) with low memory requirements, supporting deployment in resource-constrained clinical environments.
These results establish RefineFormer3D as an effective and scalable solution for practical 3D medical image segmentation.

Keywords: 3D medical image segmentation, Transformer architecture, Attention mechanism, Efficient deep learning, Multi-scale feature fusion, Brain tumor segmentation, Cardiac segmentation

1 Introduction

Deep learning has fundamentally reshaped medical image analysis by enabling the automatic extraction of complex patterns from clinical data at unprecedented scales. Among these advances, 3D medical image segmentation stands as a foundational task that is crucial for applications ranging from organ localization and tumor delineation to treatment planning. Traditional encoder-decoder networks such as U-Net [32] and its derivatives [16, 44] have long been the backbone of volumetric segmentation: they compress input data into latent representations and reconstruct dense voxel-wise predictions. However, the limited receptive field of convolutional operators and their inherent locality bias restrict their capacity to model global anatomical context, particularly in cases involving large inter-patient variation in scale, texture, and shape.

To address these limitations, transformer-based architectures have emerged as a powerful alternative, as they leverage global self-attention mechanisms [15] to capture long-range dependencies and semantic coherence across medical volumes. Pioneering contributions such as TransUNet [5] and UNETR [12] have demonstrated the effectiveness of integrating Vision Transformers with convolutional decoders, leading to substantial improvements over earlier purely convolutional approaches. Swin-Unet [4] has further explored hierarchical, pure-transformer U-shaped architectures, affirming the value of transformer backbones for contextual representation learning in segmentation tasks.
However, these gains come at a cost. Full self-attention incurs heavy memory overhead and computational burden, which limits applicability in real-world clinical scenarios where resources are constrained and efficiency and reliability are paramount. Moreover, prevailing skip fusion strategies are typically based on static concatenation or convolutional operations and may inadequately integrate multi-scale features, which undermines segmentation performance in anatomically complex or ambiguous regions. Conventional concatenation-based skip connections treat all encoder features uniformly, failing to selectively aggregate semantically relevant information for the decoder's current reconstruction state. This naive fusion not only introduces redundant features but also forgoes adaptive, query-driven selection mechanisms that could align encoder context with decoder-specific requirements. The limitation becomes acute when segmenting heterogeneous anatomical structures with variable appearances.

Recent state-of-the-art research, including nnFormer [43] and SegFormer3D [30], has tried to reconcile this trade-off between performance and efficiency by introducing hybrid or windowed attention schemes and lighter multilayer perceptron (MLP) based decoders. These architectures have made strides in reducing computational costs. Models such as LeViT-UNet [39] have specifically targeted inference efficiency by employing fast transformer encoders. Despite these advances, many contemporary transformer models still retain excessive parameter counts, especially within skip fusion and decoding modules, or sacrifice global feature integration to achieve efficiency.
Furthermore, the repetitive and compressible nature of volumetric medical data, a characteristic ideally suited for lightweight, context-aware modeling, remains underexploited in existing segmentation frameworks.

In response to these persistent challenges, we propose RefineFormer3D, a hierarchical multi-scale transformer architecture engineered for both parametric efficiency and robust contextual reasoning in 3D medical image segmentation. The core of our method is the cross-attention fusion decoder block, which uses GhostConv3D for efficient 3D feature processing and enhances it with channel-wise attention using Squeeze-and-Excitation mechanisms. This block adaptively aggregates multi-scale features across the decoder. The attention-aware fusion strategy refines semantic integration throughout the network while minimizing computational overhead. The encoder employs hierarchical windowed self-attention and MixFFN3D modules with low-rank projections and depthwise 3D convolutions. This design captures both global dependencies and fine anatomical details, effectively balancing accuracy and computational efficiency.

Figure 1 illustrates the performance-efficiency trade-off on the ACDC dataset [2]. RefineFormer3D achieves superior performance with only 2.94 million parameters, outperforming state-of-the-art methods and demonstrating its effectiveness as a lightweight yet powerful 3D segmentation architecture.

Figure 1: Model efficiency comparison on the ACDC dataset [2]. Parameter count versus segmentation performance for RefineFormer3D and existing 3D segmentation models. Blue bars indicate the number of parameters; the orange-red curve shows the average Dice score. RefineFormer3D achieves superior performance with only 2.94M parameters.
Extensive experiments on widely used medical segmentation benchmarks, including ACDC [2] and BraTS [27], demonstrate that RefineFormer3D not only outperforms state-of-the-art models such as nnFormer, SegFormer3D, and UNETR in segmentation accuracy but also achieves notable reductions in parameter count and inference time. These results position RefineFormer3D as a strong candidate for clinical deployment, meeting the critical requirements of accuracy, reliability, and computational efficiency. The main contributions of this work are: (i) a hierarchical transformer architecture for efficient 3D medical image segmentation with only 2.94M parameters, (ii) an adaptive decoder block that dynamically integrates multi-scale features using attention-guided fusion mechanisms, (iii) a comprehensive evaluation on two benchmark datasets demonstrating superior performance-efficiency trade-offs compared to existing methods, and (iv) detailed ablation studies validating the contribution of each architectural component.

2 Related Work

Recent advances in 3D medical image segmentation explore CNNs, transformers, and their hybrids to improve performance and efficiency. While CNNs excel at capturing local features, transformers enhance global context understanding through self-attention mechanisms. We divide the existing works into four categories: (i) CNN-based approaches, (ii) pure transformer-based methods, (iii) hybrid CNN-Transformer architectures, and (iv) efficient transformer variants.

2.1 CNN-Based Approaches

The U-Net architecture [32] and its many descendants, including deep-supervised CNNs [45], DenseUNet [3], and 3D variants such as 3D U-Net [29], V-Net [28], and nnUNet [17], have driven significant progress in medical image segmentation.
Convolutional networks provide effective multi-scale feature extraction, but their fundamentally local receptive fields limit their ability to accurately segment complex and context-dependent structures, particularly in 3D volumes.

CNN-based improvements, such as dilated convolutions, attention gates, and pyramid pooling, have enhanced context modeling [6, 35, 7]. However, these approaches remain constrained by the inherent locality of convolution operations, often failing to model long-range dependencies or capture subtle anatomical variations. Attempts to mitigate these weaknesses through deeper or wider architectures [20, 21] frequently result in increased computational demands without proportional gains in accuracy.

2.2 Pure Transformer Architectures

SETR [42] and other early transformer-based architectures reformulated segmentation as a sequence-to-sequence prediction task, employing pure transformers as encoders for global context. However, these models often suffer from poor localization due to the loss of spatial detail during patch embedding and the absence of hierarchical, multi-resolution features, requiring complex or inefficient decoders for spatial recovery.

Transformer-only architectures such as Swin-UNet [4] and SwinUNETR [11] leveraged hierarchical attention with shifted windows to efficiently learn local-to-global representations. However, their dependence on windowed attention may restrict context modeling for large or irregular anatomical structures and contributes to relatively high parameter counts. Recent lightweight designs such as GCI-Net [31] and PFormer [9] addressed this by introducing efficient global context modules and content-driven attention, maintaining strong performance at a fraction of traditional transformer complexity.
2.3 Hybrid CNN-Transformer Architectures

Hybrid CNN-transformer architectures, such as TransBTS [33], CoTr [41], TransFuse [40], and LoGoNet [19], have been proposed to leverage the complementary strengths of both paradigms. These models incorporated diverse fusion and attention strategies, including deformable attention [36] and explicit integration of CNN and transformer features [40], to enhance segmentation performance. While these hybrid models effectively capture both local and global context, they often involve complex architectures that can lead to slower inference and limited scalability when applied to larger or more diverse clinical datasets. Recent works such as DAUNet [22], DS-UNETR++ [18], and MS-TCNet [1] have further refined this paradigm by introducing deformable aggregation, gated dual-scale attention, and multi-scale transformer-CNN fusion, achieving higher Dice accuracy with competitive parameter counts.

2.4 Efficient Transformer Variants

Efficient transformer variants such as LeViT-UNet [39] and SegFormer3D [30] further addressed the high computational and parameter complexity by integrating lightweight transformer modules and streamlined decoders, such as all-MLP designs. LeViT-UNet introduced a fast hybrid encoder but was limited by shallow multi-scale fusion, whereas SegFormer3D demonstrated strong efficiency and competitive accuracy by employing hierarchical attention and a lightweight decoder with 4.51M parameters. Despite these gains, SegFormer3D and its contemporaries continue to rely on largely static or parameter-heavy skip fusion strategies, which can fail to adaptively exploit anatomical variation or handle challenging low-contrast targets.

Methods including nnFormer [43], which employed skip attention instead of concatenation for feature fusion, and UNETR [12], which used a transformer as the primary encoder for 3D segmentation, demonstrated improved segmentation performance and better global context modeling. However, these methods often remain computationally demanding and do not fully resolve the tension between fine-scale localization and adaptive, efficient feature integration.

Table 1: Comparison of RefineFormer3D with state-of-the-art methods in terms of model size and computational complexity. RefineFormer3D demonstrates a notable reduction in parameters while maintaining competitive computational cost.

Architecture        Params (M)
nnFormer [43]       150.5
TransUNet [5]       96.07
UNETR [12]          92.49
DS-UNETR++ [18]     67.7
SwinUNETR [11]      62.83
MS-TCNet [1]        59.49
PFormer [9]         46.04
DAUNet [22]         16.36
GCI-Net [31]        13.36
SegFormer3D [30]    4.51
RefineFormer3D      2.94

Table 1 presents a comprehensive comparison of RefineFormer3D with state-of-the-art methods in terms of parameter count. Despite advances in recent architectures, most current models employ rigid or parameter-heavy skip fusion schemes that limit their adaptability to anatomical differences and hinder both segmentation accuracy and efficiency. RefineFormer3D addresses these limitations by introducing adaptive, attention-based skip fusion within a lightweight transformer design, achieving superior accuracy and resource efficiency compared to previous methods.

3 Proposed Methodology

RefineFormer3D is designed to deliver state-of-the-art segmentation accuracy for volumetric medical images while maintaining exceptional computational efficiency. The architecture requires only 2.94 million parameters, an order of magnitude less than leading transformer-based baselines. This section outlines the key architectural components introduced in the encoder and decoder modules.
The proposed RefineFormer3D architecture is illustrated in Figure 2.

3.1 Encoder Architecture

The encoder in RefineFormer3D efficiently extracts hierarchical features from 3D medical images through a multi-stage processing pipeline. It addresses memory and computational limitations of previous transformer-based segmentation models, enabling better scalability for clinical deployment. The encoder comprises three key components working in sequence: (i) GhostConv3D [10] based patch embedding for efficient low-level feature extraction, (ii) transformer blocks that progressively capture multi-scale context through shifted window attention, and (iii) MixFFN3D modules that perform parameter-efficient feature mixing using low-rank projections and depthwise convolutions. It also uses hybrid normalization with stochastic depth regularization to ensure stable training. Each component of the encoder is designed to balance computational efficiency with representational power, as detailed below.

[Figure 2 diagram: an input of shape [B, C, D, H, W] passes through Encoder Blocks 1 to 4; Decoder Blocks 1 to 3 receive skip connections 1 to 3, followed by a Final Up Block and a Segmentation Head (1x1 Conv3D) that outputs a segmentation map of shape [B, num_classes, D, H, W]. Insets show the encoder block (PatchEmbedding3D, two transformer blocks with W-MSA and MixFFN3D), the MixFFN3D module (low-rank linear, DWConv3D, SiLU, low-rank linear), and the decoder block (trilinear upsample, Q and K,V projections, window cross-attention, SE attention, GhostConv3D).]

Figure 2: RefineFormer3D architecture overview. The input volume in $\mathbb{R}^{B \times C_{in} \times D \times H \times W}$ is processed via 3D patch embedding and encoded through four hierarchical stages. Each encoder stage applies two consecutive transformer blocks: windowed self-attention followed by shifted window attention. The decoder progressively refines features by fusing encoder skip connections through cross-attention, where decoder features query encoder representations for selective multi-scale aggregation. The final upsampling block performs refinement without skip connections. Deep supervision is applied via auxiliary heads at intermediate decoder outputs.

3.1.1 PatchEmbedding3D

Input volumes are first processed by a GhostConv3D-based patch embedding layer that preserves local voxel continuity while using substantially fewer parameters and computations than conventional 3D convolutions. Unlike standard patch embedding, which often ignores spatial redundancy, GhostConv3D generates a set of primary feature maps via regular convolution and then augments them with "ghost" features using lightweight depthwise convolutions. To the best of our knowledge, this represents the first application of GhostConv3D for 3D transformer patch embedding in medical imaging.

Given an input volume $X \in \mathbb{R}^{B \times C_{in} \times D \times H \times W}$, where $B$ is the batch size, $C_{in}$ is the number of input channels, and $D$, $H$, $W$ are the depth, height, and width respectively, the GhostConv3D patch embedding computes:

$$F_{\text{primary}} = \text{Conv3D}(X; W_{\text{prim}}) \tag{1}$$
$$F_{\text{ghost}} = \text{DWConv3D}(F_{\text{primary}}; W_{\text{ghost}}) \tag{2}$$
$$F_{\text{out}} = \text{Concat}[F_{\text{primary}}, F_{\text{ghost}}] \tag{3}$$

where $W_{\text{prim}}$ and $W_{\text{ghost}}$ are the learnable weights of the primary and depthwise Conv3D layers, respectively. Here, $F_{\text{primary}} \in \mathbb{R}^{B \times C_1 \times D' \times H' \times W'}$, $F_{\text{ghost}} \in \mathbb{R}^{B \times (C_{out} - C_1) \times D' \times H' \times W'}$, and $F_{\text{out}} \in \mathbb{R}^{B \times C_{out} \times D' \times H' \times W'}$. The parameter $C_1 = \lfloor C_{out}/r \rfloor$, where $r$ is the ghost ratio (typically $r = 2$) and $C_{out}$ is the output channel size.
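The channel split in Eqs. (1) to (3) can be checked with simple arithmetic. The following is a minimal sketch, not the authors' implementation: the function names, the 32-to-64 channel sizes, and the stride-2, padding-1 setting are illustrative assumptions, biases are ignored, and the ghost branch is assumed to be a depthwise convolution with one 3x3x3 filter per ghost channel.

```python
def ghost_channel_split(c_out: int, ratio: int = 2) -> tuple:
    """Return (primary, ghost) channel counts, with C_1 = floor(C_out / r)."""
    c_primary = c_out // ratio
    return c_primary, c_out - c_primary


def conv_output_size(size: int, kernel: int, stride: int, padding: int) -> int:
    """Standard convolution output size along one axis: floor((S + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def standard_conv3d_params(c_in: int, c_out: int, kernel: int) -> int:
    """Weights of a dense 3D convolution (bias ignored)."""
    return c_in * c_out * kernel ** 3


def ghost_conv3d_params(c_in: int, c_out: int, kernel: int,
                        ratio: int = 2, dw_kernel: int = 3) -> int:
    """Primary dense conv to C_1 channels plus one depthwise filter per ghost channel."""
    c_primary, c_ghost = ghost_channel_split(c_out, ratio)
    primary = c_in * c_primary * kernel ** 3
    ghost = c_ghost * dw_kernel ** 3
    return primary + ghost


# Illustrative (hypothetical) layer: 32 -> 64 channels, 3x3x3 kernel, stride 2, padding 1.
dense = standard_conv3d_params(32, 64, 3)
ghost = ghost_conv3d_params(32, 64, 3)
print(f"dense={dense}, ghost={ghost}, reduction={dense / ghost:.2f}x")
print("output size for S=128:", conv_output_size(128, kernel=3, stride=2, padding=1))
```

With a reasonably large input channel count the reduction approaches the ghost ratio; with very few input channels the depthwise term weighs more and the saving is smaller.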
The depthwise convolution DWConv3D operates with kernel size $3^3$ and applies a separate convolutional filter to each input channel, producing ghost features that capture local spatial variations with minimal additional parameters ($27 C_1$, compared to $C_1 \cdot C_{out} \cdot 27$ for standard convolution). The output spatial dimensions $D'$, $H'$, $W'$ are computed as $S' = \lfloor (S + 2p - k)/s \rfloor + 1$ for each axis, where $S$ is the input size, $k$ the kernel size, $s$ the stride, and $p$ the padding.

The parameter reduction achieved by GhostConv3D can be quantified as follows: while standard Conv3D requires $C_{in} \cdot C_{out} \cdot k^3$ parameters, GhostConv3D requires only $C_{in} \cdot C_1 \cdot k^3 + (C_{out} - C_1) \cdot k^3 \approx C_{in} \cdot C_{out} \cdot k^3 / r$, yielding an approximately $2\times$ parameter reduction for $r = 2$.

Before flattening to tokens, the output passes through a 3D positional convolution (PosConv) block followed by LayerNorm, which together embed spatial location information and normalize feature distributions. The PosConv block employs a depthwise 3D convolution with kernel size $3^3$ and zero padding to encode relative spatial positions:

$$F_{\text{pos}} = \text{DWConv3D}(F_{\text{out}}; W_{\text{pos}}, k = 3, \text{groups} = C_{out}) \tag{4}$$

where each channel learns its own positional encoding pattern. This is followed by LayerNorm to stabilize training:

$$F_{\text{norm}} = \text{LayerNorm}(F_{\text{pos}}) \tag{5}$$

The normalized features are then flattened along the spatial dimensions to produce the token sequence $T \in \mathbb{R}^{B \times N \times C_{out}}$, where $N = D' \times H' \times W'$. These spatially aware tokens then serve as input to the hierarchical transformer stages for multi-scale feature extraction.

3.1.2 Transformer Block

The PatchEmbedding3D output is processed through two sequential transformer blocks with distinct attention mechanisms. The first block computes self-attention within fixed spatial windows, capturing local context efficiently.
The second block employs shifted window partitioning to establish inter-window connections, which addresses the limitation of isolated local receptive fields.

The non-overlapping windowed self-attention mechanisms [8] capture long-range dependencies within local volumetric regions, balancing global context modeling with computational efficiency. Following the Swin Transformer [23], alternate transformer blocks use shifted window partitioning (shift by $\lfloor w/2 \rfloor$) to enable cross-window information exchange. Each encoder stage applies two consecutive transformer blocks, the first with regular window partitioning and the second with shifted windows, which creates a pattern that facilitates information flow across window boundaries.

Given input features $X \in \mathbb{R}^{B \times D \times H \times W \times C}$, where $D$, $H$, $W$ denote the spatial dimensions and $C$ the channel dimension, a cyclic shift by $s = (s_d, s_h, s_w)$ voxels is applied in alternate blocks, followed by partitioning into $M$ windows of size $(w_d, w_h, w_w)$. The shift amounts along depth, height, and width are typically set to $(\lfloor w_d/2 \rfloor, \lfloor w_h/2 \rfloor, \lfloor w_w/2 \rfloor)$ for shifted blocks and $(0, 0, 0)$ for regular blocks:

$$X' = \text{CyclicShift}(X, s) \tag{6}$$
$$\{X^{(m)}\} = \text{Partition}(X', (w_d, w_h, w_w)), \quad m = 1, \ldots, M \tag{7}$$

where $M = \frac{D \cdot H \cdot W}{w_d \cdot w_h \cdot w_w}$ is the total number of windows. Each window $X^{(m)} \in \mathbb{R}^{B \times (w_d \cdot w_h \cdot w_w) \times C}$ is treated as a sequence of $w_d \cdot w_h \cdot w_w$ tokens for self-attention computation. Within each window, we compute standard multi-head self-attention with relative positional bias:

$$\text{Attention}(Q, K, V) = \text{Softmax}\left(\frac{QK^{\top}}{\sqrt{d_k}} + B\right) V$$

where $Q = X^{(m)} W_Q$, $K = X^{(m)} W_K$, $V = X^{(m)} W_V$ are projections of the window tokens with learnable weight matrices $W_Q, W_K, W_V \in \mathbb{R}^{C \times d_k}$, and $d_k = C / H_{num}$ is the dimension per attention head, with $H_{num}$ denoting the number of heads.
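The cyclic shift and partition of Eqs. (6) and (7) can be illustrated on voxel coordinates alone. This is a minimal sketch under stated assumptions: `partition_windows` and `window_of` are hypothetical helper names, the 8x8x8 grid and 4x4x4 window are illustrative, and the shift direction (a voxel at position p moves to (p - s) mod size) follows the negative roll commonly used in Swin-style code rather than anything stated in the paper.

```python
from itertools import product


def partition_windows(dims, window, shift=(0, 0, 0)):
    """Group voxel coordinates into non-overlapping windows after a cyclic shift.

    Returns a dict mapping a window index (i, j, k) to the list of ORIGINAL
    voxel coordinates whose shifted position falls inside that window.
    """
    if any(n % w for n, w in zip(dims, window)):
        raise ValueError("each dimension must be divisible by the window size")
    windows = {}
    for voxel in product(*(range(n) for n in dims)):
        # Cyclic shift: position p moves to (p - s) mod size along each axis.
        shifted = tuple((p - s) % n for p, s, n in zip(voxel, shift, dims))
        key = tuple(p // w for p, w in zip(shifted, window))
        windows.setdefault(key, []).append(voxel)
    return windows


def window_of(windows, voxel):
    """Return the index of the window that contains the given original voxel."""
    return next(key for key, members in windows.items() if voxel in members)


dims, window = (8, 8, 8), (4, 4, 4)
regular = partition_windows(dims, window)
shifted = partition_windows(dims, window, shift=tuple(w // 2 for w in window))

# M = D*H*W / (w_d*w_h*w_w) windows, each holding w_d*w_h*w_w tokens.
print(len(regular), len(next(iter(regular.values()))))
# Neighbouring voxels split by a regular window boundary share a shifted window.
print(window_of(regular, (3, 0, 0)), window_of(regular, (4, 0, 0)))
print(window_of(shifted, (3, 0, 0)), window_of(shifted, (4, 0, 0)))
```

The last two lines show why the alternating scheme matters: two adjacent voxels that can never attend to each other under regular partitioning end up in the same window once the shift is applied.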
The relative positional bias $B \in \mathbb{R}^{(w_d \cdot w_h \cdot w_w) \times (w_d \cdot w_h \cdot w_w)}$ is a learnable parameter that encodes spatial relationships between tokens within the window, defined as $B_{ij} = B(\Delta d, \Delta h, \Delta w)$, where $(\Delta d, \Delta h, \Delta w)$ is the relative position offset between tokens $i$ and $j$. For shifted windows, an attention mask $M_{\text{mask}} \in \{0, -\infty\}^{(w_d \cdot w_h \cdot w_w) \times (w_d \cdot w_h \cdot w_w)}$ is added to prevent attention between non-adjacent regions created by cyclic shifting. Finally, window outputs are merged and the shift is reversed:

$$Y = \text{CyclicShift}^{-1}(\text{WindowReverse}(\{Z^{(m)}\}), s)$$

where $Z^{(m)}$ denotes the output feature tensor of the $m$-th window after W-MSA has been applied, and WindowReverse concatenates the $M$ window features back into the original spatial layout $Y \in \mathbb{R}^{B \times D \times H \times W \times C}$.

The complete transformer block follows a residual structure:

$$X_{\ell + 1/2} = \text{W-MSA}(\text{LayerNorm}(X_{\ell})) + X_{\ell} \tag{8}$$
$$X_{\ell + 1} = \text{MixFFN3D}(\text{LayerNorm}(X_{\ell + 1/2})) + X_{\ell + 1/2} \tag{9}$$

where $\ell$ denotes the block index, and DropPath regularization is applied to both residual connections during training. This alternating shifted window scheme encourages cross-window information flow while maintaining linear complexity $O(D \cdot H \cdot W \cdot C)$ with respect to input size, compared to $O(D^2 \cdot H^2 \cdot W^2 \cdot C)$ for global self-attention. The attention outputs at each stage are then processed through the MixFFN3D module to refine and enrich the learned feature representations before downsampling to the next hierarchical level.

3.1.3 MixFFN3D

Standard transformer FFNs expand features to $4d$ intermediate dimensions, requiring $8d^2$ parameters per block. We adopt MixFFN [37] with architectural adaptations for 3D medical volumes: low-rank factorization reduces the channel expansion overhead, and a 3D depthwise convolution captures volumetric spatial context within the bottleneck representation.
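To make the saving concrete, the parameter counts of a standard FFN and of the low-rank design can be compared directly. The sketch below is an illustrative calculation, not the authors' code: the function names are hypothetical, biases are ignored, the rank rule r = max(d/2, 64) is the one given in this subsection, and the depthwise convolution is assumed to use one 3x3x3 filter per bottleneck channel.

```python
def standard_ffn_params(d: int, expansion: int = 4) -> int:
    """Two dense layers d -> 4d -> d, biases ignored: 2 * d * 4d = 8 d^2."""
    return 2 * d * expansion * d


def mixffn3d_params(d: int, r: int, dw_kernel: int = 3) -> int:
    """Low-rank projections d -> r -> d plus a depthwise 3D conv on r channels."""
    return 2 * d * r + dw_kernel ** 3 * r


d = 256
r = max(d // 2, 64)  # rank rule from this subsection
standard = standard_ffn_params(d)
low_rank = mixffn3d_params(d, r)
print(f"standard={standard}, mixffn3d={low_rank}, reduction={standard / low_rank:.1f}x")
```

Note that for small embedding widths the floor of 64 in the rank rule dominates, so the relative saving depends on d.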
Given input features $x \in \mathbb{R}^{B \times N \times d}$, the feed-forward operation factorizes through a low-dimensional subspace:

$$\text{MixFFN3D}(x) = W_B \left( \text{SiLU}(W_A x) + \text{DWConv3D}\left(\text{SiLU}(W_A x)\right) \right) \tag{10}$$

where $W_A \in \mathbb{R}^{d \times r}$ projects to the intermediate rank $r = \max(d/2, 64)$, and $W_B \in \mathbb{R}^{r \times d}$ projects back to the original dimension. Instead of a single dense layer $W \in \mathbb{R}^{d \times 4d}$ as in standard FFNs, the low-rank factorization $W_A W_B$ approximates the transformation through a bottleneck of rank $r \ll 4d$, where the matrix product $W_A W_B \in \mathbb{R}^{d \times d}$ has rank at most $r$. The 3D depthwise convolution (kernel size $3^3$, applied to the reshaped spatial layout) enables local feature aggregation across depth, height, and width within the compressed representation, which is particularly effective for capturing anatomical continuity in medical volumes.

This design reduces parameters from $8d^2$ to $2dr + 27r$. For $d = 256$ and $r = 128$, this yields 68,992 parameters versus 524,288 for a standard FFN, a $7.6\times$ reduction, while maintaining expressiveness through the nonlinear spatial mixing operation.

For robust and stable training across diverse data regimes, we employ LayerNorm [34] for sequence inputs, alongside the GroupNorm used in the convolutional decoder blocks (Section 3.2). Both normalization techniques demonstrate greater stability than BatchNorm [26], especially at the small batch sizes commonly found in medical imaging. Additionally, stochastic depth (DropPath) [14] regularization is applied throughout the encoder.

Given input features $X \in \mathbb{R}^{B \times N \times C}$ in sequence format, LayerNorm computes:

$$\text{LayerNorm}(X) = \frac{X - \mu_{\ell}}{\sigma_{\ell}} \cdot \gamma_{\ell} + \beta_{\ell}$$

where $\mu_{\ell}$, $\sigma_{\ell}$ are the mean and standard deviation computed across all channels $C$ for each token independently, and $\gamma_{\ell}$, $\beta_{\ell}$ are learnable scale and shift parameters. For regularization, we apply DropPath during training:

$$\text{DropPath}(Z) = \begin{cases} \dfrac{Z}{1 - p}, & \text{with probability } 1 - p \\ 0, & \text{with probability } p \end{cases}$$

where $p$ is the drop probability, typically increased linearly with network depth.
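The DropPath rule above keeps the expected value of a residual branch unchanged, which a short simulation makes visible. This is a minimal sketch assuming a per-sample drop decision on a plain Python list; the function names, the maximum drop rate of 0.1, and the eight-block schedule are illustrative assumptions, not values taken from the paper.

```python
import random


def drop_path(values, drop_prob, rng, training=True):
    """Stochastic depth on one residual branch: drop it whole or rescale by 1/(1-p)."""
    if not training or drop_prob == 0.0:
        return list(values)
    if rng.random() < drop_prob:
        return [0.0 for _ in values]
    keep = 1.0 - drop_prob
    return [v / keep for v in values]


def linear_drop_schedule(num_blocks, max_drop):
    """Drop probability rising linearly from 0 at the first block to max_drop at the last."""
    if num_blocks == 1:
        return [max_drop]
    return [max_drop * i / (num_blocks - 1) for i in range(num_blocks)]


rng = random.Random(0)
samples = [drop_path([1.0], 0.2, rng)[0] for _ in range(20000)]
print("empirical mean of the branch output:", sum(samples) / len(samples))
print("per-block drop rates:", linear_drop_schedule(8, 0.1))
```

Because surviving branches are scaled by 1/(1-p), no rescaling is needed at inference time, where the branch is always kept.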
This hybrid normalization and regularization scheme enhances training stability and generalization under small batch constraints and limited labeled data.

3.2 Decoder Architecture

The decoder progressively reconstructs segmentation maps through three upsampling stages that incorporate skip connections from the corresponding encoder levels. Effective fusion of encoder and decoder features bridges the semantic gap between abstract encoder representations and spatially refined decoder features. Each decoder stage consists of three operations: (i) trilinear upsampling to match the encoder resolution, (ii) skip connection fusion via cross-attention, and (iii) spatial refinement through GhostConv3D blocks.

Each decoder stage follows a progressive refinement pipeline in which decoder features are first upsampled $2\times$ using trilinear interpolation to match the spatial resolution of the corresponding encoder skip connection. The upsampled decoder features are then fused with encoder skip connections through window-based cross-attention with asymmetric query-key-value assignment: the decoder features generate queries while the encoder features provide keys and values, enabling selective aggregation of multi-scale encoder context. Finally, the fused features undergo spatial refinement through parameter-efficient GhostConv3D blocks followed by GroupNorm and SiLU activation, producing enriched representations for the subsequent decoder stage or the final segmentation head.

Standard U-Net architectures concatenate encoder features $X_e \in \mathbb{R}^{B \times C_e \times D \times H \times W}$ with decoder features $X_d \in \mathbb{R}^{B \times C_d \times D \times H \times W}$, treating all encoder information equally regardless of its relevance to the current decoding stage. This uniform fusion strategy assumes that all multi-scale encoder features contribute equally to reconstruction, which ignores the semantic context of the decoder's representational state.
We instead employ window-based cross-attention for skip connection fusion, where decoder features generate queries to selectively aggregate relevant encoder context, enabling adaptive multi-scale feature integration.

The decoder features $X_d \in \mathbb{R}^{B \times C_d \times D/2 \times H/2 \times W/2}$ from the previous stage are upsampled using trilinear interpolation with scale factor 2:

$$X_d^{\uparrow} = \text{Upsample}(X_d, \text{scale} = 2) \in \mathbb{R}^{B \times C_d \times D \times H \times W} \tag{11}$$

To ensure channel compatibility, encoder skip features are projected via a linear transformation:

$$X'_e = X_e W_{\text{proj}} + b_{\text{proj}} \in \mathbb{R}^{B \times C_d \times D \times H \times W} \tag{12}$$

where $W_{\text{proj}} \in \mathbb{R}^{C_e \times C_d}$ and $b_{\text{proj}} \in \mathbb{R}^{C_d}$ are learnable parameters. This projection unifies the channel dimensions to $C_d$ for both streams before attention computation.

After flattening the spatial dimensions, $\tilde{X}_d^{\uparrow}, \tilde{X}'_e \in \mathbb{R}^{B \times N \times C_d}$ are obtained, where $N = D \times H \times W$ is the total number of voxels. Decoder features project to queries while encoder features project to key-value pairs:

$$Q = \tilde{X}_d^{\uparrow} W_Q \in \mathbb{R}^{B \times N \times d_k} \tag{13}$$
$$K, V = \text{Split}(\tilde{X}'_e W_{KV}) \in \mathbb{R}^{B \times N \times d_k} \tag{14}$$

where $W_Q \in \mathbb{R}^{C_d \times d_k}$ and $W_{KV} \in \mathbb{R}^{C_d \times 2d_k}$ are learnable projections, with $d_k = C_d / H_{num}$ denoting the dimension per attention head and $H_{num}$ the number of heads. The asymmetric query-key-value assignment allows decoder voxels to attend to relevant encoder context through learned similarity, effectively weighting encoder features based on their relevance to each decoder position.

Full attention over $N$ voxels requires $O(N^2)$ complexity, which is computationally prohibitive for 3D medical volumes (e.g., $N \approx 128^3 \approx 2$M voxels). We partition features into non-overlapping windows of size $w^3$ (typically $w = 4$), yielding $M = \lceil D/w \rceil \times \lceil H/w \rceil \times \lceil W/w \rceil$ windows. Within each window $m \in \{1, \ldots, M\}$, we extract the corresponding tokens $Q_m, K_m, V_m \in \mathbb{R}^{B \times w^3 \times d_k}$ and compute multi-head cross-attention:

$$A_m = \text{Softmax}\left(\frac{Q_m K_m^{\top}}{\sqrt{d_k}}\right) \in \mathbb{R}^{B \times w^3 \times w^3} \tag{15}$$
$$F_m = A_m V_m \in \mathbb{R}^{B \times w^3 \times d_k} \tag{16}$$

The attention matrix entry $A_m[i, j]$ encodes the similarity between decoder token $i$ and encoder token $j$ within window $m$, where high values indicate that encoder position $j$ provides relevant context for decoder position $i$. We apply the output projection $W_O \in \mathbb{R}^{d_k \times C_{out}}$ and reverse the window partitioning:

$$Y = \text{Reshape}\left(\text{WindowReverse}\left(\{F_m W_O\}_{m=1}^{M}\right)\right) \in \mathbb{R}^{B \times C_{out} \times D \times H \times W} \tag{17}$$

where WindowReverse concatenates the $M$ window outputs back into the spatial layout. This reduces computational complexity to $O(N \cdot w^3)$, which is linear in the number of voxels $N$ and enables efficient processing of high-resolution 3D volumes.

Following cross-attention fusion, we apply Squeeze-Excitation (SE) channel attention [13] to recalibrate channel-wise feature responses:

$$z = \text{GlobalAvgPool3D}(Y) \in \mathbb{R}^{B \times C_{out}} \tag{18}$$
$$s = \sigma(W_2 \cdot \text{ReLU}(W_1 z)) \in \mathbb{R}^{B \times C_{out}} \tag{19}$$
$$Y_{se} = s \odot Y \tag{20}$$

where $W_1 \in \mathbb{R}^{(C_{out}/16) \times C_{out}}$, $W_2 \in \mathbb{R}^{C_{out} \times (C_{out}/16)}$, $\sigma$ denotes the sigmoid function, and $\odot$ represents channel-wise multiplication. The SE block emphasizes informative channels while suppressing less relevant ones. The refined features are then processed through GhostConv3D [10], GroupNorm, and SiLU activation to produce the final decoder output for stage $i$.

3.3 Training Objectives

We employ deep supervision with auxiliary losses at decoder blocks 2 and 3 to stabilize training and guide intermediate feature representations. The overall loss function is defined as:

$$\mathcal{L}_{\text{total}} = \mathcal{L}(\hat{y}, y) + \lambda \sum_{i=2}^{3} \mathcal{L}(\hat{y}_i, y) \tag{21}$$

where $y$ is the ground truth, $\hat{y}$ is the final prediction, $\hat{y}_i$ denotes auxiliary predictions from intermediate decoder stages, and $\lambda$ is the weight of the auxiliary supervision.
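The deep supervision objective of Eq. (21) can be sketched on a toy example. The code below is a simplification under stated assumptions: it treats a single foreground class over a flattened list of voxel probabilities, uses binary cross-entropy as a stand-in for the multi-class cross-entropy of the paper, and assumes the auxiliary predictions have already been resized to the ground-truth resolution. The function names are hypothetical; the default weight of 0.4 is the value the experimental setup reports for the auxiliary term.

```python
import math


def dice_loss(probs, target, eps=1e-6):
    """Soft Dice loss for one foreground class over flattened voxels."""
    intersection = sum(p * t for p, t in zip(probs, target))
    denominator = sum(probs) + sum(target)
    return 1.0 - (2.0 * intersection + eps) / (denominator + eps)


def bce_loss(probs, target, eps=1e-7):
    """Binary cross-entropy, standing in for multi-class cross-entropy."""
    total = 0.0
    for p, t in zip(probs, target):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total -= t * math.log(p) + (1.0 - t) * math.log(1.0 - p)
    return total / len(probs)


def combined_loss(probs, target):
    """Per-output loss: Dice plus cross-entropy."""
    return dice_loss(probs, target) + bce_loss(probs, target)


def deep_supervision_loss(final, auxiliary, target, lam=0.4):
    """L_total = L(final, y) + lam * sum of L(aux_i, y) over the auxiliary heads."""
    aux_term = sum(combined_loss(aux, target) for aux in auxiliary)
    return combined_loss(final, target) + lam * aux_term


target = [1.0, 0.0, 1.0, 0.0]
final_pred = [0.9, 0.1, 0.8, 0.2]
aux_preds = [[0.7, 0.3, 0.6, 0.4], [0.6, 0.4, 0.5, 0.5]]  # two auxiliary heads
print("total loss:", deep_supervision_loss(final_pred, aux_preds, target))
```

Keeping the auxiliary weight below 1 lets the intermediate heads shape the features without outweighing the final prediction.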
Each loss $\mathcal{L}$ combines Dice loss and cross-entropy loss:

$$\mathcal{L}(\hat{y}, y) = \mathcal{L}_{\mathrm{Dice}}(\hat{y}, y) + \mathcal{L}_{\mathrm{CE}}(\hat{y}, y) \tag{22}$$

Dice loss addresses class imbalance and directly measures segmentation quality, while cross-entropy provides strong gradients for effective optimization. Auxiliary predictions are upsampled to ground truth resolution via trilinear interpolation before computing losses.

4 Experimental Results

This section presents the experimental setup and the quantitative evaluations conducted to assess the effectiveness and robustness of the proposed method against state-of-the-art methods.

4.1 Experimental Setup

We evaluate RefineFormer3D against state-of-the-art 3D medical image segmentation methods on two widely used volumetric benchmarks: BraTS (Brain Tumor Segmentation) [27] and ACDC (Automatic Cardiac Diagnosis Challenge) [2]. To ensure comparability with prior work, we replicate established protocols for dataset splits, preprocessing, and evaluation. No external data sources, pretraining, or auxiliary datasets are used, and all experiments are performed on a single NVIDIA RTX 5080 GPU using the PyTorch framework.

The model parameters are optimized using the AdamW optimizer [25], with an initial learning rate of $2 \times 10^{-4}$, weight decay of $1 \times 10^{-5}$, and default betas (0.9, 0.999). After warm-up, a cosine annealing schedule [24] is employed with a minimum learning rate of $1 \times 10^{-9}$ and $T_{\max}$ set to 100 epochs, unless otherwise specified. The value of $\lambda$ (Eq. 21) is set to 0.4. For ablation studies, ReduceLROnPlateau is optionally used to adapt the learning rate based on validation loss.

To ensure robust generalization, we apply 3D data augmentation including random flipping, rotation, and Gaussian noise. During inference, we use test-time augmentation (TTA) via spatial flips, and the predictions are averaged to form the final output.
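Flip-based test-time augmentation can be sketched as follows. This is an illustration under stated assumptions rather than the authors' implementation: the paper does not say which flips are used, so the sketch averages over all eight combinations of flips along the three spatial axes; volumes are nested lists `[D][H][W]` instead of tensors; and `flip_volume`, `tta_predict`, and `depth_index_model` are hypothetical names.

```python
from itertools import product


def flip_volume(vol, axes):
    """Return a copy of a nested-list volume [D][H][W] flipped along axes (0=D, 1=H, 2=W)."""
    planes = vol[::-1] if 0 in axes else vol
    out = []
    for plane in planes:
        rows = plane[::-1] if 1 in axes else plane
        out.append([list(row[::-1]) if 2 in axes else list(row) for row in rows])
    return out


def tta_predict(model, vol):
    """Average model outputs over all 8 flip combinations, undoing each flip first."""
    depth, height, width = len(vol), len(vol[0]), len(vol[0][0])
    acc = [[[0.0] * width for _ in range(height)] for _ in range(depth)]
    flip_sets = [tuple(a for a in range(3) if mask[a])
                 for mask in product((0, 1), repeat=3)]
    for axes in flip_sets:
        # Flips are self-inverse, so applying the same flip maps the output back.
        pred = flip_volume(model(flip_volume(vol, axes)), axes)
        for z in range(depth):
            for y in range(height):
                for x in range(width):
                    acc[z][y][x] += pred[z][y][x]
    n = len(flip_sets)
    return [[[v / n for v in row] for row in plane] for plane in acc]


def depth_index_model(v):
    """Hypothetical toy model whose output depends on slice position, so flips matter."""
    return [[[float(z) for _ in row] for row in plane] for z, plane in enumerate(v)]


volume = [[[1, 2], [3, 4]], [[5, 6], [7, 8]]]
averaged = tta_predict(depth_index_model, volume)
print(averaged)
```

The toy model is deliberately position-dependent: averaging its outputs over flipped and un-flipped views removes that dependence, which is the effect TTA is meant to have on orientation-sensitive predictions.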
All models are evaluated using identical data processing, augmentation, and inference strategies.

4.2 Quantitative Results

Table 2: Performance comparison on ACDC dataset. Bold values represent best performance and underlined values indicate second best results. Parameters are reported in millions.

| Method | Params (M) | Avg (%) | RV | Myo | LV |
|---|---|---|---|---|---|
| DS-UNETR++ [18] | 67.7 | 93.03 | 92.23 | 90.82 | 96.04 |
| PFormer [9] | 46.04 | 92.33 | 91.11 | 89.93 | 95.88 |
| nnFormer [43] | 150.5 | 92.06 | 90.94 | 89.58 | 95.65 |
| GCI-Net [31] | 13.36 | 91.43 | 90.28 | 89.24 | 94.77 |
| MS-TCNet [1] | 59.49 | 91.43 | 89.43 | 89.09 | 95.77 |
| Segformer3D [30] | 4.5 | 90.96 | 88.50 | 88.86 | 95.53 |
| LeViT-Unet-384 [39] | 52.17 | 90.32 | 89.55 | 87.64 | 93.76 |
| SwinUNet [4] | – | 90.00 | 88.55 | 85.62 | 95.83 |
| TransUNet [5] | 96.07 | 89.71 | 88.86 | 85.54 | 95.73 |
| DAUNet [22] | 16.36 | 88.73 | 85.44 | 86.69 | 94.05 |
| UNETR [12] | 92.49 | 88.61 | 85.29 | 86.52 | 94.02 |
| R50-VIT-CUP [5] | 86.00 | 87.57 | 86.07 | 81.88 | 94.75 |
| VIT-CUP [5] | 86.00 | 81.45 | 81.46 | 70.71 | 92.18 |
| RefineFormer3D (GhostConv3D) | 2.94 | 93.44 | 92.19 | 91.97 | 96.14 |
| RefineFormer3D (Standard Conv3D) | 4.87 | 94.88 | 93.09 | 93.67 | 97.89 |

RV: Right Ventricle; Myo: Myocardium; LV: Left Ventricle.

Table 2 presents the performance comparison on the ACDC dataset. RefineFormer3D (GhostConv3D variant, 2.94M parameters) achieves an average Dice score of 93.44%, outperforming the best competing method, DS-UNETR++ (93.03%, 67.7M), despite using approximately 95.7% fewer parameters. The standard Conv3D variant further improves performance to 94.88% with 4.87M parameters, exceeding DS-UNETR++ by 1.85 percentage points. With the lightweight configuration, RefineFormer3D achieves 92.19% (RV), 91.97% (Myo), and 96.14% (LV), improving on DS-UNETR++ for Myo and LV and coming within 0.04 points of it on RV (92.23%). Compared to transformer-heavy baselines such as nnFormer (150.5M) and TransUNet (96.07M), our model reduces the parameter count by approximately 97–98% while maintaining superior or competitive segmentation accuracy.
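The parameter reductions quoted in this comparison follow directly from the parameter counts listed in Table 2. A minimal sketch of the arithmetic, using a hypothetical helper `param_reduction` and the counts (in millions) reported for the lightweight variant and three baselines:

```python
def param_reduction(ours_m: float, baseline_m: float) -> float:
    """Percentage of parameters saved relative to a baseline (both in millions)."""
    return 100.0 * (1.0 - ours_m / baseline_m)


OURS_M = 2.94  # RefineFormer3D (GhostConv3D), from Table 2
baselines_m = {"DS-UNETR++": 67.7, "nnFormer": 150.5, "TransUNet": 96.07}

reductions = {name: param_reduction(OURS_M, p) for name, p in baselines_m.items()}
for name, pct in reductions.items():
    print(f"{name}: {pct:.1f}% fewer parameters")
```

The same helper applies to any other row of the table, as long as the baseline's parameter count is reported.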
These results demonstrate strong performance and parameter efficiency in cardiac structure segmentation.

Table 3 presents quantitative comparisons on the BraTS dataset. RefineFormer3D achieves an average Dice of 85.9% (GhostConv3D, 2.94M) and 86.2% (standard Conv3D, 4.87M). The lightweight variant performs within 0.5 points of nnFormer (86.4%, 150.5M) while using approximately 98% fewer parameters, demonstrating a superior accuracy–complexity trade-off. Across tumor subregions, the GhostConv3D variant attains 91.5% (WT), 80.6% (ET), and 85.2% (TC), showing strong whole-tumor and tumor-core segmentation performance under extreme parameter constraints. Even when compared to mid-sized models such as SegFormer3D (4.5M) and GCI-Net (13.36M), RefineFormer3D achieves higher or competitive Dice scores with substantially reduced model capacity.

Overall, the results across both cardiac and brain tumor datasets indicate that RefineFormer3D delivers state-of-the-art or near state-of-the-art segmentation accuracy while maintaining an order-of-magnitude reduction in parameters. This establishes the proposed architecture as a highly efficient alternative to conventional transformer-based 3D segmentation frameworks.

4.3 Qualitative Analysis

To qualitatively assess segmentation performance, Figures 3 and 4 present visual comparisons of predicted segmentations with ground truth annotations on the BraTS and ACDC datasets, respectively.

Figure 3 presents segmentation outcomes on four representative cases from the BraTS dataset. Each row shows an original axial slice from multimodal brain MRI scans, followed by the ground truth annotation and the predicted segmentation. The results demonstrate effective identification and distinction of tumor subregions, including small enhancing areas and complex structures.
Predicted boundaries align well with ground truth, even in regions with irregular shapes and heterogeneous intensities.

Table 3: Performance comparison on BraTS dataset. Bold values represent best performance and underlined values indicate second-best results. Parameters are reported in millions.

| Method | Params (M) | Avg (%) | WT | ET | TC |
|---|---|---|---|---|---|
| nnFormer [43] | 150.5 | 86.4 | 91.3 | 81.8 | 86.0 |
| GCI-Net [31] | 13.36 | 85.88 | 91.58 | 85.86 | 80.20 |
| MS-TCNet [1] | 59.49 | 85.20 | 91.20 | 80.20 | 84.20 |
| DS-UNETR++ [18] | 67.7 | 83.19 | 91.68 | 78.58 | 79.30 |
| Segformer3D [30] | 4.5 | 82.1 | 89.9 | 74.2 | 82.2 |
| UNETR [12] | 92.49 | 71.1 | 78.9 | 58.5 | 76.1 |
| TransBTS [33] | – | 69.6 | 77.9 | 57.4 | 73.5 |
| CoTr [38] | 41.9 | 68.3 | 74.6 | 55.7 | 74.8 |
| CoTr w/o CNN [38] | – | 64.4 | 71.2 | 52.3 | 69.8 |
| TransUNet [5] | 96.07 | 64.4 | 70.6 | 54.2 | 68.4 |
| SETR MLA [41] | 310.5 | 63.9 | 69.8 | 55.4 | 66.5 |
| SETR PUP [41] | 318.31 | 63.8 | 69.6 | 54.9 | 67.0 |
| SETR NUP [41] | 305.67 | 63.7 | 69.7 | 54.4 | 66.9 |
| RefineFormer3D (GhostConv3D) | 2.94 | 85.9 | 91.5 | 80.6 | 85.2 |
| RefineFormer3D (Standard Conv3D) | 4.87 | 86.2 | 91.8 | 81.3 | 85.6 |

WT: Whole Tumor; ET: Enhancing Tumor; TC: Tumor Core.

Figure 4 illustrates segmentation results on four representative ACDC cases. Each row shows an original cardiac MRI slice, followed by the corresponding ground truth segmentation and the predicted segmentation. The predicted masks closely match the ground truth, particularly around the LV and myocardium edges. The model effectively captures detailed boundaries and adapts well to different anatomical variations. This demonstrates strong potential for clinical applications where reliable segmentation is essential.

4.4 Robustness to Reduced Training Data

To test generalization under limited supervision, we progressively reduce the BraTS training set to 90%, 70%, and 50% of the original cases while keeping the test set unchanged. As presented in Table 4, RefineFormer3D maintains stable performance across all settings: 85.90% (100%), 85.80% (90%), 84.86% (70%), and 82.50% (50%).
The performance degradation remains modest even under severe data reduction. Reducing the training data to 70% lowers Dice to 84.86%, a 1.04-point decrease relative to the full training set (0.94 points relative to the 90% setting), while halving the dataset (50%) leads to a 3.40-point absolute drop. This gradual decline suggests strong generalization capability and resistance to overfitting. We attribute the robustness observed on the reduced training sets to the compact architectural design, which limits over-parameterization while preserving effective contextual modeling. In the medical imaging domain, where annotated volumetric data are scarce and costly to obtain, such stability under reduced supervision is particularly valuable. These findings suggest that RefineFormer3D provides favorable regularization characteristics.

Table 4: Robustness of RefineFormer3D to reduced training data on BraTS.

| % Training Set | Dice (%) | Drop in Dice |
|---|---|---|
| 100% | 85.90 | – |
| 90% | 85.80 | 0.10 |
| 70% | 84.86 | 0.94 |
| 50% | 82.50 | 3.40 |

Figure 3: Visual comparison of original MRI, ground truth, and predicted segmentation (columns: Original MRI, Ground Truth, Predicted) for four representative BraTS cases showing whole tumor (yellow), enhancing tumor (orange), and tumor core (cyan) regions.

Figure 4: Visual comparison of original MRI, ground truth, and predicted segmentation (columns: Original MRI, Ground Truth, Predicted) for four representative ACDC cardiac cases showing right ventricle (RV), myocardium, and left ventricle (LV).

4.5 Componentwise Ablation Analysis

We conducted ablations on the BraTS dataset to quantify the contribution of each major component: MixFFN3D, Cross-Attention Fusion, and GhostConv3D. As shown in Table 5, removing or replacing MixFFN3D or the cross-attention fusion decreases Dice performance, while replacing GhostConv3D with standard convolutions trades a small Dice gain for a much larger parameter count, confirming the complementary roles of these modules in efficiency and accuracy.

Table 5: Ablation results on BraTS.
Each variant is trained under identical settings. Dice (%) ↑; Δ indicates the performance drop relative to the full model.

| Variant | Dice (%) | Params (M) |
|---|---|---|
| Full RefineFormer3D | 85.90 | 2.94 |
| w/o MixFFN3D → Dense MLP | 84.87 | 2.88 |
| w/o Cross-Attention Fusion → Concat + Conv3D | 83.22 | 3.00 |
| GhostConv3D → Standard Conv3D | 86.21 | 4.87 |

Replacing MixFFN3D with a dense MLP leads to a 1.03-point drop in Dice score (85.90% → 84.87%) at a similar parameter count (2.94M → 2.88M), supporting the value of its low-rank design. Removing the cross-attention fusion and using simpler concatenation with Conv3D results in the largest performance degradation of 2.68 points (85.90% → 83.22%), demonstrating its critical role in feature fusion. Replacing GhostConv3D with standard Conv3D increases parameters by 66% (2.94M → 4.87M) while improving Dice slightly to 86.21%, indicating that standard convolutions offer marginally better accuracy at a substantial parameter cost. Together, these ablations indicate that each component contributes to the accuracy-efficiency trade-off achieved by RefineFormer3D.

4.6 Inference Efficiency and Memory Usage

Table 6 summarizes the inference and memory performance of RefineFormer3D versus transformer-based 3D segmentation baselines. It offers a favorable trade-off between speed, accuracy, and model size, requiring only 2.94M parameters and 191.2 GFLOPs. Despite being nearly 50× smaller than nnFormer, 20× smaller than SwinUNETR, and 15× smaller than PFormer, it sustains rapid inference with a forward-pass latency of only 8.35 ms on GPU and 296.2 ms on CPU.

Table 6: Inference efficiency on BraTS input ($1 \times 4 \times 128^3$). Times averaged over 200 passes. Env: RTX 5080, Ryzen 9 9950X, PyTorch 2.8, CUDA 12.8.
| Model | Params (M) | FLOPs (G) | Mem† (GB) | GPU (ms) | CPU (ms) |
|---|---|---|---|---|---|
| UNETR [12] | 92.5 | 153.5 | 3.3 | 82.5 | 2145 |
| SwinUNETR [11] | 62.8 | 572.4 | 19.7 | 228.6 | 7612 |
| nnFormer [43] | 149.6 | 421.5 | 12.6 | 148.0 | 5248 |
| DS-UNETR++ [18] | 42.6 | 70.1 | 2.4 | 62.4 | 1498 |
| RefineFormer3D | 2.94 | 191.2 | 1.5 | 8.35 | 296 |

† Peak GPU memory via PyTorch allocator; system peak 2.1 GB.

The peak GPU memory footprint is significantly lower than that of prior transformer baselines, making RefineFormer3D suitable for both workstation and embedded deployment. When normalized by computational cost using the values in Table 6, RefineFormer3D attains approximately 0.044 ms/GFLOP, 0.63 vol/s/GFLOP, and 0.51 GB of memory per million parameters, compared to UNETR++ (0.89, 0.23, and 0.056, respectively; these values correspond to the DS-UNETR++ row of Table 6). The first two ratios indicate better latency and throughput per FLOP.

5 Conclusion

In this work, we presented RefineFormer3D, a parameter-efficient hierarchical transformer-based architecture for 3D medical image segmentation that systematically addresses the accuracy–efficiency trade-off. By integrating GhostConv3D-based patch embedding, MixFFN3D with low-rank spatial–channel mixing, and an adaptive cross-attention skip fusion decoder, the proposed framework achieves strong contextual modeling with minimal computational overhead. The experiments on the BraTS and ACDC benchmarks demonstrate that RefineFormer3D achieves state-of-the-art or competitive Dice performance while operating with substantially fewer parameters and a reduced memory footprint compared to competitive transformer baselines. By improving throughput and deployment feasibility, RefineFormer3D contributes toward the translation of transformer-based segmentation systems into real-world clinical workflows.

Future work will evaluate the model's ability to generalize across different imaging modalities and adapt to data variability in multi-institutional settings.
Additionally, we will investigate its integration into end-to-end computer-assisted diagnosis and clinical decision-support systems.

Acknowledgments

The authors would like to thank the researchers who made the BraTS and ACDC datasets publicly available. We also acknowledge the computational resources provided by the Indian Institute of Technology Ropar.

Declaration of Competing Interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Data Availability

The datasets analyzed during the current study are publicly available:
• BraTS: https://www.med.upenn.edu/cbica/brats/
• ACDC: https://www.creatis.insa-lyon.fr/Challenge/acdc/

References

[1] Yu Ao, Weili Shi, Bai Ji, Yu Miao, Wei He, and Zhengang Jiang. MS-TCNet: An effective transformer–CNN combined network using multi-scale feature learning for 3D medical image segmentation. Computers in Biology and Medicine, 170:108057, 2024.
[2] Olivier Bernard, Alain Lalande, Clement Zotti, Frederick Cervenansky, Xin Yang, Pheng-Ann Heng, et al. Deep learning techniques for automatic MRI cardiac multi-structures segmentation and diagnosis: Is the problem solved? IEEE Transactions on Medical Imaging, 37(11):2514–2525, November 2018.
[3] Sijing Cai, Yunxian Tian, Harvey Lui, Haishan Zeng, Yi Wu, and Guannan Chen. Dense-UNet: A novel multiphoton in vivo cellular image segmentation model based on a convolutional neural network. Quantitative Imaging in Medicine and Surgery, 10(6), 2020.
[4] Hu Cao, Yueyue Wang, Joy Chen, Dongsheng Jiang, Xiaopeng Zhang, Qi Tian, and Manning Wang. Swin-Unet: Unet-like pure transformer for medical image segmentation. In European Conference on Computer Vision, pages 205–218. Springer, 2022.
[5] Jieneng Chen, Yongyi Lu, Qihang Yu, Xiangde Luo, Ehsan Adeli, Yan Wang, Le Lu, Alan L.
Yuille, and Yuyin Zhou. TransUNet: Transformers make strong encoders for medical image segmentation. Medical Image Analysis, 77:102352, 2022.
[6] Linwei Chen, Lin Gu, Dezhi Zheng, and Ying Fu. Frequency-adaptive dilated convolution for semantic segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 3414–3425, 2024.
[7] Tong Chen, Haojie Liu, Zhan Ma, Qiu Shen, Xun Cao, and Yao Wang. End-to-end learnt image compression via non-local attention optimization and improved context modeling. IEEE Transactions on Image Processing, 30:3179–3191, 2021.
[8] Yuan Feng, Kexiao Peng, Jiaolong Wei, and Zuping Tang. Window attention convolution network (WACN): A local self-attention automatic modulation recognition method. IEEE Transactions on Cognitive Communications and Networking, 10(2):502–515, 2024.
[9] Yueyang Gao, Jinhui Zhang, Siyi Wei, and Zheng Li. PFormer: An efficient CNN-transformer hybrid network with content-driven P-attention for 3D medical image segmentation. Biomedical Signal Processing and Control, 101:107154, 2025.
[10] Kai Han, Yunhe Wang, Qi Tian, Jianyuan Guo, Chunjing Xu, and Chang Xu. GhostNet: More features from cheap operations. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 1580–1589, 2020.
[11] Ali Hatamizadeh, Vishwesh Nath, Yucheng Tang, Dong Yang, Holger R Roth, and Daguang Xu. Swin UNETR: Swin transformers for semantic segmentation of brain tumors in MRI images. In International MICCAI Brainlesion Workshop, pages 272–284. Springer, 2021.
[12] Ali Hatamizadeh, Yucheng Tang, Vishwesh Nath, Dong Yang, Andriy Myronenko, Bennett Landman, Holger R. Roth, and Daguang Xu. UNETR: Transformers for 3D medical image segmentation. In 2022 IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), pages 1748–1758, 2022.
[13] Jie Hu, Li Shen, and Gang Sun. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2018.
[14] Gao Huang, Yu Sun, Zhuang Liu, Daniel Sedra, and Kilian Q. Weinberger. Deep networks with stochastic depth. CoRR, abs/1603.09382, 2016.
[15] Md Shamim Hussain, Mohammed J Zaki, and Dharmashankar Subramanian. Global self-attention as a replacement for graph convolution. In Proceedings of the 28th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, pages 655–665, 2022.
[16] Fabian Isensee, Paul F Jaeger, Simon AA Kohl, Jens Petersen, and Klaus H Maier-Hein. nnU-Net: A self-configuring method for deep learning-based biomedical image segmentation. Nature Methods, 18(2):203–211, 2021.
[17] Fabian Isensee, Jens Petersen, André Klein, David Zimmerer, Paul F. Jaeger, Simon Kohl, Jakob Wasserthal, Gregor Köhler, Tobias Norajitra, Sebastian J. Wirkert, and Klaus H. Maier-Hein. nnU-Net: Self-adapting framework for U-Net-based medical image segmentation. CoRR, abs/1809.10486, 2018.
[18] Chunhui Jiang, Yi Wang, Qingni Yuan, Pengju Qu, and Heng Li. A 3D medical image segmentation network based on gated attention blocks and dual-scale cross-attention mechanism. Scientific Reports, 15(1):6159, February 2025.
[19] Amin Karimi Monsefi, Payam Karisani, Mengxi Zhou, Stacey Choi, Nathan Doble, Heng Ji, Srinivasan Parthasarathy, and Rajiv Ramnath. Masked LogoNet: Fast and accurate 3D image analysis for medical domain. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, pages 1348–1359, 2024.
[20] Xiaomeng Li, Hao Chen, Xiaojuan Qi, Qi Dou, Chi-Wing Fu, and Pheng-Ann Heng. H-DenseUNet: Hybrid densely connected UNet for liver and liver tumor segmentation from CT volumes. CoRR, abs/1709.07330, 2017.
[21] Zan Li, Hong Zhang, Zhengzhen Li, and Zuyue Ren.
Residual-attention UNet++: A nested residual-attention U-Net for medical image segmentation. Applied Sciences, 12:7149, July 2022.
[22] Qinghao Liu, Min Liu, Yuehao Zhu, Licheng Liu, Zhe Zhang, and Yaonan Wang. DAUNet: A deformable aggregation UNet for multi-organ 3D medical image segmentation. Pattern Recognition Letters, 191:58–65, 2025.
[23] Ze Liu, Yutong Lin, Yue Cao, Han Hu, Yixuan Wei, Zheng Zhang, Stephen Lin, and Baining Guo. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 10012–10022, 2021.
[24] Ilya Loshchilov and Frank Hutter. SGDR: Stochastic gradient descent with restarts. CoRR, abs/1608.03983, 2016.
[25] Ilya Loshchilov and Frank Hutter. Fixing weight decay regularization in Adam. CoRR, abs/1711.05101, 2017.
[26] Ekdeep S Lubana, Robert Dick, and Hidenori Tanaka. Beyond BatchNorm: Towards a unified understanding of normalization in deep learning. Advances in Neural Information Processing Systems, 34:4778–4791, 2021.
[27] Bjoern H. Menze, Andras Jakab, Stefan Bauer, Jayashree Kalpathy-Cramer, Keyvan Farahani, Justin Kirby, Yuliya Burren, Nicole Porz, Johannes Slotboom, Roland Wiest, and Koen Van Leemput. The multimodal brain tumor image segmentation benchmark (BRATS). IEEE Transactions on Medical Imaging, 34(10):1993–2024, 2015.
[28] Fausto Milletari, Nassir Navab, and Seyed-Ahmad Ahmadi. V-Net: Fully convolutional neural networks for volumetric medical image segmentation. In 2016 Fourth International Conference on 3D Vision (3DV), pages 565–571, 2016.
[29] Özgün Çiçek, Ahmed Abdulkadir, Soeren S. Lienkamp, Thomas Brox, and Olaf Ronneberger. 3D U-Net: Learning dense volumetric segmentation from sparse annotation. CoRR, abs/1606.06650, 2016.
[30] Shehan Perera, Pouyan Navard, and Alper Yilmaz.
SegFormer3D: An efficient transformer for 3D medical image segmentation. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), pages 4981–4988, Los Alamitos, CA, USA, June 2024. IEEE Computer Society.
[31] Qiang Qiao, Meixia Qu, Wenyu Wang, Bin Jiang, and Qiang Guo. Effective global context integration for lightweight 3D medical image segmentation. IEEE Transactions on Circuits and Systems for Video Technology, 35(5):4661–4674, 2025.
[32] Olaf Ronneberger, Philipp Fischer, and Thomas Brox. U-Net: Convolutional networks for biomedical image segmentation. In International Conference on Medical Image Computing and Computer-Assisted Intervention, pages 234–241. Springer, 2015.
[33] Wenxuan Wang, Chen Chen, Meng Ding, Hong Yu, Sen Zha, and Jiangyun Li. TransBTS: Multimodal brain tumor segmentation using transformer. In Marleen de Bruijne, Philippe C. Cattin, Stéphane Cotin, Nicolas Padoy, Stefanie Speidel, Yefeng Zheng, and Caroline Essert, editors, Medical Image Computing and Computer Assisted Intervention – MICCAI 2021, pages 109–119, Cham, 2021. Springer International Publishing.
[34] Xinyi Wu, Amir Ajorlou, Yifei Wang, Stefanie Jegelka, and Ali Jadbabaie. On the role of attention masks and LayerNorm in transformers. Advances in Neural Information Processing Systems, 37:14774–14809, 2024.
[35] Yu-Huan Wu, Yun Liu, Xin Zhan, and Ming-Ming Cheng. P2T: Pyramid pooling transformer for scene understanding. IEEE Transactions on Pattern Analysis and Machine Intelligence, 45(11):12760–12771, 2022.
[36] Zhuofan Xia, Xuran Pan, Shiji Song, Li Erran Li, and Gao Huang. Vision transformer with deformable attention. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 4794–4803, 2022.
[37] Enze Xie, Wenhai Wang, Zhiding Yu, Anima Anandkumar, Jose M Alvarez, and Ping Luo.
SegFormer: Simple and efficient design for semantic segmentation with transformers. Advances in Neural Information Processing Systems, 34:12077–12090, 2021.
[38] Yutong Xie, Jianpeng Zhang, Chunhua Shen, and Yong Xia. CoTr: Efficiently bridging CNN and transformer for 3D medical image segmentation. In International Conference on Medical Image Computing and Computer-Assisted Intervention (MICCAI), pages 171–180. Springer, 2021.
[39] Guoping Xu, Xingrong Wu, Xuan Zhang, and Xinwei He. LeViT-UNet: Make faster encoders with transformer for medical image segmentation. CoRR, abs/2107.08623, 2021.
[40] Yundong Zhang, Huiye Liu, and Qiang Hu. TransFuse: Fusing transformers and CNNs for medical image segmentation. In International Conference on Medical Image Computing and Computer-Assisted Intervention, pages 14–24. Springer, 2021.
[41] Sixiao Zheng, Jiachen Lu, Hengshuang Zhao, Xiatian Zhu, Zekun Luo, Yabiao Wang, Yanwei Fu, Jianfeng Feng, Tao Xiang, Philip H. S. Torr, and Li Zhang. Rethinking semantic segmentation from a sequence-to-sequence perspective with transformers. CoRR, abs/2012.15840, 2020.
[42] Sixiao Zheng, Jiachen Lu, Hengshuang Zhao, Xiatian Zhu, Zekun Luo, Yabiao Wang, Yanwei Fu, Jianfeng Feng, Tao Xiang, Philip HS Torr, et al. Rethinking semantic segmentation from a sequence-to-sequence perspective with transformers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 6881–6890, 2021.
[43] Hong-Yu Zhou, Jiansen Guo, Yinghao Zhang, Xiaoguang Han, Lequan Yu, Liansheng Wang, and Yizhou Yu. nnFormer: Volumetric medical image segmentation via a 3D transformer. IEEE Transactions on Image Processing, 32:4036–4045, 2023.
[44] Zongwei Zhou, Md Mahfuzur Rahman Siddiquee, Nima Tajbakhsh, and Jianming Liang. UNet++: A nested U-Net architecture for medical image segmentation.
In International Workshop on Deep Learning in Medical Image Analysis, pages 3–11. Springer, 2018.
[45] Qikui Zhu, Bo Du, Baris Turkbey, Peter L. Choyke, and Pingkun Yan. Deeply-supervised CNN for prostate segmentation. In 2017 International Joint Conference on Neural Networks (IJCNN), pages 178–184, 2017.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

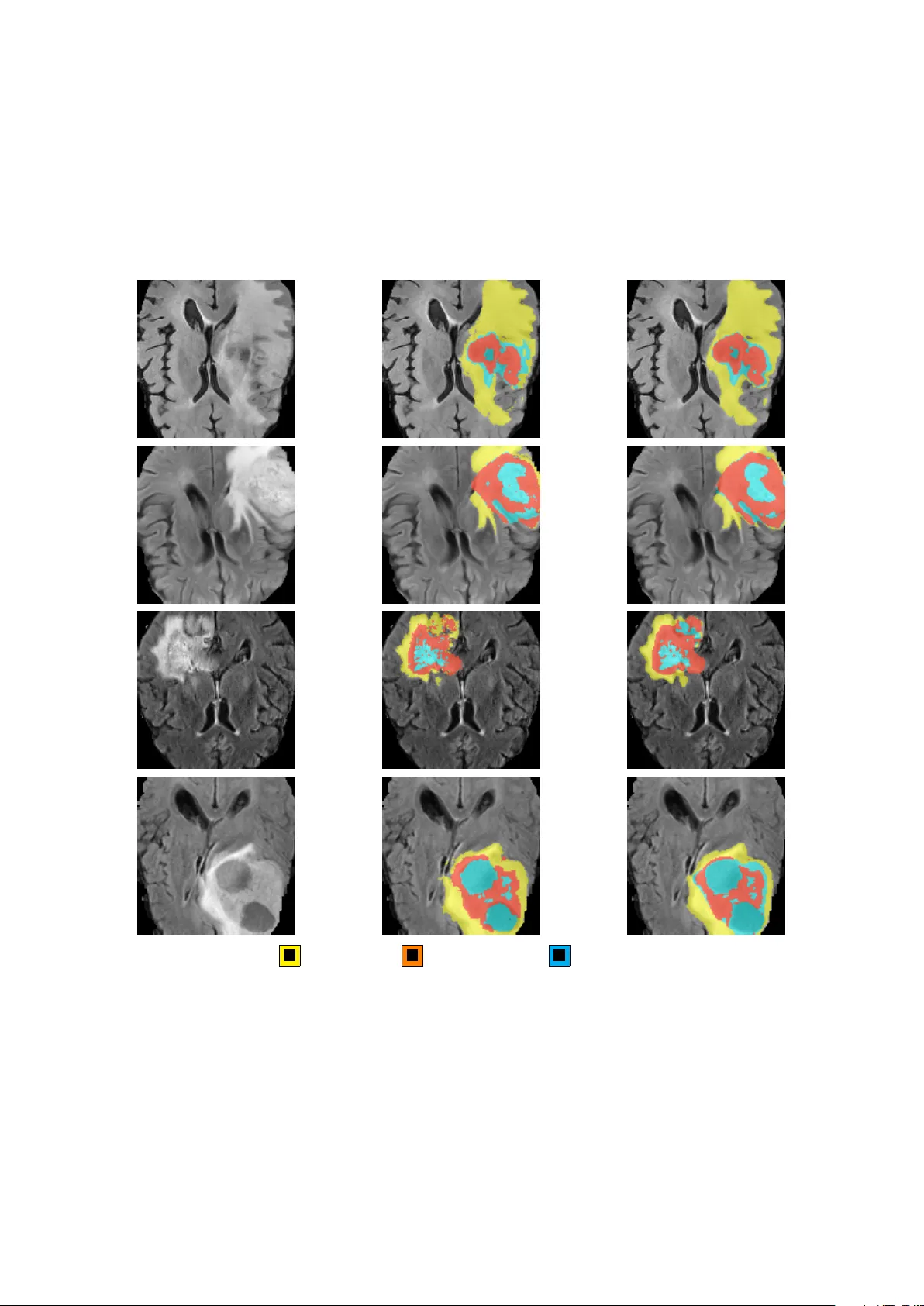

Leave a Comment