Collaborative Safe Bayesian Optimization

Mobile networks require safe optimization to adapt to changing conditions in traffic demand and signal transmission quality, in addition to improving service performance metrics. With the increasing complexity of emerging mobile networks, traditional…

Authors: Alina Castell Blasco, Maxime Bouton

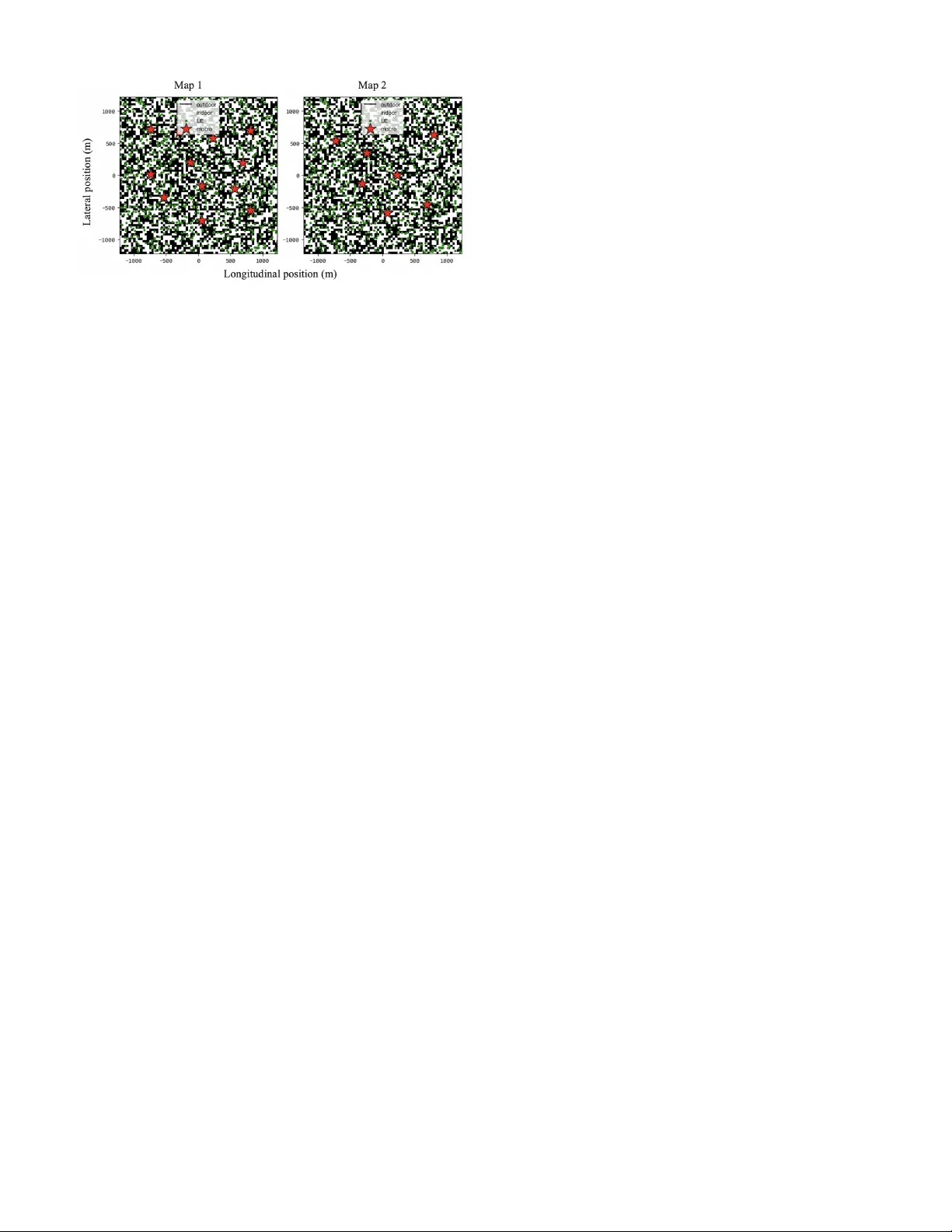

Affiliation: Ericsson Research, Stockholm

Abstract: Mobile networks require safe optimization to adapt to changing conditions in traffic demand and signal transmission quality, in addition to improving service performance metrics. With the increasing complexity of emerging mobile networks, traditional parameter tuning methods become too conservative or complex to evaluate. For the first time, we apply safe Bayesian optimization to mobile networks. Moreover, we develop a new safe collaborative optimization algorithm called CoSBO, leveraging information from multiple optimization tasks in the network and considering multiple safety constraints. The resulting algorithm is capable of safely tuning the network parameter online with very few iterations. We demonstrate that the proposed method improves sample efficiency in the early stages of the optimization process by comparing it against the SafeOpt-MC algorithm in a mobile network scenario.

Index Terms: Safe Bayesian Optimization, Gaussian Processes, Artificial Intelligence for Mobile Networks, 5G and 6G Networks.

I. INTRODUCTION

Modern mobile networks consist of complex systems where key parameters, such as antenna tilt or beamwidth, directly influence the coverage quality experienced by users. Optimizing these parameters is essential for maintaining efficient network performance and adapting to changing environmental conditions. However, evaluating the impact of new configurations is complex. It requires simulations or testing with real-world systems which might take days and can be challenging due to safety constraints such as maintaining minimum coverage levels or minimum signal quality.

This paper addresses the problem of safely and efficiently optimizing performance-related parameters in mobile network systems, since the evaluation of each new parameter might take up to a day to complete.
The goal is to develop a method that maximizes performance subject to safety constraints through online optimization, that is, by safely exploring parameter values directly in the network. In addition, our objective is to use data from collaborative agents to improve the sample efficiency of current methods.

With the increasing complexity of modern mobile networks, such as 5G and future 6G networks, traditional methods like rule-based strategies have become too conservative. Promising approaches like reinforcement learning (RL) require extensive offline training data or high-quality simulators, and safe evaluation in the network is challenging. Bayesian Optimization (BO), and in particular safe Bayesian Optimization (safe BO), addresses some of these limitations. Unlike RL-based methods that require training, safe BO is an online optimization approach. It uses probabilistic models, typically Gaussian processes (GPs), to explore the parameter space and iteratively update the knowledge about performance while ensuring that each new evaluation is safe. Safe BO uses fewer evaluations than RL methods. However, it requires an initial safe set which might be small or poorly located, which increases the number of evaluations needed to reach an optimal configuration or causes the algorithm to get stuck in local optima.

(This work has been performed with support of Sweden's Innovation Agency.)

We propose a new algorithm called Collaborative Safe Bayesian Optimization (CoSBO) that builds on SafeOpt-MC [1], the standard safe BO algorithm for multiple constraints, by introducing a collaborative initialization strategy. The proposed method differs from previous methods in several ways. Unlike rule-based systems, it adapts dynamically to the data. In contrast to RL-based methods, it optimizes online with no pre-training required.
Although BO already improves efficiency by balancing exploration and exploitation, we further improve sample efficiency by integrating external data into the optimization process and show that it helps discover new optimal regions.

The first contribution of this paper is CoSBO, the new algorithm that takes advantage of the similarity between agents, such as antennas in similar geographical or network traffic conditions, to enrich the initial estimation model of the network performance. By identifying the most correlated collaborator among a set of network optimization problems and transferring a subset of its data, the optimization process starts with a more informed model, without requiring additional evaluations at the initial stage. The quality and availability of the collaborator data are key factors to consider, since the benefit may be reduced if the selected collaborator is poorly correlated.

The second contribution consists of applying a safe Bayesian optimization method to the telecommunications domain for the first time. This is done through a careful modeling of safety and performance functions for mobile networks and is evaluated through a series of simulation-based experiments. The method is shown to reach high-performing configurations with very few parameter evaluations in the network while maintaining formal safety guarantees.

In general, this work contributes to the development of adaptive, data-efficient, and safety-constrained optimization techniques for modern mobile networks.

The outline of this paper continues with related work and background, followed by a description of the problem and the CoSBO algorithm, and finishes with details on the experiments performed, results obtained, and concluding remarks.

The code for the algorithm is available at: https://github.com/EricssonResearch/collaborative-safe-bo, including hyperparameters and simulated datasets used in our experiments.

II. RELATED WORK

Recent studies provide RL-based approaches that optimize parameters in mobile networks in a multi-agent setting, such as antenna tilt optimization [2]. These methods require an offline training phase that uses a large amount of simulated data, since it is too risky and expensive to retrieve samples from real systems, and they lack safety guarantees. In contrast, our work focuses on online optimization, aims to reduce the number of evaluations to limit risks and the computational cost of training, and considers safety guarantees.

Instead of RL-based methods, we rely on Berkenkamp et al.'s algorithm, SafeOpt-MC [1], in the core structure of our method. This algorithm balances exploration of the safe set of configurations and exploitation of the optimal one, similar to Sui et al.'s algorithm, SafeOpt [3]. Maggi et al. [4] applied Bayesian optimization to radio resource management in cellular networks. However, their approach accounts for neither safety constraints during optimization nor collaborative data. In this paper, we use Berkenkamp et al.'s approach, which formalizes performance and safety as separate functions to optimize, and adapt it to mobile network scenarios.

Unlike CoSBO, none of the existing safe BO algorithms provides a collaborative framework. Existing multi-agent optimization works create simulated functions [5], or optimize multiple agents in parallel [6]. By contrast, we work with real-world performance functions, and we aim to safely optimize a single agent, respectively. Other works [7] [8] define a global reward function and a global safety constraint, which is useful for planning instead of optimization. Our method uses similarities between different parameters and network areas to both speed up the optimization of the safe BO method and broaden the exploration while maintaining safety guarantees.

III. BACKGROUND

In this section, we present Gaussian processes (GPs) and the standard safe Bayesian optimization method, which are the mathematical foundations of our Collaborative Safe Bayesian Optimization algorithm in Section V.

A. Gaussian Processes (GPs)

Optimization problems involve finding the best parameter configuration to achieve the maximum possible performance. Generally, this performance or objective function is unknown, so we use models that can approximate the behavior of the system based on previous observations. These approximations are called probabilistic surrogate models. They construct estimations $\hat{f}$ of the true objective function $f$ based on observations or evaluations of this function and quantify confidence in these predictions [9]. We use Gaussian processes (GPs) as our probabilistic surrogate model since they are suitable for complex black-box functions and can explicitly account for noisy performance measurements, with the predictive variance reflecting this uncertainty [1].

GPs represent a probability distribution over functions, i.e., the estimated function values are modeled as samples of a Gaussian distribution [10]. That is, the predicted value at any point in the function comes with an uncertainty estimate. The prediction approximates the value of the true objective function at that point, and the uncertainty is used to decide which point to evaluate next. When no evaluations of the objective function have been made, the GP is described by its prior distribution

$$f(x) \sim \mathcal{GP}(\mu(x), k(x, x')), \quad x, x' \in \mathcal{X},$$

where $\mu(x)$ is the prior mean function and $k(x, x')$ is the covariance function or kernel between two points $x, x'$ of $\mathcal{X}$. In this work, the parameter space $\mathcal{X}$ corresponds to domains of network configuration parameters such as the electrical tilt of an antenna. The mean function represents prior knowledge about the function and the kernel controls the smoothness of the functions [9].
The kernel we use is the squared exponential (RBF), formally described in [9], eq. (15.9), due to its particular smoothness properties and common usage in the safe optimization literature. Given a set of points $x$ and their associated observations $y$, the next step is to predict the value $\hat{y}$ at a point $x^*$ conditioned on the previously observed values. Thus, we construct the GP posterior distribution, the distribution of possible unobserved values conditioned on observed values [9].

B. Safe Bayesian Optimization

Safe BO algorithms aim to maximize an unknown objective function while ensuring that every selected parameter satisfies safety constraints with high confidence. The optimization is restricted to a safe set of parameters that is gradually expanded as the algorithm gains more information about the behavior of the system.

The algorithm starts with an initial safe set of points $S_0 \subseteq \mathcal{X}$ where safety constraints are satisfied. It then iteratively explores the parameter space to expand the region classified as safe while balancing exploitation to find the optimal value. The point of convergence is one reachable from the initial safe region through safe steps. Thus, if the initial set is poorly defined and the exploration hyperparameter is conservative, the algorithm may fail to explore beyond it and will converge to the optimal point within the safe region explored [1] [3].

IV. PROBLEM STATEMENT

In this section, we present a formal definition of the constrained optimization for mobile networks and the system model.

A. Constrained Bayesian optimization

Our goal is to maximize an unknown performance function $f : \mathcal{X} \to \mathbb{R}$, e.g., throughput per user or signal quality of an antenna, where $\mathcal{X} \subseteq \mathbb{R}^d$ is the domain of input parameters such as electrical tilt. Since $f$ is not known a priori, we can only learn about it through evaluations at selected parameter points $x \in \mathcal{X}$, e.g., certain tilt values. These evaluations yield observations that are noisy, because signal propagation is influenced by diverse environmental and network factors, and take the form

$$\hat{f}(x) = f(x) + \omega_n, \quad \omega_n \sim \mathcal{N}(0, \sigma_n^2), \tag{1}$$

where $\omega_n$ is Gaussian noise with zero mean and noise variance $\sigma_n^2$. This variance quantifies the uncertainty in the measurements.

Optimizing our objective function $f$ means obtaining its maximum value. For that, we construct an accurate estimate function $\hat{f}$, or surrogate model, based on these noisy observations. Specifically, we sequentially evaluate a finite set of parameter points $X = [x_1, x_2, \ldots, x_n]$ and obtain corresponding noisy observations $\hat{f}(x_i)$ for each $x_i \in X$. The surrogate model leverages these observations to approximate the true underlying function $f$, guiding the search for its maximum.

The evaluated parameter points must satisfy a set of safety constraints (e.g., fraction of users above a signal quality level) defined by $q$ unknown constraint functions of the form $g_i(x) \geq 0$, $g_i : \mathcal{X} \to \mathbb{R}$, where $i \in \mathcal{I}_g = \{1, \ldots, q\}$ are the safety function indices, which belong to the set of function indices, $\mathcal{I}_g \subset \mathcal{I}$. As with the objective function, the safety constraints are estimated from noisy observations since they are unknown a priori.

Neither the performance function nor the safety constraints are known. However, we assume the existence of an initial safe set of parameters $S_0 \subseteq \mathcal{X}$ known to satisfy the safety conditions. This starting point is derived from domain knowledge. In telecommunications applications, prior experience and system knowledge allow experts to identify parameter configurations that are guaranteed to be safe, for example, based on current deployment practices. With our CoSBO algorithm we provide an initial safe set intelligently informed by experience from different antenna parameter tuning problems.
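
To make the surrogate model concrete, the following is a minimal sketch of a GP posterior with a squared exponential kernel fitted to noisy observations of the form in Eq. (1). It is an illustration under stated assumptions, not the authors' implementation: the function names are hypothetical, the input is one-dimensional, the prior mean is a constant, and the default hyperparameter values are borrowed from Table II.

```python
import numpy as np


def rbf_kernel(a, b, variance=0.5, lengthscale=1.0):
    """Squared exponential kernel between two 1-D arrays of inputs."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / lengthscale**2)


def gp_posterior(x_obs, y_obs, x_query, variance=0.5, lengthscale=1.0,
                 noise_var=1e-4, prior_mean=0.0):
    """Posterior mean and variance at x_query given noisy observations."""
    k_oo = rbf_kernel(x_obs, x_obs, variance, lengthscale)
    k_oo = k_oo + noise_var * np.eye(len(x_obs))  # add observation noise
    k_qo = rbf_kernel(x_query, x_obs, variance, lengthscale)

    # Cholesky factorization for a numerically stable solve.
    chol = np.linalg.cholesky(k_oo)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_obs - prior_mean))
    mean = prior_mean + k_qo @ alpha

    v = np.linalg.solve(chol, k_qo.T)
    prior_var = np.full(len(x_query), variance)
    var = prior_var - np.sum(v**2, axis=0)
    return mean, np.maximum(var, 0.0)  # clip tiny negative round-off


# Hypothetical usage: three noisy evaluations of an unknown function.
x_obs = np.array([0.0, 1.0, 2.0])
y_obs = np.sin(x_obs)
mean, var = gp_posterior(x_obs, y_obs, np.linspace(0.0, 2.0, 5))
```

The posterior variance shrinks near evaluated points and returns to the prior variance far from them, which is the property the safe set construction relies on.
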

The optimization process then proceeds iteratively: at each time step $t$, the algorithm selects a new parameter point $x_t \in \mathcal{X}$ to evaluate, ensuring that $x_t$ is safe according to the current model estimates. Only parameters within the feasible region (where all safety constraints are satisfied) are evaluated. Formally, the optimization problem is defined as:

$$\max_{x \in \mathcal{X}} f(x) \quad \text{subject to} \quad g_i(x) \geq 0, \quad i = 1, \ldots, q. \tag{2}$$

Nevertheless, as mentioned in Section III-B, we can only find the optimal point reachable from the initial safe region and learn the safety constraints up to some accuracy $\epsilon$. Therefore, we define the reachable safe set $R_\epsilon(S_0)$ as the set of parameters that can be safely explored starting from the initial set $S_0$, given the estimation accuracy $\epsilon$, and without going through unsafe parameters. Consequently, the true optimal value is defined as:

$$f^*_\epsilon = \max_{x \in R_\epsilon(S_0)} f(x) \quad \text{s.t.} \quad g_i(x) \geq 0, \quad i = 1, \ldots, q, \tag{3}$$

where

$$R_\epsilon(S) := S \cup \bigcap_{i \in \mathcal{I}_g} \{ x \in \mathcal{X} \mid \exists\, x' \in S : g_i(x') - \epsilon \geq 0 \}. \tag{4}$$

B. System Model

Having introduced the formal problem, we now describe a real mobile network application. This scenario takes place in a region composed of multiple urban areas, where each has its own size, population density, geographical topology, and mobile network infrastructure with base stations and antennas. These factors influence the performance of the antennas and are reflected in their performance functions, which may differ or show similarities among areas with comparable characteristics. The optimization problem begins when a new antenna is to be deployed: its performance function is initially unknown and must be optimized to identify the best configuration, while simultaneously satisfying a safety constraint that guarantees minimum coverage for the served cell.

Fig. 1. Illustration of two mobile network scenarios where the antenna parameter being adjusted on the left is the horizontal beamwidth, and on the right is the electrical tilt. Each base station includes three cells. Both scenarios show two negative outcomes (non-connected users) resulting from beamwidth/tilt configuration and insufficient coordination.

We consider two performance functions ($f$) corresponding to different parameters illustrated in Fig. 1, beamwidth and tilt, in addition to a coverage safety constraint ($g$). We define the following measurements based on Reference Signal Received Power (RSRP), since it is proportional to the antenna gain and the antenna gain is a function of tilt and beamwidth as described in [11], eq. (3). We compute the Signal to Interference and Noise Ratio (SINR) according to [11], eq. (4), and the throughput according to [11], eq. (5). The beamwidth performance $f$ is quantified by the number of users with good coverage and low interference, the tilt performance $f$ by the average user throughput, and the safety constraint $g$ by the number of users with acceptable RSRP. The respective formulas are as follows:

$$\text{TotalUsers} = \sum_{u=1}^{N} \mathbb{I}\left[\text{Interference}_u < h_{\text{interference}} \wedge \text{RSRP}_u > h_{\text{RSRP}}\right] \tag{5}$$

$$\text{Throughput} = \frac{1}{N} \sum_{u=1}^{N} \text{Throughput}_u \tag{6}$$

$$\text{RSRP}_{\text{count}} = \sum_{u=1}^{N} \mathbb{I}\left[\text{RSRP}_u > h_{\text{RSRP}}\right], \tag{7}$$

where $u$ indexes users, $N$ is the total number of users, and the thresholds $h_{\text{interference}}$ and $h_{\text{RSRP}}$ correspond to the 50th percentile of interference and the 5th percentile of RSRP across users in dB, respectively, measured in the initial state and used as constants throughout the optimization.

V. THE COSBO METHOD

In this section, we introduce a novel collaborative extension of the SafeOpt-MC algorithm [1]. Our algorithm leverages multiple agents by identifying the one most similar to the main agent and transferring its data to the main agent, thereby enhancing the optimization process. We illustrate an example run in Fig. 2, and the full procedure is described in Algorithm 1.

A. Collaborative initialization strategy

We propose a collaborative strategy to enhance the sample efficiency of SafeOpt-MC [1] by using information from external agents. This is achieved by incorporating observations from collaborator agents into the main agent's GP model during initialization. The key is to identify the collaborator whose behavior most closely correlates with the main agent by calculating the similarity between the main agent and the collaborators. However, since at initialization there is no direct data available from the main agent, we propose to use an adjacent optimization domain, as described next.

We define $\mathcal{X}_A$ as the main set of input parameters (e.g., electrical tilt) over which we optimize the main agent (e.g., an antenna). Then, we assume that there exists another domain, denoted $\mathcal{X}_B$, over a separate set of input parameters (e.g., horizontal beamwidth). Additionally, we assume that all agents, both the collaborators and the main agent, have estimated objective functions on this domain and that we have access to them. This assumption could easily be satisfied by starting a safe optimization on one parameter that we know is safer, as we demonstrate using safe BO. Alternatively, one could use the history of network configuration changes performed on the area being optimized.

Afterwards, we compute the Pearson correlation [12] between the data points of the collaborators and the main agent on domain $\mathcal{X}_B$ (e.g., horizontal beamwidth), formally:

$$\rho(x_{\mathcal{X}_B}, x'_{\mathcal{X}_B}) = \frac{\mathbb{E}[(x - \mu_x)(x' - \mu_{x'})]}{\sigma_x \sigma_{x'}}, \tag{8}$$

where $x, x' \in \mathcal{X}_B$ represent the main agent and a collaborator agent, and $(\mu_x, \sigma_x)$ and $(\mu_{x'}, \sigma_{x'})$ are their respective means and standard deviations. Next, the collaborator with the highest correlation is identified and, on the main input domain $\mathcal{X}_A$, we select the $k$ most correlated samples, by default $k = 10$, from its GP posterior distribution.
These samples are then used to initialize the GP model of the main agent for optimization.

Importantly, while the correlation is computed using $\mathcal{X}_B$, the transferred data originates from $\mathcal{X}_A$ under the assumption that pairwise similarity across collaborators in $\mathcal{X}_B$ reflects that in $\mathcal{X}_A$. In other words, two network areas that show strong correlation when being optimized for beamwidth will most likely be correlated for other parameters as well. This is shown to hold in our simulation experiments in Section VI-C.

B. The CoSBO algorithm

Algorithm 1 CoSBO

Input: Initial safe parameter $x_0 \in S_0 \subseteq \mathcal{X}_A$, GP priors $((\mu_0, \sigma_0, k), (\mu_i, \sigma_i, k_i))$, $\forall i \in \mathcal{I}_g$, GP posterior $\hat{f}_{\mathcal{X}_B}$, collaborator sets $C_{\mathcal{X}_A}$, $C_{\mathcal{X}_B}$

1. Initialize $\mathcal{GP} \leftarrow x_0, \hat{f}(x_0), \hat{g}_i(x_0)$, $\forall i \in \mathcal{I}_g$
2. Identify most correlated collaborator: $z_c = \rho(\hat{f}_{\mathcal{X}_B}, \hat{f}_{C_{\mathcal{X}_B}})$
3. Collaborator data: $(\hat{f}(x), \hat{g}_i(x)) \in C_{\mathcal{X}_A}$, $\forall i \in \mathcal{I}_g$
4. $D_k \leftarrow \{\text{top } k \text{ points from collaborator data}\}$
5. for $d = 1, \ldots, k$ do
6. Let $x_d \in D_k$
7. Update $\mathcal{GP} \leftarrow x_d, z_c, (\hat{f}(x_d), \hat{g}_i(x_d)) \in C_{\mathcal{X}_A}$, $\forall i \in \mathcal{I}_g$
8. end for
9. for $t = 1, \ldots$ do
10. Run SafeOpt-MC
11. end for

Algorithm 1 starts by fitting the GP models from a known safe initial parameter value $x_0$ (e.g., a tilt value) and its measurements $\hat{f}(x_0), \hat{g}_i(x_0)$ (e.g., throughput per user and number of users with acceptable RSRP). It then selects the most correlated collaborator using the Pearson correlation $\rho(\hat{f}_{\mathcal{X}_B}, \hat{f}_{C_{\mathcal{X}_B}})$ between the main agent's GP posterior and the collaborator agents from the set $C_{\mathcal{X}_B}$. After that, it takes the collaborator data from the main set $C_{\mathcal{X}_A}$ and identifies the $k$ most informative data points (the most correlated ones), which are stored in the set $D_k$. It then updates the GP model of the main agent's performance and safety functions with this high-quality prior information. The optimization process then proceeds with the SafeOpt-MC [1] algorithm.
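
The collaborator selection in steps 2 to 7 of Algorithm 1 can be sketched as follows. This is a hedged illustration, not the released implementation: the function names are hypothetical, each agent is assumed to be represented by its GP posterior mean on a shared grid over $\mathcal{X}_B$, and the rule that picks the $k$ transferred points is left to the caller.

```python
import numpy as np


def select_collaborator(main_mean_b, collaborator_means_b):
    """Return (index, correlation) of the most correlated collaborator.

    main_mean_b: posterior mean of the main agent on a grid over X_B.
    collaborator_means_b: array of shape (n_collaborators, n_grid),
        evaluated on the same grid (an assumption of this sketch).
    """
    correlations = np.array(
        [np.corrcoef(main_mean_b, c)[0, 1] for c in collaborator_means_b]
    )
    best = int(np.argmax(correlations))
    return best, float(correlations[best])


def build_initial_data(x0, y0, collab_x, collab_y, z_c, z_main=1.0):
    """Stack the safe seed and the transferred points as (x, z) inputs.

    The main agent's own measurement gets context z_main = 1, and the
    transferred points get the correlation coefficient z_c as context.
    """
    inputs = np.vstack([[x0, z_main]] + [[x, z_c] for x in collab_x])
    targets = np.concatenate([[y0], collab_y])
    return inputs, targets


# Hypothetical usage with synthetic posterior means on X_B.
grid = np.linspace(0.0, 1.0, 50)
main = np.sin(3.0 * grid)
collaborators = np.stack([
    np.sin(3.0 * grid) + 0.05 * grid,  # similar area
    np.cos(3.0 * grid),                # weakly related area
    -np.sin(3.0 * grid),               # anti-correlated area
])
best, z_c = select_collaborator(main, collaborators)
inputs, targets = build_initial_data(
    x0=0.2, y0=0.5, collab_x=[0.1, 0.4], collab_y=[0.3, 0.6], z_c=z_c
)
```

The `inputs` array pairs each parameter value with a context value, which is one simple way to feed both quantities to a GP defined over $\mathcal{X} \times \mathcal{Z}$.
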

The visualization in Fig. 2 represents the optimization process of the horizontal beamwidth parameter of an antenna that aims to maximize the number of users with good coverage and low interference (performance function $f$) while ensuring a minimum number of users with acceptable RSRP (safety constraint $g$). The algorithm starts with the estimation of both the performance and safety functions based on the transferred data from a collaborator (orange crosses). With the GP (light blue curve) and the confidence intervals (light blue areas), the algorithm is able to determine current observations and nearby parameter points as safe (green set). At each iteration, an evaluation (red cross) of the real objective function (gray curve) is done and added to the GP posterior. The selected point to evaluate belongs to the safe set and is chosen because it either expands this safe set or maximizes the performance. The safety threshold (gray dashed line) indicates that the points below it are unsafe. In this example, the addition of collaborative data has created three separate safe regions, and the algorithm takes advantage of this to explore all of them; by increasing the safe set of points, we improve the sample efficiency of the process.

Fig. 2. Optimization process of the horizontal beamwidth of an antenna using the CoSBO algorithm to maximize the performance function (gray curve on the top) while satisfying the safety constraint (gray curve on the bottom). The visualization shows the progress of the estimated functions (light blue curves) at the first, fifth, and tenth iterations. The algorithm starts with collaborator data (orange crosses) that is added as observed data (black crosses). Then, based on the GP posterior and its confidence intervals (light blue areas), it iteratively selects and evaluates new data (red crosses) that are above the safety threshold (gray dashed line) and within the current safe set (green set). The input parameter region is truncated on the plot.

The data points obtained from the optimization process are evaluations of the real objective function; therefore, the GP models should have more confidence in those points than in the ones transferred from the collaborator. This is formally defined by extending the performance function to incorporate a context variable, $f : \mathcal{X} \times \mathcal{Z} \to \mathbb{R}$. Therefore, for each input $x \in \mathcal{X}$ the GP model is augmented with an associated context value $z \in \mathcal{Z}$ and measurements are of the form $\hat{f}(x, z)$ [13]. On the one hand, the context value associated with the collaborator data is the correlation coefficient between the main agent and the collaborator, formally, $\rho(x_{\mathcal{X}_B}, x'_{\mathcal{X}_B}) = z_c \in \mathcal{Z}$. On the other hand, the value associated with the measurements from the main agent is $z_m = 1$, the maximum value of the Pearson correlation coefficient [12]. Hence, the transferred data is of the form $(x, z_c)$ and is assigned a higher uncertainty than the data evaluated from the real objective function, $(x, z_m)$.

Quantifying the context is essential to prevent low-quality collaborators from degrading performance, since all transferred samples are assigned lower confidence than evaluations obtained directly from the main agent. Consequently, if a collaborator has misleading correlations, its influence decreases rapidly as real evaluations are accumulated. In the worst-case scenario, where none of the transferred data points contribute useful information for identifying the objective function, the effective optimization process begins once the main agent's own evaluations are incorporated. A safeguard mechanism against misleading correlations is proposed in Section VII.

C. The SafeOpt-MC algorithm

This algorithm guarantees safety and balances exploration and exploitation; that is, it iteratively selects points that can either expand the safe set of parameters (e.g., explore a region of the network with possibly poorer coverage) or maximize the objective function (e.g., maximize received signal quality). To ensure safety, the algorithm defines confidence bounds around the GP posterior of both $f$ and $g_i$ with its predictive posterior mean $\mu_t(x)$ and variance $\sigma_t^2(x)$, which contain the true functions $f$ and $g_i$ with high probability [1]:

$$Q_t(x) := \left[\mu_{t-1}(x) \pm \beta_t^{1/2} \sigma_{t-1}(x)\right], \tag{9}$$

where the hyperparameter $\beta$ controls the desired confidence level. These confidence intervals are used to compute safe regions and guide the selection of the next query point to evaluate. A point is considered safe if its lower confidence bound $l(x)$ remains above the safety threshold $h$. The set of safe points is defined as the safe region:

$$S_t = \bigcap_{i \in \mathcal{I}_g} \bigcup_{x \in S_{t-1}} \{ x' \in \mathcal{X} \mid l_t^i(x) \geq h \}, \tag{10}$$

which contains all points in the set $S_{t-1}$ [1].

To trade off between exploration and exploitation, the algorithm identifies two subsets of the safe set at every iteration $t$: the set of potential maximizers ($M$), which could improve the estimate of the maximum, and the set of potential expanders ($E$), which could expand the safe region. The maximizers are the safe points whose upper confidence bound $u(x)$ is higher than the largest lower confidence bound $l(x)$ of the performance function $f$. The expanders are the safe points that would produce a posterior distribution with a larger safe set if they were added to the GP of the safety functions $g_i$. These points are the most uncertain, providing the most information and naturally lying near the boundary of the safe region [9]. These sets were originally defined by Sui et al. [3].
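
The confidence bounds of Eq. (9), the safe set, and the maximizer set can be sketched on a discretized parameter grid as below. This is a simplified illustration with hypothetical function names: it classifies each grid point from its own lower bound and keeps previously safe points, and it omits the expander set, which requires simulating GP updates.

```python
import numpy as np


def confidence_bounds(mean, std, beta=2.0):
    """Lower and upper bounds of Q_t(x) = mu(x) +/- sqrt(beta) * sigma(x)."""
    half_width = np.sqrt(beta) * std
    return mean - half_width, mean + half_width


def safe_mask(lower_g, threshold, previous_safe):
    """Grid points whose lower bound clears the threshold for every constraint.

    lower_g has shape (q, n_points). Points that were already safe stay safe,
    so the safe set never shrinks.
    """
    return previous_safe | np.all(lower_g >= threshold, axis=0)


def maximizer_mask(lower_f, upper_f, safe):
    """Safe points whose upper bound reaches the best safe lower bound of f."""
    best_lower = np.max(lower_f[safe])
    return safe & (upper_f >= best_lower)


# Hypothetical posterior on a four-point grid with one safety constraint.
mean_f = np.array([0.2, 0.5, 0.9, 0.4])
std_f = np.array([0.05, 0.05, 0.3, 0.05])
mean_g = np.array([[0.6, 0.5, 0.3, 0.45]])
std_g = np.array([[0.02, 0.02, 0.2, 0.02]])

lower_f, upper_f = confidence_bounds(mean_f, std_f)
lower_g, _ = confidence_bounds(mean_g, std_g)
safe = safe_mask(lower_g, threshold=0.4,
                 previous_safe=np.array([True, False, False, False]))
maximizers = maximizer_mask(lower_f, upper_f, safe)
```

In this toy example the third grid point has the highest predicted performance, but its safety estimate is too uncertain, so it is excluded from both the safe set and the maximizers.
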

Every time a new point is added to the GP model, the confidence bounds $Q$, the safe set $S$, the maximizers set $M$ and the expanders set $E$ are updated. This new point $x_t$ is the one with the highest predictive uncertainty, thereby balancing safe exploration and performance improvement:

$$x_t = \arg\max_{x \in E_t \cup M_t} \max_{i \in \mathcal{I}} w_t(x, i), \tag{11}$$

$$w_t(x, i) = u_t^i(x) - l_t^i(x). \tag{12}$$

This iterative process of expanding the safe set of points, exploiting promising regions, and exploring the most uncertain ones continues until no new safe points can be identified or no potential improvement is possible.

VI. EXPERIMENTS

To assess the performance of CoSBO, we conduct experiments on a set of simulated mobile network scenarios and compare our algorithm against SafeOpt-MC [1]. In particular, we aim to answer the following questions: Does CoSBO improve sample efficiency? Is using data from a poorly correlated collaborator still more sample efficient than no collaboration at all?

A. Simulation Environment

The experiments were carried out using a proprietary system-level simulator that simulates realistic behavior of antennas and base stations by modeling the signal propagation [14] and using 3GPP models [15] for NR urban macro scenarios. We constructed five artificial maps to emulate the topology of a city based on a default square layout and placed seven to twelve base stations according to a Poisson point process, providing spatial randomness in their distribution, with a minimum intersite distance. An example of these maps can be seen in Fig. 3.

Fig. 3. Two of the five simulator-generated topological maps for traffic volumes $15 \times 10^7$ bit s$^{-1}$. The mobile network comprises the base stations (macro, red stars), the users (green dots), the obstacles or indoor areas (white squares), and the outdoor areas (black squares). The indoor-outdoor probability is 0.5.
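
As a rough illustration of the site placement described above, the sketch below draws candidate locations from a homogeneous Poisson point process on a square map and greedily discards candidates that violate the minimum intersite distance. The thinning rule and the function name are assumptions, since the simulator is proprietary. The edge length and minimum distance follow Table I, while the intensity is an illustrative value chosen so that the sketch yields a handful of sites; it is not the Table I entry.

```python
import numpy as np


def place_base_stations(edge_m=2000.0, intensity_per_m2=4e-6,
                        min_distance_m=400.0, seed=0):
    """Sample site positions on a square map with a minimum spacing."""
    rng = np.random.default_rng(seed)

    # Number of candidates is Poisson with mean intensity * area.
    n_candidates = rng.poisson(intensity_per_m2 * edge_m**2)
    candidates = rng.uniform(0.0, edge_m, size=(n_candidates, 2))

    # Greedy thinning: keep a candidate only if it is far enough
    # from every site that has already been kept.
    kept = []
    for point in candidates:
        if all(np.linalg.norm(point - other) >= min_distance_m
               for other in kept):
            kept.append(point)
    return np.array(kept).reshape(-1, 2)


# Hypothetical usage: one random map layout.
sites = place_base_stations(seed=1)
```

Changing the seed produces a different layout, which mirrors how several maps with distinct topologies can be generated from the same settings.
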

In addition to the topological variability of the different maps, we incorporated traffic load variations, which represent the amount of data moving across a network. We defined three load levels: low, medium and high, corresponding to utilization percentages of 5-10 %, 30 % and 60-80 %, and total traffic volumes of $5 \times 10^7$ bit s$^{-1}$, $15 \times 10^7$ bit s$^{-1}$ and $25 \times 10^7$ bit s$^{-1}$, respectively. The traffic volume is spread uniformly across uniformly distributed users in the map. All these factors influence the performance and safety functions, producing unique network optimization problems for every scenario. With 5 maps and 3 load levels we have a total of 15 different network scenarios. The simulated data is available in our code repository.

The optimization task was conducted over two parameters of the antennas: the horizontal beamwidth and the electrical tilt. These parameters were modeled using an urban macro propagation model with a frequency of 2 GHz using the standard reference of 3GPP TR 38.921 [15]. They influence the radiation pattern of the antenna and directly impact user experience metrics. The beamwidth determines the angular spread of the antenna's main lobe in the horizontal plane: narrower beamwidths result in poorer signal coverage at the boundary of the cell sector (beam distortion is more likely to occur), while wider ones result in good signal coverage. The tilt controls the vertical orientation of the antenna: narrower tilts result in more focused coverage areas, which can increase signal strength but may cause some users to lose coverage, while wider tilts ensure no user loses coverage but also introduce interference if not properly aligned with the rest of the antennas.

B. Hyperparameter configuration

The hyperparameter configuration we used to set up the topological map is described in Table I.
In addition, we used a separate GP regression model to estimate each of the performance and safety functions. These models also have tunable hyperparameters, including kernel function parameters, noise variance, and constants specific to the algorithms CoSBO and SafeOpt-MC [1], all of which are described in Table II.

TABLE I. Topological map parameters.

| Group | Parameter | Value |
| --- | --- | --- |
| Square Map | Edge length | 2000 m |
| Square Map | Number of bins | 5000 |
| Poisson Distribution | $\lambda$ (m$^{-2}$) | $4 \times 10^{6}$ |
| Poisson Distribution | Min. intersite distance | 400 m |
| Deployment | Number of users | 1000 |
| Deployment | Sectors per site | 3 |

TABLE II. GP models and CoSBO parameters.

| Kernel $\sigma^2$ | $\ell$ | $f$ noise $\sigma^2$ | $g$ noise $\sigma^2$ | $\beta$ | Threshold $h$ | $k$ |
| --- | --- | --- | --- | --- | --- | --- |
| 0.5 | 1 | $1 \times 10^{-4}$ | $1 \times 10^{-5}$ | 2 | 0.4 | 10 |

We tune the kernel variance and the lengthscale; the latter controls the smoothness of the function, that is, how quickly its output values are expected to change. The chosen value for the lengthscale is $\ell = 1$, as it provides a good balance of smoothness and is a standard choice in the literature. The noise variance is assigned different values for the performance and safety functions to rely more confidently on safety estimates while accounting for potential variability in performance measurements. In the SafeOpt-MC algorithm we use the exploration constant $\beta$, which balances exploration and exploitation while preserving safety; we choose the value $\beta = 2$ since higher values focus on exploring the parameter space instead of exploiting it, while lower values focus on maximization and get stuck in local optima. In the CoSBO algorithm we define the safety threshold $h$, which determines the safe regions that can be explored. Since the safety constraint corresponds to the number of users with a signal quality level (RSRP) higher than $-130$ dB (Eq. (7)), the threshold $h$ is set to the 50th percentile of users that must have coverage.

Moreover, we scaled all the experimental scenarios, since small differences in the function values can greatly affect the correlation coefficient, and values outside the range of the GP prior distribution might be less likely to be observed, leading to inaccurate predictions. We applied Min-Max scaling to all the scenarios using scenario-specific minimum and maximum values to normalize all function outputs to the $[0, 1]$ interval. This pre-processing step was possible because the functions were computed from simulations, allowing the true minimum and maximum values to be known beforehand. If those were not known, estimates based on collaborator data or domain-specific knowledge could be used. In practice, historical performance management counters from a live network could be used to perform this normalization step.

Fig. 4. Optimization method comparison for tilt and beamwidth using high-quality collaborators (on the left) and low-quality ones (on the right). Curves correspond to the optimum obtained at each iteration of the process for a budget of 60 iterations. The shaded areas represent the 25 %-75 % inter-quartile range of values obtained over the 15 simulated networks times the 10 different starting points. The CoSBO method is displayed in blue, SafeOpt-MC in orange, and an unsafe random exploration method in violet red.

C. Fast online optimization in mobile networks

We measure the sample efficiency of the methods in terms of the highest average value of the objective function found at each iteration in all scenarios of each input parameter, represented in each curve of Fig. 4. The experiments were repeated 10 times with different safe initial starting points randomly selected within a safe range. Our algorithm is represented by a blue curve, the baseline algorithm, SafeOpt-MC [1], by an orange curve, and an unsafe random exploration process by a violet red curve.
The latter is a method that searches for the optimum by randomly exploring the entire parameter space. Since it considers neither a safety constraint nor a safe region of parameters, it randomly chooses any point from the entire parameter space and rapidly converges towards the true optimal value. Knowing that its convergence is faster than that of the safe optimization algorithms, we use it as a reference for the other two algorithms. We separate the results by the network parameter being tuned because their performance function values are too different to be plotted jointly. We display the curves with confidence intervals to represent the complete range of values, which are computed over the 15 simulated networks.

In the upper left plot of Fig. 4, CoSBO (blue curve) reaches the asymptotic optimal value in fewer iterations than SafeOpt-MC (orange curve); this is attributed to the additional data received from the collaborator in the early stages of optimization. In particular, the optimal value of the tilt parameter is reached about seven iterations earlier with the CoSBO algorithm than with SafeOpt-MC. In a live mobile network deployment this can translate into an advantage of seven days in the optimization process, since the performance function is often measured using daily aggregates of network performance counters [2]. In the bottom left plot of Fig. 4, both algorithms (blue and orange curves) overlap notably within their confidence intervals, meaning that they obtain similar optimal values at each iteration step; still, on average, CoSBO obtains higher optimal values during the initial stage of the process. Therefore, the optimization results using the most correlated collaborator (i.e., high-quality) demonstrate that CoSBO is more sample efficient than SafeOpt-MC.
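The curves and shaded bands discussed above can be computed with a few lines of NumPy. The following is a minimal sketch, assuming that each curve is the running maximum of the observed objective, averaged over runs, and that the band is the 25 %-75 % inter-quartile range stated in the caption of Fig. 4. The function names `best_so_far` and `summarize_runs` are hypothetical, and the data is a synthetic stand-in for the 15 networks times 10 starting points; none of this is taken from the authors' code.

```python
import numpy as np


def best_so_far(values):
    """Running maximum of the objective along the iteration axis (last axis)."""
    return np.maximum.accumulate(np.asarray(values, dtype=float), axis=-1)


def summarize_runs(values):
    """Summarize runs of shape (n_runs, n_iterations).

    Returns the mean best-so-far curve and the 25th and 75th percentiles
    per iteration, computed across runs.
    """
    curves = best_so_far(values)
    mean = curves.mean(axis=0)
    q25, q75 = np.percentile(curves, [25, 75], axis=0)
    return mean, q25, q75


# Synthetic stand-in (assumption): 15 networks x 10 starting points = 150 runs,
# each with a budget of 60 iterations and outputs scaled to [0, 1].
rng = np.random.default_rng(0)
observed = rng.uniform(0.0, 1.0, size=(150, 60))
mean, q25, q75 = summarize_runs(observed)
```

Because each per-run curve is a running maximum, the averaged curve is non-decreasing, which matches the shape expected of a convergence plot.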
Furthermore, our method converges with fewer evaluations compared to RL-based approaches in a similar simulation environment. Mendo et al. [2] (Figures 6 and 7) rely on the same simulation environment and report that their pre-trained agent requires at least 10 steps to fine-tune to a new network scenario on the tilt optimization problem. Our approach achieves this in fewer steps without the need for pre-training. A comprehensive comparison of BO and RL would significantly widen the scope of this paper. Generally, GP-based optimization may scale less well than RL in terms of space complexity, but it requires orders of magnitude fewer samples. We believe the overall trade-off strongly favors BO for the problem at hand. Exploring hybrid RL-BO strategies is an interesting future direction.

We further evaluate the robustness of our collaborative strategy by testing it with lower-quality collaborators, i.e., the least correlated ones. The correlation coefficients of these collaborators are, on average, 0.39 for the beamwidth parameter functions and 0.15 for the tilt parameter functions across the 15 scenarios. These are low values, considering that the maximum Pearson coefficient is 1 and a coefficient of 0 indicates no correlation [12]. In the plots on the right side of Fig. 4, the two compared algorithms perform similarly for both optimized parameters, as indicated by the considerable overlap of their confidence intervals and their convergence to the asymptotic optimal value in a similar number of iterations. These plots show that when the chosen collaborator is poorly correlated, the algorithm gains little information. However, the performance of our algorithm does not worsen; in fact, it achieves optimal values comparable to SafeOpt-MC [1]. Thus, even under limited collaboration, our algorithm remains as sample-efficient as SafeOpt-MC.
This results from the use of the context variable, computed with the correlation coefficient of the selected collaborator, as discussed in Section V-B. Furthermore, our collaborative strategy relies on the assumption that pairwise similarity across collaborators in domain $X_B$ reflects that in the main domain $X_A$. The fact that the CoSBO algorithm achieves a higher sample efficiency with high-quality collaborators than with low-quality ones supports this assumption.

VII. CONCLUSIONS AND FUTURE WORK

We presented a collaborative extension of the safe Bayesian optimization framework that enhances sample efficiency by leveraging observations collected from other agents deployed in similar, but not identical, environments. The method accelerates the identification of safe and high-performing regions in the parameter space by integrating external data at early stages of the optimization process, i.e., directly into the Gaussian process model. We demonstrated how to model mobile network optimization as a safe Bayesian optimization problem and how to apply SafeOpt-MC [1]. Through realistic simulations, we demonstrated that our proposed collaborative strategy improves sample efficiency when using high-quality collaborators. In addition, we showed that our method achieves efficiency comparable to SafeOpt-MC even with low-quality collaborators. CoSBO is particularly effective during the early stages of optimization and is recommended in scenarios where safe exploration is essential and the available evaluation budget is limited or evaluations are complex to obtain. Finally, although our experiments rely on simulations, the proposed method could be implemented in a real network within the O-RAN architecture, running as an rApp in a centralized non-real-time Radio Intelligent Controller (RIC) [16].
Future work includes exploring estimation-based approaches to improve collaborator selection and looking into uncertainty-aware correlation metrics. Extending the telecommunications application to multiple safety constraints, which could influence the selection of collaborators, is a straightforward extension, as is expanding the set of parameters to be tuned, which we refrained from doing in order to focus the analysis on the collaborative aspect. A further addition would be a safeguard that requires a minimum Pearson correlation value before a collaborator is used. Another avenue of future work could be to combine the collaborative safe exploration strategy proposed in this work with reinforcement learning based methods.

REFERENCES

[1] F. Berkenkamp, A. Krause, and A. P. Schoellig, “Bayesian optimization with safety constraints: Safe and automatic parameter tuning in robotics,” Machine Learning, vol. 112, no. 10, pp. 3713–3747, 2023.
[2] A. Mendo, J. Outes-Carnero, Y. Ng-Molina, and J. Ramiro-Moreno, “Multi-agent reinforcement learning with common policy for antenna tilt optimization,” arXiv preprint arXiv:2302.12899, 2023.
[3] Y. Sui, A. Gotovos, J. Burdick, and A. Krause, “Safe exploration for optimization with Gaussian processes,” in International Conference on Machine Learning, 2015.
[4] L. Maggi, A. Valcarce, and J. Hoydis, “Bayesian optimization for radio resource management: Open loop power control,” IEEE Journal on Selected Areas in Communications, vol. 39, no. 7, pp. 1858–1871, 2021.
[5] J. Lübsen, C. Hespe, and A. Eichler, “Towards safe multi-task Bayesian optimization,” in 6th Annual Learning for Dynamics & Control Conference, 2024.
[6] Q. Chen, L. Jiang, H. Qin, and R. A. Kontar, “Multi-agent collaborative Bayesian optimization via constrained Gaussian processes,” Technometrics, pp. 1–23, 2024.
[7] Z. Zhu, E. Bıyık, and D. Sadigh, “Multi-agent safe planning with Gaussian processes,” in 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2020.
[8] A. P. Vinod, S. Safaoui, A. Chakrabarty, R. Quirynen, N. Yoshikawa, and S. Di Cairano, “Safe multi-agent motion planning via filtered reinforcement learning,” in 2022 International Conference on Robotics and Automation (ICRA), 2022.
[9] M. J. Kochenderfer and T. A. Wheeler, Algorithms for Optimization. The MIT Press, Cambridge, 2019.
[10] C. K. Williams and C. E. Rasmussen, Gaussian Processes for Machine Learning. MIT Press, Cambridge, MA, 2006, vol. 2.
[11] H. Farooq, A. Imran, and M. Jaber, “AI empowered smart user association in LTE relays HetNets,” in 2019 IEEE International Conference on Communications Workshops (ICC Workshops), 2019.
[12] D. Navarro and D. Foxcroft, Learning Statistics with jamovi: A Tutorial for Beginners in Statistical Analysis. Jan. 2025, ISBN: 978-1-80064-937-8.
[13] A. Krause and C. S. Ong, “Contextual Gaussian process bandit optimization,” in Advances in Neural Information Processing Systems 24: 25th Annual Conference on Neural Information Processing Systems 2011. Proceedings of a meeting held 12-14 December 2011, Granada, Spain, 2011.
[14] H. Asplund, M. Johansson, M. Lundevall, and N. Jaldén, “A set of propagation models for site-specific predictions,” in 12th European Conference on Antennas and Propagation (EuCAP 2018), 2018.
[15] 3GPP, “5G; Study on International Mobile Telecommunications (IMT) parameters for 6.425 - 7.025 GHz, 7.025 - 7.125 GHz and 10.0 - 10.5 GHz (Release 17),” 3GPP, Tech. Rep. 3GPP TR 38.921 version 17.1.0 (2022-05), 2022.
[16] O-RAN Alliance, “O-RAN architecture description,” O-RAN Alliance, Tech. Rep. O-RAN.WG1.TS.OAD-R004-v15.00, 2025.

Fig. 5.
Optimization method comparison for tilt and beamwidth using high-quality collaborators and a GP model with lengthscale value 4. Curves correspond to the optimum obtained at each iteration of the process for a budget of 60 iterations. The shaded areas represent the 25 % - 75 % inter-quartile range of values obtained over the 15 simulated networks times the 10 different starting points. The CoSBO method is displayed in blue, SafeOpt-MC in orange, and an unsafe random exploration method in violet red.

APPENDIX

To further understand the behavior of both safe algorithms, CoSBO and SafeOpt-MC [1], we conducted additional experiments by modifying a key GP hyperparameter: the kernel lengthscale $\ell$, which influences the smoothness of the estimated functions $\hat{f}$, $\hat{g}$. We observe the effect of a larger lengthscale value ($\ell = 4$) compared to the standard configuration used previously ($\ell = 1$). The smoothness of the GP posterior increases with the lengthscale, which also affects the width of the confidence intervals used to guide the exploration [10]. Smoother models produce narrower intervals near the observations, encouraging the selection of points farther from the known data. This leads to increased exploration, but also raises the risk of selecting unsafe points due to the wider safe region.

Figure 6 presents additional results for the optimization of the horizontal beamwidth parameter, similar to those in Fig. 2 of the main text, but using the same topological map under a different traffic volume. The plot illustrates an increase in smoothness in the estimated function.

Fig. 6. Optimization process of the horizontal beamwidth of an antenna using the CoSBO algorithm to maximize the performance function (gray curve on the top) while satisfying the safety constraint (gray curve on the bottom). The visualization shows the progress of the estimated functions (light blue curves) at the first, fifth, and tenth iterations using a smooth GP model with lengthscale 4. The algorithm starts with collaborator data (orange crosses) that is added as observed data (black crosses). Then, based on the GP posterior and its confidence intervals (light blue areas), it iteratively selects and evaluates new data (red crosses) that are above the safety threshold (gray dashed line) and within the current safe set (green set).

Analogously to Fig. 4, in Fig. 5 we measure the sample efficiency of the methods using a GP model with lengthscale 4. The results indicate that smoother GPs better capture the tilt parameter functions (top), which are inherently smooth, while slightly decreasing the performance on the beamwidth functions (bottom), which are highly variable. For the beamwidth optimization problem, we can see that our method still provides significant improvements in sample efficiency.
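The effect of the lengthscale on the confidence intervals can be illustrated numerically. The sketch below assumes a squared-exponential kernel (the kernel family is an assumption made here for illustration) with the variance 0.5 and noise variance $1 \times 10^{-4}$ listed in Table II, and compares the GP posterior standard deviation for $\ell = 1$ and $\ell = 4$ at query points away from two observations. The helper names `rbf_kernel` and `gp_posterior_std` and the input locations are hypothetical.

```python
import numpy as np


def rbf_kernel(a, b, variance, lengthscale):
    """Squared-exponential kernel between two 1-D arrays of inputs."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / lengthscale**2)


def gp_posterior_std(x_obs, x_query, variance=0.5, lengthscale=1.0, noise=1e-4):
    """Posterior standard deviation of a zero-mean GP at x_query.

    Uses var(x) = k(x, x) - k_s(x)^T (K + noise * I)^{-1} k_s(x),
    which does not depend on the observed function values.
    """
    K = rbf_kernel(x_obs, x_obs, variance, lengthscale) + noise * np.eye(len(x_obs))
    K_s = rbf_kernel(x_obs, x_query, variance, lengthscale)
    solved = np.linalg.solve(K, K_s)
    var = variance - np.sum(K_s * solved, axis=0)
    return np.sqrt(np.clip(var, 0.0, None))


# Two observations and three query points at increasing distance from them.
x_obs = np.array([0.0, 1.0])
x_query = np.array([2.0, 3.0, 4.0])
std_l1 = gp_posterior_std(x_obs, x_query, lengthscale=1.0)
std_l4 = gp_posterior_std(x_obs, x_query, lengthscale=4.0)
```

In this toy setting the posterior standard deviation with $\ell = 4$ is smaller than with $\ell = 1$ at every query point, so a confidence-bound safety test would certify points farther from the data, which is consistent with the wider safe region described above.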
