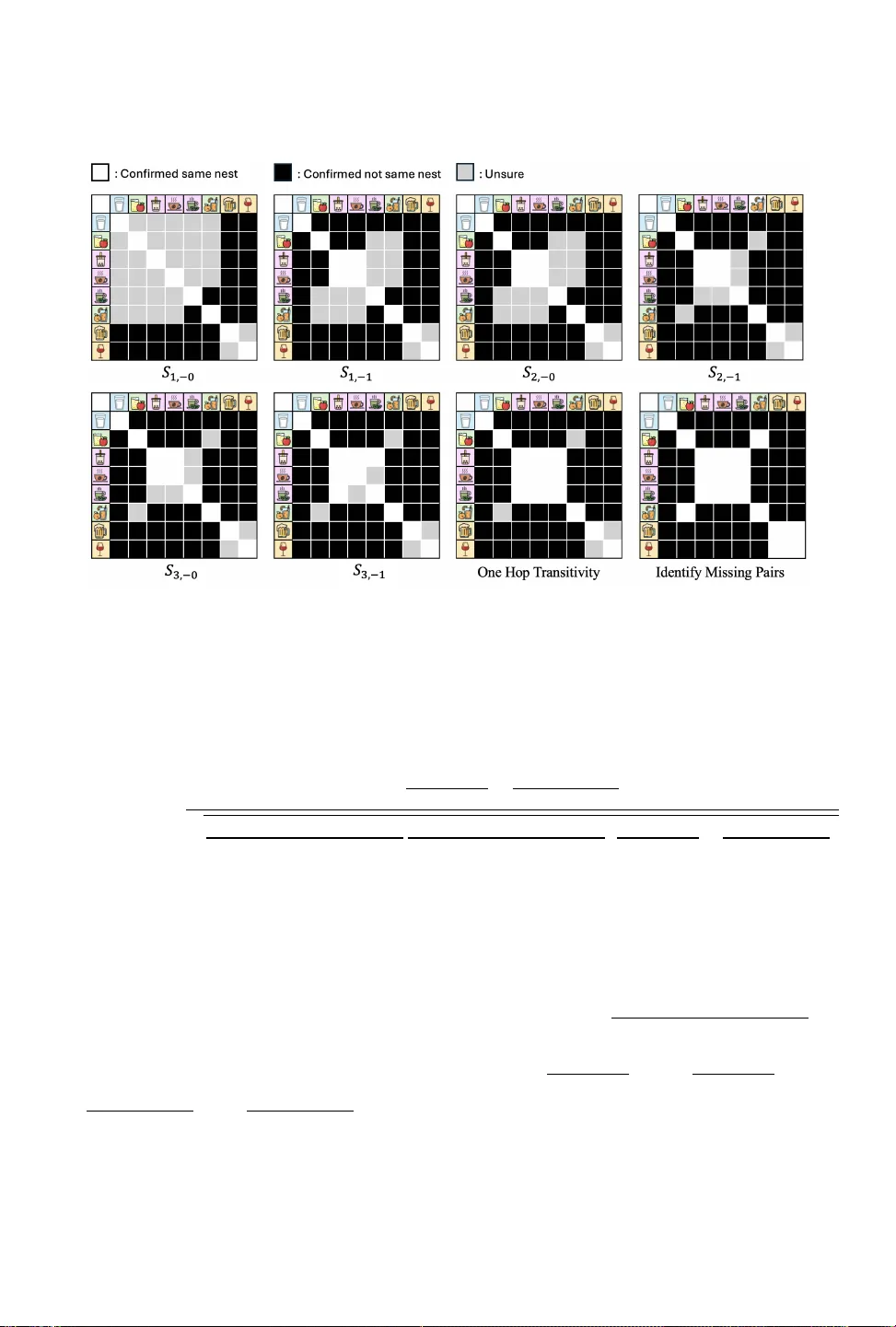

Experimental Assortments for Choice Estimation and Nest Identification

What assortments (subsets of items) should be offered to collect data for estimating a choice model over $n$ total items? We propose a structured, non-adaptive experiment design requiring only $O(\log n)$ distinct assortments, each offered repeatedl…

Authors: Refer to original PDF