Empirical Cumulative Distribution Function Clustering for LLM-based Agent System Analysis

Large language models (LLMs) are increasingly used as agents to solve complex tasks such as question answering (QA), scientific debate, and software development. A standard evaluation procedure aggregates multiple responses from LLM agents into a sin…

Authors: ** (논문에 명시된 저자 정보가 제공되지 않아 정확히 기재할 수 없습니다. 일반적으로 “저자명1, 저자명2, …” 형태로 기재됩니다.) **

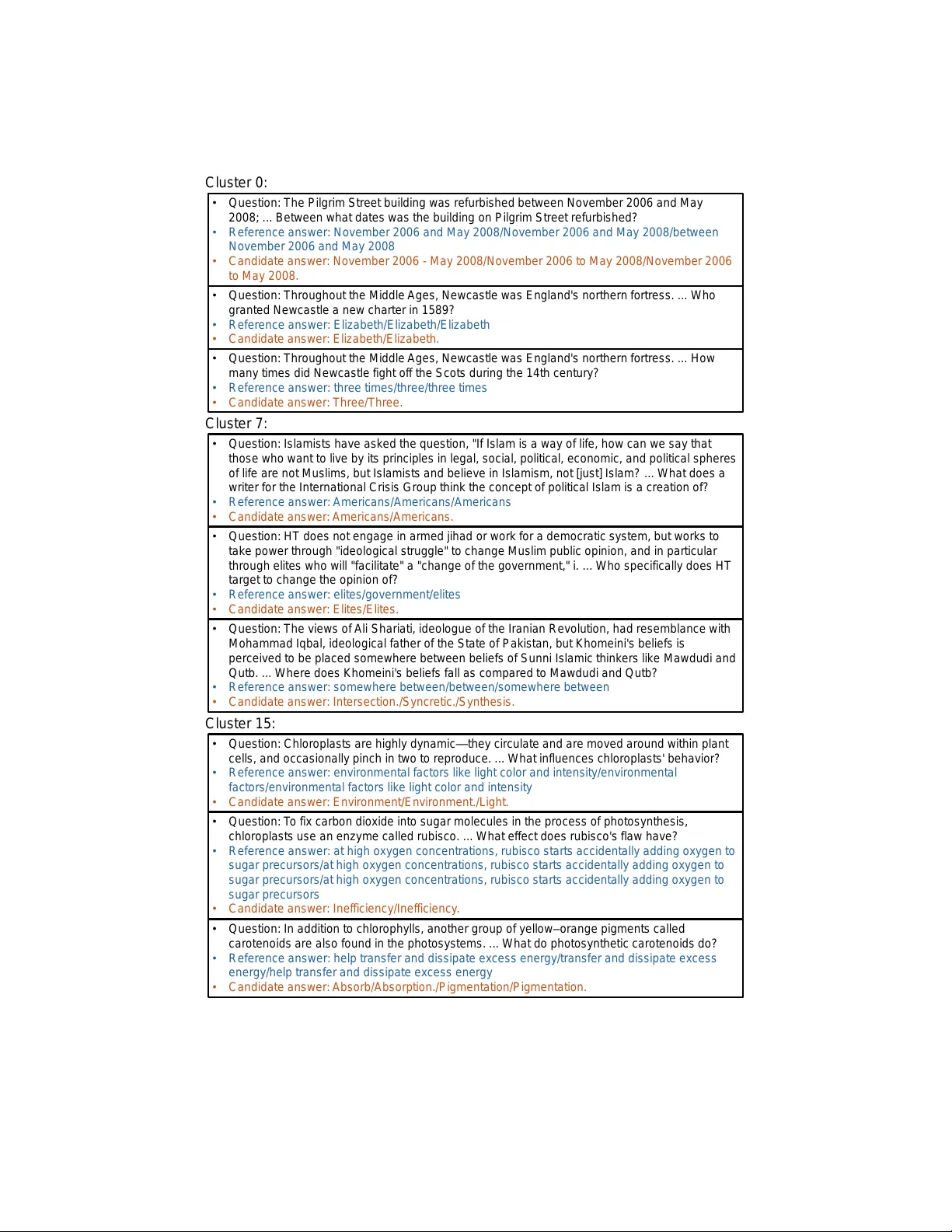

Empirical Cum ulativ e Distribution F unction Clustering for LLM-based Agen t System Analysis Chihiro W atanab e 1 and Jingyu Sun 1 1 NTT Computer and Data Science Lab oratories , 3-9-11, Midori-c ho, Musashino-shi, T oky o, Japan ∗ Abstract Large language mo dels (LLMs) are increasingly used as agents to solve complex tasks such as question answ ering (QA), scientific debate, and soft ware dev elopment. A standard ev aluation pro cedure aggregates m ultiple resp onses from LLM agen ts into a single final answer, often via ma jority v oting, and compares it against reference answ ers. Ho wev er, this pro cess can obscure the qualit y and distributional c haracteristics of the original resp onses. In this pap er, we propose a nov el ev aluation framework based on the empirical cumulativ e distribution function (ECDF) of cosine similarities b etw een generated resp onses and reference answers. This enables a more n uanced assessment of resp onse quality beyond exact match metrics. T o analyze the resp onse distributions across differen t agen t configurations, w e further in tro duce a clustering metho d for ECDF s using their distances and the k -medoids algorithm. Our exp eriments on a QA dataset demonstrate that ECDF s can distinguish b etw een agen t settings with similar final accuracies but differen t quality distributions. The clustering analysis also reveals interpretable group structures in the resp onses, offering insigh ts into the impact of temperature, p ersona, and question topics. 1 In tro duction F ollowing the success of large language mo dels (LLMs), v arious agent-based approaches [1] hav e b een prop osed to tackle tasks such as scientific debate [2, 3] and softw are developmen t [4–6]. In particular, this pap er fo cuses on the task of question answ ering (QA), where eac h question generally has multiple correct answers. Such QA datasets range from multiple-c hoice questions to test kno wledge of mo dels (e.g., CommonsenseQA [7] and SW A G [8] datasets) to questions requiring complex reasoning to answ er (e.g., StrategyQA [9] and GSM8K [10] datasets), and are commonly used to measure the task p erformance of LLM-based agent systems. A t ypical ev aluation pip eline for LLM-based agent systems inv olves generating multiple resp onses p er question under a given configuration, then selecting a final answ er through decision proto cols suc h as ma jority v oting [11–13]. In suc h cases, a basic metho d for comparing multiple settings is to ev aluate the consistency b etw een the final answ ers obtained under each setting and the correct ones. How ever, such an ev aluation criterion alone cannot reveal the tendencies of individual original resp onses generated by the LLM-based agent. Ev en if the final answers are the same in a pair of settings, the quality of the original resp onses ma y differ. F or example, when applying ma jorit y v oting to 2 n ` 1 resp onses, w e cannot distinguish b etw een the case where all 2 n ` 1 resp onses are correct and the case where only n ` 1 resp onses are correct. F urthermore, the “go o dness” of incorrect resp onses ma y differ among the original resp onses. F or instance, for the question “What is the highest mountain in Japan?”, the answ er “Y ari-ga-take” can b e considered closer to the correct answ er than the answer ∗ ch.w atanab e@ntt.com 1 Re formatt ed QA dat as et Se tt in g Que stion Re fere nce a n sw e r li st Se tt in g Que stion Re fere nce a n sw e r li st Se tt in g Que stion Re fere nce a n sw e r li st E CD F list Dis tance matrix Ca nd id ate a n sw e r li st Ca nd id ate a n sw e r li st Ca nd id ate a n sw e r li st - medoids clus tering M e d o id s Figure 1: The prop osed framework of ECDF clustering for LLM-based agent system analysis. “computer,” even though b oth are incorrect answers. How ever, simply measuring the p ercen tage of exact matches with the correct answer do es not allow us to distinguish b etw een the quality of these t wo answers. T o solve these problems and obtain more detailed information ab out the quality of resp onses given b y LLM-based agen ts to each question, we prop ose to ev aluate a giv en set of resp onses based on the empirical cumulativ e distribution function (ECDF) of their cosine similarities to the correct answ ers, as shown in Figure 1. Using ECDF s to ev aluate the responses has t wo adv antages. First, unlik e histograms, for which the bin width needs to be determined, there is no need to set an y hyperparameters to construct an ECDF. Moreov er, by representing the sets of resp onses as ECDF s, we can make direct comparison across resp onse sets of v arying lengths. One concern with using ECDF s is that there are v arious types of configurations in LLM-based agen t systems, and it is difficult to grasp the ov erview of the large num b er of ECDF s corresp onding to differen t settings. Therefore, we prop ose a metho d to apply clustering to ECDF s, which allows us to estimate the group s tructure of ECDF s that are similar to eac h other. Since ECDF s are different from the typical vector format samples (although a set of cosine similarities for defining an ECDF can b e represen ted as a vector), we propose a new clustering metho d for ECDF s, each of whic h corresp onds to a set of LLM-based agen ts’ resp onses in a given setting. Applying clustering to ECDF s has also b een prop osed in the existing study [14] aimed at net work traffic anomaly detection, how ever, their metho d differs from ours in that the ECDF s are first discretized and con verted into vector format samples and then cluste red using k -means algorithm. The subsequent part of this paper is organized as follows. In Section 2, we review the existing studies on LLM-based agent systems. Next, in Section 3, we prop ose an ECDF clustering metho d for analyzing multiple sets of LLM-based agents’ resp onses. In Section 4, we exp erimen tally demonstrate the effectiveness of the prop osed ECDF clustering by applying it to tw o practical QA datasets, c hanging t wo types of agent settings (i.e., persona and temp erature). Finally , we conclude this pap er in Section 5. 2 Related W orks A typical approach to solving tasks, including QA, with LLM-based agen ts is to first hav e each agen t generate an answer to a giv en question, and then combine all the answers to generate a final answer. In this section, we review the existing metho ds based on such an approach from the following three p erspectives: ho w to generate m ultiple answers, how to conv ert multiple answers into a final answer, and how to ev aluate the final answ er. First, the generation of multiple answ ers using LLM-based agents itself inv olves v arious settings, including base models of agents, prompt templates, and inter-agen t comm unication styles. Sev eral studies hav e rep orted that discussion among LLM-based agents improv es p erformance in reasoning tasks [2, 15–18], while others ha ve explored prompting methods to elicit the reasoning abilit y of mo dels b y sp ecifying p ersonas [19, 20] or by describing the reasoning pro cedure [21]. The inter-agen t 2 comm unication structure also affects the cost and quality of the discussion [22, 23]. Next, there hav e b een sev eral methods to summarize multiple resp onses of LLM-based agents in to a single final answer. Typical wa ys to make such decisions are ma jority or confidence-weigh ted v oting [11, 12, 15, 24] and introducing an aggregator agent for judging and consolidating the resp onses [13, 16, 18]. How ever, it has b een p ointed out that such metho ds for synthesizing multiple resp onses eliminate the diversit y of the original opinions [25]. Finally , a commonly used criterion for ev aluating the final answ ers for all questions is their av erage consistency with the correct answers. F or example, we can ev aluate the quality of the final answ er by using exact matc h, BLEU [26], and BER TScore [27]. How ever, when w e use these ev aluation criteria for the final answers, w e might ov erlo ok the differences in the distributions of the “go odness” of the original answers, as describ ed in Section 1. 3 ECDF Clustering for LLM-based Agen t System Analysis Let ˜ D “ tp ˜ q 1 , ˜ a 1 q , . . . , p ˜ q n Q , ˜ a n Q qu b e a QA dataset, where n Q P N is a n umber of questions. F or i P r n Q s , ˜ q i P T is the i th question (e.g., ˜ q i “ “What year is it three years after the year 2000?”), and ˜ a i “ r ˜ a i 1 , . . . , ˜ a in p i q R s is a list of correct answers (e.g., ˜ a i “ r “2003” , “The year is 2003.” s ), where T is a set of texts, n p i q R is a num b er of correct answers for the i th question, and r n s – t 1 , . . . , n u . F or each question in QA dataset ˜ D , w e generate m ultiple resp onses using LLM-based agents under m utually different agent settings (e.g., combination of base mo dels, prompts, and temp erature). In the subsequen t part of this pap er, we call a combination of question and agent setting as “setting” and it is distinguished from an agen t setting, which do es not include the setting of a question. Let D “ tp q 1 , a 1 q , . . . , p q n , a n qu b e the reformatted QA dataset, where n is the n umber of settings and q i and a i indicate the question and the correct answer list corresp onding to the i th setting, resp ectively . W e also define lists A “ r a 1 , . . . , a n s and ˆ A “ r ˆ a 1 , . . . , ˆ a n s of reference and candidate answer lists, resp ectiv ely , where ˆ a i “ r ˆ a i 1 , . . . , ˆ a in p i q C s is a list of LLM-based agents’ resp onses in the i th setting and n p i q C is its size. 3.1 Definition of ECDF s T o compare multiple sets of texts (i.e., LLM-based agen ts’ resp onses) of generally differen t sizes, we in tro duce ECDF s of their ev aluation v alues. An ECDF ˆ F x n : R ÞÑ r 0 , 1 s is a function whose output indicates the prop ortion of samples in x n “ r x 1 , . . . , x n s with an input v alue or less, and it is given by ˆ F x n p x q “ 1 n n ÿ i “ 1 1 p´8 ,x s p x i q . (1) Here, 1 A : R ÞÑ t 0 , 1 u , A Ă R is an indicator function, which is given by 1 A p x q “ # 1 if x P A, 0 otherwise . (2) Sp ecifically , we prop ose to define ECDF s based on the cosine similarities betw een LLM-based agen ts’ resp onses and correct answers. This allows us to obtain more fine-grained information ab out the go o dness of the answ ers in eac h setting, rather than just a binary represen tation of agreemen t with a correct answer. By using reformatted QA dataset D and candidate answers ˆ A , we define a list V “ r v 1 , . . . , v n s of cosine similarity lists, where v i “ r v i 1 , . . . , v in p i q C s is a list of maximum cosine similarities b et ween each LLM-based agent’s resp onse and the correct answ ers in the i th setting. F or j P r n p i q C s , we hav e v ij “ max a P a i g p f ϕ p a q , f ϕ p ˆ a ij qq , (3) 3 where f ϕ : T ÞÑ R d is a function whic h em b eds input text to d dimensional v ector b y using an arbitrary mo del (e.g., neural netw ork) with parameter ϕ and g p u 1 , u 2 q indicates the cosine similarity b et ween v ectors u 1 and u 2 . Based on list V , w e define a list E “ r ˆ F v 1 , . . . , ˆ F v n s of ECDF s of cosine similarities. 3.2 Clustering ECDF s based on Their Distances T o reveal the group structure and the typical shap es of the ECDF s E in Section 3.1, we prop ose to apply a clustering metho d to the ECDF s. Sp ecifically , we adopt k -medoids clustering, since once a distance matrix is given, it can b e applied to the ECDF s in the same w ay as it is applied to the data v ectors. Let D “ p D ij q 1 ď i ď n, 1 ď j ď n P R n ˆ n b e a distance matrix whose p i, j q th entry D ij represen ts the distance b et ween the ECDF s corresp onding to the i th and j th settings. In this pap er, we adopt the follo wing L1 distance 1 . D ij “ D j i “ ż 8 ´8 ˇ ˇ ˇ ˆ F v i p x q ´ ˆ F v j p x q ˇ ˇ ˇ d x, for all 1 ď i ď n, 1 ď j ď n. (4) Let h p u 1 , u 2 q b e the op eration that combines vectors u 1 and u 2 , remov es duplicate v alues, and sorts en tries in ascending order (e.g., h pr 1 , 3 , 2 s , r 4 , 3 , 3 , 5 sq “ r 1 , 2 , 3 , 4 , 5 s ). F or all p i, j q , we define ˜ v ij “ h p v i , v j q “ r ˜ v ij 1 , . . . , ˜ v ij ˜ n p i,j q C s . Since the difference | ˆ F v i p x q ´ ˆ F v j p x q| b etw een a pair of ECDF s is a step function, whic h has p ˜ n p i,j q C ´ 1 q interv als in the supp ort r ˜ v ij 1 , ˜ v ij ˜ n p i,j q C q , the right side of Eq. (4) is given b y ż 8 ´8 ˇ ˇ ˇ ˆ F v i p x q ´ ˆ F v j p x q ˇ ˇ ˇ d x “ ˜ n p i,j q C ´ 1 ÿ k “ 1 ˇ ˇ ˇ ˆ F v i p ˜ v ij k q ´ ˆ F v j p ˜ v ij k q ˇ ˇ ˇ p ˜ v ij p k ` 1 q ´ ˜ v ij k q . (5) Based on distance matrix D , we split the ECDF s into m clusters by applying “Partitioning Around Medoids” or P AM algorithm [29] to them. Algorithm 1 shows the pro cedure to obtain cluster assign- men t vector c “ r c 1 , . . . , c n s P r m s n and medoid index vector r “ r r 1 , . . . , r m s P r n s m , and it is based on BUILD and SW AP steps. Each entry c i of cluster assignment vector represents the cluster index of the i th ECDF, and each en try r i of medoid index v ector represents the index of the medoid ECDF of the i th cluster. In BUILD step, w e select initial medoids in a greedy manner so that the sum of distances from each sample to its nearest medoid is as small as p ossible. Then, in SW AP step, we rep eatedly try sw apping all pairs of medoid and non-medoid samples and find the b est one with the minim um sum of distances from each sample to its nearest medoid. If sw apping such an optimal pair leads to a better result than using the original set of medoids, w e adopt it. Otherwise, we terminate the algorithm and determine medoid index vector r and cluster assignment vector c based on the current set of medoids. 4 Exp erimen ts T o verify the effectiveness of the prop osed metho d, we used v alidation samples of Stanford Question Answ ering Dataset (SQuAD) [30]. W e examined the effects of c hanging tw o agent settings, p ersona 1 F or i P r n s , let µ i be a probability measure on R , which is given by µ i p B q “ 1 n ř n p i q C k “ 1 1 B p v ik q . This probability measure represents the proportion of samples t v ik u that are included in B Ă R . It must b e noted that the L1 distance between the i th and j th ECDF s in Eq. (4) is equal to the L 1 -W asserstein distance b etw een probabilit y measures µ i and µ j [28]. 4 Algorithm 1 P AM algorithm of k -medoids clustering Input: Distance matrix D , num b er of clusters m Output: Cluster assignmen t v ector c “ r c 1 , . . . , c n s P r m s n , medoid index vector r “ r r 1 , . . . , r m s P r n s m {BUILD algorithm for determining initial medoid index vector r } r 1 Ð arg min i Pr n s ř n j “ 1 D ij J 1 Ð t r 1 u for i “ 2 , . . . , m do r i Ð arg min j Pr n sz J i ´ 1 ř n k “ 1 min l P J i ´ 1 Yt j u D kl J i Ð J i ´ 1 Y t r i u end for {SW AP algorithm for improving cluster assignment vector c and medoid index vector r } J 1 Ð t r 1 , . . . , r m u J 2 Ð r n sz J 1 D ˚ Ð ř n k “ 1 min l P J 1 D kl while T rue do F or all p i, j q P J 1 ˆ J 2 , ˜ J ij Ð p J 1 zt i uq Y t j u F or all p i, j q P J 1 ˆ J 2 , ˜ D ij Ð ř n k “ 1 min l P ˜ J ij D kl p i ˚ , j ˚ q Ð arg min p i,j qP J 1 ˆ J 2 ˜ D ij if ˜ D i ˚ j ˚ ă D ˚ then J 1 Ð ˜ J i ˚ j ˚ J 2 Ð r n sz J 1 D ˚ Ð ˜ D i ˚ j ˚ else break end if end while r Ð r r 1 , . . . , r m s , where J 1 “ t r 1 , . . . , r m u c Ð r c 1 , . . . , c n s , where c i “ arg min j P J 1 D ij for all i P r n s and temp erature, whic h we call settings P and T , resp ectively . F or eac h sub ject or title, we randomly c hose three samples to use in the subsequen t procedure. Based on each i th original question q i , whic h is defined as “context” and “question” v alues of the dataset concatenated with a space b etw een them, we defined question q ˚ i “ q i ` “ Just describ e your answer in one word without providing any explanation for the answer.” In all exp eriments, w e set the num b er of agent settings at n 0 “ 50 (i.e., the n umber of settings is n “ n 0 n Q ). W e used GPT-4o mini [31] and paraphrase-MiniLM-L6-v2 [32] as a base mo del of an LLM-based agent and an embedding mo del of function f ϕ , resp ectiv ely , and set the num b er of resp onses at n p i q C “ 10 for i P r n s . • Persona settings : In setting P , we defined the i th p ersona text as p ˚ i “ “Y ou are ” ` l p p i q` “ ”, where l : T ÞÑ T is the op eration of conv erting the first character of the input text to lo wercase and the p i P T is the i th sample of “p ersona” subset of Persona Hub dataset [33] for i P r n 0 s . In setting T , we set p ˚ i “ “” for all i P r n 0 s . • T emp erature settings : F or i P r n 0 s , w e set the i th temp erature at β i “ 2 p i ´ 1 q{ n 0 in setting T and β i “ 1 in setting P . F or each com bination of the i th question and the j th agent settings, we defined the user prompt as s n 0 p i ´ 1 q` j “ p ˚ j ` q ˚ i , input it in to the LLM-based agent by setting the temp erature at β j , and 5 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 A ccuracy=0.000 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 A ccuracy=0.333 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 A ccuracy=0.667 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 A ccuracy=1.000 Figure 2: ECDF s for eac h v alue of accuracy in setting P . Blue line shows the centroid. 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 A ccuracy=0.000 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 A ccuracy=0.333 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 A ccuracy=0.667 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 A ccuracy=1.000 Figure 3: ECDF s for eac h v alue of accuracy in setting T . got resp onses ˆ a n 0 p i ´ 1 q` j . T o allow for v ariation in the suffix, we remov ed the suffixes “.”, “,”, “;”, “:”, and “\\” from b oth the reference and candidate answers A and ˆ A b efore ev aluation (i.e., fin al answer selection in Section 4.1 and computation of maximum cosine similarit y in Eq. (3)). 4.1 Preliminary Exp erimen t: Diversit y of ECDF s Before applying the prop osed metho d to the datasets, we examined the following research question: If the r esults ar e similar in terms of ac cur acy (i.e., the p er c entage of c orr e ct final answers), wil l their ECDFs end up b eing similar? T o v erify this question, we plotted the ECDF s of the results with the same accuracy together. W e determined the final answer in each setting based on the following pro cedure. First, we define embedding vector list U p i q “ r u i 1 , . . . , u in p i q C qs of each i th setting, where u ij “ f ϕ p ˆ a ij q for j P r n p i q C s . Next, we select the candidate answer ˆ a ij ˚ with the minim um L2 distance from the mean embedding vector ¯ u i . j ˚ “ arg min j Pr n p i q C s } u ij ´ ¯ u i } 2 , ¯ u i “ 1 n p i q C n p i q C ÿ j “ 1 u ij . (6) By using the ab ov e pro cedure, w e can alw ays determine a final answ er even if all the candidate answ ers are different from each other. Finally , we define a binary v alue b i P t 0 , 1 u representing the correctness of each i th setting. W e define b i “ 1 if there exists a reference answer a P a i that matches the final answ er ˆ a ij ˚ and b i “ 0 otherwise. The accuracy of eac h k th sub ject is given as the mean v alue of b i for all i that corresp ond to the k th sub ject. Figs. 2 and 3 show the ECDF s for each v alue of accuracy in settings P and T , resp ectively . It must b e noted that there were only four p ossible accuracy v alues (i.e., 0 , 1 { 3 , 2 { 3 , 1 ), since the accuracy was measured as the p ercentage of correct answers for three questions of the same sub ject. F or i P r 4 s , the i th centroid represen ts an ECDF of the concatenated samples corresp onding to the i th accuracy v alue. F rom these results, we see that even with the same accuracy , the ECDF s can v ary greatly , esp ecially when the accuracy is lo w. Moreo ver, when the accuracy is one, not all the resp onses match the correct answ ers. That is, there were cases where the original responses contained wrong answ ers, how ever, the final answer matched the correct answer through the final answ er selection pro cess. 6 4.2 Application to SQuAD Dataset W e applied ECDF clustering in Section 3.2 to the candidate answers for SQuAD dataset by setting the num b er of clusters at m “ 16 . Before plotting the clustering results, we redefined the cluster indices so that the clusters with smaller indices indicate b etter results. Sp ecifically , we defined matrix W “ p W ij q 1 ď i ď m, 1 ď j ď m P t 0 , 1 u m ˆ m , whose p i, j q th en try is given by W ij “ 1 p´8 , 0 q " ż 8 ´8 ” ˆ F v r i p x q ´ ˆ F v r j p x q ı d x * , for all 1 ď i ď m, 1 ď j ď m. (7) Based on matrix W , w e defined the num b er of “wins” of eac h k th cluster as w k “ ř m j “ 1 W kj and redefined the cluster indices so that the k th cluster corresp onds to the k th largest v alue of t w k u . F or visibility , we plotted the cluster assignments as matrix C “ p C ij q 1 ď i ď n Q , 1 ď j ď n 0 P r m s n Q ˆ n 0 , whose p i, j q th entry represents the cluster index corresp onding to the combination of the i th question and the j th agent setting. Instead of plotting matrix C as is, we applied matrix reordering to it so that the rows and columns with mutually similar v alues are closer together. Sp ecifically , we applied m ultidimensional scaling (MDS) [34, 35] to the rows and columns of matrix C , obtained their one- dimensional features , and sorted them according to the feature v alues. Figs. 4 and 6 show the ECDF s of each cluster in settings P and T , resp ectively , and Figs. 5 and 7 sho w the cluster assignments in settings P and T , resp ectively . As in Section 4.1, each i th centroid represen ts an ECDF of the concatenated samples in the i th cluster. F rom Figs. 4 and 6, we see that relatively similar ECDF s w ere assigned to each cluster compared to the results in Section 4.1. Fig. 5 shows that there was little difference in the ECDF s dep ending on the p ersona setting, how ever, the ECDF s changed significantly dep ending on the sub ject of the question. F or example, questions in sub ject “Normans” and “Sup er-Bowl-50” w ere relativ ely easy for agents to answer correctly , while questions in sub ject “Chloroplast” often resulted in answers that were far from the correct answers. Fig. 7 shows that the temp erature also did not significantly affect the ECDF s, and that the cluster structure at low temp eratures collapsed when the temp erature was set relatively high. As additional information for each cluster, w e sho w example answers of medoids of Clusters 0 , 7 , and 15 in Figure 8. F rom this figure, we see that medoids of Clusters 0 and 7 corresp ond to the questions whose correct answers are expressed in relatively simple words, while that of Cluster 15 corresp onds to the questions that require more complex and longer sen tences, leading to the worst ECDF s. 5 Conclusion In this pap er, we developed a new method for ev aluating the resp onses generated by LLM-based agen ts. F or each setting, we first define a single ECDF that represen ts the cum ulative distribution of similarities b etw een the generated answers and the correct ones, and then estimate the cluster structure of the ECDF s corresp onding to all the settings. W e show ed the effectiv eness of the prop osed metho d b y applying it to a practical dataset. The prop osed metho d successfully revealed the cluster structure of the ECDF s, each of which corresp onds to a com bination of a temp erature/p ersona and a question topic. References [1] T aicheng Guo et al. Large language mo del based m ulti-agents: a surv ey of progress and c hallenges. In Pr o c e e dings of the Thirty-Thir d International Joint Confer enc e on A rtificial Intel ligenc e , 2024. 7 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 0 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 1 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 2 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 3 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 4 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 5 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 6 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 7 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 8 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 9 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 10 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 11 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 12 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 13 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 14 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 15 Figure 4: ECDF s of each cluster in setting P . Black and blue lines show the medoid and centroid of the cluster, resp ectively . P44 P28 P16 P8 P2 P46 P15 P13 P14 P9 P43 P36 P38 P4 P37 P29 P17 P1 P32 P24 P45 P5 P11 P41 P30 P3 P7 P20 P22 P12 P21 P42 P19 P48 P18 P40 P47 P27 P34 P33 P39 P26 P31 P25 P10 P0 P23 P49 P6 P35 Setting Nor mans Super_Bowl_50 Sk y_(United_Kingdom) Newcastle_upon_T yne Rhine Harvar d_University Huguenot Nik ola_T esla American_Br oadcasting_Company V ictoria_and_Albert_Museum T eacher Steam_engine Imperialism Doctor_Who Apollo_pr ogram Amazon_rainfor est Ctenophora United_Methodist_Chur ch F or ce Y uan_dynasty F r esno,_Califor nia Genghis_Khan University_of_Chicago W arsaw Construction Computational_comple xity_theory Geology Oxygen Economic_inequality Islamism Eur opean_Union_law Jacksonville,_Florida Civil_disobedience Black_Death Souther n_Califor nia 1973_oil_crisis P rivate_school P rime_number V ictoria_(Australia) Scottish_P arliament P ack et_switching Immune_system Martin_L uther Inter gover nmental_P anel_on_Climate_Change F r ench_and_Indian_W ar Phar macy K enya Chlor oplast Subject Cluster 0 Cluster 1 Cluster 2 Cluster 3 Cluster 4 Cluster 5 Cluster 6 Cluster 7 Cluster 8 Cluster 9 Cluster 10 Cluster 11 Cluster 12 Cluster 13 Cluster 14 Cluster 15 Figure 5: Cluster assignments in setting P . 8 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 0 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 1 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 2 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 3 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 4 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 5 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 6 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 7 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 8 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 9 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 10 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 11 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 12 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 13 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 14 0.0 0.5 1.0 Cosine similarity 0.00 0.25 0.50 0.75 1.00 Cluster 15 Figure 6: ECDF s of eac h cluster in setting T . 0.00 0.36 0.24 0.48 0.12 0.20 0.28 0.60 0.16 0.32 0.88 0.80 0.52 0.08 0.44 0.56 0.72 0.40 1.04 0.84 0.92 0.68 0.64 0.96 0.76 1.36 1.12 0.04 1.08 1.00 1.40 1.16 1.32 1.20 1.24 1.48 1.60 1.44 1.64 1.28 1.52 1.68 1.56 1.80 1.76 1.72 1.84 1.88 1.92 1.96 Setting Chlor oplast Phar macy K enya F r ench_and_Indian_W ar Martin_L uther Inter gover nmental_P anel_on_Climate_Change Immune_system P ack et_switching P rime_number Scottish_P arliament V ictoria_(Australia) P rivate_school Y uan_dynasty Economic_inequality Black_Death Souther n_Califor nia 1973_oil_crisis Civil_disobedience Eur opean_Union_law Islamism Jacksonville,_Florida Oxygen Geology W arsaw Construction Genghis_Khan F r esno,_Califor nia University_of_Chicago F or ce Apollo_pr ogram United_Methodist_Chur ch Ctenophora Doctor_Who Imperialism Harvar d_University Amazon_rainfor est Steam_engine Computational_comple xity_theory T eacher Huguenot V ictoria_and_Albert_Museum American_Br oadcasting_Company Nik ola_T esla Nor mans Rhine Newcastle_upon_T yne Sk y_(United_Kingdom) Super_Bowl_50 Subject Cluster 0 Cluster 1 Cluster 2 Cluster 3 Cluster 4 Cluster 5 Cluster 6 Cluster 7 Cluster 8 Cluster 9 Cluster 10 Cluster 11 Cluster 12 Cluster 13 Cluster 14 Cluster 15 Figure 7: Cluster assignments in setting T . 9 [2] Yilun Du et al. Improv ing factualit y and reasoning in language models through multiagen t debate. In Pr o c e e dings of the 41st International Confer enc e on Machine L e arning , volume 235, pages 11733–11763, 2024. [3] Xiangru T ang et al. MedAgents: Large language mo dels as collab orators for zero-shot medical reasoning. In Findings of the Asso ciation for Computational Linguistics: A CL 2024 , pages 599– 621, 2024. [4] Sirui Hong et al. MetaGPT: Meta programming for a multi-agen t collab orativ e framework. In Pr o c e e dings of the Twelfth International Confer enc e on L e arning R epr esentations , 2024. [5] Chen Qian et al. ChatDev: Communicativ e agents for soft ware developmen t. In Pr o c e e dings of the 62nd Annual Me eting of the Asso ciation for Computational Linguistics (V olume 1: L ong Pap ers) , pages 15174–15186, 2024. [6] Qingyun W u et al. AutoGen: Enabling next-gen LLM applications via multi-agen t conv ersations. In First Confer enc e on L anguage Mo deling , 2024. [7] Alon T almor et al. CommonsenseQA: A question answ ering c hallenge targeting commonsense kno wledge. In Pr o c e e dings of the 2019 Confer enc e of the North Americ an Chapter of the Asso ci- ation for Computational Linguistics: Human L anguage T e chnolo gies, V olume 1 (L ong and Short Pap ers) , pages 4149–4158, 2019. [8] Ro wan Zellers et al. SW AG: A large-scale adversarial dataset for grounded commonsense inference. In Pr o c e e dings of the 2018 Confer enc e on Empiric al Metho ds in Natur al L anguage Pr o c essing , pages 93–104, 2018. [9] Mor Gev a et al. Did Aristotle use a laptop? A question answering b enchmark with implicit reasoning s trategies. T r ansactions of the Asso ciation for Computational Linguistics , 9:346–361, 2021. [10] Karl Cobb e et al. T raining verifiers to solve math w ord problems. arXiv:2110.14168, 2021. [11] Lars Benedikt Kaesberg et al. V oting or consensus? Decision-making in m ulti-agen t debate. arXiv:2502.19130, 2025. [12] Jun you Li et al. More agents is all you need. T r ansactions on Machine L e arning R ese ar ch , 2024. [13] Junlin W ang et al. Mixture-of-Agen ts enhances large language mo del capabilities. arXiv:2406.04692, 2024. [14] Jungh yun Kim, So ohan Ahn, and Y oujip W on. Mining an anomaly: On the small time scale b eha vior of the traffic anomaly . In Pr o c e e dings of IADIS International Confer enc e WWW/Internet 2006 , pages 552–559, 2006. [15] Justin Chen, Swarnadeep Saha, and Mohit Bansal. ReConcile: Round-table conference improv es reasoning via consensus among div erse LLMs. In Pr o c e e dings of the 62nd Annual Me eting of the Asso ciation for Computational Linguistics (V olume 1: L ong Pap ers) , pages 7066–7085, 2024. [16] Yi F ang et al. Counterfactual debating with preset stances for hallucination elimination of LLMs. In Pr o c e e dings of the 31st International Confer enc e on Computational Linguistics , pages 10554– 10568, 2025. [17] Akbir Khan et al. Debating with more persuasive LLMs leads to more truthful answers. In Pr o c e e dings of the 41st International Confer enc e on Machine L e arning , volume 235, pages 23662– 23733, 2024. 10 [18] Tian Liang et al. Encouraging divergen t thinking in large language mo dels through multi-agen t debate. In Pr o c e e dings of the 2024 Confer enc e on Empiric al Metho ds in Natur al L anguage Pr o- c essing , pages 17889–17904, 2024. [19] Leonard Salewski et al. In-context imp ersonation reveals large language mo dels’ strengths and biases. In A dvanc es in Neur al Information Pr o c essing Systems , v olume 36, pages 72044–72057, 2023. [20] Greg Serapio-García et al. Personalit y traits in large language mo dels. arXiv:2307.00184, 2023. [21] Jason W ei et al. Chain-of-Thought prompting elicits reasoning in large language models. In A dvanc es in Neur al Information Pr o c essing Systems , v olume 35, pages 24824–24837, 2022. [22] Y unxuan Li et al. Improving m ulti-agent debate with sparse comm unication topology . In Findings of the Asso ciation for Computational Linguistics: EMNLP 2024 , pages 7281–7294, 2024. [23] Zhangyue Yin et al. Exchange-of-Though t: Enhancing large language mo del capabilities through cross-mo del communication. In Pr o c e e dings of the 2023 Confer enc e on Empiric al Metho ds in Natur al L anguage Pr o c essing , pages 15135–15153, 2023. [24] Chi-Min Chan et al. ChatEv al: T ow ards better LLM-based ev aluators through multi-agen t debate. In Pr o c e e dings of the Twelfth International Confer enc e on L e arning R epr esentations , 2024. [25] Zengqing W u and T ak a yuki Ito. The hidden strength of disagreement: Unrav eling the consensus- div ersity tradeoff in adaptive multi-agen t systems. arXiv:2502.16565, 2025. [26] Kishore Papineni et al. BLEU: a metho d for automatic ev aluation of machine translation. In Pr o c e e dings of the 40th Annual Me eting on Asso ciation for Computational Linguistics , pages 311– 318, 2002. [27] Tian yi Zhang et al. BER TScore: Ev aluating text generation with BER T. In Pr o c e e dings of the Eighth International Confer enc e on L e arning R epr esentations , 2020. [28] Eustasio del Barrio, Ev arist Giné, and Carlos Matrán. Central limit theorems for the Wasserstein distance b etw een the empirical and the true distributions. The Annals of Pr ob ability , 27(2):1009– 1071, 1999. [29] Leonard Kaufman and Peter J. Rousseeuw. Clustering by means of medoids. In Pr o c e e dings of Statistic al Data Analysis Base d on the L1-Norm and R elate d Metho ds , pages 405–416, 1987. [30] Prana v Ra jpurk ar et al. SQuAD: 100,000+ questions for machine comprehension of text. In Pr o c e e dings of the 2016 Confer enc e on Empiric al Metho ds in Natur al L anguage Pr o c essing , pages 2383–2392, 2016. [31] Aaron Hurst et al. GPT-4o system card. arXiv:2410.21276, 2024. [32] Nils Nils Reimers and Iryna Gurevych. Sentence-BER T: Sentence embeddings using Siamese BER T-netw orks. In Pr o c e e dings of the 2019 Confer enc e on Empiric al Metho ds in Natur al L anguage Pr o c essing and the 9th International Joint Confer enc e on Natur al L anguage Pr o c essing (EMNLP- IJCNLP) , pages 3982–3992, 2019. [33] T ao Ge et al. Scaling synthetic data creation with 1,000,000,000 p ersonas. 2024. [34] Joseph Lee Ro dgers and T ony D. Thompson. Seriation and m ultidimensional scaling: A data analysis approach to scaling asymmetric pro ximity matrices. Applie d Psycholo gic al Me asur ement , 16(2):105–117, 1992. [35] Ian Sp ence and Jed Graef. The determination of the underlying dimensionalit y of an empirically obtained matrix of proximities. Multivariate Behavior al R ese ar ch , 9(3):331–341, 1974. 11 • Ques tion: The P ilgrim S treet building wa s re furbis hed betwee n No vember 2006 and May 2008 ; .. . Bet we en wh at dates wa s the building on Pil gr im St re et re furbis hed? • Re ferenc e ans we r: No vember 2006 and May 2008 /November 2006 and May 2008 /bet we en No vember 2006 and May 2008 • Ca ndida te ans we r: No vember 2006 - May 2008 /November 2006 to May 2008 /November 2006 to May 2008 . • Ques tion: Thro ugho ut the Middle Ages, Ne wc as tle wa s England's nor thern fortres s. .. . Who gr anted Ne wc as tle a new ch ar ter in 1589? • Re ferenc e ans we r: Eli za beth/E lizab eth/ Eli za beth • Ca ndida te ans we r: Eli za beth/E lizab eth. • Ques tion: Thro ugho ut the Middle Ages, Ne wc as tle wa s England's nor thern fortres s. .. . Ho w many tim es did New ca stle fight of f the Scots dur ing the 14th ce ntury? • Referenc e answ er: three ti mes/t hree/t hr ee ti mes • Ca ndida te ans we r: Thre e/Three . Cl uster 0: • Ques tion: Islamists have as ke d the ques tion, "I f Islam is a way of lif e, how ca n we sa y that those wh o wa nt to liv e by it s pr inc iples in legal, so cia l, political, ec onomic , and political sph er es of lif e ar e not Mus lims, but Islamists and believe i n I sla mism, not [just] Islam? .. . Wha t does a wr iter for the Int er national Cr isis Gro up think the co nc ept of political I sla m is a cr eation of? • Re ferenc e ans we r: America ns /A mer icans /A mer ica n s • Ca ndida te ans we r: America ns /A mer ica ns. • Ques tion: HT does not enga ge in armed jihad or wo rk for a democ ra tic system, but wo rk s to take pow er throug h "ideologic al strugg le" to ch ange Mus lim public opinion , and in particula r throug h elites who will "f ac ilit ate" a " ch ange of the governme nt, " i . .. . Who sp ec ifi ca lly does HT target to ch ange the opinion of? • Re ferenc e ans we r: elites/government /elit es • Ca ndida te ans we r: Eli tes/E lit es . • Ques tion: The v iews of Ali Shariati, ideolog ue of the Iranian Re volut ion, had re se mblance with Mohammad Iqbal, ideolog ica l f ather of the St ate of Pakis tan, but Khomeini's beliefs is per ce iv ed to be plac ed so mewh er e betwee n beliefs of Sunni I sla mic thinker s like Maw dudi and Qutb. .. . Whe re does Khomeini's beliefs fall as co mpar ed to Mawd udi and Qutb? • Re ferenc e ans we r: so mewh er e betwee n/between /s ome wh ere betwee n • Ca ndida te ans we r: Int er se ction./ Sy ncre tic./ Sy nthe sis . Cl uster 7: • Ques tion: Ch loro plas ts ar e highly dynamic — they cir cu late and ar e moved ar ound within plant ce lls, and oc ca sio nally pinc h in t wo to re pr oduce. .. . Wha t influenc es ch loro plas ts ' beha vi or ? • Re ferenc e ans we r: environmen tal factors like light co lor and intensity /env iron mental factors /e nvironm ent al factors like light co lor and intensity • Ca ndida te ans we r: Env iron ment/ Env iron ment. /L ight. • Ques tion: T o fix ca rb on dioxide i nto su gar molec ules in t he pr oc es s of photos ynt hesis, ch loro plas ts us e an enz ym e ca lled rubis co . .. . Wha t ef fect does ru bis co 's flaw hav e? • Re ferenc e ans we r: at high oxy gen co nc entr ations , ru bis co star ts ac cid entally addin g oxy gen to su gar pr ec ur sors/ at high oxy gen co nc entra tions , ru bis co star ts ac cid entally addin g oxy gen to su gar pr ec ur sors/ at high oxy gen co nc entra tions , ru bis co star ts ac cid entally addin g oxy gen to su gar pr ec ur sors • Candidate answ er: Inef fi cienc y /I nef fi cienc y . • Ques tion: In addition to ch loro phylls, another gr oup of yellow – or ange pigmen ts ca lled ca ro tenoids ar e als o found in t he photos ystems. .. . Wha t do photos ynt hetic ca ro tenoids do? • Re ferenc e ans we r: help trans fer and dis sip ate exces s ener gy/ transf er and dis sip ate exces s ener gy/ help trans fer and dis sip ate exces s ener gy • Ca ndida te ans we r: Absor b/A bs or p tion. /P igmen tat ion/ Pigment at ion. Cl uster 15: Figure 8: Example answers of medoids of Clusters 0 , 7 , and 15 in setting P . Regarding the candidate answ ers, only unique answers are listed. 12

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment