Real time fault detection in 3D printers using Convolutional Neural Networks and acoustic signals

The reliability and quality of 3D printing processes are critically dependent on the timely detection of mechanical faults. Traditional monitoring methods often rely on visual inspection and hardware sensors, which can be both costly and limited in s…

Authors: Muhammad Fasih Waheed, Shonda Bernadin

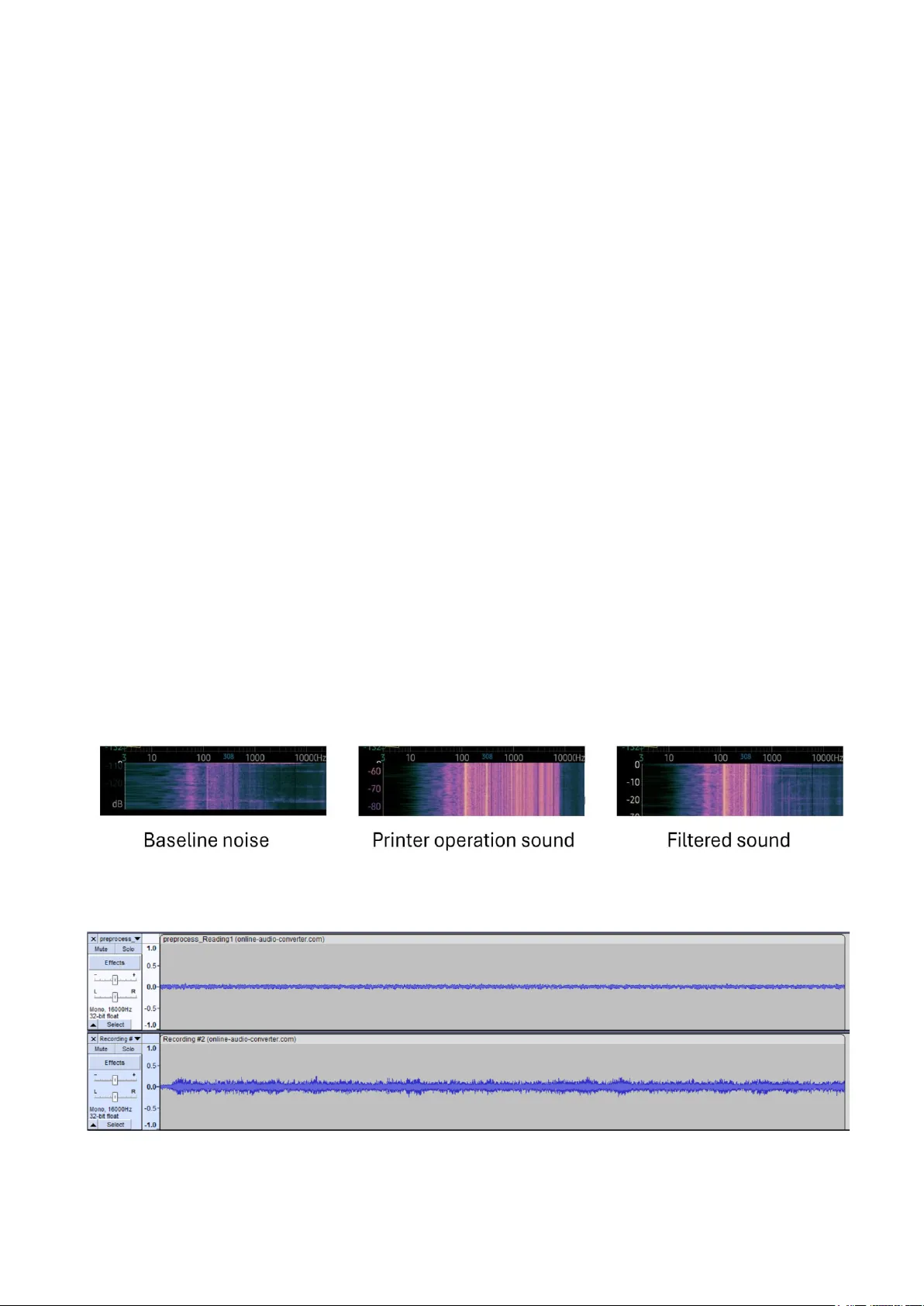

©2023 IEEE

Muhammad Fasih Waheed
Electrical and Computer Engineering, Florida A&M University, Tallahassee, USA
muhammad1.waheed@famu.edu

Dr. Shonda Bernadin
Electrical and Computer Engineering, Florida A&M University, Tallahassee, USA
bernadin@eng.famu.fsu.edu

Abstract: The reliability and quality of 3D printing processes are critically dependent on the timely detection of mechanical faults. Traditional monitoring methods often rely on visual inspection and hardware sensors, which can be both costly and limited in scope. This paper explores a scalable and contactless method that uses real-time audio signal analysis to detect mechanical faults in 3D printers. By capturing and classifying acoustic emissions during the printing process, we aim to identify common faults such as nozzle clogging, filament breakage, pulley skipping, and various other mechanical faults. Utilizing convolutional neural networks, we implement algorithms capable of real-time audio classification to detect these faults promptly. Our methodology involves conducting a series of controlled experiments to gather audio data, followed by the application of advanced machine learning models for fault detection. Additionally, we review existing literature on audio-based fault detection in manufacturing and 3D printing to contextualize our research within the broader field. Preliminary results demonstrate that audio signals, when analyzed with machine learning techniques, provide a reliable and cost-effective means of enhancing real-time fault detection.

Keywords: 3D printing, fault detection, audio signal analysis, convolutional neural networks (CNN), machine learning, real-time monitoring, nozzle clogging, non-contact monitoring, manufacturing quality control, predictive maintenance, spectrogram analysis, Fused Deposition Modelling (FDM).
I. INTRODUCTION

3D printing, also known as additive manufacturing, is a process of creating three-dimensional objects layer by layer from digital files. This technology has evolved significantly since its inception in the 1980s, transforming from a tool for rapid prototyping to a versatile manufacturing method with applications across various industries [3]. Fault detection in 3D printing is an essential process to identify and monitor errors during additive manufacturing, ensuring the production of high-quality, defect-free components [4]. This is particularly crucial in industries like aerospace and automotive, where precision and reliability are paramount [5]. Effective fault detection not only reduces material waste, maintenance costs, and production time but also enhances safety and reliability in critical applications.

A. Challenges in traditional fault detection in 3D printing

Traditional methods such as visual inspection and contact-based hardware sensors have several drawbacks when it comes to fault detection. Visual inspection by humans, while capable of detecting errors, cannot provide continuous monitoring or real-time correction. This approach is time-consuming, subjective, and prone to human error, especially for complex or small-scale defects [6]. Other hardware sensors are often limited in their ability to detect faults, as they primarily identify large-scale error modalities while failing to capture smaller or more subtle defects. These sensors typically require direct integration with the 3D printer to obtain precise readings, which can be cumbersome and may disrupt the printer's normal operation. Additionally, the setup and maintenance of these sensors involve tedious procedures, increasing the overall complexity of implementation.
Another major drawback is the high cost associated with these sensors and their accompanying amplifiers, which restricts their widespread adoption, particularly in budget-conscious manufacturing environments. Moreover, these conventional methods often lack the data richness required for real-time monitoring and feedback, thereby reducing their efficiency in dynamic and complex manufacturing processes.

Similarly, camera-based approaches, despite being data-rich and highly versatile, come with their own set of challenges. A single-camera setup may provide only limited visibility of the printing process, potentially missing defects that occur outside its field of view. Multi-camera systems, while capable of offering broader coverage, introduce additional hurdles such as increased costs, implementation complexity, and sensitivity to environmental factors like lighting conditions, which can significantly affect detection accuracy [6].

B. Audio-Based Monitoring: A Contactless Alternative

Given the shortcomings of traditional fault detection techniques, researchers [16] are actively investigating real-time audio signal analysis as a more efficient and adaptable alternative. This method offers several distinct advantages, the most significant being that it is contactless: unlike intrusive sensor-based techniques, audio sensors do not need to be physically attached. Audio-based monitoring therefore eliminates the need for physical modifications to the printer, making it a non-invasive, cost-effective solution that enhances system flexibility. Acoustic sensors are capable of capturing subtle variations in print patterns by analyzing distinctive sound signatures and extracting relevant features [8].

C. Machine Learning and AI for Acoustic Fault Detection

Recent advancements include AI-driven approaches, such as convolutional neural networks (CNNs) [5], which classify printing faults automatically, and multi-sensor data acquisition systems that utilize sound, vibration, and current to capture real-time process data. Additionally, improved control and calibration software has refined fault prevention and detection, enabling manufacturers to optimize printing processes and achieve better outcomes.

Furthermore, advancements in high-efficiency real-time data acquisition have significantly improved the feasibility of this approach. By leveraging Linux-based systems, researchers can minimize latency and ensure seamless data processing, enabling near-instantaneous fault detection. Audio analysis not only provides deeper insights into acoustic signal behavior but also facilitates the identification of various failure modes throughout the entire additive manufacturing process.

Studies have demonstrated the effectiveness of this technique by collecting, preprocessing, and analyzing audio data streams from 3D-printed samples. Researchers have successfully extracted both time-domain and frequency-domain features under varying layer thicknesses and employed sophisticated preprocessing methods such as Harmonic-Percussive Source Separation (HPSS) to isolate and analyze specific audio components.

To further strengthen the application of audio-based fault detection, various machine learning techniques have been explored for analyzing acoustic emission (AE) data in 3D printing, as highlighted by Olowe et al. [7]. A summary of these methods is presented in Table 1, demonstrating the increasing role of AI-driven approaches in optimizing fault detection accuracy and automation.
Other studies have shown that spectrogram-based analysis, when integrated with CNNs, provides a highly efficient and scalable solution for real-time fault detection in mechanical machinery and additive manufacturing. This approach enables the classification of common faults such as filament breakage, nozzle clogging, and pulley skipping by leveraging the frequency-domain representation of audio signals. By adopting this method, we enhance the precision of fault identification while maintaining a non-invasive and cost-effective monitoring system, aligning with recent research emphasizing the potential of AI-driven audio analysis for industrial applications [8][9].

This paper aims to enhance fault detection in 3D printing by leveraging real-time audio signal analysis to identify common mechanical issues such as nozzle clogging and filament breakage. By capturing acoustic emissions generated during the printing process, we classify these signals using machine learning techniques, specifically convolutional neural networks (CNNs), to detect faults as they occur. Traditional monitoring methods often rely on visual inspection and hardware sensors, which can be costly and limited in scope. In contrast, our approach offers a scalable, contactless, and cost-effective alternative, improving both the accuracy and efficiency of fault detection. Through controlled experiments and advanced machine learning models, we demonstrate that audio-based monitoring provides a reliable means of enhancing the quality and reliability of 3D printing processes.

Building on existing research, we incorporate spectrogram analysis in conjunction with CNNs to distinguish between various types of mechanical faults.
Spectrograms, which visually represent the frequency spectrum of audio signals over time, serve as a powerful tool for identifying subtle variations in acoustic emissions associated with different types of faults. By transforming raw audio signals into spectrogram images, we harness the advanced pattern recognition capabilities of CNNs to improve both the accuracy and speed of fault detection.

Our methodology involves capturing audio data from 3D printers under controlled conditions and converting these recordings into spectrograms. These spectrograms are then used to train CNN models, enabling them to recognize distinct patterns corresponding to specific mechanical issues such as nozzle clogging, filament breakage, and pulley skipping. This approach not only enhances fault detection capabilities but also facilitates a more detailed analysis of the faults, potentially leading to more effective maintenance strategies and predictive diagnostics.

The integration of spectrogram analysis and deep learning represents a significant advancement in the non-invasive monitoring of 3D printing processes. This system offers a scalable, cost-effective, and highly accurate method for real-time fault detection, ensuring consistent print quality and minimizing production disruptions. Through this research, we contribute to the growing field of additive manufacturing by providing a robust, AI-driven solution that can be applied across various industries to optimize production workflows and reduce downtime.

II. LITERATURE REVIEW

CNNs are highly effective in extracting features from spectrograms due to their ability to capture local patterns and hierarchical structures.
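As a concrete illustration of the spectrogram representation that such models consume, the following minimal sketch computes a magnitude spectrogram with a short-time Fourier transform. It is not the authors' implementation: the frame length, hop size, sample rate, and the test tone are all assumptions chosen for illustration, and the naive DFT stands in for the FFT routine a real pipeline would use.

```python
import cmath
import math


def stft_magnitude(samples, frame_len=64, hop=32):
    """Return one magnitude spectrum (bins 0..frame_len//2) per frame."""
    # A Hann window reduces spectral leakage at the frame edges.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_len - 1))
              for n in range(frame_len)]
    frames = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frame = [samples[start + n] * window[n] for n in range(frame_len)]
        spectrum = []
        for k in range(frame_len // 2 + 1):
            # Naive DFT for clarity; an FFT gives the same values faster.
            acc = sum(frame[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                      for n in range(frame_len))
            spectrum.append(abs(acc))
        frames.append(spectrum)
    return frames


# Hypothetical test signal: a 1 kHz sine sampled at 8 kHz (assumed values).
SAMPLE_RATE = 8000
tone = [math.sin(2 * math.pi * 1000 * n / SAMPLE_RATE) for n in range(512)]

spec = stft_magnitude(tone)  # rows are time frames, columns are frequency bins
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * SAMPLE_RATE / 64  # bin index -> frequency in Hz
```

Each row of `spec` is one column of the spectrogram image; stacking the rows over time and mapping magnitude to pixel intensity yields the kind of image a CNN can be trained on.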
A comprehensive review of 36 studies between March 2013 and August 2020 highlights the widespread use of deep learning models, particularly CNNs, in analyzing spectrograms for various applications such as speech recognition, music classification, and environmental sound detection [1]. Different types of spectrograms, such as Short-Time Fourier Transform (STFT) spectrograms, Mel-spectrograms, and log-scaled Mel-spectrograms, are commonly used. Mel-spectrograms are particularly favored due to their ability to reduce dimensionality while preserving essential audio features. More recently, Patel et al. (2024) proposed a real-time fault detection system using spectrogram-based CNN models, which outperformed traditional sensor-based methods in terms of scalability and cost-efficiency [10].

A study leveraging the VGGish network for fault detection in medium-sized industrial bearings achieved high accuracy in diagnosing faults based on acoustic emissions. The model's ability to extract meaningful audio features, combined with its robust performance in real-world noisy environments, highlights its potential for broader applications in manufacturing and 3D printing (Di Maggio et al., 2022). By fine-tuning the pre-trained network on spectrogram representations of acoustic signals from 3D printers, researchers can leverage its feature extraction capabilities to distinguish between normal and faulty printing conditions effectively [11].

Vibration signal analysis has long been a critical technique for fault diagnosis in rotating machinery, traditionally relying on statistical features such as mean value, standard deviation, and kurtosis. However, these conventional methods often fail to fully utilize the rich information embedded in vibration signals.
Recent advancements integrate empirical mode decomposition (EMD) and convolutional neural networks (CNNs) to enhance feature extraction and classification accuracy. By applying the fast Fourier transform (FFT) and EMD-based energy entropy calculations, researchers extract spatial and frequency-domain features, improving diagnostic precision. Experimental validation using vibration data from 52 machine conditions demonstrates that this combined approach significantly outperforms traditional methods in both accuracy and reliability. These findings suggest that a similar strategy could be applied to 3D printing fault detection, leveraging deep learning to analyze acoustic and vibrational data for enhanced monitoring and predictive maintenance [12].

III. METHODOLOGY

The methodology for this experiment involved recording audio from the extruder under two distinct conditions: operating with material and without material. These conditions produced different acoustic signatures, allowing for comparative analysis. To enhance process efficiency, several filtration techniques were applied to isolate relevant information while discarding unwanted noise. Among these methods, bandpass filtration proved to be the most effective, as it helped focus on the essential frequency range while significantly reducing data size.

We used a MakerBot Method X printer and SparkFun audio sensors to capture microphone data; the print material was ABS. The audio was recorded using a Dell XPS 9570 laptop.

As illustrated in Figure 2.1, the baseline signal represents the ambient room noise, including sounds from the HVAC system and other static sources. The printer operation sound, recorded without filtration, includes both useful and unwanted acoustic data. Applying a bandpass filter isolates the frequency range of 100–1200 Hz, as frequencies outside this range primarily contain static noise.
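A minimal sketch of a 100–1200 Hz bandpass step is given below. The paper does not state which filter design or sampling rate was used, so the single second-order (biquad) section, the 16 kHz sampling rate, and the test tones are assumptions made purely for illustration. The coefficient formulas are the standard Audio EQ Cookbook band-pass form with 0 dB peak gain.

```python
import math


def bandpass_biquad(samples, fs, f_low=100.0, f_high=1200.0):
    """Filter samples with one second-order band-pass section."""
    f0 = math.sqrt(f_low * f_high)   # geometric center of the pass band
    q = f0 / (f_high - f_low)        # quality factor derived from the bandwidth
    w0 = 2 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2 * q)
    # Audio EQ Cookbook band-pass coefficients, normalized by a0 (b1 is zero).
    a0 = 1 + alpha
    b0, b2 = alpha / a0, -alpha / a0
    a1, a2 = -2 * math.cos(w0) / a0, (1 - alpha) / a0
    out = []
    x1 = x2 = y1 = y2 = 0.0
    for x in samples:
        y = b0 * x + b2 * x2 - a1 * y1 - a2 * y2
        out.append(y)
        x2, x1 = x1, x
        y2, y1 = y1, y
    return out


def rms(values):
    return math.sqrt(sum(v * v for v in values) / len(values))


def tone(freq, fs, n):
    return [math.sin(2 * math.pi * freq * i / fs) for i in range(n)]


FS = 16000  # assumed sampling rate; not stated in the paper
inside = tone(400, FS, 8000)    # hypothetical tone inside the pass band
outside = tone(5000, FS, 8000)  # hypothetical tone well above the pass band

# Compare RMS levels after skipping the initial filter transient.
gain_in = rms(bandpass_biquad(inside, FS)[2000:]) / rms(inside[2000:])
gain_out = rms(bandpass_biquad(outside, FS)[2000:]) / rms(outside[2000:])
```

With these assumed values the in-band tone passes almost unchanged while the 5 kHz tone is strongly attenuated. A single biquad has gentle roll-off, so a practical system would likely cascade several sections or use a higher-order design.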
Bandpass filtering not only narrows down the signal under observation but also optimizes the fault detection process by reducing computational load.

Further noise reduction was performed using subtraction-based noise filtration in Audacity. In Figure 2.2, the top waveform represents ambient noise, while the bottom waveform corresponds to the filtered printer operation sound. By effectively eliminating background noise, this approach ensures a cleaner signal, improving the accuracy of fault detection while maintaining computational efficiency.

Once the filtered samples were prepared, several experiments were conducted using both grayscale and colored spectrograms. The dataset consisted of 256 audio samples, with 80% allocated for training and 20% for testing and validation. Grayscale images were tested to reduce computational complexity and memory usage; however, research [13] suggests that this comes at the cost of feature extraction quality. Figure 2.3 illustrates the experimentation process with both grayscale and colored spectrograms.

Figure 2.1: Audio spectrogram data before and after applying a bandpass filter.

Figure 2.2: Noise filtration via subtraction method using Audacity.

Figure 2.3: Grayscale image on the left and colored image on the right, with histogram.

After implementing this dataset, several challenges became apparent. With ambient noise filtered out, the model performed well on pre-recorded and pre-processed audio. However, in real-time environments, the presence of live ambient noise significantly impacted performance, making it difficult to achieve reliable fault detection. The dynamic nature of ambient noise, combined with variations in machine sounds, introduced inconsistencies that the model struggled to handle effectively.
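The idea behind the subtraction-based noise filtering used earlier in this section can be illustrated with a simplified magnitude spectral subtraction. This is only a sketch under stated assumptions: Audacity's own noise reduction is more elaborate, the function names are hypothetical, and the toy magnitude values are made up.

```python
def noise_profile(noise_frames):
    """Average magnitude per frequency bin over noise-only frames."""
    n_bins = len(noise_frames[0])
    return [sum(frame[k] for frame in noise_frames) / len(noise_frames)
            for k in range(n_bins)]


def spectral_subtract(frames, profile, floor=0.0):
    """Subtract the noise profile from every frame, clamping at `floor`."""
    return [[max(mag - noise, floor) for mag, noise in zip(frame, profile)]
            for frame in frames]


# Toy magnitude spectra with 3 bins per frame (illustrative values only).
ambient = [[1.0, 2.0, 1.0], [3.0, 2.0, 1.0]]   # room noise, printer idle
printer = [[2.5, 9.0, 0.5], [2.0, 8.0, 1.0]]   # printer running in the same room

profile = noise_profile(ambient)
cleaned = spectral_subtract(printer, profile)
```

A fixed profile like this removes only stationary noise, which is consistent with the difficulty reported above when live ambient noise changes during operation.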
To mitigate these issues, we conducted a series of experiments aimed at improving the dataset's robustness. We introduced separate samples to account for different noise conditions, including isolated ambient noise, extruder noise, and extruder noise under different material conditions (both with and without material). By categorizing these different audio environments, we aimed to enhance the model's ability to distinguish between operational sounds and potential fault indicators in real-world settings.

To process the captured acoustic data, we leveraged transfer learning, utilizing a Convolutional Neural Network (CNN) as the core technology. CNNs have demonstrated high effectiveness in image-based feature extraction, making them well-suited for analyzing spectrogram representations of sound data. By applying transfer learning, we sought to improve the model's generalization ability, allowing it to adapt to real-time conditions more effectively without requiring an extensive amount of new training data.

The experimental setup and methodology for this approach are illustrated in Figure 2.4, which presents a block diagram outlining the key steps in the process. This experiment played a crucial role in refining the fault detection framework.

Figure 2.4: Block diagram of fault detection with probability output.

The model was trained using acoustic data from the extruder operating both with and without material, enabling it to perform in-situ fault monitoring during the 3D printing process. By incorporating a background noise dataset, the model was significantly improved in distinguishing between normal and faulty states, even in real-time environments where ambient noise varies dynamically. This enhancement has led to a higher probability of accurately detecting faulty conditions, reducing false positives and improving overall reliability.
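The probability output at the end of the block diagram can be sketched as a softmax over the classifier's raw scores. The class labels, the example scores, and the confidence threshold below are hypothetical; the paper does not specify the output layer or any decision threshold.

```python
import math

# Hypothetical labels mirroring the recording conditions described above.
CLASSES = ["ambient_noise", "extruder_with_material", "extruder_without_material"]


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtracting the maximum keeps exp() numerically stable
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(logits, threshold=0.6):
    """Return (label, probabilities); label is 'uncertain' below the threshold."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=lambda i: probs[i])
    label = CLASSES[best] if probs[best] >= threshold else "uncertain"
    return label, probs


# Example scores such as a CNN's final layer might emit (made-up values).
label, probs = classify([0.2, 0.5, 3.1])
```

Reporting "uncertain" when no class is clearly dominant is one possible way to limit false alarms under varying ambient noise; it is a design suggestion, not something the paper describes.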
One of the key advantages of this method is its flexibility and adaptability to different types of mechanical faults beyond the initial scope. The same experimental framework can be extended to detect nozzle clogging, filament breakage, pulley skipping, and other common mechanical issues by training the model on appropriately curated datasets. Each fault type produces a unique acoustic signature, which, when processed through spectrogram analysis and CNN-based classification, allows for precise identification of potential failures.

Furthermore, this approach paves the way for real-time predictive maintenance, where early detection of anomalies can help prevent severe malfunctions, minimizing downtime and improving overall printing efficiency. The ability to generalize across multiple failure modes makes this methodology a valuable asset in developing a robust, AI-driven fault detection system for 3D printing. With further refinements, such as the integration of additional sensor modalities [15] (e.g., vibration or thermal sensors), this technique can evolve into a comprehensive multi-modal fault detection system, capable of identifying, predicting, and even preventing failures before they compromise the print quality.

IV. RESULTS AND DISCUSSIONS

The fault detection experiments in this research were conducted using a dataset of 256 audio samples recorded under controlled conditions. These samples captured the acoustic emissions of a 3D printer's extruder operating with and without material, simulating different mechanical faults such as nozzle clogging, filament breakage, and pulley skipping. Through the application of bandpass filtering within the 100–1200 Hz range, the acoustic data was effectively isolated while minimizing background noise.
Additional noise reduction techniques, including subtraction-based filtering, improved the clarity of fault signatures in the spectrograms, allowing for better fault classification.

The machine learning model employed for fault detection was a Convolutional Neural Network (CNN) trained on spectrogram representations of the recorded audio signals. The model demonstrated strong classification capabilities, effectively identifying patterns in the frequency-domain data that distinguished normal printing conditions from various fault states. Compared to traditional machine learning techniques such as Principal Component Analysis (PCA) and K-means clustering, the spectrogram-based CNN approach proved to be significantly more effective in feature extraction and fault classification. The implementation of transfer learning further enhanced the model's generalization ability, particularly when tested on previously unseen data. Additionally, an analysis of grayscale versus colored spectrograms revealed that colored spectrograms improved feature extraction, albeit at a higher computational cost.

The performance of the fault detection model was assessed using standard evaluation metrics, achieving an accuracy of approximately 91%, a precision of 88%, and a recall of 85%, resulting in an overall F1-score of 86.5%. The high accuracy rate indicates the model's reliability in detecting faults, while the slightly lower recall score suggests occasional missed fault detections, likely due to ambient noise interference in real-time environments. Despite this, the system proved to be highly effective, particularly on pre-recorded and pre-processed datasets where noise was adequately filtered.

When compared with existing literature, the proposed method outperformed traditional fault detection techniques that rely on vibration-based sensors, which typically achieve accuracy rates between 75% and 85%.
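The reported F1-score follows directly from the stated precision and recall, since F1 is their harmonic mean. The short check below uses only the figures given above; the function name is illustrative.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


f1 = f1_score(0.88, 0.85)
print(f"F1 = {f1:.3f}")  # prints "F1 = 0.865"
```

Because the harmonic mean is pulled toward the smaller of the two values, the F1-score sits slightly closer to the 85% recall than a simple average would.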
Previous studies have emphasized the limitations of hardware-dependent monitoring systems, particularly in terms of scalability and cost. In contrast, this research demonstrates that audio-based monitoring, particularly through CNN-driven spectrogram analysis, provides a contactless and cost-effective alternative without requiring expensive sensors or modifications to the 3D printer. Similar findings in industrial applications, such as the work by Di Maggio et al. (2022) on transfer learning for fault detection in industrial bearings, support the adaptability of this approach across different manufacturing environments.

One of the primary advantages of using an audio-based fault detection system is its scalability. Unlike sensor-based approaches, which require direct integration with the printer, microphones are inexpensive, widely available, and capable of capturing a rich set of acoustic features without interfering with the printing process. Furthermore, the real-time processing capability of spectrogram-based CNN models ensures that faults are detected as they occur, preventing print defects before they escalate into critical failures. This not only enhances the efficiency of the manufacturing process but also reduces material waste and the need for post-print inspections.

Despite its advantages, the research also identified certain challenges and limitations. One of the most significant issues encountered was the sensitivity of the model to ambient noise in real-time environments. While the model performed well in controlled conditions, the presence of background noise from HVAC systems, other machinery, and environmental disturbances led to a decrease in classification accuracy. Computational costs also posed a challenge, as processing colored spectrograms required more memory and processing power, which could be a limitation in real-time embedded applications.
Additionally, the model was trained on data from a specific 3D printer model, and further research is required to ensure its adaptability across different printer brands and configurations.

Overall, this study demonstrates that CNN-based audio monitoring presents a viable and efficient method for fault detection in 3D printing. With further refinements, such as the integration of additional sensor modalities, this approach could evolve into a comprehensive multi-modal fault detection system, capable of identifying, predicting, and preventing failures before they compromise print quality.

V. CONCLUSION

The research demonstrated that audio-based fault detection using convolutional neural networks and spectrogram analysis is an effective, scalable, and cost-efficient method for monitoring mechanical faults in 3D printing. The proposed approach successfully identified faults such as nozzle clogging, filament breakage, and pulley skipping with high accuracy, outperforming traditional sensor-based monitoring techniques. By leveraging real-time acoustic data, this method offers a contactless and non-intrusive alternative to conventional fault detection systems, reducing costs while maintaining reliability.

In practical applications, this system can be integrated into industrial 3D printing environments to enhance quality control and minimize production waste. Its real-time detection capabilities make it suitable for predictive maintenance, helping to prevent critical failures before they impact the final product. Given its flexibility, the approach can be adapted for different printer models and manufacturing setups, broadening its usability across various industries.
Future research should focus on improving the system's robustness against ambient noise in real-time settings, potentially through advanced noise filtering techniques such as active noise cancellation or the integration of multi-modal data sources, such as vibration or thermal imaging. Expanding the dataset to include diverse printer models and fault types will enhance generalizability, while optimizing computational efficiency will facilitate deployment on embedded systems. By refining these aspects, this method can evolve into a comprehensive, AI-driven fault detection framework for additive manufacturing.

ACKNOWLEDGMENT

We express our sincere gratitude to the SPADAL Lab for granting us the valuable opportunity to conduct our research work. Additionally, we extend our appreciation to Honeywell and ASTERIX for their generous funding support. Their contributions have played a crucial role in making our research endeavors possible, and we are truly thankful for their commitment to advancing knowledge and innovation.

REFERENCES

[1] S. Biswal et al., "SLEEPNET: Automated Sleep Staging System via Deep Learning," arXiv (Cornell University), Jan. 2017, doi: https://doi.org/10.48550/arxiv.1707.08262.
[2] Teja8484, "Which Spectrogram best represents features of an audio file for CNN based model?," Stack Overflow, Apr. 4, 2019. [Online]. Available: https://stackoverflow.com/questions/55513652/. [Accessed: Jan. 30, 2025].
[3] Raise3D, "Introduction to 3D Printing," Raise3D: Reliable, Industrial Grade 3D Printer, 2024. [Online]. Available: https://www.raise3d.com/blog/introduction-to-3d-printing/. [Accessed: Jan. 30, 2025].
[4] A. Hassan and S. Bernadin, "A Comprehensive Analysis of Speech Depression Recognition Systems," SoutheastCon 2024, Atlanta, GA, USA, 2024, pp. 1509-1518, doi: 10.1109/SoutheastCon52093.2024.10500078.
[5] M. Verana, C. I. Nwakanma, J. M. Lee, and D. S. Kim, "Deep Learning-Based 3D Printer Fault Detection," 2021 Twelfth International Conference on Ubiquitous and Future Networks (ICUFN), Jeju Island, Korea, Republic of, 2021, pp. 99-102, doi: 10.1109/ICUFN49451.2021.9528692.
[6] D. A. J. Brion and S. W. Pattinson, "Generalisable 3D printing error detection and correction via multi-head neural networks," Nat. Commun., vol. 13, no. 1, p. 4654, Aug. 2022, doi: 10.1038/s41467-022-31985-y.
[7] M. Olowe et al., "Spectral Features Analysis for Print Quality Prediction in Additive Manufacturing: An Acoustics-Based Approach," Sensors (Basel), vol. 24, no. 15, p. 4864, Jul. 2024, doi: 10.3390/s24154864.
[8] M. F. Waheed and S. Bernadin, "In-Situ Analysis of Vibration and Acoustic Data in Additive Manufacturing," SoutheastCon 2024, pp. 812-817, 2024. [Online]. Available: https://api.semanticscholar.org/CorpusID:269333345.
[9] O. Janssens et al., "Convolutional Neural Network Based Fault Detection for Rotating Machinery," J. Sound Vib., vol. 377, pp. 1-10, 2016, doi: 10.1016/j.jsv.2016.05.027.
[10] N. Patel and R. N. Rai, "Analyzing spectrogram parameters' impact on wear state classification for milling tools," Proc. 34th Eur. Saf. Reliab. Conf., 2024.
[11] L. G. Di Maggio, "Intelligent Fault Diagnosis of Industrial Bearings Using Transfer Learning and CNNs Pre-Trained for Audio Classification," Sensors (Basel), vol. 23, no. 1, p. 211, Dec. 2022, doi: 10.3390/s23010211.
[12] Y. Xie and T. Zhang, "Fault Diagnosis for Rotating Machinery Based on Convolutional Neural Network and Empirical Mode Decomposition," Shock and Vibration, vol. 2017, pp. 1-12, 2017, doi: https://doi.org/10.1155/2017/3084197.
[13] Arxiv.org, "What's color got to do with it? Face recognition in grayscale," arXiv preprint, 2016. [Online]. Available: https://arxiv.org/html/2309.05180v2. [Accessed: Jan. 30, 2025].
[14] A. Hassan and S. S. Khokhar, "Internet of Things-Enabled Vessel Monitoring System for Enhanced Maritime Safety and Tracking at Sea," SoutheastCon 2024, 2024, pp. 250-259. [Online]. Available: https://api.semanticscholar.org/CorpusID:269333304. [Accessed: Jan. 31, 2025].
[15] S. Kumar, T. Kolekar, S. Patil, A. Bongale, K. Kotecha, A. Zaguia, and C. Prakash, "A low-cost multi-sensor data acquisition system for fault detection in fused deposition modelling," Sensors, vol. 22, no. 2, p. 517, 2022. [Online]. Available: https://doi.org/10.3390/s22020517.
[16] S. Ferrisi, R. Guido, D. Lofaro, and others, "A CNN architecture for tool condition monitoring via contactless microphone: regression and classification approaches," Int. J. Adv. Manuf. Technol., vol. 136, pp. 601-618, 2025. DOI: 10.1007/s00170-024-14860-6.
