Toward Beginner-Friendly LLMs for Language Learning: Controlling Difficulty in Conversation

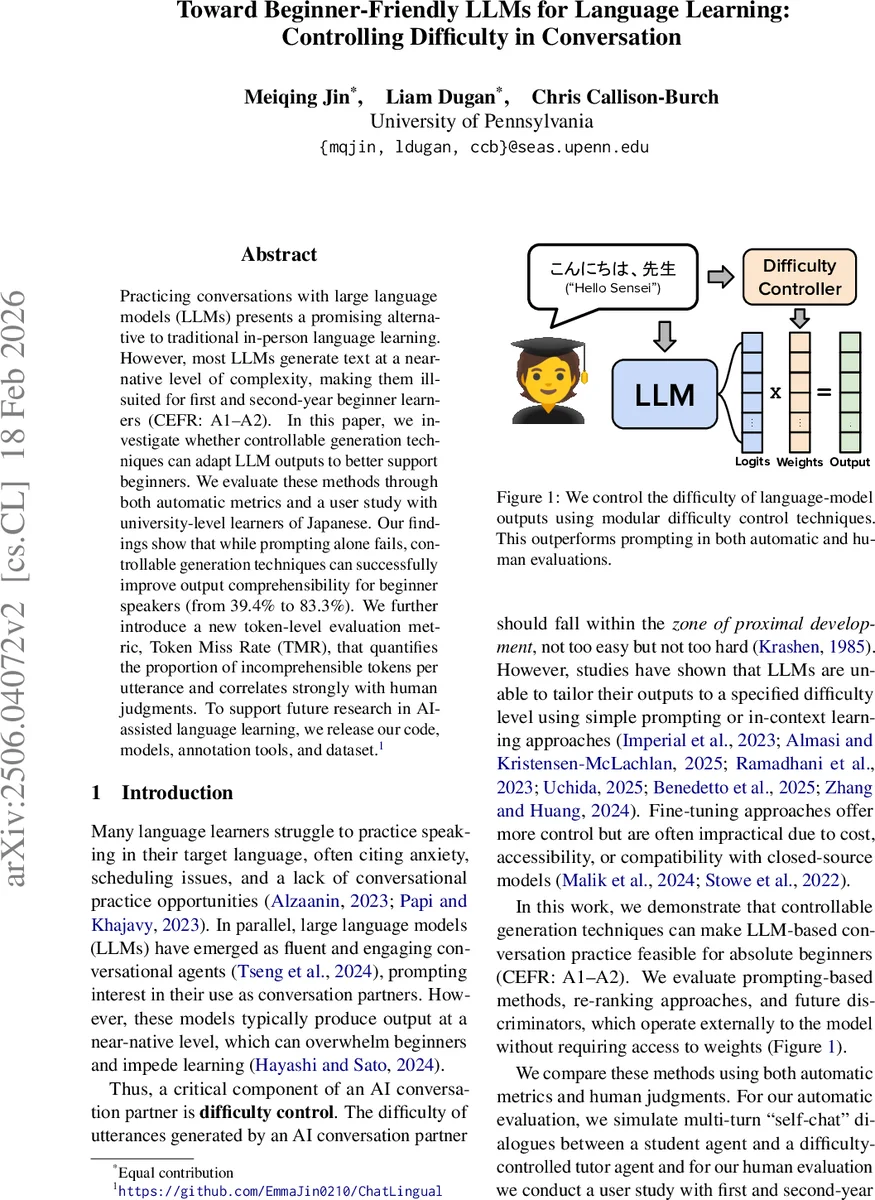

Practicing conversations with large language models (LLMs) presents a promising alternative to traditional in-person language learning. However, most LLMs generate text at a near-native level of complexity, making them ill-suited for first and second-year beginner learners (CEFR: A1-A2). In this paper, we investigate whether controllable generation techniques can adapt LLM outputs to better support beginners. We evaluate these methods through both automatic metrics and a user study with university-level learners of Japanese. Our findings show that while prompting alone fails, controllable generation techniques can successfully improve output comprehensibility for beginner speakers (from 39.4% to 83.3%). We further introduce a new token-level evaluation metric, Token Miss Rate (TMR), that quantifies the proportion of incomprehensible tokens per utterance and correlates strongly with human judgments. To support future research in AI-assisted language learning, we release our code, models, annotation tools, and dataset.

💡 Research Summary

This paper addresses a critical gap in the use of large language models (LLMs) for language learning: while LLMs can hold fluent, engaging conversations, their output is typically at a near‑native level of complexity, which overwhelms beginners (CEFR A1‑A2). The authors define a new task, “conversational difficulty control,” in which a tutor‑role LLM must generate responses that remain comprehensible to a learner throughout a multi‑turn dialogue, without sacrificing naturalness or fluency. They map Japanese Language Proficiency Test (JLPT) levels N5‑N1 to CEFR levels and construct vocabulary and grammar lists for each level, providing a concrete grounding for difficulty assessment.

Three families of controllable generation methods are evaluated:

- Prompt‑based control – a simple baseline prompt and a “detailed” prompt that includes explicit level descriptions, example dialogues, and a list of 100 expressions.

- Over‑generate + re‑ranking – the model produces five candidate continuations; each token is assigned a JLPT level using a pre‑trained classifier (TOHOKU‑BER‑T) and heuristic frequency filters. Candidates are ranked by estimated Token Miss Rate (TMR), the proportion of tokens above the target level, and the best candidate is returned.

- FUDGE (Future Discriminators for Generation) – a modular inference‑time technique that blends the base model’s logits with those of a lightweight difficulty classifier (ModernBERT fine‑tuned on the jpWac‑L corpus). A control strength parameter λ interpolates between the two distributions; higher λ yields stronger difficulty enforcement.

To evaluate difficulty, the authors introduce Token Miss Rate (TMR), a token‑level metric that counts the fraction of tokens whose JLPT level exceeds the learner’s target. They also compute ControlError, the squared distance between the classifier’s predicted level and the target level. Fluency is measured with perplexity (using Aya‑Expanse‑8B), average utterance length, trigram diversity (div@3), and a Japanese readability score (JReadability).

Automatic “self‑chat” experiments simulate 75 dialogues between a student LLM (configured at each JLPT level) and a tutor LLM (using each control method). Results (Table 3) show that FUDGE consistently achieves the lowest TMR (as low as 11.9 % with λ = 0.9) and ControlError (1.78), while maintaining competitive perplexity (≈ 74) and diversity. Over‑generation improves over prompt methods but still leaves TMR around 13‑15 %. Simple prompting fails dramatically, with TMR above 30 % and a pronounced “alignment drift” where the model gradually reverts to native‑level output over turns.

A human user study with 30 university Japanese learners (first‑ and second‑year) validates these findings. Participants converse with each controlled tutor for a fixed topic; they rate comprehension and naturalness on a 5‑point scale. FUDGE receives the highest comprehension score (4.2) and naturalness (4.0), whereas the detailed prompt scores around 2.1 for comprehension. The study confirms that FUDGE’s λ‑controlled decoding can sustain beginner‑appropriate language across multiple turns, while prompting alone cannot.

The paper’s contributions are fourfold: (1) formalizing conversational difficulty control for beginners; (2) proposing the token‑level TMR metric, which correlates strongly with human judgments; (3) demonstrating that modular inference‑time control (especially FUDGE) can achieve strong difficulty regulation without fine‑tuning the base LLM, making it applicable to closed‑source models; and (4) releasing code, models, annotation tools, and the newly created dataset to foster reproducibility.

Limitations include focus on a single language (Japanese), lack of longitudinal assessment of learning outcomes, and reliance on a manually set λ parameter. Future work should extend the framework to other languages, explore adaptive λ tuning, and investigate personalized difficulty control that accounts for individual learner progress and preferences.

In summary, the study provides compelling evidence that controllable generation techniques—particularly FUDGE—enable LLMs to act as effective, beginner‑friendly conversational partners. By offering a scalable, low‑cost alternative to full model fine‑tuning, this work paves the way for more inclusive AI‑assisted language learning platforms that can adapt to the needs of novice learners worldwide.

Comments & Academic Discussion

Loading comments...

Leave a Comment