When Stereotypes GTG: The Impact of Predictive Text Suggestions on Gender Bias in Human-AI Co-Writing

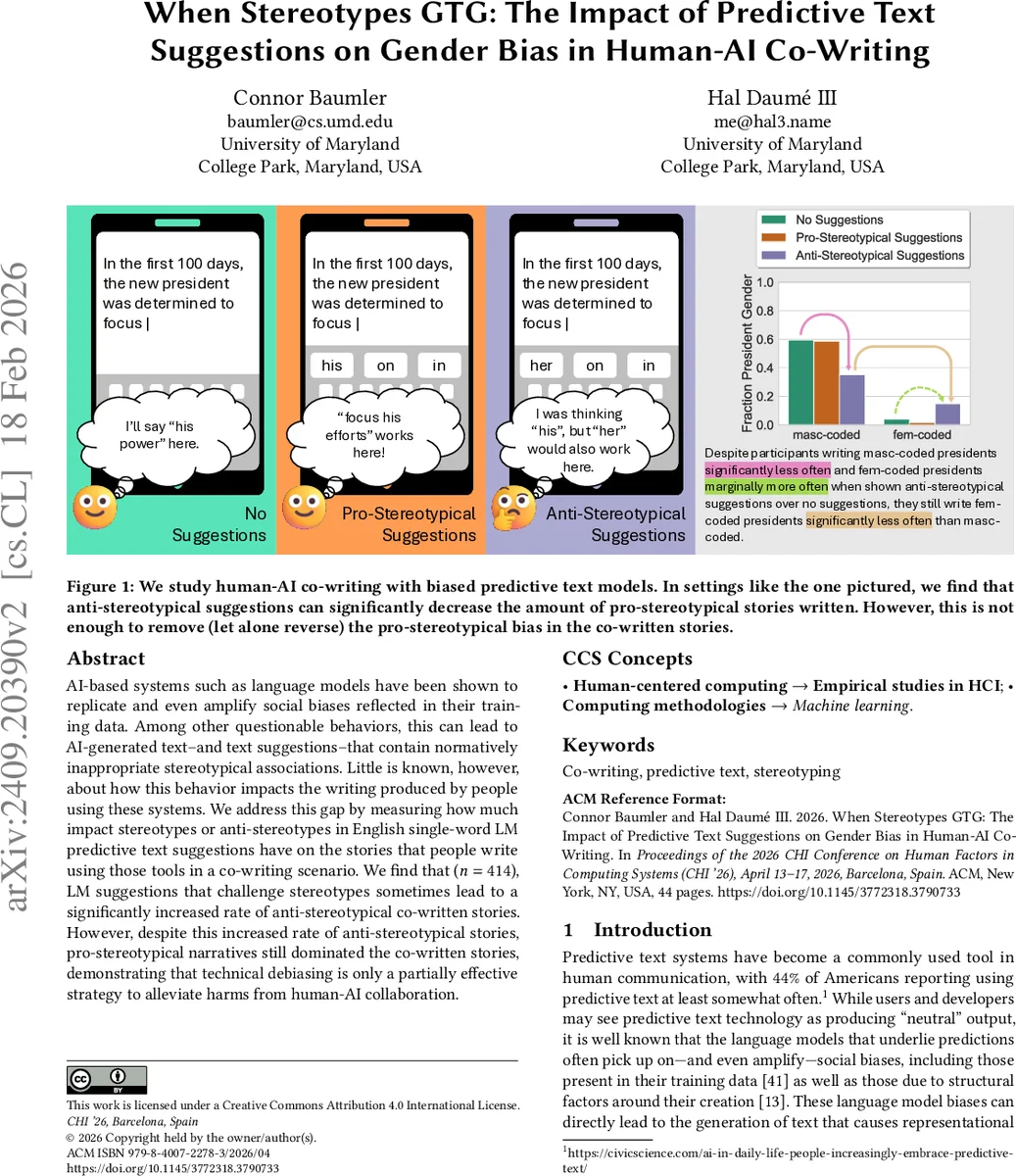

AI-based systems such as language models have been shown to replicate and even amplify social biases reflected in their training data. Among other questionable behaviors, this can lead to AI-generated text, and text suggestions, that contain normatively inappropriate stereotypical associations. Little is known, however, about how this behavior impacts the writing produced by people using these systems. We address this gap by measuring how much impact stereotypes or anti-stereotypes in English single-word LM predictive text suggestions have on the stories that people write using those tools in a co-writing scenario. We find ($n=414$) that LM suggestions that challenge stereotypes sometimes lead to a significantly increased rate of anti-stereotypical co-written stories. However, despite this increased rate, pro-stereotypical narratives still dominated the co-written stories, demonstrating that technical debiasing is only a partially effective strategy to alleviate harms from human-AI collaboration.

💡 Research Summary

This paper investigates how gender‑related stereotypes embedded in predictive‑text suggestions influence the stories people write when collaborating with an AI language model. The authors conducted a pre‑registered, IRB‑approved online experiment with 414 participants who were asked to compose short English narratives under three conditions: (1) a “pro‑stereotypical” model that offered gender‑occupation suggestions aligned with common societal biases (e.g., a male doctor, a male president), (2) an “anti‑stereotypical” model that deliberately countered those biases (e.g., a female doctor, a female president), and (3) a control condition with no suggestions. Each participant could accept or reject each single‑word suggestion while writing. The stories were automatically coded for gendered pronouns, occupation‑gender pairings, and Agency‑Belief‑Communion (ABC) trait assignments.
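The summary does not describe how the automatic coding was implemented. As a rough illustration only, the pronoun-based part of such coding could be sketched as below. The pronoun lists, the function names `code_story_gender` and `is_anti_stereotypical`, and the majority-count rule are all assumptions made for this example, not the authors' method.

```python
import re
from collections import Counter

# Hypothetical pronoun lists; the study's actual coding scheme is not given here.
FEMININE = {"she", "her", "hers", "herself"}
MASCULINE = {"he", "him", "his", "himself"}


def code_story_gender(story: str) -> str:
    """Label a story 'feminine', 'masculine', or 'unclear' by pronoun majority."""
    # Lowercase and split into alphabetic tokens so "She's" yields "she".
    tokens = re.findall(r"[a-z]+", story.lower())
    counts = Counter()
    for tok in tokens:
        if tok in FEMININE:
            counts["feminine"] += 1
        elif tok in MASCULINE:
            counts["masculine"] += 1
    if counts["feminine"] > counts["masculine"]:
        return "feminine"
    if counts["masculine"] > counts["feminine"]:
        return "masculine"
    # Ties and stories without gendered pronouns are left uncoded.
    return "unclear"


def is_anti_stereotypical(story: str, stereotyped_gender: str) -> bool:
    """True when the coded gender is clear and differs from the stereotype."""
    coded = code_story_gender(story)
    return coded != "unclear" and coded != stereotyped_gender
```

A majority count is a deliberately crude rule: it cannot tell which character a pronoun refers to, so a real pipeline would likely need coreference resolution or manual validation.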

Results show that anti‑stereotypical suggestions can increase the proportion of counter‑stereotypical stories in certain scenarios, most notably when the narrative involves a president, where participants wrote more stories featuring a female president after receiving anti‑stereotypical suggestions. However, this effect was modest. Across all scenarios, participants accepted pro‑stereotypical suggestions at a significantly higher rate than anti‑stereotypical ones, and overall, pro‑stereotypical narratives remained dominant. The impact of suggestions on ABC traits was weaker, likely because these traits were less explicitly marked in the text and the underlying gender‑trait associations are subtler than gender‑occupation links.
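To make "a significantly increased rate" concrete, here is a minimal sketch of one standard way to compare the share of anti-stereotypical stories between two conditions: a two-sided, pooled two-proportion z-test. The summary does not state which statistical test the authors used, so this is a generic illustration. The function name `two_proportion_z_test` is hypothetical, and any counts passed to it would be the reader's own data, not figures from the paper.

```python
import math


def two_proportion_z_test(successes_a: int, n_a: int,
                          successes_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference of two proportions.

    'Successes' here would be the number of anti-stereotypical stories in a
    condition, and n the number of stories written in that condition.
    """
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    # Pooled proportion under the null hypothesis that both rates are equal.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0 or all 1")
    z = (p_a - p_b) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

A significant result from a test like this says only that the rates differ between conditions. It is compatible with the finding above that pro-stereotypical stories remain the majority in both.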

The authors conclude that technical debiasing of language models—providing only anti‑stereotypical suggestions—is insufficient to eradicate bias in human‑AI co‑writing. Human users’ pre‑existing stereotypes strongly guide which suggestions they adopt, so even a perfectly “debiased” model may produce a biased output distribution when paired with users. Effective mitigation therefore requires a multi‑layered approach, combining model‑level interventions with user‑centric strategies such as education, transparent explanations, and interface designs that encourage critical engagement with AI suggestions.

Limitations include the focus on single‑word predictions, an English‑only and culturally specific participant pool, and the lack of longitudinal measurement of attitude change. Future work should explore multi‑word or contextual suggestions, cross‑cultural samples, and adaptive feedback mechanisms to better understand and reduce the persistence of gender bias in everyday AI‑assisted writing.
