Generalized Leverage Score for Scalable Assessment of Privacy Vulnerability

Can the privacy vulnerability of individual data points be assessed without retraining models or explicitly simulating attacks? We answer affirmatively by showing that exposure to membership inference attack (MIA) is fundamentally governed by a data …

Authors: Valentin Dorseuil, Jamal Atif, Olivier Cappé

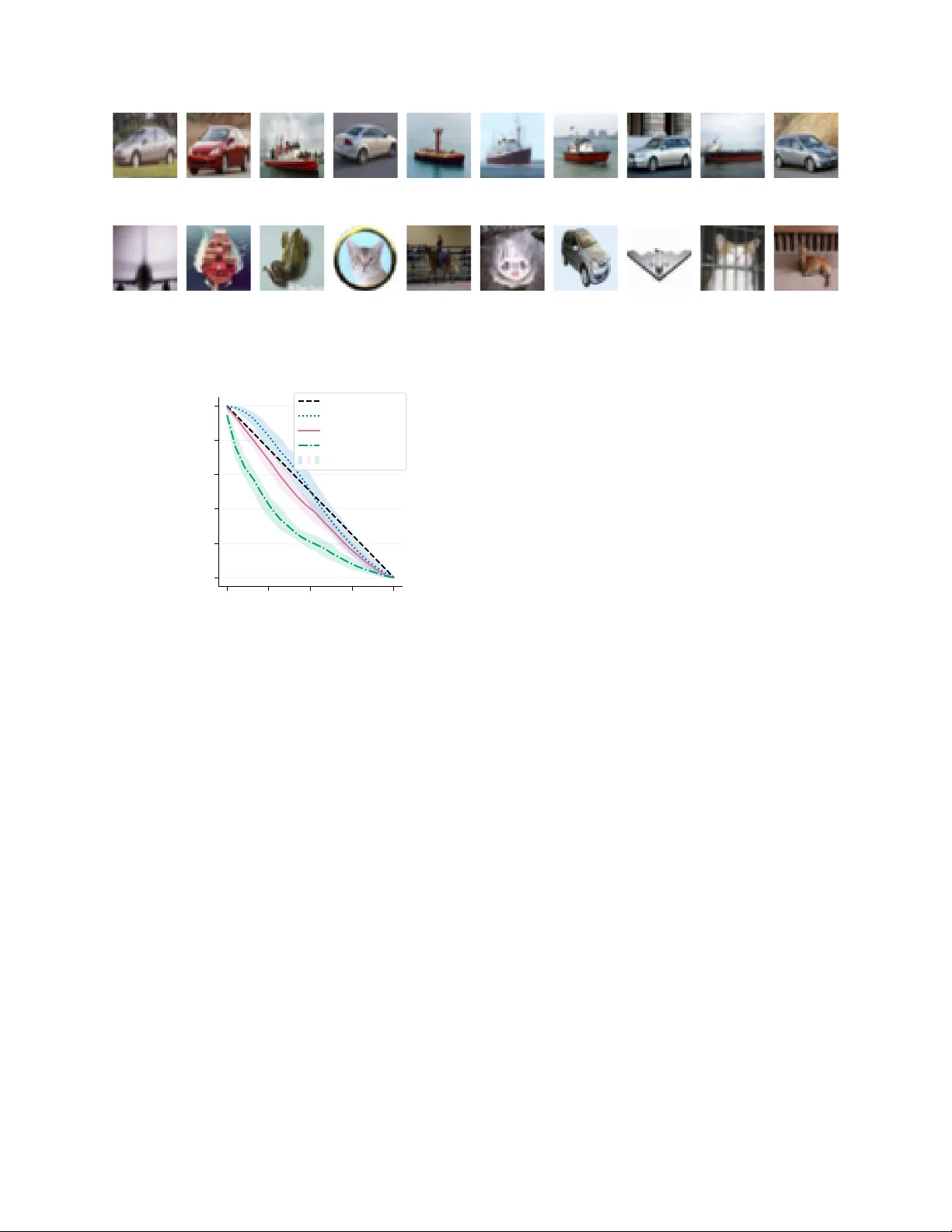

Generalized Lev erage Score for Scalable Assessmen t of Priv acy V ulnerabilit y V alen tin Dorseuil ∗ 1 , Jamal A tif 2 , and Olivier Cappé 1 1 DI ENS, École normale supérieure, Université PSL, CNRS, 75005 Paris, F rance 2 CMAP , École p olytec hnique, Institut P olytechnique de Paris, 91120 Palaiseau, F rance Abstract Can the privacy vulner ability of individual data p oints b e assesse d without r etr aining mo dels or explicitly sim- ulating attacks? W e answer affirmatively by showing that exp osure to membership inference attack (MIA) is fundamen tally gov erned by a data point’s influence on the learned mo del. W e formalize this in the linear setting b y establishing a theoretical corresp ondence b et w een individual MIA risk and the leverage score, iden tifying it as a principled metric for vulnerability . This characterization explains how data-dep enden t sensitivit y translates into exposure, without the com- putational burden of training shadow models. Build- ing on this, w e prop ose a computationally efficient generalization of the lev erage score for deep learning. Empirical ev aluations confirm a strong correlation b et w een the prop osed score and MIA success, v alidat- ing this metric as a practical surrogate for individual priv acy risk assessment. 1 In tro duction Mo dern machine learning mo dels, and deep neural net works in particular, are kno wn to memorize asp ects of their training data ( Zhang et al. , 2017 ; Carlini et al. , 2019 ). This memorization induces priv acy vulnerabil- ities that can b e exploited by Memb ership Infer enc e Attacks (MIAs), whic h aim to determine whether a sp ecific data p oin t was included in the training set ( Shokri et al. , 2017 ; Carlini et al. , 2022 ). A principled defense against mem b ership inference is provided by Differen tial Priv acy (DP) ( Dwork , 2006 ), implemented in deep learning through noise-injected stochastic gra- dien t methods ( Abadi et al. , 2016 ). Ho wev er, con- trolling the trade-off betw een priv acy protection and mo del utility remains c hallenging. Noise calibration ∗ Corresponding author: valentin.dorseuil@ens.psl.edu Preprint. Under review. t ypically relies on w orst-case theoretical accoun ting, paired with empirical priv acy auditing via MIAs, and often leads to either ov er-conserv ativ e noise levels or insufficien t priv acy protection. In this context, design- ing MIAs for empirical auditing is essen tial to quan tify leak age in non-priv ate mo dels ( Y eom et al. , 2018 ) or to v alidate the practical tigh tness of DP guarantees in priv ate models ( Nasr et al. , 2021 ; Jagielski et al. , 2020 ). While such auditing is now standard practice ( Car- lini et al. , 2022 ; Nasr et al. , 2021 ; Zarifzadeh et al. , 2024 ), relying on aggregate metrics like av erage ac- curacy or AUC is insufficient. Such global measures can obscure critical risk heterogeneity , as outliers and rare subgroups are significantly more prone to mem- orization than typical samples ( Carlini et al. , 2022 ; F eldman and Zhang , 2020 ). Consequen tly , a mo del certified as priv ate on av erage may still exp ose sp ecific p oin ts to high priv acy risks. T o address this heterogeneity , recen t work is in- creasingly focusing on individual priv acy risk assess- men t, aiming to quantify membership leak age at the lev el of eac h data p oin t, separately , rather than in aggregate. State-of-the-art metho ds for p er-sample auditing ( Carlini et al. , 2022 ; Zarifzadeh et al. , 2024 ) predominan tly rely on shadow mo dels . These tec h- niques train multiple reference mo dels on random data splits to characterize eac h data p oin t’s influence on the mo del’s b eha vior. This enables iden tification of which p oin ts exhibit increased sensitivity to their presence in the training dataset. How ev er, these techniques are computationally prohibitiv e, esp ecially for large-scale mo dels, as they require retraining the mo del m ultiple times. This leads to our cen tral question stated in the abstract: c an the privacy vulner ability of individual data p oints b e assesse d without r etr aining mo dels or explicitly simulating attacks? In classical statistics, the lever age sc or e quantifies a data p oin t’s geometric influence on a mo del, indep en- den t of its label. W e establish that in Gaussian linear 1 mo dels, this score precisely c haracterizes membership inference vulnerability . W e prov e that under an op- timal blac k-b o x attac k, the priv acy loss distribution is con trolled by a single scalar, the lev erage score. In other words, mem b ership inference vulnerability is fundamen tally ab out self-influenc e : samples that are geometrically p ositioned to hav e a disprop ortionate effect on the model’s learned parameters are the most at risk of priv acy leak age. Consequen tly , while spe- cific outcomes fluctuate due to noise, the individual a verage priv acy risk in the linear regime is determined b y data geometry . T o extend this analysis to deep neural net works, w e introduce the Gener alize d L ever age Sc or e ( GLS ) . Deriv ed via implicit differen tiation of the training opti- malit y conditions, the GLS measures the infinitesimal sensitivit y of a mo del’s prediction to its own lab el, generalizing the leverage score to both regression and classification settings. While the exact computation is expensive for deep netw orks, we demonstrate that a last-lay er appro ximation remains highly effective in practice. This allo ws us to compute a scalable, theoretically principled, pro xy for priv acy risk that correlates with the success of state-of-the-art attac ks, without the need for retraining or shadow models. Con tributions. (i) W e prov e that for Gaussian lin- ear mo dels under blac k-b o x access, the leverage score is the sufficient statistic characterizing b oth the pri- v acy loss distribution and the optimal membership inference test. (ii) W e extend this lev erage score to general differentiable mo dels via the Gener alize d L ever age Sc or e ( GLS ), deriving a principled and scal- able estimator of priv acy vulnerability . (iii) Through m ultiple exp erimen ts, w e sho w that this metric serv es as a surrogate for individual p r iv acy risk. It identifies vulnerable samples, showing a strong correlation with state-of-the-art shado w model attacks, at a reduced computational cost. 2 Related W ork Mem b ership Inference Attac ks (MIA). Mem- b ership inference aims to determine if a sp ecific sample w as used to train a mo del. Early approaches relied on simple metric-based classifiers, exploiting ov erfitting signals suc h as prediction confidence, en tropy , or the magnitude of gradien ts ( Shokri et al. , 2017 ; Y eom et al. , 2018 ). While computationally inexpensive, these methods often struggle to distinguish b et ween a “vulnerable" mem b er and a “hard" non-member. T o address this, current state-of-the-art methods adopt the shadow mo del paradigm Shokri et al. ( 2017 ). By training multiple mo dels on different data splits, attac ks like LiRA (Lik eliho od Ratio Attac k) ( Carlini et al. , 2022 ) essentially perform a hypothesis test on the loss distribution of a target point ( Zarifzadeh et al. , 2024 ). While highly effectiv e, this approac h is computationally prohibitive, often requiring hundreds of training runs to estimate the risk for a single p oin t accurately . Our work seeks to achiev e the precision of these h yp othesis-test based metho ds without their computational burden, by substituting retraining with geometric analysis. Influence F unctions and Data A ttribution. Originating in robust statistics with influence mea- sures suc h as Co ok’s distance ( Co ok , 1977 ), influence functions quan tify the effect of a training p oin t on mo del parameters or predictions. In mo dern deep learning, K oh and Liang ( 2017 ) rein tro duced influ- ence functions via Hessian-vector pro ducts to explain mo del’s b eha vior and iden tify mislab eled data. Recen t work has b egun to explore the connection b et w een influence and priv acy . Notably , F eldman and Zhang ( 2020 ) and F eldman ( 2020 ) link the memoriza- tion of a sample to its influence on the learning pro cess, arguing that memorization is necessary for general- ization in long-tailed distributions. How ever, classical influence functions typically measure the effect of a training p oin t on a sep ar ate test p oin t. In contrast, our GLS form ulation focuses on self-influenc e (the sensitivit y of a prediction to its o wn label), identi- fying this sp ecific form of leverage as the canonical driv er of priv acy leak age. Priv acy Auditing and Heterogeneit y . Differen- tial Priv acy (DP) ( Dwork , 2006 ) provides worst-case guaran tees for membership priv acy . Using algorithms suc h as DP-SGD ( Abadi et al. , 2016 ), one can train Deep Learning models to b e priv ate. Ho wev er, these b ounds are often lo ose and do not capture the empir- ical realit y that priv acy risk is non-uniform: some outlier samples are far more exposed than others ( Zarifzadeh et al. , 2024 ). Auditing individual priv acy risk (estimating the sp ecific ( ε, δ ) for a giv en sample) remains an op en c hallenge. Existing auditing to ols rely hea vily on the aforemen tioned shadow mo del tec hniques or ran- domized smoothing ( Lecuyer et al. , 2019 ), which are difficult to scale. Our w ork contributes to this domain b y prop osing an individual priv acy risk metric for iden tifying vulnerable samples efficiently . W e b eliev e that this approach can complement existing audit- ing metho ds, providing a first-pass filter to identify high-risk points without retraining. 2 3 Lev erage Scores and Mem b er- ship V ulnerabilit y W e b egin by analyzing how data heterogeneity impacts the effectiv eness of Membership Inference Attac ks (MIAs) in the fixed-design Gaussian linear regression framew ork. This setting is selected for its analytical tractabilit y and its ability to represent data v ariance through the fixed design matrix. In contrast to ap- proac hes that assume an i.i.d. data distribution, our fixed-design analysis captures the non-uniform risk exp osure inherent to heterogeneous datasets. W e provide a characterization of the optimal mem- b ership inference test and its trade-off curve in this setting, where the a verage is taken ov er all possible training noise realizations for a fixed dataset, rather than across data p oin ts. By quantifying vulnerabilit y using lever age sc or es , w e establish a rigorous link b e- t ween a point’s structural influence and its individual priv acy risk exp osure. 3.1 Problem Setting and Definitions Consider a fixed design matrix X ∈ R n × d , where eac h row x ⊤ i represen ts a data p oin t. W e examine the m ultiv ariate linear mo del: Y = X Θ ∗ + E , (1) where Y ∈ R n × m is the resp onse matrix, Θ ∗ ∈ R d × m is the true parameter matrix, and E ∈ R n × m repre- sen ts noise. W e assume the noise is centered, indep en- den t and iden tically distributed (i.i.d.) Gaussian, suc h that for any row i , E [ E i · ] = 0 and Co v ( E i · ) = σ 2 I m . W e assume n ≥ d and that X has full column rank. The Ordinary Least Squares (OLS) estimator is ˆ Θ = ( X ⊤ X ) − 1 X ⊤ Y . The fitted v alues are ˆ Y = H Y , where H = X ( X ⊤ X ) − 1 X ⊤ is the hat matrix. F or a sp ecific data p oin t i , the residual vector r i ∈ R m is defined as: r i = y i − ˆ Θ ⊤ x i = n X j =1 ( δ ij − h ij ) y j , (2) where δ ij is the Kroneck er delta. The diagonal ele- men ts of the hat matrix, h ii = x ⊤ i ( X ⊤ X ) − 1 x i , are the lever age sc or es . They satisfy 0 ≤ h ii ≤ 1 and P n i =1 h ii = d , serving as a measure of the geometric influence of x i on the mo del’s predictions ( Belsley et al. , 1980 ). 3.2 Distinguishing Mem b ers from Non-Mem b ers T o formalize the Membership Inference Attac k, we define tw o hypotheses for a data p oin t ( x i , y i ) . Un- der the memb er hypothesis ( H 1 = H train ), the pair w as included in the training set used to compute ˆ Θ . Under the non-memb er h yp othesis ( H 0 = H test ), the mo del was trained on the dataset, but w e ev aluate the residual on an independent observ ation ˜ y i generated from the same distribution: ˜ y i = Θ ∗⊤ x i + ε ′ i , where ε ′ i is independent noise. Prop osition 3.1 (Residual Distributions) . Under the Gaussian noise assumption, the r esiduals for the i -th data p oint under the memb er and non-memb er hyp otheses fol low distinct multivariate normal distri- butions: r i | H tr ain ∼ N 0 , σ 2 (1 − h ii ) I m , (3) r i | H test ∼ N 0 , σ 2 (1 + h ii ) I m . (4) Conse quently, the squar e d norms of the r esiduals fol low sc ale d Chi-squar e d distributions: ∥ r i ∥ 2 | H tr ain ∼ σ 2 (1 − h ii ) χ 2 ( m ) , (5) ∥ r i ∥ 2 | H test ∼ σ 2 (1 + h ii ) χ 2 ( m ) . (6) The proof is pro vided in App endix A.2 . This result highligh ts that members hav e low er residual v ariance than non-mem b ers, with the gap con trolled explicitly b y the leverage score h ii . In particular, the distri- butions differ in scale and not in location, leading to fundamentally non-symmetric error trade-offs in mem b ership inference. This asymmetry leads to easier detection of non-mem b ers (low false negativ e rate β ) than members (low false p ositiv e rate α ), whic h is consisten t with the empirical b eha vior of state-of-the art MIAs ( Carlini et al. , 2022 ). 3.3 A Membership Inference Attac k P ersp ectiv e Using Proposition 3.1 , we deriv e the theoretically optimal mem b ership inference attack. The Neyman- P earson Lemma ( Neyman and Pearson , 1933 ) states that the most p o w erful test for distinguishing these h yp otheses is the Likelihoo d Ratio T est. Due to the heterogeneit y of the data (v arying h ii ), a global threshold on the raw loss ∥ r i ∥ 2 is sub optimal. Instead, the optimal decision rule requires a sample- sp ecific normalization. Prop osition 3.2 (Optimal MIA T est) . L et S i ( r i ) b e the lo g-likeliho o d r atio statistic for the i -th sam- ple. The most p owerful test at level α r eje cts the non-memb er hyp othesis H test (pr e dicts memb ership) if S i ( r i ) > γ α , wher e the sufficient statistic is given by: S i ( r i ) = m 2 ln 1 + h ii 1 − h ii − h ii σ 2 (1 − h 2 ii ) ∥ r i ∥ 2 . (7) 3 The pro of of this result is provided in App endix A.3 . T o obtain the optimal ( α, β ) trade-off curv e, samples m ust b e ranked in descending order of their score S i ( r i ) . This score represents a data-dep enden t affine transformation of the loss ∥ r i ∥ 2 . This relationship aligns with the design of parametric MIAs that fit Gaussian distributions to scores. It suggests that such metho ds implicitly learn this affine transformation to distinguish mem b ers from non-members ( Carlini et al. , 2022 ; Zarifzadeh et al. , 2024 ). W e follow the approach of f -Differen tial Priv acy defined in Dong et al. ( 2022 ) to characterize the mem- b ership inference trade-off curve as a functional rela- tionship betw een error types. Prop osition 3.3 (MIA Errors Curve) . F or the p oint i , the tr ade-off b etwe en the false alarm r ate α (T yp e-I err or) and the misse d dete ction r ate β (T yp e-II err or) is describ e d by the curve: β i ( α i ) = 1 − F m 1 + h ii 1 − h ii F − 1 m ( α i ) . (8) wher e F m is the Cumulative Distribution F unction of the χ 2 ( m ) distribution. This result highlights that h ii is the unique parame- ter controlling the distinguishability of training p oin ts from test p oin ts in the Blac k-Box setting, i.e., when the attack er only has access to the loss v alues. The pro of is provided in App endix A.4 with an illustration for m ultiple h ii v alues in Figure 5 . 3.4 An Influence P oin t of View The vulnerability of high-leverage p oin ts established in Prop osition 3.2 can b e in tuitively understo o d through the lens of self-influenc e . While the lev erage score h ii w as defined geometri- cally via the pro jection matrix, it admits an op era- tional in terpretation as the self-influenc e of the i -th observ ation. With the OLS estimator, ˆ Θ w e hav e: ˆ y i = n X j =1 h ij y j = n X j =1 x ⊤ i ( X ⊤ X ) − 1 x j y j . (9) The fitted v alue for p oin t i is a linear com bination of all observed lab els y j . Thus leverage score h ii corresp onds to the sensitivity of the i -th fitted v alue ˆ y i with resp ect to the observed target y i . This yields the iden tity: ∂ ˆ y i ∂ y i = h ii I m . (10) It quantifies how muc h the mo del’s prediction for a sp ecific p oin t changes when the lab el of that p oin t is p erturbed. The scalar lev erage score can then b e reco vered via the trace: h ii = 1 m T r ∂ ˆ y i ∂ y i . This iden tity highlights the link betw een the lever- age score and the mo del’s sensitivit y to its own train- ing labels. F or high-leverage p oin ts ( h ii ≈ 1 ), the mo del is forced to interp olate the observ ation y i to minimize the global squared error. The sp ecific noise instance ε i presen t in a member’s lab el is directly enco ded in to the prediction ˆ y i . Consequen tly the training residual r i v anishes, making membership eas- ily detectable. While the geometric definition of h ii is sp ecifically relev an t to OLS, the sensitivit y formulation in Equa- tion ( 10 ) is general, allowing us to extend leverage scores to non-linear deep neural netw orks. 4 Generalized Lev erage Scores ( GLS ) W e formalize the Gener alize d L ever age Sc or e ( GLS ) to extend the classical sensitivit y in terpretation to arbitrary differen tiable mo dels. 4.1 F ormal F ramework and Definition W e consider a dataset D = { ( x i , y i ) } n i =1 and a mo del f θ : X → Y parameterized b y θ ∈ Θ ⊂ R p . The output space Y is m -dimensional (for example m = 1 for scalar regression and m = C for C -class classi- fication). T raining is p erformed by minimizing the empirical risk: L ( θ ) = 1 n n X i =1 ℓ ( f θ ( x i ) , y i ) , (11) where ℓ is a twice-differen tiable loss function. Let ˆ θ de- note the optimal parameters obtained at con vergence, i.e., ˆ θ ∈ arg min θ ∈ Θ L ( θ ) . Definition 4.1 (Generalized Leverage Score) . The Generalized Lev erage Score ( GLS ) of the i -th sample is defined as the infinitesimal sensitivity of the mo del’s prediction at x i to its o wn observed lab el y i : GLS i = ∂ f ˆ θ ( x i ) ∂ y i ∈ R m × m . (12) This metric captures a self-influenc e effect; it quan- tifies how muc h the mo del would alter its prediction for a sp ecific sample if that sample’s lab el w as p erturbed, accoun ting for the implicit c hange in the learned pa- rameters ˆ θ . 4 4.2 Analytic Deriv ation via Implicit Differen tiation Computing GLS i directly is c hallenging as ˆ θ dep ends on y i implicitly through the optimization pro cess. T o isolate this dep endence, we follow the approach of Koh and Liang ( 2017 ) and derive a closed-form expression for GLS i . Prop osition 4.2 (Closed-form GLS ) . Assuming the aver age d loss function L is twic e differ entiable and its Hessian H ˆ θ is invertible, the GLS for a univariate output is given by: GLS i = − 1 n ∇ θ f ˆ θ ( x i ) 2 H − 1 ˆ θ ∂ 2 ℓ ∂ y ∂ f , (13) wher e ∥ v ∥ 2 A = v ⊤ A v denotes the squar e d Matrix norm. F or multivariate outputs, this gener alizes to: GLS i = − 1 n J θ f ˆ θ ( x i ) H − 1 ˆ θ J θ f ˆ θ ( x i ) ⊤ ∂ 2 ℓ ∂ y ∂ f , (14) wher e J θ f ˆ θ ( x i ) ∈ R m × p is the Jac obian of the mo del outputs with r esp e ct to the p ar ameters, H ˆ θ is the Hes- sian of the loss function L , i.e. H ˆ θ = ∇ 2 θ L ( ˆ θ ) , and ℓ denotes the p ointwise loss evaluate d at ( f ˆ θ ( x i ) , y i ) . This result follows from applying implicit differen ti- ation to the first-order optimality condition ∇ θ L ( ˆ θ ) = 0 , noting that ˆ θ dep ends implicitly on y i and L de- p ends explicitly on y i through ℓ ( f ˆ θ ( x i ) , y i ) . The full deriv ation is provided in App endix B.2 . The scaling factor ∂ 2 ℓ ∂ y ∂ f acts as a loss-sp ecific weigh t- ing term in the GLS expression. W e derive this term for common loss functions in b oth regression and clas- sification settings. 4.3 GLS for Regression Mo dels In the multiv ariate regression setting, we typically consider the quadratic loss. F or a single data p oin t ( x , y ) the loss is defined as: ℓ ( f ( x ) , y ) = ∥ f ( x ) − y ∥ 2 2 . Prop osition 4.3 (Multiv ariate Regression) . F or the quadr atic loss with tar gets y ∈ R m and pr e dictions f ∈ R m , the se c ond derivative is: ∂ 2 ℓ ∂ y ∂ f = − 2 I m . (15) This giv es us: GLS i = 2 n J θ f ˆ θ ( x i ) H − 1 ˆ θ J θ f ˆ θ ( x i ) ⊤ . (16) 4.4 GLS for Classification Mo dels F or classification, w e consider the cross-en tropy loss ℓ ( ˆ p , y ) = − P m j =1 y j log ( ˆ p j ) , where y ∈ { 0 , 1 } m is the one-hot label vector and ˆ p = s ( f ˆ θ ( x )) ∈ [0 , 1] m is the v ector of predicted probabilities obtained via the softmax function s . The GLS dep ends on the loss through the second deriv ativ e ∂ 2 ℓ ∂ y ∂ f , whic h for cross-entrop y reduces to a constan t matrix: Prop osition 4.4 (Cross-En tropy Deriv atives) . F or the cr oss-entr opy loss with one-hot lab els y and lo gits f , the se c ond derivative is: ∂ 2 ℓ ∂ y ∂ f = − I m . (17) While the GLS is formally deriv ed in terms of logit sensitivit y , interpreting these scores in the probabil- it y space is often more practical. F or classification, w e th us consider the GLS in the probability space. Applying the c hain rule, we hav e: GLS i = ∂ ˆ p i ∂ y i = ∂ s ∂ f × ∂ f ˆ θ ( x i ) ∂ y i = 1 n diag( ˆ p i ) − ˆ p i ˆ p ⊤ i | {z } Softmax Jacobian J θ f ˆ θ ( x i ) H − 1 ˆ θ J θ f ˆ θ ( x i ) ⊤ . This form ulation yields a leverage score properly nor- malized within the probability simplex. W e use this score as our primary priv acy metric in the following ex- p erimen ts. Detailed deriv ations are in App endix B.3 . 4.5 In terpretation in Binary Classifica- tion T o further interpret the GLS in classification, w e con- sider the classical binary logistic regression mo del. The mo del predicts ˆ p i = σ ( ˆ y i ) , where ˆ y i = x ⊤ i θ and σ : z 7→ 1 / (1 + e − z ) is the sigmoid func- tion. The standard cross entrop y loss is ℓ ( ˆ y i , y i ) = − y i log( ˆ p i ) − (1 − y i ) log(1 − ˆ p i ) . Prop osition 4.5 (Binary Logistic Leverage Score) . Under the cr oss entr opy loss, for a binary lo gistic r e gr ession mo del, the GLS in the pr ob ability sp ac e r e duc es to: ∂ ˆ p i ∂ y i = σ ′ ( ˆ y i ) × GLS i = w i x ⊤ i X ⊤ W X − 1 x i , (18) with w i = ˆ p i (1 − ˆ p i ) = σ ′ ( ˆ y i ) and W = diag( w 1 , . . . , w n ) . 5 The pro of is provided in App endix B.4 . W e recov er here the leverage score from logistic regression defined in Pregibon ( 1981 ). This score can b e in terpreted as a weigh ted v ersion of the input’s norm in the Hessian- induced metric: ∂ ˆ p i ∂ y i = σ ′ ( ˆ y i ) × ∥ x i ∥ 2 H − 1 ˆ θ , (19) with H ˆ θ = X ⊤ W X the Hessian of the loss at con- v ergence. The term w i = ˆ p i (1 − ˆ p i ) reac hes its maxim um at ˆ p i = 0 . 5 , which corresp onds to sample lo cated on the decision b oundary . This aligns with the LiRA in tuition that samples near the decision rule are most sensitiv e to p erturbations in the training set, and th us most vulnerable to membership inference ( Carlini et al. , 2022 ). 5 Scalable Computation & Ap- pro ximations Computing the GLS directly from its closed-form ex- pression can b e computationally prohibitive for deep net works due to the need to inv ert the p × p Hessian matrix, where p is the num b er of mo del parameters. T o address this challenge, we prop ose an efficient algo- rithm that lev erages Hessian-vector pro ducts (HVPs) and iterative solv ers to compute the GLS without explicitly forming or in verting the Hessian. 5.1 Efficien t GLS Computation The key observ ation is that the GLS expression in- v olves the term H − 1 ˆ θ J θ f ˆ θ ( x i ) ⊤ . Instead of computing the in verse Hessian directly , we can reformulate this as solving a linear system: H ˆ θ Z i = J θ f ˆ θ ( x i ) ⊤ , (20) for eac h sample i , where Z i is the unkno wn matrix w e wish to compute. Once Z i is obtained, the GLS can be computed as: GLS i = − 1 n ∂ 2 ℓ ∂ y ∂ f J θ f ˆ θ ( x i ) Z i . (21) T o solv e the linear system efficiently , we employ the conjugate gradien t (CG) metho d, which only requires the ability to compute Hessian-vector pro ducts. These pro ducts can b e c omputed efficiently using automatic differen tiation techniques without explicitly forming the Hessian matrix ( P earlmutter , 1994 ). The ov erall algorithm for computing the GLS for a set of target samples X = { x i } k i =1 is summarized Algorithm 1 In verse-Hessian Jacobian Pro duct via Conjugate Gradien t Solver Input: D train , Mo del f ˆ θ , Jacobians J ⊤ , Damping λ , Iterations T Output: Z ∈ R p × m Define the linear op erator : A [ J ⊤ ] = ( H ˆ θ + λ I ) J ⊤ Z ← CG Algorithm ( op erator = A , target = J ⊤ , iters = T ) return Z Algorithm 2 Generalized Lev erage Score Computa- tion Input: T arget samples X , D train , f ˆ θ , Damping λ , Iterations T Output: { GLS i } k i =1 for i = 1 to k do J i ← {∇ θ f ˆ θ ( x i ) } k i =1 M i ← − 1 n ∂ 2 ℓ ∂ y ∂ f ( f ( x i ) , y i ) Z i ← Algorithm 1 ( D train , f ˆ θ , J ⊤ i , λ, T ) GLS i ← J i Z i M i end for return { GLS i } k i =1 in Algorithm 2 . The key steps in volv e computing the Jacobians for the target samples, solving the linear sys- tems using CG (Algorithm 1 ), and finally assem bling the GLS v alues. Using torch.vmap ( P aszke et al. , 2019 ) and tensor parallelization, we implemented a v ersion of this algorithm that w as able to process m ultiple target samples at the same time. Computational Complexit y . The prop osed framew ork av oids the O ( p 3 ) time and O ( p 2 ) memory requiremen ts of direct Hessian in version b y using a conjugate gradient (CG) solv er. The primary computational cost is dominated b y solving the linear system A [ J ⊤ ] , whic h computes Hessian-Matrix Pro ducts (HMPs) via a pass ov er the training set D train . F or a mo del with p parameters and m output logits, the total time complexit y is O ( k T npm ) , where k is the num ber of target points, n is the training set size, and T is the n umber of CG iterations. The Hessian Jacobian pro duct can be computed using mini- batches for the Hessian part, allowing for efficient estimation by summing the contributions from each batch without requiring the full dataset in one pass. The CG algorithm iteratively refines the solution to the linear system using only matrix-vector pro ducts, making it suitable for large-scale problems where the matrix is not explic- itly formed. The details of the CG algorithm can b e found in Hestenes and Stiefel ( 1952 ). 6 T able 1: Computational Complexit y of GLS Subpro- cesses. Subpro cess Time Memory Jacobian O ( pm ) O ( pm ) Hessian Matrix Product O ( npm ) O ( pm ) CG Solv er ( T iter) O ( T npm ) O ( pm ) T otal for k p oin ts O ( kT npm ) O ( pm ) 5.2 Appro ximation via Lay er Restric- tion Exact computation of GLS o ver the full training set ( k = n ) is in tractable for deep netw orks as our algo- rithm yields an O ( n 2 ) time complexit y . Thus, our prop osed algorithm is computationally feasible for a mo derate num b er of target p oin ts k since the time complexit y scales linearly with k . 10 3 10 4 Compute T ime (s) Last Layer Last 2 Layers Last 4 Layers Full Network Differentiation Depth 0.45 0.50 0.55 LiRA Corr (Spearman ρ ) Figure 1: Sp earman’s correlation (green line) b et ween LiRA scores and GLS (trace), and computational time (blue area) for differen t netw ork depths. Error bars sho w 95% confidence interv als across 16 mo dels. Computational times are measured on a single A100 GPU. T o mitigate this, w e explored computing our GLS b y differen tiating only through a subset of lay ers, and found that restricting the computation to the last few lay ers yields nearly the same correlation with our comparison metrics as the full mo del. F ormally , this corresp onds to computing ∂ ˆ y ∂ y on a restricted mo del θ sub where the feature extractor is frozen. This is equiv alen t to masking the full Jacobian and Hessian to the subspace θ sub . The results can b e observed in Figure 1 , where the correlation betw een the LiRA Score and the GLS is The LiRA score is computed as the likelihoo d ratio of the observ ation under tw o Gaussian distributions mo deling the member and non-member hypotheses. The parameters of these plotted against computation time for v arious differen- tiation depths. The resulting complexit y is reduced to O ( k T nmp sub ) , where p sub is the num b er of pa- rameters in θ sub . In particular, when the last la y er is linear, the GLS admits a closed-form solution. W e recov er the classical statistical lev erage scores in the feature space, allo wing for easier computation. With d b eing the feature dimension, the closed-form lead to an improv ed complexit y of O ( nd 2 + d 3 ) for the regression case with quadratic loss (even with m > 1 ) and O ( nm 2 d 2 + m 3 d 3 ) for the classification case with the cross entrop y loss. More details can b e found in App endix C . 6 Exp erimen ts In this section, we empirically ev aluate the effectiv e- ness of the Generalized Leverage Score ( GLS ) as a mem b ership inference metric. W e assess its correla- tion with the LiRA score, an established benchmark for membership inference attacks, and analyze its p er- formance in identifying outlier samples within the training data. 6.1 Setup Dataset and Mo del. W e conduct exp erimen ts on the CIF AR-10 dataset ( Krizhevsky and Hin ton , 2009 ), using a ResNet-18 arc hitecture ( He et al. , 2016 ) trained to achiev e approximately 92% test accuracy . The training set consists of 50,000 images, while the test set con tains 10,000 images. In order to compute the LiRA scores, w e follo w the proto col established by Carlini et al. ( 2022 ), training an ensemble of 200 shado w mo dels with arc hitectures iden tical to the target mo del. Eac h shadow mo del is trained on a random subset of 40,000 images from CIF AR-10, with the remaining 10,000 images reserved for ev aluation. The LiRA scores are then computed for all the samples, split betw een members and non- mem b ers of the target mo del’s training set. Computation T o compute the Generalized Lever- age Scores ( GLS ), we implemen t the algorithm out- lined in Section 5.1 . W e set the damping factor λ b et w een 10 − 4 and 10 − 2 , dep ending on the differen- tiation depth and run the conjugate gradient (CG) solv er for T = 100 iterations. In most cases, the solv er returned a s olution (with a tolerance of 10 − 3 on the equalit y) b efore the maximum n umber of iterations distributions are estimated u si ng an ensemble of shadow mo dels. More information can b e found in Carlini et al. ( 2022 ). 7 T able 2: Sp earman correlation b et w een metrics computed using shadow mo dels (LiRA) and Generalized Lev erage Scores for different differen tiation depths. Results are a veraged ov er 16 different mo dels; 95% quan tiles are rep orted next to each entry using ± notation. Highest correlations per metric are in b old. Generalized Leverage Scores Shado w Metrics last la yer 2 last la yers 4 last la yers full model LiRA Score 0 . 50 ± 0 . 05 0 . 51 ± 0 . 04 0 . 51 ± 0 . 03 0 . 51 ± 0 . 04 | σ test /σ train | 0 . 40 ± 0 . 04 0 . 41 ± 0 . 03 0 . 41 ± 0 . 04 0 . 41 ± 0 . 03 TPR@FPR = 0 . 05 0 . 27 ± 0 . 04 0 . 28 ± 0 . 04 0 . 28 ± 0 . 05 0 . 29 ± 0 . 05 | µ test − µ train | 0 . 06 ± 0 . 04 0 . 06 ± 0 . 03 0 . 07 ± 0 . 04 0 . 06 ± 0 . 04 t -SNE dimension 1 t -SNE dimension 2 10 − 6 10 − 5 10 − 4 10 − 3 Lev erage Score (Trace) – Class Frog Figure 2: t -SNE visualization of the mo del’s final lay er represen tations for the "F rog" class. P oint coloring represen ts the leverage score magnitude, highlighting the geometric distribution of high-leverage samples. w as reached. W e trained 16 differen t mo dels with dif- feren t random seeds to compute the GLS scores and rep ort av eraged results. T o compare results across mo dels, we k eep the first 128 samples in the train set for all models. W e tried different op erations on the GLS matrix to get a scalar score: the T race, the F rob enius Norm and the Sp ectral Norm. W e observed that eac h of these op erations lead to similar results. In the rest of the pap er we rep ort results with the T race op erator. 6.2 Metrics and Results Correlation with LiRA T able 2 rep orts the Sp ear- man correlation b et w een the Generalized Leverage Scores, computed for different differentiation depth, and v arious shado w mo del indicators, including the LiRA score, the mean gap ( | µ in − µ out | ), the standard deviation ratio ( σ in /σ out ), and the TPR at lo w FPR. W e observ e a p ositiv e rank correlation b et ween GLS and the LiRA score as w ell as other metrics. This confirms that the leverage score captures a similar vul- nerabilit y signal as more computationally exp ensiv e shado w mo dels: high-leverage p oin ts corresp ond to samples where attac ks are more lik ely to confiden tly predict mem b ership. Outlier Detection T o further understand the char- acteristics of high-leverage samples, we visualize the mo del’s final la yer representations using t -SNE ( v an der Maaten and Hin ton , 2008 ). In Figure 2 , w e color the p oin ts based on their GLS magnitude, re- v ealing that most of the low-lev erage samples cluster around the class center in the representation space. In con trast the points far from the class center tend to ha ve high leverage scores. A dditionally , we present a qualitative analysis of the images corresponding to the highest and low est GLS v alues in Figure 3 . W e observe that high-lev erage samples often exhibit atypical features or artifacts, suc h as unusual poses or bac kgrounds, whic h ma y con tribute to their disprop ortionate influence on the mo del. Con versely , lo w-leverage samples tend to b e more protot ypical representations of their respective classes. Mem b ership Inference A ttack Ev aluation W e ev aluate the effectiveness of GLS in mem b ership infer- ence attac ks by comparing the p erformance of LiRA for high- GLS samples and lo w- GLS samples. The results sho w that high- GLS samples are significan tly more vulnerable to membership inference attac ks com- pared to lo w- GLS samples. Figure 4 illustrates the error trade-off curves for samples in the top and b ottom 2% of GLS quan tiles. The curves represent the mean of 50 LiRA attacks, with shaded areas indicating 95% p ercen tile in ter- v als. Notably , high-lev erage samples demonstrate a mark ed shift tow ard the lo wer-left of the plot, indicat- ing increased susceptibilit y to mem b ership inference attac ks. 8 GLS: 4 . 1 × 10 − 9 GLS: 5 . 6 × 10 − 9 GLS: 7 . 2 × 10 − 9 GLS: 1 . 4 × 10 − 8 GLS: 1 . 6 × 10 − 8 GLS: 1 . 7 × 10 − 8 GLS: 1 . 9 × 10 − 8 GLS: 2 . 3 × 10 − 8 GLS: 2 . 7 × 10 − 8 GLS: 3 . 5 × 10 − 8 GLS: 7 . 5 × 10 − 3 GLS: 7 . 5 × 10 − 3 GLS: 7 . 8 × 10 − 3 GLS: 7 . 9 × 10 − 3 GLS: 8 . 0 × 10 − 3 GLS: 8 . 3 × 10 − 3 GLS: 8 . 4 × 10 − 3 GLS: 8 . 7 × 10 − 3 GLS: 9 . 0 × 10 − 3 GLS: 9 . 1 × 10 − 3 Lowest Generalized Le verage Scores Highest Generalized Lev erage Scores Figure 3: Visualization of the 10 images with the Low est and Highest Generalized Lev erage Score. 0 . 00 0 . 25 0 . 50 0 . 75 1 . 00 α 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 β 1 − α Lowest GLS Lira A verage Lira Highest GLS Lira 95% Intervals Figure 4: Error trade-off curves ( α = FPR , β = FNR ) for samples in the 2% highest and lo west GLS quan- tiles. Curves represent the mean of 50 LiRA attac ks; shaded areas indicate 95% p ercentile interv als. 7 Conclusion W e introduced the GLS , an influence-based, p er- instance diagnostic for assessing priv acy exp osure without retraining models or explicitly simulating attac ks. Although derived in the linear setting, GLS pro vides a computationally efficient to ol for identifying data p oin ts with elev ated risk of membership inference in deep learning mo dels. Rather than offering formal priv acy guarantees, it serves as an interpretable and scalable pro xy for individual vulnerabilit y that com- plemen ts attack-based audits. While GLS do es not p erfectly align with shado w-mo del–based metrics, it consisten tly highlights samples that are more suscepti- ble to priv acy leak age, making it particularly suitable for large-scale auditing scenarios. A key limitation of our approac h is that its theoretical justification is con- fined to linear mo dels, with effectiveness in non-linear regimes supported empirically . Promising directions for future w ork include extending the framework to white-b o x auditing settings, systematically ev aluating its b eha vior across architectures and datasets, and studying its interaction with differen t training regimes, including differen tially priv ate optimization. References Martín Abadi, Andy Chu, Ian Go odfellow, H. Bren- dan McMahan, Ilya Mironov, Kunal T alwar, and Li Zhang. Deep Learning with Differential Priv acy . In Pr o c e e dings of the 2016 ACM SIGSAC Confer- enc e on Computer and Communic ations Se curity , pages 308–318, Octob er 2016. doi: 10.1145/2976749. 2978318. Da vid A Belsley , Edwin Kuh, and Ro y E W elsch. R e gr ession Diagnostics: Identifying Influential Data and Sour c es of Col line arity , chapter 2, pages 6– 84. John Wiley & Sons, Ltd, 1980. doi: 10.1002/ 0471725153.c h2. Nic holas Carlini, Chang Liu, Úlfar Erlingsson, Jernej K os, and Da wn Song. The secret sharer: Ev aluat- ing and testing unin tended memorization in neural net works. In 28th USENIX se curity symp osium (USENIX se curity 19) , pages 267–284, 2019. Nic holas Carlini, Steve Chien, Milad Nasr, Sh uang Song, Andreas T erzis, and Florian T ramer. Mem- b ership inference attacks from first principles. In 2022 IEEE symp osium on se curity and privacy (SP) , pages 1897–1914. IEEE, 2022. R. Dennis Co ok. Detection of Influential Observ ation in Linear Regression. T e chnometrics , 19(1):15–18, 1977. doi: 10.1080/00401706.1977.10489493. Jinsh uo Dong, Aaron Roth, and W eijie J S u. Gaussian differen tial priv acy. Journal of the R oyal Statistic al So ciety Series B: Statistic al Metho dolo gy , 84(1):3– 37, 2022. 9 C. Dwork. Differ ential privacy , volume 2006. ICALP , 2006. Pages: 1-12. Vitaly F eldman. Does learning require memorization? a short tale about a long tail. In Pr o c e e dings of the 52nd annual ACM SIGACT symp osium on the ory of c omputing , pages 954–959, 2020. Vitaly F eldman and Chiyuan Zhang. What neural net works memorize and why: Disco vering the long tail via influence estimation. A dvanc es in Neur al In- formation Pr o c essing Systems , 33:2881–2891, 2020. Kaiming He, Xiangyu Zhang, Shao qing Ren, and Jian Sun. Deep residual learning for image recognition. In Pr o c e e dings of the IEEE c onfer enc e on c omputer vision and p attern r e c o gnition , pages 770–778, 2016. M.R. Hestenes and E. Stiefel. Metho ds of conjugate gradien ts for solving linear systems. Journal of R ese ar ch of the National Bur e au of Standar ds , 49 (6):409, Decem b er 1952. ISSN 0091-0635. doi: 10. 6028/jres.049.044. Matthew Jagielski, Jonathan Ullman, and Alina Oprea. Auditing differentially priv ate machine learn- ing: How priv ate is priv ate sgd? A dvanc es in Neur al Information Pr o c essing Systems , 33:22205–22216, 2020. P ang W ei Koh and Percy Liang. Understanding Black- b o x Predictions via Influence F unctions. In Pr o- c e e dings of the 34th International Confer enc e on Machine L e arning , pages 1885–1894. PMLR, July 2017. Alex Krizhevsky and Geoffrey Hinton. Learning m ul- tiple la yers of features from tin y images. T ec hnical Rep ort 0, Universit y of T oron to, T oronto, On tario, 2009. Mathias Lecuy er, V aggelis A tlidakis, Ro xana Geam- basu, Daniel Hsu, and Suman Jana. Certified ro- bustness to adv ersarial examples with differen tial priv acy . In 2019 IEEE symp osium on se curity and privacy (SP) , pages 656–672. IEEE, 2019. Milad Nasr, Shuang Songi, Abhradeep Thakurta, Nico- las P ap ernot, and Nicholas Carlin. A dversary in- stan tiation: Lo wer bounds for differentially priv ate mac hine learning. In 2021 IEEE Symp osium on se curity and privacy (SP) , pages 866–882. IEEE, 2021. Jerzy Neyman and Egon Sharp e P earson. Ix. on the problem of the most efficient tests of statistical h yp otheses. Philosophic al T r ansactions of the R oyal So ciety of L ondon, Series A: Containing Pap ers of a Mathematic al or Physic al Char acter , 231(694-706): 289–337, 02 1933. doi: 10.1098/rsta.1933.0009. A dam Paszk e, Sam Gross, F rancisco Massa, A dam Lerer, James Bradbury , Gregory Chanan, T revor Killeen, Zeming Lin, Natalia Gimelshein, Luca An tiga, et al. Pytorc h: An imp erativ e style, high- p erformance deep learning library . A dvanc es in neur al information pr o c essing systems , 32, 2019. Barak A. P earlmutter. F ast exact m ultiplication b y the hessian. Neur al Computation , 6(1):147–160, 01 1994. doi: 10.1162/neco.1994.6.1.147. Daryl Pregibon. Logistic regression diagnostics. The A nnals of Statistics , 9(4):705–724, 1981. Reza Shokri, Marco Stronati, Congzheng Song, and Vitaly Shmatik ov. Mem b ership inference attacks against mac hine learning mo dels. In 2017 IEEE symp osium on se curity and privacy (SP) , pages 3– 18. IEEE, 2017. Laurens v an der Maaten and Geoffrey Hinton. Vi- sualizing Data using t-SNE. Journal of Machine L e arning R ese ar ch , 9(86):2579–2605, 2008. Sam uel Y eom, Irene Giacomelli, Matt F redrikson, and Somesh Jha. Priv acy risk in mac hine learn- ing: Analyzing the connection to ov erfitting. In 2018 IEEE 31st c omputer se curity foundations sym- p osium (CSF) , pages 268–282. IEEE, 2018. Sa jjad Zarifzadeh, Philipp e Liu, and Reza Shokri. Lo w-Cost High-Po wer Membership Inference At- tac ks. In Ruslan Salakh utdinov, Zico Kolter, Katherine Heller, Adrian W eller, Nuria Oliv er, Jonathan Scarlett, and F elix Berk enk amp, editors, Pr o c e e dings of the 41st International Confer enc e on Machine L e arning . PMLR, 21–27 Jul 2024. Chiyuan Zhang, Samy Bengio, Moritz Hardt, Ben- jamin Rec ht, and Oriol Viny als. Understanding deep learning requires rethinking generalization. In International Confer enc e on L e arning R epr esenta- tions , 2017. 10 A Lev erage Scores and Mem b ership V ulnerability: Pro ofs in the Linear Gaussian Case In this app endix, we provide detailed pro ofs for the results presented in Section 3 , which analyzes the relationship b et w een leverage scores and membership inference vulnerability in the context of linear mo dels. A.1 Setup and Notations W e recall here the setup for the linear regression model and the asso ciated residual distributions under mem b ership and non-mem b ership hypotheses. Consider a fixed design matrix X ∈ R n × d , where each row x ⊤ i represen ts a data point. W e examine the m ultiv ariate linear mo del: Y = X Θ ∗ + E , where Y ∈ R n × m is the resp onse matrix, Θ ∗ ∈ R d × m is the true parameter matrix, and E ∈ R n × m represen ts noise. W e assume the noise is centered, indep enden t and identically distributed (i.i.d.) Gaussian, such that for an y row i , E [ E i · ] = 0 and Co v ( E i · ) = σ 2 I m . The Ordinary Least Squares (OLS) estimator is ˆ Θ = ( X ⊤ X ) − 1 X ⊤ Y . The fitted v alues are ˆ Y = H Y , where H = X ( X ⊤ X ) − 1 X ⊤ is the hat matrix. F or a sp ecific data p oin t i , the residual vector r i ∈ R m is defined as: r i = y i − ˆ Θ ⊤ x i = n X j =1 ( δ ij − h ij ) y j , where δ ij is the Kronec ker delta. The diagonal elements of the hat matrix, h ii = x ⊤ i ( X ⊤ X ) − 1 x i , are the lever age sc or es . They satisfy 0 ≤ h ii ≤ 1 and P n i =1 h ii = d , serving as a measure of the geometric influence of x i on the model’s predictions ( Belsley et al. , 1980 ). W e consider the task of Membership Inference Attac k (MIA), framed as a hypothesis test on a residual r i . W e distinguish b et w een tw o scenarios for a p oin t i : • H 1 = H train (Mem b er): The p oin t i w as included in the training set. • H 0 = H test (Non-mem b er): The p oin t i is a fresh test p oin t with the same features x i but a fresh noise realization ˜ ε i . A.2 Residual Distributions Prop osition 3.1 (Residual Distributions) . Under the Gaussian noise assumption, the r esiduals for the i -th data p oint under the memb er and non-memb er hyp otheses fol low distinct multivariate normal distributions: r i | H tr ain ∼ N 0 , σ 2 (1 − h ii ) I m , (3) r i | H test ∼ N 0 , σ 2 (1 + h ii ) I m . (4) Conse quently, the squar e d norms of the r esiduals fol low sc ale d Chi-squar e d distributions: ∥ r i ∥ 2 | H tr ain ∼ σ 2 (1 − h ii ) χ 2 ( m ) , (5) ∥ r i ∥ 2 | H test ∼ σ 2 (1 + h ii ) χ 2 ( m ) . (6) Pr o of. Under the membership hypothesis H 1 , the residual for p oin t i is: r i = y i − ˆ Θ ⊤ x i = (Θ ∗⊤ x i + ε i ) − n X j =1 h ij (Θ ∗⊤ x j + ε j ) = ε i − n X j =1 h ij ε j = (1 − h ii ) ε i − X j = i h ij ε j 11 Since the noise terms ε j are i.i.d. Gaussian with mean 0 and cov ariance σ 2 I m , the residual r i is also Gaussian with mean 0 and cov ariance: Co v ( r i ) = E [ r i r ⊤ i ] = (1 − h ii ) 2 σ 2 I m + X j = i h 2 ij σ 2 I m = σ 2 (1 − h ii ) 2 + X j = i h 2 ij I m = σ 2 (1 − h ii ) I m where w e used the property of the hat matrix that P n j =1 h 2 ij = h ii . Under the non-mem b ership hypothesis H 0 , the residual for p oin t i is: r i = ˜ y i − ˆ Θ ⊤ x i = (Θ ∗⊤ x i + ˜ ε i ) − n X j =1 h ij (Θ ∗⊤ x j + ε j ) = ˜ ε i − n X j =1 h ij ε j = ˜ ε i − h ii ε i − X j = i h ij ε j Similarly , the co v ariance of r i under H 0 is: Co v ( r i ) = E [ r i r ⊤ i ] = σ 2 I m + h 2 ii σ 2 I m + X j = i h 2 ij σ 2 I m = σ 2 1 + h 2 ii + X j = i h 2 ij I m = σ 2 (1 + h ii ) I m Th us, we hav e: r i | H train ∼ N (0 , σ 2 (1 − h ii ) I m ) r i | H test ∼ N (0 , σ 2 (1 + h ii ) I m ) A.3 Optimal Detection via Lik eliho o d Ratio T o distinguish b et w een H 1 and H 0 , the Neyman-P earson lemma identifies the Likelihoo d Ratio (LR) as the most pow erful test. Prop osition 3.2 (Optimal MIA T est) . L et S i ( r i ) b e the lo g-likeliho o d r atio statistic for the i -th sample. The most p owerful test at level α r eje cts the non-memb er hyp othesis H test (pr e dicts memb ership) if S i ( r i ) > γ α , wher e the sufficient statistic is given by: S i ( r i ) = m 2 ln 1 + h ii 1 − h ii − h ii σ 2 (1 − h 2 ii ) ∥ r i ∥ 2 . (7) Pr o of. The likelihoo d ratio for the residual r i is giv en by: Λ( r i ) = p ( r i |H train ) p ( r i |H test ) 12 where p ( r i |H train ) and p ( r i |H test ) are the probability density functions of the multiv ariate normal distributions under the t wo hypotheses. Using the results from Section A.2 , we hav e: p ( r i |H train ) = 1 (2 π ) m/ 2 | σ 2 (1 − h ii ) I m | 1 / 2 exp − 1 2 r ⊤ i ( σ 2 (1 − h ii ) I m ) − 1 r i = 1 (2 π ) m/ 2 ( σ 2 (1 − h ii )) m/ 2 exp − 1 2 σ 2 (1 − h ii ) ∥ r i ∥ 2 and p ( r i |H test ) = 1 (2 π ) m/ 2 | σ 2 (1 + h ii ) I m | 1 / 2 exp − 1 2 r ⊤ i ( σ 2 (1 + h ii ) I m ) − 1 r i = 1 (2 π ) m/ 2 ( σ 2 (1 + h ii )) m/ 2 exp − 1 2 σ 2 (1 + h ii ) ∥ r i ∥ 2 Substituting these expressions in to the lik eliho od ratio, we obtain: Λ( r i ) = 1 (2 π ) m/ 2 ( σ 2 (1 − h ii )) m/ 2 exp − 1 2 σ 2 (1 − h ii ) ∥ r i ∥ 2 1 (2 π ) m/ 2 ( σ 2 (1+ h ii )) m/ 2 exp − 1 2 σ 2 (1+ h ii ) ∥ r i ∥ 2 = 1 + h ii 1 − h ii m/ 2 exp 1 2 σ 2 1 1 + h ii − 1 1 − h ii ∥ r i ∥ 2 = 1 + h ii 1 − h ii m/ 2 exp − h ii σ 2 (1 − h 2 ii ) ∥ r i ∥ 2 Th us, the log-likelihoo d ratio is: log Λ( r i ) = m 2 log 1 + h ii 1 − h ii − h ii σ 2 (1 − h 2 ii ) ∥ r i ∥ 2 A.4 Error Curve Deriv ation W e deriv e here the error trade-off curve for the optimal membership inference test based on the residual norm ∥ r i ∥ 2 . W e provide an illustration of these theoretical curv es for different h ii v alues in Figure 5 . Higher lev erage scores corresp ond to curves closer to the origin, indicating increased vulnerabilit y to membership inference attac ks. The curves are non-symmetric due to the differing v ariances of the residual distributions under the t wo hypotheses, leading to easier detection of non-mem b ers than members. Prop osition 3.3 (MIA Errors Curve) . F or the p oint i , the tr ade-off b etwe en the false alarm r ate α (T yp e-I err or) and the misse d dete ction r ate β (T yp e-II err or) is describ e d by the curve: β i ( α i ) = 1 − F m 1 + h ii 1 − h ii F − 1 m ( α i ) . (8) wher e F m is the Cumulative Distribution F unction of the χ 2 ( m ) distribution. Pr o of. The type I error (false p ositiv e rate, i.e., non-member misclassified as member) is α ( t ) = P H test ∥ r i ∥ 2 ≤ t = F χ 2 m t σ 2 (1 + h ii ) , (A.22) where F χ 2 m is the cum ulative distribution function (CDF) of the χ 2 distribution with m degrees of freedom. The t yp e II error (false negative rate, i.e., member misclassified as non-mem b er) is β ( t ) = 1 − P H train ∥ r i ∥ 2 ≤ t = 1 − F χ 2 m t σ 2 (1 − h ii ) . (A.23) 13 0 . 0 0 . 5 1 . 0 α i 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 β i 1 − α h ii = 0 . 1 h ii = 0 . 5 h ii = 0 . 9 Figure 5: Theoretical error trade-off curv es for different leverage scores h ii for m = 1 . Eliminating t b y injecting Equation ( A.22 ) in to Equation ( A.23 ), we obtain: β i ( α i ) = 1 − F m 1 + h ii 1 − h ii F − 1 m ( α ) , where F m is the CDF of the χ 2 ( m ) distribution. B Generalized Leverage Score: Pro ofs In this section, we pro vide a formal deriv ation of the Generalized Leverage Score ( GLS ) for arbitrary differen tiable mo dels, following the notation and conv en tions of the main pap er. W e also derive the pro ofs for the second-order deriv ativ es required in the closed-form expression of the GLS for tw o common loss functions: quadratic loss and cross entrop y loss. B.1 Setup W e restate the problem setup for completeness. Consider a dataset D = { ( x i , y i ) } n i =1 and a mo del f θ : X → Y parameterized b y θ ∈ Θ ⊂ R p . The empirical risk is giv en by L ( θ ) = 1 n n X i =1 ℓ ( f θ ( x i ) , y i ) , where ℓ is a twice-differen tiable loss function. The optimal parameters are ˆ θ = arg min θ ∈ Θ L ( θ ) . W e recall the definition of the Generalized Leverage Score. Definition 4.1 (Generalized Leverage Score) . The Generalized Leverage Score ( GLS ) of the i -th sample is defined as the infinitesimal s ensitivit y of the mo del’s prediction at x i to its o wn observed lab el y i : GLS i = ∂ f ˆ θ ( x i ) ∂ y i ∈ R m × m . (12) B.2 Deriv ation via Implicit Differentiation W e recall the closed-form expression for th e GLS . Prop osition 4.2 (Closed-form GLS ) . Assuming the aver age d loss function L is twic e differ entiable and its Hessian H ˆ θ is invertible, the GLS for a univariate output is given by: GLS i = − 1 n ∇ θ f ˆ θ ( x i ) 2 H − 1 ˆ θ ∂ 2 ℓ ∂ y ∂ f , (13) 14 wher e ∥ v ∥ 2 A = v ⊤ A v denotes the squar e d Matrix norm. F or multivariate outputs, this gener alizes to: GLS i = − 1 n J θ f ˆ θ ( x i ) H − 1 ˆ θ J θ f ˆ θ ( x i ) ⊤ ∂ 2 ℓ ∂ y ∂ f , (14) wher e J θ f ˆ θ ( x i ) ∈ R m × p is the Jac obian of the mo del outputs with r esp e ct to the p ar ameters, H ˆ θ is the Hessian of the loss function L , i.e. H ˆ θ = ∇ 2 θ L ( ˆ θ ) , and ℓ denotes the p ointwise loss evaluate d at ( f ˆ θ ( x i ) , y i ) . Pr o of. Let m ∈ N ∗ b e the output dimension of the mo del. W e seek to compute the Jacobian GLS i = ∂ f ˆ θ ( x i ) ∂ y i , accoun ting for the fact that ˆ θ dep ends implicitly on y i through the empirical risk minimization. Applying the c hain rule, we hav e: ∂ f ˆ θ ( x i ) ∂ y i = J θ f ˆ θ ( x i ) ∂ ˆ θ ∂ y i , (B.24) where J θ f ˆ θ ( x i ) ∈ R m × p is the Jacobian of f θ ( x i ) with respect to θ , ev aluated at ˆ θ . W e now need to compute ∂ ˆ θ ∂ y i . W e use implicit differentiation on the optimality condition for ˆ θ . This condition states that the gradient of the empirical risk v anishes at ˆ θ : ∇ θ L ( ˆ θ ) = 0 . Since ˆ θ is implicitly a function of y i , w e treat the optimality condition as an equation in volving b oth θ and y i . Sp ecifically , w e express the first-order condition as ∇ θ L ( ˆ θ ( y i ) , y i ) = 0 , making explicit that ˆ θ dep ends on y i through the optimization, and that L also dep ends directly on y i via the i -th term in the empirical risk. d d y i ∇ θ L ( ˆ θ ( y i ) , y i ) = 0 ∇ 2 θ L ( ˆ θ ) ∂ ˆ θ ∂ y i + ∂ ∂ y i ∇ θ L ( ˆ θ , y i ) = 0 , (B.25) where ∇ 2 θ L ( ˆ θ ) = H ˆ θ is the Hessian of the loss and where the partial deriv ativ e ∂ ∂ y i ∇ θ L is tak en with res pect to y i only via the i -th term in the empirical risk, holding ˆ θ fixed. In the following we derive a closed-form for ∂ ∂ y i ∇ θ L ( ˆ θ , y i ) , and th us we drop the dep endence of ˆ θ on y i in the notation for clarity . Substituting the definition of the empirical risk: ∂ ∂ y i ∇ θ L ( ˆ θ , y i ) = ∂ ∂ y i 1 n n X j =1 ∇ θ ℓ ( f ˆ θ ( x j ) , y j ) = 1 n n X j =1 ∂ ∂ y i ∇ θ ℓ ( f ˆ θ ( x j ) , y j ) . Since the dataset samples are indep enden t, the loss for sample j (where j = i ) do es not dep end explicitly on y i . Therefore, ∂ ∂ y i ∇ θ ℓ j = 0 for all j = i . The summation collapses to the single i -th term: ∂ ∂ y i ∇ θ L ( ˆ θ , y i ) = 1 n ∂ ∂ y i ∇ θ ℓ ( f ˆ θ ( x i ) , y i ) = 1 n ∂ ∂ y i J θ f ˆ θ ( x i ) ⊤ ∇ f ℓ f ˆ θ ( x i ) , y i = 1 n J θ f ˆ θ ( x i ) ⊤ ∂ 2 ℓ ∂ y ∂ f , 15 as J θ f ˆ θ ( x i ) does not dep end on y i . Substituting back into Equation ( B.25 ): ∂ ˆ θ ∂ y i = − 1 n H − 1 ˆ θ J θ f ˆ θ ( x i ) ⊤ ∂ 2 ℓ ∂ y ∂ f . and then substituting in to the c hain rule expression for GLS i (Equation ( B.24 )): GLS i = − 1 n J θ f ˆ θ ( x i ) H − 1 ˆ θ J θ f ˆ θ ( x i ) ⊤ ∂ 2 ℓ ∂ f ∂ y . F or m = 1 , this reduces to the scalar case with J θ f ˆ θ ( x i ) = ∇ θ f ˆ θ ( x i ) ⊤ and ∂ 2 ℓ ∂ f ∂ y b eing a scalar, recov ering the follo wing result: GLS i = − 1 n ∂ 2 ℓ ∂ f ∂ y ∇ θ f ˆ θ ( x i ) ⊤ H − 1 ˆ θ ∇ θ f ˆ θ ( x i ) = − 1 n ∂ 2 ℓ ∂ f ∂ y ∇ θ f ˆ θ ( x i ) 2 H − 1 ˆ θ . B.3 Loss F unction Deriv atives W e provide explicit deriv ations for the mixed second deriv ativ e ∂ 2 ℓ ∂ y ∂ f for tw o common loss functions: quadratic loss and cross en tropy loss with softmax outputs. Prop osition 4.3 (Multiv ariate Regression) . F or the quadr atic loss with tar gets y ∈ R m and pr e dictions f ∈ R m , the se c ond derivative is: ∂ 2 ℓ ∂ y ∂ f = − 2 I m . (15) Pr o of. F or the quadratic loss, we hav e: ℓ ( f ( x ) , y ) = ∥ f ( x ) − y ∥ 2 2 . The first deriv ativ e with respect to f is: ∂ ℓ ∂ f = 2 ( f ( x ) − y ) . The second deriv ativ e is: ∂ 2 ℓ ∂ y ∂ f = ∂ ∂ y (2 ( f ( x ) − y )) = − 2 I m , where I m is the m × m identit y matrix. Prop osition 4.4 (Cross-En tropy Deriv atives) . F or the cr oss-entr opy loss with one-hot lab els y and lo gits f , the se c ond derivative is: ∂ 2 ℓ ∂ y ∂ f = − I m . (17) Pr o of. F or the cross entrop y loss with softmax outputs, we ha ve: ℓ ( f ( x ) , y ) = − m X c =1 y c log e f c ( x ) P m j =1 e f j ( x ) ! = − m X c =1 y c f c ( x ) + log m X j =1 e f j ( x ) . 16 The first deriv ativ e with respect to f is: ∂ ℓ ∂ f c = − y c + e f c ( x ) P m j =1 e f j ( x ) = p c − y c , where p c is the predicted probabilit y for class c . The second deriv ativ e is: ∂ 2 ℓ ∂ f c ∂ y d = ∂ ∂ y d ( p c − y c ) = − δ cd , where δ cd is the Kronec ker delta, equal to 1 if c = d and 0 otherwise. Thus, the second deriv ativ e matrix is: ∂ 2 ℓ ∂ y ∂ f = − I m , where I m is the m × m identit y matrix. R emark B.1 . F or vector-v alued outputs, GLS i is an m × m matrix. Scalar summaries such as the trace, F rob enius norm, or Sp ectral norm can be used to obtain a single leverage score p er sample. As explained in the main part w e decided to use the trace in our exp erimen ts as all these op erations led to similar results. B.4 Pro of of Prop osition 4.5 Prop osition B.2 (Binary Logistic Leverage Score) . Under the cr oss entr opy loss, for a binary lo gistic r e gr ession mo del, the GLS in the pr ob ability sp ac e r e duc es to: ∂ ˆ p i ∂ y i = σ ′ ( ˆ y i ) × GLS i = w i x ⊤ i X ⊤ W X − 1 x i , (B.26) with w i = ˆ p i (1 − ˆ p i ) = σ ′ ( ˆ y i ) and W = diag ( w 1 , . . . , w n ) . Pr o of. W e consider the binary logistic regression mo del where the prediction is ˆ p i = σ ( f ˆ θ ( x i )) with f ˆ θ ( x i ) = x ⊤ i ˆ θ . The individual cross entrop y loss is giv en by ℓ ( ˆ y i , y i ) = − y i log( ˆ p i ) − (1 − y i ) log(1 − ˆ p i ) . 1. Gr adient of the mo del output The gradien t of the linear predictor f ˆ θ ( x i ) with respect to the parameters θ is: ∇ θ f ˆ θ ( x i ) = x i 2. Se c ond derivative of the loss W e first find the deriv ativ e of the loss with resp ect to the linear output f . Recall that ∂ ˆ p i ∂ f = σ ′ ( f ) = ˆ p i (1 − ˆ p i ) . ∂ ℓ ∂ f = ∂ ℓ ∂ ˆ p i ∂ ˆ p i ∂ f = − y i ˆ p i + 1 − y i 1 − ˆ p i ˆ p i (1 − ˆ p i ) = ˆ p i − y i T aking the mixed partial deriv ativ e with respect to the lab el y i : ∂ 2 ℓ ∂ y i ∂ f = ∂ ∂ y i ( ˆ p i − y i ) = − 1 3. Hessian of the total loss The Hessian H ˆ θ for the a veraged cross entrop y loss L = 1 n P j ℓ j is: 17 H ˆ θ = ∇ 2 θ L = 1 n n X j =1 ∂ 2 ℓ j ∂ f 2 x j x ⊤ j Since ∂ 2 ℓ j ∂ f 2 = ∂ ∂ f ( ˆ p j − y j ) = ˆ p j (1 − ˆ p j ) = w j , w e hav e: H ˆ θ = 1 n X ⊤ W X 4. Computing the GLS i (L o git Sp ac e) Substituting these in to your general GLS formula: GLS i = − 1 n ( − 1) ∥ x i ∥ 2 ( 1 n X ⊤ W X ) − 1 = x ⊤ i X ⊤ W X − 1 x i 5. Mapping to the Pr ob ability Sp ac e T o find the sensitivit y in the probability space ∂ ˆ p i ∂ y i , w e apply the chain rule: ∂ ˆ p i ∂ y i = ∂ ˆ p i ∂ f × ∂ f ∂ y i = σ ′ ( ˆ y i ) × GLS i Substituting w i = σ ′ ( ˆ y i ) = ˆ p i (1 − ˆ p i ) , w e obtain: ∂ ˆ p i ∂ y i = w i x ⊤ i X ⊤ W X − 1 x i This completes the proof. C GLS for Last Linear Lay er In this section, w e deriv e the exact closed-form solution for our score matrix when differentiation is restricted to the last linear la yer. W e denote the Generalized Lev erage Score, computed with differentiation restricted to the last linear lay er, as GLS i θ sub . W e also denote d the dimension of the feature vector output by the p en ultimate la yer, and we augment it with a bias term to form ˜ g i = [ g ( x i ) ⊤ , 1] ⊤ ∈ R d +1 . W e analyze t wo cases separately: the quadratic loss for regression and the cross entrop y loss for classification. The separation is necessary b ecause the quadratic loss treats each output dimension indep enden tly , whereas the cross entrop y loss couples all classes through the softmax function, prev enting decoupled computation. Remark C.3 , a the end of the section, provide a complexity analysis for b oth cases. C.1 Quadratic Loss (Regression) Prop osition C.1 (Last Linear La yer GLS for quadratic loss) . L et ˜ g i ∈ R d +1 b e the fe atur e ve ctor (emb e ddings and bias) and ˜ G the matrix stacking al l ve ctors ˜ g i . The last-layer GLS for sample i under the quadr atic loss is given by: GLS i θ sub = ˜ g ⊤ i ˜ G ⊤ ˜ G − 1 ˜ g i . (C.27) Pr o of. W e consider a neural netw ork model where the last lay er is a linear transformation. 1. Notation and Jac obian Structur e Let the mo del output b e f ( x ) = W g ( x ) + b ∈ R m . W e denote the flattened parameter v ector of this la yer as θ sub = v ec ([ W , b ]) ∈ R m ( d +1) . Since the mo del is linear with resp ect to θ sub , the Jacobian of the output f ( x i ) with respect to the parameters is a tensor pro duct: J i θ sub = ∇ θ sub f ( x i ) = I m ⊗ ˜ g ⊤ i ∈ R m × m ( d +1) (C.28) where I m is the iden tity matrix of size m , and ⊗ denotes the Kronec ker pro duct. 18 2. The Hessian Matrix The Hessian of the loss L with resp ect to θ sub is defined as H | θ sub = 1 n P n j =1 J ⊤ j | θ sub ( ∇ 2 f ℓ j ) J j | θ sub . F or the quadratic loss, w e hav e ∇ 2 f ℓ = 2 I m . Th us, the Hessian b ecomes: H | θ sub = 2 n n X j =1 ( I m ⊗ ˜ g j )( I m ⊗ ˜ g ⊤ j ) = I m ⊗ 2 n n X j =1 ˜ g j ˜ g ⊤ j = I m ⊗ 2 n ˜ G ⊤ ˜ G The in verse is given by : H | θ sub − 1 = I m ⊗ n 2 ( ˜ G ⊤ ˜ G ) − 1 (C.29) 3. Derivation of the GLS The Generalized Lev erage Score matrix is giv en by GLS i = 1 n · J i H − 1 J ⊤ i · − ∂ 2 ℓ ∂ y ∂ f W e hav e the equiv alent formulation using the restricted Jacobian and Hessian: GLS i θ sub = 1 n · J i θ sub · H | θ sub − 1 · J ⊤ i θ sub · − ∂ 2 ℓ ∂ y ∂ f (C.30) The sensitivit y term for quadratic loss is − ∂ 2 ℓ ∂ y ∂ f = − ( − 2 I m ) = 2 I m . Injecting the expressions deriv ed in Equations ( C.28 ) and ( C.29 ) in to Equation ( C.30 ) w e hav e: : GLS i θ sub = 2 n I m ⊗ ˜ g ⊤ i h I m ⊗ n 2 ( ˜ G ⊤ ˜ G ) − 1 i ( I m ⊗ ˜ g i ) = I m ⊗ ˜ g ⊤ i ( ˜ G ⊤ ˜ G ) − 1 ˜ g i This simplifies to the scalar leverage score m ultiplying the iden tity matrix. This form ulation allows computing the GLS for all samples in O ( nd 2 + d 3 ) in time . The O ( nd 2 ) comes from computing ˜ G ⊤ ˜ G and O ( d 3 ) from inv erting the d × d matrix. This is more efficient than the solution prop osed in Algorithm 2 for mo derate d and large n , as t ypically encountered in practice. C.2 Cross Entrop y Loss (classification) Prop osition C.2 (Last-Lay er GLS for Cross Entrop y) . L et p j = softmax ( f ( x j )) ∈ R m b e the pr ob ability ve ctor for sample j , and let S j = diag ( p j ) − p j p ⊤ j b e the Jac obian of the loss w.r.t the lo gits. L et ˜ g i ∈ R d +1 b e the fe atur e ve ctor (emb e ddings and bias). The last-layer GLS for sample i under the cr oss entr opy loss is the m × m matrix wher e the entry ( u, v ) is given by: GLS i θ sub uv = 1 n ˜ g ⊤ i H θ sub − 1 [ u,v ] ˜ g i (C.31) This excludes the cost of the forward pass to obtain ˜ g i as these are typically av ailable from the training or inference pro cedure. W e refer only here to the computational ov erhead of the GLS computation itself. 19 wher e H θ sub ∈ R m ( d +1) × m ( d +1) is the Hessian, structur e d as a blo ck matrix wher e the ( k , l ) -th blo ck (for 1 ≤ k , l ≤ m ) is given by: H θ sub [ k,l ] = 1 n n X j =1 ( S j ) kl ˜ g j ˜ g ⊤ j ∈ R ( d +1) × ( d +1) . (C.32) Pr o of. W e consider the same neural netw ork model where the last lay er is a linear transformation. 1. Notation and Jac obian Structur e As in the regression case, let f ( x ) = W g ( x ) + b ∈ R m and θ sub = v ec ([ W , b ]) . The Jacobian of the output with respect to the parameters remains identical: J i θ sub = I m ⊗ ˜ g ⊤ i ∈ R m × m ( d +1) (C.33) 2. The Hessian Matrix The Hessian of the loss with resp ect to the parameters is defined as: H θ sub = 1 n n X j =1 J ⊤ j θ sub ( ∇ 2 f ℓ j ) J j θ sub F or the cross en tropy loss, ∇ 2 f ℓ j = S j , whic h is the Hessian of the loss w.r.t the logits. Substituting the Jacobian structure, w e can explicitly compute the structure of H θ sub . The matrix consists of m × m blo c ks, where each block captures the interaction b et ween the parameters of class k and class l . The ( k , l ) -th blo c k of size ( d + 1) × ( d + 1) is given by: H [ k,l ] = 1 n n X j =1 J ⊤ j θ sub ( S j ) J j θ sub [ k,l ] = 1 n n X j =1 ( S j ) kl ˜ g j ˜ g ⊤ j Unlik e the quadratic case, S j is dense, so H θ sub is not blo c k-diagonal (i.e ., H [ k,l ] = 0 for k = l ). Consequen tly , it must b e in verted as a full matrix. 3. Derivation of the GLS The Generalized Lev erage Score matrix is giv en by: GLS i θ sub = 1 n · J i θ sub · H θ sub − 1 · J ⊤ i θ sub · − ∂ 2 ℓ ∂ y ∂ f (C.34) The sensitivit y term for cross en tropy is − ∂ 2 ℓ ∂ y ∂ f = I m . Injecting Equation ( C.33 ) in to Equation ( C.34 ), w e hav e: GLS i θ sub = 1 n I m ⊗ ˜ g ⊤ i H θ sub − 1 ( I m ⊗ ˜ g i ) T o find the ( u, v ) -th scalar en try of this resulting m × m matrix, we observe that the Kroneck er structure of the Jacobian effectiv ely pro jects the ( u, v ) -th blo c k of the inv erse Hessian on to the feature space: GLS i θ sub uv = 1 n · ˜ g ⊤ i H θ sub − 1 [ u,v ] ˜ g i R emark C.3 (Computational Complexity Comparison) . The computational cost differs significantly betw een the t wo losses due to the structure of the Hessian. F or the quadr atic loss , the Hessian consists of m iden tical diagonal blo c ks, allowing us to compute and in vert a single ( d + 1) × ( d + 1) matrix. This yields a total complexit y of O ( nd 2 + d 3 ) . Con versely , for the cr oss entr opy loss , the softmax function couples all m classes, forcing the construction and in version of the full m ( d + 1) × m ( d + 1) Hessian. This results in a significantly higher complexity of O ( nm 2 d 2 + m 3 d 3 ) . 20

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment