ROIX-Comp: Optimizing X-ray Computed Tomography Imaging Strategy for Data Reduction and Reconstruction

In high-performance computing (HPC) environments, particularly in synchrotron radiation facilities, vast amounts of X-ray images are generated. Processing large-scale X-ray Computed Tomography (X-CT) datasets presents significant computational and st…

Authors: Amarjit Singh, Kento Sato, Kohei Yoshida, Kentaro Uesugi, Yasumasa Joti, Takaki Hatsui, Andrès Rubio Proaño

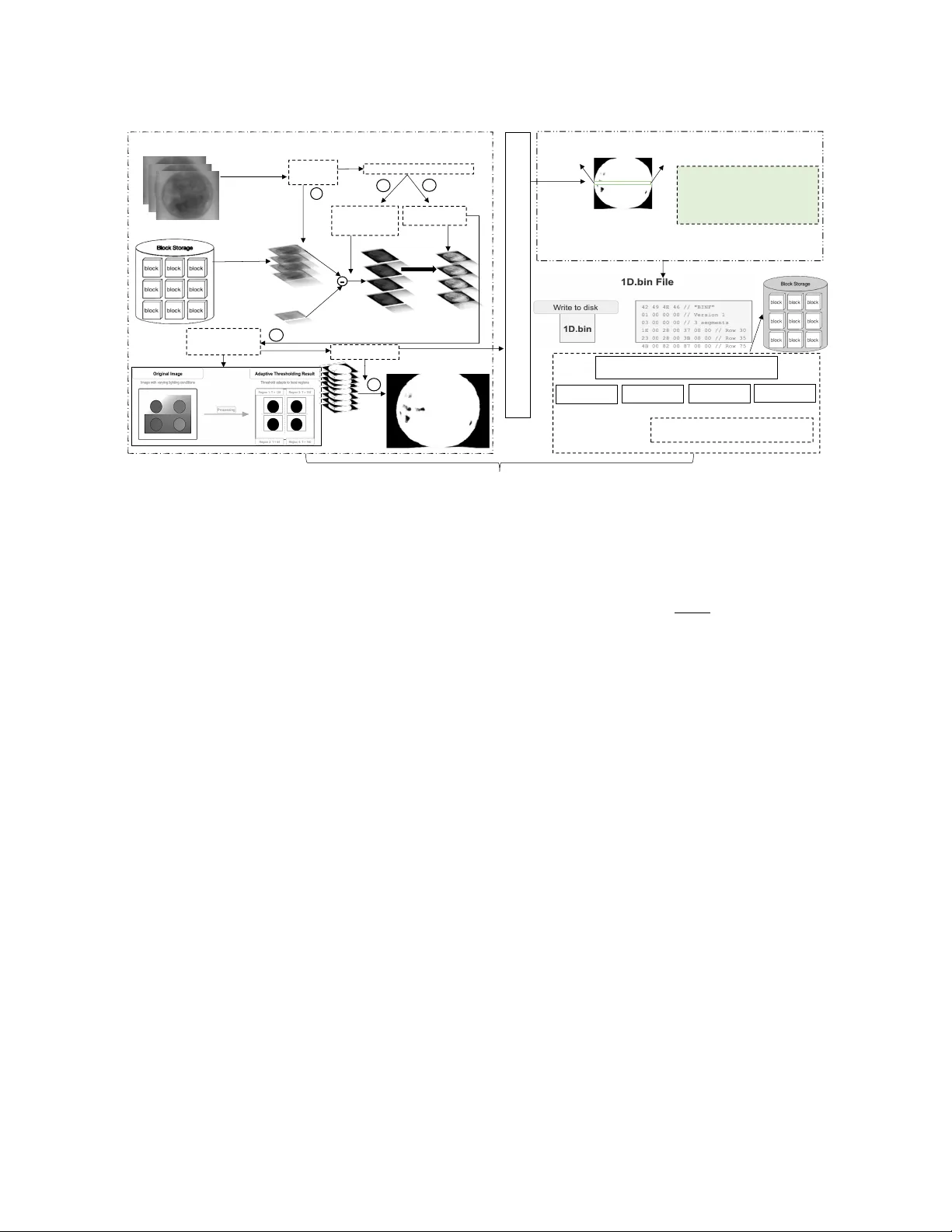

Amarjit Singh (amarjitsingh@riken.jp), RIKEN (R-CCS), Kobe, Hyogo, Japan
Kento Sato (kento.sato@riken.jp), RIKEN (R-CCS), Kobe, Hyogo, Japan
Kohei Yoshida (yoshida@hpc.is.uec.ac.jp), The University of Electro-Communications, RIKEN (R-CCS), Tokyo, Japan
Kentaro Uesugi (ueken@spring8.or.jp), Japan Synchrotron Radiation Research Institute, Sayo, Hyogo, Japan
Yasumasa Joti (joti@spring8.or.jp), RIKEN SPring-8 Center, Hyogo, Japan
Takaki Hatsui (hatsui@spring8.or.jp), RIKEN SPring-8 Center, Hyogo, Japan
Andrès Rubio Proaño (arubio5@indoamerica.edu.ec), Centro de Investigación en Mecatrónica y Sistemas Interactivos (MIST), Universidad Tecnológica Indoamérica, Quito, Pichincha, Ecuador

Abstract

In high-performance computing (HPC) environments, particularly in synchrotron radiation facilities, vast amounts of X-ray images are generated. Processing large-scale X-ray Computed Tomography (X-CT) datasets presents significant computational and storage challenges due to their high dimensionality and data volume. Traditional approaches often require extensive storage capacity and high transmission bandwidth, limiting real-time processing capabilities and workflow efficiency. To address these constraints, we introduce a region-of-interest (ROI)-driven extraction framework (ROIX-Comp) that intelligently compresses X-CT data by identifying and retaining only essential features. Our work reduces data volume while preserving critical information for downstream processing tasks. At the pre-processing stage, we utilize error-bounded quantization to reduce the amount of data to be processed and therefore improve computational efficiency. At the compression stage, our methodology combines object extraction with multiple state-of-the-art lossless and lossy compressors, resulting in significantly improved compression ratios.
We evaluated this framework on seven X-CT datasets and observed a relative compression ratio improvement of 12.34× compared to standard compression.

ACM acknowledges that this contribution was authored or co-authored by an employee, contractor or affiliate of a national government. As such, the Government retains a nonexclusive, royalty-free right to publish or reproduce this article, or to allow others to do so, for Government purposes only. Request permissions from owner/author(s).
SCA/HPCAsia 2026, Osaka, Japan
© 2026 Copyright held by the owner/author(s).
ACM ISBN 979-8-4007-2067-3/2026/01
https://doi.org/10.1145/3773656.3773665

CCS Concepts

• Theory of computation → Data compression; • Computing methodologies → Image processing

Keywords

HPC, Computed Tomography, Data Compression, Segmentation, Lossless/Lossy Compression, Error Bounded Lossy Compression, I/O Optimization, Requirements Analysis, State of Practice

ACM Reference Format:
Amarjit Singh, Kento Sato, Kohei Yoshida, Kentaro Uesugi, Yasumasa Joti, Takaki Hatsui, and Andrès Rubio Proaño. 2026. ROIX-Comp: Optimizing X-ray Computed Tomography Imaging Strategy for Data Reduction and Reconstruction. In SCA/HPCAsia 2026: Supercomputing Asia and International Conference on High Performance Computing in Asia Pacific Region (SCA/HPCAsia 2026), January 26–29, 2026, Osaka, Japan. ACM, New York, NY, USA, 11 pages. https://doi.org/10.1145/3773656.3773665

1 Introduction

Synchrotron radiation facilities such as ESRF [4], APS [2], and SPring-8 [22] specialize in the generation of high-intensity X-rays. These facilities provide advanced material analysis and imaging across various scientific disciplines. In recent years, detectors at these light source facilities have become increasingly efficient: they are now capable of generating data ranging from terabytes to petabytes per day.
This exponential growth of data creates significant challenges in storage, processing, and analysis that demand highly efficient data management strategies. To handle these datasets effectively, distributed storage architectures with compression capabilities must be integrated into high-performance computing (HPC) environments [8].

X-ray computed tomography (X-CT) images created by these large radiation facilities are particularly important because of their applications in various scientific, industrial, and medical domains. High-resolution imaging enables detailed investigation of internal material properties and structures [1]. The data generation rate at SPring-8 has increased significantly since the introduction of DIFRAS detectors [9]. Recent variants of DIFRAS detectors equipped with the IMX661 sensor (13.9k × 9.7k pixels, 21.8 frames/s at 10-bit depth) generate data at a maximum rate of 10.4 GB/s (899 TB/day) [21]. Therefore, data compression is critical for efficient storage and data analysis.

Traditional data compression approaches typically apply a general-purpose compression algorithm directly to X-CT images. These techniques do not achieve optimal results because they do not account for the specialized nature of X-CT data, including its unique noise patterns, spatial correlations, and critical structural features. Moreover, these standard methods often struggle with the high dynamic range found in X-CT scans, where both high-density and low-density materials must be preserved with high fidelity.
Lossless compression strategies such as Zstd [3], Gzip [5], Huffman coding [7], Bzip2 [20], and Lz77 [28] prioritize data fidelity over compression ratio and rate, which limits their effectiveness for large image datasets. In contrast, lossy compression methods such as Sz3 [11] and Zfp [13] achieve high data reduction while preserving key characteristics of the object, such as precision, by removing less significant information.

Our approach employs region-of-interest (ROI) recognition to automatically segment significant structural regions from background elements based on density distributions. Because processing whole volumetric scans is computationally expensive and storage intensive, this domain-specific methodology extracts only the object of interest, eliminating non-valuable areas entirely from further processing. By focusing exclusively on these relevant regions, we optimize both the reconstruction and segmentation steps. This reduction in working dataset size delivers dual benefits: speeding up data-intensive operations while minimizing storage and transmission costs.

Building on this foundation, we introduce a specialized pre-processing algorithm that conditions the segmented X-CT data to better align with the capabilities of standard compressors. This conditioning step enables significantly higher compression ratios than applying compressors directly to raw X-CT data, while maintaining essential data quality throughout the process.

The key contributions of this paper include:
• The development of an adaptive thresholding and binarization framework for processing 2D X-CT images.
• The implementation of region/object extraction strategies to isolate diagnostically relevant areas from X-CT data.
• The integration of region-of-interest recognition with error-bounded quantization to analyze the relationship between compression ratio and data preservation within specified error tolerances.
• The demonstration that pre-processing segmentation enhances data processing efficiency while reducing storage requirements for X-CT datasets.
• The evaluation of the approach across multiple datasets, comparing compression ratios with state-of-the-art compressors to validate performance improvements.

This paper is organized as follows. Section 2 provides background on X-CT and reviews the fundamental techniques. Section 3 discusses related work in X-CT data compression and ROI extraction. Section 4 presents our object extraction strategy, while Section 5 evaluates our approach across multiple datasets, analyzing the compression ratio, processing time, and reconstruction quality. Finally, we conclude with a summary of the findings and directions for future research.

2 Background

X-ray Computed Tomography (X-CT) is an imaging technique that provides a high-resolution 3D representation of an object's internal structure. X-CT acquires numerous 2D X-ray projections from different angles, as illustrated in Figure 1. During the imaging process, the X-ray source emits radiation toward the object, which is positioned between the source and the detector. The object undergoes stepwise rotation to capture multiple projections from different angles, as shown in Step 1 of Figure 1. These projections are then recorded by the detector and processed to generate 2D projection images (Step 2).

Figure 1: Projection of X-CT Object Capturing Structural and Density

The analysis of X-CT scans involves multiple phases, such as pre-processing, shape identification, feature extraction based on pixel intensity and structural patterns, classification, and post-processing. Feature extraction separates structures or materials of interest from the background.
In the context of large-scale scientific imaging, effective segmentation techniques enable the isolation of critical regions by filtering out less relevant information. This technique reduces computational overhead, facilitates more accurate measurements, and simplifies subsequent data handling, storage, and analysis tasks.

X-CT data offer significant compression opportunities because most scan content is not critical: in typical scans, only the small region of interest requires high-resolution detail, while large areas outside it contain minimal valuable information. By recognizing this pattern, we can apply selective compression that preserves quality in important areas while aggressively reducing data in noncritical regions.

3 Related Work

Several studies have explored region-of-interest-based techniques in X-CT, addressing challenges related to data efficiency, reconstruction, and segmentation. Our work addresses ROI-based compression, which requires both effective ROI identification and efficient compression methods.

Existing methods often depend on lossless compression, which ensures exact data reconstruction but struggles to achieve high compression ratios, particularly for large-scale structured datasets. As a result, lossy compression approaches have emerged as more suitable alternatives for handling high-dimensional scientific data. Specifically, the lossy compressors Sz3 [11] and Zfp [13] are used to compress floating-point data. These compressors are designed to handle scientific datasets with precision control, adaptive compression, and high-throughput performance. Sz3 utilizes predictive coding and quantization to reduce redundancy with controlled accuracy loss, while Zfp uses blockwise transform coding to enable compression with fixed precision constraints.
ROI-based approaches have been successfully applied to various X-CT processing tasks, demonstrating the value of region-specific prioritization. In reconstruction contexts, X-CT imaging faces challenges including high radiation exposure and computationally expensive forward projection steps. Huck et al. [6] explored ROI-based X-CT using a dynamic beam attenuator (DBA) to minimize radiation exposure while maintaining diagnostic accuracy. McCann et al. [14] proposed an ROI reconstruction method for parallel X-CT that eliminates the costly forward projection step through digital filtering implementations.

For ROI identification, effective segmentation methods are essential as pre-processing steps. Zheng [27] proposed a lung CT segmentation algorithm that combines thresholding and gradient-based methods, utilizing time-evolutionary feature extraction to improve segmentation accuracy. Muthukrishnan [16] proposed a topological derivative approach for CT and MRI segmentation in head and brain imaging. However, Müller et al. [17] identified critical evaluation challenges, finding that common segmentation metrics produce misleading results due to the class imbalance between small ROIs and large background areas.

Recent advances in ROI-based compression have shown promising results, though primarily in medical imaging applications. The Swin Transformer-based ROI model [26] demonstrated how ROI mask frameworks can enhance key region quality. Wasson [10] developed a hybrid compression technique for telemedicine that applies lossy fractal compression to non-ROI regions while preserving ROI areas with lossless compression. However, their approach lacks integration with high-performance computing frameworks and faces limitations in scalability for large-scale X-CT datasets.
ROI-based HPC compression has not yet been fully augmented with these advanced techniques, emphasizing the need for further research to integrate ROI-aware prioritization methods with high-performance scientific data compression. Current scientific compression frameworks such as Sz3 and Zfp apply uniform compression across entire datasets [11, 13], without considering the varying scientific importance of different regions within the data. Although ROI-driven models have been widely applied to images, particularly in medical imaging [10] and remote sensing applications [26], there is a significant gap in integrating ROI-based prioritization with error-bounded compression in large-scale scientific data. Current ROI methods typically operate at the pixel level in image compression, but scientific datasets often require region-specific precision control across entire data volumes. One improvement lies in developing hybrid compression methods that apply ROI prioritization to structured datasets, allowing Sz3 and Zfp to maximize efficiency without compromising scientific accuracy. Bridging this gap would improve data reduction strategies for high-resolution imaging, large-scale simulations, and scientific computing applications requiring precise information retention.

4 Proposed Approach

We propose a 2D X-CT extraction framework, illustrated in Figure 2, which consists of three key steps: 1) Pre-processing (cf. Section 4.1), 2) Feature extraction (cf. Section 4.2), and 3) HPC-based compression (cf. Section 4.3).

4.1 Pre-processing Stage

Before applying ROI-based compression, the raw X-CT data must be prepared to facilitate accurate region identification and consistent processing across different datasets.
The pre-processing consists of four phases: 1) a background subtraction phase, where the background is removed based on pixel intensity, 2) a normalization phase, to avoid inconsistent analysis due to variations in imaging conditions, 3) an adaptive thresholding phase, to automatically determine optimal threshold values based on local image characteristics, and 4) a binary masking phase, to create precise region-of-interest boundaries for subsequent analysis.

4.1.1 Background subtraction: X-CT scans exhibit a characteristic structure with distinct regions of varying scientific importance. We define the **foreground** as the central region containing the object or features of scientific interest, which typically corresponds to the specimen, material, or anatomical structure being analyzed in the middle of the X-CT scan. In contrast, we define the **background** as the surrounding areas that contain no valuable information for scientific analysis, such as empty space, mounting apparatus, or air gaps.

A key property of X-CT imaging is the clear intensity difference between the foreground and background regions, a result of varying material densities and X-ray absorption coefficients. The foreground objects typically exhibit higher intensity values than the relatively uniform low-intensity background areas. We leverage this intensity contrast to automatically distinguish between scientifically relevant and irrelevant regions.

To detect and remove the background based on this intensity difference, we employ a flexible approach with multiple options depending on the availability of data. Our solution primarily uses **background references** that are acquired during calibration scans without any sample present. When background references are unavailable, our method **estimates a static background** from the scene by analyzing regions where no sample is present and extrapolating these values across the image.

Figure 2: Adaptive ROIX Compression Framework: Systematic Workflow. The pipeline has three stages: 1. Pre-processing (load data, background subtraction using a pre-computed reference background image, intensity normalization, adaptive thresholding, binarization), 2. Feature extraction (per-row tuples of row index, x_start, x_end, and pixel values), and 3. Compression (error-bounded quantization followed by Gzip or Zstd, or the lossy compressors Sz3 and Zfp).

This approach provides flexibility by utilizing highly accurate background references when available, while still functioning effectively through background estimation when necessary. Once the background is established, our method removes it by pixel-by-pixel subtraction from the original image.

Given an input image $I(x, y)$ (where $x$ and $y$ represent spatial coordinates in the image) and a background reference $B(x, y)$, we compute the output image $M(x, y)$ with the following formula:

$$M(x, y) = I(x, y) - B(x, y)$$

By removing the background, we preserve all essential information while enabling targeted compression that focuses computational resources on scientifically significant features.

4.1.2 Intensity normalization: The acquisition conditions of X-CT images (Section 2) may vary during the procedure. These variations in scanning parameters, X-ray source intensity, or detector sensitivity can result in inconsistent images within the dataset.
This variability can negatively impact subsequent processing steps, particularly segmentation and feature extraction. To reduce this variability, we normalize all image pixels to fit within an 8-bit range (0-255). We selected this range because it provides sufficient dynamic range for distinguishing relevant material densities while standardizing the input for our optimized compression algorithms. This normalization ensures consistent processing regardless of the original acquisition conditions.

Given an input grayscale image $I(x, y)$ with pixel values varying from 0 to $I_{\max}$, the normalization process is computed as:

$$I_n(x, y) = \frac{I(x, y)}{I_{\max}} \times 255 \tag{1}$$

where $I_{\max}$ is the maximum pixel intensity in the image. Intensity normalization is applied to the background-subtracted object data (not the raw projections) to enhance the visibility of thin layers and low-intensity features that are difficult to detect at certain projection angles. This normalization aids ROI extraction while preserving the physical intensity relationships in the original data.

4.1.3 Adaptive Thresholding: After intensity normalization of the X-CT images, we apply a thresholding and binarization procedure to separate the object from its background. Thresholding works by setting a specific intensity value as a cut-off point: pixels above this value are considered part of the object, while pixels below are classified as background.

We specifically use the multi-Otsu adaptive thresholding technique [12], which extends the Otsu method [18] to compute multiple threshold values and segment images into classes based on intensity distribution. Unlike conventional fixed-threshold approaches, this method dynamically determines optimal threshold classes, making it effective for X-CT images with varying intensity levels.

4.1.4 Binarization: Once the threshold is found, we proceed with the binarization.
Binarization creates a representation, called a binary mask $M(x, y)$, where the object pixels have a value of 1 and the background pixels have a value of 0, isolating the object of interest:

$$M(x, y) = \begin{cases} 1, & \text{if pixel belongs to segmented ROI} \\ 0, & \text{otherwise} \end{cases} \tag{2}$$

This step eliminates noise and irrelevant pixels, ensuring that subsequent analysis focuses exclusively on the target structures or features of interest.

4.2 Feature Extraction

To extract a feature, we first need to determine its contour from the created binary mask.

4.2.1 Contour detection: We use OpenCV's findContours() function with RETR_EXTERNAL mode to detect only outer boundaries and CHAIN_APPROX_SIMPLE for efficient coordinate storage. From all detected contours, we select the largest by area to focus on the main object.

Once ROI boundaries are identified, we convert the contour information into a row-based representation for efficient data organization. For each row that intersects with the ROI, we determine the leftmost and rightmost pixel coordinates that fall within the object boundaries. This creates a compact representation that separates structural information from pixel content.

4.2.2 Feature extraction: As illustrated in Figure 3, for each row index $i$ that contains part of the object, we extract and store the following:

Geometry Data Structure

$$G(i) = [i, x_{\text{start}}, x_{\text{end}}] \tag{3}$$

Pixel Data Structure

$$P(i) = [p_0, p_1, \ldots, p_{n_i - 1}] \tag{4}$$

Here we define:
• $i$: the row index
• $x_{\text{start}}$: the starting $x$-coordinate of the object in row $i$
• $x_{\text{end}}$: the ending $x$-coordinate of the object in row $i$
• $p_j$: the pixel value at position $x_{\text{start}} + j$ in row $i$
• $n_i = x_{\text{end}} - x_{\text{start}}$: the number of pixels in row $i$

The complete ROI representation consists of:
• Geometry section $\mathcal{G} = \{G(i_1), G(i_2), \ldots, G(i_m)\}$ containing spatial indexing data
• Pixel section $\mathcal{P} = \{P(i_1), P(i_2), \ldots, P(i_m)\}$ containing intensity values

This approach separates spatial indexing information from pixel content. We preserve the indexing data exactly to maintain accurate reconstruction coordinates. The intensity values of the pixels can be compressed with controlled quality loss. In this way, we maintain precise ROI positioning while achieving file size reduction.

4.2.3 Absolute Error-Bounded Quantization. After separating ROI data into indexing and pixel parts, we compress the pixel values to reduce storage space while keeping the indexing data exact. We use absolute error-bounded quantization because it controls quality loss by ensuring compressed pixel values differ from the originals by no more than a set error limit. This method provides two key benefits: a significant reduction in file size and preserved scientific accuracy. Unlike general compressors that treat all data uniformly, our approach targets ROI-specific compression, preserving structural information while compressing pixels with controlled precision for scientific applications.

Input: Pixel sequence $D[0], D[1], \ldots, D[m-1]$ (where $m$ is the sequence length), positive absolute error tolerance $E_{\text{abs}}$

Output: Quantized sequence $Q[0], Q[1], \ldots, Q[m-1]$ where $|Q[i] - D[i]| \le E_{\text{abs}}$

Step 1: Calculate Error Bounds. For each pixel, define its acceptable range:

$$\text{upper}[i] = D[i] + E_{\text{abs}} \tag{5}$$

$$\text{lower}[i] = D[i] - E_{\text{abs}} \tag{6}$$

Step 2: Initialize

$$u = +\infty, \quad l = -\infty, \quad \text{head} = 0 \tag{7}$$

where $[l, u]$ tracks the intersection of acceptable ranges for the current group. Note: The midpoint is only computed after the actual data have updated the group range $[l, u]$, avoiding the undefined behavior of the initial values $\pm\infty$.
Step 3: For each pixel $i = 0$ to $m - 1$:

(1) Compute the intersection with the current group:

$$l_{\text{new}} = \max(l, \text{lower}[i]) \tag{8}$$

$$u_{\text{new}} = \min(u, \text{upper}[i]) \tag{9}$$

(2) Check if the ranges overlap:

• If $u_{\text{new}} < l_{\text{new}}$ (no overlap):

$$Q[j] = \frac{u + l}{2} \quad \forall j \in [\text{head}, i - 1] \tag{10}$$

$$l = \text{lower}[i] \tag{11}$$

$$u = \text{upper}[i] \tag{12}$$

$$\text{head} = i \tag{13}$$

• Else (ranges overlap):

$$l = l_{\text{new}} \tag{14}$$

$$u = u_{\text{new}} \tag{15}$$

Step 4: Final Group

$$Q[j] = \frac{u + l}{2} \quad \forall j \in [\text{head}, m - 1] \tag{16}$$

The midpoint of the acceptable range is chosen to minimize the absolute worst-case error across all pixels in the group.

Step 5: Clip to Valid Range

$$Q = \text{clip}(Q, 0, 255) \tag{17}$$

Usage: This absolute error-bounded quantization method serves as a preparation step for general-purpose compressors (Gzip, Zstd) to achieve error-bounded compression. Specialized error-bounded compressors (Sz3, Zfp) bypass this step because they incorporate their own error-control mechanisms.

4.3 Integrating HPC-based compression

At this point, only the regions of interest from the X-CT image have been preserved and prepared for compression. ROIX-Sz3 and ROIX-Zfp utilize the compressors' built-in error control mechanisms: Sz3 employs prediction-based compression with adjustable parameters, while Zfp uses block-based compression with fixed-rate or fixed-precision options. Both specialized compressors can adapt their strategies to preserve critical features in important regions.
This combination of ROIX's targeted data selection with both general-purpose and domain-specific compression techniques enables comprehensive evaluation across different scientific data types and application requirements.

Figure 3: ROI Extraction Pipeline: From Data to Targeted Feature Isolation. For each row i of the image containing the object, the pixel values between x_start and x_end (including the object's interior) are stored in a 1D array; the complete storage sequence concatenates these row sequences.

Our compression methodology begins with adaptive thresholding to identify regions of interest. After object detection, we extract their geometric data and convert it into a compact one-dimensional array stored as a binary file (.bin). This 1D representation preserves the critical structural elements: row indices, x-coordinate boundaries, and pixel intensity values. The compressed format consists of this binary file, which contains the 1D array quantized according to our error-bounded constraints. We then apply either lossless methods (Gzip, Zstd) or lossy scientific compressors (Sz3, Zfp) to this representation.

During decompression, our algorithm reconstructs the image through a systematic reverse process. Each row is precisely restored using the metadata captured during compression, specifically the row indices, x-coordinate boundaries, and pixel intensity values. This comprehensive approach ensures that the spatial relationships and shape of the object are accurately reproduced. The reconstructed object is then combined with the precomputed background to recreate the original image.
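The row-based extraction of Section 4.2.2, the quantization steps of Section 4.2.3, and the row-wise reconstruction described above can be sketched together in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `extract_rows`, `quantize_abs`, and `reconstruct` are hypothetical, the binary mask is taken as given rather than derived from contours, and `x_end` is treated as exclusive so that the row length equals `x_end - x_start` as in the definitions of Section 4.2.2.

```python
import numpy as np


def extract_rows(image, mask):
    """Split the ROI into geometry rows (i, x_start, x_end) and a flat pixel array."""
    geometry, pixels = [], []
    for i in range(mask.shape[0]):
        cols = np.flatnonzero(mask[i])
        if cols.size == 0:
            continue  # this row does not intersect the ROI
        # Assumption: x_end is exclusive, so n_i = x_end - x_start.
        x_start, x_end = int(cols[0]), int(cols[-1]) + 1
        geometry.append((i, x_start, x_end))
        pixels.append(image[i, x_start:x_end].astype(np.float64))
    flat = np.concatenate(pixels) if pixels else np.empty(0)
    return geometry, flat


def quantize_abs(data, e_abs):
    """Group consecutive values whose tolerance intervals intersect
    and replace each group by the midpoint of the shared interval."""
    d = np.asarray(data, dtype=np.float64)
    q = np.empty_like(d)
    lo, hi, head = -np.inf, np.inf, 0
    for i, v in enumerate(d):
        new_lo, new_hi = max(lo, v - e_abs), min(hi, v + e_abs)
        if new_hi < new_lo:
            # No overlap: close the current group, start a new one at i.
            q[head:i] = (lo + hi) / 2
            lo, hi, head = v - e_abs, v + e_abs, i
        else:
            # Overlap: shrink the shared interval.
            lo, hi = new_lo, new_hi
    if d.size:
        q[head:] = (lo + hi) / 2  # final group
    return np.clip(q, 0, 255)


def reconstruct(shape, geometry, pixels):
    """Paste the stored row segments back at their exact coordinates."""
    out = np.zeros(shape, dtype=np.float64)
    offset = 0
    for i, x_start, x_end in geometry:
        n = x_end - x_start
        out[i, x_start:x_end] = pixels[offset:offset + n]
        offset += n
    return out


# Toy 4x6 background-subtracted image with an object in rows 1 and 2.
image = np.array([[0, 0, 0, 0, 0, 0],
                  [0, 100, 101, 103, 0, 0],
                  [0, 0, 150, 152, 200, 0],
                  [0, 0, 0, 0, 0, 0]], dtype=np.float64)
mask = image > 0
geometry, pixels = extract_rows(image, mask)
quantized = quantize_abs(pixels, e_abs=2.0)
restored = reconstruct(image.shape, geometry, quantized)
max_error = np.abs(restored - image)[mask].max()
print(geometry, quantized, max_error)
```

In this toy case the six ROI pixels collapse to three distinct values, which is what makes the quantized array more compressible by a general-purpose compressor, while the geometry tuples are kept exact so that every pixel returns to its original position.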
5 Experimental Evaluation

We conduct our evaluations across six key components: (1) ROI detection evaluation measuring spatial reduction efficiency, (2) ROI quality evaluation using standard metrics (DSC, IoU, sensitivity, specificity, accuracy, Kappa coefficient, AUC, and Average Hausdorff Distance) to validate extraction accuracy, (3) compression ratio analysis comparing lossless compression with relative improvements to demonstrate our method's effectiveness, followed by error-bounded quantization analysis across different error bounds to achieve higher compression ratios, (4) compression time evaluation, (5) decompression time assessment for reconstruction efficiency, and (6) quality assessment using the Structural Similarity Index (SSIM) to validate preservation of meaningful content. This multifaceted evaluation demonstrates both the effectiveness of our ROI extraction methodology and the practical benefits achieved through integrated compression optimization.

Hardware Configurations: Our experiments were conducted on an x86-64 system (SYS-4029GP-TRT2) equipped with an Intel(R) Xeon(R) Gold 5215 CPU @ 2.50GHz and an NVIDIA A100 GPU with 80 GB of memory.

Software Environment: Our implementation was developed in a Python environment. For the Sz3 and Zfp compression algorithms, we utilized the LibPressio integration via Spack.

Dataset: We tested our approach on several X-CT datasets; their details are provided in Table 1.

| Dataset name | Resolution | Number of images | Total size (GB) |
|---|---|---|---|
| Wood [23] | 740x300 | 900 | 0.4 |
| Fossil embryos of cnidarians [24] | 4090x3008 | 1804 | 42 |
| Ryugu [25] | 1207x659 | 903 | 1.4 |
| Chicken [15] | 2240x2368 | 721 | 3.83 |
| Walnut [15] | 2240x2368 | 721 | 7.66 |
| Pine cone [15] | 2240x2368 | 721 | 7.66 |
| Seashell [15] | 2240x2368 | 721 | 7.66 |

Table 1: X-CT Dataset Specification

5.1 ROI detection evaluation

The evaluation demonstrates the framework's ability to accurately identify and extract object structures while eliminating background areas.
Figure 4 demonstrates the effectiveness of the proposed ROI extraction methodology on different datasets, highlighting significant variations in spatial reduction factors that reflect the inherent characteristics of different image types. The results show that the Ryugu dataset achieved the highest spatial reduction factor of 8.49×. In contrast, the Fossil dataset exhibited the lowest spatial reduction factor of 1.51×, implying that meaningful features are more widely distributed throughout the image, requiring a larger ROI that encompasses approximately 66% of the original image area. The remaining datasets (Chicken: 4.87×, Pine cone: 4.66×, Seashell: 3.81×, Walnut: 2.62×, and Wood: 2.45×) demonstrate intermediate spatial reduction factors, ranging from 2.45× to 4.87×. These varying reduction factors highlight the adaptability of the ROI extraction algorithm to different types of image content, with the average spatial reduction factor of 4.06× across all datasets indicating substantial potential for storage and bandwidth optimization in practical applications. The significant variation in spatial reduction factors underscores the importance of content-adaptive ROI identification methods that can automatically adjust to the spatial distribution characteristics of different image types.

Figure 4: Spatial reduction achieved through ROI extraction across different datasets. The spatial reduction factor indicates how many times smaller the ROI is compared to the original image.
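As a quick arithmetic check of the figures quoted above, the short sketch below recomputes the mean reduction factor and the share of the image retained by each ROI. It assumes only that a spatial reduction factor r means the ROI covers 1/r of the original image area, which is how the caption of Figure 4 describes it; the variable names are illustrative.

```python
# Spatial reduction factors as reported in Section 5.1.
factors = {
    "Wood": 2.45,
    "Fossil": 1.51,
    "Ryugu": 8.49,
    "Chicken": 4.87,
    "Walnut": 2.62,
    "Pine cone": 4.66,
    "Seashell": 3.81,
}

# Arithmetic mean over the seven datasets.
mean_factor = sum(factors.values()) / len(factors)

# Assumption: a factor r means the ROI keeps 1/r of the image area.
roi_share_percent = {name: 100.0 / r for name, r in factors.items()}

print(f"mean reduction factor: {mean_factor:.2f}x")
for name, share in roi_share_percent.items():
    print(f"{name}: ROI covers about {share:.0f}% of the image")
```

The mean comes out at about 4.06×, and the Fossil factor of 1.51× corresponds to an ROI of roughly 66% of the image, matching the values stated in the text.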
5.2 ROI quality evaluation

To comprehensively evaluate our ROI detection method, we used multiple standard metrics that measure different aspects of segmentation performance. The Dice Similarity Coefficient (DSC) and Intersection over Union (IoU) assess the overlap between our detected regions and the ground-truth object. Sensitivity measures how well our method identifies all relevant pixels, while specificity evaluates its ability to correctly exclude background areas. Accuracy provides general correctness, and the Kappa coefficient accounts for chance agreement by measuring performance beyond random classification. The Area Under Curve (AUC) summarizes performance across different threshold values, and the Average Hausdorff Distance (AHD) measures boundary precision: for each region it averages the distance from every point to the nearest point of the other region, and takes the larger of the two directed averages. Together, these metrics provide a complete picture of our method's detection accuracy, boundary precision, and reliability across different object types and imaging conditions. The evaluation metrics are mathematically defined as follows:

$$\mathrm{DSC} = \frac{2\,|A \cap B|}{|A| + |B|} \tag{18}$$

$$\mathrm{IoU} = \frac{|A \cap B|}{|A \cup B|} \tag{19}$$

$$\mathrm{Sensitivity} = \frac{TP}{TP + FN} \tag{20}$$

$$\mathrm{Specificity} = \frac{TN}{TN + FP} \tag{21}$$

$$\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \tag{22}$$

$$f_c = \frac{(TN + FN)(TN + FP) + (FP + TP)(FN + TP)}{TP + TN + FN + FP} \tag{23}$$

$$\mathrm{Kappa} = \frac{(TP + TN) - f_c}{(TP + TN + FN + FP) - f_c} \tag{24}$$

$$\mathrm{AUC} = 1 - \frac{1}{2}\left(\frac{FP}{FP + TN} + \frac{FN}{FN + TP}\right) \tag{25}$$

$$\mathrm{AHD} = \max\left(\frac{1}{|A|}\sum_{a \in A}\min_{b \in B} d(a, b),\; \frac{1}{|B|}\sum_{b \in B}\min_{a \in A} d(a, b)\right) \tag{26}$$

where $A$ represents the predicted ROI, $B$ represents the ground-truth ROI, $TP$ is true positive, $TN$ is true negative, $FP$ is false positive, $FN$ is false negative, $f_c$ is the expected agreement by chance, and $d(a, b)$ is the Euclidean distance between the points $a$ and $b$.
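The definitions in Eqs. (18) to (26) can be checked on a small example. The following is a minimal sketch using only the Python standard library, not the authors' evaluation code. The function names and the 6×6 toy masks are hypothetical, the AHD is computed over all ROI pixels by brute force, and the code assumes both masks contain foreground as well as background pixels, since otherwise some denominators are zero.

```python
import math

def confusion_counts(pred, truth):
    """Count TP, TN, FP, FN for two equally sized binary masks (lists of rows)."""
    tp = tn = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1
            elif p:
                fp += 1
            elif t:
                fn += 1
            else:
                tn += 1
    return tp, tn, fp, fn

def segmentation_metrics(pred, truth):
    """Evaluate Eqs. (18)-(25) from the confusion counts."""
    tp, tn, fp, fn = confusion_counts(pred, truth)
    n = tp + tn + fp + fn
    fc = ((tn + fn) * (tn + fp) + (fp + tp) * (fn + tp)) / n  # Eq. (23)
    return {
        "DSC": 2 * tp / (2 * tp + fp + fn),  # |A| + |B| = 2TP + FP + FN
        "IoU": tp / (tp + fp + fn),          # |A u B| = TP + FP + FN
        "Sensitivity": tp / (tp + fn),
        "Specificity": tn / (tn + fp),
        "Accuracy": (tp + tn) / n,
        "Kappa": ((tp + tn) - fc) / (n - fc),
        "AUC": 1 - 0.5 * (fp / (fp + tn) + fn / (fn + tp)),
    }

def mask_points(mask):
    """Coordinates of all foreground pixels in a binary mask."""
    return [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]

def average_hausdorff(points_a, points_b):
    """Eq. (26): the larger of the two directed average nearest-point distances."""
    def directed(src, dst):
        return sum(min(math.dist(s, d) for d in dst) for s in src) / len(src)
    return max(directed(points_a, points_b), directed(points_b, points_a))

# Toy 6x6 example: the prediction misses the right-most column of a 4x4 object.
truth = [[1 if 1 <= r <= 4 and 1 <= c <= 4 else 0 for c in range(6)] for r in range(6)]
pred = [[1 if 1 <= r <= 4 and 1 <= c <= 3 else 0 for c in range(6)] for r in range(6)]

metrics = segmentation_metrics(pred, truth)
ahd = average_hausdorff(mask_points(pred), mask_points(truth))
for name, value in metrics.items():
    print(f"{name:12s} {value:.4f}")
print(f"{'AHD':12s} {ahd:.4f}")
```

In this toy case the prediction has perfect specificity but misses a quarter of the object, so sensitivity and IoU are both 0.75 while accuracy remains near 0.89, which illustrates why the metrics are reported together.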
This paper evaluates the effectiveness of the proposed method using the Dice Similarity Coefficient (DSC), sensitivity, and other image segmentation metrics [17]. Reporting several complementary metrics shows how our method performs from different angles and avoids common evaluation mistakes in this field.

Our evaluation of seven test cases demonstrates that our segmentation method achieves strong performance across different scenarios. The DSC ranged from 0.9508 to 0.9992, with six out of seven cases exceeding 0.99, indicating excellent segmentation quality. Case 3 showed the most interesting result: despite having the lowest DSC (0.9508), it still maintained high accuracy (0.9882). Relative to the best values observed, accuracy fell by only about 1.1 percentage points (0.9994 to 0.9882) while the DSC fell by nearly 5 percentage points (0.9992 to 0.9508), which shows how accuracy can hide a loss of segmentation quality. This is consistent with accuracy being inflated in X-CT imaging by large background areas. Cases 4-7 achieved perfect sensitivity (1.0), showing complete detection of all ROI pixels, while Cases 1-3 had slightly lower sensitivity (0.9306-0.9986). Notably, Case 2 revealed a critical finding: despite an excellent DSC (0.9947), it had the highest Average Hausdorff Distance (52.41 pixels), indicating poor boundary precision that overlap metrics alone cannot detect. In contrast, Cases 4-7 showed both high DSC (>0.997) and low AHD (1.0-1.91), representing optimal performance. These results confirm that our method performs excellently overall, with only Case 3 showing moderate performance and Case 2 highlighting the importance of using boundary-sensitive metrics alongside overlap-based measures.

[Figure 5: Comparative Evaluation of Segmentation Metrics Across Varying Segmentation Scenarios. Seven image panels, one per dataset; the metric values printed on the panels are listed below in panel order.]

| Case | Dataset | DSC | IoU | Sens | Spec | Acc | Kappa | AUC | AHD |
|---|---|---|---|---|---|---|---|---|---|
| 1 | Wood [23] | 0.9992 | 0.9983 | 0.9986 | 0.9998 | 0.9993 | 0.9986 | 0.9993 | 3.61 |
| 2 | Fossil [24] | 0.9947 | 0.9895 | 0.9938 | 0.9913 | 0.9930 | 0.9843 | 0.9924 | 52.41 |
| 3 | Ryugu [25] | 0.9508 | 0.9062 | 0.9306 | 0.9962 | 0.9882 | 0.9441 | 0.9640 | 35.03 |
| 4 | Chicken [15] | 0.9982 | 0.9964 | 1.0 | 0.9991 | 0.9993 | 0.9977 | 1.0 | 1.00 |
| 5 | Walnut [15] | 0.9989 | 0.9979 | 1.0 | 0.9987 | 0.9992 | 0.9983 | 1.0 | 1.91 |
| 6 | Pine cone [15] | 0.9972 | 0.9945 | 1.0 | 0.9985 | 0.9988 | 0.9965 | 1.0 | 1.50 |
| 7 | Seashell [15] | 0.9988 | 0.9977 | 1.0 | 0.9992 | 0.9994 | 0.9984 | 1.0 | 1.0 |

Figure 5: Evaluation metrics measuring different aspects of segmentation performance: overlap accuracy (DSC, IoU), detection capability (Sensitivity, Specificity), overall correctness (Accuracy), and boundary precision (AHD).

5.3 Compression Ratio evaluation

We first evaluated the compression performance of different compressors applied to multiple X-CT datasets without error-bound quantization (lossless). Figure 6 compares the compression ratio (CR) of ROIX-Gzip, ROIX-Zstd, ROIX-Sz3, and ROIX-Zfp across the datasets. Among the compressors tested, ROIX-Gzip and ROIX-Zstd achieve higher compression ratios on most datasets. ROIX-Gzip reaches a compression ratio of 18.39 on the ryugu dataset [25]. ROIX-Sz3 demonstrates moderate compression efficiency. The results indicate that ROIX-Gzip outperforms the other methods when no quantization is applied. In terms of relative improvement (ROIX-Comp ÷ Standard), the ryugu dataset [25] shows the best performance, achieving improvement factors of 12.34× and 11.95× with ROIX-Gzip and ROIX-Zstd, respectively.
The wood dataset [23] also benefits from our approach, with 5.99× and 5.83× improvements using the same compression methods. Although most datasets show positive results for the various compression algorithms, the fossil dataset [24] reveals an interesting pattern: ROIX-Sz3 performs worse than the baseline (0.79×), suggesting that our approach does not universally improve every compression technique on every type of data. In general, ROIX-Gzip and ROIX-Zstd deliver the best performance on most datasets, while ROIX-Zfp shows minimal improvement or even slight degradation in some cases.

Table 2 shows the effect of absolute error-bound quantization [19] on the compression ratio for the different compressors on the X-CT datasets. As the absolute error bound increases, the compression ratio generally improves. ROIX-Zfp is the main exception: it plateaus at the two largest bounds on four datasets, and on the fossil dataset it drops from 3.57 at 5e0 to 2.20 at 1e1. We evaluated five error bounds ranging from 1e-1 to 1.5e1, where 1e-1 represents minimal data reduction and 1.5e1 represents a greater reduction with potentially greater information loss.

Based on the compression ratios in our tables, several important patterns appear across the different datasets.
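To make the role of the error bound concrete, the sketch below applies a generic uniform scalar quantizer with bins of width 2·eb, which guarantees a pointwise error of at most eb, and then compresses the bin indices with zlib. This is an illustrative assumption, not the quantizer of [19] or the ROIX-Comp pipeline. The synthetic 8-bit signal, the 16-bit index packing, and all function names are hypothetical.

```python
import array
import math
import random
import zlib

def quantize(values, error_bound):
    """Map each value to an integer bin index; bins are 2 * error_bound wide."""
    step = 2.0 * error_bound
    return [round(v / step) for v in values]

def dequantize(indices, error_bound):
    """Reconstruct each value as the centre of its bin."""
    step = 2.0 * error_bound
    return [i * step for i in indices]

def compression_ratio(values, error_bound):
    """Original size (1 byte per 8-bit pixel) over the zlib-compressed index size."""
    packed = array.array("H", quantize(values, error_bound)).tobytes()  # 16-bit indices
    return len(values) / len(zlib.compress(packed, 9))

# Hypothetical test signal: a smooth 8-bit intensity profile plus Gaussian noise.
rng = random.Random(0)
pixels = [
    min(255, max(0, int(128 + 100 * math.sin(i / 50) + rng.gauss(0, 3))))
    for i in range(20000)
]

for eb in (0.1, 1.0, 5.0, 10.0, 15.0):
    restored = dequantize(quantize(pixels, eb), eb)
    max_err = max(abs(p - r) for p, r in zip(pixels, restored))
    print(f"eb={eb:5.1f}  max error={max_err:5.2f}  CR={compression_ratio(pixels, eb):6.2f}")
```

Larger bounds merge more neighbouring intensities into the same bin, which removes noise-level variation and leaves longer repeated runs for the entropy coder. The absolute ratios printed by this sketch depend on the synthetic signal and the 16-bit packing, so they are not comparable to Table 2.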
Table 2: Compression ratios for different datasets and methods across various error bounds

| Dataset | Method | 1e-1 | 1e0 | 5e0 | 1e1 | 1.5e1 |
|---|---|---|---|---|---|---|
| Wood | ROIX-Gzip | 6.53 | 6.60 | 10.07 | 17.46 | 27.01 |
| Wood | ROIX-Zstd | 6.35 | 6.36 | 9.10 | 18.29 | 29.47 |
| Wood | ROIX-Sz3 | 4.40 | 8.05 | 17.22 | 25.02 | 39.56 |
| Wood | ROIX-Zfp | 1.03 | 2.92 | 3.57 | 4.03 | 4.03 |
| Fossil | ROIX-Gzip | 4.71 | 4.85 | 10.04 | 39.90 | 80.34 |
| Fossil | ROIX-Zstd | 4.81 | 4.87 | 8.87 | 42.19 | 79.62 |
| Fossil | ROIX-Sz3 | 4.91 | 8.20 | 19.23 | 36.96 | 74.86 |
| Fossil | ROIX-Zfp | 1.90 | 2.00 | 3.57 | 2.20 | 2.20 |
| Ryugu | ROIX-Gzip | 18.39 | 18.39 | 26.31 | 40.29 | 52.56 |
| Ryugu | ROIX-Zstd | 17.69 | 17.69 | 22.27 | 37.81 | 50.53 |
| Ryugu | ROIX-Sz3 | 5.44 | 8.40 | 16.28 | 23.01 | 35.64 |
| Ryugu | ROIX-Zfp | 1.01 | 3.14 | 3.88 | 4.42 | 4.42 |
| Chicken | ROIX-Gzip | 4.42 | 8.26 | 122.09 | 183.04 | 208.20 |
| Chicken | ROIX-Zstd | 4.17 | 6.32 | 118.40 | 197.25 | 230.81 |
| Chicken | ROIX-Sz3 | 3.91 | 7.50 | 16.24 | 28.66 | 46.35 |
| Chicken | ROIX-Zfp | 1.02 | 2.94 | 3.61 | 4.07 | 4.07 |
| Walnuts | ROIX-Gzip | 3.08 | 8.40 | 27.82 | 52.42 | 73.74 |
| Walnuts | ROIX-Zstd | 3.94 | 6.59 | 62.40 | 107.64 | 153.57 |
| Walnuts | ROIX-Sz3 | 3.35 | 6.28 | 11.28 | 16.44 | 21.23 |
| Walnuts | ROIX-Zfp | 1.08 | 1.48 | 1.86 | 2.60 | 3.40 |
| Pine Cone | ROIX-Gzip | 3.87 | 7.42 | 54.83 | 100.10 | 138.36 |
| Pine Cone | ROIX-Zstd | 3.83 | 5.90 | 56.94 | 96.48 | 132.31 |
| Pine Cone | ROIX-Sz3 | 3.51 | 6.22 | 11.11 | 16.15 | 20.77 |
| Pine Cone | ROIX-Zfp | 1.07 | 1.62 | 2.02 | 2.70 | 4.00 |
| Seashell | ROIX-Gzip | 3.94 | 8.34 | 43.43 | 75.64 | 109.97 |
| Seashell | ROIX-Zstd | 3.88 | 6.86 | 40.68 | 68.63 | 101.80 |
| Seashell | ROIX-Sz3 | 3.57 | 5.63 | 9.35 | 12.94 | 16.41 |
| Seashell | ROIX-Zfp | 1.10 | 1.65 | 2.08 | 2.70 | 4.05 |

Figure 6: Comparative Analysis of Compression Ratios Across X-CT Datasets with relative improvements

When examining the compression performance dataset by dataset in Table 2, we observe that ROIX-Zstd and ROIX-Gzip achieve the highest compression ratios at the higher error bounds (1e1 and 1.5e1) on every dataset except Wood, where ROIX-Sz3 leads. The Chicken dataset shows exceptional results, reaching up to 230.81× compression with ROIX-Zstd.
The wood [23] and ryugu [25] datasets show more modest but still significant compression, while ROIX-Sz3 demonstrates more balanced performance across the different error bounds. Most datasets exhibit a dramatic increase in compression ratio when moving from the 1e0 to the 5e0 error bound, indicating a key threshold where lossy compression becomes significantly more effective. In contrast, ROIX-Zfp shows the lowest compression performance on all datasets, never exceeding 4.5× even at the highest error bounds. The per-dataset view highlights that different datasets respond differently to compression methods: objects such as the pine cone [15] and walnuts [15] show exceptional compressibility with ROIX-Zstd (reaching 132-153× compression), while ROIX-Sz3 performs particularly well on the wood [23] dataset. This suggests that compression algorithm selection should be tailored to specific dataset characteristics for optimal results in practical applications.

5.4 Compression time evaluation

The compression times presented in Figure 7 for the seven X-CT datasets reveal significant performance differences between the four ROIX compression methods.

ROIX-Zstd shows superior speed in most cases, particularly excelling with the chicken [15] dataset (0.47 ms), while ROIX-Sz3 shows competitive performance, being fastest for the wood dataset (1.106 ms). ROIX-Zfp performs well on certain datasets (wood [23], ryugu [25]) but exhibits notable slowdowns on others (pinecone [15], seashell [15]), suggesting data-dependent behavior. ROIX-Gzip consistently requires the longest processing times, particularly struggling with the fossil [24] (8656.19 ms) and seashell [15] (2301.29 ms) datasets. The fossil dataset [24] presents the greatest challenge, requiring significantly longer processing times with every compressor.
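Timings of this kind are typically collected by wrapping the codec calls in a high-resolution timer. The sketch below shows one way to do that. It is not the measurement harness used for Figures 7 and 8, whose details are not given in the text. It uses zlib (DEFLATE, the algorithm behind gzip) from the Python standard library as a stand-in compressor, and the function name `time_codec` and the synthetic 256×256 patch are hypothetical.

```python
import time
import zlib

def time_codec(payload, level=6, repeats=5):
    """Return (compressed bytes, best compression ms, best decompression ms)."""
    best_compress_ms = best_decompress_ms = float("inf")
    compressed = b""
    for _ in range(repeats):
        start = time.perf_counter()
        compressed = zlib.compress(payload, level)
        middle = time.perf_counter()
        restored = zlib.decompress(compressed)
        end = time.perf_counter()
        if restored != payload:
            raise ValueError("round trip did not reproduce the input")
        # Keep the fastest run to reduce the influence of scheduling noise.
        best_compress_ms = min(best_compress_ms, (middle - start) * 1e3)
        best_decompress_ms = min(best_decompress_ms, (end - middle) * 1e3)
    return compressed, best_compress_ms, best_decompress_ms

# Hypothetical 256x256 8-bit ROI patch: a bright band on a zero background.
width = height = 256
patch = bytes(200 if 64 <= (i % width) < 192 else 0 for i in range(width * height))

compressed, c_ms, d_ms = time_codec(patch)
print(f"CR={len(patch) / len(compressed):.1f}  compress={c_ms:.3f} ms  decompress={d_ms:.3f} ms")
```

Taking the minimum over several repeats is a design choice that favours reproducibility; reporting the mean or median instead would be equally defensible, and the paper does not state which it uses.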
Performance differences span multiple orders of magnitude, and ROIX-Zstd and ROIX-Sz3 are consistently faster than ROIX-Gzip, making them preferable choices for time-sensitive X-CT data processing applications.

[Figure 7: bar chart. x-axis: Datasets (Wood, Fossil Embryos, Ryugu, Chicken, Walnuts, Pinecone, Seashell); y-axis: Compression Time (ms), logarithmic, 10^0 to 10^4; series: ROIX-Gzip, ROIX-Zstd, ROIX-Sz3, ROIX-Zfp.]

Figure 7: Compression Time Performance Evaluation (Log Scale)

5.5 Decompression time evaluation

The decompression times shown in Figure 8 follow a similar trend to the compression times, with notable performance variations among the four ROIX methods. ROIX-Sz3 generally exhibits the fastest decompression speeds, particularly excelling with the wood [23] (0.58 ms) and ryugu [25] (1.13 ms) datasets, while showing consistent performance on most samples. ROIX-Gzip and ROIX-Zstd perform similarly to each other, with ROIX-Zstd showing slightly better results on some datasets, although both struggle with the more complex fossil dataset [24] (1046.08 ms and 979.99 ms, respectively). ROIX-Zfp shows the slowest decompression times on most datasets, particularly walnuts [15] (449 ms), seashell [15] (359 ms), and pinecone [15] (256 ms). For time-critical applications requiring fast decompression, ROIX-Sz3 emerges as the strongest choice, with clear decompression advantages over the other methods on certain datasets.

[Figure 8: bar chart. x-axis: Datasets (Wood, Fossil Embryos, Ryugu, Chicken, Walnuts, Pinecone, Seashell); y-axis: Decompression Time (ms), logarithmic, 10^0 to 10^3; series: ROIX-Gzip, ROIX-Zstd, ROIX-Sz3, ROIX-Zfp.]

Figure 8: Decompression Time Comparison (Log Scale)

5.6 Decompression quality evaluation

Quantitative analysis was performed on reconstructed images derived from raw data.
The evaluation focused specifically on targeted spatial features, enabling a comprehensive assessment of the selected structural elements.

To verify the effectiveness of our object extraction process, we compared the original image with the reconstructed image after compression and decompression. We evaluated the quality and similarity between these images using the Structural Similarity Index (SSIM). Figure 9 illustrates the comparison and confirms that our method preserves objects with exceptional precision. For lossless compression configurations, the analysis demonstrates perfect structural preservation with an SSIM score of 1.0, indicating identical structural patterns between the original and reconstructed images. Lossy compression would likely reduce the SSIM score below the perfect 1.0 achieved with lossless methods, and the degree of reduction would depend on the specific error-bound settings applied during compression. These measurements show that, in the lossless configuration, our extraction method preserves even the smallest details of the original object with high accuracy.

[Figure 9: two panels. A. Original Image (original image with all surrounding information); B. ROI Marked, followed by ROI Extraction (ROI with surrounding data removed).]

Figure 9: Original vs ROI image analysis

6 Discussion

Our ROI-based approach shows promising results but faces challenges with complex structures, particularly low-contrast boundaries and irregular shapes. The exceptional compression performance on the Ryugu dataset demonstrates how image characteristics fundamentally impact efficiency: homogeneous backgrounds and well-defined boundaries achieve significantly better results than complex X-CT images with overlapping tissues and noise. Our analysis confirms that accuracy metrics can be misleading in X-CT imaging, as large background areas artificially inflate scores while masking segmentation quality degradation.
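A worked example makes the inflation effect explicit. The numbers below are hypothetical and not taken from the evaluation. Consider a slice of $N = 10^6$ pixels whose true ROI covers 50,000 pixels, and a predicted mask that misses 10,000 of them without adding any false positives, so that $TP = 40{,}000$, $FN = 10{,}000$, $FP = 0$, and $TN = 950{,}000$. Applying Eqs. (18), (20), and (22):

$$\mathrm{Accuracy} = \frac{40{,}000 + 950{,}000}{1{,}000{,}000} = 0.990$$

$$\mathrm{DSC} = \frac{2 \cdot 40{,}000}{40{,}000 + 50{,}000} \approx 0.889$$

$$\mathrm{Sensitivity} = \frac{40{,}000}{40{,}000 + 10{,}000} = 0.800$$

Accuracy stays at 99% because the 950,000 correctly labelled background pixels dominate the count, even though one fifth of the object was lost.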
Compression performance depends strongly on background homogeneity, noise levels, and ROI characteristics. General-purpose compressors (Gzip and Zstd) gained the most from ROI-extracted data, while the HPC-oriented compressors benefited far less: ROIX-Sz3 fell below the baseline on the fossil dataset (0.79×), and ROIX-Zfp performed particularly poorly. ZFP achieves only about 2-4× compression even at high error bounds, significantly underperforming its typical behavior on scientific data. Possible factors include ZFP's block structure interacting poorly with our ROI patch dimensions, 8-bit normalization reducing exploitable redundancy, or a mismatch between the normalized data characteristics and ZFP's floating-point design. More research is needed to fully characterize the factors behind these performance differences, which we identify as important future work. We recommend optimizing acquisition parameters to minimize background complexity and enhance tissue contrast. These findings highlight opportunities to develop image-characteristic-aware compression algorithms that automatically adapt to the properties of the dataset.

Although our results demonstrate effective ROI evaluation and compression performance, no direct comparison was performed with other state-of-the-art ROI methods on identical datasets. Fair comparison between different ROI approaches is complex due to varying optimization targets, preprocessing requirements, and dataset-specific parameter tuning needs. Our evaluation focused primarily on the integration of ROI detection with compression efficiency rather than on comparative segmentation performance.

7 Conclusion

In this paper, we have proposed an ROI detection and extraction framework (ROIX-Comp) optimized specifically for compression efficiency while maintaining data quality.
Our method applies intensity normalization and adaptive thresholding to improve object boundary detection and structure preservation in X-CT data. The framework supports both lossless and lossy compression methods, depending on the application requirements. The evaluation results demonstrate that compression performance is highly dependent on image characteristics and error-bounded quantization. Integrating ROI-based extraction with adaptive compression improves storage efficiency and computational performance for large-scale X-CT datasets, achieving up to 88% data reduction (corresponding to the 8.49× spatial reduction on the Ryugu dataset) while preserving valuable information.

Future work will include a comprehensive comparative evaluation against state-of-the-art ROI detection methods using identical datasets to establish relative performance benchmarks. We also plan to expand this framework to support diverse X-CT applications, including materials science, integrate advanced deep learning-based segmentation models, and explore newer compression techniques tailored for scientific imaging to ensure optimal performance across various applications.

Acknowledgments

This work has been supported by the COE research grant in computational science from Hyogo Prefecture and Kobe City through the Foundation for Computational Science. This work ("AI for Science" supercomputing platform project) was supported by the RIKEN TRIP initiative (System software for AI for Science).

References

[1] H. L. Abbott and M. S. Taylor. 2023. Applications of X-ray Computed Tomography in Science, Industry, and Medicine. https://www.xctexamples.com Accessed: 2025-03-30.
[2] APS. 2025. Advanced Photon Source. https://www.aps.anl.gov Accessed: 2025-03-30.
[3] Yann Collet and Chip Turner. 2016. Smaller and Faster Data Compression with Zstandard. Facebook Engineering Blog. https://engineering.fb.com/2016/08/31/core-infra/smaller-and-faster-data-compression-with-zstandard/
[4] ESRF. 2025. European Synchrotron Radiation Facility. https://www.esrf.eu Accessed: 2025-03-30.
[5] GZIP. 1993. The GZIP Home Page. http://www.gzip.org Accessed: 2025-02-01.
[6] Sascha Manuel Huck, George S. K. Fung, Katia Parodi, and Karl Stierstorfer. 2021. On the potential of ROI imaging in x-ray CT – A comparison of novel dynamic beam attenuators with current technology. Medical Physics 48, 7 (July 2021), 3479–3499. doi:10.1002/mp.14879
[7] David A. Huffman. 1952. A Method for the Construction of Minimum-Redundancy Codes. Proceedings of the IRE 40, 9 (September 1952), 1098–1101. doi:10.1109/JRPROC.1952.273898
[8] T. Ishikawa et al. 2014. SPring-8-II Conceptual Design Report. Technical Report. RIKEN SPring-8 Center, Hyogo, Japan. https://rsc.riken.jp/pdf/SPring-8-II.pdf
[9] T. Kameshima and T. Hatsui. 2022. Development of 150 Mpixel lens-coupled X-ray imaging detectors equipped with diffusion-free transparent scintillators based on an analytical optimization approach. J. Phys. Conf. Ser. 2380, 1 (2022), 012094. doi:10.1088/1742-6596/2380/1/012094
[10] Manpreet Kaur and Vikas Wasson. 2015. ROI Based Medical Image Compression for Telemedicine Application. Procedia Computer Science 70 (2015), 579–585. doi:10.1016/j.procs.2015.10.037
[11] Xin Liang, Kai Zhao, Sheng Di, Sihuan Li, Robert Underwood, Ali M. Gok, Jiannan Tian, Junjing Deng, Jon C. Calhoun, Dingwen Tao, Zizhong Chen, and Franck Cappello. 2021. SZ3: A Modular Framework for Composing Prediction-Based Error-Bounded Lossy Compressors. arXiv:2111.02925 [cs.DC]
[12] P-S. Liao, T-S. Chen, and P-C. Chung. 2001. A fast algorithm for multilevel thresholding. Journal of Information Science and Engineering 17, 5 (2001), 713–727.
[13] Peter Lindstrom, Jeffrey Hittinger, James Diffenderfer, Alyson Fox, Daniel Osei-Kuffuor, and Jeffrey Banks. 2025. ZFP: A compressed array representation for numerical computations. The International Journal of High Performance Computing Applications 39, 1 (2025), 104–122. doi:10.1177/10943420241284023
[14] Michael T. McCann, Laura Vilaclara, and Michael Unser. 2018. Region of interest X-ray computed tomography via corrected back projection. In 2018 IEEE 15th International Symposium on Biomedical Imaging (ISBI 2018). 65–69. doi:10.1109/ISBI.2018.8363524
[15] Alexander Meaney. 2022. Cone-Beam Computed Tomography Dataset of a Chicken Bone Imaged at 4 Different Dose Levels. doi:10.5281/zenodo.6990764
[16] Viswanath Muthukrishnan, Sandeep Jaipurkar, and Nedumaran Damodaran. 2024. Continuum topological derivative - A novel application tool for segmentation of CT and MRI images. NeuroImage: Reports 4, 3 (2024), 100215. doi:10.1016/j.ynirp.2024.100215
[17] Dominik Müller, Iñaki Soto-Rey, and Frank Kramer. 2022. Towards a guideline for evaluation metrics in medical image segmentation. BMC Research Notes 15, 1 (2022), 210. doi:10.1186/s13104-022-06096-y PMID: 35725483; PMCID: PMC9208116.
[18] N. Otsu. 1979. A Threshold Selection Method from Gray-Level Histograms. IEEE Transactions on Systems, Man, and Cybernetics 9, 1 (1979), 62–66. doi:10.1109/TSMC.1979.4310076
[19] Rupak Roy, Kento Sato, Subhadeep Bhattacharya, Xingang Fang, Yasumasa Joti, Takaki Hatsui, Toshiyuki Nishiyama Hiraki, Jian Guo, and Weikuan Yu. 2021. Compression of Time Evolutionary Image Data through Predictive Deep Neural Networks. In 2021 IEEE/ACM 21st International Symposium on Cluster, Cloud and Internet Computing (CCGrid). 41–50. doi:10.1109/CCGrid51090.2021.00014
[20] Julian Seward. 1996. bzip2 and libbzip2. http://www.bzip.org Accessed: 2025-02-01.
[21] Sony Semiconductor Solutions Corporation. 2024. Industrial Camera Sensors Selector. https://www.sony-semicon.com/en/products/is/industry/selector.html Accessed: 2025-04-14.
[22] SPring-8. 2025. SPring-8 Synchrotron Radiation Facility. http://www.spring8.or.jp/en/ Accessed: 2025-03-30.
[23] SPring-8 Research Facility. 2022. Wood Dataset: X-ray CT Imaging at Beamline BL20B2. http://www-bl20.spring8.or.jp/xct/ Sample: Wood, Pixel size: 5.4 μm, Energy: 15 keV, 900 projections (180°), Exposure time: 200 ms/projection. Obtained from SPring-8 Research Facility.
[24] SPring-8 Research Facility. 2022. X-ray CT Dataset: High-Resolution Imaging of Internal Structures. http://www-bl20.spring8.or.jp/detectors/manual/Manual_HiPic_6_4_Jpn.pdf 904 images & 1804 images, HiPic File Format. Obtained from SPring-8 Research Facility.
[25] SPring-8 Research Facility. 2023. Ryugu Particle Dataset: X-ray CT Imaging at Beamline BL47XU. Sample: Ryugu particle (A3_MPF_X002), Pixel size: 51.1 nm, Energy: 7.35 keV, 900 projections (180°), Exposure time: 200 ms/projection. Obtained from SPring-8 Research Facility.
[26] Shuohao Zhang, Yuting Yang, Guangming Lu, and David Bull. 2023. ROI-based Deep Image Compression with Swin Transformers. arXiv preprint arXiv:2305.07783 (2023). https://arxiv.org/abs/2305.07783
[27] Junbao Zheng, Lixian Wang, Jiangsheng Gui, and Abdulla Hamad Yussuf. 2024. Study on Lung CT Image Segmentation Algorithm Based on Threshold-Gradient Combination and Improved Convex Hull Method. Scientific Reports 14, 1 (July 2024), 17731. doi:10.1038/s41598-024-68409-4
[28] Jacob Ziv and Abraham Lempel. 1977. A Universal Algorithm for Sequential Data Compression. IEEE Transactions on Information Theory 23, 3 (May 1977), 337–343. doi:10.1109/TIT.1977.1055714
