Foundation Models for Medical Imaging: Status, Challenges, and Directions

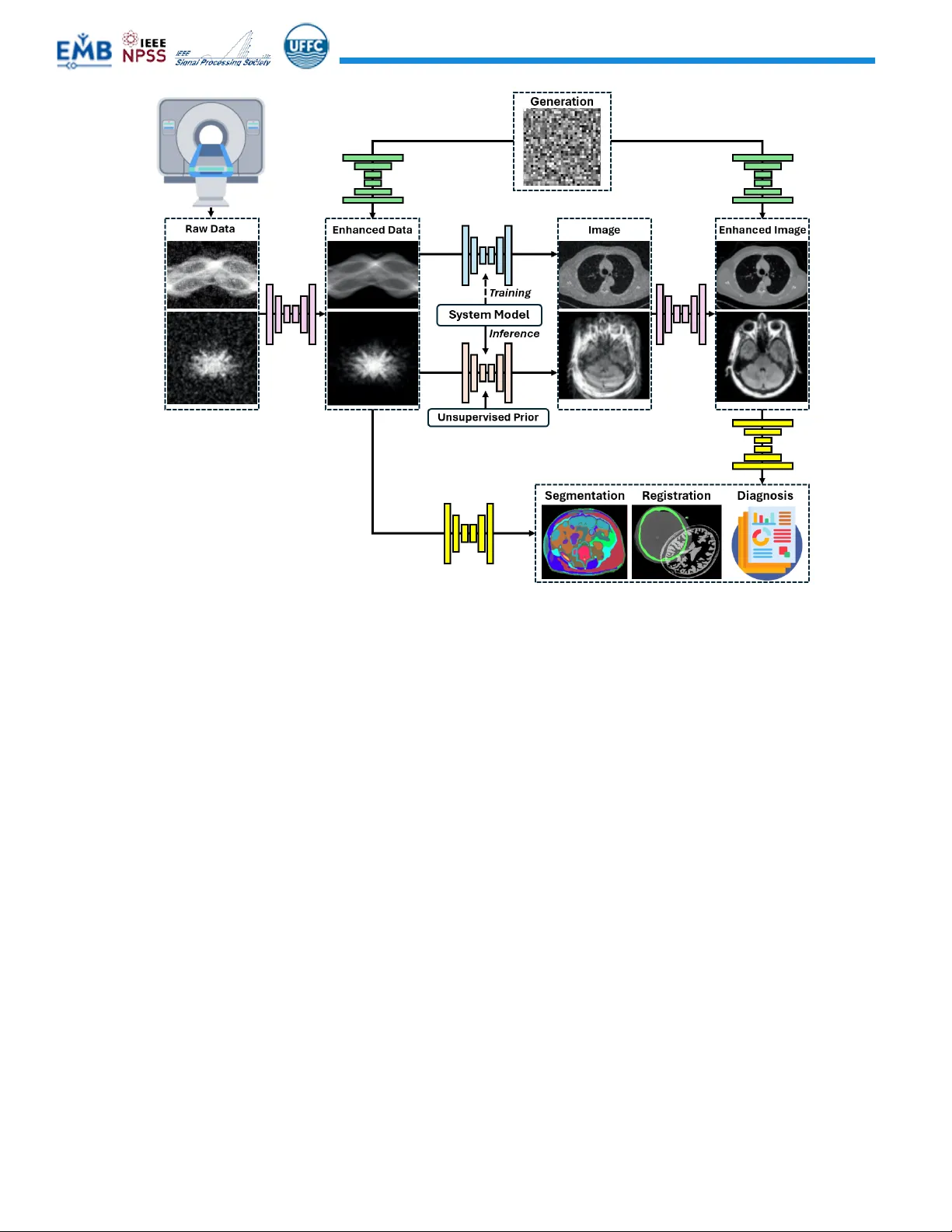

Foundation models (FMs) are rapidly reshaping medical imaging, shifting the field from narrowly trained, task-specific networks toward large, general-purpose models that can be adapted across modalities, anatomies, and clinical tasks. In this review,…

Authors: Chuang Niu, Pengwei Wu, Bruno De Man