Benchmarking Self-Supervised Models for Cardiac Ultrasound View Classification

Reliable interpretation of cardiac ultrasound images is essential for accurate clinical diagnosis and assessment. Self-supervised learning has shown promise in medical imaging by leveraging large unlabelled datasets to learn meaningful representation…

Authors: Youssef Megahed, Salma I. Megahed, Robin Ducharme

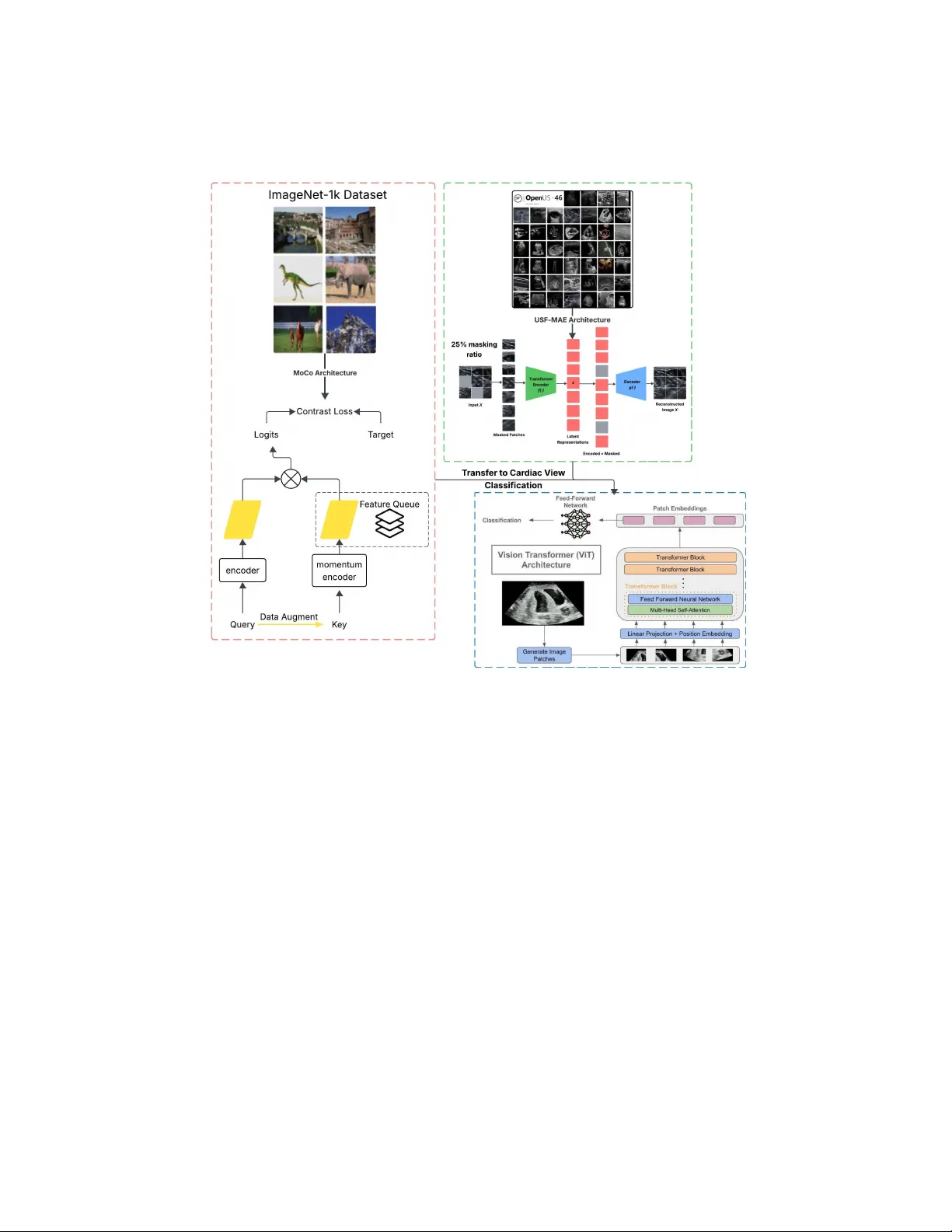

Benc hmarking Self-Sup ervised Mo dels for Cardiac Ultrasound View Classification Y oussef Megahed 1 , 2 [0009–0004–2595–5468] , Salma I. Megahed 11 [0009–0008–7763–1288] , Robin Duc harme 3 [0000–0002–5665–7429] , Inok Lee 3 , A drian D. C. Chan 1 [0000–0002–2111–247X] , Mark C. W alk er 3 , 4 , 5 , 6 , 7 [0000–0001–8974–4548] , and Stev en Ha wken 1 , 2 , 5 [0000–0002–3341–9022] 1 Departmen t of Systems and Computer Engineering, Carleton Univ ersity , Ottaw a, On tario, Canada 2 Departmen t of Metho dological and Implemen tation Research, Ottaw a Hospital Researc h Institute, Otta wa, On tario, Canada 3 Departmen t of A cute Care Researc h, Otta wa Hospital Researc h Institute, Otta wa, On tario, Canada 4 Departmen t of Obstetrics and Gynecology , Universit y of Otta wa, Otta wa, On tario, Canada 5 Sc ho ol of Epidemiology and Public Health, Universit y of Ottaw a, Ottaw a, Ontario, Canada 6 Departmen t of Obstetrics, Gynecology & Newb orn Care, The Ottaw a Hospital, Otta wa, On tario, Canada 7 In ternational and Global Health Office, Universit y of Ottaw a, Ottaw a, Ontario, Canada 8 College of Medicine, Alfaisal Universit y , Riy adh, Saudi Arabia Abstract. Reliable in terpretation of cardiac ultrasound images is es- sen tial for accurate clinical diagnosis and assessment. Self-sup ervised learning has sho wn promise in medical imaging by lev eraging large unla- b elled datasets to learn meaningful represen tations. In this study , w e ev aluate and compare t wo self-supervised learning frameworks, USF- MAE, developed b y our team, and MoCo v3, on the recen tly in tro duced CA CTUS dataset (37,736 images) for automated simulated cardiac view (A4C, PL, PSA V, PSMV, Random, and SC) classification. Both mo dels used 5-fold cross-v alidation, enabling robust assessment of generaliza- tion p erformance across multiple random splits. The CACTUS dataset pro vides exp ert-annotated cardiac ultrasound images with div erse views. W e adopt an identical training protocol for b oth mo dels to ensure a fair comparison. Both mo dels are configured with a learning rate of 0.0001 and a weigh t deca y of 0.01. F or each fold, we record p erformance metrics including R OC-AUC, accuracy , F1-score, and recall. Our results indi- cate that USF-MAE consistently outperforms MoCo v3 across metrics. The a v erage testing A UC for USF-MAE is 99.99% ( ± 0.01% 95% CI), compared to 99.97% ( ± 0.01%) for MoCo v3. USF-MAE ac hieves a mean testing accuracy of 99.33% ( ± 0.18%), h igher than the 98.99% ( ± 0.28%) 2 Y. Megahed et al. rep orted for MoCo v3. Similar trends are observed for the F1-score and recall, with improv emen ts statistically significan t across folds (paired t-test, p=0.0048 < 0.01). This proof-of-concept analysis suggests that USF-MAE learns more discriminative features for cardiac view classifi- cation than MoCo v3 when applied to this dataset. The enhanced p er- formance across multiple metrics highligh ts the p oten tial of USF-MAE for improving automated cardiac ultrasound classification. Keyw ords: Self-Supervised Learning · USF-MAE · Cardiac View Clas- sification. 1 In tro duction Cardiac ultrasound (ec ho cardiograph y) is a cornerstone imaging modality in b oth adult and fetal cardiology due to its real-time assessmen t of cardiac anatom y and function, lac k of ionizing radiation, and widespread clinical a v ailability . Ho wev er, reliable interpretation of echocardiographic images requires extensive training and exp erience, and manual annotation of cardiac views is b oth time- consuming and sub ject to inter-observ er v ariabilit y [1]. The complexit y of cardiac ultrasound in terpretation is further amplified in fetal imaging, where small car- diac structures and v ariable fetal p ositions increase the difficult y of consistent view iden tification. Deep learning has demonstrated substan tial promise for automating medical image analysis, achieving expert-level p erformance on diverse tasks across mo dal- ities such as radiography , ultrasound [2–8], and magnetic resonance imaging [9]. T raditional sup ervised learning approac hes rely heavily on large quan tities of lab elled data, whic h are often scarce and costly to obtain in clinical settings. In con trast, self-sup ervised learning (SSL) enables mo dels to leverage v ast collec- tions of unlabelled medical images to learn useful representations, subsequently fine-tuning on smaller annotated datasets with improv ed p erformance and an- notation efficiency [9, 10]. Recen t trends in SSL include b oth contrastiv e metho ds and generativ e pre- text tasks. Methods suc h as momen tum con trast (MoCo) [11] exploit a con- trastiv e learning ob jective to cluster semantically similar examples in embedding space without lab els [13]. Mask ed auto enco der (MAE) approac hes [14], originally in tro duced for natural images, learn to reconstruct masked p ortions of inputs, effectiv ely encouraging mo dels to capture rich, global structure in image repre- sen tations. These pre-training strategies hav e b een adapted to medical imaging tasks, and empirical evidence suggests that in-domain SSL pre-training can yield b etter downstream p erformance than mo dels initialized on natural images alone [2, 15]. In the domain of cardiac view classification, prior w ork has applied con- trastiv e SSL to learn discriminative features from echocardiograms, impro ving classification accuracy relative to purely sup ervised baselines [1]. More recently , the CACTUS dataset w as introduced as the first op en, graded large-scale dataset of cardiac ultrasound views, encompassing multiple standard views and random Title Suppressed Due to Excessive Length 3 T able 1. Dataset distribution of cardiac view classes and data split used in stratified 5-fold cross-v alidation. Category Num b er of Images T otal Images 37,736 A4C 7,422 PL 6,102 PSA V 5,832 PSMV 6,014 Random 6,021 SC 6,345 T esting Set (p er fold) 7,547 T raining Set (4-folds) 30,189 images to supp ort b oth classification and quality assessmen t tasks [16]. The a v ailability of CACTUS enables rigorous ev aluation of self-sup ervised mo dels tailored sp ecifically for cardiac ultrasound representations. Despite this progress, there remains a need to systematically b enc hmark SSL framew orks on CACTUS to identify the most effective pre-training paradigms for cardiac ultrasound view classification. F urthermore, foundation mo dels trained on large, div erse unlab eled ultrasound corp ora may offer impro ved transferability compared with SSL mo dels pre-trained on smaller or natural image datasets. In this study , we ev aluate and compare our recen tly published ultrasound self- sup ervised foundation mo del with mask ed auto enco ding (USF-MAE) [2] with MoCo v3 [12] on the CACTUS dataset [16]. This comparison serves as a pro of-of- concept (POC) to assess whether large-scale, domain-sp ecific self-sup ervised pre- training yields more discriminative features than contrastiv e learning for cardiac view classification, an essential first step tow ards downstream applications suc h as congenital heart defects (CHD) detection. 2 Metho dology 2.1 Dataset W e conducted all exp eriments using the publicly av ailable CACTUS dataset [16], whic h contains 37,736 exp ert-annotated cardiac ultrasound images generated by a phantom across six classes: apical four-cham ber (A4C), parasternal long-axis (PL), parasternal short-axis aortic v alv e (PSA V), parasternal short-axis mitral v alv e (PSMV), Random, and sub costal four-cham b er (SC) views (T able 1). The Random views class includes non-standard or non-diagnostic frames, increasing classification difficult y and b etter simulating real-world v ariabilit y . T o ensure robust ev aluation, we adopted a stratified 5-fold cross-v alidation proto col suc h that each of the six classes was equally represented in every fold. In each fold, four splits w ere used for training, and the remaining split was used for testing. 4 Y. Megahed et al. Fig. 1. A) Original ultrasound frame with ov erlay annotations. B) Cropp ed and cleaned, pro cessed and inpainted image used for analysis. 2.2 Image Prepro cessing Ultrasound images often contain sector-shap ed acquisition regions and colour annotations or markers, as shown in Fig. 1A. T o standardize the input and reduce irrelev an t visual artifacts, we applied a three-stage prepro cessing pipeline. Sector masking and cropping. Each image was first con verted to R GB, and a sector mask w as applied to isolate the ultrasound field of view. The mask w as defined using a pie-slice geometry centred at the b ottom midp oin t of the image with angular limits of 210 ◦ to 330 ◦ and radius equal to 90% of the im- age height. Pixels outside this region were set to zero. The mask ed image was then cropp ed using fixed b ounding co ordinates to remov e p eripheral b orders and scanner o verla ys. Annotation mask extraction. T o remov e embedded colour annotations, images were conv erted to the HSV colour space. Colour thresholds w ere applied to detect yello w, blue, and red ov erla ys commonly used for measuremen t markers. Binary masks were generated using these thresholds and subsequen tly dilated with a 5 × 5 k ernel to ensure complete cov erage of annotation regions. Inpain ting. Detected annotation regions were remo v ed and filled in the missing pixel v alues using the Na vier–Stokes–based inpainting [17] implemen ted in Op enCV (Fig. 1B). This step ensured that classification p erformance reflected anatomical conten t rather than o verlaid measuremen t graphics or noisy artifacts. 2.3 Mo del Architectures W e b enc hmark ed tw o self-sup ervised frameworks: MoCo v3 [12], and our previ- ously developed ultrasound foundation mo del, USF-MAE [2]. A detailed com- parison of their pretraining configurations is pro vided in T able 2. MoCo v3 Fine-T uning F or MoCo v3 [12], w e initialized a Vision T ransformer (ViT)-base mo del (ViT-B/16) backbone with publicly av ailable MoCo v3 SSL pretrained weigh ts. The classification head w as replaced with a linear la yer map- ping the ViT feature dimension to six output classes. All backbone parameters w ere fine-tuned during training. Title Suppressed Due to Excessive Length 5 T able 2. Comparison of pre-training configurations for MoCo v3 and USF-MAE. Comp onen t MoCo v3 [12] USF-MAE (ours) [2] Bac kb one architecture ViT-B/16 ViT-B/16 Pretraining paradigm Con trastive SSL Mask ed auto enco ding (MAE) Pretraining dataset ImageNet-1K Op enUS-46 Dataset domain Natural images Ultrasound images Num b er of images ∼ 1.28M ∼ 370K Pretraining ob jective Instance discrimination via momen tum con trast P atch reconstruction (MSE o ver mask ed patches) Masking ratio N/A 25% Pretrained weigh ts Publicly released weigh ts Publicly released weigh ts Fine-tuning on CACTUS F ull model fine-tuning F ull model fine-tuning USF-MAE Fine-T uning F or USF-MAE [2], we initialized its ViT-B/16 back- b one with our publicly av ailable ultrasound-sp ecific USF-MAE SSL pretrained w eights in GitHub, similar to MoCo v3. The classification head was replaced with a linear lay er mapping the ViT feature dimension to six output classes. All backbone parameters were fine-tuned during training as well. Consequently , the tw o mo dels share identical backbone architecture and fine-tuning proto col, differing only in their pretraining strategy and data domain, namely natural images v ersus ultrasound images (T able 2). 2.4 T raining and T esting Proto col T o ensure comparability , b oth models were trained using identical h yp erparam- eters and optimization strategies (end-to-end fine-tuning). All exp eriments were conducted using NVIDIA L40S GPU acceleration. Input pro cessing. Images were resized to 224 × 224 pixels. During training, data augmen tation included random rotation (0–90 ◦ ), horizon tal and vertical flipping of 50% probability , and random resized cropping with scale range [0.5, 2.0]. Pixel intensities were normalized using the mean of [0.485, 0.456, 0.406] and the standard deviation of [0.229, 0.224, 0.225]. Optimization. T raining w as p erformed for 15 ep ochs p er fold using Adam W [18] with a learning rate of 0.0001 and a w eight decay of 0.01. A cosine learning rate sc heduler with linear warm-up was applied. The batc h size was set to 32. A weigh ted cross-en tropy loss was emplo yed. Class weigh ts were computed from the training split of eac h fold using inv erse frequency balancing. Mo del selection. F or each fold, the mo del ac hieving the lo west training loss w as selected as the b est chec kpoint. Ev aluation Metrics. Performance w as ev aluated on the testing split of each fold after training and av eraged across all fiv e folds. Rep orted metrics include accuracy , weigh ted recall, w eighted F1-score, and macro one-versus-rest ROC- A UC. This exp erimental framew ork allo ws a con trolled assessment of represen- tation quality learned through differen t self-sup ervised pretraining paradigms when transferred to cardiac ultrasound view classification. 6 Y. Megahed et al. Fig. 2. Comparison of MoCo v3 and USF-MAE Pip elines. 3 Results T able 3 summarizes the mean testing performance across 5-fold cross-v alidation for b oth self-sup ervised frameworks. Ov erall, both mo dels achiev ed near-p erfect discrimination on the CA CTUS dataset; how ever, USF-MAE consistently out- p erformed MoCo v3 across all ev aluation metrics. USF-MAE achiev ed a mean testing accuracy of 99.33% ( ± 0.18% 95% CI), compared to 98.99% ( ± 0.28% 95% CI) for MoCo v3. A similar improv ement w as observed in weigh ted F1-score (99.33% vs. 98.99%) and recall (99.33% vs. 98.99%). In terms of macro ROC-A UC, USF-MAE reached 99.99% ( ± 0.01% 95% CI), slightly higher than the 99.97% ( ± 0.01% 95% CI) obtained with MoCo v3. T o assess whether the observed performance differences were statistically signifi- can t, we conducted a paired t-test on the fold-wise F1-scores. USF-MAE achiev ed significan tly higher F1-scores than MoCo v3 across the five folds, p=0.0048 (< α of 0.01), corresp onding to a mean improv ement of 0.34% p oin ts. Title Suppressed Due to Excessive Length 7 T able 3. P er-fold p erformance across 5-fold cross-v alidation. F old A ccuracy (%) Recall (%) F1-score (%) A UC (%) MoCo v3 USF-MAE MoCo v3 USF-MAE MoCo v3 USF-MAE MoCo v3 USF-MAE 1 99.18 99.35 99.18 99.35 99.18 99.35 99.98 99.99 2 99.01 99.35 99.01 99.35 99.01 99.35 99.97 99.99 3 98.91 99.42 98.91 99.42 98.91 99.42 99.97 99.98 4 98.97 99.22 98.97 99.22 98.97 99.22 99.98 99.99 5 98.90 99.31 98.90 99.31 98.90 99.31 99.98 99.98 Mean 98.99 99.33 98.99 99.33 98.99 99.33 99.97 99.99 95% CI 0.28 0.18 0.28 0.18 0.28 0.18 0.01 0.01 The normalized confusion matrix for the b est-performing USF-MAE mo del (Fig. 3A) demonstrates minimal inter-class confusion, with p er-class sensitivi- ties exceeding 97.5% across all views. The Random class sho wed slightly higher v ariabilit y relativ e to standard anatomical views, but remained highly discrimi- nativ e. The p er-class ROC curves (Fig. 3B) further confirm the strong separabilit y of all five cardiac views and the random class, with A UC v alues approac hing 1.0 for each class. These findings indicate that both self-sup ervised approaches learn highly discriminative representations for cardiac view classification, with USF- MAE providing consistently sup erior p erformance under iden tical fine-tuning conditions. Collectiv ely , these results supp ort the h yp othesis that large-scale ultrasound- sp ecific MAE pretraining yields more transferable and discriminative features than contrastiv e pretraining when applied to cardiac ultrasound view classifica- tion, within the scop e of this POC study . Fig. 3. Classification p erformance for cardiac view classification: A) normalized con- fusion matrix and B) p er-class ROC curves sho wing near p erfect discrimination across all classes. 8 Y. Megahed et al. 4 Discussion In this POC study , we b enc hmarked tw o SSL paradigms, contrastiv e learning (MoCo v3) and masked auto enco ding (USF-MAE), for cardiac ultrasound view classification on the CA CTUS dataset. While b oth approac hes achiev ed near- ceiling p erformance across all ev aluation metrics, USF-MAE consistently demon- strated sup erior accuracy , F1-score, and ROC-A UC under identical fine-tuning conditions. These results suggest that ultrasound-sp ecific MAE pretraining pro- duces highly transferable represen tations for cardiac view discrimination. T w o k ey factors differentiate the ev aluated mo dels: (1) the SSL ob jectiv e (mask ed auto enco ding vs. contrastiv e learning), and (2) the pretraining data domain (ultrasound-sp ecific data vs. natural images). MoCo v3 was pretrained using contrastiv e learning on ImageNet-scale natural images, whereas USF-MAE w as pretrained using masked auto encoding on large-scale ultrasound data. While b oth metho dological differences may con tribute to p erformance v ariation, prior studies hav e consistently demonstrated that domain-sp ecific pretraining often has a larger impact on downstream medical imaging performance than initial- ization from natural image datasets alone. F or example, Raghu et al. [19] show ed that ImageNet representations ma y not fully transfer to medical imaging tasks due to domain mismatch. Similarly , Azizi et al. [20] demonstrated that large-scale self-sup ervised pretraining within the medical domain substantially improv es do wnstream classification p erformance compared to natural image sup ervised pretraining. Although the absolute gain in accuracy , as an example, is 0.34%, this cor- resp onds to a substantial reduction in classification error. Sp ecifically , the error rate decreased from 1.01% to 0.67%, representing a relative error reduction of 33.7%. In already high-p erformance regimes, suc h reductions indicate mean- ingful impro vemen ts in representation quality rather than marginal numerical gains. Given existing evidence supp orting the imp ortance of domain alignmen t in medical imaging, we hypothesize that ultrasound-specific pretraining contributes more substantially to the observed p erformance gain than the difference in SSL ob jective alone. Imp ortan tly , MoCo v3 also ac hieved excellent p erformance, confirming that con trastive SSL remains a strong baseline for cardiac ultrasound representation learning. The near-p erfect discrimination observed for b oth mo dels suggests that CA CTUS view classification is a suitable b enchmarking task for ev aluating rep- resen tation qualit y b efore using the mo dels for more complex do wnstream tasks. F rom a clinical p ersp ectiv e, accurate cardiac view classification is a foun- dational prerequisite for automated CHD detection in fetal echocardiography . CHD diagnosis dep ends heavily on the correct acquisition and identification of standard views b efore structural abnormalities can be assessed. Therefore, robust and transferable representations learned through self-supervised pretraining may facilitate improv ed generalization when transitioning from view classification to CHD detection tasks. Sev eral limitations should b e ackno wledged. First, the CACTUS dataset con- sists of simulator-acquired images generated by scanning a phantom, which may Title Suppressed Due to Excessive Length 9 not fully capture the v ariabilit y present in real-w orld ultrasound examinations. Second, p erformance was ev aluated on a single dataset, and external v alidation w as not p erformed in this study . Although this study fo cused on a single dataset, evidence from our recent work on first-trimester fetal heart view classification [6] supp orts the generalizabilit y of ultrasound-sp ecific SSL. In that study , USF- MAE also outp erformed all baselines on real fetal ultrasound images, demon- strating the practical utility of this pretraining paradigm beyond simulator data. Third, the classification task is inherently easier than fine-grained abnormality detection, and performance differences may b ecome more pronounced in more c hallenging downstream tasks. F uture work will extend this analysis b eyond view classification to grading and diagnostic p erformance using the CACTUS dataset, enabling ev aluation of whether ultrasound-sp ecific pretraining improv es clinically relev ant assessment tasks. W e also plan to v alidate the mo dels on real fetal echocardiography datasets and examine the impact of ultrasound-sp ecific foundation pretraining on CHD detection and multi-class diagnostic classification in the near future. Such inv esti- gations will determine whether the observed represen tation adv antages translate in to meaningful clinical gains. 5 Conclusion In this study , we b enc hmarked USF-MAE and MoCo v3 for cardiac ultrasound view classification on the CA CTUS dataset. Under iden tical fine-tuning con- ditions, USF-MAE achiev ed consisten tly sup erior p erformance across all ev al- uation metrics. These findings suggest that ultrasound-sp ecific MAE pretrain- ing provides highly transferable represen tations for cardiac view discrimination. The USF-MAE framework and pretrained weigh ts are publicly a v ailable at: h ttps://github.com/Y usufii9/USF-MAE. As a POC analysis, this w ork supp orts ultrasound foundation mo dels as strong initializations for downstream clinical tasks. F uture studies will ev aluate whether these adv antages translate to im- pro ved CHD detection in real fetal echocardiography . Use of AI Assistance The author(s) used ChatGPT (by Op enAI) for language editing (grammar) and sen tence clarity impro vemen t. The to ol was used solely to enhance readabilit y and refine sen tence structure. It was not used to generate scientific conten t, ex- p erimen tal results, data analysis, or tec hnical conclusions. After using this to ol, the author(s) carefully review ed and edited the output and tak e full resp onsibil- it y for the conten ts of the manuscript. References 1. Chartsias, A., et al.: Contrastiv e learning for view classification of echocardiograms. In: MICCAI W orkshop on Deep Learning in Medical Image Analysis (2021). 10 Y. Megahed et al. 2. Megahed, Y., et al.: USF-MAE: Ultrasound Self-Sup ervised F oundation Model with Mask ed Autoenco ding. arXiv pr eprint arXiv:2510.22990 (2025). 3. Megahed, Y., et al.: Deep Learning Analysis of Prenatal Ultrasound for Identifica- tion of V entriculomegaly . arXiv pr eprint arXiv:2511.07827 (2025). 4. Megahed, Y., et al.: Self-Sup ervised Ultrasound Representation Learning for Renal Anomaly Prediction in Prenatal Imaging. arXiv pr eprint arXiv:2512.13434 (2025). 5. Megahed, Y., et al.: Improv ed cystic hygroma detection from prenatal imaging using ultrasound-sp ecific self-sup ervised representation learning. arXiv pr eprint arXiv:2512.22730 (2025). 6. Megahed, Y., et al.: Automated Classification of First-T rimester F etal Heart Views Using Ultrasound-Sp ecific Self-Supervised Learning. arXiv pr eprint arXiv:2512.24492 (2025). 7. W alker, M.C., et al.: Using deep-learning in fetal ultrasound analysis for diagnosis of cystic hygroma in the first trimester. PL oS One (2022). 8. Miguel, O.X., et al.: Deep learning prediction of renal anomalies for prenatal ultra- sound diagnosis. Scientific R ep orts (2024). 9. Huang, S.C., Pareek, A., Jensen, M., Lungren, M.P ., Y eung, S., Chaudhari, A.S.: Self-sup ervised learning for medical image classification: a systematic review and implemen tation guidelines. np j Digital Medicine, 6, 74 (2023). 10. Zeng, X., Ab dullah, N., Sumari, P .: Self-sup ervised learning framew ork application for medical image analysis: a review and summary . Journal of BioMe dic al Engineer- ing OnLine (2024). 11. He, K., F an, H., W u, Y., Xie, S., Girshic k, R.: Momen tum Contrast for Unsup er- vised Visual Representation Learning. 2020 IEEE/CVF Confer enc e on Computer Vision and Pattern Re c o gnition (CVPR) (2020). Seattle, W A, USA. 12. Chen, X., Xie, S., He, K.: An Empirical Study of T raining Self-Supervised Vi- sion T ransformers. 2021 IEEE/CVF International Confer enc e on Computer Vision (ICCV) (2020). Montreal, QC, Canada. 13. Chen, X., F an, H., Girshick, R., He, K.: Improv ed Baselines with Momentum Con- trastiv e Learning. arXiv pr eprint arXiv:2003.04297 (2020). 14. He, K., et al.: Masked Auto enco ders Are Scalable Vision Learners. Pr o c e e dings of the IEEE/CVF Confer enc e on Computer Vision and Pattern R e c o gnition (2022). 15. Xiao, J., Bai, Y., Y uille, A., Zhou, Z.: Delving in to Masked Autoenco ders for Multi-Lab el Thorax Disease Classification. 2023 IEEE/CVF Winter Confer enc e on Applic ations of Computer Vision (W ACV) (2023). W aikoloa, HI, USA. 16. Elmekki, H., et al.: CACTUS: An op en dataset and framework for automated Cardiac Assessment and Classification of Ultrasound images using deep transfer learning. Computers in Biolo gy and Medicine (2025). 17. Bertalmio, M., Bertozzi, A. L., and Sapiro, G., Na vier-Stokes, Fluid Dynamics, and Image and Video Inpainting. In Pro ceedings of the 2001 IEEE Computer So ciet y Conference on Computer Vision and P attern Recognition (CVPR 2001). 18. Loshc hilov, I., Hutter, F., Decoupled W eigh t Deca y Regularization. 7th Interna- tional Confer enc e on L e arning R epr esentations (ICLR) (2019). 19. Ragh u, M., Zhang, C., Kleinberg, J., Bengio, S.: T ransfusion: Understanding T rans- fer Learning for Medical Imaging. In: Adv ances in Neural Information Pro cessing Systems (NeurIPS) (2019). V ancouver, BC, Canada. 20. Azizi, S., et al.: Big Self-Supervised Mo dels Adv ance Medical Image Classifications. In: Pro ceedings of the IEEE/CVF In ternational Conference on Computer Vision (ICCV) (2021).

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment