On Convergence Analysis of Network-GIANT: An approximate Hessian-based fully distributed optimization algorithm


Authors: Souvik Das, Luca Schenato, Subhrakanti Dey

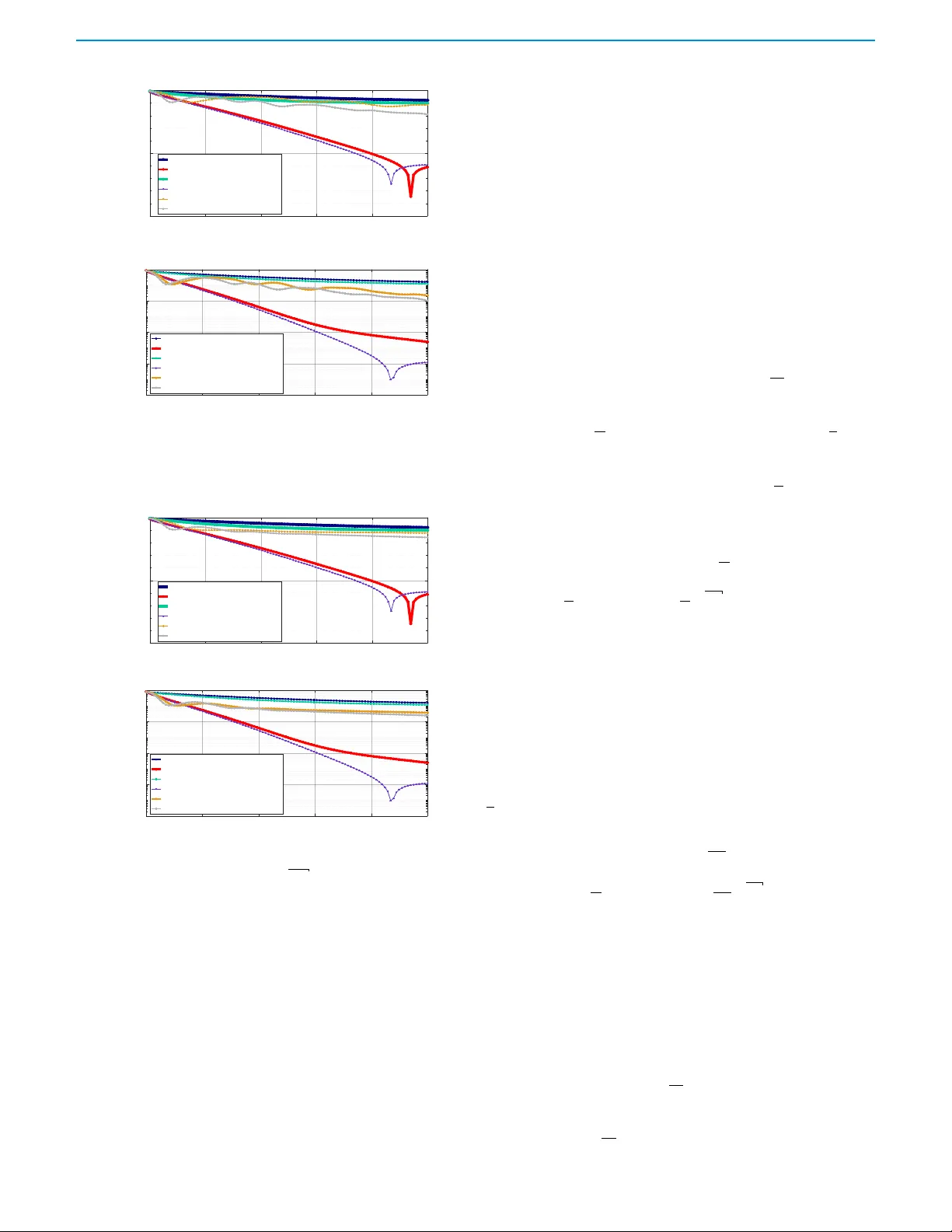

On Con v ergence Analysis of Netw or k-GIANT : An appro ximate Hessian-based fully distrib uted optimization algor ithm Souvik Das, Luca Schenato , F ellow ,IEEE , and Subhrakanti De y , F ellow , IEEE Abstract — In this paper , we present a detailed con- vergence analysis of a recently developed appr oximate Newton-type fully distributed optimization method for smooth, strongl y conve x local loss functions, called Network-GIANT (adapted from the Federated learning al- gorithm GIANT possessing mixed linear-quadratic con ver- gence properties), which has been empirically illustrated to show faster linear con vergence properties compared to its gradient-based counterpar ts, and several other exist- ing second order distributed algorithms, while having the same communication complexity (per iteration) as its first order distributed counterpar ts. By using consensus based parameter updates, and a local Hessian based descent direction at the individual nodes with gradient tracking, we first explicitl y characterize a global linear conver g ence rate for Network-GIANT when the local objective functions are smooth and strongly conve x, which can be computed as the spectral radius of a 3 × 3 matrix dependent on the Lipschitz continuity ( L ) and strong con vexity ( µ ) parameters of the objective functions, and the spectral norm ( σ ) of the underl ying undirected graph represented by a doubly stochastic consensus matrix. W e pro vide an explicit bound on the step siz e parameter η , below which this spectral radius is guaranteed to be less than 1 . 
Furthermore, we derive a mixed linear-quadratic inequality-based upper bound for the optimality gap norm, which allows us to conclude that, for small step sizes, as the algorithm asymptotically approaches the global optimum, Network-GIANT achieves a locally linear convergence rate of $1 - \eta(1 - \gamma/\mu)$, provided that the Hessian approximation error γ (between the harmonic mean of the local Hessians and the global Hessian, i.e., the arithmetic mean of the local Hessians) is smaller than µ. This asymptotic linear convergence rate of ≈ 1 − η explains, for the first time, the faster convergence of Network-GIANT. Numerical experiments are carried out with a reduced CovType dataset for binary logistic regression over a variety of graphs to illustrate the above theoretical results.

This publication has emanated from research conducted with the financial support of the Swedish Research Council (VR) Grant 2023-04232. Souvik Das and Subhrakanti Dey are with the Department of Electrical Engineering, Uppsala University, Sweden (e-mail: {souvik.das, subhrakanti.dey}@angstrom.uu.se). Luca Schenato is with the Department of Information Engineering, University of Padova, Italy (e-mail: schenato@dei.unipd.it).

I. INTRODUCTION

Distributed optimization and learning generally refer to the paradigm where multiple agents optimize a global cost function or train a global model collaboratively, often by optimizing their local cost functions or training a local model based on their local data sets. In applications such as networked autonomous systems, the Internet of Things (IoT), collaborative robotics and smart manufacturing, moving large amounts of locally collected data to a central processing unit can be expensive from both a communication and a storage point of view. Additionally, distributed optimization/learning avoids sharing raw data, thus preserving data privacy to a certain extent.
Distributed/decentralized optimization or learning can take place over a network consisting of a central server with multiple local nodes (federated learning), or in a fully distributed setting without a central server, where nodes only communicate with their neighbours. There has been significant progress in recent years in both settings; see [1], [2], [3] for extensive surveys of these topics focusing on their key challenges and opportunities. In this work, we primarily focus on fully distributed optimization, where nodes collaboratively optimize a global cost that is a sum of local cost functions. We also restrict ourselves to convex, and in particular strongly convex, cost functions. In this context, distributed optimization or learning algorithms proceed iteratively: each node updates its local estimate of the global optimization variable based on consensus-type averaging of its own information and the information received from its neighbours, combined with (typically) a gradient-descent-type step with a suitably chosen step size (constant or diminishing with time). Without going into a detailed literature survey of gradient-based distributed optimization algorithms, we refer the reader to [4], [5]. For strongly convex functions, it is well known that gradient-based techniques achieve linear (exponential) convergence with a constant step size. Replicating this in a fully distributed setting requires a gradient-tracking algorithm, in which each node maintains a vector that tracks a local estimate of the global gradient [6]. A detailed convergence analysis was carried out in [6] to establish linear convergence of gradient-tracking-based distributed first-order optimization algorithms for smooth and strongly convex functions over undirected graphs, whereas a sublinear convergence rate $O(1/k)$ was guaranteed for smooth convex functions that are not strongly convex.
A gradient-tracking-based distributed first-order optimization method with an additional distributed eigenvector tracking step was presented in [7], with a provable linear convergence rate. It is well known that for strongly convex and smooth functions, centralized optimization algorithms that use curvature (Hessian) information of the cost function, such as the Newton-Raphson method, achieve faster, locally quadratic convergence in the vicinity of the optimum. For fully distributed optimization algorithms utilizing Newton-type methods, achieving quadratic convergence is difficult, primarily because communicating local Hessian information to neighbouring nodes is infeasible for large model sizes or parameter dimensions, and because the underlying consensus algorithm slows down the convergence to at best a linear rate. Computing the Hessian and its inverse is another bottleneck, which has motivated a plethora of recent approximate Newton-type federated learning algorithms [8], [9], [10], [11] that can also display a mixed linear-quadratic convergence rate. In the fully distributed regime, earlier examples of second-order optimization algorithms include [12], [13], [14], [15], which approximate the Hessian based on Taylor series expansions and historical data. More recent works include [16], which developed a fully distributed version of DANE [17] (termed Network-DANE) to overcome the high computational costs of the existing algorithms, and [18], which captured the second-order information of the objective functions through an augmented Lagrangian function together with a gradient diffusion technique, yielding a distributed algorithm that leverages the Hessian information of the objective function.
Recent papers such as [19] and [20] have shown, however, that the superlinear convergence properties of federated second-order optimization algorithms such as GIANT can be retrieved only when exact averages of gradients or parameters can be computed, either via finite-time exact consensus [19] or through distributed finite-time set consensus [20]. Both approaches can require up to $O(N)$ consensus rounds (where $N$ is the number of nodes) between two successive optimization iterations, which makes them infeasible for large networks. In this article, we focus on a recently developed fully distributed optimization algorithm called Network-GIANT [21], which combines gradient tracking with consensus-based updates and a descent direction computed as the product of the inverse local Hessian and the gradient-tracking vector at each node. Built on an extension of the approximate Newton-type federated learning algorithm GIANT [22], Network-GIANT was shown to leverage gradient tracking to achieve semi-global exponential convergence to the exact optimal solution for a sufficiently small step size, and to enjoy a low communication overhead of $O(n)$ per iteration at each node, where $n$ is the dimension of the decision space, which makes it comparable to first-order distributed optimization techniques. The authors of [21] also empirically demonstrated a superior convergence rate compared to gradient-tracking-based first-order methods, as well as to a number of other second-order distributed optimization algorithms, including the well-known Network-DANE [16]. Although [21] proved semi-global exponential convergence using a two-time-scale separation principle that guarantees linear convergence, and empirically verified its superior performance, an explicit expression for the actual convergence rate was not established.
In addition, while a superior linear convergence rate was empirically demonstrated compared to its first-order counterparts, there was no analytical justification for this observation. In this paper, we set out to provide a deeper analysis of the convergence of Network-GIANT and to provide partial answers to some of the above open questions.

Contributions: The main contributions are highlighted below:

• We first provide a global linear convergence result with an explicitly computable rate for Network-GIANT for a sufficiently small step size η. In a similar vein to [6], utilizing a linear convergence result for a damped Newton algorithm, we establish a linear inequality for a three-dimensional system consisting of the norms of the consensus error, the gradient-tracking error and the optimality gap. We show that the linear convergence rate is explicitly given by the spectral radius of an associated 3 × 3 matrix that depends on the step size η, the Lipschitz continuity (L) and strong convexity (µ) parameters of the local cost functions, and the spectral norm (σ) of the doubly stochastic consensus matrix of the underlying graph connecting the nodes.
• We also provide an explicit (albeit conservative) upper bound on the step size below which Network-GIANT is guaranteed to converge linearly (i.e., the aforementioned spectral radius is less than 1).
• Finally, we derive a mixed linear-quadratic inequality-based upper bound for the optimality gap norm, under the condition that the error due to Hessian approximation is sufficiently small.
Exploring this result further, we argue that for a small step size η, the gradient-tracking and consensus error norms decay much faster than the optimality gap, and asymptotically, as the algorithm approaches the optimum, Network-GIANT displays a network-independent approximate local linear convergence rate of 1 − η, which analytically explains the superior convergence rate of Network-GIANT compared to its first-order counterparts.
• We illustrate the above findings through a distributed logistic regression example with a reduced CovType dataset over regular expander-type and Erdős–Rényi random graphs with varying degrees of connectivity. Furthermore, we refer the reader to [21] for a thorough comparison of Network-GIANT with other state-of-the-art fully distributed second-order algorithms.

II. PROBLEM FORMULATION

We consider a fully distributed unconstrained optimization problem over a communication network that can be modeled by a connected, time-invariant graph $\mathcal{G} = (\mathcal{N}, \mathcal{E})$, where $\mathcal{N} = \{1, 2, \dots, N\}$ denotes the set of nodes and $\mathcal{E} \subseteq \mathcal{N} \times \mathcal{N}$ the set of bidirectional edges connecting the nodes. Nodes can only communicate directly with their 1-hop neighbours. The network can be equivalently described by a weight matrix $W \in \mathbb{R}^{N\times N}$, where the element $w_{ij}$ is positive if there is an edge $(i, j) \in \mathcal{E}$ and zero otherwise. The consensus matrix $W$ is chosen to be symmetric and doubly stochastic, which implies that $W\mathbf{1} = \mathbf{1}$ (here $\mathbf{1} \in \mathbb{R}^{N\times 1}$ is a column vector of all ones), and the eigenvalues of $W$ lie in $(-1, 1]$. It is possible to construct this matrix in a distributed way, for example using the Metropolis algorithm [23]. We use the following conventions for vector and matrix norms: for a vector, $\|\cdot\|$ denotes the 2-norm, whereas for a matrix, $\|\cdot\|$ denotes the Frobenius norm. The matrix 2-norm (or spectral norm), if used, is denoted by $\|\cdot\|_2$.
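The Metropolis construction mentioned above can be sketched as follows. This is a minimal NumPy illustration, assuming the common Metropolis-Hastings rule $w_{ij} = 1/(1 + \max(d_i, d_j))$ for neighbours $i \neq j$ (with $d_i$ the degree of node $i$) and the leftover mass placed on the diagonal; the helper names and the 5-node ring graph are hypothetical. It also evaluates $\|W - \frac{1}{N}\mathbf{1}\mathbf{1}^T\|_2$, the quantity denoted σ in what follows.

```python
import numpy as np


def metropolis_weights(adj: np.ndarray) -> np.ndarray:
    """Symmetric, doubly stochastic consensus matrix from a 0/1 adjacency matrix.

    Assumed rule: w_ij = 1 / (1 + max(d_i, d_j)) for each edge (i, j), and
    w_ii = 1 - sum_{j != i} w_ij, where d_i is the degree of node i.
    """
    n_nodes = adj.shape[0]
    deg = adj.sum(axis=1)
    W = np.zeros((n_nodes, n_nodes))
    for i in range(n_nodes):
        for j in range(n_nodes):
            if i != j and adj[i, j]:
                W[i, j] = 1.0 / (1.0 + max(deg[i], deg[j]))
        # The self-weight absorbs the remaining mass, so every row sums to 1.
        W[i, i] = 1.0 - W[i].sum()
    return W


def consensus_spectral_norm(W: np.ndarray) -> float:
    """sigma = || W - (1/N) 1 1^T ||_2, i.e. the largest singular value."""
    n_nodes = W.shape[0]
    J = np.full((n_nodes, n_nodes), 1.0 / n_nodes)
    return float(np.linalg.norm(W - J, ord=2))


# Hypothetical example: ring graph with N = 5 nodes (every node has degree 2).
N = 5
adj = np.zeros((N, N), dtype=int)
for i in range(N):
    adj[i, (i + 1) % N] = adj[(i + 1) % N, i] = 1

W = metropolis_weights(adj)
sigma = consensus_spectral_norm(W)
print(np.allclose(W, W.T), np.allclose(W.sum(axis=1), 1.0), sigma)
```

For this ring every nonzero weight equals 1/3, and because $W$ is symmetric, σ coincides with the largest eigenvalue magnitude of $W$ other than 1, namely $(1 + 2\cos(2\pi/5))/3 \approx 0.539$.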
It is a well-known fact that $\sigma = \left\| W - \frac{1}{N}\mathbf{1}\mathbf{1}^T \right\|_2$ satisfies $0 < \sigma < 1$. The unconstrained optimization problem considered here is a fully distributed version of the federated optimization problem considered in [22]. The unconstrained global optimization problem is defined as

$$ f(x^\star) = \min_{x \in \mathbb{R}^n} \Big\{ f(x) = \frac{1}{N}\sum_{i=1}^{N} f_i(x) \Big\}, \tag{1} $$

where $f_i(x)$ denotes the local loss function of the $i$-th node.

Remark 1: In typical distributed machine learning applications, the local loss function is often chosen as

$$ f_i(x) = \frac{1}{m}\sum_{j=1}^{m} l_{ij}(x^T a_{ij}) + \frac{\tilde\lambda}{2}\|x\|^2, \tag{2} $$

where each node $i \in \mathcal{N}$ owns $m$ data samples $\{a_{ij}\}_{j \in \{1, \dots, m\}}$, each associated with a convex, twice differentiable and smooth loss function $l_{ij}(\cdot)$. The overall cost function at node $i$ is given by the sum of the local empirical error and a regularization term. Typical examples of such loss functions arise in linear or logistic regression problems.

We make the following standard assumption regarding the local loss functions $f_i(\cdot)$:

Assumption 1: The local objective $f_i(\cdot)$ is $\mu$-strongly convex and $L$-smooth, i.e., there exists a constant $L$ such that for all $x, x' \in \mathbb{R}^n$ and all $i \in \{1, 2, \dots, N\}$, $\|\nabla f_i(x) - \nabla f_i(x')\| \leq L\|x - x'\|$, and $\mu I \preceq \nabla^2 f_i(x) \preceq L I$, where $I$ denotes the $n \times n$ identity matrix.

Note that this assumption implies that the global objective function $f(x)$ is also $\mu$-strongly convex and $L$-smooth. Assumption 1 guarantees the uniqueness of the minimizer of (1), is standard in second-order optimization, and can often be ensured by adding a regularizer to the cost function if it is not strictly convex. We define the matrices $x^k, s^k, \nabla^k \in \mathbb{R}^{N\times n}$ as

$$ x^k = \begin{bmatrix} x_1^k \\ x_2^k \\ \vdots \\ x_N^k \end{bmatrix}, \qquad s^k = \begin{bmatrix} s_1^k \\ s_2^k \\ \vdots \\ s_N^k \end{bmatrix}, \qquad \nabla^k = \begin{bmatrix} \nabla f_1(x_1^k) \\ \nabla f_2(x_2^k) \\ \vdots \\ \nabla f_N(x_N^k) \end{bmatrix}. \tag{3} $$
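As an illustration of Remark 1 and Assumption 1, the sketch below evaluates the value, gradient and Hessian of (2) for the logistic loss $l_{ij}(t) = \log(1 + e^{-v_{ij} t})$ that is used later in Section V, assuming labels $v_{ij} \in \{-1, +1\}$ and synthetic Gaussian features (hypothetical data, not CovType). The ridge term guarantees $\mu \geq \tilde\lambda$, and since each per-sample curvature is at most 1/4, one obtains the smoothness bound $L \leq \tilde\lambda + \|A_i\|_2^2/(4m)$, where $A_i$ (a name introduced here) stacks the local samples $a_{ij}$ as rows.

```python
import numpy as np


def logistic_local(x, A, v, lam):
    """Value, gradient and Hessian of f_i in (2) with a logistic loss.

    A: (m, n) local samples a_ij as rows; v: (m,) labels in {-1, +1};
    lam: ridge weight (lambda-tilde); x: (n,) parameter vector.
    """
    m, n = A.shape
    t = v * (A @ x)                              # margins v_ij * x^T a_ij
    value = np.mean(np.logaddexp(0.0, -t)) + 0.5 * lam * x @ x
    p = 1.0 / (1.0 + np.exp(-t))                 # sigmoid of the margins
    grad = -(A.T @ (v * (1.0 - p))) / m + lam * x
    curv = p * (1.0 - p)                         # per-sample curvature, <= 1/4
    hess = (A.T * curv) @ A / m + lam * np.eye(n)
    return value, grad, hess


rng = np.random.default_rng(0)
m, n, lam = 50, 4, 0.01
A = rng.standard_normal((m, n))
v = rng.choice([-1.0, 1.0], size=m)
x = rng.standard_normal(n)
value, grad, hess = logistic_local(x, A, v, lam)

# Central finite differences as a sanity check of the analytic gradient.
eps = 1e-6
fd_grad = np.array([
    (logistic_local(x + eps * e, A, v, lam)[0]
     - logistic_local(x - eps * e, A, v, lam)[0]) / (2 * eps)
    for e in np.eye(n)
])
eigs = np.linalg.eigvalsh(hess)
L_bound = lam + np.linalg.norm(A, 2) ** 2 / (4 * m)
print(np.max(np.abs(fd_grad - grad)), eigs.min(), eigs.max(), L_bound)
```

The printed extreme eigenvalues of the local Hessian should lie between $\tilde\lambda$ and the bound `L_bound`, which is how the constants µ and L of Assumption 1 can be bracketed for this loss.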
In order to avoid cumbersome Kronecker-product-based notation, we define the relevant vectors in row-vector format throughout the paper. We assume that each node $i$ keeps a local copy $x_i^k \in \mathbb{R}^{1\times n}$ of the global state vector $x^k$ at the $k$-th iteration, and a vector $s_i^k \in \mathbb{R}^{1\times n}$ that tracks the global average gradient $\nabla f(x^k) = \frac{1}{N}\sum_{i=1}^{N} \nabla f_i(x_i^k)$, where $\nabla f_i(x_i^k) \in \mathbb{R}^{1\times n}$ denotes the local gradient at node $i$. We also define the global Hessian as $\nabla^2 f(x^k) = \frac{1}{N}\sum_{i=1}^{N} \nabla^2 f_i(x_i^k)$ and, with a slight abuse of notation, $\nabla^2 f(\tilde x) = \nabla^2 f(x^k)\big|_{x_i^k = \tilde x^T}$ for use in the subsequent analysis.

III. ALGORITHM DESCRIPTION

The Network-GIANT algorithm consists of the following two consensus-based updates at each node $i$ at iteration $k$: one for the parameter vector $x_i^k$, and the other the familiar update for the gradient-tracking vector $s_i^k$ [6]:

$$ \begin{aligned} x_i^{k+1} &= \sum_{j=1}^{N} w_{ij}\, x_j^k - \eta\, s_i^k \big[\nabla^2 f_i(x_i^k)\big]^{-1}, \\ s_i^{k+1} &= \sum_{j=1}^{N} w_{ij}\, s_j^k + \nabla f_i(x_i^{k+1}) - \nabla f_i(x_i^k), \qquad s_i^0 = \nabla f_i(x_i^0), \end{aligned} \tag{4} $$

where $\tilde H_i^k = \nabla^2 f_i(x_i^k)$ is the local Hessian at the $i$-th node at the $k$-th iteration, which is invertible due to the strong convexity of $f_i(x)$, and $0 < \eta < 1$ is a step size that needs to be chosen carefully.

Remark 2: Although the above algorithm requires an inverse local Hessian computation at each node, there are computationally efficient iterative methods for approximate Hessian inversion, e.g., LiSSA [24], which estimates the inverse Hessian through a truncated Neumann series. There are also methods, such as the conjugate-gradient algorithm, that directly compute the product of the inverse Hessian and a gradient. These techniques can be used to alleviate the computational burden of Hessian inversion at the nodes for high-dimensional problems.
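A minimal NumPy sketch of the updates (4), storing node vectors as rows as in (3), is given below. It assumes dense local Hessians that are small enough to factor, and it forms $y_i^k = s_i^k[\nabla^2 f_i(x_i^k)]^{-1}$ with `np.linalg.solve` (an iterative method such as those in Remark 2 could be substituted). The function name `network_giant` and the quadratic test problem are hypothetical illustrations, not the authors' implementation.

```python
import numpy as np


def network_giant(W, grads, hessians, x0, eta, iters):
    """Iterate the Network-GIANT updates (4), storing node vectors as rows.

    W: (N, N) symmetric doubly stochastic consensus matrix.
    grads[i](x) -> (n,) local gradient; hessians[i](x) -> (n, n) local Hessian.
    x0: (N, n) initial local copies. Returns the iterates and the final s, nabla.
    """
    N = W.shape[0]
    x = x0.copy()
    nabla = np.stack([grads[i](x[i]) for i in range(N)])
    s = nabla.copy()                              # s^0 = nabla^0
    history = [x.copy()]
    for _ in range(iters):
        # y_i = s_i H_i^{-1}; H_i is symmetric, so solve H_i y_i^T = s_i^T.
        # An iterative solver (e.g. conjugate gradient) could be used instead.
        y = np.stack([np.linalg.solve(hessians[i](x[i]), s[i]) for i in range(N)])
        x_next = W @ x - eta * y                  # consensus + local Newton-type step
        nabla_next = np.stack([grads[i](x_next[i]) for i in range(N)])
        s = W @ s + nabla_next - nabla            # gradient tracking
        x, nabla = x_next, nabla_next
        history.append(x.copy())
    return history, s, nabla


# Hypothetical test problem: quadratics f_i(x) = 0.5 (x - c_i) H (x - c_i)^T
# that share one SPD Hessian H, on a 5-node ring with Metropolis weights 1/3.
rng = np.random.default_rng(1)
N, n, eta = 5, 3, 0.1
W = (np.eye(N) + np.roll(np.eye(N), 1, axis=1) + np.roll(np.eye(N), -1, axis=1)) / 3.0
B = rng.standard_normal((n, n))
H = B @ B.T + n * np.eye(n)
C = rng.standard_normal((N, n))
grads = [lambda x, c=C[i]: (x - c) @ H for i in range(N)]
hessians = [lambda x: H for _ in range(N)]

history, s, nabla = network_giant(W, grads, hessians, rng.standard_normal((N, n)), eta, 60)
x_star = C.mean(axis=0)                           # minimizer of the average cost
gaps = [np.linalg.norm(xk.mean(axis=0) - x_star) for xk in history]
ratios = [gaps[k + 1] / gaps[k] for k in range(50)]
print(ratios[:3], gaps[-1])
```

Because every node shares the same Hessian in this toy problem, the harmonic and arithmetic means of the local Hessians coincide, and a short calculation from the averaged updates gives $\bar x^{k+1} - x^\star = (1 - \eta)(\bar x^k - x^\star)$ exactly, so the printed ratios equal $1 - \eta = 0.9$ up to rounding. With heterogeneous local Hessians this identity no longer holds exactly.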
Using the matrix notation from (3), the above algorithm can be written compactly as

$$ x^{k+1} = W x^k - \eta\, y^k, \qquad s^{k+1} = W s^k + \nabla^{k+1} - \nabla^k, \qquad s^0 = \nabla^0. \tag{5} $$

Here $y^k = \big[(y_1^k)^T\ (y_2^k)^T\ \cdots\ (y_N^k)^T\big]^T \in \mathbb{R}^{N\times n}$, where $y_i^k = s_i^k\big[\nabla^2 f_i(x_i^k)\big]^{-1}$. For the subsequent convergence analysis of the algorithm in (4), we also define the average quantities $\bar x^k = \frac{\mathbf{1}^T x^k}{N} = \frac{1}{N}\sum_{i=1}^{N} x_i^k$, $\bar s^k = \frac{\mathbf{1}^T s^k}{N} = \frac{1}{N}\sum_{i=1}^{N} s_i^k$ and $g^k = \nabla f(x^k) = \frac{\mathbf{1}^T \nabla^k}{N}$. Using an inductive proof similar to that in [6], it follows that $\bar s^k = g^k$; that is, when initialized as $s^0 = \nabla^0$, the average of the gradient-tracking vectors equals the average global gradient at every iteration.

IV. CONVERGENCE ANALYSIS

In this section, we provide two main results regarding the convergence of the Network-GIANT algorithm given by (5). First, under Assumption 1, we establish that for a sufficiently small step size η, Network-GIANT enjoys a linear convergence rate, implying that the optimality gap $\|\bar x^k - x^\star\|$ decays exponentially with the iteration number $k$. We provide an explicit expression for an upper bound $\bar\eta$ such that this result holds for $\eta < \bar\eta$. In the second result, with additional assumptions on the Lipschitz continuity of the Hessians of the local loss functions and a sufficiently small bound on the error between the true Hessian of $f(x)$ and its approximation given by the harmonic mean of the local Hessians, we prove a mixed linear-quadratic inequality-based upper bound for the optimality gap of Network-GIANT.

A. Linear Convergence Analysis of Network-GIANT

Before we proceed further, we quote the following results, which have been established in [6] under Assumption 1.
We also define three error terms, $\|x^k - \mathbf{1}\bar x^k\|$, $\|s^k - \mathbf{1}g^k\|$ and $\|\bar x^k - x^\star\|$, which correspond to the norms of the consensus error, the gradient-tracking error and the optimality gap, respectively.

Lemma 1: Under Assumption 1, we have

(i)
$$ \|\nabla^k - \nabla^{k-1}\| \leq L\,\|x^k - x^{k-1}\|, \tag{6} $$
$$ \|g^k - g^{k-1}\| \leq \frac{L}{\sqrt{N}}\,\|x^k - x^{k-1}\|. \tag{7} $$

(ii)
$$ \begin{aligned} \|s^k\| &\leq \|s^k - \mathbf{1}g^k\| + L\,\|x^k - \mathbf{1}x^\star\| \\ &\leq \|s^k - \mathbf{1}g^k\| + L\,\|x^k - \mathbf{1}\bar x^k\| + L\sqrt{N}\,\|\bar x^k - x^\star\|. \end{aligned} \tag{8} $$

We also need another result concerning the global linear convergence of a damped Newton method, stated as follows.

Lemma 2: Consider a column vector $z \in \mathbb{R}^n$ and a function $u(z)$ that satisfies the smoothness and strong convexity conditions of Assumption 1. A damped Newton update for finding $z^\star$, the unique minimizer of $u(z)$, can be written as

$$ z^+ = z - \eta\,\big[\nabla^2 u(z)\big]^{-1}\nabla u(z), \qquad 0 < \eta < 1. \tag{9} $$

It can be shown that if $\eta \leq \frac{\mu}{L}$, then

$$ \|z^+ - z^\star\| \leq \Big(1 - \eta\frac{\mu}{L}\Big)\|z - z^\star\|. \tag{10} $$

Proof: Define $H_z = \big[\nabla^2 u(z)\big]^{-1}\int_0^1 \nabla^2 u\big(z^\star + \lambda(z - z^\star)\big)\,d\lambda$. Then one can show that

$$ \big[\nabla^2 u(z)\big]^{-1}\nabla u(z) = \big[\nabla^2 u(z)\big]^{-1}\big(\nabla u(z) - \nabla u(z^\star)\big) = \big[\nabla^2 u(z)\big]^{-1}\int_0^1 \nabla^2 u\big(z^\star + \lambda(z - z^\star)\big)\,d\lambda\,(z - z^\star) = H_z(z - z^\star), \tag{11} $$

which implies $z^+ - z^\star = (I - \eta H_z)(z - z^\star)$. Using $\frac{\mu}{L} I \preceq H_z \preceq \frac{L}{\mu} I$, it then follows that $\big(1 - \eta\frac{L}{\mu}\big) I \preceq I - \eta H_z \preceq \big(1 - \eta\frac{\mu}{L}\big) I$. Therefore, if $\eta \leq \frac{\mu}{L}$, we obtain $0 \preceq I - \eta H_z \preceq \big(1 - \eta\frac{\mu}{L}\big) I$, implying $\|I - \eta H_z\|_2 \leq 1 - \eta\frac{\mu}{L}$, from which the result follows.

Remark 3: It is well known that a standard Newton's method, initialized with a damped stage in which the step size η is chosen by a backtracking line search, eventually allows η → 1 once the gradient norm falls below a certain threshold, resulting in local quadratic convergence of Newton's method with η = 1 [25].
However, as shown in the above proof, the damped Newton's method with a fixed step size η also enjoys global linear convergence for a sufficiently small η when the function is strongly convex and smooth. Interestingly, the global linear convergence of a slightly more general damped Newton's method is left as an exercise in [26].

Using the above two lemmas, one can show the following result.

Theorem 1: Consider the Network-GIANT algorithm given by (5), implemented with a doubly stochastic consensus matrix $W$ with spectral norm $\sigma = \big\|W - \frac{1}{N}\mathbf{1}\mathbf{1}^T\big\|_2 < 1$. Assuming $\eta \leq \frac{\mu}{L}$ and that Assumption 1 holds, the following (elementwise) inequality holds:

$$ \begin{bmatrix} \|x^{k+1} - \mathbf{1}\bar x^{k+1}\| \\ \|s^{k+1} - \mathbf{1}g^{k+1}\| \\ \sqrt{N}\,\|\bar x^{k+1} - x^\star\| \end{bmatrix} \leq G(\eta) \begin{bmatrix} \|x^k - \mathbf{1}\bar x^k\| \\ \|s^k - \mathbf{1}g^k\| \\ \sqrt{N}\,\|\bar x^k - x^\star\| \end{bmatrix}, \qquad G(\eta) = \begin{bmatrix} \sigma + \frac{\eta L}{\mu} & \frac{\eta}{\mu} & \frac{\eta L}{\mu} \\ 2L + \frac{\eta L^2}{\mu} & \sigma + \frac{\eta L}{\mu} & \frac{\eta L^2}{\mu} \\ \frac{\eta L}{\mu} & \frac{\eta}{\mu} & 1 - \frac{\eta\mu}{L} \end{bmatrix}. \tag{12} $$

Proof: See Appendix A.

It is clear from (12) that the three error norms go to zero exponentially fast as long as the spectral radius satisfies $\rho(G(\eta)) < 1$. Since $G(\eta)$ is a matrix with all positive elements, by the Perron-Frobenius theorem its largest eigenvalue is real and positive and equals $\rho(G(\eta))$. In the next theorem, we provide an upper bound on the step size η that ensures $\rho(G(\eta)) < 1$.

Theorem 2: If one chooses $\eta < \bar\eta = \frac{(1-\sigma)^2}{2(2-\sigma)(\kappa + \kappa^3)}$, where $\kappa = \frac{L}{\mu}$ is the condition number of the global Hessian, then $\rho(G(\eta)) < 1$.

Proof: See Appendix B.

Remark 4: In [6], which established a linear convergence result for the gradient-tracking-based first-order distributed optimization algorithm, the authors showed (see Lemma 2 in [6]) that when $\eta < \frac{1}{L}$ (using the notation of our paper), the spectral radius of the corresponding matrix

$$ \bar G(\eta) = \begin{bmatrix} \sigma & \eta & 0 \\ 2L + \eta L^2 & \sigma + \eta L & \eta L^2 \\ \eta L & 0 & 1 - \eta\mu \end{bmatrix} $$

is upper bounded, and that for the specific value $\eta = \frac{\mu}{L^2}\big(\frac{1-\sigma}{6}\big)^2$, the spectral radius satisfies $\rho(\bar G(\eta)) < 1$.
Using an analysis similar to the proof of Theorem 2 in our paper, one can obtain a tighter bound on η than $\frac{1}{L}$, given by

$$ \eta < \tilde\eta = \frac{-(3-\sigma) + \sqrt{(3-\sigma)^2 + 4(1-\sigma)^2(1+\kappa)}}{2L(1+\kappa)}, $$

for which $\rho(\bar G(\eta)) < 1$.

Remark 5: The upper bounds $\bar\eta$ and $\tilde\eta$ on the step size, for Network-GIANT and for the gradient-tracking-based algorithm in [6] respectively, are only sufficient conditions, since the evolution of the three error norms in (12) is described by inequalities rather than equalities. Thus, while choosing η below the corresponding upper bound guarantees convergence, faster convergence may be obtained with a larger step size. For example, for a graph with σ = 0.7, and L = 5, µ = 1 (i.e., a mild condition number of 5), numerical computation over $0 < \eta < \tilde\eta$ shows that the smallest value of $\rho(\bar G(\eta))$ is 0.9954 for the distributed gradient-tracking algorithm in [6]. Similarly conservative convergence rates based on Theorems 1 and 2 are obtained for Network-GIANT.

Remark 6: It is also not possible to directly compare $\bar\eta$ and $\tilde\eta$ as functions of only σ and the condition number κ, since $\tilde\eta$ also depends on the Lipschitz continuity parameter L.

B. A Mixed Linear-Quadratic Inequality for the Optimality Gap

Motivated by the conservativeness of the global linear convergence results in the previous subsection, we investigate why Network-GIANT converges substantially faster in numerical experiments than predicted by Theorems 1 and 2. To this end, we show that under some further assumptions, one can obtain a mixed linear-quadratic convergence rate for the optimality gap norm $\|\bar x^k - x^\star\|$.
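To make this conservativeness concrete, the sketch below builds $G(\eta)$ from (12) and $\bar G(\eta)$ from Remark 4, evaluates the step-size bounds $\bar\eta$ (Theorem 2) and $\tilde\eta$ (Remark 4), and computes the corresponding spectral radii. The parameter values σ = 0.7, L = 5, µ = 1 mirror Remark 5; the function names are hypothetical.

```python
import numpy as np


def G_giant(eta, L, mu, sigma):
    """G(eta) of Theorem 1, eq. (12)."""
    a, b = eta * L / mu, eta / mu
    return np.array([
        [sigma + a,     b,         a],
        [2 * L + L * a, sigma + a, L * a],
        [a,             b,         1 - eta * mu / L],
    ])


def G_gradtrack(eta, L, mu, sigma):
    """bar-G(eta) of the gradient-tracking method in [6] (Remark 4)."""
    return np.array([
        [sigma,              eta,             0.0],
        [2 * L + eta * L**2, sigma + eta * L, eta * L**2],
        [eta * L,            0.0,             1 - eta * mu],
    ])


def eta_bar(L, mu, sigma):
    """Step-size bound of Theorem 2."""
    kappa = L / mu
    return (1 - sigma) ** 2 / (2 * (2 - sigma) * (kappa + kappa**3))


def eta_tilde(L, mu, sigma):
    """Step-size bound for bar-G(eta) stated in Remark 4."""
    kappa = L / mu
    disc = (3 - sigma) ** 2 + 4 * (1 - sigma) ** 2 * (1 + kappa)
    return (-(3 - sigma) + np.sqrt(disc)) / (2 * L * (1 + kappa))


def rho(M):
    """Spectral radius."""
    return float(np.max(np.abs(np.linalg.eigvals(M))))


L, mu, sigma = 5.0, 1.0, 0.7
eb, et = eta_bar(L, mu, sigma), eta_tilde(L, mu, sigma)
print("eta_bar", eb, "rho(G)", rho(G_giant(0.9 * eb, L, mu, sigma)))
print("eta_tilde", et, "rho(bar G)", rho(G_gradtrack(0.9 * et, L, mu, sigma)))
```

A direct expansion shows that $\det(I - G(\bar\eta)) = 0$, i.e., $\bar\eta$ is exactly the step size at which $\rho(G(\eta))$ reaches 1 (and likewise $\tilde\eta$ for $\bar G(\eta)$). Consequently, at $0.9\,\bar\eta$ the spectral radius is only marginally below 1, which illustrates how slow the guaranteed rate is.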
Before we proceed, we define the true global Hessian (the arithmetic mean of the local Hessians) as $H_{tr}(x^k) = \frac{1}{N}\sum_{i=1}^{N} \tilde H_i^k$, and the approximate Hessian given by the harmonic mean of the local Hessians as $H_{app}(x^k) = \Big(\frac{1}{N}\sum_{i=1}^{N} (\tilde H_i^k)^{-1}\Big)^{-1}$. We make the following two assumptions, the first of which is standard in the analysis of Newton-type optimization algorithms.

Assumption 2: The Hessians of all the local cost functions are Lipschitz continuous, i.e.,

$$ \big\|\nabla^2 f_i(x) - \nabla^2 f_i(y)\big\| \leq \bar L\,\|x - y\|, \quad \forall i. \tag{13} $$

The second assumption concerns the accuracy of approximating the global Hessian by the harmonic mean of the local Hessians.

Assumption 3: There exists a sufficiently small γ such that $\|H_{tr}(x) - H_{app}(x)\| \leq \gamma$ for all $x$.

Remark 7: It is generally not possible to obtain useful explicit analytical bounds on $\|H_{tr}(x) - H_{app}(x)\|$. However, it has been empirically illustrated in [22] that for regression-based local loss functions $f_i(x) = \frac{1}{m}\sum_{j=1}^{m} l_{ij}(x^T a_{ij}) + \frac{\tilde\lambda}{2}\|x\|^2$, when the data samples are homogeneously distributed across the agents and each local Hessian, computed from the local sample set, is reasonably accurate, the arithmetic mean $H_{tr}(x)$ can indeed be approximated by the harmonic mean $H_{app}(x)$ with good accuracy. Furthermore, the linear-quadratic inequality below is not limited to Network-GIANT: any distributed approximate Newton algorithm that achieves a global Hessian approximation within a small error satisfies the mixed linear-quadratic inequality stated below.
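Since Remark 7 notes that γ is usually assessed empirically, the following sketch computes $H_{tr}(x)$, $H_{app}(x)$ and $\|H_{tr}(x) - H_{app}(x)\|$ (Frobenius norm, as in this paper) at a single point $x$ for logistic local losses, assuming a hypothetical homogeneous split of synthetic Gaussian data across $N = 10$ nodes. Note that Assumption 3 requires the bound uniformly in $x$, which a single evaluation cannot certify, and that `mu_at_x` is only the smallest eigenvalue of $H_{tr}$ at this point, not the strong convexity constant µ.

```python
import numpy as np


def local_logistic_hessian(x, A, v, lam):
    """Hessian of the regularized logistic loss (2) at one node (labels in {-1, +1})."""
    p = 1.0 / (1.0 + np.exp(-v * (A @ x)))
    curv = p * (1.0 - p)
    return (A.T * curv) @ A / A.shape[0] + lam * np.eye(A.shape[1])


def hessian_means(local_hessians):
    """Return (H_tr, H_app): arithmetic and harmonic means of the local Hessians."""
    n_nodes = len(local_hessians)
    H_tr = sum(local_hessians) / n_nodes
    H_app = np.linalg.inv(sum(np.linalg.inv(H) for H in local_hessians) / n_nodes)
    return H_tr, H_app


# Hypothetical homogeneous split: i.i.d. Gaussian features, random labels.
rng = np.random.default_rng(0)
N, m, n, lam = 10, 500, 5, 0.01
A_all = rng.standard_normal((N * m, n))
v_all = rng.choice([-1.0, 1.0], size=N * m)
x = rng.standard_normal(n)
local_H = [local_logistic_hessian(x, A_all[i * m:(i + 1) * m], v_all[i * m:(i + 1) * m], lam)
           for i in range(N)]
H_tr, H_app = hessian_means(local_H)
gamma_at_x = np.linalg.norm(H_tr - H_app)        # Frobenius norm
mu_at_x = np.linalg.eigvalsh(H_tr).min()
print(gamma_at_x, mu_at_x)
```

For symmetric positive definite matrices, the harmonic mean never exceeds the arithmetic mean in the Loewner order, so $H_{tr}(x) - H_{app}(x) \succeq 0$, and the two coincide when all local Hessians are equal.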
Theorem 3: Under Assumptions 1, 2 and 3, if $\gamma < \mu$ and $\eta < 1$, then, given the error norms $\|\bar x^k - x^\star\|$, $\|x^k - \mathbf{1}\bar x^k\|$ and $\|s^k - \mathbf{1}g^k\|$ at iteration $k$, the optimality gap $\|\bar x^{k+1} - x^\star\|$ at iteration $k+1$ satisfies the following mixed linear-quadratic inequality:

$$ \begin{aligned} \|\bar x^{k+1} - x^\star\| \leq{}& \Big(1 - \eta\Big(1 - \frac{\gamma}{\mu}\Big)\Big)\|\bar x^k - x^\star\| + \frac{\eta\bar L}{\mu\sqrt{N}}\,\|x^k - \mathbf{1}\bar x^k\|\,\|\bar x^k - x^\star\| + \frac{\eta\bar L}{2\mu}\,\|\bar x^k - x^\star\|^2 \\ &+ \frac{\eta}{\mu\sqrt{N}}\,\|s^k - \mathbf{1}g^k\| + \frac{\eta L}{\mu\sqrt{N}}\,\|x^k - \mathbf{1}\bar x^k\|. \end{aligned} \tag{14} $$

Proof: See Appendix C.

It was illustrated with a comprehensive set of numerical results in [21] that Network-GIANT outperforms first-order optimization algorithms based on gradient tracking, as well as a number of other distributed optimization methods using different types of Hessian approximation techniques, such as Network-DANE [27] and various Network-Newton methods, including the one presented in [28]. While it is generally difficult to compare the exact convergence rates of various distributed Newton-type methods, Theorem 3 explains why Network-GIANT provides a faster linear convergence rate than gradient-tracking-based first-order algorithms, e.g., the one in [6]. An intuitive explanation is as follows. When the step size η is small, it is clear from Theorem 1 that the matrix $G(\eta)$ is diagonally dominant, and the consensus error norm and the gradient-tracking error norm decay faster, at an approximate linear rate of σ < 1, while the optimality gap norm decays at the slower rate of $1 - \eta\frac{\mu}{L}$. Thus, once the consensus error norm and the gradient-tracking error norm are sufficiently small, the linear-quadratic convergence result in Theorem 3 indicates that the optimality gap norm at iteration $k+1$ depends on a linear and a quadratic term of the optimality gap norm at iteration $k$.
Clearly, the quadratic term only dominates in the early stages, when the optimality gap is larger (see [29] for similar convergence behaviour of a centralized approximate Newton method). Asymptotically, as the optimality gap decreases, the linear term dominates and the asymptotic convergence rate is given by $1 - \eta\big(1 - \frac{\gamma}{\mu}\big)$. When the Hessian approximation error γ is sufficiently small compared to the strong convexity constant µ, this linear convergence rate is approximately $1 - \eta$, which is much faster than the corresponding rate of the gradient-tracking-based first-order method, given approximately by $1 - \eta\mu$ in the third diagonal entry of $\bar G(\eta)$. In the next section, we illustrate these somewhat qualitative arguments numerically on a logistic regression-based binary classification problem using the CovType dataset. We compare Network-GIANT with the gradient-tracking-based first-order method from [6], as well as with the Nesterov-acceleration-based gradient-tracking algorithm ACC-NGD-SC [30]. To avoid repetition, we do not include comparative results with other second-order approximate Newton algorithms, which were already reported in [21].

V. NUMERICAL ANALYSIS

This section empirically studies the convergence properties of Network-GIANT. Extensive comparisons of Network-GIANT with other first-order and second-order distributed algorithms were reported in [21, §V] and are therefore not included here. We pose the following research questions:
(a) How does the connectivity of the underlying graph affect the convergence rate?
(b) What is the rate of convergence of the algorithm when the consensus steps of the decision variable and the gradient tracker, as described in Theorem 2, are combined with the mixed linear-quadratic optimality gap error norm?
We observed that a graph with higher connectivity, i.e., a small spectral norm, yields faster convergence than a graph with lower connectivity and a spectral norm close to 1. Moreover, when η is small, we numerically verified that Network-GIANT converges faster, at a rate of approximately 1 − η, in contrast to its gradient-tracking-based first-order counterpart.

Experimental setup: For the experiments, we considered a binary logistic classification problem given by the distributed optimization problem

$$ \min_{x \in \mathbb{R}^n} \frac{1}{N}\sum_{i=1}^{N} \frac{1}{m}\sum_{j=1}^{m} \log\Big(1 + \exp\big(-v_j^i\,(x^\top u_j^i)\big)\Big) + \frac{\tilde\lambda}{2}\|x\|^2, $$

where the regularization parameter $\tilde\lambda$ is set to 0.01 in all experiments. We performed distributed classification on a reduced CovType dataset [31] consisting of 566602 sample points and n = 10 features. After random reshuffling to minimize bias, the samples $(u_j^i, v_j^i)$ were uniformly distributed across the N nodes, with m samples each. The variables x(0) were initialized uniformly at random, and we set $s_i(0) = \nabla f_i(x_i(0))$ for each $i \in \{1, 2, \dots, N\}$. The experiments were conducted on d-regular expander graphs and Erdős–Rényi graphs, denoted by G(N, p), with N = 20 nodes and different graph parameters, summarized in Table I. Here d and p denote the degree of the expander graph and the edge (connection) probability of the Erdős–Rényi graph, respectively, and σ is the spectral norm of the corresponding consensus matrix. We assumed that each agent can exchange data with its 1-hop neighbours. The consensus weight matrices of these graphs were constructed using the Metropolis-Hastings algorithm [32].

Table I: Spectral norm (σ) for different graph parameters.

| Graph type | d / p | σ | d / p | σ |
|---|---|---|---|---|
| Expander | d = 4 | 0.8800 | d = 14 | 0.3026 |
| Erdős–Rényi | p = 0.2 | 0.8699 | p = 0.75 | 0.4416 |
Results and discussion: In the following experiments, the true optimal solution $x^*$ and the corresponding optimal value $f(x^*)$, used as baselines, are obtained from the centralized Newton-Raphson algorithm with backtracking line search. Figure 1 displays the convergence of different errors, namely the consensus error (CE), the gradient-tracking error (Grad-T) and the optimality gap error (OE), for two different configurations of d-regular expander graphs. The spectral norms and graph parameters are summarized in Table I, and the corresponding step sizes were chosen according to Table II.

Table II: Step size η for different graph configurations.

| Graph type | d / p | η | d / p | η |
|---|---|---|---|---|
| Expander | d = 4 | 0.05 | d = 14 | 0.07 |
| Erdős–Rényi | p = 0.2 | 0.05 | p = 0.75 | 0.07 |

We observed that for small step sizes, and for an expander graph with degree d = 14 and an Erdős–Rényi graph with connection probability p = 0.75 (both of which exhibit a higher degree of connectivity and a lower spectral norm), the consensus error and the gradient-tracking error converge faster than the optimality gap error, which decays at the slower rate of $1 - \eta\frac{\mu}{L}$. This is consistent with the assertion of [21, Theorem 2]. For graphs with a lower degree of connectivity, this faster convergence is not distinctly observed, as shown in Figure 1. Similar behaviour was observed for the Erdős–Rényi graph with a lower connection probability, which we omit here for brevity.

Fig. 1: Errors associated with Network-GIANT (error norms on a logarithmic scale versus iterations, for d = 4 and d = 14). Here the acronyms 'CE', 'OE' and 'Grad-T' are short for consensus error, optimality gap error and gradient-tracking error, respectively.

We further observe that
when the consensus and gradient-tracking errors converge to sufficiently small values, the linear term in the optimality gap bound dominates the quadratic term at later iterations, when $\bar x_k$ lies in a neighborhood of $x^*$.

Empirical evidence for improved rate of convergence: Figures 2 and 3 illustrate that, when $\eta$ is small, the ratio $r_k$ satisfies

$$r_k := \frac{\|x_{k+1}-x^*\|}{\|x_k-x^*\|} \leqslant 1-\eta+\frac{\eta\gamma}{\mu} \approx 1-\eta \quad \text{for each } k, \tag{15}$$

provided that the Hessian approximation error $\gamma$ is sufficiently small. Two observations are noteworthy here. First, for both graph models, $(r_k)_{k\in\mathbb{N}}$ converges asymptotically to approximately $1-\eta$, provided $\eta$ and the Hessian approximation error are small. This is consistent with our discussion preceding §V. For the larger step size $\eta=0.07$ and lower graph connectivity ($d=4$ in Figure 2 and $p=0.2$ in Figure 3, top panels), this convergence property no longer holds. Similar behavior was observed for step sizes larger than $0.07$, although the overall Network-GIANT algorithm still converges. However, for graphs with higher connectivity, a locally asymptotic approximate convergence rate of $1-\eta$ is seen in Figures 2 and 3 (bottom panels). Second, we observed a slightly improved rate of convergence when the spectral norm of the graph is small, even for larger values of $\eta$. This trend is consistent for both the $d$-regular expander graph and the Erdős–Rényi graph. An exact characterization of the region of $(\sigma,\eta)$ where this locally approximate asymptotic convergence rate of $1-\eta$ for the optimality gap holds remains an open problem for future investigation.

[Figure 2: two panels plotting $r_k$ (vertical range 0.85 to 1) versus iterations (0 to 350) for $\eta = 0.01, 0.03, 0.05, 0.07$.]

Fig. 2: Rate of convergence, as defined in (15), of Network-GIANT for the $d$-regular expander graph with $d=4$ (top) and $d=14$ (bottom).

[Figure 3: two panels with the same axes and step sizes as Figure 2.]

Fig. 3: Rate of convergence, as defined in (15), of Network-GIANT for the Erdős–Rényi graph with $p=0.2$ (top) and $p=0.75$ (bottom).

Comparison with state-of-the-art algorithms: To compare Network-GIANT with the gradient-tracking-based first-order method developed in [6], termed 'GradTrack' for convenience, and with a Nesterov-accelerated version of the gradient-tracking-based optimization algorithm (ACC-NGD-SC) [30], we considered the full CovType dataset and solved the multinomial logistic classification problem [33, Chapter 4]. Specifically, we solve the distributed optimization problem (1), where the local objective function is defined by

$$f_i(x) := -\frac{1}{m_i}\sum_{j=1}^{m_i}\sum_{l=1}^{M}\mathbb{1}\{v^i_j=l\}\,\log\varphi_l(u^i_j;x)+\lambda\|x\|^2,$$

$\lambda>0$ is the regularization penalty, and

$$\varphi_l(u^i_j;x)=\begin{cases}\dfrac{\exp\big((x^l)^\top u^i_j\big)}{1+\sum_{l'=1}^{M-1}\exp\big((x^{l'})^\top u^i_j\big)}, & l=1,\dots,M-1,\\[2ex] \dfrac{1}{1+\sum_{l'=1}^{M-1}\exp\big((x^{l'})^\top u^i_j\big)}, & l=M.\end{cases}$$

Here the parameters $x := (x^1\ x^2\ \cdots\ x^{M-1})^\top$ are $(M-1)\times(d+1)$-dimensional matrices, where $d$ denotes the feature dimension and $x^l\in\mathbb{R}^{d+1}$ for each $l\in\{1,\dots,M-1\}$. The full CovType dataset consists of 581012 data points with 54-dimensional feature vectors distributed among 7 classes.
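The class probabilities $\varphi_l$ and the local loss $f_i$ above can be evaluated as in the following minimal sketch. It assumes that each feature vector $u^i_j$ already includes a trailing constant 1 (so that each $x^l$ has $d+1$ entries), that labels satisfy $v^i_j\in\{1,\dots,M\}$, and that $\|x\|^2$ is the squared Frobenius norm; the function names and the toy data are hypothetical. The scores are shifted by their maximum (including the score 0 of the reference class $M$) before exponentiation, which leaves every $\varphi_l$ unchanged but avoids overflow.

```python
import math


def class_probabilities(x, u):
    """phi_l(u; x) for l = 1..M, with class M as the reference class (score 0).

    x: list of M-1 parameter rows x^l; u: feature vector (bias entry included).
    """
    scores = [sum(a * b for a, b in zip(row, u)) for row in x]
    shift = max([0.0] + scores)            # stabilizes exp() without changing phi
    exps = [math.exp(s - shift) for s in scores]
    ref = math.exp(-shift)                 # numerator of the reference class M
    denom = ref + sum(exps)
    return [e / denom for e in exps] + [ref / denom]


def local_loss(x, samples, lam):
    """f_i(x) = -(1/m_i) sum_j log phi_{v_j}(u_j; x) + lam * ||x||_F^2."""
    nll = -sum(math.log(class_probabilities(x, u)[v - 1]) for u, v in samples)
    reg = lam * sum(w * w for row in x for w in row)
    return nll / len(samples) + reg


# Toy usage with M = 3 classes and 2 features plus a bias entry.
x0 = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]          # M - 1 = 2 parameter rows
samples = [([0.5, -1.2, 1.0], 1), ([2.0, 0.3, 1.0], 3)]
print(class_probabilities(x0, samples[0][0]))    # uniform over the 3 classes
print(local_loss(x0, samples, lam=0.001))        # equals log(3) at x = 0
```

At $x=0$ every class receives probability $1/M$, so the loss equals $\log M$; this is a quick sanity check when wiring such a loss into a distributed solver.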
For these experiments, the regularizer $\lambda$ was chosen to be $0.001$. The step sizes for the different algorithms were chosen according to Table III.

| Algorithm | $d=4$ | $d=14$ | $p=0.2$ | $p=0.75$ |
|---|---|---|---|---|
| GradTrack | 0.08 | 0.18 | 0.08 | 0.12 |
| Network-GIANT | 0.075 | 0.08 | 0.075 | 0.08 |
| ACC-NGD-SC | 0.09 | 0.29 | 0.2 | 0.3 |

TABLE III: Step size $\eta$ for different algorithms and graph models.

For ACC-NGD-SC [30], we searched over a grid of step sizes $\eta\in[0.035, 0.3]$, and Table III reports the values that gave the best results. The momentum parameter was chosen to be $\alpha=\sqrt{\mu\eta}$, as stated in [30], where $\mu$ is the strong convexity parameter. For the dataset considered, $\mu\simeq 0.002$ was computed empirically.¹ As shown in Figure 4, Network-GIANT comfortably outperforms the ACC-NGD-SC algorithm as well, illustrating that using approximate curvature information can significantly improve the convergence rate in distributed optimization.

¹ In our experiments, we observed that a larger $\alpha$ can be chosen to obtain a damped response; see Figure 5 for more details.

[Figure 4: $\log\|f(X_k)-f(x^*)\|$ versus iterations (0 to 100) for GradTrack, Network-GIANT, and ACC-NGD-SC. (a) $d$-regular expander graph with $d=4$ and $d=14$. (b) Erdős–Rényi graph with $p=0.2$ and $p=0.75$.]

Fig. 4: Comparison of Network-GIANT with GradTrack and ACC-NGD-SC.

[Figure 5: same axes and panels as Figure 4.]

Fig. 5: Comparison when $\alpha>\sqrt{\mu\eta}$ is chosen ($\alpha=0.05$), with all other parameters kept identical. A damped response of ACC-NGD-SC [30] is observed.

VI. CONCLUSIONS

This paper focused on the derivation of several detailed convergence results for a recently developed approximate Newton-type fully distributed optimization algorithm called Network-GIANT. For strongly convex local cost functions and a step size below an explicit bound, a global linear convergence result is shown, with a rate that can be explicitly computed as the spectral radius of a $3\times3$ matrix whose elements depend on the Lipschitz continuity ($L$) and strong convexity ($\mu$) parameters of the cost functions, the step size ($\eta$), and the spectral norm ($\sigma$) of the underlying consensus matrix. A mixed linear-quadratic iterative upper bound is also established for the optimality gap, which indicates that for small step sizes and a negligible Hessian approximation error, Network-GIANT achieves an asymptotic approximate local linear convergence rate of $1-\eta$. This provides a mathematical justification for why Network-GIANT is empirically seen to converge faster than its first-order gradient-tracking-based counterparts. Ongoing and future work will focus on extending Network-GIANT to compressed and noisy communication, incorporating acceleration techniques such as Nesterov acceleration and heavy-ball-type methods, and handling more general (e.g., directed) graphs.

APPENDIX I
PROOF OF THEOREM 1

We prove the three norm inequalities in (12) individually.
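The three quantities bounded in (12), namely the consensus error $\|x_k-\mathbf{1}\bar x_k\|$, the gradient-tracking error $\|s_k-\mathbf{1}g_k\|$, and the optimality gap $\|\bar x_k-x^\star\|$, can be monitored in a toy simulation such as the minimal sketch below. It is not the authors' implementation. It assumes scalar decision variables and the update forms that appear in this proof: $x_{k+1}=Wx_k-\eta y_k$ with local Newton-type directions $y_i^k=(\nabla^2 f_i(x_i^k))^{-1}s_i^k$, and the gradient-tracking recursion of [6], $s_{k+1}=Ws_k+\nabla F(x_{k+1})-\nabla F(x_k)$ with $s_i(0)=\nabla f_i(x_i(0))$, where $\nabla F$ (hypothetical notation) stacks the local gradients and $g_k$ is taken as their average. The 6-node ring with Metropolis weights and the local losses $f_i(x)=\tfrac{a_i}{2}(x-b_i)^2+c\log(1+e^x)$ are hypothetical.

```python
import math
import random


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


# Hypothetical local losses f_i(x) = a_i/2 (x - b_i)^2 + c*log(1 + e^x).
a_coef = [1.0, 1.05, 1.1, 1.0, 1.05, 1.1]
b_coef = [-2.0, -1.0, 0.0, 1.0, 2.0, 0.5]
c_coef = 0.1
N = len(a_coef)


def grad(i, x):
    return a_coef[i] * (x - b_coef[i]) + c_coef * sigmoid(x)


def hess(i, x):
    p = sigmoid(x)
    return a_coef[i] + c_coef * p * (1.0 - p)


def centralized_optimum(iters=50):
    """Reference x* from centralized Newton iterations on sum_i f_i."""
    x = 0.0
    for _ in range(iters):
        x -= sum(grad(i, x) for i in range(N)) / sum(hess(i, x) for i in range(N))
    return x


def ring_metropolis(n):
    """Metropolis weights of a ring: every node has degree 2, so all weights are 1/3."""
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        W[i][(i - 1) % n] = W[i][(i + 1) % n] = 1.0 / 3.0
        W[i][i] = 1.0 - 2.0 / 3.0
    return W


def mix(W, v):
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in W]


def run(eta=0.1, iters=400, seed=0):
    W, x_star = ring_metropolis(N), centralized_optimum()
    rng = random.Random(seed)
    x = [rng.uniform(-1.0, 1.0) for _ in range(N)]
    s = [grad(i, x[i]) for i in range(N)]               # s_i(0) = grad f_i(x_i(0))
    history = []                                        # (CE, Grad-T, OE) per iteration
    for _ in range(iters):
        y = [s[i] / hess(i, x[i]) for i in range(N)]    # local Newton-type direction
        x_new = [wx - eta * yi for wx, yi in zip(mix(W, x), y)]
        s = [ws + grad(i, x_new[i]) - grad(i, x[i]) for i, ws in enumerate(mix(W, s))]
        x = x_new
        x_bar = sum(x) / N
        g = sum(grad(i, x[i]) for i in range(N)) / N
        ce = math.sqrt(sum((xi - x_bar) ** 2 for xi in x))
        gt = math.sqrt(sum((si - g) ** 2 for si in s))
        history.append((ce, gt, abs(x_bar - x_star)))
    return history


hist = run()
print("final (CE, Grad-T, OE):", hist[-1])
print("r_k around k = 100:", hist[100][2] / hist[99][2])
```

With these hypothetical values all three errors decay linearly, and the printed ratio should lie close to $1-\eta=0.9$, in line with (15); this agreement is specific to the toy problem and is not a substitute for the analysis that follows.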
Consensus error norm: First, note that, using the state/parameter update equation of (5) and $W\mathbf{1}=\mathbf{1}$, we have

$$x_{k+1}-\mathbf{1}\bar x_{k+1}=Wx_k-\mathbf{1}\bar x_k-\eta\Big(I-\frac{1}{N}\mathbf{1}\mathbf{1}^\top\Big)y_k,$$

where $I$ denotes the $N\times N$ identity matrix. Noting that $\big\|I-\frac{1}{N}\mathbf{1}\mathbf{1}^\top\big\|_2\leqslant 1$ for all $N>1$, $\|y_k\|\leqslant\frac{1}{\mu}\|s_k\|$, and $\|Wx_k-\mathbf{1}\bar x_k\|\leqslant\sigma\|x_k-\mathbf{1}\bar x_k\|$, it follows that

$$\|x_{k+1}-\mathbf{1}\bar x_{k+1}\|\leqslant\sigma\|x_k-\mathbf{1}\bar x_k\|+\frac{\eta}{\mu}\|s_k\|.$$

Finally, using (ii) of Lemma 1, we have

$$\|x_{k+1}-\mathbf{1}\bar x_{k+1}\|\leqslant\Big(\sigma+\frac{\eta L}{\mu}\Big)\|x_k-\mathbf{1}\bar x_k\|+\frac{\eta}{\mu}\|s_k-\mathbf{1}g_k\|+\frac{\eta L}{\mu}\sqrt{N}\|\bar x_k-x^\star\|.$$

Gradient tracking error norm: Next, using a result from [6], we have

$$\|s_{k+1}-\mathbf{1}g_{k+1}\|\leqslant\sigma\|s_k-\mathbf{1}g_k\|+L\|x_{k+1}-x_k\|.$$

Next, by using $x_{k+1}-x_k=(W-I)x_k-\eta y_k=(W-I)(x_k-\mathbf{1}\bar x_k)-\eta y_k$ and $\|W-I\|_2\leqslant 2$, we get (after substituting $\|y_k\|\leqslant\frac{1}{\mu}\|s_k\|$, again using (ii) of Lemma 1, and some rearranging)

$$\|s_{k+1}-\mathbf{1}g_{k+1}\|\leqslant\Big(2L+\frac{\eta L^2}{\mu}\Big)\|x_k-\mathbf{1}\bar x_k\|+\Big(\sigma+\frac{\eta L}{\mu}\Big)\|s_k-\mathbf{1}g_k\|+\frac{\eta L^2}{\mu}\sqrt{N}\|\bar x_k-x^\star\|.$$

Optimality gap norm: To simplify the following analysis, we use $\tilde x_k=\bar x_k^\top$, $\tilde x^\star=(x^\star)^\top$, and $\tilde s_i^k=(s_i^k)^\top$. Once again, averaging both sides of the state/parameter update equation in (5), and using the shorthand notation $\tilde H_i^k=\nabla^2 f_i(x_i^k)$ for the local Hessians (note that these matrices are symmetric positive definite), we can write

$$\begin{aligned}\tilde x_{k+1}-\tilde x^\star&=\tilde x_k-\tilde x^\star-\eta\frac{1}{N}\sum_{i=1}^{N}(\tilde H_i^k)^{-1}\tilde s_i^k\\&=\tilde x_k-\tilde x^\star-\eta\frac{1}{N}\sum_{i=1}^{N}(\tilde H_i^k)^{-1}\big(\tilde s_i^k-\nabla f(\tilde x_k)+\nabla f(\tilde x_k)\big),\end{aligned}$$

where $\nabla f(\tilde x_k)=(\nabla f(\bar x_k))^\top$.
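Define

$$\bar H_i^k:=(\tilde H_i^k)^{-1}\int_0^1\nabla^2 f\big(\tilde x^\star+\lambda(\tilde x_k-\tilde x^\star)\big)\,d\lambda,$$

so that, since $\nabla f(\tilde x^\star)=0$, we have $(\tilde H_i^k)^{-1}\nabla f(\tilde x_k)=\bar H_i^k(\tilde x_k-\tilde x^\star)$. Consequently, one can write

$$\tilde x_{k+1}-\tilde x^\star=\Big(I-\frac{\eta}{N}\sum_{i=1}^{N}\bar H_i^k\Big)(\tilde x_k-\tilde x^\star)-\eta\frac{1}{N}\sum_{i=1}^{N}(\tilde H_i^k)^{-1}\big(\tilde s_i^k-\nabla f(\tilde x_k)\big).$$

Using a similar technique as in the proof of Lemma 2, one can show that $\frac{\mu}{L}I\preceq\bar H_i^k\preceq\frac{L}{\mu}I$, and hence

$$\Big(1-\frac{\eta L}{\mu}\Big)I\preceq I-\frac{\eta}{N}\sum_{i=1}^{N}\bar H_i^k\preceq\Big(1-\frac{\eta\mu}{L}\Big)I.$$

It follows that if $\eta\leqslant\frac{\mu}{L}$, then $0\preceq I-\frac{\eta}{N}\sum_{i=1}^{N}\bar H_i^k\preceq\big(1-\frac{\eta\mu}{L}\big)I$, and finally $\big\|I-\frac{\eta}{N}\sum_{i=1}^{N}\bar H_i^k\big\|_2\leqslant 1-\frac{\eta\mu}{L}$. This allows us to write

$$\|\tilde x_{k+1}-\tilde x^\star\|\leqslant\Big(1-\frac{\eta\mu}{L}\Big)\|\tilde x_k-\tilde x^\star\|+\Big\|\eta\frac{1}{N}\sum_{i=1}^{N}(\tilde H_i^k)^{-1}\big(\tilde s_i^k-\nabla f(\tilde x_k)\big)\Big\|.$$

Denoting $S_a=\big\|\eta\frac{1}{N}\sum_{i=1}^{N}(\tilde H_i^k)^{-1}(\tilde s_i^k-\nabla f(\tilde x_k))\big\|$, and using the strong convexity of the local cost functions together with the fact that a vector and its transpose have the same norm, it is easy to show that

$$\begin{aligned}S_a&\leqslant\frac{\eta}{\mu}\frac{1}{N}\sum_{i=1}^{N}\big\|s_i^k-\nabla f(\bar x_k)\big\|\leqslant\frac{\eta}{\mu}\frac{1}{N}\sum_{i=1}^{N}\big\|s_i^k-\nabla f(x_k)+\nabla f(x_k)-\nabla f(\bar x_k)\big\|\\&\leqslant\frac{\eta}{\mu}\frac{1}{N}\sum_{i=1}^{N}\Big(\big\|s_i^k-\nabla f(x_k)\big\|+\big\|\nabla f(x_k)-\nabla f(\bar x_k)\big\|\Big)\leqslant\frac{\eta}{\mu}\frac{1}{N}\sum_{i=1}^{N}\big\|s_i^k-g_k\big\|+\frac{\eta L}{\mu\sqrt{N}}\|x_k-\mathbf{1}\bar x_k\|,\end{aligned}$$

where we have used the Lipschitz continuity of the gradients. Finally, noting again that $\|\tilde x_k-\tilde x^\star\|=\|\bar x_k-x^\star\|$, and

$$\frac{1}{N}\sum_{i=1}^{N}\big\|s_i^k-g_k\big\|\leqslant\sqrt{\frac{1}{N}\sum_{i=1}^{N}\big\|s_i^k-g_k\big\|^2}=\frac{\|s_k-\mathbf{1}g_k\|}{\sqrt{N}},$$

we have

$$\sqrt{N}\|\bar x_{k+1}-x^\star\|\leqslant\Big(1-\frac{\eta\mu}{L}\Big)\sqrt{N}\|\bar x_k-x^\star\|+\frac{\eta L}{\mu}\|x_k-\mathbf{1}\bar x_k\|+\frac{\eta}{\mu}\|s_k-\mathbf{1}g_k\|.$$

Combining the three inequalities for the three error norms, we obtain the inequality in (12).

APPENDIX II
PROOF OF THEOREM 2

In order to analyze the spectral radius of the matrix $G(\eta)$ defined in (12), we first recall that, since $G(\eta)$ is a positive matrix for $\eta>0$, its spectral radius is, by the Perron–Frobenius theorem, a real and positive eigenvalue of $G(\eta)$. Therefore, we need to investigate under what conditions on the step size $\eta$ this real eigenvalue lies between $0$ and $1$.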
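The behavior of $\rho(G(\eta))$ can be checked numerically. The following minimal sketch assumes that the rows of $G(\eta)$ are read off from the three inequalities derived in Appendix I, in the order consensus error, gradient-tracking error, and $\sqrt{N}$ times the optimality gap (so that $G(0)$ has diagonal entries $\sigma,\sigma,1$); the constants $L$, $\mu$, $\sigma$ are hypothetical. Because $G(\eta)$ is positive for $\eta>0$, plain power iteration from a positive starting vector converges to the Perron root, which is $\rho(G(\eta))$.

```python
def G(eta, L, mu, sigma):
    """G(eta) assembled from the three bounds of Appendix I (assumed reading of (12))."""
    return [
        [sigma + eta * L / mu, eta / mu, eta * L / mu],
        [2 * L + eta * L**2 / mu, sigma + eta * L / mu, eta * L**2 / mu],
        [eta * L / mu, eta / mu, 1 - eta * mu / L],
    ]


def perron_root(M, iters=2000):
    """Power iteration with l1 normalization for a nonnegative matrix whose
    dominant eigenvalue is simple and reachable from the all-ones vector."""
    v = [1.0, 1.0, 1.0]
    rho = 0.0
    for _ in range(iters):
        w = [sum(mij * vj for mij, vj in zip(row, v)) for row in M]
        rho = sum(w) / sum(v)          # growth factor of the current iterate
        total = sum(w)
        v = [wi / total for wi in w]
    return rho


L, mu, sigma = 1.0, 0.5, 0.5           # hypothetical constants (kappa = L/mu = 2)
for eta in (0.0, 0.004, 0.05):
    print(eta, perron_root(G(eta, L, mu, sigma)))
```

For these constants the sketch returns $\rho(G(0))=1$, a value just below 1 for a small positive step size, and a value above 1 for a larger one, which is the qualitative picture established in the rest of this proof.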
First, note that at $\eta=0$ the eigenvalues of $G(0)$ are $\sigma$, $\sigma$, and $1$, so that $\rho(G(0))=1$. Since eigenvalues depend continuously on the entries of the matrix, $\rho(G(\eta))$ is a continuous function of $\eta$. Next, we evaluate the derivative of $\rho(G(\eta))$ with respect to $\eta$ at $\eta=0$. For simplicity, define $\alpha=1-\frac{\eta\mu}{L}$, $\beta=\sigma+\frac{\eta L}{\mu}$, and $\delta=\frac{\eta L}{\mu}$. By expanding the characteristic polynomial of $G(\eta)$, one finds that an eigenvalue $\lambda$ of $G(\eta)$ satisfies

$$(\lambda-\alpha)(\lambda-\beta)^2-(\lambda-\alpha)(2\delta+\delta^2)-2\delta^2(\lambda-\beta)-2\delta^3-2\delta^2=0.$$

Taking the derivative with respect to $\eta$, and substituting $\lambda=1$ and $\delta=0$ at $\eta=0$, one obtains after some algebra

$$\Big(\frac{\partial\lambda}{\partial\eta}+\frac{\mu}{L}\Big)(1-\sigma)^2=0.$$

Since $\sigma<1$, this implies $\frac{\partial\lambda}{\partial\eta}=-\frac{\mu}{L}<0$ at $\eta=0$. Therefore, by continuity of the eigenvalues as functions of the matrix entries, the largest eigenvalue remains real and decreases as $\eta$ increases slightly from $0$. Since the other two eigenvalues equal $\sigma<1$ at $\eta=0$, we obtain $\rho(G(\eta))<1$ for sufficiently small $\eta>0$.

Next, we check for which values of $\eta$ the characteristic polynomial has the root $\lambda=1$. As we saw earlier, one such value is $\eta=0$. Substituting $\lambda=1$ (so that $\lambda-\alpha=\frac{\eta\mu}{L}$ and $\lambda-\beta=1-\sigma-\frac{\eta L}{\mu}$) into the characteristic polynomial, writing $\kappa=L/\mu$, and dividing by $\eta$ to discard the solution $\eta=0$, we get

$$\frac{1}{\kappa}(1-\sigma-\eta\kappa)^2-\frac{1}{\kappa}(2\eta\kappa+\eta^2\kappa^2)-2\eta\kappa^2(1-\sigma-\eta\kappa)-2\eta^2\kappa^3-2\eta\kappa^2=0.$$

Expanding, the terms quadratic in $\eta$ cancel, and the equation reduces to $\frac{(1-\sigma)^2}{\kappa}-2\eta(2-\sigma)(1+\kappa^2)=0$, which yields the single solution

$$\bar\eta=\frac{(1-\sigma)^2\,\mu}{2L(2-\sigma)\big(1+(L/\mu)^2\big)}.$$
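Both the characteristic polynomial and the two facts derived from it (the slope $\partial\lambda/\partial\eta=-\mu/L$ at $\eta=0$ and the step size at which $\lambda=1$ becomes an eigenvalue again) can be cross-checked numerically. The sketch below is a minimal check under the same assumed reading of $G(\eta)$ as in Appendix I and with hypothetical constants: it compares a direct cofactor expansion of $\det(\lambda I-G(\eta))$ with the polynomial in $\alpha,\beta,\delta$, and locates both quantities by bisection rather than from a closed form.

```python
def G(eta, L, mu, sigma):
    """G(eta) assembled from the three bounds of Appendix I (assumed reading of (12))."""
    return [
        [sigma + eta * L / mu, eta / mu, eta * L / mu],
        [2 * L + eta * L**2 / mu, sigma + eta * L / mu, eta * L**2 / mu],
        [eta * L / mu, eta / mu, 1 - eta * mu / L],
    ]


def char_det(lam, eta, L, mu, sigma):
    """det(lam*I - G(eta)) by cofactor expansion along the first row."""
    g = G(eta, L, mu, sigma)
    m = [[(lam if i == j else 0.0) - g[i][j] for j in range(3)] for i in range(3)]
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def char_poly_text(lam, eta, L, mu, sigma):
    """The polynomial in alpha, beta, delta as written in the proof."""
    alpha, beta, delta = 1 - eta * mu / L, sigma + eta * L / mu, eta * L / mu
    return ((lam - alpha) * (lam - beta) ** 2 - (lam - alpha) * (2 * delta + delta**2)
            - 2 * delta**2 * (lam - beta) - 2 * delta**3 - 2 * delta**2)


def bisect(f, lo, hi, iters=200):
    """Root of f on [lo, hi], assuming f(lo) and f(hi) have opposite signs."""
    flo = f(lo)
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        fmid = f(mid)
        if (fmid > 0) == (flo > 0):
            lo, flo = mid, fmid
        else:
            hi = mid
    return 0.5 * (lo + hi)


L, mu, sigma = 1.0, 0.5, 0.5   # hypothetical constants
# Positive step size at which lambda = 1 is again an eigenvalue.
eta_threshold = bisect(lambda e: char_det(1.0, e, L, mu, sigma), 1e-6, 1.0)
# Slope of the eigenvalue that starts at 1, estimated at a tiny step size h.
h = 1e-6
lam_h = bisect(lambda lam: char_det(lam, h, L, mu, sigma), 0.9, 1.0)
print("threshold eta:", eta_threshold)
print("d lambda / d eta at 0:", (lam_h - 1.0) / h, "vs -mu/L =", -mu / L)
```

For these constants the numerically located threshold can be compared with the closed-form expression for $\bar\eta$, and it is far below $\mu/L=0.5$, consistent with the remark that follows.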
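Since at $\eta=0$ the eigenvalues are $1,\sigma,\sigma$, the spectral radius is less than $1$ for $\eta>0$ in the immediate vicinity of $0$, and $\eta=\bar\eta$ is the only other value at which an eigenvalue can equal $1$, we conclude that the magnitudes of all the eigenvalues (and hence the spectral radius) are less than $1$ for $0<\eta<\bar\eta$. Indeed, $\rho(G(\eta))$ is itself a positive eigenvalue of $G(\eta)$ and varies continuously with $\eta$, so it cannot cross $1$ unless $1$ is an eigenvalue. By noting that $\bar\eta<\frac{\mu}{L}$ (thus satisfying the global convergence condition on the step size for the damped Newton method), and substituting $\kappa=\frac{L}{\mu}$, we complete the proof of Theorem 2.

APPENDIX III
PROOF OF THEOREM 3

Similar to the optimality error norm proof of Theorem 1, we work with the transposed notations $\tilde x_k$, $\tilde x^\star$, $\tilde s_i^k$, and $\nabla f(\tilde x_k)$. We adapt a proof technique similar to that presented in [24]. As before, we can write

$$\begin{aligned}\tilde x_{k+1}-\tilde x^\star&=\tilde x_k-\tilde x^\star-\eta\frac{1}{N}\sum_{i=1}^{N}(\tilde H_i^k)^{-1}\big(\tilde s_i^k-\nabla f(\tilde x_k)+\nabla f(\tilde x_k)\big)\\&=\tilde x_k-\tilde x^\star\ \underbrace{-\,\eta\frac{1}{N}\sum_{i=1}^{N}(\tilde H_i^k)^{-1}\big(\tilde s_i^k-\nabla f(\tilde x_k)\big)}_{S_1}\ -\ \underbrace{\eta\frac{1}{N}\sum_{i=1}^{N}(\tilde H_i^k)^{-1}\nabla f(\tilde x_k)}_{S_2}.\end{aligned}$$

We note that $S_2$ can be written as

$$S_2=\eta\big(H_{\mathrm{app}}(x_k)\big)^{-1}\int_0^1\nabla^2 f\big(\tilde x^\star+\lambda(\tilde x_k-\tilde x^\star)\big)\,d\lambda\,(\tilde x_k-\tilde x^\star),$$

where, consistently with the definition of $S_2$, $\big(H_{\mathrm{app}}(x_k)\big)^{-1}=\frac{1}{N}\sum_{i=1}^{N}(\tilde H_i^k)^{-1}$. Defining $\delta(\tilde x_k):=\int_0^1\nabla^2 f\big(\tilde x^\star+\lambda(\tilde x_k-\tilde x^\star)\big)\,d\lambda$, this allows us to write

$$\begin{aligned}\tilde x_{k+1}-\tilde x^\star&=\Big(I-\eta\big(H_{\mathrm{app}}(x_k)\big)^{-1}\delta(\tilde x_k)\Big)(\tilde x_k-\tilde x^\star)+S_1\\&=(1-\eta)(\tilde x_k-\tilde x^\star)+\eta\big(H_{\mathrm{app}}(x_k)\big)^{-1}\big[H_{\mathrm{app}}(x_k)-\delta(\tilde x_k)\big](\tilde x_k-\tilde x^\star)+S_1.\end{aligned}$$

It then follows from strong convexity and $\eta<1$ that

$$\|\tilde x_{k+1}-\tilde x^\star\|\leqslant\Big[(1-\eta)+\frac{\eta}{\mu}\big\|H_{\mathrm{app}}(x_k)-\delta(\tilde x_k)\big\|\Big]\|\tilde x_k-\tilde x^\star\|+\|S_1\|.\tag{16}$$

One can now show (recalling that $\|v^\top\|=\|v\|$ for a vector $v$) that

$$\begin{aligned}\big\|H_{\mathrm{app}}(x_k)-\delta(\tilde x_k)\big\|&\leqslant\big\|H_{\mathrm{app}}(x_k)-H_{\mathrm{tr}}(x_k)\big\|+\big\|H_{\mathrm{tr}}(x_k)-\delta(\tilde x_k)\big\|\\&\leqslant\gamma+\Big\|\int_0^1\big(\nabla^2 f(x_k)-\nabla^2 f(\tilde x_k)\big)\,d\lambda\Big\|+\Big\|\int_0^1\big(\nabla^2 f(\tilde x_k)-\nabla^2 f(\tilde x^\star+\lambda(\tilde x_k-\tilde x^\star))\big)\,d\lambda\Big\|\\&\leqslant\gamma+\frac{\bar L}{\sqrt{N}}\|x_k-\mathbf{1}\bar x_k\|+\frac{\bar L}{2}\|\bar x_k-x^\star\|,\end{aligned}$$

where the last term in the last inequality is due to the Lipschitz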
continuity of the global Hessian. Using the above result in (16), we obtain (observing that, since $\gamma<\mu$ and $\eta<1$, $\eta(1-\gamma/\mu)<1$)

$$\|\bar x_{k+1}-x^\star\|\leqslant\Big(1-\eta\Big(1-\frac{\gamma}{\mu}\Big)\Big)\|\bar x_k-x^\star\|+\frac{\eta\bar L}{\mu\sqrt{N}}\|x_k-\mathbf{1}\bar x_k\|\,\|\bar x_k-x^\star\|+\frac{\eta\bar L}{2\mu}\|\bar x_k-x^\star\|^2+\|S_1\|.$$

Finally, noting that $\|S_1\|$ is the same as the quantity $S_a$ defined in the proof of Theorem 1, we obtain the final expression for the upper bound on $\|\bar x_{k+1}-x^\star\|$ given in (14).

REFERENCES

[1] L. Yuan, Z. Wang, L. Sun, P. S. Yu, and C. G. Brinton, "Decentralized federated learning: A survey and perspective," IEEE Internet of Things Journal, vol. 11, no. 21, pp. 34617–34638, 2024.
[2] C. Liu, N. Bastianello, W. Huo, Y. Shi, and K. H. Johansson, "A survey on secure decentralized optimization and learning," arXiv preprint arXiv:2408.08628, 2024.
[3] T. Yang, X. Yi, J. Wu, Y. Yuan, D. Wu, Z. Meng, Y. Hong, H. Wang, Z. Lin, and K. H. Johansson, "A survey of distributed optimization," Annual Reviews in Control, vol. 47, pp. 278–305, 2019. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S1367578819300082
[4] A. Nedić and J. Liu, "Distributed optimization for control," Annual Review of Control, Robotics, and Autonomous Systems, vol. 1, no. 1, pp. 77–103, 2018.
[5] A. Nedić, "Distributed gradient methods for convex machine learning problems in networks: Distributed optimization," IEEE Signal Processing Magazine, vol. 37, no. 3, pp. 92–101, 2020.
[6] G. Qu and N. Li, "Harnessing smoothness to accelerate distributed optimization," IEEE Transactions on Control of Network Systems, vol. 5, no. 3, pp. 1245–1260, 2018.
[7] C. Xi, V. S. Mai, R. Xin, E. H. Abed, and U. A. Khan, "Linear convergence in optimization over directed graphs with row-stochastic matrices," IEEE Transactions on Automatic Control, vol. 63, no. 10, pp. 3558–3565, 2018.
[8] C. T. Dinh, N. H. Tran, T. D. Nguyen, W. Bao, A. R. Balef, B. B.
Zhou, and A. Y. Zomaya, "DONE: Distributed approximate Newton-type method for federated edge learning," IEEE Transactions on Parallel and Distributed Systems, vol. 33, no. 11, pp. 2648–2660, 2022.
[9] G. Sharma and S. Dey, "On analog distributed approximate Newton with determinantal averaging," in 2022 IEEE 33rd Annual International Symposium on Personal, Indoor and Mobile Radio Communications (PIMRC). IEEE, 2022, pp. 1–7.
[10] M. Safaryan, R. Islamov, X. Qian, and P. Richtárik, "FedNL: Making Newton-type methods applicable to federated learning," in 39th International Conference on Machine Learning, ICML 2022, 2022.
[11] M. Sen, A. Qin, and K. M. C, "FAGH: Accelerating federated learning with approximated global Hessian," arXiv e-prints, pp. arXiv–2403, 2024.
[12] A. Mokhtari, Q. Ling, and A. Ribeiro, "Network Newton distributed optimization methods," IEEE Transactions on Signal Processing, vol. 65, no. 1, pp. 146–161, 2016.
[13] D. Bajovic, D. Jakovetic, N. Krejic, and N. K. Jerinkic, "Newton-like method with diagonal correction for distributed optimization," SIAM Journal on Optimization, vol. 27, no. 2, pp. 1171–1203, 2017.
[14] N. Bof, R. Carli, G. Notarstefano, L. Schenato, and D. Varagnolo, "Multiagent Newton–Raphson optimization over lossy networks," IEEE Transactions on Automatic Control, vol. 64, no. 7, pp. 2983–2990, 2018.
[15] J. Zhang, Q. Ling, and A. M.-C. So, "A Newton tracking algorithm with exact linear convergence for decentralized consensus optimization," IEEE Transactions on Signal and Information Processing over Networks, vol. 7, pp. 346–358, 2021.
[16] H. Ye, C. He, and X. Chang, "Accelerated distributed approximate Newton method," IEEE Transactions on Neural Networks and Learning Systems, vol. 34, no. 11, pp. 8642–8653, 2022.
[17] O. Shamir, N. Srebro, and T.
Zhang, "Communication-efficient distributed optimization using an approximate Newton-type method," in International Conference on Machine Learning. PMLR, 2014, pp. 1000–1008.
[18] Z. Qu, X. Li, L. Li, and Y. Hong, "Distributed second-order method with diffusion strategy," in 2023 IEEE International Conference on Systems, Man, and Cybernetics (SMC). IEEE, 2023, pp. 2022–2027.
[19] A. Maritan, G. Sharma, S. Dey, and L. Schenato, "Fully-distributed optimization with Network Exact Consensus-GIANT," in 2024 IEEE 25th International Workshop on Signal Processing Advances in Wireless Communications (SPAWC). IEEE, 2024, pp. 436–440.
[20] J. Zhang, K. You, and T. Başar, "Distributed adaptive Newton methods with global superlinear convergence," Automatica, vol. 138, p. 110156, 2022. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S0005109821006865
[21] A. Maritan, G. Sharma, L. Schenato, and S. Dey, "Network-GIANT: Fully distributed Newton-type optimization via harmonic Hessian consensus," in 2023 IEEE Globecom Workshops (GC Wkshps), 2023, pp. 902–907.
[22] S. Wang, F. Roosta, P. Xu, and M. W. Mahoney, "GIANT: Globally improved approximate Newton method for distributed optimization," Advances in Neural Information Processing Systems, vol. 31, 2018.
[23] L. Xiao, S. Boyd, and S.-J. Kim, "Distributed average consensus with least-mean-square deviation," Journal of Parallel and Distributed Computing, vol. 67, no. 1, pp. 33–46, 2007.
[24] N. Agarwal, B. Bullins, and E. Hazan, "Second-order stochastic optimization for machine learning in linear time," Journal of Machine Learning Research, vol. 18, no. 116, pp. 1–40, 2017. [Online]. Available: http://jmlr.org/papers/v18/16-491.html
[25] S. Boyd and L. Vandenberghe, Convex Optimization. Cambridge University Press, March 2004.
[26] B. Polyak, "Introduction to Optimization," Translations Series in Mathematics and Engineering. New York: Optimization Software Inc. Publications Division, 1987.
[27] B. Li, S. Cen, Y. Chen, and Y. Chi, "Communication-efficient distributed optimization in networks with gradient tracking and variance reduction," The Journal of Machine Learning Research, vol. 21, no. 1, 2020.
[28] A. Mokhtari, Q. Ling, and A. Ribeiro, "Network Newton distributed optimization methods," IEEE Transactions on Signal Processing, 2016.
[29] M. A. Erdogdu and A. Montanari, "Convergence rates of sub-sampled Newton methods," in Advances in Neural Information Processing Systems, C. Cortes, N. Lawrence, D. Lee, M. Sugiyama, and R. Garnett, Eds., vol. 28. Curran Associates, Inc., 2015.
[30] G. Qu and N. Li, "Accelerated distributed Nesterov gradient descent," IEEE Transactions on Automatic Control, vol. 65, no. 6, pp. 2566–2581, 2019.
[31] D. Dua and C. Graff, "UCI Machine Learning Repository," 2019. [Online]. Available: http://archive.ics.uci.edu/ml
[32] L. Xiao, S. Boyd, and S.-J. Kim, "Distributed average consensus with least-mean-square deviation," Journal of Parallel and Distributed Computing, vol. 67, no. 1, pp. 33–46, 2007.
[33] T. Hastie, R. Tibshirani, and J. Friedman, The Elements of Statistical Learning: Data Mining, Inference, and Prediction, 2nd ed., ser. Springer Series in Statistics. New York: Springer, 2009. [Online]. Available: https://doi.org/10.1007/978-0-387-84858-7

Souvik Das is a postdoctoral researcher in the Department of Electrical Engineering at Uppsala University, Sweden. He received his doctoral degree from the Centre for Systems and Control at IIT Bombay in 2025. His research interests are broadly in the field of optimization and optimization-based control, and security and privacy of cyber-physical systems.

Luca Schenato (Fellow, IEEE) received the Dr.Eng. degree in electrical engineering from the University of Padova, Padova, Italy, in 1999, and the Ph.D.
degree in electrical engineering and computer sciences from the University of California (UC), Berkeley, Berkeley, CA, USA, in 2003. He was a Postdoctoral Researcher in 2004 and a Visiting Professor during 2013–2014 with UC Berkeley. He is currently a Full Professor with the Information Engineering Department, University of Padova. His interests include networked control systems, multi-agent systems, wireless sensor networks, distributed optimisation, and synthetic biology. Luca Schenato has been awarded the 2004 Researchers Mobility Fellowship by the Italian Ministry of Education, University and Research (MIUR), the 2006 Eli Jury Award at UC Berkeley, and the EUCA European Control Award in 2014, and was named IEEE Fellow in 2017. He served as Associate Editor for IEEE Trans. on Automatic Control from 2010 to 2014, and he is currently Senior Editor for IEEE Trans. on Control of Network Systems and Associate Editor for Automatica.

Subhrakanti Dey (Fellow, IEEE) received the Ph.D. degree from the Department of Systems Engineering, Research School of Information Sciences and Engineering, Australian National University, Canberra, in 1996. He is currently a Professor and Head of the Signals and Systems division in the Department of Electrical Engineering at Uppsala University, Sweden. He has also held professorial positions at NUI Maynooth, Ireland, and the University of Melbourne, Australia. His current research interests include networked control systems, distributed machine learning and optimization, and detection and estimation theory for wireless sensor networks. He is currently a Senior Editor for IEEE Transactions on Control of Network Systems and IEEE Transactions on Signal and Information Processing over Networks, and an Associate Editor for Automatica. He also served as a Senior Editor for IEEE Control Systems Letters until 2025.
