Generation of Uncertainty-Aware High-Level Spatial Concepts in Factorized 3D Scene Graphs via Graph Neural Networks

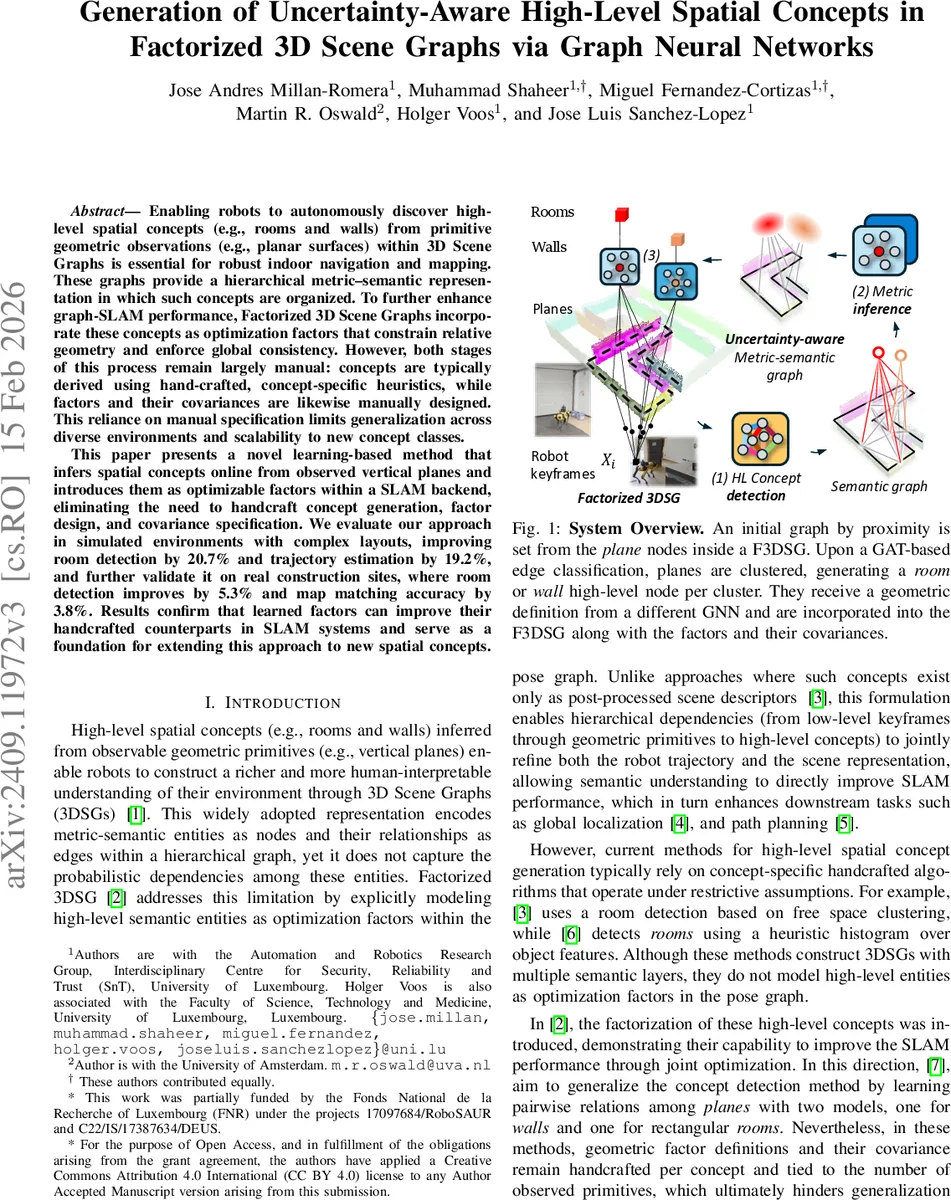

Enabling robots to autonomously discover high-level spatial concepts (e.g., rooms and walls) from primitive geometric observations (e.g., planar surfaces) within 3D Scene Graphs is essential for robust indoor navigation and mapping. These graphs provide a hierarchical metric-semantic representation in which such concepts are organized. To further enhance graph-SLAM performance, Factorized 3D Scene Graphs incorporate these concepts as optimization factors that constrain relative geometry and enforce global consistency. However, both stages of this process remain largely manual: concepts are typically derived using hand-crafted, concept-specific heuristics, while factors and their covariances are likewise manually designed. This reliance on manual specification limits generalization across diverse environments and scalability to new concept classes. This paper presents a novel learning-based method that infers spatial concepts online from observed vertical planes and introduces them as optimizable factors within a SLAM backend, eliminating the need to handcraft concept generation, factor design, and covariance specification. We evaluate our approach in simulated environments with complex layouts, improving room detection by 20.7% and trajectory estimation by 19.2%, and further validate it on real construction sites, where room detection improves by 5.3% and map matching accuracy by 3.8%. Results confirm that learned factors can outperform their handcrafted counterparts in SLAM systems and serve as a foundation for extending this approach to new spatial concepts.

💡 Research Summary

The paper introduces a learning‑based framework that automatically discovers high‑level spatial concepts such as rooms and walls from observed vertical planes and integrates them as uncertainty‑aware factors into a Factorized 3D Scene Graph (F3DSG) SLAM backend. Traditional F3DSG pipelines rely on hand‑crafted heuristics to generate concepts and manually designed factors with fixed covariances, which hampers scalability to new environments and concept classes. To overcome this, the authors propose a two‑stage Graph Neural Network (GNN) architecture.

The first stage, called Sem‑GAT, builds a directed k‑nearest‑neighbor proximity graph over plane nodes using centroid distances. It then performs message passing to update node and edge embeddings, and a final MLP decoder classifies each edge as “same‑room”, “same‑wall”, or “none”. Epistemic uncertainty is estimated via Monte Carlo dropout, and only edges whose predicted confidence exceeds a threshold are retained. The retained edges drive a community detection step that clusters planes into candidate rooms (via modularity maximization over same‑room edges) and candidate walls (each same‑wall edge forms one wall candidate). Temporal stabilization then matches current communities to tracked ones by intersection over union (IoU) and maintains an exponential moving average of their confidence, promoting a community to a high‑level node only after it has been observed consistently.
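The post-classification steps of this stage can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the class order in `LABELS`, all function names, `k=2`, and the `0.7` confidence threshold are hypothetical; the Sem‑GAT network itself is replaced by pre-computed softmax samples per directed edge (what Monte Carlo dropout would produce); and modularity maximization is done with a simple greedy agglomerative merge, which may differ from the community detection algorithm actually used. Temporal stabilization is not shown.

```python
import math
from collections import defaultdict

# Hypothetical class order of the edge decoder's softmax output.
LABELS = ("none", "same_room", "same_wall")


def knn_edges(centroids, k):
    """Directed k-nearest-neighbour edges (i -> j) between 2-D plane centroids."""
    edges = []
    for i, (xi, yi) in enumerate(centroids):
        dists = sorted(
            (math.hypot(xi - xj, yi - yj), j)
            for j, (xj, yj) in enumerate(centroids)
            if j != i
        )
        edges.extend((i, j) for _, j in dists[:k])
    return edges


def mc_dropout_label(samples):
    """Average T softmax vectors sampled with dropout active; return (label, confidence)."""
    mean = [sum(s[c] for s in samples) / len(samples) for c in range(len(LABELS))]
    best = max(range(len(LABELS)), key=lambda c: mean[c])
    return LABELS[best], mean[best]


def greedy_modularity(nodes, edges):
    """Greedy agglomerative modularity maximisation on an undirected, unweighted graph."""
    und = {frozenset(e) for e in edges if e[0] != e[1]}  # drop direction and duplicates
    communities = [{n} for n in nodes]
    m = len(und)
    if m == 0:
        return communities
    deg = defaultdict(int)
    for e in und:
        for n in e:
            deg[n] += 1
    while True:
        best_gain, best_pair = 0.0, None
        for a in range(len(communities)):
            for b in range(a + 1, len(communities)):
                ca, cb = communities[a], communities[b]
                l_ab = sum(1 for e in und if e & ca and e & cb)  # edges between a and b
                if l_ab == 0:
                    continue
                d_a = sum(deg[n] for n in ca)
                d_b = sum(deg[n] for n in cb)
                gain = l_ab / m - d_a * d_b / (2 * m * m)  # modularity change if merged
                if gain > best_gain:
                    best_gain, best_pair = gain, (a, b)
        if best_pair is None:  # no merge increases modularity
            return communities
        a, b = best_pair
        merged = communities.pop(b)  # b > a, so index a stays valid
        communities[a] |= merged


def cluster_planes(centroids, edge_samples, k=2, threshold=0.7):
    """Rooms via modularity on confident same-room edges; one wall per same-wall edge."""
    room_edges, walls = [], set()
    for edge in knn_edges(centroids, k):
        if edge not in edge_samples:
            continue
        label, conf = mc_dropout_label(edge_samples[edge])
        if conf < threshold:  # discard uncertain edges
            continue
        if label == "same_room":
            room_edges.append(edge)
        elif label == "same_wall":
            walls.add(frozenset(edge))  # both directions map to one wall candidate
    communities = greedy_modularity(range(len(centroids)), room_edges)
    rooms = [c for c in communities if len(c) > 1]
    return rooms, walls
```

For instance, with planes at (0, 0), (1, 0), (0, 1) and (10, 10), (11, 10), (10, 11), confident same‑room samples on every kNN edge except the pair (0, 1), which is labeled same‑wall, the sketch returns rooms {0, 1, 2} and {3, 4, 5} and a single wall candidate {0, 1}. The naive greedy merge rescans all community pairs at every step, so it is only practical for small graphs.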

The second stage, Met‑GNN, predicts metric attributes for each high‑level node. For a given community, a star graph is formed from the community node and its incident plane nodes. A single hop of message passing from the planes to the community node, followed by mean pooling and two MLPs, regresses a 2‑D centroid. Monte Carlo dropout again yields a distribution over this centroid, whose per‑dimension variance quantifies geometric uncertainty.
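The data flow of this regression head can be illustrated with a toy, untrained network in pure Python. Everything here is an assumption for illustration: the plane feature layout (normal x, normal y, offset d), the layer sizes, the ReLU activations, the dropout rate `p=0.2`, and the class name `StarCentroidRegressor` are hypothetical, two single layers stand in for the two MLPs, and the random weights make the predicted coordinates meaningless. What the sketch does show is one message per plane leaf of the star, mean pooling at the community node, a 2‑D output, and Monte Carlo dropout repeated `samples` times to obtain a mean and a per-dimension variance.

```python
import random
import statistics


def linear(weights, bias, x):
    """Dense layer: weights is a list of rows, one per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x):
    return [max(0.0, v) for v in x]


def dropout(x, p, rng):
    """Inverted dropout that stays active at inference time for MC sampling."""
    if p == 0.0:
        return list(x)
    return [0.0 if rng.random() < p else v / (1.0 - p) for v in x]


def init_layer(n_out, n_in, rng):
    weights = [[rng.gauss(0.0, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


class StarCentroidRegressor:
    """Hypothetical Met-GNN-style head: plane messages -> mean pool -> 2-D centroid."""

    def __init__(self, in_dim=3, hidden=16, p=0.2, seed=0):
        rng = random.Random(seed)
        self.msg = init_layer(hidden, in_dim, rng)  # message function, plane -> community
        self.hid = init_layer(hidden, hidden, rng)  # first post-pooling layer
        self.out = init_layer(2, hidden, rng)  # second layer regresses (x, y)
        self.p = p

    def forward(self, planes, rng):
        messages = [dropout(relu(linear(*self.msg, f)), self.p, rng) for f in planes]
        pooled = [statistics.fmean(col) for col in zip(*messages)]  # mean over star leaves
        h = dropout(relu(linear(*self.hid, pooled)), self.p, rng)
        return linear(*self.out, h)

    def predict(self, planes, samples=30, seed=1):
        """Monte Carlo dropout: mean and per-dimension variance of the centroid."""
        rng = random.Random(seed)
        draws = [self.forward(planes, rng) for _ in range(samples)]
        mean = [statistics.fmean(d[i] for d in draws) for i in range(2)]
        var = [statistics.pvariance([d[i] for d in draws]) for i in range(2)]
        return mean, var
```

Calling `StarCentroidRegressor().predict(planes)` returns `([x, y], [var_x, var_y])`. With `p=0.0` every pass is identical and the variance collapses to zero, which is why dropout has to stay active at inference time for this kind of uncertainty estimate.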

Each generated high‑level node is attached to a factor: an N‑ary factor for rooms (connecting the room centroid to all its supporting planes) and a binary factor for walls (connecting the wall centroid to its two planes). The factor’s information matrix is constructed by combining the semantic confidence from Sem‑GAT and the geometric variance from Met‑GNN, thus producing data‑driven covariances rather than hand‑tuned ones. These learned factors are inserted into the factor graph used by the SLAM optimizer, allowing joint refinement of robot trajectory and scene representation.
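The summary does not give the exact formula that fuses the two uncertainties. One plausible construction, stated here purely as an assumption, scales the inverse Monte Carlo variances by the Sem‑GAT confidence, so a confident, tightly clustered prediction yields a stiff factor and an uncertain one a weak factor. The function names and the variance floor `min_var` are hypothetical; `weighted_error` is the squared Mahalanobis norm that least-squares factor-graph optimizers minimize for each factor (some libraries include an extra factor of 1/2).

```python
def information_matrix(confidence, variance, min_var=1e-4):
    """Hypothetical 2x2 information matrix for a 2-D centroid factor.

    confidence: semantic confidence from Sem-GAT, in [0, 1].
    variance:   per-dimension MC-dropout variance (var_x, var_y) from Met-GNN.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must lie in [0, 1]")
    var_x, var_y = (max(v, min_var) for v in variance)  # floor avoids infinite precision
    return [[confidence / var_x, 0.0], [0.0, confidence / var_y]]


def weighted_error(residual, info):
    """Squared Mahalanobis norm r^T * info * r of a 2-D residual."""
    return sum(residual[i] * info[i][j] * residual[j] for i in range(2) for j in range(2))
```

For example, `information_matrix(0.9, (0.04, 0.01))` gives a diagonal of roughly 22.5 and 90, and a residual of (0.1, -0.05) then costs about 0.45. Halving the confidence halves that cost, so the optimizer pulls less strongly toward the learned concept.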

Experiments were conducted in two settings. In simulated indoor environments with complex layouts, the proposed method increased room detection F1‑score by 20.7% and reduced trajectory RMSE by 19.2% compared to a baseline using handcrafted concepts and factors. In real‑world construction site data, room detection improved by 5.3% and map‑matching accuracy by 3.8%. These results demonstrate that learned, uncertainty‑aware factors can outperform manually designed counterparts and enhance overall SLAM performance.
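The summary reports percentages without stating whether they are relative or absolute changes, or exactly how each metric was computed. The helpers below are a generic sketch of the usual definitions (F1 from true positives, false positives and false negatives; trajectory RMSE over positions that are assumed to be already time-associated and aligned; relative change against the baseline) and are not taken from the paper. The function names are hypothetical and any numbers used with them are illustrative only.

```python
import math


def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall from detection counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0


def trajectory_rmse(estimated, ground_truth):
    """RMSE of position errors; assumes poses are already associated and aligned."""
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and equally long")
    sq = [sum((a - b) ** 2 for a, b in zip(p, q)) for p, q in zip(estimated, ground_truth)]
    return math.sqrt(sum(sq) / len(sq))


def relative_change(baseline, proposed, higher_is_better=True):
    """Percentage improvement of `proposed` over `baseline`."""
    delta = proposed - baseline if higher_is_better else baseline - proposed
    return 100.0 * delta / baseline
```

Under this relative reading, a hypothetical baseline RMSE of 0.50 that drops to 0.40 corresponds to about a 20% improvement via `relative_change(0.50, 0.40, higher_is_better=False)`.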

Key contributions include: (1) an end‑to‑end pipeline that generates high‑level spatial concepts online and models both semantic and geometric uncertainty, (2) modular GNN components for semantic edge classification and metric centroid regression that can be reused or extended, and (3) integration of learned factors with data‑driven covariances into a factor‑graph SLAM system, yielding measurable gains in accuracy and robustness.

Limitations are acknowledged: the current system only handles vertical planar primitives, limiting applicability to environments with non‑vertical or curved structures, and centroid regression is confined to 2‑D positions, which may be insufficient for fully 3‑D concept representations. Future work aims to incorporate diverse geometric primitives (e.g., curved surfaces), extend metric inference to full 3‑D pose and shape, and explore Bayesian GNNs for richer uncertainty modeling, thereby broadening the range of spatial concepts that can be learned and optimized within factorized scene graphs.
