SaVe-TAG: LLM-based Interpolation for Long-Tailed Text-Attributed Graphs

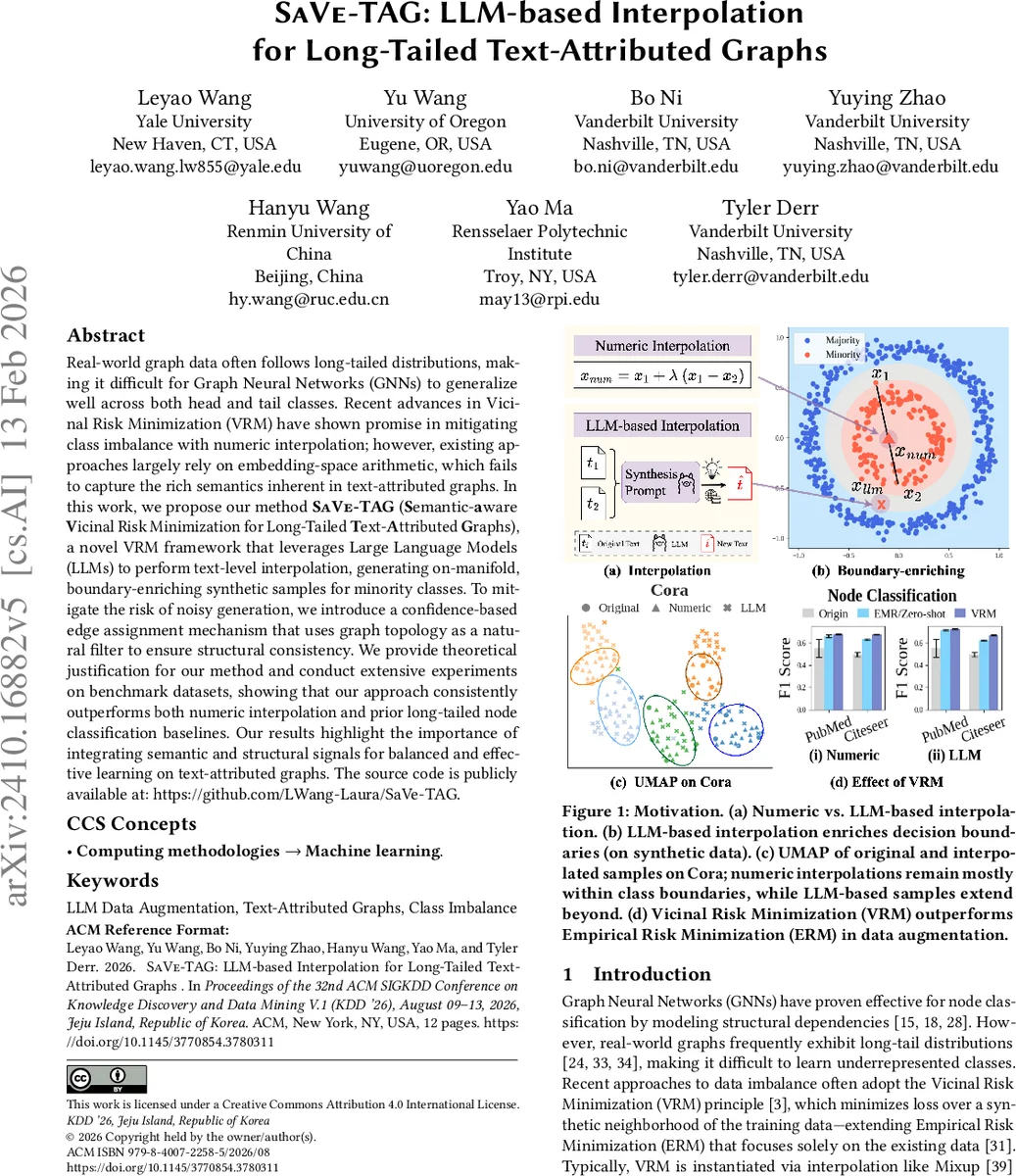

Real-world graph data often follows long-tailed distributions, making it difficult for Graph Neural Networks (GNNs) to generalize well across both head and tail classes. Recent advances in Vicinal Risk Minimization (VRM) have shown promise in mitigating class imbalance with numeric interpolation; however, existing approaches largely rely on embedding-space arithmetic, which fails to capture the rich semantics inherent in text-attributed graphs. In this work, we propose SaVe-TAG (Semantic-aware Vicinal Risk Minimization for Long-Tailed Text-Attributed Graphs), a novel VRM framework that leverages Large Language Models (LLMs) to perform text-level interpolation, generating on-manifold, boundary-enriching synthetic samples for minority classes. To mitigate the risk of noisy generation, we introduce a confidence-based edge assignment mechanism that uses graph topology as a natural filter to ensure structural consistency. We provide theoretical justification for our method and conduct extensive experiments on benchmark datasets, showing that our approach consistently outperforms both numeric interpolation and prior long-tailed node classification baselines. Our results highlight the importance of integrating semantic and structural signals for balanced and effective learning on text-attributed graphs. The source code is publicly available at: https://github.com/LWang-Laura/SaVe-TAG.

💡 Research Summary

SaVe‑TAG addresses the pervasive problem of long‑tailed class distributions in text‑attributed graphs (TAGs) by integrating Large Language Models (LLMs) into a Vicinal Risk Minimization (VRM) framework. Traditional VRM approaches for graph data, such as Mixup or SMOTE, operate on numeric embeddings and often violate the underlying semantic manifold of textual data, especially when class manifolds are non‑convex or multimodal. SaVe‑TAG introduces “vicinal twins”: pairs of same‑label nodes that are close in the original feature space (identified via k‑nearest‑neighbor search). For each twin pair, a class‑conditioned prompt is sent to an LLM (with a controllable temperature τ) to generate a synthetic text that inherits the twin’s label. Theoretical analysis (Theorem 3.2) guarantees that, assuming the LLM approximates the true class‑conditional distribution, the generated text lies on the class manifold with high probability (≥ 1 − δ − ε).
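The twin-selection step described above can be illustrated with a minimal sketch. It is not the authors' implementation: the Euclidean metric, the function names `vicinal_twins` and `build_prompt`, and the prompt wording are all assumptions made for illustration, and the LLM call itself (with its temperature τ) is left out.

```python
import math
from typing import List, Sequence, Tuple


def vicinal_twins(
    features: Sequence[Sequence[float]],
    labels: Sequence[int],
    target_class: int,
    k: int = 1,
) -> List[Tuple[int, int]]:
    """Pair each node of `target_class` with its k nearest same-label nodes.

    Assumption: "close in the original feature space" is measured with
    Euclidean distance; the summary does not name the metric.
    """
    members = [i for i, y in enumerate(labels) if y == target_class]
    pairs: List[Tuple[int, int]] = []
    for i in members:
        # Rank the other nodes of the same class by distance to node i.
        others = [j for j in members if j != i]
        others.sort(key=lambda j: math.dist(features[i], features[j]))
        pairs.extend((i, j) for j in others[:k])
    return pairs


def build_prompt(class_name: str, text_a: str, text_b: str) -> str:
    """Hypothetical class-conditioned prompt; the paper's wording is not shown."""
    return (
        f"Both texts below belong to the class '{class_name}'.\n"
        f"Text A: {text_a}\n"
        f"Text B: {text_b}\n"
        "Write one new text of the same class that blends their content."
    )


# Toy data: nodes 0, 1, 3 form the minority class (label 1).
features = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [0.0, 0.3], [5.1, 5.0]]
labels = [1, 1, 0, 1, 0]
twins = vicinal_twins(features, labels, target_class=1, k=1)
```

Each pair in `twins` would then be turned into one prompt via `build_prompt` and sent to the LLM, with the generated text inheriting the label of the pair.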

To prevent noisy generations from harming downstream learning, SaVe‑TAG employs a confidence‑aware edge assignment mechanism. A frozen text encoder (e.g., BERT) maps all texts (original and synthetic) to embeddings, which are then passed through two small MLPs: an encoder producing node representations and a predictor that converts inner‑product similarity into a logit. The predictor is trained on the original graph to assign higher scores to true edges than to non‑edges, using a LogSigmoid loss. At inference time, the logit is passed through a sigmoid to obtain a confidence score κ(u, v) ∈
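A minimal sketch of the scoring side of this mechanism follows. It makes several assumptions that the summary does not confirm: the predictor is reduced to an affine map of the inner product (hypothetical `weight` and `bias` parameters stand in for the small MLP), and the LogSigmoid loss is taken to be the common pointwise form, −log σ(s⁺) − log σ(−s⁻), averaged over true edges and sampled non-edges.

```python
import math
from typing import Sequence


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    return min(x, 0.0) - math.log1p(math.exp(-abs(x)))


def edge_logit(
    h_u: Sequence[float],
    h_v: Sequence[float],
    weight: float = 1.0,
    bias: float = 0.0,
) -> float:
    """Stand-in predictor: affine map of the inner product <h_u, h_v>.

    `weight` and `bias` are hypothetical placeholders for the predictor MLP.
    """
    return weight * sum(a * b for a, b in zip(h_u, h_v)) + bias


def edge_loss(pos_logits: Sequence[float], neg_logits: Sequence[float]) -> float:
    """Assumed objective: -mean log sigmoid(s+) - mean log sigmoid(-s-)."""
    pos = sum(log_sigmoid(s) for s in pos_logits) / len(pos_logits)
    neg = sum(log_sigmoid(-s) for s in neg_logits) / len(neg_logits)
    return -(pos + neg)


def confidence(logit: float) -> float:
    """Sigmoid of the logit, used as the edge confidence kappa(u, v)."""
    return math.exp(log_sigmoid(logit))


# Toy node representations: one synthetic node and two candidate neighbours.
h_new = [0.9, 0.1]
h_near = [1.0, 0.0]
h_far = [-1.0, 0.2]
kappa_near = confidence(edge_logit(h_new, h_near))
kappa_far = confidence(edge_logit(h_new, h_far))
```

The loss is low when true edges receive large positive logits and non-edges receive large negative ones, so after training a candidate neighbour that resembles the synthetic node's true neighbourhood gets a higher confidence than an unrelated one.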
