DiffPlace: Street View Generation via Place-Controllable Diffusion Model Enhancing Place Recognition

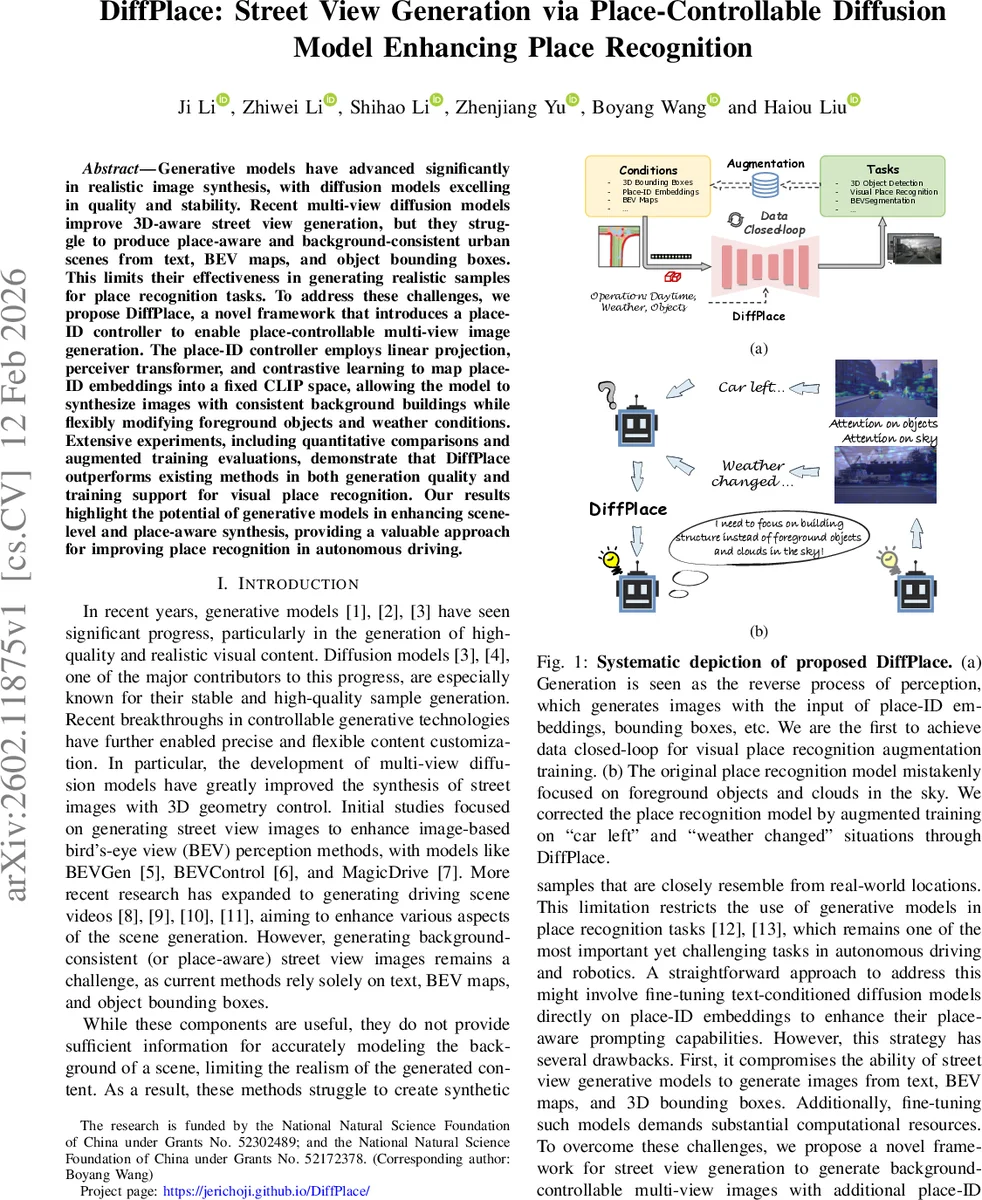

Generative models have advanced significantly in realistic image synthesis, with diffusion models excelling in quality and stability. Recent multi-view diffusion models improve 3D-aware street view generation, but they struggle to produce place-aware and background-consistent urban scenes from text, BEV maps, and object bounding boxes. This limits their effectiveness in generating realistic samples for place recognition tasks. To address these challenges, we propose DiffPlace, a novel framework that introduces a place-ID controller to enable place-controllable multi-view image generation. The place-ID controller employs linear projection, perceiver transformer, and contrastive learning to map place-ID embeddings into a fixed CLIP space, allowing the model to synthesize images with consistent background buildings while flexibly modifying foreground objects and weather conditions. Extensive experiments, including quantitative comparisons and augmented training evaluations, demonstrate that DiffPlace outperforms existing methods in both generation quality and training support for visual place recognition. Our results highlight the potential of generative models in enhancing scene-level and place-aware synthesis, providing a valuable approach for improving place recognition in autonomous driving

💡 Research Summary

DiffPlace introduces a novel place‑controllable diffusion framework for generating multi‑view street‑level images that are both background‑consistent and flexible in foreground and weather variations. The core innovation is a “place‑ID controller” that maps place embeddings extracted from a visual place recognition (VPR) network into the fixed CLIP image latent space. This mapping is achieved through two linear projection layers, an attribute perceiver transformer, and a contrastive learning objective (Soft‑CLIP loss). The linear projections reshape a 4096‑dimensional place‑ID vector into a sequence of Nₛ (set to 4) tokens of dimension 768, aligning it with other conditioning embeddings (text, BEV map, bounding boxes).

The perceiver transformer then fuses these place tokens with CLIP image features derived from reference images where foreground objects and sky are masked, ensuring the model focuses on structural elements of the street scene. Three cross‑attention layers (dimension 1024) enable the place tokens to attend to the CLIP features, effectively injecting detailed background information while preserving the ability to control foreground objects and weather via existing modalities.

Contrastive learning further aligns the place‑ID embeddings with the CLIP space by maximizing cosine similarity for positive pairs (same place‑ID and its reference image) and minimizing it for negatives, reinforcing the semantic correspondence between the place condition and visual appearance.

The diffusion backbone follows a latent multi‑view diffusion architecture with a VAE encoder, a denoising UNet, and a VAE decoder. Cross‑view attention modules ensure viewpoint consistency across adjacent camera views, allowing the generated images to maintain coherent 3D geometry. Conditioning inputs (text, BEV map, 3D bounding boxes) are encoded and concatenated with the place embeddings before being injected into the UNet via cross‑attention.

Experiments evaluate both image quality (FID, LPIPS, SSIM) and downstream VPR performance. DiffPlace‑augmented training data improve VPR models’ top‑1 recall by an average of 7 % over baselines that use BEVGen, MagicDrive, or other multi‑view diffusion generators. The generated images exhibit high background fidelity (high SSIM) while allowing diverse foreground objects and weather conditions, demonstrating that the place‑ID controller successfully decouples background consistency from foreground variability.

Key contributions include: (1) the first place‑controllable diffusion model that can generate background‑consistent street views for data augmentation; (2) a novel mapping pipeline (linear projection + perceiver transformer + contrastive loss) that aligns place embeddings with CLIP, enabling seamless integration with existing controllable diffusion pipelines; (3) empirical evidence that synthetic data from DiffPlace substantially boosts VPR robustness to environmental changes.

Limitations are noted: the approach relies on a pre‑trained VPR network to obtain place‑ID embeddings, which may require re‑training for new geographic domains. The contrastive alignment is performed independently of text and map conditions, potentially limiting fine‑grained joint control (e.g., specific architectural style under a particular weather). Future work could explore unified multimodal encoders that jointly learn text, map, and place embeddings, as well as lightweight controller designs for real‑time simulation scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment