FlowMind: Execute-Summarize for Structured Workflow Generation from LLM Reasoning

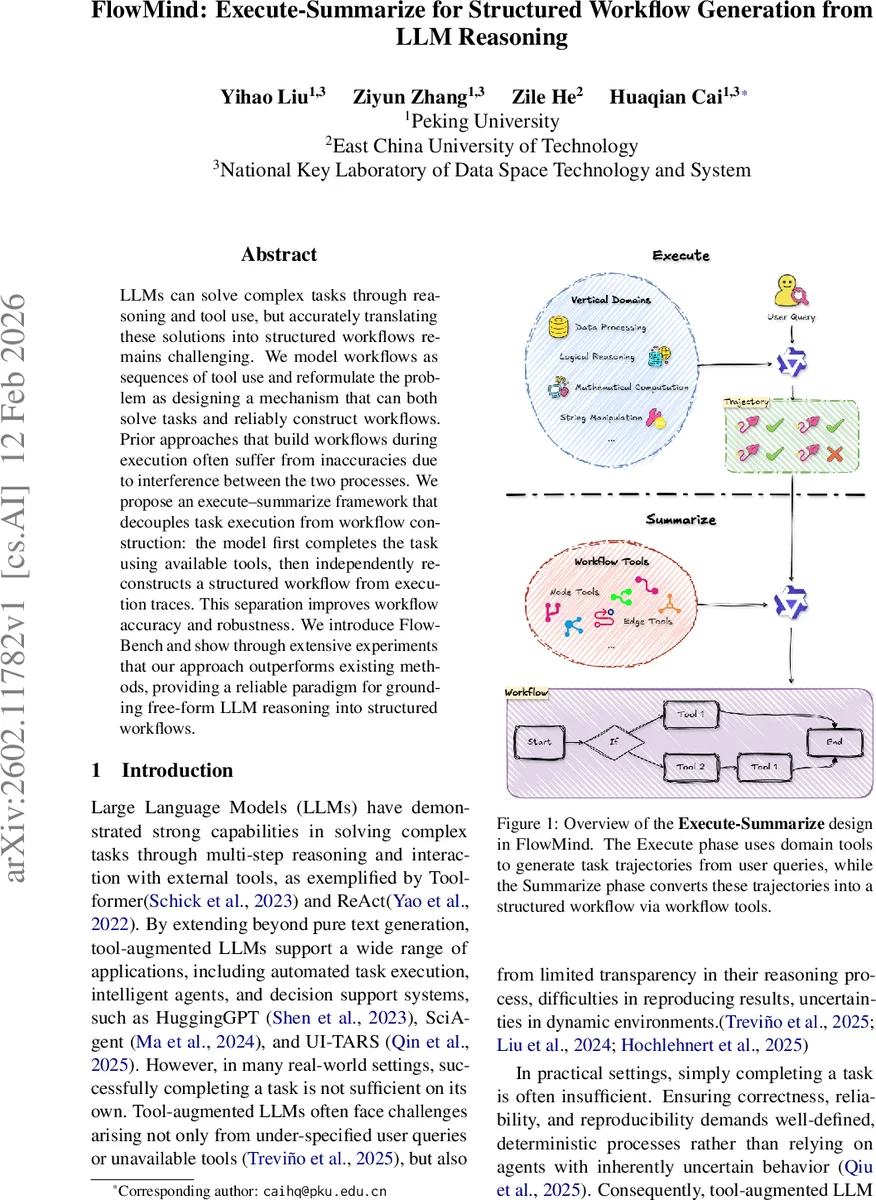

LLMs can solve complex tasks through reasoning and tool use, but accurately translating these solutions into structured workflows remains challenging. We model workflows as sequences of tool use and reformulate the problem as designing a mechanism that can both solve tasks and reliably construct workflows. Prior approaches that build workflows during execution often suffer from inaccuracies due to interference between the two processes. We propose an Execute-Summarize(ES) framework that decouples task execution from workflow construction: the model first completes the task using available tools, then independently reconstructs a structured workflow from execution traces. This separation improves workflow accuracy and robustness. We introduce FlowBench and show through extensive experiments that our approach outperforms existing methods, providing a reliable paradigm for grounding free-form LLM reasoning into structured workflows.

💡 Research Summary

The paper addresses a core challenge in tool‑augmented large language model (LLM) systems: converting the free‑form reasoning and tool interactions that solve a user query into a reliable, structured workflow that can be audited, reproduced, and reused. Existing approaches typically intertwine task execution with workflow construction, forcing the model to simultaneously reason about the problem and maintain a formal representation of its actions. This coupling creates a cognitive burden that degrades both problem‑solving performance and the correctness of the generated workflow, especially for long‑horizon or tool‑intensive tasks where steps may be omitted, ordered incorrectly, or have mismatched arguments.

To overcome this, the authors propose FlowMind, an Execute‑Summarize (ES) framework that explicitly separates execution from workflow generation. In the Execute phase, the LLM is free to use any available domain tools to solve the task, focusing solely on functional correctness. All interactions—tool calls, inputs, outputs, intermediate states—are recorded as an execution trace. In the Summarize phase, a second pass operates only on these traces, using dedicated “workflow tools” (graph‑building primitives) to abstract away low‑level details and produce a high‑level, tool‑call‑sequence workflow graph (e.g., JSON or a directed acyclic graph). Because summarization is grounded in verified execution, the resulting workflow reflects exactly what was performed, eliminating the errors that arise from speculative reasoning.

Key technical contributions include:

- Formalizing workflows as ordered sequences of tool invocations, which aligns naturally with the tool‑calling interface LLMs are already trained on.

- Demonstrating that decoupling reduces the model’s cognitive load, allowing deeper reasoning during execution while enabling a focused abstraction step for workflow construction.

- Introducing a many‑to‑one mapping where multiple execution attempts can be collapsed into a single, generalized workflow, mirroring human learning from trial‑and‑error.

To evaluate the approach, the authors construct FlowBench, a synthetic benchmark comprising (i) a curated set of domain‑specific tools with natural‑language documentation, (ii) a diverse set of multi‑tool tasks described in natural language, and (iii) verified execution traces generated by strong LLMs. FlowBench provides both black‑box evaluation (executing the generated workflow and checking functional correctness against a gold answer) and white‑box diagnostics (inspecting graph structure and tool orchestration).

Experiments compare four paradigms across several model families and scales (Qwen‑3‑8B, Qwen‑3‑14B, Qwen‑3‑32B, GPT‑4.1, GPT‑5): traditional ReAct, Plan‑and‑Execute (P&E), ES‑ReAct (execution via ReAct, summarization afterward), and ES‑P&E (execution via P&E, summarization afterward). Metrics include Execution Completeness, Graph Validity, and “Both Success” (simultaneous satisfaction of both). Across all settings, the ES frameworks consistently outperform the baselines. ES‑P&E, in particular, achieves the highest joint success rates, often reaching 100 % execution completeness and >80 % workflow validity even on the largest models. The advantage remains stable across model scales, indicating that the benefit is orthogonal to raw model size.

A detailed cognitive‑burden analysis shows that while ReAct attains high execution rates, its workflow graphs are frequently invalid, limiting overall success. P&E improves graph validity but suffers from plan‑execution mismatches. The ES approach bridges this gap by grounding workflow construction in actual execution traces, yielding a strong alignment between functional correctness and structural soundness. Ablation studies explore variations in execution strategies, JSON output constraints, and summarization techniques, confirming the robustness of the design choices.

In summary, FlowMind introduces a principled two‑stage pipeline that transforms LLM‑driven problem solving into trustworthy, reusable workflows. By decoupling execution from summarization, it reduces cognitive load, improves workflow accuracy, and supports many‑to‑one abstraction of multiple execution attempts. FlowBench provides a standardized testbed for future research on workflow generation. The results suggest that the Execute‑Summarize paradigm is a promising direction for building dependable AI agents that can both solve complex tasks and expose their reasoning as auditable, reproducible pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment