Beyond Parameter Arithmetic: Sparse Complementary Fusion for Distribution-Aware Model Merging

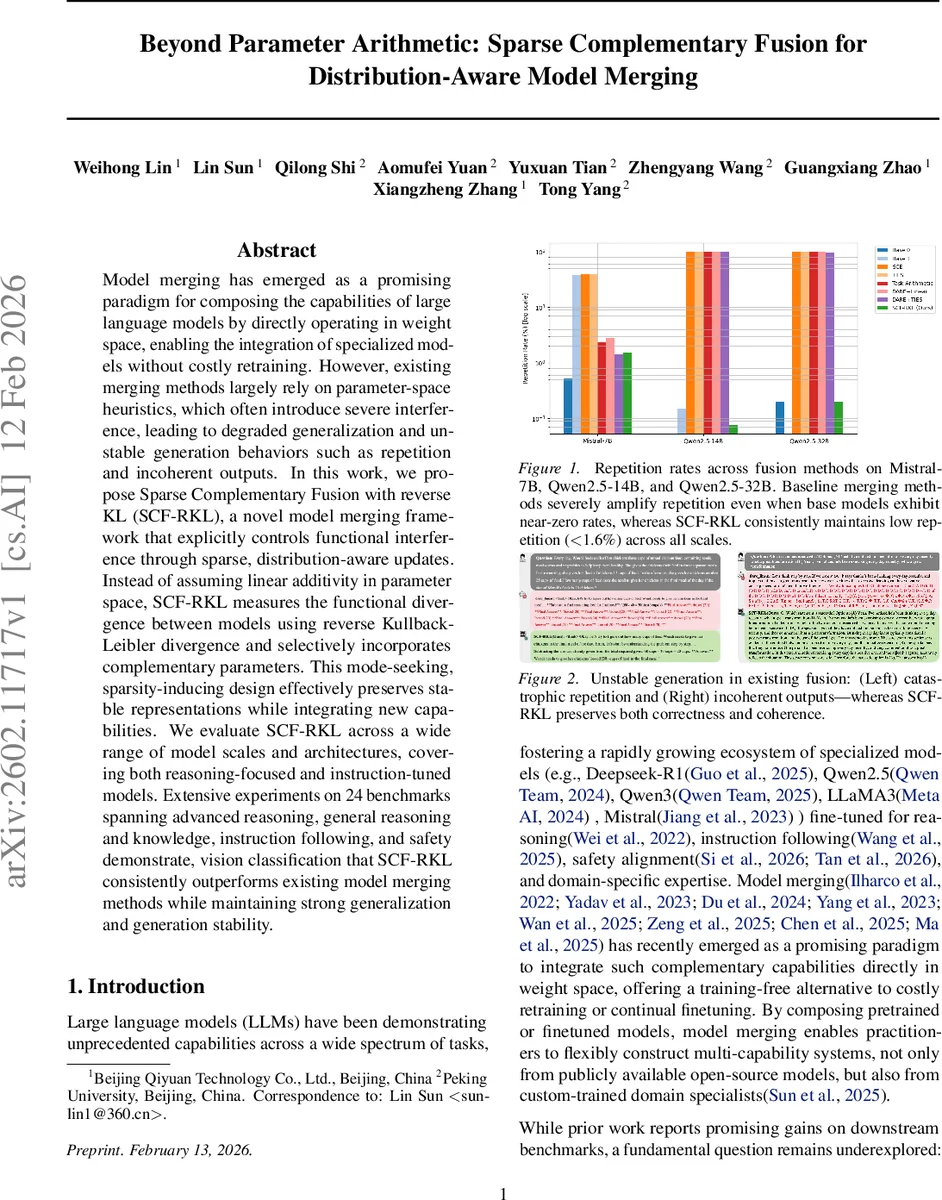

Model merging has emerged as a promising paradigm for composing the capabilities of large language models by directly operating in weight space, enabling the integration of specialized models without costly retraining. However, existing merging methods largely rely on parameter-space heuristics, which often introduce severe interference, leading to degraded generalization and unstable generation behaviors such as repetition and incoherent outputs. In this work, we propose Sparse Complementary Fusion with reverse KL (SCF-RKL), a novel model merging framework that explicitly controls functional interference through sparse, distribution-aware updates. Instead of assuming linear additivity in parameter space, SCF-RKL measures the functional divergence between models using reverse Kullback-Leibler divergence and selectively incorporates complementary parameters. This mode-seeking, sparsity-inducing design effectively preserves stable representations while integrating new capabilities. We evaluate SCF-RKL across a wide range of model scales and architectures, covering both reasoning-focused and instruction-tuned models. Extensive experiments on 24 benchmarks spanning advanced reasoning, general reasoning and knowledge, instruction following, and safety demonstrate, vision classification that SCF-RKL consistently outperforms existing model merging methods while maintaining strong generalization and generation stability.

💡 Research Summary

Model merging has become an attractive way to combine the capabilities of specialized large language models (LLMs) without expensive retraining. However, most existing merging techniques—Task Arithmetic, TIES, DARE, SCE, and their variants—operate directly in weight space by averaging or linearly interpolating all parameters. This dense approach often distorts the output distribution of the base model, causing entropy collapse, repetitive loops, and incoherent generations, especially in long‑form or multi‑step reasoning tasks.

The paper introduces Sparse Complementary Fusion with reverse KL (SCF‑RKL), a principled framework that (1) quantifies functional divergence between a base model θ₀ and a complementary model θ₁ using reverse Kullback‑Leibler divergence, and (2) selects only the most informative parameters for update via a dynamic sparsity mask. Concretely, both models are converted to probability distributions via softmax (q for the base, p for the complement). Reverse KL RKL(q‖p)=∑ qᵢ log(qᵢ/pᵢ) penalizes changes that significantly alter high‑probability regions of the base model, making it a natural “conservative” metric. An importance score I = |θ₁‑θ₀| · RKL is computed for each parameter group; the median and inter‑quartile range (IQR) of I define a threshold τ = median(I) + α·IQR (α = 1.5). Parameters with I ≥ τ receive a binary mask M = 1, all others M = 0. The fused model is then θ_f = θ₀ + M ⊙ (θ₁‑θ₀). This “discrete composition” guarantees that every fused parameter originates from either the base or the complement, never from an untrained linear interpolation.

The authors provide three theoretical guarantees. Theorem 3.1 (Semantic Stability) shows that the KL divergence between the base distribution q and the fused distribution q_f is bounded above by the KL divergence between q and the complement p, i.e., D_KL(q‖q_f) ≤ D_KL(q‖p). This follows from the convexity of reverse KL and the fact that updates are applied only where the directional derivative is maximal. Theorem 3.2 (Entropy Preservation) proves that under standard Lipschitz assumptions for softmax and entropy, the entropy loss of the fused model is proportional to the squared ℓ₂ norm of the sparse update, which is dramatically smaller than that of dense merging. Consequently, the fused model retains token‑level diversity and avoids repetitive loops. Theorem 3.3 (Subspace Rotation Bound) leverages matrix perturbation theory (Wedin’s sin Θ theorem) to bound the maximal principal angle between the singular subspaces of the base and fused models by ‖M⊙Δθ‖₂ / δ, where δ is the spectral gap. Because the mask makes ‖M⊙Δθ‖₂ ≪ ‖Δθ‖₂, rotation is kept low, preserving geometric consistency.

Empirically, SCF‑RKL is evaluated on 24 benchmarks covering advanced reasoning (e.g., GSM‑8K, ARC‑E), general knowledge (MMLU, TruthfulQA), instruction following (AlpacaEval), safety (RealToxicityPrompts), and vision classification (ImageNet‑1K, CIFAR‑100). The experiments span three model families (Qwen2.5, LLaMA3, Mistral) and three scales (7 B, 14 B, 32 B). Compared to the baselines, SCF‑RKL consistently achieves:

- Higher task performance (1‑3 percentage points on average).

- Dramatically lower repetition rates (< 1.6 % across all scales) where baselines can reach 30‑100 %.

- Superior spectral preservation: normalized spectral shift (NSS) ≈ 10⁻³ versus an order of magnitude higher for dense methods.

- Lower maximal principal angle (< 40°) and bounded singular‑value drift, indicating that the fused model’s internal representations stay close to those of its parents.

- “Discrete composition” property: over 80 % of parameters in the fused model are directly copied from either the base or the complement, eliminating the “phantom” parameters that arise from linear interpolation.

The method also shines in asymmetric fusion scenarios where the base model is weaker; SCF‑RKL retains the base’s stability while still injecting the complementary expertise. In safety‑critical settings, the fused model produces fewer toxic or unsafe tokens than any baseline. The approach extends to multimodal settings: when applied to a text‑image model, SCF‑RKL improves ImageNet top‑1 accuracy by 2‑3 % and maintains the same spectral stability.

Limitations include reliance on a simple IQR‑based threshold (α fixed at 1.5) and the use of reverse KL computed per parameter group rather than token‑level distributions, which may miss finer‑grained divergences. The authors suggest future work on meta‑learning the sparsity schedule, exploring alternative divergence measures, and scaling to models beyond 32 B parameters.

In summary, SCF‑RKL offers a theoretically grounded, sparsity‑driven alternative to dense weight averaging. By measuring functional divergence with reverse KL and updating only the most informative parameters, it mitigates mode collapse, preserves entropy, and maintains geometric structure, leading to more reliable and higher‑performing merged models across a wide spectrum of tasks and modalities.

Comments & Academic Discussion

Loading comments...

Leave a Comment