MAPLE: Modality-Aware Post-training and Learning Ecosystem

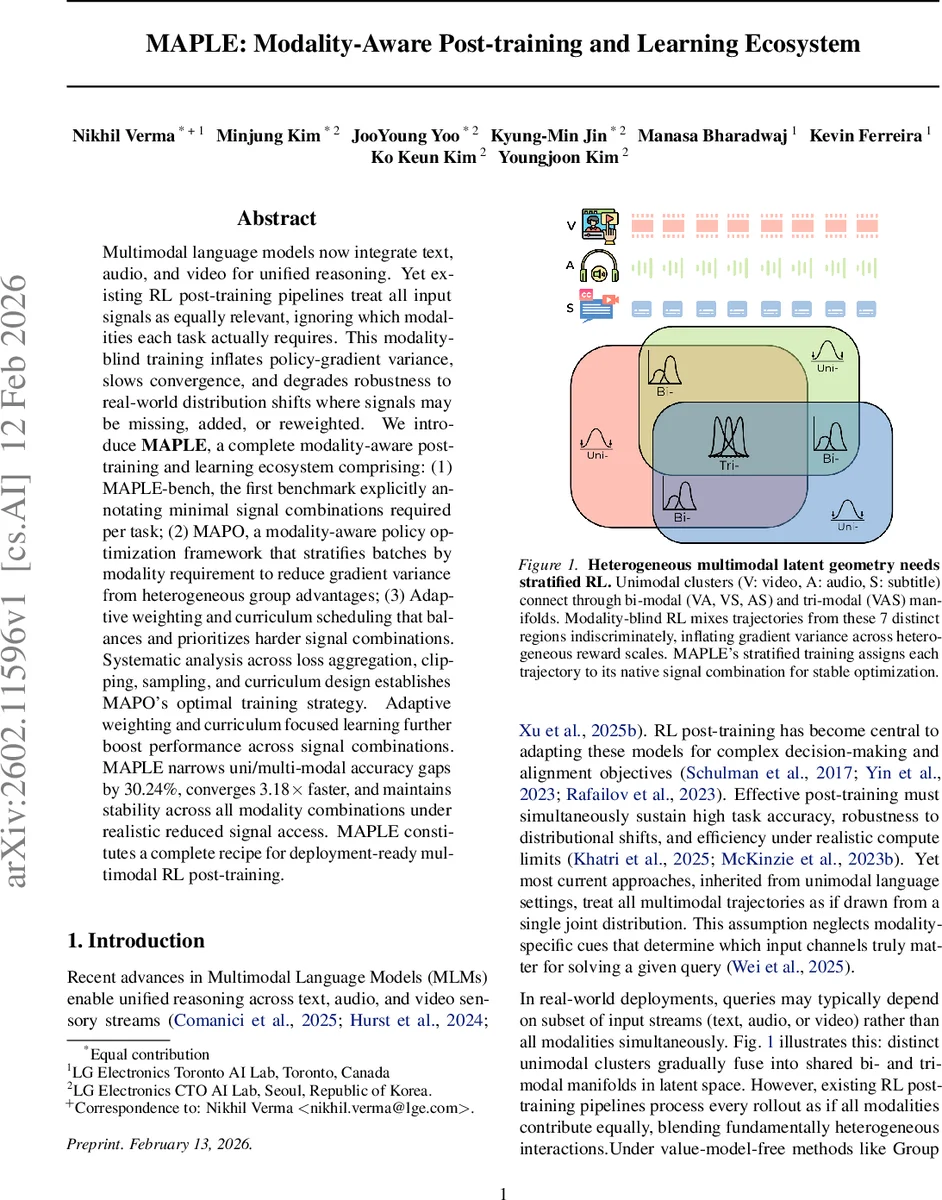

Multimodal language models now integrate text, audio, and video for unified reasoning. Yet existing RL post-training pipelines treat all input signals as equally relevant, ignoring which modalities each task actually requires. This modality-blind training inflates policy-gradient variance, slows convergence, and degrades robustness to real-world distribution shifts where signals may be missing, added, or reweighted. We introduce MAPLE, a complete modality-aware post-training and learning ecosystem comprising: (1) MAPLE-bench, the first benchmark explicitly annotating minimal signal combinations required per task; (2) MAPO, a modality-aware policy optimization framework that stratifies batches by modality requirement to reduce gradient variance from heterogeneous group advantages; (3) Adaptive weighting and curriculum scheduling that balances and prioritizes harder signal combinations. Systematic analysis across loss aggregation, clipping, sampling, and curriculum design establishes MAPO’s optimal training strategy. Adaptive weighting and curriculum focused learning further boost performance across signal combinations. MAPLE narrows uni/multi-modal accuracy gaps by 30.24%, converges 3.18x faster, and maintains stability across all modality combinations under realistic reduced signal access. MAPLE constitutes a complete recipe for deployment-ready multimodal RL post-training.

💡 Research Summary

The paper introduces MAPLE (Modality‑Aware Post‑training and Learning Ecosystem), a comprehensive framework designed to improve reinforcement‑learning (RL) post‑training for multimodal language models (MLMs) that process text, audio, and video. Existing RL post‑training pipelines treat all input modalities as equally relevant, mixing trajectories that require different subsets of signals. This “modality‑blind” approach inflates policy‑gradient variance because reward scales and noise characteristics differ across modality combinations, leading to slower convergence and poor robustness when some modalities are missing, noisy, or re‑weighted at test time.

To address these issues, MAPLE consists of three tightly integrated components.

-

MAPLE‑bench – a new benchmark in which every sample is annotated with a Required Modality Tag (RMT) indicating the minimal set of modalities (V, A, S, VA, VS, AS, VAS) needed to solve the task. The authors curate the dataset from Daily‑Omni and VAST‑Omni corpora, extracting aligned video, audio, and subtitle streams, generating both multiple‑choice QA and open‑ended captioning instances, and balancing the seven RMT groups. The final benchmark contains roughly 48 k QA pairs and 5 k captioning examples for training, plus separate validation splits, each with explicit modality metadata.

-

MAPO (Modality‑Aware Policy Optimization) – a value‑model‑free RL algorithm that stratifies training batches by RMT. For each modality group M, advantages are normalized using only rewards from that group, eliminating the between‑group variance term that plagues the standard gradient estimator. The authors provide a formal variance decomposition showing that the modality‑aware estimator retains only the within‑group variance, guaranteeing lower gradient noise whenever reward distributions differ across modalities. MAPO further introduces adaptive difficulty weights w_M derived from the KL‑divergence between the empirical reward distribution of a batch and a target Beta(100, 1) distribution (high‑reward bias). These weights are smoothed over a sliding window and applied multiplicatively to the loss, ensuring that harder batches (low‑reward) receive proportionally smaller updates while easier batches dominate early learning. A curriculum schedules batches from easy to hard based on the same KL statistics, counteracting gradient‑scale skew.

-

Adaptive Training Curriculum – training starts with uni‑modal tasks and progressively incorporates bi‑modal and tri‑modal examples. This staged curriculum lets the model first master single‑signal reasoning before learning to fuse multiple signals, while MAPO’s per‑RMT weighting and batch ordering continuously adapt to the evolving difficulty landscape.

Extensive experiments compare MAPO against state‑of‑the‑art value‑model‑free optimizers (GRPO, Dr‑GRPO, DAPO) under identical compute budgets. Evaluation metrics include per‑RMT accuracy, Modality Gap (performance drop from uni‑ to multi‑modal), training efficiency (wall‑clock time per step), and Fusion Gain (fraction of samples where multi‑modal input outperforms the best uni‑modal baseline). MAPO achieves a 3.18× speed‑up in convergence, reduces the average uni/multi‑modal accuracy gap by 30.24 %, and maintains stable performance when up to 50 % of modalities are randomly omitted at test time—conditions under which the modality‑blind baseline suffers severe degradation. Fusion Gain rises from 0.42 to 0.61, confirming that the model truly leverages complementary signals rather than over‑fitting to a dominant modality.

In summary, MAPLE delivers a full recipe for deployment‑ready multimodal RL post‑training: a modality‑aware benchmark that makes signal requirements explicit, a theoretically grounded policy optimizer that removes inter‑modality variance and dynamically balances batch difficulty, and a curriculum that gradually expands signal complexity. The combined system markedly improves efficiency, robustness, and overall performance, establishing a new standard for multimodal reinforcement learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment