Benchmarking for Single Feature Attribution with Microarchitecture Cliffs

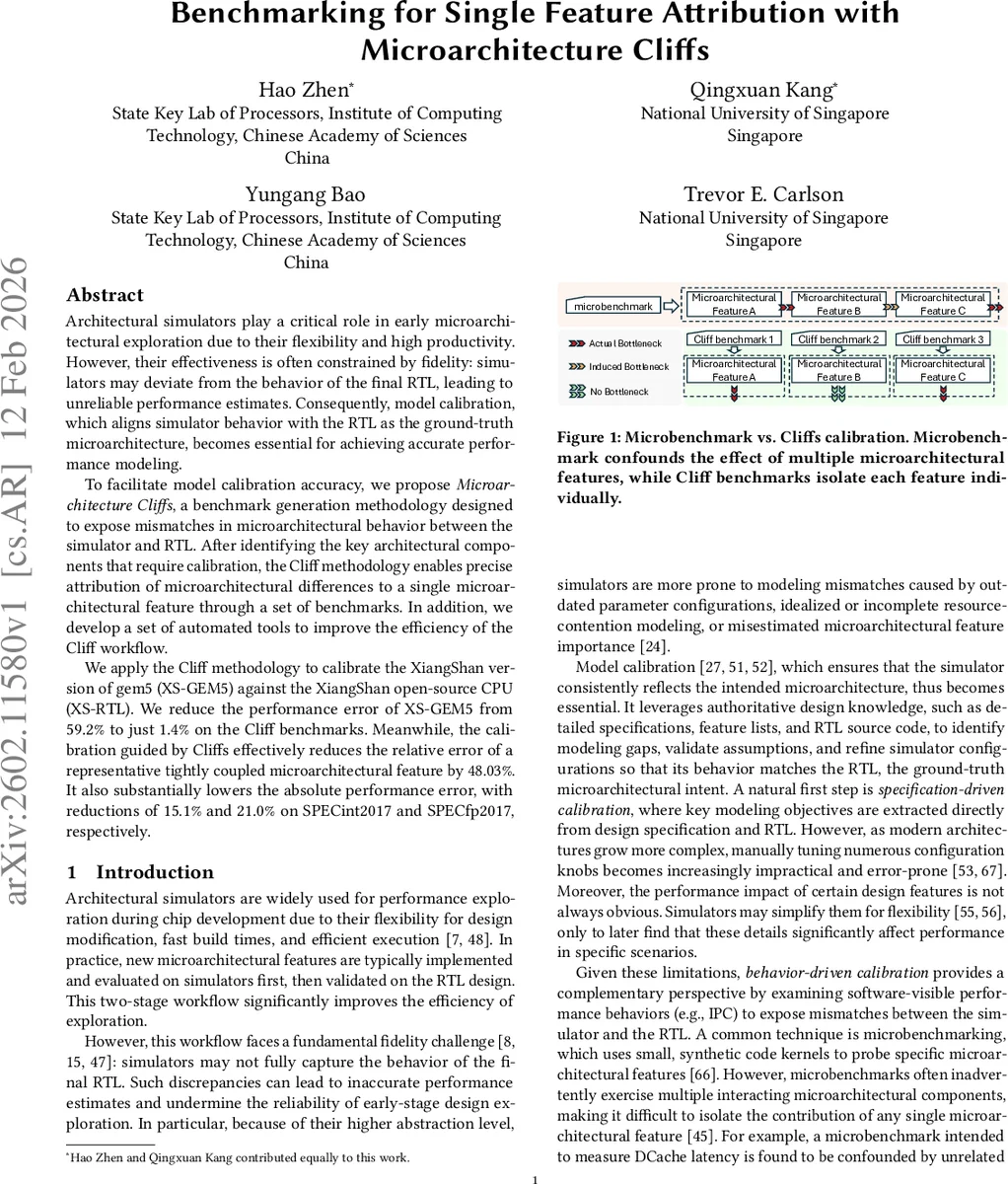

Architectural simulators play a critical role in early microarchitectural exploration due to their flexibility and high productivity. However, their effectiveness is often constrained by fidelity: simulators may deviate from the behavior of the final RTL, leading to unreliable performance estimates. Consequently, model calibration, which aligns simulator behavior with the RTL as the ground-truth microarchitecture, becomes essential for achieving accurate performance modeling. To facilitate model calibration accuracy, we propose Microarchitecture Cliffs, a benchmark generation methodology designed to expose mismatches in microarchitectural behavior between the simulator and RTL. After identifying the key architectural components that require calibration, the Cliff methodology enables precise attribution of microarchitectural differences to a single microarchitectural feature through a set of benchmarks. In addition, we develop a set of automated tools to improve the efficiency of the Cliff workflow. We apply the Cliff methodology to calibrate the XiangShan version of gem5 (XS-GEM5) against the XiangShan open-source CPU (XS-RTL). We reduce the performance error of XS-GEM5 from 59.2% to just 1.4% on the Cliff benchmarks. Meanwhile, the calibration guided by Cliffs effectively reduces the relative error of a representative tightly coupled microarchitectural feature by 48.03%. It also substantially lowers the absolute performance error, with reductions of 15.1% and 21.0% on SPECint2017 and SPECfp2017, respectively.

💡 Research Summary

Architectural simulators are indispensable for early‑stage processor design because they allow rapid exploration of microarchitectural ideas. However, their usefulness is limited by fidelity gaps: the simulated behavior often diverges from the ground‑truth RTL, leading to inaccurate performance estimates. Traditional calibration follows two complementary paths. Specification‑driven calibration extracts critical features from design documents and manually tunes simulator parameters, but this becomes impractical as modern CPUs contain hundreds of interacting structures. Behavior‑driven calibration, on the other hand, compares observable performance metrics (IPC, event counters) between simulator and RTL using targeted benchmarks, but existing microbenchmarks usually stress multiple structures simultaneously, making it hard to attribute a performance discrepancy to a single microarchitectural feature.

The authors introduce Microarchitecture Cliffs (Cliffs), a methodology that systematically generates benchmarks which isolate the effect of one microarchitectural component at a time. The workflow consists of three stages. First, performance counters from both simulator and RTL runs are clustered to identify which components exhibit the largest mismatches, thereby prioritizing calibration targets. Second, guided by this prioritization and a feature list, the Cliffs generator constructs instruction snippets that progressively stress a single feature while suppressing interference from others (e.g., varying ROB size, L1‑DCache bank conflicts, pipeline width). Third, the generated Cliffs benchmarks are executed on both platforms; the resulting IPC, cycle counts, and event statistics are plotted against the stressed parameter to reveal non‑linear response curves and inflection points that directly expose modeling errors.

The methodology was applied to calibrate the XiangShan‑derived gem5 port (XS‑GEM5) against the open‑source XiangShan RTL (XS‑RTL). Initially, the average performance error across the Cliffs suite was 59.2 %. After iteratively adjusting simulator models based on the single‑feature insights provided by the Cliffs, the error dropped dramatically to 1.4 %. To demonstrate the practical impact, the authors examined the Store‑Set memory dependency predictor, a tightly coupled feature whose effectiveness depends on several internal mechanisms (Nuke Replay, STA‑STD separation). Using Cliffs to isolate and tune these mechanisms reduced the relative error of Store‑Set’s performance evaluation on the h264ref workload by 48.03 %. Moreover, the calibrated simulator showed substantially lower absolute errors on full SPEC benchmarks: SPECint2017 error decreased by 15.1 % and SPECfp2017 by 21.0 %.

Automation is a key contribution. The authors provide three tool components: (1) a counter‑clustering module that automatically highlights mismatched components, (2) a dependency‑graph analyzer that determines which resources interact, and (3) a benchmark‑generation pipeline that synthesizes, compiles, and runs the Cliffs snippets across a configurable parameter space. This pipeline can evaluate hundreds of candidate benchmarks within a few hours, dramatically reducing manual effort.

The paper’s main contributions are: (i) showing that isolating performance differences to a single feature dramatically improves calibration accuracy, (ii) proposing the Cliffs methodology and an accompanying automated toolchain, (iii) demonstrating a successful calibration of a complex open‑source CPU where the performance error shrank from 59.2 % to 1.4 %, and (iv) proving that the calibrated simulator yields more trustworthy results on real workloads (SPEC). The authors argue that Cliffs is not tied to XiangShan; the same approach can be applied to any modern ISA (RISC‑V, ARM, x86) where simulators need to be aligned with RTL.

In summary, “Microarchitecture Cliffs” offers a systematic, high‑resolution way to expose and fix fidelity gaps between architectural simulators and RTL implementations. By generating benchmarks that attribute performance discrepancies to a single microarchitectural feature, the method enables precise, efficient calibration, leading to far more reliable simulation results for both research and industry processor development.

Comments & Academic Discussion

Loading comments...

Leave a Comment