Adaptive Milestone Reward for GUI Agents

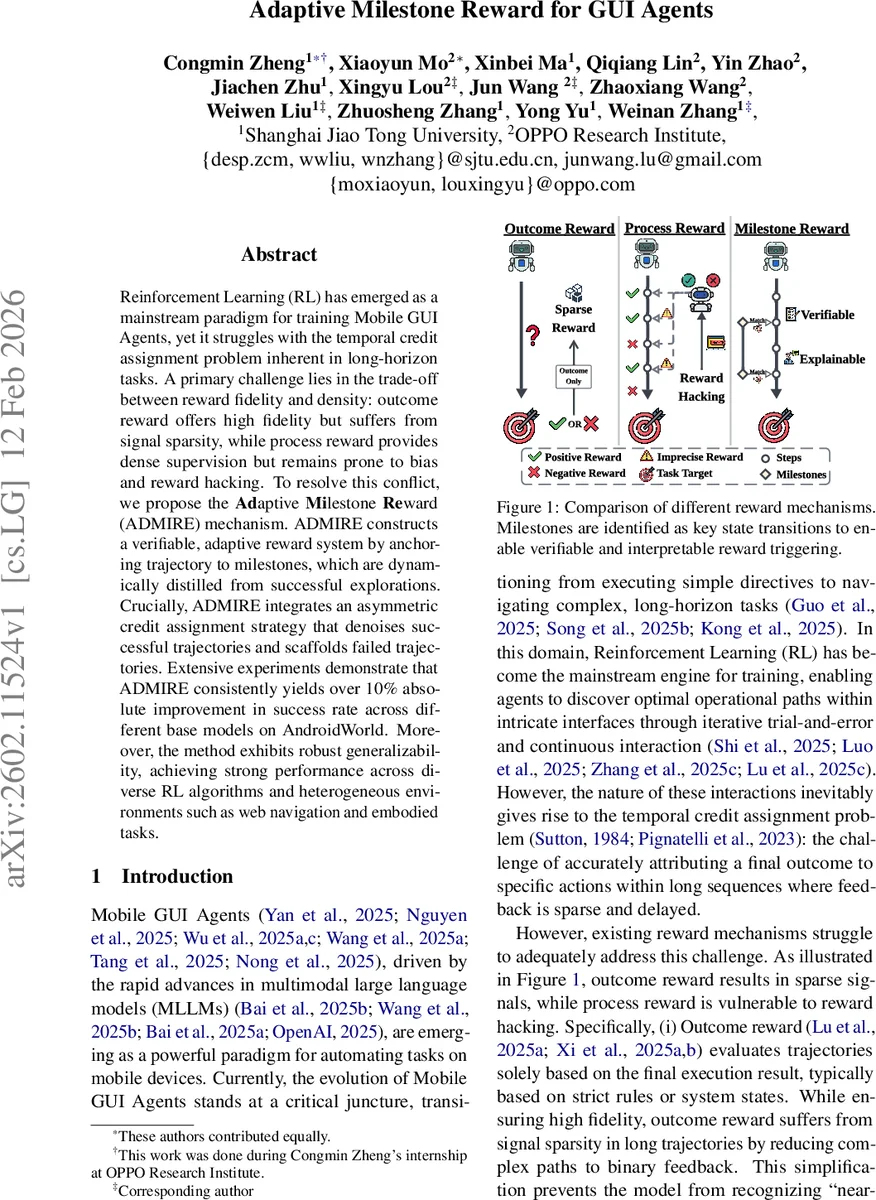

Reinforcement Learning (RL) has emerged as a mainstream paradigm for training Mobile GUI Agents, yet it struggles with the temporal credit assignment problem inherent in long-horizon tasks. A primary challenge lies in the trade-off between reward fidelity and density: outcome reward offers high fidelity but suffers from signal sparsity, while process reward provides dense supervision but remains prone to bias and reward hacking. To resolve this conflict, we propose the Adaptive Milestone Reward (ADMIRE) mechanism. ADMIRE constructs a verifiable, adaptive reward system by anchoring trajectories to milestones, which are dynamically distilled from successful explorations. Crucially, ADMIRE integrates an asymmetric credit assignment strategy that denoises successful trajectories and scaffolds failed trajectories. Extensive experiments demonstrate that ADMIRE consistently yields over 10% absolute improvement in success rate across different base models on AndroidWorld. Moreover, the method exhibits robust generalizability, achieving strong performance across diverse RL algorithms and heterogeneous environments such as web navigation and embodied tasks.

💡 Research Summary

The paper tackles a fundamental challenge in training mobile GUI agents: the temporal credit‑assignment problem that arises in long‑horizon tasks where feedback is sparse and delayed. Traditional reward schemes face a trade‑off. Outcome‑based rewards are highly faithful to final task success but provide only a binary signal, which makes exploration inefficient. Process‑based rewards give dense step‑wise feedback but are prone to bias and reward hacking, and may reinforce irrelevant actions.

To resolve this dilemma, the authors introduce Adaptive Milestone Reward (ADMIRE). The core idea is to automatically extract “milestones”—key state transitions that signify genuine progress—from successful trajectories during online training. A large language model (LLM) is prompted to abstract these checkpoints from a representative successful episode, producing an ordered list
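As a rough illustration of how an ordered milestone list could turn a sparse outcome signal into denser step-wise feedback, the sketch below credits a step only when it reaches the next unmet milestone. It is not the authors' implementation: the function name `milestone_rewards`, the predicate-based matching on textual state descriptions, and the equal `1 / len(milestones)` bonus are all assumptions made for this example, and the asymmetric credit assignment described in the abstract is not modeled.

```python
from typing import Callable, List, Sequence

# Hypothetical representation: a milestone is a check on a textual state description.
Milestone = Callable[[str], bool]


def milestone_rewards(states: Sequence[str], milestones: Sequence[Milestone]) -> List[float]:
    """Reward a step when it reaches the next unmet milestone, in order."""
    rewards = [0.0] * len(states)
    if not milestones:
        return rewards
    bonus = 1.0 / len(milestones)  # assumed: equal share per milestone
    next_idx = 0  # index of the next milestone still to be reached
    for t, state in enumerate(states):
        if next_idx < len(milestones) and milestones[next_idx](state):
            rewards[t] = bonus
            next_idx += 1  # later milestones only count after earlier ones
    return rewards


# Toy episode that fails the task (Wi-Fi is never enabled) but makes partial progress.
milestones = [
    lambda s: "settings opened" in s,
    lambda s: "wifi enabled" in s,
]
states = ["home screen", "settings opened", "display page", "settings opened", "wifi page"]
rewards = milestone_rewards(states, milestones)
```

In this toy episode a binary outcome reward would be zero at every step, whereas the sketch still credits the step that first opens the settings screen. Revisiting that screen earns nothing further, because each milestone is counted once and in order.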
