Exploring Multiple High-Scoring Subspaces in Generative Flow Networks

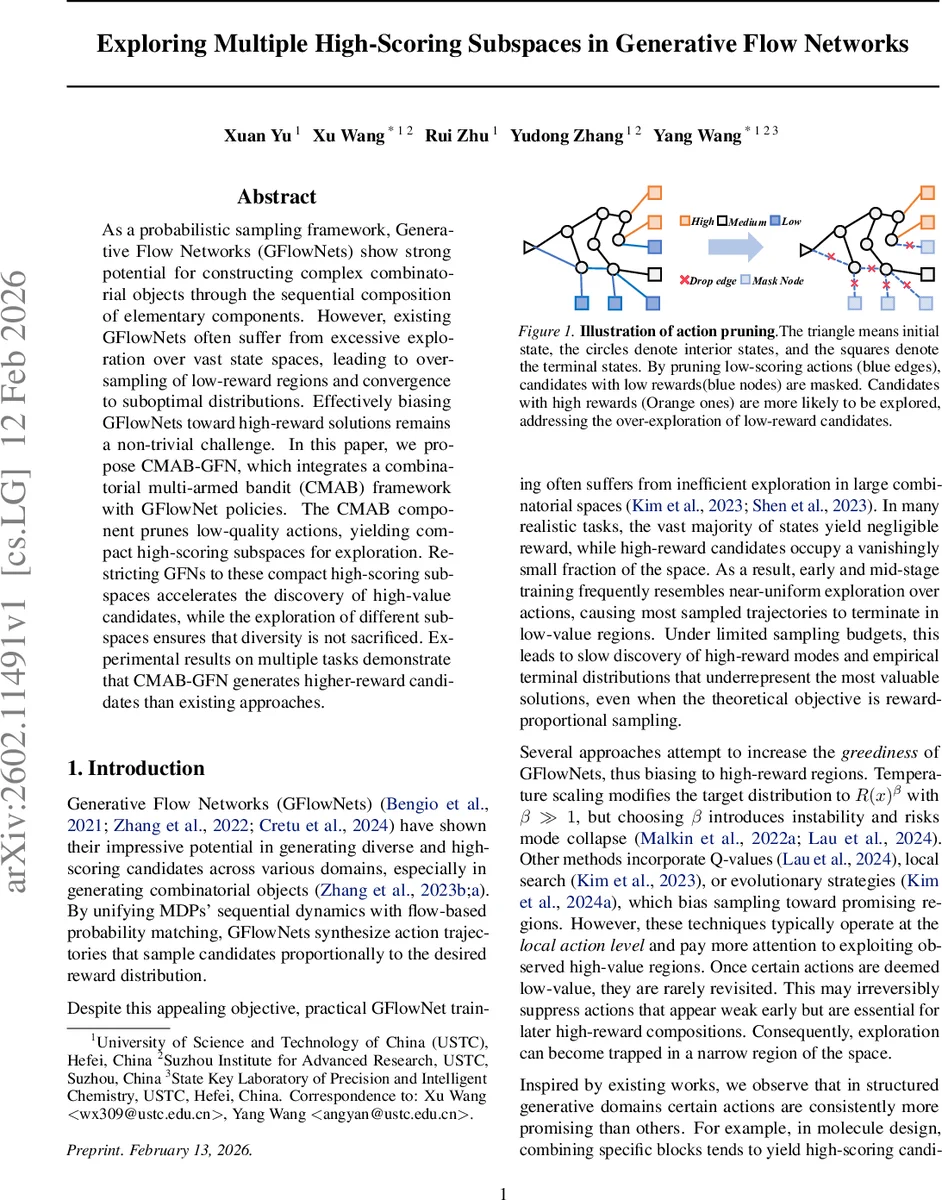

As a probabilistic sampling framework, Generative Flow Networks (GFlowNets) show strong potential for constructing complex combinatorial objects through the sequential composition of elementary components. However, existing GFlowNets often suffer from excessive exploration over vast state spaces, leading to over-sampling of low-reward regions and convergence to suboptimal distributions. Effectively biasing GFlowNets toward high-reward solutions remains a non-trivial challenge. In this paper, we propose CMAB-GFN, which integrates a combinatorial multi-armed bandit (CMAB) framework with GFlowNet policies. The CMAB component prunes low-quality actions, yielding compact high-scoring subspaces for exploration. Restricting GFNs to these compact high-scoring subspaces accelerates the discovery of high-value candidates, while the exploration of different subspaces ensures that diversity is not sacrificed. Experimental results on multiple tasks demonstrate that CMAB-GFN generates higher-reward candidates than existing approaches.

💡 Research Summary

Generative Flow Networks (GFlowNets) have shown promise for sampling complex combinatorial objects proportionally to a reward function, but in practice they often waste a large portion of the sampling budget exploring vast low‑reward regions. This paper introduces CMAB‑GFN, a novel framework that reframes GFlowNet exploration as a combinatorial multi‑armed bandit (CMAB) problem. The key idea is to treat state‑independent primitive actions (e.g., molecular building blocks, nucleotides, binary values) as base arms. At each iteration a subset of K base arms is selected, forming a super‑arm that defines a compact “high‑scoring subspace”. The GFlowNet policy is then constrained to use only actions whose primitive component belongs to this super‑arm, effectively pruning low‑quality actions before the trajectory generation begins.

Reward feedback for each base arm is estimated in a semi‑bandit fashion: after sampling a batch of candidates within the current subspace, the normalized rewards of all candidates containing a particular base arm are averaged to obtain an empirical estimate Xᵗᵢ. These estimates are fed into a standard CMAB algorithm (e.g., UCB or Thompson Sampling) to guide the selection of the next super‑arm, balancing exploration of new subspaces against exploitation of previously successful ones.

Training proceeds in two phases per round. First, the GFlowNet is trained under the current subspace constraint using the usual flow‑matching loss; this step does not increase the computational complexity of the network. Second, an unrestricted evaluation phase samples candidates without any action restrictions solely to update the arm‑level statistics. The unrestricted samples are not used for final performance metrics, preserving a fair comparison. This two‑phase scheme ensures that arm reward estimates converge as the flow network becomes more diverse, while keeping overhead modest (parallel sampling can further reduce cost).

When the primitive action set is small but trajectories are long (e.g., bit‑sequence generation with only “0” and “1”), the authors propose to define base arms as short sequences of t consecutive actions. Super‑arms then consist of sets of such sequences, widening the effective search space and preventing the collapse of the solution space that would occur if a single primitive action were pruned.

The authors also discuss the non‑stationary nature of the problem: as the GFlowNet learns, the underlying reward distribution changes, violating the static assumptions of classic CMAB. Their two‑phase sampling strategy, together with continual updating of arm statistics, mitigates this issue.

Empirical evaluation spans three representative domains: molecular design (using a vocabulary of 105 building blocks), three RNA design tasks, and a synthetic bit‑sequence task. Across all benchmarks, CMAB‑GFN consistently discovers higher‑reward candidates faster than baselines such as temperature scaling (β≫1), Q‑value integration, local search, and evolutionary strategies. Moreover, because the method cycles through multiple high‑scoring subspaces rather than fixing on a single one, it maintains greater diversity among generated samples, as demonstrated by diversity metrics in the experiments.

In summary, CMAB‑GFN offers a principled way to bias GFlowNets toward promising regions without sacrificing exploration. By elevating the granularity of exploration from individual actions to action‑induced subspaces, it achieves a better trade‑off between exploitation and exploration, reduces wasted sampling on low‑reward areas, and improves both convergence speed and solution quality in large combinatorial spaces. This work opens a new avenue for integrating bandit‑style combinatorial optimization techniques into deep generative flow models.

Comments & Academic Discussion

Loading comments...

Leave a Comment